├── LICENSE
├── README.md
├── commons.py
├── configs
│   ├── cvc-eng-ppgs-three-emo-cycleloss.json
│   ├── cvc-eng-ppgs-three-emo.json
│   ├── cvc-whispers-multi.json
│   └── cvc-whispers-three-emo.json
├── cvc627.png
├── data_utils_engppg.py
├── data_utils_whisper.py
├── losses.py
├── mel_processing.py
├── models.py
├── modules.py
├── ppg.py
├── ppgemoconvert_exp.py
├── preprocess_ppg.py
├── train_eng_ppg_emo_loss.py
├── train_whisper_emo.py
├── utils.py
├── whisper
│   ├── LICENSE
│   ├── README.md
│   ├── __init__.py
│   ├── __pycache__
│   │   ├── __init__.cpython-37.pyc
│   │   ├── audio.cpython-37.pyc
│   │   ├── decoding.cpython-37.pyc
│   │   ├── model.cpython-37.pyc
│   │   ├── tokenizer.cpython-37.pyc
│   │   └── utils.cpython-37.pyc
│   ├── assets
│   │   ├── gpt2
│   │   │   ├── merges.txt
│   │   │   ├── special_tokens_map.json
│   │   │   ├── tokenizer_config.json
│   │   │   └── vocab.json
│   │   ├── mel_filters.npz
│   │   └── multilingual
│   │       ├── added_tokens.json
│   │       ├── merges.txt
│   │       ├── special_tokens_map.json
│   │       ├── tokenizer_config.json
│   │       └── vocab.json
│   ├── audio.py
│   ├── decoding.py
│   ├── model.py
│   ├── normalizers
│   │   ├── __init__.py
│   │   ├── basic.py
│   │   ├── english.json
│   │   └── english.py
│   ├── tokenizer.py
│   └── utils.py
├── whisperconvert_exp.py
└── whisperconvert_longaudio.py

/LICENSE:

--------------------------------------------------------------------------------

1 | MIT License

2 |

3 | Copyright (c) 2023 ConsistencyVC

4 |

5 | Permission is hereby granted, free of charge, to any person obtaining a copy

6 | of this software and associated documentation files (the "Software"), to deal

7 | in the Software without restriction, including without limitation the rights

8 | to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

9 | copies of the Software, and to permit persons to whom the Software is

10 | furnished to do so, subject to the following conditions:

11 |

12 | The above copyright notice and this permission notice shall be included in all

13 | copies or substantial portions of the Software.

14 |

15 | THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

16 | IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

17 | FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

18 | AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

19 | LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

20 | OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

21 | SOFTWARE.

22 |

--------------------------------------------------------------------------------

/README.md:

--------------------------------------------------------------------------------

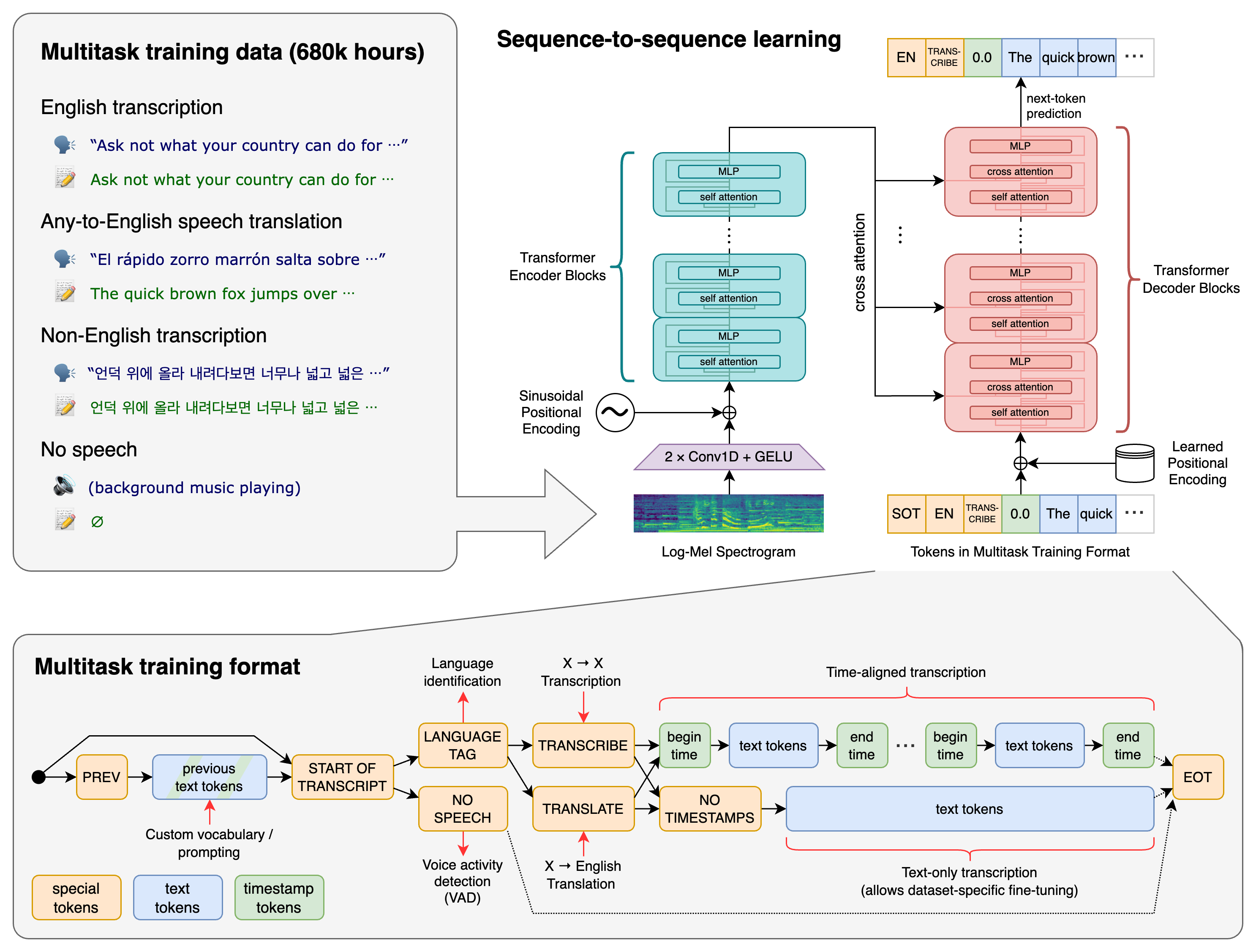

1 | # ConsistencyVC-voive-conversion

2 |

3 | ## Using joint training speaker encoder with consistency loss to achieve cross-lingual voice conversion and expressive voice conversion

4 |

5 | Demo page: https://consistencyvc.github.io/ConsistencyVC-demo-page

6 |

7 | The Whisper medium model can be downloaded here: https://drive.google.com/file/d/1PZsfQg3PUZuu1k6nHvavd6OcOB_8m1Aa/view?usp=drive_link

8 |

9 | The pre-trained models are available here: https://drive.google.com/drive/folders/1KvMN1V8BWCzJd-N8hfyP283rLQBKIbig?usp=sharing

10 |

11 | Note: Audio must be sampled at 16 kHz for both training and inference.
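
One way to check the 16 kHz requirement before training or inference is to read the sample rate from each WAV header. The sketch below is not part of the repository: it uses only the standard-library `wave` module, assumes uncompressed PCM WAV input, and the helper names `wav_sample_rate` and `is_16khz` are hypothetical.

```python
import wave


def wav_sample_rate(path):
    """Return the sample rate in Hz stored in a PCM WAV file header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate()


def is_16khz(path, expected_rate=16000):
    """True if the file's header reports the expected sample rate."""
    return wav_sample_rate(path) == expected_rate
```

Files that fail this check would need to be resampled with an audio tool of your choice before they are used.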

12 |

13 |

14 |

15 |

16 |

17 |

18 | # Inference with the pre-trained models (using WEO as an example)

19 |

20 | Generate the WEO of the source speech referenced by [src](https://github.com/ConsistencyVC/ConsistencyVC-voive-conversion/blob/467ed5e632b2b328d01c87cb73e92b26b36deb05/whisperconvert_exp.py#L39C1-L39C1) with preprocess_ppg.py.

21 |

22 | Set [tgt](https://github.com/ConsistencyVC/ConsistencyVC-voive-conversion/blob/467ed5e632b2b328d01c87cb73e92b26b36deb05/whisperconvert_exp.py#L47) to the path of the reference speech.

23 |

24 | Use whisperconvert_exp.py to achieve voice conversion using WEO as content information.

25 |

26 | For ConsistencyEVC, use ppgemoconvert_exp.py to achieve voice conversion using PPG as content information.

27 |

28 | # Inference for the long audio

29 | A separate script, whisperconvert_longaudio.py, handles inference on long audio.

30 | You don't need to run Whisper in a separate step: just edit [this part](https://github.com/ConsistencyVC/ConsistencyVC-voive-conversion/blob/83f72b0801240e7d932c9314431df6e75f2d1c22/whisperconvert_longaudio.py#L41) and run the script.

31 |

32 | # Train models on your own dataset

33 |

34 | Use ppg.py to generate the PPG.

35 |

36 | Use preprocess_ppg.py to generate the WEO.

37 |

38 | ## If you want to use WEO to train a cross-lingual voice conversion model:

39 |

40 | First, train the model without the speaker consistency loss for 100k steps:

41 |

42 | Change [this line](https://github.com/ConsistencyVC/ConsistencyVC-voive-conversion/blob/b5e8e984dffd5a12910d1846e25b128298933e40/train_whisper_emo.py#L214C11-L214C11) to

43 |

44 | ```python

45 | loss_gen_all = loss_gen + loss_fm + loss_mel + loss_kl# + loss_emo

46 | ```

47 |

48 | Run the training script:

49 |

50 | ```bash

51 | python train_whisper_emo.py -c configs/cvc-whispers-multi.json -m cvc-whispers-three

52 | ```

53 |

54 | Then change [this line](https://github.com/ConsistencyVC/ConsistencyVC-voive-conversion/blob/71cf17a5b65c12987ea7fba74d1d173ea1aae5cb/train_whisper_emo.py#L214) back (restoring the `+ loss_emo` term) and fine-tune the model with the speaker consistency loss:

55 |

56 | ```bash

57 | python train_whisper_emo.py -c configs/cvc-whispers-three-emo.json -m cvc-whispers-three

58 | ```

59 |

60 | ## If you want to use PPG to train an expressive voice conversion model:

61 |

62 | First, train the model without the speaker consistency loss for 100k steps:

63 |

64 | Change [this line](https://github.com/ConsistencyVC/ConsistencyVC-voive-conversion/blob/71cf17a5b65c12987ea7fba74d1d173ea1aae5cb/train_eng_ppg_emo_loss.py#L311) to

65 |

66 | ```python

67 | loss_gen_all = loss_gen + loss_fm + loss_mel + loss_kl# + loss_emo

68 | ```

69 |

70 | Run the training script:

71 |

72 | ```bash

73 | python train_eng_ppg_emo_loss.py -c configs/cvc-eng-ppgs-three-emo.json -m cvc-eng-ppgs-three-emo

74 | ```

75 |

76 | Then change [this line](https://github.com/ConsistencyVC/ConsistencyVC-voive-conversion/blob/71cf17a5b65c12987ea7fba74d1d173ea1aae5cb/train_eng_ppg_emo_loss.py#L311) back (restoring the `+ loss_emo` term) and fine-tune the model with the speaker consistency loss:

77 |

78 | ```bash

79 | python train_eng_ppg_emo_loss.py -c configs/cvc-eng-ppgs-three-emo-cycleloss.json -m cvc-eng-ppgs-three-emo

80 | ```
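
Both recipes above follow the same two-stage pattern: train with the base generator losses first, then add the speaker consistency term. A minimal sketch of that switch, assuming the consistency term is the `loss_emo` that appears in the commented-out part of the line. The function name `total_generator_loss` and the `use_consistency_loss` flag are hypothetical; the repository itself asks you to edit the line by hand.

```python
def total_generator_loss(loss_gen, loss_fm, loss_mel, loss_kl, loss_emo,
                         use_consistency_loss):
    """Sum the generator loss terms, optionally adding the consistency term.

    Works with plain floats or with tensors that support `+`.
    """
    total = loss_gen + loss_fm + loss_mel + loss_kl
    if use_consistency_loss:
        # Stage 2: fine-tune with the speaker consistency loss enabled.
        total = total + loss_emo
    return total


# Stage 1 (first 100k steps in the README): consistency term disabled.
stage1 = total_generator_loss(1.0, 2.0, 3.0, 4.0, 5.0, use_consistency_loss=False)
# Stage 2: consistency term enabled.
stage2 = total_generator_loss(1.0, 2.0, 3.0, 4.0, 5.0, use_consistency_loss=True)
```

A flag like this would avoid editing the training script between stages, at the cost of threading one more option through the config.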

81 |

82 |

83 | # Reference

84 |

85 | The code structure is based on [FreeVC-s](https://github.com/OlaWod/FreeVC). We suggest following the FreeVC instructions to install the Python requirements.

86 |

87 | The WEO content feature is based on [LoraSVC](https://github.com/PlayVoice/lora-svc).

88 |

89 | The PPG is from the [phoneme recognition model](https://huggingface.co/speech31/wav2vec2-large-english-TIMIT-phoneme_v3).

90 |

91 |

92 |

--------------------------------------------------------------------------------

/commons.py:

--------------------------------------------------------------------------------

1 | import math

2 | import numpy as np

3 | import torch

4 | from torch import nn

5 | from torch.nn import functional as F

6 |

7 |

8 | def init_weights(m, mean=0.0, std=0.01):
9 |     classname = m.__class__.__name__
10 |     if classname.find("Conv") != -1:
11 |         m.weight.data.normal_(mean, std)
12 |
13 |
14 | def get_padding(kernel_size, dilation=1):
15 |     return int((kernel_size*dilation - dilation)/2)
16 |
17 |
18 | def convert_pad_shape(pad_shape):
19 |     l = pad_shape[::-1]
20 |     pad_shape = [item for sublist in l for item in sublist]
21 |     return pad_shape
22 |
23 |
24 | def intersperse(lst, item):
25 |     result = [item] * (len(lst) * 2 + 1)
26 |     result[1::2] = lst
27 |     return result

28 |

29 |

30 | def kl_divergence(m_p, logs_p, m_q, logs_q):
31 |     """KL(P||Q)"""
32 |     kl = (logs_q - logs_p) - 0.5
33 |     kl += 0.5 * (torch.exp(2. * logs_p) + ((m_p - m_q)**2)) * torch.exp(-2. * logs_q)
34 |     return kl
35 |
36 |
37 | def rand_gumbel(shape):
38 |     """Sample from the Gumbel distribution, protect from overflows."""
39 |     uniform_samples = torch.rand(shape) * 0.99998 + 0.00001
40 |     return -torch.log(-torch.log(uniform_samples))
41 |
42 |
43 | def rand_gumbel_like(x):
44 |     g = rand_gumbel(x.size()).to(dtype=x.dtype, device=x.device)
45 |     return g
46 |
47 |
48 | def slice_segments(x, ids_str, segment_size=4):
49 |     ret = torch.zeros_like(x[:, :, :segment_size])
50 |     for i in range(x.size(0)):
51 |         idx_str = ids_str[i]
52 |         idx_end = idx_str + segment_size
53 |         ret[i] = x[i, :, idx_str:idx_end]
54 |     return ret

55 |

56 |

57 | def rand_slice_segments(x, x_lengths=None, segment_size=4):
58 |     b, d, t = x.size()
59 |     if x_lengths is None:
60 |         x_lengths = t
61 |     ids_str_max = x_lengths - segment_size + 1
62 |     ids_str = (torch.rand([b]).to(device=x.device) * ids_str_max).to(dtype=torch.long)
63 |     ret = slice_segments(x, ids_str, segment_size)
64 |     return ret, ids_str
65 |
66 |
67 | def rand_spec_segments(x, x_lengths=None, segment_size=4):
68 |     b, d, t = x.size()
69 |     if x_lengths is None:
70 |         x_lengths = t
71 |     ids_str_max = x_lengths - segment_size
72 |     ids_str = (torch.rand([b]).to(device=x.device) * ids_str_max).to(dtype=torch.long)
73 |     ret = slice_segments(x, ids_str, segment_size)
74 |     return ret, ids_str

75 |

76 |

77 | def get_timing_signal_1d(
78 |         length, channels, min_timescale=1.0, max_timescale=1.0e4):
79 |     position = torch.arange(length, dtype=torch.float)
80 |     num_timescales = channels // 2
81 |     log_timescale_increment = (
82 |         math.log(float(max_timescale) / float(min_timescale)) /
83 |         (num_timescales - 1))
84 |     inv_timescales = min_timescale * torch.exp(
85 |         torch.arange(num_timescales, dtype=torch.float) * -log_timescale_increment)
86 |     scaled_time = position.unsqueeze(0) * inv_timescales.unsqueeze(1)
87 |     signal = torch.cat([torch.sin(scaled_time), torch.cos(scaled_time)], 0)
88 |     signal = F.pad(signal, [0, 0, 0, channels % 2])
89 |     signal = signal.view(1, channels, length)
90 |     return signal
91 |
92 |
93 | def add_timing_signal_1d(x, min_timescale=1.0, max_timescale=1.0e4):
94 |     b, channels, length = x.size()
95 |     signal = get_timing_signal_1d(length, channels, min_timescale, max_timescale)
96 |     return x + signal.to(dtype=x.dtype, device=x.device)
97 |
98 |
99 | def cat_timing_signal_1d(x, min_timescale=1.0, max_timescale=1.0e4, axis=1):
100 |     b, channels, length = x.size()
101 |     signal = get_timing_signal_1d(length, channels, min_timescale, max_timescale)
102 |     return torch.cat([x, signal.to(dtype=x.dtype, device=x.device)], axis)
103 |
104 |
105 | def subsequent_mask(length):
106 |     mask = torch.tril(torch.ones(length, length)).unsqueeze(0).unsqueeze(0)
107 |     return mask

108 |

109 |

110 | @torch.jit.script
111 | def fused_add_tanh_sigmoid_multiply(input_a, input_b, n_channels):
112 |     n_channels_int = n_channels[0]
113 |     in_act = input_a + input_b
114 |     t_act = torch.tanh(in_act[:, :n_channels_int, :])
115 |     s_act = torch.sigmoid(in_act[:, n_channels_int:, :])
116 |     acts = t_act * s_act
117 |     return acts
118 |
119 |
120 | def convert_pad_shape(pad_shape):  # NOTE: redefines the identical function from line 18
121 |     l = pad_shape[::-1]
122 |     pad_shape = [item for sublist in l for item in sublist]
123 |     return pad_shape
124 |
125 |
126 | def shift_1d(x):
127 |     x = F.pad(x, convert_pad_shape([[0, 0], [0, 0], [1, 0]]))[:, :, :-1]
128 |     return x
129 |
130 |
131 | def sequence_mask(length, max_length=None):
132 |     if max_length is None:
133 |         max_length = length.max()
134 |     x = torch.arange(max_length, dtype=length.dtype, device=length.device)
135 |     return x.unsqueeze(0) < length.unsqueeze(1)

136 |

137 |

138 | def generate_path(duration, mask):
139 |     """
140 |     duration: [b, 1, t_x]
141 |     mask: [b, 1, t_y, t_x]
142 |     """
143 |     device = duration.device
144 |
145 |     b, _, t_y, t_x = mask.shape
146 |     cum_duration = torch.cumsum(duration, -1)
147 |
148 |     cum_duration_flat = cum_duration.view(b * t_x)
149 |     path = sequence_mask(cum_duration_flat, t_y).to(mask.dtype)
150 |     path = path.view(b, t_x, t_y)
151 |     path = path - F.pad(path, convert_pad_shape([[0, 0], [1, 0], [0, 0]]))[:, :-1]
152 |     path = path.unsqueeze(1).transpose(2,3) * mask
153 |     return path
154 |
155 |
156 | def clip_grad_value_(parameters, clip_value, norm_type=2):
157 |     if isinstance(parameters, torch.Tensor):
158 |         parameters = [parameters]
159 |     parameters = list(filter(lambda p: p.grad is not None, parameters))
160 |     norm_type = float(norm_type)
161 |     if clip_value is not None:
162 |         clip_value = float(clip_value)
163 |
164 |     total_norm = 0
165 |     for p in parameters:
166 |         param_norm = p.grad.data.norm(norm_type)
167 |         total_norm += param_norm.item() ** norm_type
168 |         if clip_value is not None:
169 |             p.grad.data.clamp_(min=-clip_value, max=clip_value)
170 |     total_norm = total_norm ** (1. / norm_type)
171 |     return total_norm

172 |

--------------------------------------------------------------------------------
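
`get_padding` in commons.py returns the padding that keeps a stride-1 dilated 1-D convolution length-preserving when the kernel size is odd. The pure-Python sketch below restates that helper and checks it against the standard convolution output-length formula, using the kernel sizes and dilations listed under `resblock_kernel_sizes` and `resblock_dilation_sizes` in the configs. `conv1d_output_length` is a hypothetical helper added for illustration, not a function from the repository.

```python
def get_padding(kernel_size, dilation=1):
    # Same formula as commons.get_padding.
    return int((kernel_size * dilation - dilation) / 2)


def conv1d_output_length(length, kernel_size, dilation=1, stride=1):
    """Output length of a 1-D convolution padded with get_padding()."""
    padding = get_padding(kernel_size, dilation)
    effective_kernel = dilation * (kernel_size - 1) + 1
    return (length + 2 * padding - effective_kernel) // stride + 1


# Every kernel/dilation pair from the configs keeps the sequence length unchanged.
for kernel_size in (3, 7, 11):
    for dilation in (1, 3, 5):
        assert conv1d_output_length(100, kernel_size, dilation) == 100
```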

/configs/cvc-eng-ppgs-three-emo-cycleloss.json:

--------------------------------------------------------------------------------

1 | {

2 | "train": {

3 | "log_interval": 200,

4 | "eval_interval": 6000,

5 | "seed": 1235,

6 | "epochs": 10000,

7 | "learning_rate": 2e-4,

8 | "betas": [0.8, 0.99],

9 | "eps": 1e-9,

10 | "batch_size": 42,

11 | "fp16_run": true,

12 | "lr_decay": 0.999875,

13 | "segment_size": 24000,

14 | "init_lr_ratio": 1,

15 | "warmup_epochs": 0,

16 | "c_mel": 45,

17 | "c_kl": 1.0,

18 | "use_sr": false,

19 | "max_speclen": 300,

20 | "port": "8006"

21 | },

22 | "data": {

23 | "training_files":"train.txt",

24 | "validation_files":"test.txt",

25 | "max_wav_value": 32768.0,

26 | "sampling_rate": 16000,

27 | "filter_length": 1024,

28 | "hop_length": 320,

29 | "win_length": 1024,

30 | "n_mel_channels": 80,

31 | "mel_fmin": 0.0,

32 | "mel_fmax": null

33 | },

34 | "model": {

35 | "inter_channels": 192,

36 | "hidden_channels": 192,

37 | "filter_channels": 768,

38 | "n_heads": 2,

39 | "n_layers": 6,

40 | "kernel_size": 3,

41 | "p_dropout": 0.1,

42 | "resblock": "1",

43 | "resblock_kernel_sizes": [3,7,11],

44 | "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

45 | "upsample_rates": [10,8,2,2],

46 | "upsample_initial_channel": 512,

47 | "upsample_kernel_sizes": [20,16,4,4],

48 | "n_layers_q": 3,

49 | "use_spectral_norm": false,

50 | "gin_channels": 256,

51 | "ssl_dim": 44,

52 | "use_spk": false

53 | }

54 | }

55 |

--------------------------------------------------------------------------------
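
Several values in this config are tied together by simple arithmetic. The sketch below copies them from the JSON above and works out the frame rate, the segment length in frames, and the product of the upsampling rates. Reading that product as something that should equal `hop_length` follows the usual HiFi-GAN-style decoder design and is an assumption here, since models.py is not shown in this section.

```python
from math import prod

# Values copied from cvc-eng-ppgs-three-emo-cycleloss.json
sampling_rate = 16000
hop_length = 320
upsample_rates = [10, 8, 2, 2]
segment_size = 24000
max_speclen = 300

frames_per_second = sampling_rate / hop_length               # spectrogram frames per second
frame_ms = 1000 * hop_length / sampling_rate                 # duration of one frame in ms
total_upsampling = prod(upsample_rates)                      # samples generated per frame
segment_frames = segment_size // hop_length                  # frames in one training segment
max_spec_seconds = max_speclen * hop_length / sampling_rate  # longest spectrogram crop in s

print(frames_per_second, frame_ms, total_upsampling, segment_frames, max_spec_seconds)
```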

/configs/cvc-eng-ppgs-three-emo.json:

--------------------------------------------------------------------------------

1 | {

2 | "train": {

3 | "log_interval": 200,

4 | "eval_interval": 6000,

5 | "seed": 1235,

6 | "epochs": 10000,

7 | "learning_rate": 2e-4,

8 | "betas": [0.8, 0.99],

9 | "eps": 1e-9,

10 | "batch_size": 108,

11 | "fp16_run": true,

12 | "lr_decay": 0.999875,

13 | "segment_size": 8960,

14 | "init_lr_ratio": 1,

15 | "warmup_epochs": 0,

16 | "c_mel": 45,

17 | "c_kl": 1.0,

18 | "use_sr": false,

19 | "max_speclen": 300,

20 | "port": "8006"

21 | },

22 | "data": {

23 | "training_files":"train.txt",

24 | "validation_files":"test.txt",

25 | "max_wav_value": 32768.0,

26 | "sampling_rate": 16000,

27 | "filter_length": 1024,

28 | "hop_length": 320,

29 | "win_length": 1024,

30 | "n_mel_channels": 80,

31 | "mel_fmin": 0.0,

32 | "mel_fmax": null

33 | },

34 | "model": {

35 | "inter_channels": 192,

36 | "hidden_channels": 192,

37 | "filter_channels": 768,

38 | "n_heads": 2,

39 | "n_layers": 6,

40 | "kernel_size": 3,

41 | "p_dropout": 0.1,

42 | "resblock": "1",

43 | "resblock_kernel_sizes": [3,7,11],

44 | "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

45 | "upsample_rates": [10,8,2,2],

46 | "upsample_initial_channel": 512,

47 | "upsample_kernel_sizes": [20,16,4,4],

48 | "n_layers_q": 3,

49 | "use_spectral_norm": false,

50 | "gin_channels": 256,

51 | "ssl_dim": 44,

52 | "use_spk": false

53 | }

54 | }

55 |

--------------------------------------------------------------------------------

/configs/cvc-whispers-multi.json:

--------------------------------------------------------------------------------

1 | {

2 | "train": {

3 | "log_interval": 200,

4 | "eval_interval": 6000,

5 | "seed": 1235,

6 | "epochs": 10000,

7 | "learning_rate": 2e-4,

8 | "betas": [0.8, 0.99],

9 | "eps": 1e-9,

10 | "batch_size": 108,

11 | "fp16_run": true,

12 | "lr_decay": 0.999875,

13 | "segment_size": 8960,

14 | "init_lr_ratio": 1,

15 | "warmup_epochs": 0,

16 | "c_mel": 45,

17 | "c_kl": 1.0,

18 | "use_sr": false,

19 | "max_speclen": 300,

20 | "port": "8001"

21 | },

22 | "data": {

23 | "training_files":"train.txt",

24 | "validation_files":"test.txt",

25 | "max_wav_value": 32768.0,

26 | "sampling_rate": 16000,

27 | "filter_length": 1024,

28 | "hop_length": 320,

29 | "win_length": 1024,

30 | "n_mel_channels": 80,

31 | "mel_fmin": 0.0,

32 | "mel_fmax": null

33 | },

34 | "model": {

35 | "inter_channels": 192,

36 | "hidden_channels": 192,

37 | "filter_channels": 768,

38 | "n_heads": 2,

39 | "n_layers": 6,

40 | "kernel_size": 3,

41 | "p_dropout": 0.1,

42 | "resblock": "1",

43 | "resblock_kernel_sizes": [3,7,11],

44 | "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

45 | "upsample_rates": [10,8,2,2],

46 | "upsample_initial_channel": 512,

47 | "upsample_kernel_sizes": [20,16,4,4],

48 | "n_layers_q": 3,

49 | "use_spectral_norm": false,

50 | "gin_channels": 256,

51 | "ssl_dim": 1024,

52 | "use_spk": false

53 | }

54 | }

55 |

--------------------------------------------------------------------------------

/configs/cvc-whispers-three-emo.json:

--------------------------------------------------------------------------------

1 | {

2 | "train": {

3 | "log_interval": 200,

4 | "eval_interval": 2500,

5 | "seed": 1235,

6 | "epochs": 10000,

7 | "learning_rate": 2e-4,

8 | "betas": [0.8, 0.99],

9 | "eps": 1e-9,

10 | "batch_size": 42,

11 | "fp16_run": true,

12 | "lr_decay": 0.999875,

13 | "segment_size": 24000,

14 | "init_lr_ratio": 1,

15 | "warmup_epochs": 0,

16 | "c_mel": 45,

17 | "c_kl": 1.0,

18 | "use_sr": false,

19 | "max_speclen": 300,

20 | "port": "8006"

21 | },

22 | "data": {

23 | "training_files":"train.txt",

24 | "validation_files":"test.txt",

25 | "max_wav_value": 32768.0,

26 | "sampling_rate": 16000,

27 | "filter_length": 1024,

28 | "hop_length": 320,

29 | "win_length": 1024,

30 | "n_mel_channels": 80,

31 | "mel_fmin": 0.0,

32 | "mel_fmax": null

33 | },

34 | "model": {

35 | "inter_channels": 192,

36 | "hidden_channels": 192,

37 | "filter_channels": 768,

38 | "n_heads": 2,

39 | "n_layers": 6,

40 | "kernel_size": 3,

41 | "p_dropout": 0.1,

42 | "resblock": "1",

43 | "resblock_kernel_sizes": [3,7,11],

44 | "resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

45 | "upsample_rates": [10,8,2,2],

46 | "upsample_initial_channel": 512,

47 | "upsample_kernel_sizes": [20,16,4,4],

48 | "n_layers_q": 3,

49 | "use_spectral_norm": false,

50 | "gin_channels": 256,

51 | "ssl_dim": 1024,

52 | "use_spk": false

53 | }

54 | }

55 |

--------------------------------------------------------------------------------
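
The two Whisper configs differ mainly in `batch_size` and `segment_size`: `cvc-whispers-multi.json` (the first-stage config in the README) pairs short segments with a large batch, while `cvc-whispers-three-emo.json` (the fine-tuning config) pairs longer segments with a smaller batch. The sketch below, with values copied from the two files, estimates the audio per batch under the assumption that every item contributes exactly one `segment_size` slice. Whether the training script slices this way is not shown in this section.

```python
SAMPLING_RATE = 16000

# batch_size and segment_size copied from the two config files
configs = {
    "cvc-whispers-multi.json": {"batch_size": 108, "segment_size": 8960},
    "cvc-whispers-three-emo.json": {"batch_size": 42, "segment_size": 24000},
}


def audio_seconds_per_batch(cfg, sampling_rate=SAMPLING_RATE):
    """Seconds of audio in one batch, assuming one segment per item."""
    return cfg["batch_size"] * cfg["segment_size"] / sampling_rate


for name, cfg in configs.items():
    segment_seconds = cfg["segment_size"] / SAMPLING_RATE
    print(f"{name}: {segment_seconds:.2f} s per segment, "
          f"{audio_seconds_per_batch(cfg):.2f} s of audio per batch")
```

Under that assumption the two stages process a similar amount of audio per batch, roughly a minute each.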

/cvc627.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/ConsistencyVC/ConsistencyVC-voive-conversion/b1506a2c4c68337de922b624c0df1a1dd034419d/cvc627.png

--------------------------------------------------------------------------------

/data_utils_engppg.py:

--------------------------------------------------------------------------------

1 | import time

2 | import os

3 | import random

4 | import numpy as np

5 | import torch

6 | import torch.utils.data

7 |

8 | import commons

9 | from mel_processing import spectrogram_torch, spec_to_mel_torch

10 | from utils import load_wav_to_torch, load_filepaths_and_text, transform

11 | #import h5py

12 |

13 |

14 | """Multi speaker version"""
15 | class TextAudioSpeakerLoader(torch.utils.data.Dataset):
16 |     """
17 |     1) loads the audio files listed in the filelist
18 |     2) loads the precomputed content features (PPG) stored next to each wav
19 |     3) computes spectrograms from audio files and caches them on disk.
20 |     """
21 |     def __init__(self, audiopaths, hparams):
22 |         self.audiopaths = load_filepaths_and_text(audiopaths)
23 |         self.max_wav_value = hparams.data.max_wav_value
24 |         self.sampling_rate = hparams.data.sampling_rate
25 |         self.filter_length = hparams.data.filter_length
26 |         self.hop_length = hparams.data.hop_length
27 |         self.win_length = hparams.data.win_length
28 |         self.sampling_rate = hparams.data.sampling_rate  # duplicate of line 24
29 |         self.use_sr = hparams.train.use_sr
30 |         self.use_spk = hparams.model.use_spk
31 |         self.spec_len = hparams.train.max_speclen
32 |
33 |         random.seed(1235)
34 |         random.shuffle(self.audiopaths)
35 |         self._filter()
36 |
37 |     def _filter(self):
38 |         """
39 |         Store spec lengths (no filtering is applied)
40 |         """
41 |         # Store spectrogram lengths for Bucketing
42 |         # wav_length ~= file_size / (wav_channels * Bytes per dim) = file_size / (1 * 2)
43 |         # spec_length = wav_length // hop_length
44 |
45 |         lengths = []
46 |         for audiopath in self.audiopaths:
47 |             lengths.append(os.path.getsize(audiopath[0]) // (2 * self.hop_length))
48 |         self.lengths = lengths

49 |

50 |     def get_audio(self, filename):
51 |         audio, sampling_rate = load_wav_to_torch(filename)
52 |         if sampling_rate != self.sampling_rate:
53 |             raise ValueError("{} SR doesn't match target {} SR, the audio is {}".format(
54 |                 sampling_rate, self.sampling_rate, filename))
55 |         audio_norm = audio / self.max_wav_value
56 |         audio_norm = audio_norm.unsqueeze(0)
57 |         spec_filename = filename.replace(".wav", ".f{}h{}w{}spec.pt".format(self.filter_length, self.hop_length, self.win_length))
58 |         if os.path.exists(spec_filename):
59 |             try:
60 |                 spec = torch.load(spec_filename)
61 |             except Exception:
62 |                 print(spec_filename, "could not be loaded; recomputing")
63 |                 spec = spectrogram_torch(audio_norm, self.filter_length,
64 |                     self.sampling_rate, self.hop_length, self.win_length,
65 |                     center=False)
66 |                 spec = torch.squeeze(spec, 0)
67 |                 torch.save(spec, spec_filename)
68 |         else:
69 |             #import sys
70 |             #print(spec_filename,"does not exist")
71 |             #sys.exit()
72 |             spec = spectrogram_torch(audio_norm, self.filter_length,
73 |                 self.sampling_rate, self.hop_length, self.win_length,
74 |                 center=False)
75 |             spec = torch.squeeze(spec, 0)
76 |             torch.save(spec, spec_filename)

77 |

78 |         if self.use_spk:
79 |             spk_filename = filename.replace(".wav", ".npy")
80 |             spk_filename = spk_filename.replace("DUMMY", "dataset/spk")
81 |             spk = torch.from_numpy(np.load(spk_filename))  # NOTE: loaded but not returned below
82 |
83 |         if not self.use_sr:
84 |             c_filename = filename.replace(".wav", "_eng_ppg.pt")
85 |             #c_filename = c_filename.replace("DUMMY", "dataset/wavlm")
86 |             c = torch.load(c_filename).squeeze(0)
87 |
88 |         else:
89 |             i = random.randint(68,92)
90 |             '''
91 |             basename = os.path.basename(filename)[:-4]
92 |             spkname = basename[:4]
93 |             #print(basename, spkname)
94 |             with h5py.File(f"dataset/rs/wavlm/{spkname}/{i}.hdf5","r") as f:
95 |                 c = torch.from_numpy(f[basename][()]).squeeze(0)
96 |             #print(c)
97 |             '''
98 |             import sys
99 |             sys.exit()
100 |             c_filename = filename.replace(".wav", f"_{i}.pt")
101 |             c_filename = c_filename.replace("DUMMY", "dataset/sr/wavlm")
102 |             c = torch.load(c_filename).squeeze(0)
103 |
104 |         # 2023.01.10 update: the code below can deteriorate model performance
105 |         # I added this code during clean-up, thinking it would perform better than my provided checkpoints, but it actually does the opposite.
106 |         # What an act of 'adding legs to a snake'!
107 |         '''
108 |         lmin = min(c.size(-1), spec.size(-1))
109 |         spec, c = spec[:, :lmin], c[:, :lmin]
110 |         audio_norm = audio_norm[:, :lmin*self.hop_length]
111 |         _spec, _c, _audio_norm = spec, c, audio_norm
112 |         while spec.size(-1) < self.spec_len:
113 |             spec = torch.cat((spec, _spec), -1)
114 |             c = torch.cat((c, _c), -1)
115 |             audio_norm = torch.cat((audio_norm, _audio_norm), -1)
116 |         start = random.randint(0, spec.size(-1) - self.spec_len)
117 |         end = start + self.spec_len
118 |         spec = spec[:, start:end]
119 |         c = c[:, start:end]
120 |         audio_norm = audio_norm[:, start*self.hop_length:end*self.hop_length]
121 |         '''
122 |
123 |         #if self.use_spk:
124 |         #    return c, spec, audio_norm, spk
125 |         #else:
126 |         return c, spec, audio_norm
127 |
128 |     def __getitem__(self, index):
129 |         return self.get_audio(self.audiopaths[index][0])
130 |
131 |     def __len__(self):
132 |         return len(self.audiopaths)

133 |

134 |

135 | class TextAudioSpeakerCollate():
136 |     """ Zero-pads model inputs and targets
137 |     """
138 |     def __init__(self, hps):
139 |         self.hps = hps
140 |         self.use_sr = hps.train.use_sr
141 |         self.use_spk = hps.model.use_spk
142 |
143 |     def __call__(self, batch):
144 |         """Collates a training batch from content features, spectrograms and waveforms
145 |         PARAMS
146 |         ------
147 |         batch: list of (c, spec, wav_normalized) tuples; a 4th element (speaker embedding) is expected when use_spk is set
148 |         """
149 |         # Sort the batch by content-feature length, longest first
150 |         _, ids_sorted_decreasing = torch.sort(
151 |             torch.LongTensor([x[0].size(1) for x in batch]),
152 |             dim=0, descending=True)
153 |
154 |         max_spec_len = max([x[1].size(1) for x in batch])
155 |         max_wav_len = max([x[2].size(1) for x in batch])
156 |
157 |         spec_lengths = torch.LongTensor(len(batch))
158 |         wav_lengths = torch.LongTensor(len(batch))
159 |         if self.use_spk:
160 |             spks = torch.FloatTensor(len(batch), batch[0][3].size(0))
161 |         else:
162 |             spks = None
163 |
164 |         c_padded = torch.FloatTensor(len(batch), batch[0][0].size(0), max_spec_len)
165 |         spec_padded = torch.FloatTensor(len(batch), batch[0][1].size(0), max_spec_len)
166 |         wav_padded = torch.FloatTensor(len(batch), 1, max_wav_len)
167 |         c_padded.zero_()
168 |         spec_padded.zero_()
169 |         wav_padded.zero_()
170 |
171 |         for i in range(len(ids_sorted_decreasing)):
172 |             row = batch[ids_sorted_decreasing[i]]
173 |
174 |             c = row[0]
175 |             c_padded[i, :, :c.size(1)] = c
176 |
177 |             spec = row[1]
178 |             spec_padded[i, :, :spec.size(1)] = spec
179 |             spec_lengths[i] = spec.size(1)
180 |
181 |             wav = row[2]
182 |             wav_padded[i, :, :wav.size(1)] = wav
183 |             wav_lengths[i] = wav.size(1)
184 |
185 |             if self.use_spk:
186 |                 spks[i] = row[3]
187 |
188 |         spec_seglen = spec_lengths[-1] if spec_lengths[-1] < self.hps.train.max_speclen + 1 else self.hps.train.max_speclen + 1
189 |         wav_seglen = spec_seglen * self.hps.data.hop_length
190 |
191 |         spec_padded, ids_slice = commons.rand_spec_segments(spec_padded, spec_lengths, spec_seglen)
192 |         wav_padded = commons.slice_segments(wav_padded, ids_slice * self.hps.data.hop_length, wav_seglen)
193 |
194 |         c_padded = commons.slice_segments(c_padded, ids_slice, spec_seglen)[:,:,:-1]
195 |
196 |         spec_padded = spec_padded[:,:,:-1]
197 |         wav_padded = wav_padded[:,:,:-self.hps.data.hop_length]
198 |
199 |         if self.use_spk:
200 |             return c_padded, spec_padded, wav_padded, spks
201 |         else:
202 |             return c_padded, spec_padded, wav_padded

203 |

204 |

205 | class DistributedBucketSampler(torch.utils.data.distributed.DistributedSampler):
206 |     """
207 |     Maintain similar input lengths in a batch.
208 |     Length groups are specified by boundaries.
209 |     Ex) boundaries = [b1, b2, b3] -> any batch is included either {x | b1 < length(x) <=b2} or {x | b2 < length(x) <= b3}.
210 |
211 |     It removes samples which are not included in the boundaries.
212 |     Ex) boundaries = [b1, b2, b3] -> any x s.t. length(x) <= b1 or length(x) > b3 are discarded.
213 |     """
214 |     def __init__(self, dataset, batch_size, boundaries, num_replicas=None, rank=None, shuffle=True):
215 |         super().__init__(dataset, num_replicas=num_replicas, rank=rank, shuffle=shuffle)
216 |         self.lengths = dataset.lengths
217 |         self.batch_size = batch_size
218 |         self.boundaries = boundaries
219 |
220 |         self.buckets, self.num_samples_per_bucket = self._create_buckets()
221 |         print(self.num_samples_per_bucket)
222 |         self.total_size = sum(self.num_samples_per_bucket)
223 |         self.num_samples = self.total_size // self.num_replicas
224 |
225 |     def _create_buckets(self):
226 |         buckets = [[] for _ in range(len(self.boundaries) - 1)]
227 |         for i in range(len(self.lengths)):
228 |             length = self.lengths[i]
229 |             idx_bucket = self._bisect(length)
230 |             if idx_bucket != -1:
231 |                 buckets[idx_bucket].append(i)
232 |
233 |         for i in range(len(buckets) - 1, -1, -1):  # include bucket 0: an empty bucket would divide by zero in __iter__
234 |             if len(buckets[i]) == 0:
235 |                 buckets.pop(i)
236 |                 self.boundaries.pop(i+1)
237 |
238 |         num_samples_per_bucket = []
239 |         for i in range(len(buckets)):
240 |             len_bucket = len(buckets[i])
241 |             total_batch_size = self.num_replicas * self.batch_size
242 |             rem = (total_batch_size - (len_bucket % total_batch_size)) % total_batch_size
243 |             num_samples_per_bucket.append(len_bucket + rem)
244 |         return buckets, num_samples_per_bucket

245 |

246 |     def __iter__(self):
247 |         # deterministically shuffle based on epoch
248 |         g = torch.Generator()
249 |         g.manual_seed(self.epoch)
250 |
251 |         indices = []
252 |         if self.shuffle:
253 |             for bucket in self.buckets:
254 |                 indices.append(torch.randperm(len(bucket), generator=g).tolist())
255 |         else:
256 |             for bucket in self.buckets:
257 |                 indices.append(list(range(len(bucket))))
258 |
259 |         batches = []
260 |         for i in range(len(self.buckets)):
261 |             bucket = self.buckets[i]
262 |             len_bucket = len(bucket)
263 |             ids_bucket = indices[i]
264 |             num_samples_bucket = self.num_samples_per_bucket[i]
265 |
266 |             # add extra samples to make it evenly divisible
267 |             rem = num_samples_bucket - len_bucket
268 |             ids_bucket = ids_bucket + ids_bucket * (rem // len_bucket) + ids_bucket[:(rem % len_bucket)]
269 |
270 |             # subsample
271 |             ids_bucket = ids_bucket[self.rank::self.num_replicas]
272 |
273 |             # batching
274 |             for j in range(len(ids_bucket) // self.batch_size):
275 |                 batch = [bucket[idx] for idx in ids_bucket[j*self.batch_size:(j+1)*self.batch_size]]
276 |                 batches.append(batch)
277 |
278 |         if self.shuffle:
279 |             batch_ids = torch.randperm(len(batches), generator=g).tolist()
280 |             batches = [batches[i] for i in batch_ids]
281 |         self.batches = batches
282 |
283 |         assert len(self.batches) * self.batch_size == self.num_samples
284 |         return iter(self.batches)
285 |
286 |     def _bisect(self, x, lo=0, hi=None):
287 |         if hi is None:
288 |             hi = len(self.boundaries) - 1
289 |
290 |         if hi > lo:
291 |             mid = (hi + lo) // 2
292 |             if self.boundaries[mid] < x and x <= self.boundaries[mid+1]:
293 |                 return mid
294 |             elif x <= self.boundaries[mid]:
295 |                 return self._bisect(x, lo, mid)
296 |             else:
297 |                 return self._bisect(x, mid + 1, hi)
298 |         else:
299 |             return -1
300 |
301 |     def __len__(self):
302 |         return self.num_samples // self.batch_size

303 |

--------------------------------------------------------------------------------
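
The bucketing rule used by `DistributedBucketSampler` can be illustrated without PyTorch. The sketch below is a simplified stand-in, not the class above: it uses the standard-library `bisect` module in place of the recursive `_bisect`, and the helper names `bucket_index` and `padded_bucket_size` are hypothetical. It reproduces the interval rule from the docstring (`b_i < length <= b_{i+1}`) and the rounding of each bucket up to a multiple of `num_replicas * batch_size`. The boundary values are made up for the example.

```python
from bisect import bisect_left


def bucket_index(length, boundaries):
    """Return i with boundaries[i] < length <= boundaries[i+1], or -1 if outside.

    `boundaries` must be sorted in ascending order.
    """
    i = bisect_left(boundaries, length) - 1
    return i if 0 <= i < len(boundaries) - 1 else -1


def padded_bucket_size(len_bucket, batch_size, num_replicas):
    """Bucket size after padding to a multiple of num_replicas * batch_size."""
    total_batch_size = num_replicas * batch_size
    rem = (total_batch_size - (len_bucket % total_batch_size)) % total_batch_size
    return len_bucket + rem


boundaries = [32, 300, 400]  # example values, not taken from the repository
for length in (32, 33, 300, 301, 400, 401):
    print(length, bucket_index(length, boundaries))
```

Lengths equal to the lowest boundary or above the highest one map to `-1`, which corresponds to the samples the sampler discards.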

/data_utils_whisper.py:

--------------------------------------------------------------------------------

1 | import time

2 | import os

3 | import random

4 | import numpy as np

5 | import torch

6 | import torch.utils.data

7 |

8 | import commons

9 | from mel_processing import spectrogram_torch, spec_to_mel_torch

10 | from utils import load_wav_to_torch, load_filepaths_and_text, transform

11 | #import h5py

12 |

13 |

14 | """Multi speaker version"""
15 | class TextAudioSpeakerLoader(torch.utils.data.Dataset):
16 |     """
17 |     1) loads the audio files listed in the filelist
18 |     2) loads the precomputed content features for each wav
19 |     3) computes spectrograms from audio files and caches them on disk.
20 |     """
21 |     def __init__(self, audiopaths, hparams):
22 |         self.audiopaths = load_filepaths_and_text(audiopaths)
23 |         self.max_wav_value = hparams.data.max_wav_value
24 |         self.sampling_rate = hparams.data.sampling_rate
25 |         self.filter_length = hparams.data.filter_length
26 |         self.hop_length = hparams.data.hop_length
27 |         self.win_length = hparams.data.win_length
28 |         self.sampling_rate = hparams.data.sampling_rate  # duplicate of line 24
29 |         self.use_sr = hparams.train.use_sr
30 |         self.use_spk = hparams.model.use_spk
31 |         self.spec_len = hparams.train.max_speclen
32 |
33 |         random.seed(1235)
34 |         random.shuffle(self.audiopaths)
35 |         self._filter()
36 |
37 |     def _filter(self):
38 |         """
39 |         Store spec lengths (no filtering is applied)
40 |         """
41 |         # Store spectrogram lengths for Bucketing
42 |         # wav_length ~= file_size / (wav_channels * Bytes per dim) = file_size / (1 * 2)
43 |         # spec_length = wav_length // hop_length
44 |
45 |         lengths = []
46 |         for audiopath in self.audiopaths:
47 |             lengths.append(os.path.getsize(audiopath[0]) // (2 * self.hop_length))
48 |         self.lengths = lengths

49 |

50 | def get_audio(self, filename):

51 | audio, sampling_rate = load_wav_to_torch(filename)

52 | if sampling_rate != self.sampling_rate:

53 | raise ValueError("{} SR doesn't match target {} SR, the audio is {}".format(

54 | sampling_rate, self.sampling_rate,filename))

55 | audio_norm = audio / self.max_wav_value

56 | audio_norm = audio_norm.unsqueeze(0)

57 | #spec_filename = filename.replace(".wav", ".spec.pt")

58 | spec_filename = filename.replace(".wav", ".f{}h{}w{}spec.pt".format(self.filter_length, self.hop_length, self.win_length))

59 | if os.path.exists(spec_filename):

60 | try:

61 | spec = torch.load(spec_filename)

62 | except Exception:  # cached spectrogram could not be loaded: recompute and overwrite it

63 | print(spec_filename)

64 | spec = spectrogram_torch(audio_norm, self.filter_length,

65 | self.sampling_rate, self.hop_length, self.win_length,

66 | center=False)

67 | spec = torch.squeeze(spec, 0)

68 | torch.save(spec, spec_filename)

69 | else:

70 | print(spec_filename)

71 | spec = spectrogram_torch(audio_norm, self.filter_length,

72 | self.sampling_rate, self.hop_length, self.win_length,

73 | center=False)

74 | spec = torch.squeeze(spec, 0)

75 | torch.save(spec, spec_filename)

76 |

77 | if self.use_spk:

78 | spk_filename = filename.replace(".wav", ".npy")

79 | spk_filename = spk_filename.replace("DUMMY", "dataset/spk")

80 | spk = torch.from_numpy(np.load(spk_filename))

81 |

82 | if not self.use_sr:

83 | c_filename = filename.replace(".wav", "whisper.pt.npy")

84 | #c_filename = c_filename.replace("DUMMY", "dataset/wavlm")

85 | #c = torch.load(c_filename).squeeze(0)

86 | c=torch.from_numpy(np.load(c_filename))

87 | c=c.transpose(1,0)

88 | else:

89 | i = random.randint(68,92)

90 | '''

91 | basename = os.path.basename(filename)[:-4]

92 | spkname = basename[:4]

93 | #print(basename, spkname)

94 | with h5py.File(f"dataset/rs/wavlm/{spkname}/{i}.hdf5","r") as f:

95 | c = torch.from_numpy(f[basename][()]).squeeze(0)

96 | #print(c)

97 | '''

98 | c_filename = filename.replace(".wav", f"_{i}.pt")

99 | c_filename = c_filename.replace("DUMMY", "dataset/sr/wavlm")

100 | c = torch.load(c_filename).squeeze(0)

101 |

102 | # 2023.01.10 update: code below can deteriorate model performance

103 | # I added this code while cleaning up, expecting it to outperform my provided checkpoints, but it actually does the opposite.

104 | # A case of 'adding legs to a snake' (spoiling something by adding what is unnecessary)!

105 | '''

106 | lmin = min(c.size(-1), spec.size(-1))

107 | spec, c = spec[:, :lmin], c[:, :lmin]

108 | audio_norm = audio_norm[:, :lmin*self.hop_length]

109 | _spec, _c, _audio_norm = spec, c, audio_norm

110 | while spec.size(-1) < self.spec_len:

111 | spec = torch.cat((spec, _spec), -1)

112 | c = torch.cat((c, _c), -1)

113 | audio_norm = torch.cat((audio_norm, _audio_norm), -1)

114 | start = random.randint(0, spec.size(-1) - self.spec_len)

115 | end = start + self.spec_len

116 | spec = spec[:, start:end]

117 | c = c[:, start:end]

118 | audio_norm = audio_norm[:, start*self.hop_length:end*self.hop_length]

119 | '''

120 |

121 | #if self.use_spk:

122 | # return c, spec, audio_norm, spk

123 | #else:

124 | return c, spec, audio_norm

125 |

126 | def __getitem__(self, index):

127 | return self.get_audio(self.audiopaths[index][0])

128 |

129 | def __len__(self):

130 | return len(self.audiopaths)

131 |

132 |

133 | class TextAudioSpeakerCollate():

134 | """ Zero-pads model inputs and targets

135 | """

136 | def __init__(self, hps):

137 | self.hps = hps

138 | self.use_sr = hps.train.use_sr

139 | self.use_spk = hps.model.use_spk

140 |

141 | def __call__(self, batch):

142 | """Collates a training batch from normalized text, audio and speaker identities

143 | PARAMS

144 | ------

145 | batch: [text_normalized, spec_normalized, wav_normalized, sid]

146 | """

147 | # Right zero-pad all one-hot text sequences to max input length

148 | _, ids_sorted_decreasing = torch.sort(

149 | torch.LongTensor([x[0].size(1) for x in batch]),

150 | dim=0, descending=True)

151 |

152 | max_spec_len = max([x[1].size(1) for x in batch])

153 | max_wav_len = max([x[2].size(1) for x in batch])

154 |

155 | spec_lengths = torch.LongTensor(len(batch))

156 | wav_lengths = torch.LongTensor(len(batch))

157 | if self.use_spk:

158 | spks = torch.FloatTensor(len(batch), batch[0][3].size(0))

159 | else:

160 | spks = None

161 |

162 | c_padded = torch.FloatTensor(len(batch), batch[0][0].size(0), max_spec_len)

163 | spec_padded = torch.FloatTensor(len(batch), batch[0][1].size(0), max_spec_len)

164 | wav_padded = torch.FloatTensor(len(batch), 1, max_wav_len)

165 | c_padded.zero_()

166 | spec_padded.zero_()

167 | wav_padded.zero_()

168 |

169 | for i in range(len(ids_sorted_decreasing)):

170 | row = batch[ids_sorted_decreasing[i]]

171 |

172 | c = row[0]

173 | c_padded[i, :, :c.size(1)] = c

174 |

175 | spec = row[1]

176 | spec_padded[i, :, :spec.size(1)] = spec

177 | spec_lengths[i] = spec.size(1)

178 |

179 | wav = row[2]

180 | wav_padded[i, :, :wav.size(1)] = wav

181 | wav_lengths[i] = wav.size(1)

182 |

183 | if self.use_spk:

184 | spks[i] = row[3]

185 |

186 | spec_seglen = spec_lengths[-1] if spec_lengths[-1] < self.hps.train.max_speclen + 1 else self.hps.train.max_speclen + 1

187 | wav_seglen = spec_seglen * self.hps.data.hop_length

188 |

189 | spec_padded, ids_slice = commons.rand_spec_segments(spec_padded, spec_lengths, spec_seglen)

190 | wav_padded = commons.slice_segments(wav_padded, ids_slice * self.hps.data.hop_length, wav_seglen)

191 |

192 | c_padded = commons.slice_segments(c_padded, ids_slice, spec_seglen)[:,:,:-1]

193 |

194 | spec_padded = spec_padded[:,:,:-1]

195 | wav_padded = wav_padded[:,:,:-self.hps.data.hop_length]

196 |

197 | if self.use_spk:

198 | return c_padded, spec_padded, wav_padded, spks

199 | else:

200 | return c_padded, spec_padded, wav_padded

201 |

202 |

203 | class DistributedBucketSampler(torch.utils.data.distributed.DistributedSampler):

204 | """

205 | Maintain similar input lengths in a batch.

206 | Length groups are specified by boundaries.

207 | Ex) boundaries = [b1, b2, b3] -> every batch is drawn from either {x | b1 < length(x) <= b2} or {x | b2 < length(x) <= b3}.

208 |

209 | It removes samples which are not included in the boundaries.

210 | Ex) boundaries = [b1, b2, b3] -> any x s.t. length(x) <= b1 or length(x) > b3 are discarded.

211 | """

212 | def __init__(self, dataset, batch_size, boundaries, num_replicas=None, rank=None, shuffle=True):

213 | super().__init__(dataset, num_replicas=num_replicas, rank=rank, shuffle=shuffle)

214 | self.lengths = dataset.lengths

215 | self.batch_size = batch_size

216 | self.boundaries = boundaries

217 |

218 | self.buckets, self.num_samples_per_bucket = self._create_buckets()

219 | print(self.num_samples_per_bucket)

220 | self.total_size = sum(self.num_samples_per_bucket)

221 | self.num_samples = self.total_size // self.num_replicas

222 |

223 | def _create_buckets(self):

224 | buckets = [[] for _ in range(len(self.boundaries) - 1)]

225 | for i in range(len(self.lengths)):

226 | length = self.lengths[i]

227 | idx_bucket = self._bisect(length)

228 | if idx_bucket != -1:

229 | buckets[idx_bucket].append(i)

230 |

231 | for i in range(len(buckets) - 1, 0, -1):

232 | if len(buckets[i]) == 0:

233 | buckets.pop(i)

234 | self.boundaries.pop(i+1)

235 |

236 | num_samples_per_bucket = []

237 | for i in range(len(buckets)):

238 | len_bucket = len(buckets[i])

239 | total_batch_size = self.num_replicas * self.batch_size

240 | rem = (total_batch_size - (len_bucket % total_batch_size)) % total_batch_size

241 | num_samples_per_bucket.append(len_bucket + rem)

242 | return buckets, num_samples_per_bucket

243 |

244 | def __iter__(self):

245 | # deterministically shuffle based on epoch

246 | g = torch.Generator()

247 | g.manual_seed(self.epoch)

248 |

249 | indices = []

250 | if self.shuffle:

251 | for bucket in self.buckets:

252 | indices.append(torch.randperm(len(bucket), generator=g).tolist())

253 | else:

254 | for bucket in self.buckets:

255 | indices.append(list(range(len(bucket))))

256 |

257 | batches = []

258 | for i in range(len(self.buckets)):

259 | bucket = self.buckets[i]

260 | len_bucket = len(bucket)

261 | ids_bucket = indices[i]

262 | num_samples_bucket = self.num_samples_per_bucket[i]

263 |

264 | # add extra samples to make it evenly divisible

265 | rem = num_samples_bucket - len_bucket

266 | ids_bucket = ids_bucket + ids_bucket * (rem // len_bucket) + ids_bucket[:(rem % len_bucket)]

267 |

268 | # subsample

269 | ids_bucket = ids_bucket[self.rank::self.num_replicas]

270 |

271 | # batching

272 | for j in range(len(ids_bucket) // self.batch_size):

273 | batch = [bucket[idx] for idx in ids_bucket[j*self.batch_size:(j+1)*self.batch_size]]

274 | batches.append(batch)

275 |

276 | if self.shuffle:

277 | batch_ids = torch.randperm(len(batches), generator=g).tolist()

278 | batches = [batches[i] for i in batch_ids]

279 | self.batches = batches

280 |

281 | assert len(self.batches) * self.batch_size == self.num_samples

282 | return iter(self.batches)

283 |

284 | def _bisect(self, x, lo=0, hi=None):

285 | if hi is None:

286 | hi = len(self.boundaries) - 1

287 |

288 | if hi > lo:

289 | mid = (hi + lo) // 2

290 | if self.boundaries[mid] < x and x <= self.boundaries[mid+1]:

291 | return mid

292 | elif x <= self.boundaries[mid]:

293 | return self._bisect(x, lo, mid)

294 | else:

295 | return self._bisect(x, mid + 1, hi)

296 | else:

297 | return -1

298 |

299 | def __len__(self):

300 | return self.num_samples // self.batch_size

301 |

--------------------------------------------------------------------------------
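The bucketing rule described in the `DistributedBucketSampler` docstring can be illustrated without PyTorch. The following is a minimal sketch, not the repository's implementation: it replaces the recursive `_bisect` with the standard-library `bisect_left`, assumes `boundaries` is strictly increasing, and uses hypothetical helper names (`assign_bucket`, `padded_bucket_size`) and example values.

```python
from bisect import bisect_left


def assign_bucket(length, boundaries):
    """Return i such that boundaries[i] < length <= boundaries[i + 1], or -1.

    Assumes `boundaries` is sorted in strictly increasing order.
    """
    if length <= boundaries[0] or length > boundaries[-1]:
        return -1  # outside all buckets: the sample is discarded
    # bisect_left finds the first boundary >= length; the bucket sits just below it.
    return bisect_left(boundaries, length) - 1


def padded_bucket_size(len_bucket, num_replicas, batch_size):
    """Round a bucket size up to a multiple of num_replicas * batch_size."""
    total_batch_size = num_replicas * batch_size
    rem = (total_batch_size - (len_bucket % total_batch_size)) % total_batch_size
    return len_bucket + rem


if __name__ == "__main__":
    boundaries = [32, 300, 400]  # hypothetical values
    for length in (32, 33, 300, 301, 400, 401):
        print(length, assign_bucket(length, boundaries))
    print(padded_bucket_size(10, num_replicas=2, batch_size=4))
```

Rounding each bucket up to a multiple of `num_replicas * batch_size` is what lets every replica receive the same number of full batches, which the assertion at the end of `__iter__` relies on.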

/losses.py:

--------------------------------------------------------------------------------

1 | import torch

2 | from torch.nn import functional as F

3 |

4 | import commons

5 |

6 |

7 | def feature_loss(fmap_r, fmap_g):

8 | loss = 0

9 | for dr, dg in zip(fmap_r, fmap_g):

10 | for rl, gl in zip(dr, dg):

11 | rl = rl.float().detach()

12 | gl = gl.float()

13 | loss += torch.mean(torch.abs(rl - gl))

14 |

15 | return loss * 2

16 |

17 |

18 | def discriminator_loss(disc_real_outputs, disc_generated_outputs):

19 | loss = 0

20 | r_losses = []

21 | g_losses = []

22 | for dr, dg in zip(disc_real_outputs, disc_generated_outputs):

23 | dr = dr.float()

24 | dg = dg.float()

25 | r_loss = torch.mean((1-dr)**2)

26 | g_loss = torch.mean(dg**2)

27 | loss += (r_loss + g_loss)

28 | r_losses.append(r_loss.item())

29 | g_losses.append(g_loss.item())

30 |

31 | return loss, r_losses, g_losses

32 |

33 |

34 | def generator_loss(disc_outputs):

35 | loss = 0

36 | gen_losses = []

37 | for dg in disc_outputs:

38 | dg = dg.float()

39 | l = torch.mean((1-dg)**2)

40 | gen_losses.append(l)

41 | loss += l

42 |

43 | return loss, gen_losses

44 |

45 |

46 | def kl_loss(z_p, logs_q, m_p, logs_p, z_mask):

47 | """

48 | z_p, logs_q: [b, h, t_t]

49 | m_p, logs_p: [b, h, t_t]

50 | """

51 | z_p = z_p.float()

52 | logs_q = logs_q.float()

53 | m_p = m_p.float()

54 | logs_p = logs_p.float()

55 | z_mask = z_mask.float()

56 | #print(logs_p)

57 | kl = logs_p - logs_q - 0.5

58 | kl += 0.5 * ((z_p - m_p)**2) * torch.exp(-2. * logs_p)

59 | kl = torch.sum(kl * z_mask)

60 | l = kl / torch.sum(z_mask)

61 | return l

62 |

--------------------------------------------------------------------------------
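The `discriminator_loss` and `generator_loss` functions in `losses.py` use least-squares GAN objectives: real outputs are pushed toward 1 and generated outputs toward 0 for the discriminator, while the generator pushes its outputs toward 1. The sketch below restates those formulas on plain Python lists for a single discriminator; the function names and sample scores are hypothetical, and the real code operates on lists of tensors and sums over discriminators.

```python
def mean(values):
    return sum(values) / len(values)


def lsgan_discriminator_loss(real_scores, fake_scores):
    """mean((1 - D(real))^2) + mean(D(fake)^2) for one discriminator."""
    r_loss = mean([(1.0 - r) ** 2 for r in real_scores])
    g_loss = mean([f ** 2 for f in fake_scores])
    return r_loss + g_loss


def lsgan_generator_loss(fake_scores):
    """mean((1 - D(fake))^2) for one discriminator."""
    return mean([(1.0 - f) ** 2 for f in fake_scores])


if __name__ == "__main__":
    # A discriminator that separates real from generated samples perfectly.
    print(lsgan_discriminator_loss([1.0, 1.0], [0.0, 0.0]))
    print(lsgan_generator_loss([0.0, 0.0]))
```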

/mel_processing.py:

--------------------------------------------------------------------------------

1 | import math

2 | import os

3 | import random

4 | import torch

5 | from torch import nn

6 | import torch.nn.functional as F

7 | import torch.utils.data

8 | import numpy as np

9 | import librosa

10 | import librosa.util as librosa_util

11 | from librosa.util import normalize, pad_center, tiny

12 | from scipy.signal import get_window

13 | from scipy.io.wavfile import read

14 | from librosa.filters import mel as librosa_mel_fn

15 |

16 | MAX_WAV_VALUE = 32768.0

17 |

18 |

19 | def dynamic_range_compression_torch(x, C=1, clip_val=1e-5):

20 | """

21 | PARAMS

22 | ------

23 | C: compression factor

24 | """

25 | return torch.log(torch.clamp(x, min=clip_val) * C)

26 |

27 |

28 | def dynamic_range_decompression_torch(x, C=1):

29 | """

30 | PARAMS

31 | ------

32 | C: compression factor used to compress

33 | """

34 | return torch.exp(x) / C

35 |

36 |

37 | def spectral_normalize_torch(magnitudes):

38 | output = dynamic_range_compression_torch(magnitudes)

39 | return output

40 |

41 |

42 | def spectral_de_normalize_torch(magnitudes):

43 | output = dynamic_range_decompression_torch(magnitudes)

44 | return output

45 |

46 |

47 | mel_basis = {}

48 | hann_window = {}

49 |

50 |

51 | def spectrogram_torch(y, n_fft, sampling_rate, hop_size, win_size, center=False):

52 | if torch.min(y) < -1.:

53 | print('min value is ', torch.min(y))

54 | if torch.max(y) > 1.:

55 | print('max value is ', torch.max(y))

56 |

57 | global hann_window

58 | dtype_device = str(y.dtype) + '_' + str(y.device)

59 | wnsize_dtype_device = str(win_size) + '_' + dtype_device

60 | if wnsize_dtype_device not in hann_window:

61 | hann_window[wnsize_dtype_device] = torch.hann_window(win_size).to(dtype=y.dtype, device=y.device)

62 |

63 | y = torch.nn.functional.pad(y.unsqueeze(1), (int((n_fft-hop_size)/2), int((n_fft-hop_size)/2)), mode='reflect')

64 | y = y.squeeze(1)

65 |

66 | spec = torch.stft(y, n_fft, hop_length=hop_size, win_length=win_size, window=hann_window[wnsize_dtype_device],

67 | center=center, pad_mode='reflect', normalized=False, onesided=True, return_complex=False)

68 |

69 | spec = torch.sqrt(spec.pow(2).sum(-1) + 1e-6)

70 | return spec

71 |

72 |

73 | def spec_to_mel_torch(spec, n_fft, num_mels, sampling_rate, fmin, fmax):

74 | global mel_basis

75 | dtype_device = str(spec.dtype) + '_' + str(spec.device)

76 | fmax_dtype_device = str(fmax) + '_' + dtype_device

77 | if fmax_dtype_device not in mel_basis:

78 | mel = librosa_mel_fn(sr=sampling_rate, n_fft=n_fft, n_mels=num_mels, fmin=fmin, fmax=fmax)

79 | mel_basis[fmax_dtype_device] = torch.from_numpy(mel).to(dtype=spec.dtype, device=spec.device)

80 | spec = torch.matmul(mel_basis[fmax_dtype_device], spec)

81 | spec = spectral_normalize_torch(spec)

82 | return spec

83 |

84 |

85 | def mel_spectrogram_torch(y, n_fft, num_mels, sampling_rate, hop_size, win_size, fmin, fmax, center=False):

86 | if torch.min(y) < -1.:

87 | print('min value is ', torch.min(y))

88 | if torch.max(y) > 1.:

89 | print('max value is ', torch.max(y))

90 |

91 | global mel_basis, hann_window

92 | dtype_device = str(y.dtype) + '_' + str(y.device)

93 | fmax_dtype_device = str(fmax) + '_' + dtype_device

94 | wnsize_dtype_device = str(win_size) + '_' + dtype_device

95 | if fmax_dtype_device not in mel_basis:

96 | mel = librosa_mel_fn(sr=sampling_rate, n_fft=n_fft, n_mels=num_mels, fmin=fmin, fmax=fmax)

97 | mel_basis[fmax_dtype_device] = torch.from_numpy(mel).to(dtype=y.dtype, device=y.device)

98 | if wnsize_dtype_device not in hann_window:

99 | hann_window[wnsize_dtype_device] = torch.hann_window(win_size).to(dtype=y.dtype, device=y.device)

100 |

101 | y = torch.nn.functional.pad(y.unsqueeze(1), (int((n_fft-hop_size)/2), int((n_fft-hop_size)/2)), mode='reflect')

102 | y = y.squeeze(1)

103 |

104 | spec = torch.stft(y, n_fft, hop_length=hop_size, win_length=win_size, window=hann_window[wnsize_dtype_device],

105 | center=center, pad_mode='reflect', normalized=False, onesided=True, return_complex=False)

106 |

107 | spec = torch.sqrt(spec.pow(2).sum(-1) + 1e-6)

108 |

109 | spec = torch.matmul(mel_basis[fmax_dtype_device], spec)

110 | spec = spectral_normalize_torch(spec)

111 |

112 | return spec

113 |

--------------------------------------------------------------------------------
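`spectrogram_torch` reflect-pads the waveform by `(n_fft - hop_size) / 2` on each side and calls `torch.stft` with `center=False`. Under that scheme the number of frames works out to `num_samples // hop_size`, which agrees with the `spec_length = wav_length // hop_length` estimate in the dataset's `_filter` method. The arithmetic can be sketched as follows, assuming `n_fft - hop_size` is even and `num_samples >= hop_size`; the helper name and the example values are hypothetical and not taken from the repository's configs.

```python
def num_frames(num_samples, n_fft, hop_size):
    """Frame count of an STFT with center=False after symmetric padding."""
    pad = int((n_fft - hop_size) / 2)   # padding added on each side
    padded = num_samples + 2 * pad      # length seen by the STFT
    return 1 + (padded - n_fft) // hop_size


if __name__ == "__main__":
    # Hypothetical settings: 1 second of 16 kHz audio.
    print(num_frames(16000, n_fft=1280, hop_size=320))
    print(16000 // 320)
```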

/models.py:

--------------------------------------------------------------------------------

1 | import copy

2 | import math

3 | import torch

4 | from torch import nn

5 | from torch.nn import functional as F

6 |

7 | import commons

8 | import modules

9 |

10 | from torch.nn import Conv1d, ConvTranspose1d, AvgPool1d, Conv2d

11 | from torch.nn.utils import weight_norm, remove_weight_norm, spectral_norm

12 | from commons import init_weights, get_padding

13 |

14 |

15 | class ResidualCouplingBlock(nn.Module):

16 | def __init__(self,

17 | channels,

18 | hidden_channels,

19 | kernel_size,

20 | dilation_rate,

21 | n_layers,

22 | n_flows=4,

23 | gin_channels=0):

24 | super().__init__()

25 | self.channels = channels

26 | self.hidden_channels = hidden_channels

27 | self.kernel_size = kernel_size

28 | self.dilation_rate = dilation_rate

29 | self.n_layers = n_layers

30 | self.n_flows = n_flows

31 | self.gin_channels = gin_channels

32 |

33 | self.flows = nn.ModuleList()

34 | for i in range(n_flows):

35 | self.flows.append(modules.ResidualCouplingLayer(channels, hidden_channels, kernel_size, dilation_rate, n_layers, gin_channels=gin_channels, mean_only=True))

36 | self.flows.append(modules.Flip())

37 |

38 | def forward(self, x, x_mask, g=None, reverse=False):

39 | if not reverse:

40 | for flow in self.flows:

41 | x, _ = flow(x, x_mask, g=g, reverse=reverse)

42 | else:

43 | for flow in reversed(self.flows):

44 | x = flow(x, x_mask, g=g, reverse=reverse)

45 | return x

46 |

47 |

48 | class Encoder(nn.Module):

49 | def __init__(self,

50 | in_channels,

51 | out_channels,

52 | hidden_channels,

53 | kernel_size,

54 | dilation_rate,

55 | n_layers,

56 | gin_channels=0):

57 | super().__init__()

58 | self.in_channels = in_channels

59 | self.out_channels = out_channels

60 | self.hidden_channels = hidden_channels

61 | self.kernel_size = kernel_size

62 | self.dilation_rate = dilation_rate

63 | self.n_layers = n_layers

64 | self.gin_channels = gin_channels

65 |

66 | self.pre = nn.Conv1d(in_channels, hidden_channels, 1)

67 | self.enc = modules.WN(hidden_channels, kernel_size, dilation_rate, n_layers, gin_channels=gin_channels)

68 | self.proj = nn.Conv1d(hidden_channels, out_channels * 2, 1)

69 |

70 | def forward(self, x, x_lengths, g=None):

71 | x_mask = torch.unsqueeze(commons.sequence_mask(x_lengths, x.size(2)), 1).to(x.dtype)

72 | x = self.pre(x) * x_mask

73 | x = self.enc(x, x_mask, g=g)

74 | stats = self.proj(x) * x_mask

75 | m, logs = torch.split(stats, self.out_channels, dim=1)

76 | z = (m + torch.randn_like(m) * torch.exp(logs)) * x_mask

77 | return z, m, logs, x_mask

78 |

79 |

80 | class Generator(torch.nn.Module):

81 | def __init__(self, initial_channel, resblock, resblock_kernel_sizes, resblock_dilation_sizes, upsample_rates, upsample_initial_channel, upsample_kernel_sizes, gin_channels=0):

82 | super(Generator, self).__init__()

83 | self.num_kernels = len(resblock_kernel_sizes)

84 | self.num_upsamples = len(upsample_rates)

85 | self.conv_pre = Conv1d(initial_channel, upsample_initial_channel, 7, 1, padding=3)

86 | resblock = modules.ResBlock1 if resblock == '1' else modules.ResBlock2

87 |

88 | self.ups = nn.ModuleList()

89 | for i, (u, k) in enumerate(zip(upsample_rates, upsample_kernel_sizes)):

90 | self.ups.append(weight_norm(

91 | ConvTranspose1d(upsample_initial_channel//(2**i), upsample_initial_channel//(2**(i+1)),

92 | k, u, padding=(k-u)//2)))

93 |

94 | self.resblocks = nn.ModuleList()

95 | for i in range(len(self.ups)):

96 | ch = upsample_initial_channel//(2**(i+1))

97 | for j, (k, d) in enumerate(zip(resblock_kernel_sizes, resblock_dilation_sizes)):

98 | self.resblocks.append(resblock(ch, k, d))

99 |

100 | self.conv_post = Conv1d(ch, 1, 7, 1, padding=3, bias=False)

101 | self.ups.apply(init_weights)

102 |

103 | if gin_channels != 0:

104 | self.cond = nn.Conv1d(gin_channels, upsample_initial_channel, 1)

105 |

106 | def forward(self, x, g=None):

107 | x = self.conv_pre(x)

108 | if g is not None:

109 | x = x + self.cond(g)

110 |

111 | for i in range(self.num_upsamples):

112 | x = F.leaky_relu(x, modules.LRELU_SLOPE)

113 | x = self.ups[i](x)

114 | xs = None

115 | for j in range(self.num_kernels):

116 | if xs is None:

117 | xs = self.resblocks[i*self.num_kernels+j](x)

118 | else:

119 | xs += self.resblocks[i*self.num_kernels+j](x)

120 | x = xs / self.num_kernels

121 | x = F.leaky_relu(x)

122 | x = self.conv_post(x)

123 | x = torch.tanh(x)

124 |

125 | return x

126 |

127 | def remove_weight_norm(self):

128 | print('Removing weight norm...')

129 | for l in self.ups:

130 | remove_weight_norm(l)

131 | for l in self.resblocks:

132 | l.remove_weight_norm()

133 |

134 |

135 | class DiscriminatorP(torch.nn.Module):

136 | def __init__(self, period, kernel_size=5, stride=3, use_spectral_norm=False):

137 | super(DiscriminatorP, self).__init__()

138 | self.period = period

139 | self.use_spectral_norm = use_spectral_norm

140 | norm_f = spectral_norm if use_spectral_norm else weight_norm

141 | self.convs = nn.ModuleList([

142 | norm_f(Conv2d(1, 32, (kernel_size, 1), (stride, 1), padding=(get_padding(kernel_size, 1), 0))),

143 | norm_f(Conv2d(32, 128, (kernel_size, 1), (stride, 1), padding=(get_padding(kernel_size, 1), 0))),

144 | norm_f(Conv2d(128, 512, (kernel_size, 1), (stride, 1), padding=(get_padding(kernel_size, 1), 0))),

145 | norm_f(Conv2d(512, 1024, (kernel_size, 1), (stride, 1), padding=(get_padding(kernel_size, 1), 0))),

146 | norm_f(Conv2d(1024, 1024, (kernel_size, 1), 1, padding=(get_padding(kernel_size, 1), 0))),

147 | ])

148 | self.conv_post = norm_f(Conv2d(1024, 1, (3, 1), 1, padding=(1, 0)))

149 |

150 | def forward(self, x):

151 | fmap = []

152 |

153 | # 1d to 2d

154 | b, c, t = x.shape

155 | if t % self.period != 0: # pad first

156 | n_pad = self.period - (t % self.period)

157 | x = F.pad(x, (0, n_pad), "reflect")

158 | t = t + n_pad

159 | x = x.view(b, c, t // self.period, self.period)

160 |

161 | for l in self.convs:

162 | x = l(x)

163 | x = F.leaky_relu(x, modules.LRELU_SLOPE)

164 | fmap.append(x)

165 | x = self.conv_post(x)

166 | fmap.append(x)

167 | x = torch.flatten(x, 1, -1)

168 |

169 | return x, fmap

170 |

171 |

172 | class DiscriminatorS(torch.nn.Module):

173 | def __init__(self, use_spectral_norm=False):

174 | super(DiscriminatorS, self).__init__()

175 | norm_f = spectral_norm if use_spectral_norm else weight_norm

176 | self.convs = nn.ModuleList([

177 | norm_f(Conv1d(1, 16, 15, 1, padding=7)),

178 | norm_f(Conv1d(16, 64, 41, 4, groups=4, padding=20)),

179 | norm_f(Conv1d(64, 256, 41, 4, groups=16, padding=20)),

180 | norm_f(Conv1d(256, 1024, 41, 4, groups=64, padding=20)),

181 | norm_f(Conv1d(1024, 1024, 41, 4, groups=256, padding=20)),

182 | norm_f(Conv1d(1024, 1024, 5, 1, padding=2)),

183 | ])

184 | self.conv_post = norm_f(Conv1d(1024, 1, 3, 1, padding=1))

185 |

186 | def forward(self, x):

187 | fmap = []

188 |

189 | for l in self.convs:

190 | x = l(x)

191 | x = F.leaky_relu(x, modules.LRELU_SLOPE)

192 | fmap.append(x)

193 | x = self.conv_post(x)

194 | fmap.append(x)

195 | x = torch.flatten(x, 1, -1)

196 |

197 | return x, fmap

198 |

199 |

200 | class MultiPeriodDiscriminator(torch.nn.Module):

201 | def __init__(self, use_spectral_norm=False):

202 | super(MultiPeriodDiscriminator, self).__init__()

203 | periods = [2,3,5,7,11]

204 |

205 | discs = [DiscriminatorS(use_spectral_norm=use_spectral_norm)]

206 | discs = discs + [DiscriminatorP(i, use_spectral_norm=use_spectral_norm) for i in periods]

207 | self.discriminators = nn.ModuleList(discs)

208 |

209 | def forward(self, y, y_hat):

210 | y_d_rs = []

211 | y_d_gs = []

212 | fmap_rs = []

213 | fmap_gs = []

214 | for i, d in enumerate(self.discriminators):

215 | y_d_r, fmap_r = d(y)

216 | y_d_g, fmap_g = d(y_hat)

217 | y_d_rs.append(y_d_r)

218 | y_d_gs.append(y_d_g)

219 | fmap_rs.append(fmap_r)

220 | fmap_gs.append(fmap_g)

221 |

222 | return y_d_rs, y_d_gs, fmap_rs, fmap_gs

223 |

224 |

225 | class SpeakerEncoder(torch.nn.Module):

226 | def __init__(self, mel_n_channels=80, model_num_layers=3, model_hidden_size=256, model_embedding_size=256):

227 | super(SpeakerEncoder, self).__init__()

228 | self.lstm = nn.LSTM(mel_n_channels, model_hidden_size, model_num_layers, batch_first=True)

229 | self.linear = nn.Linear(model_hidden_size, model_embedding_size)

230 | self.relu = nn.ReLU()

231 |

232 | def forward(self, mels):

233 | self.lstm.flatten_parameters()

234 | _, (hidden, _) = self.lstm(mels)

235 | embeds_raw = self.relu(self.linear(hidden[-1]))

236 | return embeds_raw / torch.norm(embeds_raw, dim=1, keepdim=True)

237 |

238 | def compute_partial_slices(self, total_frames, partial_frames, partial_hop):

239 | mel_slices = []

240 | for i in range(0, total_frames-partial_frames, partial_hop):

241 | mel_range = torch.arange(i, i+partial_frames)

242 | mel_slices.append(mel_range)

243 |

244 | return mel_slices

245 |

246 | def embed_utterance(self, mel, partial_frames=128, partial_hop=64):

247 | mel_len = mel.size(1)

248 | last_mel = mel[:,-partial_frames:]

249 |

250 | if mel_len > partial_frames:

251 | mel_slices = self.compute_partial_slices(mel_len, partial_frames, partial_hop)

252 | mels = list(mel[:,s] for s in mel_slices)

253 | mels.append(last_mel)

254 | mels = torch.stack(tuple(mels), 0).squeeze(1)

255 |

256 | with torch.no_grad():

257 | partial_embeds = self(mels)

258 | embed = torch.mean(partial_embeds, axis=0).unsqueeze(0)

259 | #embed = embed / torch.linalg.norm(embed, 2)

260 | else:

261 | with torch.no_grad():

262 | embed = self(last_mel)

263 |

264 | return embed

265 |

266 |

267 | class SynthesizerTrn(nn.Module):

268 | """

269 | Synthesizer for Training

270 | """

271 |

272 | def __init__(self,

273 | spec_channels,

274 | segment_size,

275 | inter_channels,

276 | hidden_channels,

277 | filter_channels,

278 | n_heads,

279 | n_layers,

280 | kernel_size,

281 | p_dropout,

282 | resblock,

283 | resblock_kernel_sizes,

284 | resblock_dilation_sizes,

285 | upsample_rates,

286 | upsample_initial_channel,

287 | upsample_kernel_sizes,

288 | gin_channels,

289 | ssl_dim,

290 | use_spk,

291 | **kwargs):

292 |

293 | super().__init__()

294 | self.spec_channels = spec_channels

295 | self.inter_channels = inter_channels

296 | self.hidden_channels = hidden_channels

297 | self.filter_channels = filter_channels

298 | self.n_heads = n_heads

299 | self.n_layers = n_layers

300 | self.kernel_size = kernel_size

301 | self.p_dropout = p_dropout

302 | self.resblock = resblock

303 | self.resblock_kernel_sizes = resblock_kernel_sizes

304 | self.resblock_dilation_sizes = resblock_dilation_sizes

305 | self.upsample_rates = upsample_rates

306 | self.upsample_initial_channel = upsample_initial_channel

307 | self.upsample_kernel_sizes = upsample_kernel_sizes

308 | self.segment_size = segment_size

309 | self.gin_channels = gin_channels

310 | self.ssl_dim = ssl_dim

311 | self.use_spk = use_spk

312 |

313 | self.enc_p = Encoder(ssl_dim, inter_channels, hidden_channels, 5, 1, 16)

314 | self.dec = Generator(inter_channels, resblock, resblock_kernel_sizes, resblock_dilation_sizes, upsample_rates, upsample_initial_channel, upsample_kernel_sizes, gin_channels=gin_channels)

315 | self.enc_q = Encoder(spec_channels, inter_channels, hidden_channels, 5, 1, 16, gin_channels=gin_channels)

316 | self.flow = ResidualCouplingBlock(inter_channels, hidden_channels, 5, 1, 4, gin_channels=gin_channels)

317 |

318 | if not self.use_spk:

319 | self.enc_spk = SpeakerEncoder(model_hidden_size=gin_channels, model_embedding_size=gin_channels)

320 |

321 | def forward(self, c, spec, g=None, mel=None, c_lengths=None, spec_lengths=None):

322 | if c_lengths is None:

323 | c_lengths = (torch.ones(c.size(0)) * c.size(-1)).to(c.device)

324 | if spec_lengths is None:

325 | spec_lengths = (torch.ones(spec.size(0)) * spec.size(-1)).to(spec.device)

326 |

327 | if not self.use_spk:

328 | #print(torch.max(mel),torch.min(mel),mel.size())

329 | g_raw = self.enc_spk(mel.transpose(1,2))

330 | g = g_raw.unsqueeze(-1)

331 |

332 | _, m_p, logs_p, _ = self.enc_p(c, c_lengths)  # Would it be better to also pass g in here? models_g_content.py contains the model code for that variant.

333 | z, m_q, logs_q, spec_mask = self.enc_q(spec, spec_lengths, g=g)

334 | z_p = self.flow(z, spec_mask, g=g)

335 | #print(z.size(),spec_lengths, self.segment_size)

336 | z_slice, ids_slice = commons.rand_slice_segments(z, spec_lengths, self.segment_size)

337 | #print(z_slice.size())

338 | o = self.dec(z_slice, g=g)

339 | #with torch.no_grad():

340 | # g_hat = self.enc_spk(mel.transpose(1,2))

341 |

342 | return o, ids_slice, spec_mask, (z, z_p, m_p, logs_p, m_q, logs_q),g_raw#,g_hat

343 |

344 | def infer(self, c, g=None, mel=None, c_lengths=None):

345 | if c_lengths is None:

346 | c_lengths = (torch.ones(c.size(0)) * c.size(-1)).to(c.device)

347 | if not self.use_spk:

348 | #g = self.enc_spk.embed_utterance(mel.transpose(1,2))

349 | g = self.enc_spk(mel.transpose(1,2))

350 | g = g.unsqueeze(-1)

351 |

352 | z_p, m_p, logs_p, c_mask = self.enc_p(c, c_lengths)

353 | z = self.flow(z_p, c_mask, g=g, reverse=True)

354 | o = self.dec(z * c_mask, g=g)

355 |

356 | return o

357 |

--------------------------------------------------------------------------------
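`DiscriminatorP.forward` folds a 1-D waveform of length `t` into a 2-D grid with `period` columns, padding the end first when `t` is not a multiple of `period`. The shape arithmetic can be sketched in plain Python as below; the helper name `period_fold_shape` is hypothetical, and the real code applies the padding with `F.pad(..., "reflect")` and the fold with `x.view(...)`.

```python
def period_fold_shape(t, period):
    """Return (n_pad, (rows, period)) for folding a length-t signal by period."""
    # The outer modulo gives n_pad = 0 when t is already divisible by period.
    n_pad = (period - t % period) % period
    t_padded = t + n_pad
    return n_pad, (t_padded // period, period)


if __name__ == "__main__":
    for period in [2, 3, 5, 7, 11]:  # the periods used by MultiPeriodDiscriminator
        print(period, period_fold_shape(8192, period))
```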

/modules.py:

--------------------------------------------------------------------------------

1 | import copy

2 | import math

3 | import numpy as np

4 | import scipy

5 | import torch

6 | from torch import nn

7 | from torch.nn import functional as F

8 |

9 | from torch.nn import Conv1d, ConvTranspose1d, AvgPool1d, Conv2d

10 | from torch.nn.utils import weight_norm, remove_weight_norm

11 |

12 | import commons

13 | from commons import init_weights, get_padding

14 |

15 |

16 | LRELU_SLOPE = 0.1

17 |

18 |

19 | class LayerNorm(nn.Module):

20 | def __init__(self, channels, eps=1e-5):

21 | super().__init__()

22 | self.channels = channels

23 | self.eps = eps

24 |

25 | self.gamma = nn.Parameter(torch.ones(channels))

26 | self.beta = nn.Parameter(torch.zeros(channels))

27 |

28 | def forward(self, x):

29 | x = x.transpose(1, -1)

30 | x = F.layer_norm(x, (self.channels,), self.gamma, self.beta, self.eps)

31 | return x.transpose(1, -1)

32 |

33 |

34 | class ConvReluNorm(nn.Module):

35 | def __init__(self, in_channels, hidden_channels, out_channels, kernel_size, n_layers, p_dropout):

36 | super().__init__()

37 | self.in_channels = in_channels

38 | self.hidden_channels = hidden_channels

39 | self.out_channels = out_channels

40 | self.kernel_size = kernel_size

41 | self.n_layers = n_layers

42 | self.p_dropout = p_dropout

43 | assert n_layers > 1, "Number of layers should be larger than 1."

44 |

45 | self.conv_layers = nn.ModuleList()

46 | self.norm_layers = nn.ModuleList()

47 | self.conv_layers.append(nn.Conv1d(in_channels, hidden_channels, kernel_size, padding=kernel_size//2))

48 | self.norm_layers.append(LayerNorm(hidden_channels))

49 | self.relu_drop = nn.Sequential(

50 | nn.ReLU(),

51 | nn.Dropout(p_dropout))

52 | for _ in range(n_layers-1):

53 | self.conv_layers.append(nn.Conv1d(hidden_channels, hidden_channels, kernel_size, padding=kernel_size//2))

54 | self.norm_layers.append(LayerNorm(hidden_channels))

55 | self.proj = nn.Conv1d(hidden_channels, out_channels, 1)

56 | self.proj.weight.data.zero_()

57 | self.proj.bias.data.zero_()

58 |

59 | def forward(self, x, x_mask):

60 | x_org = x

61 | for i in range(self.n_layers):

62 | x = self.conv_layers[i](x * x_mask)

63 | x = self.norm_layers[i](x)

64 | x = self.relu_drop(x)

65 | x = x_org + self.proj(x)

66 | return x * x_mask

67 |

68 |

69 | class DDSConv(nn.Module):

70 | """

71 | Dilated and Depth-Separable Convolution

72 | """

73 | def __init__(self, channels, kernel_size, n_layers, p_dropout=0.):

74 | super().__init__()

75 | self.channels = channels

76 | self.kernel_size = kernel_size

77 | self.n_layers = n_layers

78 | self.p_dropout = p_dropout

79 |

80 | self.drop = nn.Dropout(p_dropout)

81 | self.convs_sep = nn.ModuleList()

82 | self.convs_1x1 = nn.ModuleList()

83 | self.norms_1 = nn.ModuleList()

84 | self.norms_2 = nn.ModuleList()

85 | for i in range(n_layers):

86 | dilation = kernel_size ** i

87 | padding = (kernel_size * dilation - dilation) // 2

88 | self.convs_sep.append(nn.Conv1d(channels, channels, kernel_size,

89 | groups=channels, dilation=dilation, padding=padding

90 | ))

91 | self.convs_1x1.append(nn.Conv1d(channels, channels, 1))

92 | self.norms_1.append(LayerNorm(channels))

93 | self.norms_2.append(LayerNorm(channels))

94 |

95 | def forward(self, x, x_mask, g=None):

96 | if g is not None:

97 | x = x + g

98 | for i in range(self.n_layers):

99 | y = self.convs_sep[i](x * x_mask)

100 | y = self.norms_1[i](y)

101 | y = F.gelu(y)

102 | y = self.convs_1x1[i](y)

103 | y = self.norms_2[i](y)

104 | y = F.gelu(y)

105 | y = self.drop(y)

106 | x = x + y

107 | return x * x_mask

108 |

109 |

110 | class WN(torch.nn.Module):

111 | def __init__(self, hidden_channels, kernel_size, dilation_rate, n_layers, gin_channels=0, p_dropout=0):

112 | super(WN, self).__init__()

113 | assert(kernel_size % 2 == 1)

114 | self.hidden_channels = hidden_channels

115 | self.kernel_size = kernel_size

116 | self.dilation_rate = dilation_rate

117 | self.n_layers = n_layers

118 | self.gin_channels = gin_channels

119 | self.p_dropout = p_dropout

120 |

121 | self.in_layers = torch.nn.ModuleList()

122 | self.res_skip_layers = torch.nn.ModuleList()

123 | self.drop = nn.Dropout(p_dropout)

124 |

125 | if gin_channels != 0:

126 | cond_layer = torch.nn.Conv1d(gin_channels, 2*hidden_channels*n_layers, 1)

127 | self.cond_layer = torch.nn.utils.weight_norm(cond_layer, name='weight')

128 |

129 | for i in range(n_layers):

130 | dilation = dilation_rate ** i

131 | padding = (kernel_size * dilation - dilation) // 2

132 | in_layer = torch.nn.Conv1d(hidden_channels, 2*hidden_channels, kernel_size,

133 | dilation=dilation, padding=padding)

134 | in_layer = torch.nn.utils.weight_norm(in_layer, name='weight')

135 | self.in_layers.append(in_layer)

136 |

137 | # the last layer only feeds the skip output, so it needs no residual channels

138 | if i < n_layers - 1:

139 | res_skip_channels = 2 * hidden_channels

140 | else:

141 | res_skip_channels = hidden_channels

142 |

143 | res_skip_layer = torch.nn.Conv1d(hidden_channels, res_skip_channels, 1)

144 | res_skip_layer = torch.nn.utils.weight_norm(res_skip_layer, name='weight')

145 | self.res_skip_layers.append(res_skip_layer)

146 |

147 | def forward(self, x, x_mask, g=None, **kwargs):

148 | output = torch.zeros_like(x)

149 | n_channels_tensor = torch.IntTensor([self.hidden_channels])

150 |

151 | if g is not None:

152 | g = self.cond_layer(g)

153 |

154 | for i in range(self.n_layers):

155 | x_in = self.in_layers[i](x)

156 | if g is not None:

157 | cond_offset = i * 2 * self.hidden_channels

158 | g_l = g[:,cond_offset:cond_offset+2*self.hidden_channels,:]

159 | else:

160 | g_l = torch.zeros_like(x_in)

161 |

162 | acts = commons.fused_add_tanh_sigmoid_multiply(

163 | x_in,

164 | g_l,

165 | n_channels_tensor)

166 | acts = self.drop(acts)

167 |

168 | res_skip_acts = self.res_skip_layers[i](acts)

169 | if i < self.n_layers - 1:

170 | res_acts = res_skip_acts[:,:self.hidden_channels,:]

171 | x = (x + res_acts) * x_mask

172 | output = output + res_skip_acts[:,self.hidden_channels:,:]

173 | else:

174 | output = output + res_skip_acts

175 | return output * x_mask

176 |

177 | def remove_weight_norm(self):

178 | if self.gin_channels != 0:

179 | torch.nn.utils.remove_weight_norm(self.cond_layer)

180 | for l in self.in_layers:

181 | torch.nn.utils.remove_weight_norm(l)

182 | for l in self.res_skip_layers:

183 | torch.nn.utils.remove_weight_norm(l)

184 |

185 |

186 | class ResBlock1(torch.nn.Module):

187 | def __init__(self, channels, kernel_size=3, dilation=(1, 3, 5)):

188 | super(ResBlock1, self).__init__()

189 | self.convs1 = nn.ModuleList([

190 | weight_norm(Conv1d(channels, channels, kernel_size, 1, dilation=dilation[0],

191 | padding=get_padding(kernel_size, dilation[0]))),

192 | weight_norm(Conv1d(channels, channels, kernel_size, 1, dilation=dilation[1],

193 | padding=get_padding(kernel_size, dilation[1]))),