├── .gitignore

├── Dockerfile

├── LICENSE.md

├── PhotoWCTModels

│   └── photo_wct.pth

├── README.md

├── TUTORIAL.md

├── ade20k_semantic_rel.npy

├── converter.py

├── demo.py

├── demo_example1.sh

├── demo_example1_fast.sh

├── demo_example3.sh

├── demo_mask_poly.png

├── demo_result_content3_seg.pgm.visualization.jpg

├── demo_result_example1.png

├── demo_result_example2.png

├── demo_result_example3.png

├── demo_result_style3_seg.pgm.visualization.jpg

├── demo_with_ade20k_ssn.py

├── demo_with_segmentation.gif

├── download_models.py

├── download_models.sh

├── models.py

├── photo_gif.py

├── photo_smooth.py

├── photo_wct.py

├── process_stylization.py

├── process_stylization_ade20k_ssn.py

├── process_stylization_folder.py

├── smooth_filter.py

└── teaser.png

/.gitignore:

--------------------------------------------------------------------------------

1 | segmentation/

2 | outputs/

3 | models/

4 | results/

5 | images/

6 | data/

7 | logs/

8 | examples

9 | .idea/

10 | notebooks/.ipynb_checkpoints/*

11 | *.tar.gz

12 | *.zip

13 | *.pkl

14 | *.pyc

15 |

--------------------------------------------------------------------------------

/Dockerfile:

--------------------------------------------------------------------------------

1 | FROM nvidia/cuda:9.1-cudnn7-devel-ubuntu16.04

2 | ENV ANACONDA /opt/anaconda3

3 | ENV CUDA_PATH /usr/local/cuda

4 | ENV PATH ${ANACONDA}/bin:${CUDA_PATH}/bin:$PATH

5 | ENV LD_LIBRARY_PATH ${ANACONDA}/lib:${CUDA_PATH}/lib64:$LD_LIBRARY_PATH

6 | ENV C_INCLUDE_PATH ${CUDA_PATH}/include

7 | RUN apt-get update && apt-get install -y --no-install-recommends \

8 | wget \

9 | axel \

10 | imagemagick \

11 | libopencv-dev \

12 | python-opencv \

13 | build-essential \

14 | cmake \

15 | git \

16 | curl \

17 | ca-certificates \

18 | libjpeg-dev \

19 | libpng-dev \

20 | axel \

21 | zip \

22 | unzip

23 | RUN wget https://repo.continuum.io/archive/Anaconda3-5.0.1-Linux-x86_64.sh -P /tmp

24 | RUN bash /tmp/Anaconda3-5.0.1-Linux-x86_64.sh -b -p $ANACONDA

25 | RUN rm /tmp/Anaconda3-5.0.1-Linux-x86_64.sh -rf

26 | RUN conda install -y pytorch=0.4.1 torchvision cuda91 -c pytorch

27 | RUN conda install -y -c anaconda pip

28 | RUN conda install -y -c menpo opencv3

29 | RUN pip install scikit-umfpack

30 | RUN pip install cupy-cuda91

31 | RUN pip install pynvrtc

32 |

--------------------------------------------------------------------------------

/LICENSE.md:

--------------------------------------------------------------------------------

1 | ## creative commons

2 |

3 | # Attribution-NonCommercial-ShareAlike 4.0 International

4 |

5 | Creative Commons Corporation (“Creative Commons”) is not a law firm and does not provide legal services or legal advice. Distribution of Creative Commons public licenses does not create a lawyer-client or other relationship. Creative Commons makes its licenses and related information available on an “as-is” basis. Creative Commons gives no warranties regarding its licenses, any material licensed under their terms and conditions, or any related information. Creative Commons disclaims all liability for damages resulting from their use to the fullest extent possible.

6 |

7 | ### Using Creative Commons Public Licenses

8 |

9 | Creative Commons public licenses provide a standard set of terms and conditions that creators and other rights holders may use to share original works of authorship and other material subject to copyright and certain other rights specified in the public license below. The following considerations are for informational purposes only, are not exhaustive, and do not form part of our licenses.

10 |

11 | * __Considerations for licensors:__ Our public licenses are intended for use by those authorized to give the public permission to use material in ways otherwise restricted by copyright and certain other rights. Our licenses are irrevocable. Licensors should read and understand the terms and conditions of the license they choose before applying it. Licensors should also secure all rights necessary before applying our licenses so that the public can reuse the material as expected. Licensors should clearly mark any material not subject to the license. This includes other CC-licensed material, or material used under an exception or limitation to copyright. [More considerations for licensors](http://wiki.creativecommons.org/Considerations_for_licensors_and_licensees#Considerations_for_licensors).

12 |

13 | * __Considerations for the public:__ By using one of our public licenses, a licensor grants the public permission to use the licensed material under specified terms and conditions. If the licensor’s permission is not necessary for any reason–for example, because of any applicable exception or limitation to copyright–then that use is not regulated by the license. Our licenses grant only permissions under copyright and certain other rights that a licensor has authority to grant. Use of the licensed material may still be restricted for other reasons, including because others have copyright or other rights in the material. A licensor may make special requests, such as asking that all changes be marked or described. Although not required by our licenses, you are encouraged to respect those requests where reasonable. [More considerations for the public](http://wiki.creativecommons.org/Considerations_for_licensors_and_licensees#Considerations_for_licensees).

14 |

15 | ## Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International Public License

16 |

17 | By exercising the Licensed Rights (defined below), You accept and agree to be bound by the terms and conditions of this Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International Public License ("Public License"). To the extent this Public License may be interpreted as a contract, You are granted the Licensed Rights in consideration of Your acceptance of these terms and conditions, and the Licensor grants You such rights in consideration of benefits the Licensor receives from making the Licensed Material available under these terms and conditions.

18 |

19 | ### Section 1 – Definitions.

20 |

21 | a. __Adapted Material__ means material subject to Copyright and Similar Rights that is derived from or based upon the Licensed Material and in which the Licensed Material is translated, altered, arranged, transformed, or otherwise modified in a manner requiring permission under the Copyright and Similar Rights held by the Licensor. For purposes of this Public License, where the Licensed Material is a musical work, performance, or sound recording, Adapted Material is always produced where the Licensed Material is synched in timed relation with a moving image.

22 |

23 | b. __Adapter's License__ means the license You apply to Your Copyright and Similar Rights in Your contributions to Adapted Material in accordance with the terms and conditions of this Public License.

24 |

25 | c. __BY-NC-SA Compatible License__ means a license listed at [creativecommons.org/compatiblelicenses](http://creativecommons.org/compatiblelicenses), approved by Creative Commons as essentially the equivalent of this Public License.

26 |

27 | d. __Copyright and Similar Rights__ means copyright and/or similar rights closely related to copyright including, without limitation, performance, broadcast, sound recording, and Sui Generis Database Rights, without regard to how the rights are labeled or categorized. For purposes of this Public License, the rights specified in Section 2(b)(1)-(2) are not Copyright and Similar Rights.

28 |

29 | e. __Effective Technological Measures__ means those measures that, in the absence of proper authority, may not be circumvented under laws fulfilling obligations under Article 11 of the WIPO Copyright Treaty adopted on December 20, 1996, and/or similar international agreements.

30 |

31 | f. __Exceptions and Limitations__ means fair use, fair dealing, and/or any other exception or limitation to Copyright and Similar Rights that applies to Your use of the Licensed Material.

32 |

33 | g. __License Elements__ means the license attributes listed in the name of a Creative Commons Public License. The License Elements of this Public License are Attribution, NonCommercial, and ShareAlike.

34 |

35 | h. __Licensed Material__ means the artistic or literary work, database, or other material to which the Licensor applied this Public License.

36 |

37 | i. __Licensed Rights__ means the rights granted to You subject to the terms and conditions of this Public License, which are limited to all Copyright and Similar Rights that apply to Your use of the Licensed Material and that the Licensor has authority to license.

38 |

39 | j. __Licensor__ means the individual(s) or entity(ies) granting rights under this Public License.

40 |

41 | k. __NonCommercial__ means not primarily intended for or directed towards commercial advantage or monetary compensation. For purposes of this Public License, the exchange of the Licensed Material for other material subject to Copyright and Similar Rights by digital file-sharing or similar means is NonCommercial provided there is no payment of monetary compensation in connection with the exchange.

42 |

43 | l. __Share__ means to provide material to the public by any means or process that requires permission under the Licensed Rights, such as reproduction, public display, public performance, distribution, dissemination, communication, or importation, and to make material available to the public including in ways that members of the public may access the material from a place and at a time individually chosen by them.

44 |

45 | m. __Sui Generis Database Rights__ means rights other than copyright resulting from Directive 96/9/EC of the European Parliament and of the Council of 11 March 1996 on the legal protection of databases, as amended and/or succeeded, as well as other essentially equivalent rights anywhere in the world.

46 |

47 | n. __You__ means the individual or entity exercising the Licensed Rights under this Public License. Your has a corresponding meaning.

48 |

49 | ### Section 2 – Scope.

50 |

51 | a. ___License grant.___

52 |

53 | 1. Subject to the terms and conditions of this Public License, the Licensor hereby grants You a worldwide, royalty-free, non-sublicensable, non-exclusive, irrevocable license to exercise the Licensed Rights in the Licensed Material to:

54 |

55 | A. reproduce and Share the Licensed Material, in whole or in part, for NonCommercial purposes only; and

56 |

57 | B. produce, reproduce, and Share Adapted Material for NonCommercial purposes only.

58 |

59 | 2. __Exceptions and Limitations.__ For the avoidance of doubt, where Exceptions and Limitations apply to Your use, this Public License does not apply, and You do not need to comply with its terms and conditions.

60 |

61 | 3. __Term.__ The term of this Public License is specified in Section 6(a).

62 |

63 | 4. __Media and formats; technical modifications allowed.__ The Licensor authorizes You to exercise the Licensed Rights in all media and formats whether now known or hereafter created, and to make technical modifications necessary to do so. The Licensor waives and/or agrees not to assert any right or authority to forbid You from making technical modifications necessary to exercise the Licensed Rights, including technical modifications necessary to circumvent Effective Technological Measures. For purposes of this Public License, simply making modifications authorized by this Section 2(a)(4) never produces Adapted Material.

64 |

65 | 5. __Downstream recipients.__

66 |

67 | A. __Offer from the Licensor – Licensed Material.__ Every recipient of the Licensed Material automatically receives an offer from the Licensor to exercise the Licensed Rights under the terms and conditions of this Public License.

68 |

69 | B. __Additional offer from the Licensor – Adapted Material.__ Every recipient of Adapted Material from You automatically receives an offer from the Licensor to exercise the Licensed Rights in the Adapted Material under the conditions of the Adapter’s License You apply.

70 |

71 | C. __No downstream restrictions.__ You may not offer or impose any additional or different terms or conditions on, or apply any Effective Technological Measures to, the Licensed Material if doing so restricts exercise of the Licensed Rights by any recipient of the Licensed Material.

72 |

73 | 6. __No endorsement.__ Nothing in this Public License constitutes or may be construed as permission to assert or imply that You are, or that Your use of the Licensed Material is, connected with, or sponsored, endorsed, or granted official status by, the Licensor or others designated to receive attribution as provided in Section 3(a)(1)(A)(i).

74 |

75 | b. ___Other rights.___

76 |

77 | 1. Moral rights, such as the right of integrity, are not licensed under this Public License, nor are publicity, privacy, and/or other similar personality rights; however, to the extent possible, the Licensor waives and/or agrees not to assert any such rights held by the Licensor to the limited extent necessary to allow You to exercise the Licensed Rights, but not otherwise.

78 |

79 | 2. Patent and trademark rights are not licensed under this Public License.

80 |

81 | 3. To the extent possible, the Licensor waives any right to collect royalties from You for the exercise of the Licensed Rights, whether directly or through a collecting society under any voluntary or waivable statutory or compulsory licensing scheme. In all other cases the Licensor expressly reserves any right to collect such royalties, including when the Licensed Material is used other than for NonCommercial purposes.

82 |

83 | ### Section 3 – License Conditions.

84 |

85 | Your exercise of the Licensed Rights is expressly made subject to the following conditions.

86 |

87 | a. ___Attribution.___

88 |

89 | 1. If You Share the Licensed Material (including in modified form), You must:

90 |

91 | A. retain the following if it is supplied by the Licensor with the Licensed Material:

92 |

93 | i. identification of the creator(s) of the Licensed Material and any others designated to receive attribution, in any reasonable manner requested by the Licensor (including by pseudonym if designated);

94 |

95 | ii. a copyright notice;

96 |

97 | iii. a notice that refers to this Public License;

98 |

99 | iv. a notice that refers to the disclaimer of warranties;

100 |

101 | v. a URI or hyperlink to the Licensed Material to the extent reasonably practicable;

102 |

103 | B. indicate if You modified the Licensed Material and retain an indication of any previous modifications; and

104 |

105 | C. indicate the Licensed Material is licensed under this Public License, and include the text of, or the URI or hyperlink to, this Public License.

106 |

107 | 2. You may satisfy the conditions in Section 3(a)(1) in any reasonable manner based on the medium, means, and context in which You Share the Licensed Material. For example, it may be reasonable to satisfy the conditions by providing a URI or hyperlink to a resource that includes the required information.

108 |

109 | 3. If requested by the Licensor, You must remove any of the information required by Section 3(a)(1)(A) to the extent reasonably practicable.

110 |

111 | b. ___ShareAlike.___

112 |

113 | In addition to the conditions in Section 3(a), if You Share Adapted Material You produce, the following conditions also apply.

114 |

115 | 1. The Adapter’s License You apply must be a Creative Commons license with the same License Elements, this version or later, or a BY-NC-SA Compatible License.

116 |

117 | 2. You must include the text of, or the URI or hyperlink to, the Adapter's License You apply. You may satisfy this condition in any reasonable manner based on the medium, means, and context in which You Share Adapted Material.

118 |

119 | 3. You may not offer or impose any additional or different terms or conditions on, or apply any Effective Technological Measures to, Adapted Material that restrict exercise of the rights granted under the Adapter's License You apply.

120 |

121 | ### Section 4 – Sui Generis Database Rights.

122 |

123 | Where the Licensed Rights include Sui Generis Database Rights that apply to Your use of the Licensed Material:

124 |

125 | a. for the avoidance of doubt, Section 2(a)(1) grants You the right to extract, reuse, reproduce, and Share all or a substantial portion of the contents of the database for NonCommercial purposes only;

126 |

127 | b. if You include all or a substantial portion of the database contents in a database in which You have Sui Generis Database Rights, then the database in which You have Sui Generis Database Rights (but not its individual contents) is Adapted Material, including for purposes of Section 3(b); and

128 |

129 | c. You must comply with the conditions in Section 3(a) if You Share all or a substantial portion of the contents of the database.

130 |

131 | For the avoidance of doubt, this Section 4 supplements and does not replace Your obligations under this Public License where the Licensed Rights include other Copyright and Similar Rights.

132 |

133 | ### Section 5 – Disclaimer of Warranties and Limitation of Liability.

134 |

135 | a. __Unless otherwise separately undertaken by the Licensor, to the extent possible, the Licensor offers the Licensed Material as-is and as-available, and makes no representations or warranties of any kind concerning the Licensed Material, whether express, implied, statutory, or other. This includes, without limitation, warranties of title, merchantability, fitness for a particular purpose, non-infringement, absence of latent or other defects, accuracy, or the presence or absence of errors, whether or not known or discoverable. Where disclaimers of warranties are not allowed in full or in part, this disclaimer may not apply to You.__

136 |

137 | b. __To the extent possible, in no event will the Licensor be liable to You on any legal theory (including, without limitation, negligence) or otherwise for any direct, special, indirect, incidental, consequential, punitive, exemplary, or other losses, costs, expenses, or damages arising out of this Public License or use of the Licensed Material, even if the Licensor has been advised of the possibility of such losses, costs, expenses, or damages. Where a limitation of liability is not allowed in full or in part, this limitation may not apply to You.__

138 |

139 | c. The disclaimer of warranties and limitation of liability provided above shall be interpreted in a manner that, to the extent possible, most closely approximates an absolute disclaimer and waiver of all liability.

140 |

141 | ### Section 6 – Term and Termination.

142 |

143 | a. This Public License applies for the term of the Copyright and Similar Rights licensed here. However, if You fail to comply with this Public License, then Your rights under this Public License terminate automatically.

144 |

145 | b. Where Your right to use the Licensed Material has terminated under Section 6(a), it reinstates:

146 |

147 | 1. automatically as of the date the violation is cured, provided it is cured within 30 days of Your discovery of the violation; or

148 |

149 | 2. upon express reinstatement by the Licensor.

150 |

151 | For the avoidance of doubt, this Section 6(b) does not affect any right the Licensor may have to seek remedies for Your violations of this Public License.

152 |

153 | c. For the avoidance of doubt, the Licensor may also offer the Licensed Material under separate terms or conditions or stop distributing the Licensed Material at any time; however, doing so will not terminate this Public License.

154 |

155 | d. Sections 1, 5, 6, 7, and 8 survive termination of this Public License.

156 |

157 | ### Section 7 – Other Terms and Conditions.

158 |

159 | a. The Licensor shall not be bound by any additional or different terms or conditions communicated by You unless expressly agreed.

160 |

161 | b. Any arrangements, understandings, or agreements regarding the Licensed Material not stated herein are separate from and independent of the terms and conditions of this Public License.

162 |

163 | ### Section 8 – Interpretation.

164 |

165 | a. For the avoidance of doubt, this Public License does not, and shall not be interpreted to, reduce, limit, restrict, or impose conditions on any use of the Licensed Material that could lawfully be made without permission under this Public License.

166 |

167 | b. To the extent possible, if any provision of this Public License is deemed unenforceable, it shall be automatically reformed to the minimum extent necessary to make it enforceable. If the provision cannot be reformed, it shall be severed from this Public License without affecting the enforceability of the remaining terms and conditions.

168 |

169 | c. No term or condition of this Public License will be waived and no failure to comply consented to unless expressly agreed to by the Licensor.

170 |

171 | d. Nothing in this Public License constitutes or may be interpreted as a limitation upon, or waiver of, any privileges and immunities that apply to the Licensor or You, including from the legal processes of any jurisdiction or authority.

172 |

173 | ```

174 | Creative Commons is not a party to its public licenses. Notwithstanding, Creative Commons may elect to apply one of its public licenses to material it publishes and in those instances will be considered the “Licensor.” Except for the limited purpose of indicating that material is shared under a Creative Commons public license or as otherwise permitted by the Creative Commons policies published at [creativecommons.org/policies](http://creativecommons.org/policies), Creative Commons does not authorize the use of the trademark “Creative Commons” or any other trademark or logo of Creative Commons without its prior written consent including, without limitation, in connection with any unauthorized modifications to any of its public licenses or any other arrangements, understandings, or agreements concerning use of licensed material. For the avoidance of doubt, this paragraph does not form part of the public licenses.

175 |

176 | Creative Commons may be contacted at [creativecommons.org](http://creativecommons.org/).

177 | ```

178 |

--------------------------------------------------------------------------------

/PhotoWCTModels/photo_wct.pth:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/PhotoWCTModels/photo_wct.pth

--------------------------------------------------------------------------------

/README.md:

--------------------------------------------------------------------------------

1 | [License: CC BY-NC-SA 4.0](https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/master/LICENSE.md)

2 |

3 |

4 |

5 | ## FastPhotoStyle

6 |

7 | ### License

8 | Copyright (C) 2018 NVIDIA Corporation. All rights reserved.

9 | Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

10 |

11 |

12 |

13 |

14 | ### What's new

15 |

16 | | Date | News |

17 | |----------|--------------|

18 | |2018-07-25| Migrate to pytorch 0.4.0. For pytorch 0.3.0 users, check out [FastPhotoStyle for pytorch 0.3.0](https://github.com/NVIDIA/FastPhotoStyle/releases/tag/f33e07f). |

19 | | | Add a [tutorial](TUTORIAL.md) showing 3 ways of using the FastPhotoStyle algorithm.|

20 | |2018-07-10| Our paper is accepted by the ECCV 2018 conference!!! |

21 |

22 |

23 | ### About

24 |

25 | Given a content photo and a style photo, the code can transfer the style of the style photo to the content photo. The details of the algorithm behind the code are documented in our arXiv paper. Please cite the paper if this code repository is used in your publications.

26 |

27 | [A Closed-form Solution to Photorealistic Image Stylization](https://arxiv.org/abs/1802.06474)

28 | [Yijun Li (UC Merced)](https://sites.google.com/site/yijunlimaverick/), [Ming-Yu Liu (NVIDIA)](http://mingyuliu.net/), [Xueting Li (UC Merced)](https://sunshineatnoon.github.io/), [Ming-Hsuan Yang (NVIDIA, UC Merced)](http://faculty.ucmerced.edu/mhyang/), [Jan Kautz (NVIDIA)](http://jankautz.com/)

29 | European Conference on Computer Vision (ECCV), 2018

30 |

31 |

32 | ### Tutorial

33 |

34 | Please check out the [tutorial](TUTORIAL.md).

35 |

36 |

37 |

--------------------------------------------------------------------------------

/TUTORIAL.md:

--------------------------------------------------------------------------------

1 | [License: CC BY-NC-SA 4.0](https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/master/LICENSE.md)

2 |

3 |

4 | ## FastPhotoStyle Tutorial

5 |

6 | In this short tutorial, we will guide you through setting up the system environment for running the FastPhotoStyle software and then show several usage examples.

7 |

8 | ### Background

9 |

10 | Existing style transfer algorithms can be divided into two categories: artistic style transfer and photorealistic style transfer.

11 | For artistic style transfer, the goal is to transfer the style of a reference painting to a photo so that the stylized photo looks like a painting and carries the style of the reference painting.

12 | For photorealistic style transfer, the goal is to transfer the style of a reference photo to a photo so that the stylized photo preserves the content of the original photo but carries the style of the reference photo.

13 | The FastPhotoStyle algorithm is in the category of photorealistic style transfer.

14 |

15 | ### Algorithm

16 |

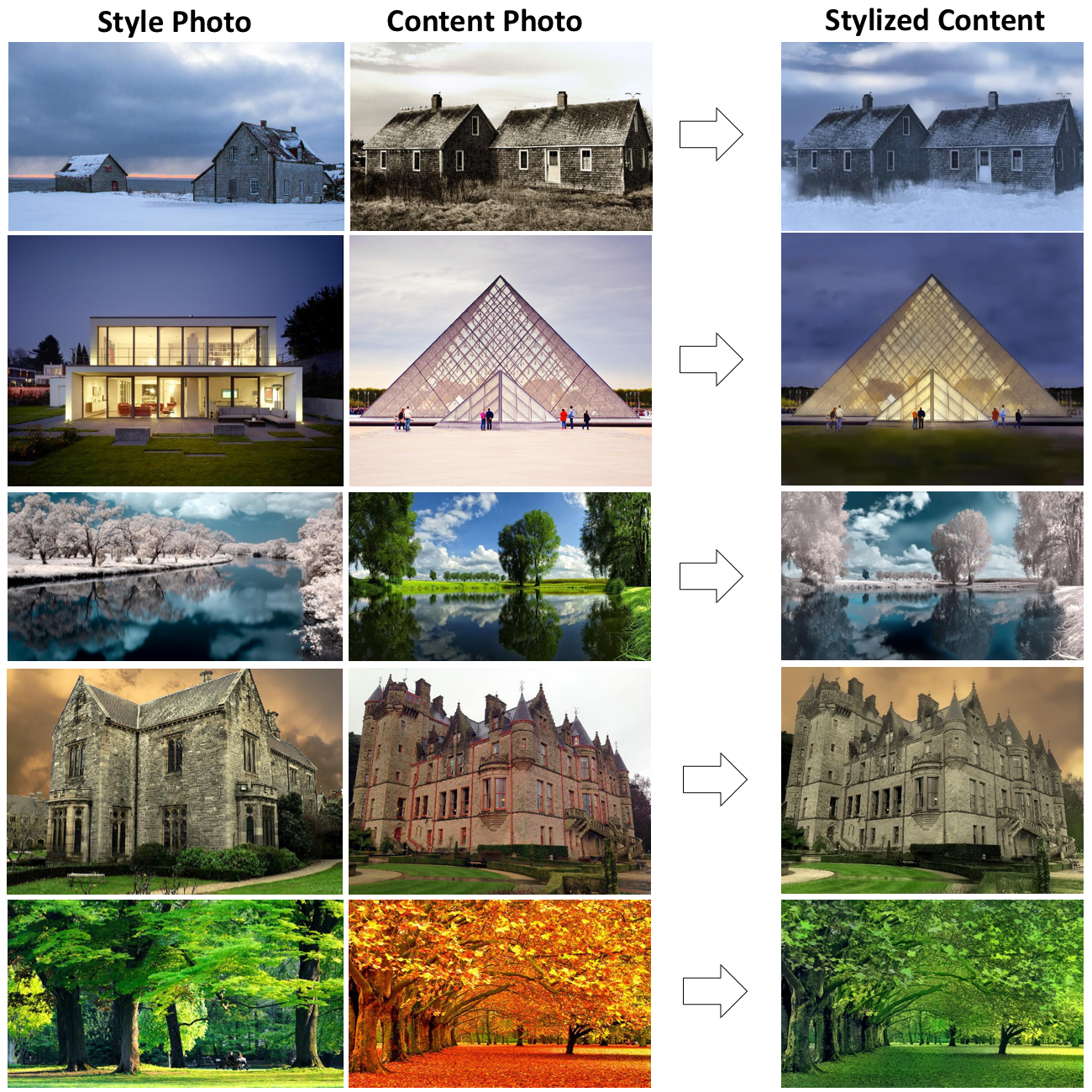

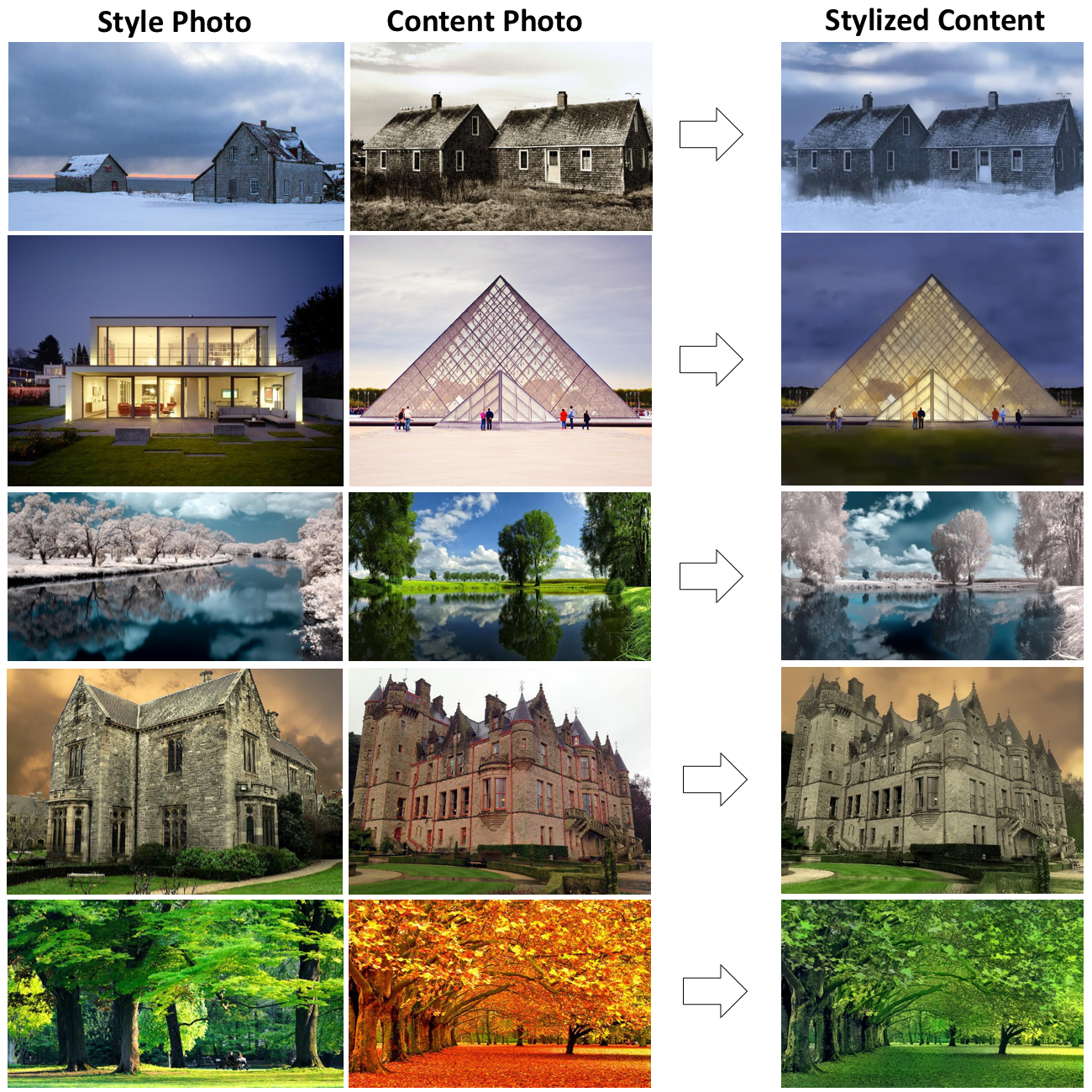

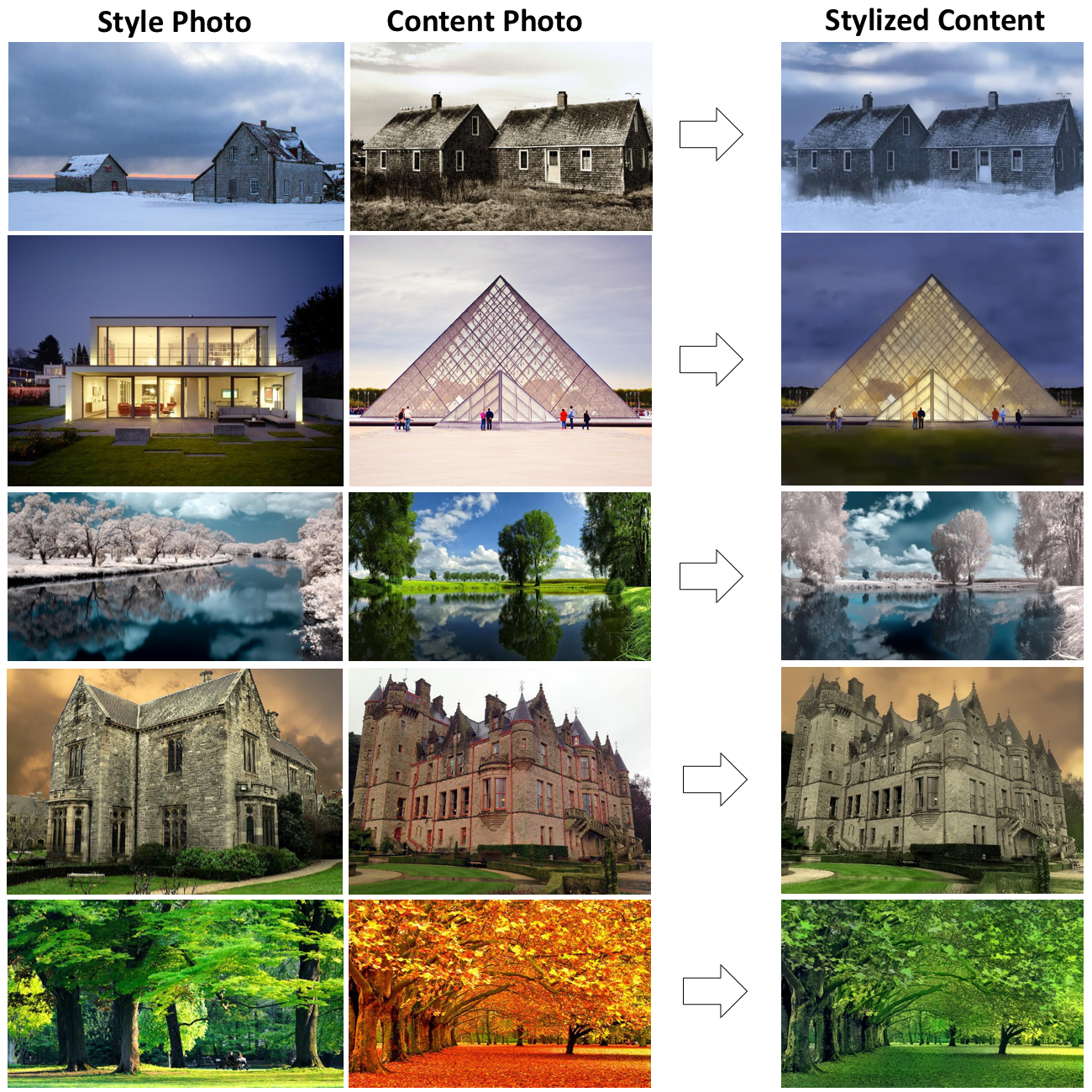

17 | FastPhotoStyle takes two images as input where one is the content image and the other is the style image. Its goal is to transfer the style of the style photo to the content photo for creating a stylized image as shown below.

18 |

19 |

20 |

21 |

22 |

23 | FastPhotoStyle divides the photorealistic stylization process into two steps.

24 | 1. **PhotoWCT:** Generate a stylized image with visible distortions by applying a whitening and coloring transform to the deep features extracted from the content and style images.

25 | 2. **Photorealistic Smoothing:** Suppress the distortion in the stylized image by applying an image smoothing filter.

26 |

27 | The output is a photorealistic image that looks as if it were captured by a camera.
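To make the first step more concrete, here is a minimal NumPy sketch of a whitening and coloring transform applied to two feature matrices. It is an illustration only and is not taken from `photo_wct.py`: the function name `whiten_and_color`, the `(channels, pixels)` layout, and the `eps` regularizer are assumptions made for this example, and random arrays stand in for the deep features a network would extract.

```python
import numpy as np


def whiten_and_color(content_feat, style_feat, eps=1e-5):
    """Give content features the mean and covariance of the style features.

    Both inputs are arrays of shape (channels, pixels). The layout and the
    eps regularizer are assumptions of this sketch.
    """
    # Center both feature sets per channel.
    style_mean = style_feat.mean(axis=1, keepdims=True)
    fc = content_feat - content_feat.mean(axis=1, keepdims=True)
    fs = style_feat - style_mean
    channels = fc.shape[0]

    # Whitening: remove the correlations between content channels.
    cov_c = fc @ fc.T / (fc.shape[1] - 1) + eps * np.eye(channels)
    eigval_c, eigvec_c = np.linalg.eigh(cov_c)
    whitened = eigvec_c @ np.diag(eigval_c ** -0.5) @ eigvec_c.T @ fc

    # Coloring: impose the correlations of the style channels.
    cov_s = fs @ fs.T / (fs.shape[1] - 1) + eps * np.eye(channels)
    eigval_s, eigvec_s = np.linalg.eigh(cov_s)
    colored = eigvec_s @ np.diag(eigval_s ** 0.5) @ eigvec_s.T @ whitened

    # Re-center on the style mean.
    return colored + style_mean


# Toy usage with random stand-ins for deep features.
rng = np.random.default_rng(0)
content = rng.normal(size=(3, 500))
style = rng.normal(size=(3, 400)) * np.array([[1.0], [2.0], [3.0]]) + 5.0
stylized = whiten_and_color(content, style)
print(np.round(np.cov(stylized), 2))
```

After the transform, the per-channel mean and the channel covariance of the output should match those of the style features up to the small `eps` term, which is the sense in which the style statistics are transferred.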

28 |

29 | ### Requirements

30 |

31 | - Hardware: PC with NVIDIA Titan GPU.

32 | - Software: *Ubuntu 16.04*, *CUDA 9.1*, *Anaconda3*, *pytorch 0.4.0*

33 | - Environment variables.

34 | - export ANACONDA=PATH-TO-YOUR-ANACONDA-LIBRARY

35 | - export CUDA_PATH=/usr/local/cuda

36 | - export PATH=${ANACONDA}/bin:${CUDA_PATH}/bin:$PATH

37 | - export LD_LIBRARY_PATH=${ANACONDA}/lib:${CUDA_PATH}/lib64:$LD_LIBRARY_PATH

38 | - export C_INCLUDE_PATH=${CUDA_PATH}/include

39 | - System package

40 | - `sudo apt-get install -y axel imagemagick` (Only used for demo)

41 | - Python package

42 | - `conda install pytorch=0.4.0 torchvision cuda91 -y -c pytorch`

43 | - `pip install scikit-umfpack`

44 | - `pip install -U setuptools`

45 | - `pip install cupy`

46 | - `pip install pynvrtc`

47 | - `conda install -c menpo opencv3` (OpenCV is only required if you want to use the approximate version of the photo smoothing step.)
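Before running the demos, it can help to confirm that the environment variables listed above are visible to Python. The following is a small sketch using only the standard library; the helper name `check_environment` is hypothetical and not part of the repository, and it only checks the `ANACONDA`, `CUDA_PATH`, and `PATH` settings.

```python
import os


def check_environment(env):
    """Return a list of problems found in `env`, a mapping such as os.environ."""
    problems = []
    for name in ("ANACONDA", "CUDA_PATH"):
        if not env.get(name):
            problems.append(f"{name} is not set")

    # PATH should contain the bin directory of each configured root.
    path_entries = env.get("PATH", "").split(os.pathsep)
    for name in ("ANACONDA", "CUDA_PATH"):
        root = env.get(name)
        if root and os.path.join(root, "bin") not in path_entries:
            problems.append(f"PATH does not contain the {name} bin directory")
    return problems


# Report on the current shell environment.
for problem in check_environment(os.environ):
    print("warning:", problem)
```

An empty result means the variables checked here are consistent with the list above; it does not verify that CUDA or pytorch are actually installed.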

48 |

49 | ### Examples

50 |

51 | In the following, we will provide 3 usage examples.

52 | In the 1st example, we will run the FastPhotoStyle code without using

53 | segmentation masks.

54 | In the 2nd example, we will show how to use a labeling tool to create the segmentation masks and use them for stylization.

55 | In the 3rd example, we will show how to use a pretrained segmentation network to automatically generate the segmentation masks and use them for stylization.

56 |

57 | #### Example 1: Transfer style of a style photo to a content photo without using segmentation masks.

58 |

59 | You can simply type `./demo_example1.sh` to run the demo or follow the steps below.

60 | - Create image and output folders and make sure nothing is inside the folders: `mkdir images && mkdir results`

61 | - Go to the image folder: `cd images`

62 | - Download content image 1: `axel -n 1 http://freebigpictures.com/wp-content/uploads/shady-forest.jpg --output=content1.png`

63 | - Download style image 1: `axel -n 1 https://vignette.wikia.nocookie.net/strangerthings8338/images/e/e0/Wiki-background.jpeg/revision/latest?cb=20170522192233 --output=style1.png`

64 | - These images are huge. We need to resize them first. Run

65 | - `convert -resize 25% content1.png content1.png`

66 | - `convert -resize 50% style1.png style1.png`

67 | - Go back to the root folder: `cd ..`

68 | - Test the photorealistic image stylization code: `python demo.py --output_image_path results/example1.png`

69 | - You should see output messages like

70 | - ```

71 | Resize image: (803,538)->(803,538)

72 | Resize image: (960,540)->(960,540)

73 | Elapsed time in stylization: 0.398996

74 | Elapsed time in propagation: 13.456573

75 | Elapsed time in post processing: 0.202319

76 | ```

77 | - You should see an output image like

78 |

79 | | Input Style Photo | Input Content Photo | Output Stylization Result |

80 | |-------------------|---------------------|---------------------------|

81 | |

22 |

23 | FastPhotoStyle divides the photorealistic stylization process into two steps.

24 | 1. **PhotoWCT:** Generate a stylized image with visible distortions by applying a whitening and coloring transform to the deep features extracted from the content and style images.

25 | 2. **Photorealistic Smoothing:** Suppress the distortion in the stylized image by applying an image smoothing filter.

26 |

27 | The output is a photorealistic image as it were captured by a camera.

28 |

29 | ### Requirements

30 |

31 | - Hardware: PC with NVIDIA Titan GPU.

32 | - Software: *Ubuntu 16.04*, *CUDA 9.1*, *Anaconda3*, *pytorch 0.4.0*

33 | - Environment variables.

34 | - export ANACONDA=PATH-TO-YOUR-ANACONDA-LIBRARY

35 | - export CUDA_PATH=/usr/local/cuda

36 | - export PATH=${ANACONDA}/bin:${CUDA_PATH}/bin:$PATH

37 | - export LD_LIBRARY_PATH=${ANACONDA}/lib:${CUDA_PATH}/bin64:$LD_LIBRARY_PATH

38 | - export C_INCLUDE_PATH=${CUDA_PATH}/include

39 | - System package

40 | - `sudo apt-get install -y axel imagemagick` (Only used for demo)

41 | - Python package

42 | - `conda install pytorch=0.4.0 torchvision cuda91 -y -c pytorch`

43 | - `pip install scikit-umfpack`

44 | - `pip install -U setuptools`

45 | - `pip install cupy`

46 | - `pip install pynvrtc`

47 | - `conda install -c menpo opencv3` (OpenCV is only required if you want to use the approximate version of the photo smoothing step.)

48 |

49 | ### Examples

50 |

51 | In the following, we will provide 3 usage examples.

52 | In the 1st example, we will run the FastPhotoStyle code without using

53 | segmentation masks.

54 | In the 2nd example, we will show how to use a labeling tool to create the segmentation masks and use them for stylization.

55 | In the 3rd example, we will show how to use a pretrained segmentation network to automatically generate the segmentation masks and use them for stylization.

56 |

57 | #### Example 1: Transfer style of a style photo to a content photo without using segmentation masks.

58 |

59 | You can simply type `./demo_example1.sh` to run the demo or follow the steps below.

60 | - Create image and output folders and make sure nothing is inside the folders: `mkdir images && mkdir results`

61 | - Go to the image folder: `cd images`

62 | - Download content image 1: `axel -n 1 http://freebigpictures.com/wp-content/uploads/shady-forest.jpg --output=content1.png`

63 | - Download style image 1: `axel -n 1 https://vignette.wikia.nocookie.net/strangerthings8338/images/e/e0/Wiki-background.jpeg/revision/latest?cb=20170522192233 --output=style1.png`

64 | - These images are huge. We need to resize them first. Run

65 | - `convert -resize 25% content1.png content1.png`

66 | - `convert -resize 50% style1.png style1.png`

67 | - Go back to the root folder: `cd ..`

68 | - Test the photorealistic image stylization code `python demo.py --output_image_path results/example1.png`

69 | - You should see output messages like

70 | - ```

71 | Resize image: (803,538)->(803,538)

72 | Resize image: (960,540)->(960,540)

73 | Elapsed time in stylization: 0.398996

74 | Elapsed time in propagation: 13.456573

75 | Elapsed time in post processing: 0.202319

76 | ```

77 | - You should see an output image like

78 |

79 | | Input Style Photo | Input Content Photo | Output Stylization Result |

80 | |-------------------|---------------------|---------------------------|

81 | | | | |

82 |

83 | - As shown in the output messages, the computational bottleneck of FastPhotoStyle is the propagation step (the photorealistic smoothing step). We find that we can make this step much faster by using the guided image filtering algorithm as an approximation. To run the fast version of the demo, you can simply type `./demo_example1_fast.sh` or run:

84 | - `python demo.py --fast --output_image_path results/example1_fast.png`

85 | - You should see output messages like

86 | - ```

87 | Resize image: (803,538)->(803,538)

88 | Resize image: (960,540)->(960,540)

89 | Elapsed time in stylization: 0.342203

90 | Elapsed time in propagation: 0.039506

91 | Elapsed time in post processing: 0.203081

92 | ```

93 | - Check out the stylization result computed by the fast approximation step in `results/example1_fast.png`. It should look very similar to `results/example1.png` from the full algorithm.
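To make the comparison between the two runs concrete, the following sketch parses the `Elapsed time in ...` lines quoted above and reports which step dominates the full algorithm and how much the propagation step speeds up with `--fast`. The log text is copied from the messages in this README; the helper name `parse_elapsed` is hypothetical, and timings on other hardware will differ.

```python
import re

# Log excerpts copied from the output messages shown in this README.
FULL_LOG = """\
Elapsed time in stylization: 0.398996
Elapsed time in propagation: 13.456573
Elapsed time in post processing: 0.202319
"""
FAST_LOG = """\
Elapsed time in stylization: 0.342203
Elapsed time in propagation: 0.039506
Elapsed time in post processing: 0.203081
"""


def parse_elapsed(log):
    """Return a {step name: seconds} dict from 'Elapsed time in X: T' lines."""
    pattern = re.compile(r"Elapsed time in (.+?): ([0-9.]+)")
    return {name: float(seconds) for name, seconds in pattern.findall(log)}


full = parse_elapsed(FULL_LOG)
fast = parse_elapsed(FAST_LOG)

# The slowest step of the full algorithm is the bottleneck.
bottleneck = max(full, key=full.get)
# Ratio of propagation times between the full and the fast run.
speedup = full["propagation"] / fast["propagation"]

print(f"bottleneck: {bottleneck}")
print(f"propagation speedup with --fast: {speedup:.0f}x")
```

With the numbers above, propagation accounts for most of the full run, and the guided-filter approximation makes that step about 340 times faster.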

94 |

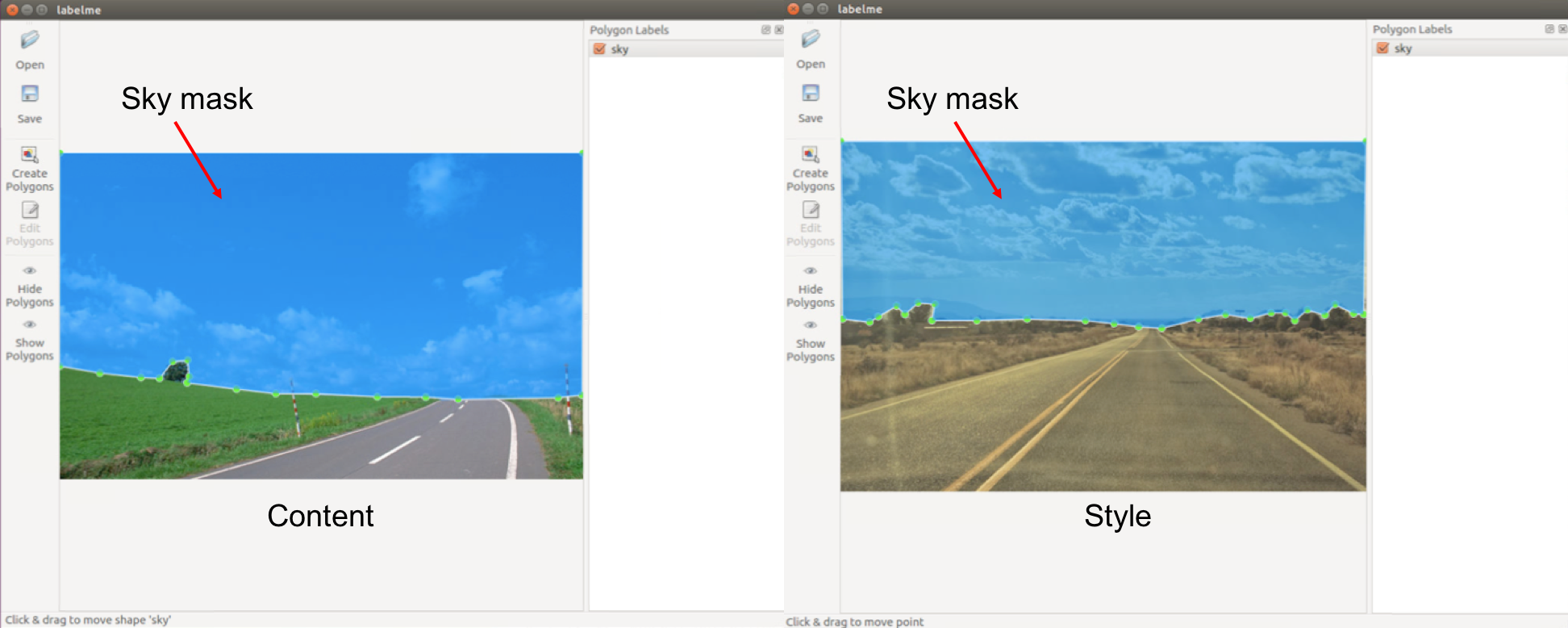

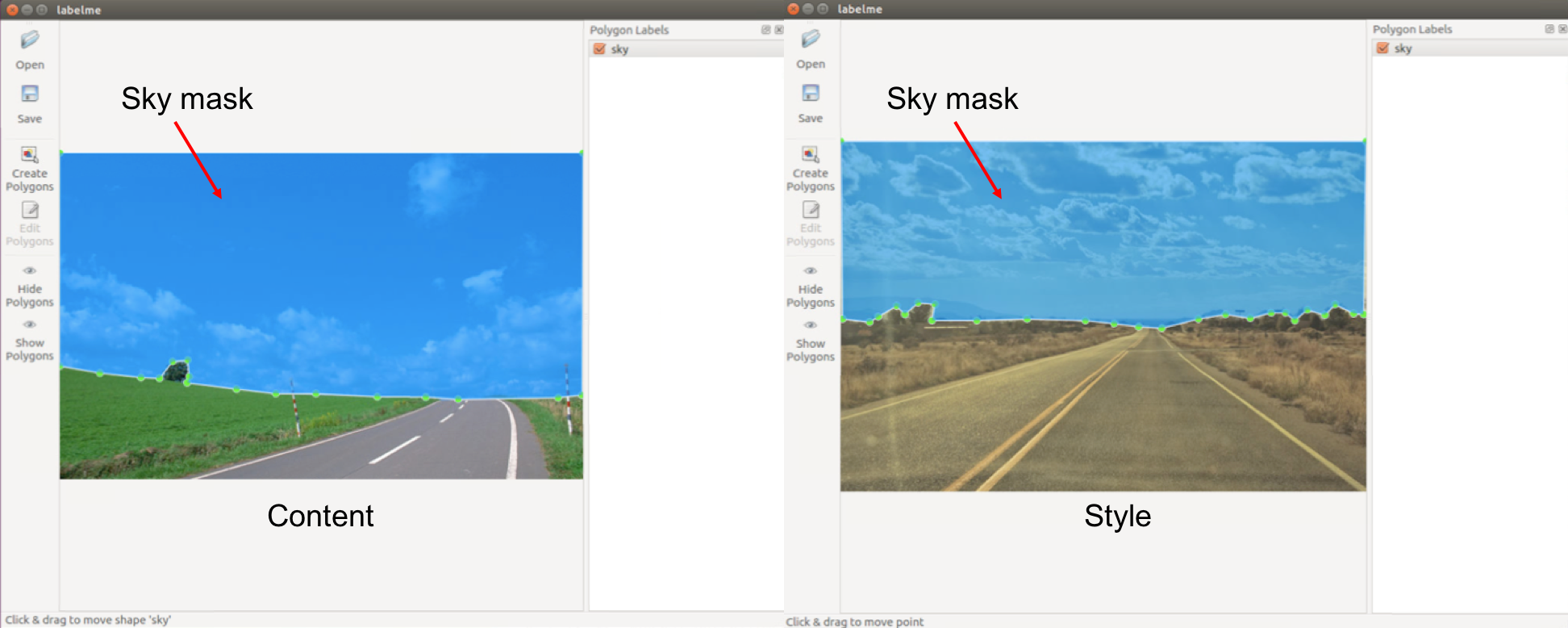

95 | #### Example 2: Transfer style of a style photo to a content photo with manually generated semantic label maps.

96 |

97 | When segmentation masks of content and style photos are available, FastPhotoStyle can utilize content–style

98 | correspondences obtained by matching the semantic labels in the segmentation masks for generating better stylization effects.

99 | In this example, we show how to manually create segmentation masks of content and style photos and use them for photorealistic style transfer.

100 |

101 | ##### Prepare label maps

102 |

103 | - Install the tool [labelme](https://github.com/wkentaro/labelme) and run the following command to start it: `labelme`

104 | - Please refer to [labelme](https://github.com/wkentaro/labelme) for details about how to use this great UI. Basically, do the following steps:

105 | - Click `Open` and load the target image (content or style)

106 | - Click `Create Polygons` and start drawing polygons in content or style image. Note that the corresponding regions (e.g., sky-to-sky) should have the same label. All unlabeled pixels will be automatically labeled as `0`.

107 | - Optional: Click `Edit Polygons` and polish the mask.

108 | - Save the labeling result.

109 |

110 |

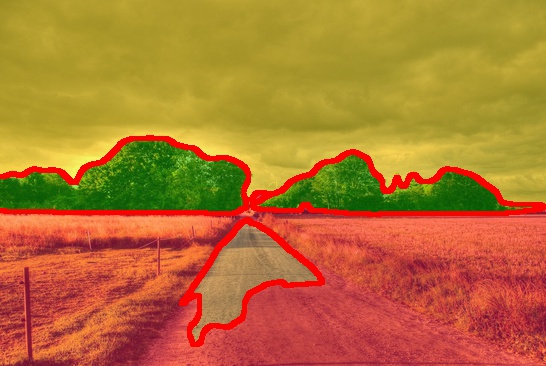

112 | - The labeling result is saved in a `.json` file. Running the following command produces `label.png` under `path/example_json`, which is the label map used in our code. `label.png` is a 1-channel image (it usually looks totally black) that consists of consecutive labels starting from 0.

113 |

114 | ```

115 | labelme_json_to_dataset example.json -o path/example_json

116 | ```
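The requirement that a label map consist of consecutive labels starting from 0 can be checked before stylization. The sketch below assumes the label map has already been loaded into a nested list of integers (how `label.png` is read is left out); the helper names and the toy label maps are hypothetical and not part of this repository.

```python
def unique_labels(label_map):
    """Return the sorted list of distinct labels in a 2D label map."""
    return sorted({value for row in label_map for value in row})


def is_consecutive_from_zero(label_map):
    """True if the labels are exactly 0, 1, ..., K-1 with no gaps."""
    labels = unique_labels(label_map)
    return labels == list(range(len(labels)))


def remap_to_consecutive(label_map):
    """Renumber labels to 0..K-1 while preserving their relative order."""
    lookup = {old: new for new, old in enumerate(unique_labels(label_map))}
    return [[lookup[value] for value in row] for row in label_map]


# Hypothetical toy label maps (rows of per-pixel labels).
content_labels = [[0, 0, 1], [0, 2, 2]]
style_labels = [[0, 5, 5], [9, 9, 9]]

print(is_consecutive_from_zero(content_labels))
print(is_consecutive_from_zero(style_labels))
print(remap_to_consecutive(style_labels))
```

Note that renumbering the content and style maps independently can break the correspondence between them (e.g., sky-to-sky), so a shared lookup should be used when the same label is meant to denote the same region in both images.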

117 |

118 | ##### Stylize with label maps

119 |

120 | ```

121 | python demo.py \

122 | --content_image_path PATH-TO-YOUR-CONTENT-IMAGE \

123 | --content_seg_path PATH-TO-YOUR-CONTENT-LABEL \

124 | --style_image_path PATH-TO-YOUR-STYLE-IMAGE \

125 | --style_seg_path PATH-TO-YOUR-STYLE-LABEL \

126 | --output_image_path PATH-TO-YOUR-OUTPUT

127 | ```
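When the command above is issued from a script, it can be assembled as an argument list. The sketch below uses only the flags that `demo.py` defines; the function name `build_demo_command` and the example paths are hypothetical placeholders for your own files.

```python
import shlex


def build_demo_command(content_image, content_seg, style_image, style_seg,
                       output_image, fast=False):
    """Assemble the demo.py invocation with label maps as an argument list."""
    command = [
        "python", "demo.py",
        "--content_image_path", content_image,
        "--content_seg_path", content_seg,
        "--style_image_path", style_image,
        "--style_seg_path", style_seg,
        "--output_image_path", output_image,
    ]
    if fast:
        # Use the guided image filtering approximation for the smoothing step.
        command.append("--fast")
    return command


# Hypothetical paths; replace them with your own images and label maps.
command = build_demo_command(
    "images/content.png",
    "labels/content_json/label.png",
    "images/style.png",
    "labels/style_json/label.png",
    "results/output.png",
)
print(shlex.join(command))
# To run it from the repository root: subprocess.run(command, check=True)
```

Passing a list rather than a single string avoids shell quoting issues when a path contains spaces.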

128 |

129 | Below is a 3-label transfer example (images and labels are from the [DPST](https://github.com/luanfujun/deep-photo-styletransfer) work by Luan et al.):

130 |

131 |

132 |

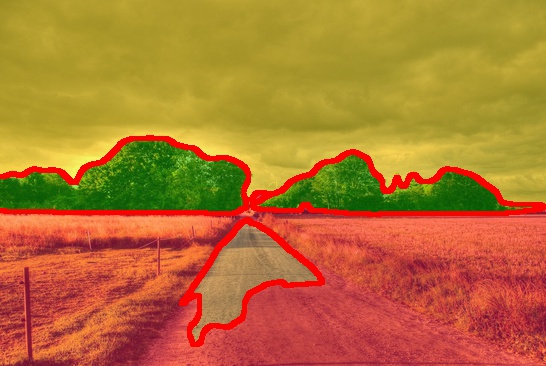

133 | #### Example 3: Transfer the style of a style photo to a content photo with automatically generated semantic label maps.

134 |

135 | In this example, we will show how to use segmentation masks of content and style photos generated by a pretrained segmentation network to achieve better stylization results.

136 | We will use the segmentation network provided by [CSAILVision/semantic-segmentation-pytorch](https://github.com/CSAILVision/semantic-segmentation-pytorch) in this example.

137 | To set up the segmentation network, do the following steps:

138 | - Clone the CSAIL segmentation network from this fork of [CSAILVision/semantic-segmentation-pytorch](https://github.com/CSAILVision/semantic-segmentation-pytorch) using the following command

139 | `git clone https://github.com/mingyuliutw/semantic-segmentation-pytorch segmentation`

140 | - Run the demo code in [CSAILVision/semantic-segmentation-pytorch](https://github.com/CSAILVision/semantic-segmentation-pytorch) to download the network and make sure the environment is set up properly.

141 | - `cd segmentation`

142 | - `./demo_test.sh`

143 | - You should see output messages like

144 | ```

145 | 2018-XX-XX XX:XX:XX-- http://sceneparsing.csail.mit.edu//data/ADEChallengeData2016/images/validation/ADE_val_00001519.jpg

146 | Resolving sceneparsing.csail.mit.edu (sceneparsing.csail.mit.edu)... 128.30.100.255

147 | Connecting to sceneparsing.csail.mit.edu (sceneparsing.csail.mit.edu)|128.30.100.255|:80... connected.

148 | HTTP request sent, awaiting response... 200 OK

149 | Length: 62271 (61K) [image/jpeg]

150 | Saving to: ‘./ADE_val_00001519.jpg’

151 |

152 | ADE_val_00001519.jpg 100%[=====================================>] 60.81K 366KB/s in 0.2s

153 |

154 | 2018-07-25 16:55:00 (366 KB/s) - ‘./ADE_val_00001519.jpg’ saved [62271/62271]

155 |

156 | Namespace(arch_decoder='ppm_bilinear_deepsup', arch_encoder='resnet50_dilated8', batch_size=1, fc_dim=2048, gpu_id=0, imgMaxSize=1000, imgSize=[300, 400, 500, 600], model_path='baseline-resnet50_dilated8-ppm_bilinear_deepsup', num_class=150, num_val=-1, padding_constant=8, result='./', segm_downsampling_rate=8, suffix='_epoch_20.pth', test_img='ADE_val_00001519.jpg')

157 | Loading weights for net_encoder

158 | Loading weights for net_decoder

159 | Inference done!

160 | ```

161 | - Go back to the root folder `cd ..`

162 |

163 | - Now, we are ready to use the segmentation network trained on ADE20K to automatically generate the segmentation masks.

164 | - To run the demo, you can simply type `./demo_example3.sh` or follow the steps below.

165 | - Create image and output folders and make sure nothing is inside the folders. `mkdir images && mkdir results`

166 | - Go to the image folder: `cd images`

167 | - Download content image 3: `axel -n 1 https://pre00.deviantart.net/f1a6/th/pre/i/2010/019/0/e/country_road_hdr_by_mirre89.jpg --output=content3.png`

168 | - Download style image 3: `axel -n 1 https://nerdist.com/wp-content/uploads/2017/11/Stranger_Things_S2_news_Images_V03-1024x481.jpg --output=style3.png;`

169 | - These images are huge. We need to resize them first. Run

170 | - `convert -resize 50% content3.png content3.png`

171 | - `convert -resize 50% style3.png style3.png`

172 | - Go back to the root folder: `cd ..`

173 | - **Update the python library path by** `export PYTHONPATH=$PYTHONPATH:segmentation`

174 | - We will now run the demo code, which first computes the segmentation masks of the content and style images and then performs photorealistic style transfer.

175 | `python demo_with_ade20k_ssn.py --output_visualization` or `python demo_with_ade20k_ssn.py --fast --output_visualization`

176 | - You should see output messages like

177 | ```

178 | Loading weights for net_encoder

179 | Loading weights for net_decoder

180 | Resize image: (546,366)->(546,366)

181 | Resize image: (485,273)->(485,273)

182 | Elapsed time in stylization: 0.890762

183 | Elapsed time in propagation: 0.014808

184 | Elapsed time in post processing: 0.197138

185 | ```

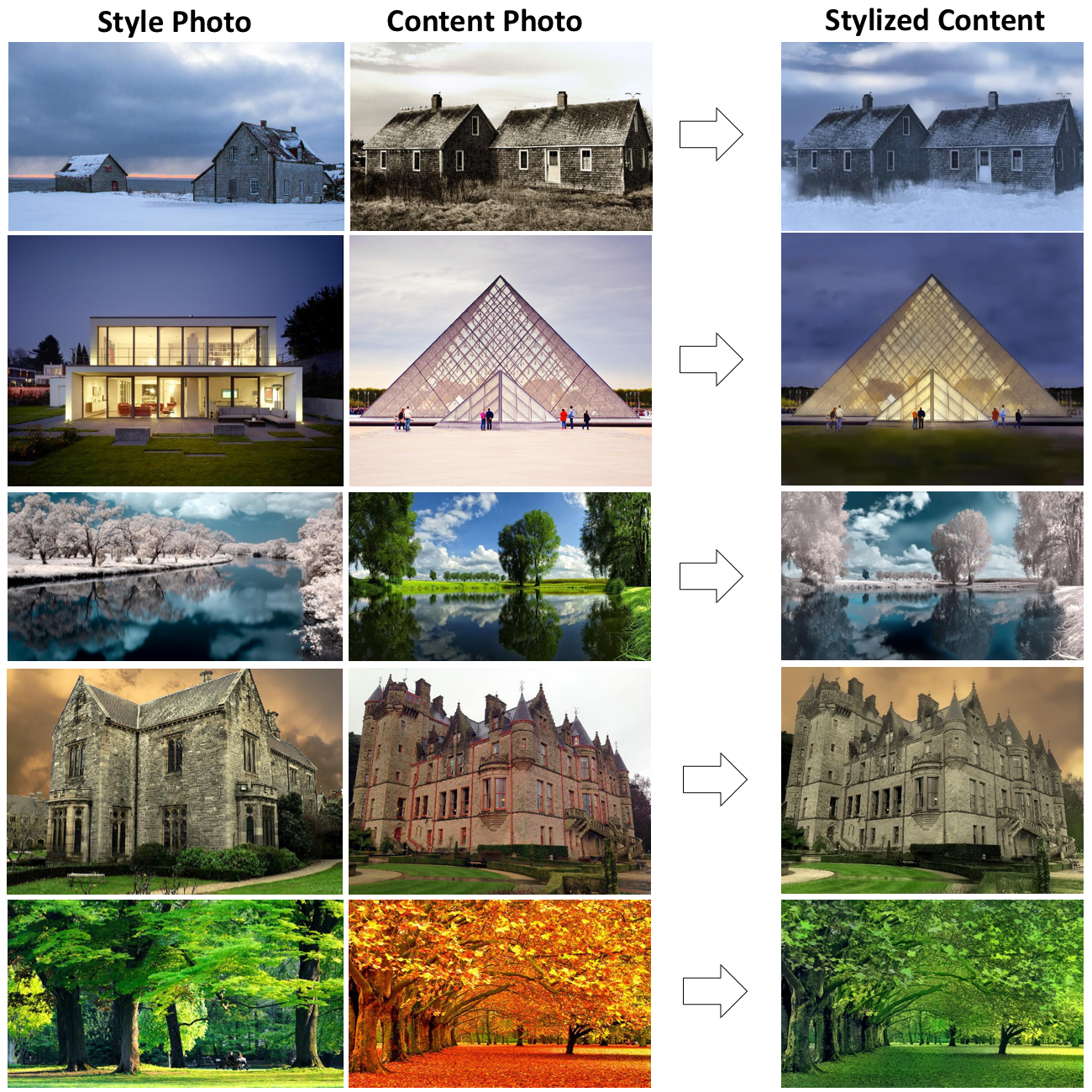

186 | - You should see an output image like

187 |

188 | | Input Style Photo | Input Content Photo | Output Stylization Result |

189 | |-------------------|---------------------|---------------------------|

190 | | | | |

191 |

192 | - We can check out the segmentation results in the `results` folder.

193 |

194 | | Segmentation of the Style Photo | Segmentation of the Content Photo |

195 | |---------------------------------|-----------------------------------|

196 | | | |

197 |

198 |

199 | ### Use docker image

200 |

201 | We provide a docker image for testing the code.

202 |

203 | 1. Install docker-ce. Follow the instructions on the [Docker page](https://docs.docker.com/install/linux/docker-ce/ubuntu/#install-docker-ce-1).

204 | 2. Install nvidia-docker. Follow the instructions on the [NVIDIA-DOCKER README page](https://github.com/NVIDIA/nvidia-docker).

205 | 3. Build the docker image `docker build -t your-docker-image:v1.0 .`

206 | 4. Run an interactive session `docker run -v YOUR_PATH:YOUR_PATH --runtime=nvidia -i -t your-docker-image:v1.0 /bin/bash`

207 | 5. `cd YOUR_PATH`

208 | 6. `./demo_example1.sh`

209 |

210 | ## Acknowledgement

211 |

212 | - We express our gratitude for the great work [DPST](https://www.cs.cornell.edu/~fujun/files/style-cvpr17/style-cvpr17.pdf) by Luan et al. and its [Torch](https://github.com/luanfujun/deep-photo-styletransfer) and [Tensorflow](https://github.com/LouieYang/deep-photo-styletransfer-tf) implementations.

213 |

--------------------------------------------------------------------------------

/ade20k_semantic_rel.npy:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/ade20k_semantic_rel.npy

--------------------------------------------------------------------------------

/converter.py:

--------------------------------------------------------------------------------

1 | import os

2 |

3 | import torch

4 | import torch.nn as nn

5 | from torch.utils.serialization import load_lua

6 |

7 | from models import VGGEncoder, VGGDecoder

8 | from photo_wct import PhotoWCT

9 |

10 |

11 | def weight_assign(lua, pth, maps):

12 | for k, v in maps.items():

13 | getattr(pth, k).weight = nn.Parameter(lua.get(v).weight.float())

14 | getattr(pth, k).bias = nn.Parameter(lua.get(v).bias.float())

15 |

16 |

17 | def photo_wct_loader(p_wct):

18 | p_wct.e1.load_state_dict(torch.load('pth_models/vgg_normalised_conv1.pth'))

19 | p_wct.d1.load_state_dict(torch.load('pth_models/feature_invertor_conv1.pth'))

20 | p_wct.e2.load_state_dict(torch.load('pth_models/vgg_normalised_conv2.pth'))

21 | p_wct.d2.load_state_dict(torch.load('pth_models/feature_invertor_conv2.pth'))

22 | p_wct.e3.load_state_dict(torch.load('pth_models/vgg_normalised_conv3.pth'))

23 | p_wct.d3.load_state_dict(torch.load('pth_models/feature_invertor_conv3.pth'))

24 | p_wct.e4.load_state_dict(torch.load('pth_models/vgg_normalised_conv4.pth'))

25 | p_wct.d4.load_state_dict(torch.load('pth_models/feature_invertor_conv4.pth'))

26 |

27 |

28 | if __name__ == '__main__':

29 | if not os.path.exists('pth_models'):

30 | os.mkdir('pth_models')

31 |

32 | ## VGGEncoder1

33 | vgg1 = load_lua('models/vgg_normalised_conv1_1_mask.t7')

34 | e1 = VGGEncoder(1)

35 | weight_assign(vgg1, e1, {

36 | 'conv0': 0,

37 | 'conv1_1': 2,

38 | })

39 | torch.save(e1.state_dict(), 'pth_models/vgg_normalised_conv1.pth')

40 |

41 | ## VGGDecoder1

42 | inv1 = load_lua('models/feature_invertor_conv1_1_mask.t7')

43 | d1 = VGGDecoder(1)

44 | weight_assign(inv1, d1, {

45 | 'conv1_1': 1,

46 | })

47 | torch.save(d1.state_dict(), 'pth_models/feature_invertor_conv1.pth')

48 |

49 | ## VGGEncoder2

50 | vgg2 = load_lua('models/vgg_normalised_conv2_1_mask.t7')

51 | e2 = VGGEncoder(2)

52 | weight_assign(vgg2, e2, {

53 | 'conv0': 0,

54 | 'conv1_1': 2,

55 | 'conv1_2': 5,

56 | 'conv2_1': 9,

57 | })

58 | torch.save(e2.state_dict(), 'pth_models/vgg_normalised_conv2.pth')

59 |

60 | ## VGGDecoder2

61 | inv2 = load_lua('models/feature_invertor_conv2_1_mask.t7')

62 | d2 = VGGDecoder(2)

63 | weight_assign(inv2, d2, {

64 | 'conv2_1': 1,

65 | 'conv1_2': 5,

66 | 'conv1_1': 8,

67 | })

68 | torch.save(d2.state_dict(), 'pth_models/feature_invertor_conv2.pth')

69 |

70 | ## VGGEncoder3

71 | vgg3 = load_lua('models/vgg_normalised_conv3_1_mask.t7')

72 | e3 = VGGEncoder(3)

73 | weight_assign(vgg3, e3, {

74 | 'conv0': 0,

75 | 'conv1_1': 2,

76 | 'conv1_2': 5,

77 | 'conv2_1': 9,

78 | 'conv2_2': 12,

79 | 'conv3_1': 16,

80 | })

81 | torch.save(e3.state_dict(), 'pth_models/vgg_normalised_conv3.pth')

82 |

83 | ## VGGDecoder3

84 | inv3 = load_lua('models/feature_invertor_conv3_1_mask.t7')

85 | d3 = VGGDecoder(3)

86 | weight_assign(inv3, d3, {

87 | 'conv3_1': 1,

88 | 'conv2_2': 5,

89 | 'conv2_1': 8,

90 | 'conv1_2': 12,

91 | 'conv1_1': 15,

92 | })

93 | torch.save(d3.state_dict(), 'pth_models/feature_invertor_conv3.pth')

94 |

95 | ## VGGEncoder4

96 | vgg4 = load_lua('models/vgg_normalised_conv4_1_mask.t7')

97 | e4 = VGGEncoder(4)

98 | weight_assign(vgg4, e4, {

99 | 'conv0': 0,

100 | 'conv1_1': 2,

101 | 'conv1_2': 5,

102 | 'conv2_1': 9,

103 | 'conv2_2': 12,

104 | 'conv3_1': 16,

105 | 'conv3_2': 19,

106 | 'conv3_3': 22,

107 | 'conv3_4': 25,

108 | 'conv4_1': 29,

109 | })

110 | torch.save(e4.state_dict(), 'pth_models/vgg_normalised_conv4.pth')

111 |

112 | ## VGGDecoder4

113 | inv4 = load_lua('models/feature_invertor_conv4_1_mask.t7')

114 | d4 = VGGDecoder(4)

115 | weight_assign(inv4, d4, {

116 | 'conv4_1': 1,

117 | 'conv3_4': 5,

118 | 'conv3_3': 8,

119 | 'conv3_2': 11,

120 | 'conv3_1': 14,

121 | 'conv2_2': 18,

122 | 'conv2_1': 21,

123 | 'conv1_2': 25,

124 | 'conv1_1': 28,

125 | })

126 | torch.save(d4.state_dict(), 'pth_models/feature_invertor_conv4.pth')

127 |

128 | p_wct = PhotoWCT()

129 | photo_wct_loader(p_wct)

130 | torch.save(p_wct.state_dict(), 'PhotoWCTModels/photo_wct.pth')

131 |

--------------------------------------------------------------------------------

/demo.py:

--------------------------------------------------------------------------------

1 | """

2 | Copyright (C) 2018 NVIDIA Corporation. All rights reserved.

3 | Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

4 | """

5 |

6 | from __future__ import print_function

7 | import argparse

8 | import torch

9 | import process_stylization

10 | from photo_wct import PhotoWCT

11 | parser = argparse.ArgumentParser(description='Photorealistic Image Stylization')

12 | parser.add_argument('--model', default='./PhotoWCTModels/photo_wct.pth')

13 | parser.add_argument('--content_image_path', default='./images/content1.png')

14 | parser.add_argument('--content_seg_path', default=[])

15 | parser.add_argument('--style_image_path', default='./images/style1.png')

16 | parser.add_argument('--style_seg_path', default=[])

17 | parser.add_argument('--output_image_path', default='./results/example1.png')

18 | parser.add_argument('--save_intermediate', action='store_true', default=False)

19 | parser.add_argument('--fast', action='store_true', default=False)

20 | parser.add_argument('--no_post', action='store_true', default=False)

21 | parser.add_argument('--cuda', type=int, default=1, help='Enable CUDA.')

22 | args = parser.parse_args()

23 |

24 | # Load model

25 | p_wct = PhotoWCT()

26 | p_wct.load_state_dict(torch.load(args.model))

27 |

28 | if args.fast:

29 | from photo_gif import GIFSmoothing

30 | p_pro = GIFSmoothing(r=35, eps=0.001)

31 | else:

32 | from photo_smooth import Propagator

33 | p_pro = Propagator()

34 | if args.cuda:

35 | p_wct.cuda(0)

36 |

37 | process_stylization.stylization(

38 | stylization_module=p_wct,

39 | smoothing_module=p_pro,

40 | content_image_path=args.content_image_path,

41 | style_image_path=args.style_image_path,

42 | content_seg_path=args.content_seg_path,

43 | style_seg_path=args.style_seg_path,

44 | output_image_path=args.output_image_path,

45 | cuda=args.cuda,

46 | save_intermediate=args.save_intermediate,

47 | no_post=args.no_post

48 | )

49 |

--------------------------------------------------------------------------------

/demo_example1.sh:

--------------------------------------------------------------------------------

1 | mkdir images -p && mkdir results -p;

2 | rm images/content1.png -rf;

3 | rm images/style1.png -rf;

4 | rm results/demo_result_example1.png

5 | cd images;

6 | axel -n 1 http://freebigpictures.com/wp-content/uploads/shady-forest.jpg --output=content1.png;

7 | axel -n 1 https://vignette.wikia.nocookie.net/strangerthings8338/images/e/e0/Wiki-background.jpeg/revision/latest?cb=20170522192233 --output=style1.png;

8 | convert -resize 25% content1.png content1.png;

9 | convert -resize 50% style1.png style1.png;

10 | cd ..;

11 | python demo.py;

12 |

--------------------------------------------------------------------------------

/demo_example1_fast.sh:

--------------------------------------------------------------------------------

1 | mkdir images -p && mkdir results -p;

2 | rm images/content1.png -rf;

3 | rm images/style1.png -rf;

4 | rm results/demo_result_example1.png

5 | cd images;

6 | axel -n 1 http://freebigpictures.com/wp-content/uploads/shady-forest.jpg --output=content1.png;

7 | axel -n 1 https://vignette.wikia.nocookie.net/strangerthings8338/images/e/e0/Wiki-background.jpeg/revision/latest?cb=20170522192233 --output=style1.png;

8 | convert -resize 25% content1.png content1.png;

9 | convert -resize 50% style1.png style1.png;

10 | cd ..;

11 | python demo.py --fast --output_image_path results/example2.png;

12 |

--------------------------------------------------------------------------------

/demo_example3.sh:

--------------------------------------------------------------------------------

1 | mkdir images -p && mkdir results -p;

2 | rm images/content3.png -rf;

3 | rm images/style3.png -rf;

4 | rm results/content3_seg.pgm -rf;

5 | rm results/style3_seg.pgm -rf;

6 | rm results/stylization_with_auto_segmentation.png -rf;

7 | export PYTHONPATH=$PYTHONPATH:segmentation

8 | cd images;

9 | axel -n 1 https://pre00.deviantart.net/f1a6/th/pre/i/2010/019/0/e/country_road_hdr_by_mirre89.jpg --output=content3.png;

10 | axel -n 1 https://nerdist.com/wp-content/uploads/2017/11/Stranger_Things_S2_news_Images_V03-1024x481.jpg --output=style3.png;

11 | convert -resize 50% content3.png content3.png;

12 | convert -resize 50% style3.png style3.png;

13 | cd ..;

14 | python demo_with_ade20k_ssn.py;

15 |

--------------------------------------------------------------------------------

/demo_mask_poly.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/demo_mask_poly.png

--------------------------------------------------------------------------------

/demo_result_content3_seg.pgm.visualization.jpg:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/demo_result_content3_seg.pgm.visualization.jpg

--------------------------------------------------------------------------------

/demo_result_example1.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/demo_result_example1.png

--------------------------------------------------------------------------------

/demo_result_example2.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/demo_result_example2.png

--------------------------------------------------------------------------------

/demo_result_example3.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/demo_result_example3.png

--------------------------------------------------------------------------------

/demo_result_style3_seg.pgm.visualization.jpg:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/demo_result_style3_seg.pgm.visualization.jpg

--------------------------------------------------------------------------------

/demo_with_ade20k_ssn.py:

--------------------------------------------------------------------------------

1 | """

2 | Copyright (C) 2018 NVIDIA Corporation. All rights reserved.

3 | Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

4 | """

5 | from __future__ import print_function

6 | import argparse

7 | import os

8 | import torch

9 | import process_stylization_ade20k_ssn

10 | from torch import nn

11 | from photo_wct import PhotoWCT

12 | from segmentation.dataset import round2nearest_multiple

13 | from segmentation.models import ModelBuilder, SegmentationModule

14 | from lib.nn import user_scattered_collate, async_copy_to

15 | from lib.utils import as_numpy, mark_volatile

16 | from scipy.misc import imread, imresize

17 | import cv2

18 | from torchvision import transforms

19 | import numpy as np

20 |

21 | parser = argparse.ArgumentParser(description='Photorealistic Image Stylization')

22 | parser.add_argument('--model_path', help='folder to model path', default='baseline-resnet50_dilated8-ppm_bilinear_deepsup')

23 | parser.add_argument('--suffix', default='_epoch_20.pth', help="which snapshot to load")

24 | parser.add_argument('--arch_encoder', default='resnet50_dilated8', help="architecture of net_encoder")

25 | parser.add_argument('--arch_decoder', default='ppm_bilinear_deepsup', help="architecture of net_decoder")

26 | parser.add_argument('--fc_dim', default=2048, type=int, help='number of features between encoder and decoder')

27 | parser.add_argument('--num_val', default=-1, type=int, help='number of images to evaluate')

28 | parser.add_argument('--num_class', default=150, type=int, help='number of classes')

29 | parser.add_argument('--batch_size', default=1, type=int, help='batchsize. currently only supports 1')

30 | parser.add_argument('--imgSize', default=[300, 400, 500, 600], nargs='+', type=int, help='list of input image sizes. ' 'for multiscale testing, e.g. 300 400 500')

31 | parser.add_argument('--imgMaxSize', default=1000, type=int, help='maximum input image size of long edge')

32 | parser.add_argument('--padding_constant', default=8, type=int, help='maximum downsampling rate of the network')

33 | parser.add_argument('--segm_downsampling_rate', default=8, type=int, help='downsampling rate of the segmentation label')

34 | parser.add_argument('--gpu_id', default=0, type=int, help='gpu_id for evaluation')

35 |

36 | parser.add_argument('--model', default='./PhotoWCTModels/photo_wct.pth', help='Path to the PhotoWCT model. The weights are provided by the PhotoWCT submodule; run `git submodule update --init --recursive` to pull them.')

37 | parser.add_argument('--content_image_path', default="./images/content3.png")

38 | parser.add_argument('--content_seg_path', default='./results/content3_seg.pgm')

39 | parser.add_argument('--style_image_path', default='./images/style3.png')

40 | parser.add_argument('--style_seg_path', default='./results/style3_seg.pgm')

41 | parser.add_argument('--output_image_path', default='./results/example3.png')

42 | parser.add_argument('--save_intermediate', action='store_true', default=False)

43 | parser.add_argument('--fast', action='store_true', default=False)

44 | parser.add_argument('--no_post', action='store_true', default=False)

45 | parser.add_argument('--output_visualization', action='store_true', default=False)

46 | parser.add_argument('--cuda', type=int, default=1, help='Enable CUDA.')

47 | parser.add_argument('--label_mapping', type=str, default='ade20k_semantic_rel.npy')

48 | args = parser.parse_args()

49 |

50 | segReMapping = process_stylization_ade20k_ssn.SegReMapping(args.label_mapping)

51 |

52 | # Paths of the segmentation model weights (relative to the working directory)

53 | SEG_NET_PATH = 'segmentation'

54 | args.weights_encoder = os.path.join(SEG_NET_PATH, args.model_path, 'encoder' + args.suffix)

55 | args.weights_decoder = os.path.join(SEG_NET_PATH, args.model_path, 'decoder' + args.suffix)

56 | args.arch_encoder = 'resnet50_dilated8'

57 | args.arch_decoder = 'ppm_bilinear_deepsup'

58 | args.fc_dim = 2048

59 |

60 | # Load semantic segmentation network module

61 | builder = ModelBuilder()

62 | net_encoder = builder.build_encoder(arch=args.arch_encoder, fc_dim=args.fc_dim, weights=args.weights_encoder)

63 | net_decoder = builder.build_decoder(arch=args.arch_decoder, fc_dim=args.fc_dim, num_class=args.num_class, weights=args.weights_decoder, use_softmax=True)

64 | crit = nn.NLLLoss(ignore_index=-1)

65 | segmentation_module = SegmentationModule(net_encoder, net_decoder, crit)

66 | segmentation_module.cuda()

67 | segmentation_module.eval()

68 | transform = transforms.Compose([transforms.Normalize(mean=[102.9801, 115.9465, 122.7717], std=[1., 1., 1.])])

69 |

70 | # Load FastPhotoStyle model

71 | p_wct = PhotoWCT()

72 | p_wct.load_state_dict(torch.load(args.model))

73 | if args.fast:

74 |     from photo_gif import GIFSmoothing

75 |     p_pro = GIFSmoothing(r=35, eps=0.001)

76 | else:

77 |     from photo_smooth import Propagator

78 |     p_pro = Propagator()

79 | if args.cuda:

80 |     p_wct.cuda(0)

81 |

82 |

83 | def segment_this_img(f):

84 |     img = imread(f, mode='RGB')

85 |     img = img[:, :, ::-1]  # RGB to BGR, matching the BGR-ordered means in `transform`

86 |     ori_height, ori_width, _ = img.shape

87 |     img_resized_list = []

88 |     for this_short_size in args.imgSize:

89 |         scale = this_short_size / float(min(ori_height, ori_width))

90 |         target_height, target_width = int(ori_height * scale), int(ori_width * scale)

91 |         target_height = round2nearest_multiple(target_height, args.padding_constant)

92 |         target_width = round2nearest_multiple(target_width, args.padding_constant)

93 |         img_resized = cv2.resize(img.copy(), (target_width, target_height))

94 |         img_resized = img_resized.astype(np.float32)

95 |         img_resized = img_resized.transpose((2, 0, 1))

96 |         img_resized = transform(torch.from_numpy(img_resized))

97 |         img_resized = torch.unsqueeze(img_resized, 0)

98 |         img_resized_list.append(img_resized)

99 |     input = dict()

100 |     input['img_ori'] = img.copy()

101 |     input['img_data'] = [x.contiguous() for x in img_resized_list]

102 |     segSize = (img.shape[0], img.shape[1])

103 |     with torch.no_grad():

104 |         pred = torch.zeros(1, args.num_class, segSize[0], segSize[1])

105 |         for timg in img_resized_list:

106 |             feed_dict = dict()

107 |             feed_dict['img_data'] = timg.cuda()

108 |             feed_dict = async_copy_to(feed_dict, args.gpu_id)

109 |             # forward pass

110 |             pred_tmp = segmentation_module(feed_dict, segSize=segSize)

111 |             pred = pred + pred_tmp.cpu() / len(args.imgSize)

112 |         _, preds = torch.max(pred, dim=1)

113 |         preds = as_numpy(preds.squeeze(0))

114 |     return preds

115 |

116 |

117 | cont_seg = segment_this_img(args.content_image_path)

118 | cv2.imwrite(args.content_seg_path, cont_seg)

119 | style_seg = segment_this_img(args.style_image_path)

120 | cv2.imwrite(args.style_seg_path, style_seg)

121 | process_stylization_ade20k_ssn.stylization(

122 |     stylization_module=p_wct,

123 |     smoothing_module=p_pro,

124 |     content_image_path=args.content_image_path,

125 |     style_image_path=args.style_image_path,

126 |     content_seg_path=args.content_seg_path,

127 |     style_seg_path=args.style_seg_path,

128 |     output_image_path=args.output_image_path,

129 |     cuda=True,

130 |     save_intermediate=args.save_intermediate,

131 |     no_post=args.no_post,

132 |     label_remapping=segReMapping,

133 |     output_visualization=args.output_visualization

134 | )

135 |

--------------------------------------------------------------------------------
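
The multi-scale resizing step in `segment_this_img` can be sketched on its own, without the segmentation network. This is a hedged illustration only: `round_up_to_multiple` is a hypothetical stand-in for `round2nearest_multiple` (imported from `segmentation.dataset`, whose body is not shown here) and assumes that helper rounds up to the next multiple; `multiscale_sizes` is likewise an illustrative name.

```python
def round_up_to_multiple(x, p):
    """Assumed behavior: smallest multiple of p that is >= x (for x >= 1)."""
    return ((x - 1) // p + 1) * p


def multiscale_sizes(ori_height, ori_width, short_sizes, padding_constant=8):
    """Return one (height, width) pair per requested short-edge size.

    Each pair keeps the aspect ratio of the original image (up to integer
    truncation) and is then padded up to a multiple of padding_constant.
    """
    sizes = []
    for short in short_sizes:
        # Scale so that the shorter edge becomes `short` pixels.
        scale = short / float(min(ori_height, ori_width))
        target_height = int(ori_height * scale)
        target_width = int(ori_width * scale)
        # Align both edges with the network's downsampling rate.
        target_height = round_up_to_multiple(target_height, padding_constant)
        target_width = round_up_to_multiple(target_width, padding_constant)
        sizes.append((target_height, target_width))
    return sizes


if __name__ == "__main__":
    print(multiscale_sizes(480, 640, [300, 600]))
```

Each resized copy is fed through the segmentation module separately, and the demo averages the resulting class scores before taking the per-pixel maximum.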

/demo_with_segmentation.gif:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/NVIDIA/FastPhotoStyle/af0c8fecce58aa71f76488546231214f6684be02/demo_with_segmentation.gif

--------------------------------------------------------------------------------

/download_models.py:

--------------------------------------------------------------------------------

1 | # Download code taken from https://stackoverflow.com/questions/25010369/wget-curl-large-file-from-google-drive/39225039#39225039

2 | import requests

3 |

4 | def download_file_from_google_drive(id, destination):

5 |     URL = "https://docs.google.com/uc?export=download"

6 |

7 |     session = requests.Session()

8 |

9 |     response = session.get(URL, params = { 'id' : id }, stream = True)

10 |     token = get_confirm_token(response)

11 |

12 |     if token:

13 |         params = { 'id' : id, 'confirm' : token }

14 |         response = session.get(URL, params = params, stream = True)

15 |

16 |     save_response_content(response, destination)

17 |

18 | def get_confirm_token(response):

19 |     for key, value in response.cookies.items():

20 |         if key.startswith('download_warning'):

21 |             return value

22 |

23 |     return None

24 |

25 | def save_response_content(response, destination):

26 |     CHUNK_SIZE = 32768

27 |

28 |     with open(destination, "wb") as f:

29 |         for chunk in response.iter_content(CHUNK_SIZE):

30 |             if chunk: # filter out keep-alive new chunks

31 |                 f.write(chunk)

32 |

33 | file_id = '1ENgQm9TgabE1R99zhNf5q6meBvX6WFuq'

34 | destination = './models.zip'

35 | download_file_from_google_drive(file_id, destination)

--------------------------------------------------------------------------------
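
The cookie scan in `get_confirm_token` can be exercised offline by passing a plain dictionary in place of `response.cookies`. This is a minimal sketch: `find_confirm_token` and the sample cookie names and values are hypothetical, and it makes no claim about which cookies Google Drive currently sets.

```python
def find_confirm_token(cookies):
    """Return the value of the first cookie whose name starts with
    'download_warning', or None if there is no such cookie."""
    for key, value in cookies.items():
        if key.startswith('download_warning'):
            return value
    return None


if __name__ == "__main__":
    # Hypothetical cookie jars, used only to show both branches.
    with_warning = {'session': 'abc', 'download_warning_123': 'tok42'}
    without_warning = {'session': 'abc'}
    print(find_confirm_token(with_warning))
    print(find_confirm_token(without_warning))
```

When no token is found, `download_file_from_google_drive` skips the second request and saves the first response directly.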

/download_models.sh:

--------------------------------------------------------------------------------

1 | #!/bin/bash

2 | python download_models.py

3 | unzip models.zip

4 |

--------------------------------------------------------------------------------

/models.py:

--------------------------------------------------------------------------------

1 | """

2 | Copyright (C) 2018 NVIDIA Corporation. All rights reserved.

3 | Licensed under the CC BY-NC-SA 4.0 license (https://creativecommons.org/licenses/by-nc-sa/4.0/legalcode).

4 | """

5 | import torch.nn as nn

6 |

7 |

8 | class VGGEncoder(nn.Module):

9 |     def __init__(self, level):

10 |         super(VGGEncoder, self).__init__()

11 |         self.level = level

12 |

13 |         # 224 x 224

14 |         self.conv0 = nn.Conv2d(3, 3, 1, 1, 0)

15 |

16 |         self.pad1_1 = nn.ReflectionPad2d((1, 1, 1, 1))

17 |         # 226 x 226

18 |         self.conv1_1 = nn.Conv2d(3, 64, 3, 1, 0)

19 |         self.relu1_1 = nn.ReLU(inplace=True)

20 |         # 224 x 224

21 |

22 |         if level < 2: return

23 |

24 |         self.pad1_2 = nn.ReflectionPad2d((1, 1, 1, 1))

25 |         self.conv1_2 = nn.Conv2d(64, 64, 3, 1, 0)

26 |         self.relu1_2 = nn.ReLU(inplace=True)

27 |         # 224 x 224

28 |         self.maxpool1 = nn.MaxPool2d(kernel_size=2, stride=2, return_indices=True)

29 |         # 112 x 112

30 |

31 |         self.pad2_1 = nn.ReflectionPad2d((1, 1, 1, 1))

32 |         self.conv2_1 = nn.Conv2d(64, 128, 3, 1, 0)

33 |         self.relu2_1 = nn.ReLU(inplace=True)

34 |         # 112 x 112

35 |

36 |         if level < 3: return

37 |

38 |         self.pad2_2 = nn.ReflectionPad2d((1, 1, 1, 1))

39 |         self.conv2_2 = nn.Conv2d(128, 128, 3, 1, 0)

40 |         self.relu2_2 = nn.ReLU(inplace=True)

41 |         # 112 x 112

42 |

43 |         self.maxpool2 = nn.MaxPool2d(kernel_size=2, stride=2, return_indices=True)

44 |         # 56 x 56

45 |

46 |         self.pad3_1 = nn.ReflectionPad2d((1, 1, 1, 1))

47 |         self.conv3_1 = nn.Conv2d(128, 256, 3, 1, 0)

48 |         self.relu3_1 = nn.ReLU(inplace=True)

49 |         # 56 x 56

50 |

51 |         if level < 4: return

52 |

53 |         self.pad3_2 = nn.ReflectionPad2d((1, 1, 1, 1))

54 |         self.conv3_2 = nn.Conv2d(256, 256, 3, 1, 0)

55 |         self.relu3_2 = nn.ReLU(inplace=True)

56 |         # 56 x 56

57 |

58 |         self.pad3_3 = nn.ReflectionPad2d((1, 1, 1, 1))

59 |         self.conv3_3 = nn.Conv2d(256, 256, 3, 1, 0)

60 |         self.relu3_3 = nn.ReLU(inplace=True)

61 |         # 56 x 56

62 |

63 |         self.pad3_4 = nn.ReflectionPad2d((1, 1, 1, 1))

64 |         self.conv3_4 = nn.Conv2d(256, 256, 3, 1, 0)

65 |         self.relu3_4 = nn.ReLU(inplace=True)

66 |         # 56 x 56

67 |

68 |         self.maxpool3 = nn.MaxPool2d(kernel_size=2, stride=2, return_indices=True)

69 |         # 28 x 28

70 |

71 |         self.pad4_1 = nn.ReflectionPad2d((1, 1, 1, 1))

72 |         self.conv4_1 = nn.Conv2d(256, 512, 3, 1, 0)

73 |         self.relu4_1 = nn.ReLU(inplace=True)

74 |         # 28 x 28

75 |

76 |     def forward(self, x):

77 |         out = self.conv0(x)