├── .gitignore
├── CODE_OF_CONDUCT.md
├── CONTRIBUTING.md
├── LICENSE
├── NOTICE
├── README.md
├── bin
│   └── awsdevhour.ts
├── cdk.json
├── jest.config.js
├── lib
│   ├── awsdevhour-backend-pipeline-stack.ts
│   ├── awsdevhour-backend-pipeline-stage.ts
│   └── awsdevhour-stack.ts
├── package-lock.json
├── package.json
├── public
│   └── index.html
├── reklayer
│   └── pillow-goes-here.txt
├── rekognitionlambda
│   ├── event.json
│   └── index.py
├── servicelambda
│   └── index.py
├── test
│   └── awsdevhour.test.ts
└── tsconfig.json

/.gitignore:

--------------------------------------------------------------------------------

1 | # Created by https://www.gitignore.io/api/osx,linux,python,windows,pycharm,visualstudiocode,node

2 | # Edit at https://www.gitignore.io/?templates=osx,linux,python,windows,pycharm,visualstudiocode,node

3 |

4 | ### Linux ###

5 | *~

6 |

7 | # temporary files which can be created if a process still has a handle open of a deleted file

8 | .fuse_hidden*

9 |

10 | # KDE directory preferences

11 | .directory

12 |

13 | # Linux trash folder which might appear on any partition or disk

14 | .Trash-*

15 |

16 | # .nfs files are created when an open file is removed but is still being accessed

17 | .nfs*

18 |

19 | ### Node ###

20 | # Logs

21 | logs

22 | *.log

23 | npm-debug.log*

24 | yarn-debug.log*

25 | yarn-error.log*

26 | lerna-debug.log*

27 |

28 | # Diagnostic reports (https://nodejs.org/api/report.html)

29 | report.[0-9]*.[0-9]*.[0-9]*.[0-9]*.json

30 |

31 | # Runtime data

32 | pids

33 | *.pid

34 | *.seed

35 | *.pid.lock

36 |

37 | # Directory for instrumented libs generated by jscoverage/JSCover

38 | lib-cov

39 |

40 | # Coverage directory used by tools like istanbul

41 | coverage

42 | *.lcov

43 |

44 | # nyc test coverage

45 | .nyc_output

46 |

47 | # Grunt intermediate storage (https://gruntjs.com/creating-plugins#storing-task-files)

48 | .grunt

49 |

50 | # Bower dependency directory (https://bower.io/)

51 | bower_components

52 |

53 | # node-waf configuration

54 | .lock-wscript

55 |

56 | # Compiled binary addons (https://nodejs.org/api/addons.html)

57 | build/Release

58 |

59 | # Dependency directories

60 | node_modules/

61 | jspm_packages/

62 |

63 | # TypeScript v1 declaration files

64 | typings/

65 |

66 | # TypeScript cache

67 | *.tsbuildinfo

68 |

69 | # Optional npm cache directory

70 | .npm

71 |

72 | # Optional eslint cache

73 | .eslintcache

74 |

75 | # Optional REPL history

76 | .node_repl_history

77 |

78 | # Output of 'npm pack'

79 | *.tgz

80 |

81 | # Yarn Integrity file

82 | .yarn-integrity

83 |

84 | # dotenv environment variables file

85 | .env

86 | .env.test

87 |

88 | # parcel-bundler cache (https://parceljs.org/)

89 | .cache

90 |

91 | # next.js build output

92 | .next

93 |

94 | # nuxt.js build output

95 | .nuxt

96 |

97 | # vuepress build output

98 | .vuepress/dist

99 |

100 | # Serverless directories

101 | .serverless/

102 |

103 | # FuseBox cache

104 | .fusebox/

105 |

106 | # DynamoDB Local files

107 | .dynamodb/

108 |

109 | ### OSX ###

110 | # General

111 | .DS_Store

112 | .AppleDouble

113 | .LSOverride

114 |

115 | # Icon must end with two \r

116 | Icon

117 |

118 | # Thumbnails

119 | ._*

120 |

121 | # Files that might appear in the root of a volume

122 | .DocumentRevisions-V100

123 | .fseventsd

124 | .Spotlight-V100

125 | .TemporaryItems

126 | .Trashes

127 | .VolumeIcon.icns

128 | .com.apple.timemachine.donotpresent

129 |

130 | # Directories potentially created on remote AFP share

131 | .AppleDB

132 | .AppleDesktop

133 | Network Trash Folder

134 | Temporary Items

135 | .apdisk

136 |

137 | ### PyCharm ###

138 | # Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio and WebStorm

139 | # Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

140 |

141 | # User-specific stuff

142 | .idea/**/workspace.xml

143 | .idea/**/tasks.xml

144 | .idea/**/usage.statistics.xml

145 | .idea/**/dictionaries

146 | .idea/**/shelf

147 |

148 | # Generated files

149 | .idea/**/contentModel.xml

150 |

151 | # Sensitive or high-churn files

152 | .idea/**/dataSources/

153 | .idea/**/dataSources.ids

154 | .idea/**/dataSources.local.xml

155 | .idea/**/sqlDataSources.xml

156 | .idea/**/dynamic.xml

157 | .idea/**/uiDesigner.xml

158 | .idea/**/dbnavigator.xml

159 |

160 | # Gradle

161 | .idea/**/gradle.xml

162 | .idea/**/libraries

163 |

164 | # Gradle and Maven with auto-import

165 | # When using Gradle or Maven with auto-import, you should exclude module files,

166 | # since they will be recreated, and may cause churn. Uncomment if using

167 | # auto-import.

168 | .idea/*.xml

169 | .idea/*.iml

170 | .idea

171 | # .idea/modules

172 | # *.iml

173 | # *.ipr

174 |

175 | # CMake

176 | cmake-build-*/

177 |

178 | # Mongo Explorer plugin

179 | .idea/**/mongoSettings.xml

180 |

181 | # File-based project format

182 | *.iws

183 |

184 | # IntelliJ

185 | out/

186 |

187 | # mpeltonen/sbt-idea plugin

188 | .idea_modules/

189 |

190 | # JIRA plugin

191 | atlassian-ide-plugin.xml

192 |

193 | # Cursive Clojure plugin

194 | .idea/replstate.xml

195 |

196 | # Crashlytics plugin (for Android Studio and IntelliJ)

197 | com_crashlytics_export_strings.xml

198 | crashlytics.properties

199 | crashlytics-build.properties

200 | fabric.properties

201 |

202 | # Editor-based Rest Client

203 | .idea/httpRequests

204 |

205 | # Android studio 3.1+ serialized cache file

206 | .idea/caches/build_file_checksums.ser

207 |

208 | ### PyCharm Patch ###

209 | # Comment Reason: https://github.com/joeblau/gitignore.io/issues/186#issuecomment-215987721

210 |

211 | # *.iml

212 | # modules.xml

213 | # .idea/misc.xml

214 | # *.ipr

215 |

216 | # Sonarlint plugin

217 | .idea/sonarlint

218 |

219 | ### Python ###

220 | # Byte-compiled / optimized / DLL files

221 | __pycache__/

222 | *.py[cod]

223 | *$py.class

224 |

225 | # C extensions

226 | *.so

227 |

228 | # Distribution / packaging

229 | .Python

230 | build/

231 | develop-eggs/

232 | dist/

233 | downloads/

234 | eggs/

235 | .eggs/

236 | lib64/

237 | parts/

238 | sdist/

239 | var/

240 | wheels/

241 | pip-wheel-metadata/

242 | share/python-wheels/

243 | *.egg-info/

244 | .installed.cfg

245 | *.egg

246 | MANIFEST

247 |

248 | # PyInstaller

249 | # Usually these files are written by a python script from a template

250 | # before PyInstaller builds the exe, so as to inject date/other infos into it.

251 | *.manifest

252 | *.spec

253 |

254 | # Installer logs

255 | pip-log.txt

256 | pip-delete-this-directory.txt

257 |

258 | # Unit test / coverage reports

259 | htmlcov/

260 | .tox/

261 | .nox/

262 | .coverage

263 | .coverage.*

264 | nosetests.xml

265 | coverage.xml

266 | *.cover

267 | .hypothesis/

268 | .pytest_cache/

269 |

270 | # Translations

271 | *.mo

272 | *.pot

273 |

274 | # Django stuff:

275 | local_settings.py

276 | db.sqlite3

277 | db.sqlite3-journal

278 |

279 | # Flask stuff:

280 | instance/

281 | .webassets-cache

282 |

283 | # Scrapy stuff:

284 | .scrapy

285 |

286 | # Sphinx documentation

287 | docs/_build/

288 |

289 | # PyBuilder

290 | target/

291 |

292 | # Jupyter Notebook

293 | .ipynb_checkpoints

294 |

295 | # IPython

296 | profile_default/

297 | ipython_config.py

298 |

299 | # pyenv

300 | .python-version

301 |

302 | # pipenv

303 | # According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

304 | # However, in case of collaboration, if having platform-specific dependencies or dependencies

305 | # having no cross-platform support, pipenv may install dependencies that don't work, or not

306 | # install all needed dependencies.

307 | #Pipfile.lock

308 |

309 | # celery beat schedule file

310 | celerybeat-schedule

311 |

312 | # SageMath parsed files

313 | *.sage.py

314 |

315 | # Environments

316 | .venv

317 | env/

318 | venv/

319 | ENV/

320 | env.bak/

321 | venv.bak/

322 |

323 | # Spyder project settings

324 | .spyderproject

325 | .spyproject

326 |

327 | # Rope project settings

328 | .ropeproject

329 |

330 | # mkdocs documentation

331 | /site

332 |

333 | # mypy

334 | .mypy_cache/

335 | .dmypy.json

336 | dmypy.json

337 |

338 | # Pyre type checker

339 | .pyre/

340 |

341 | ### VisualStudioCode ###

342 | .vscode

343 |

344 | ### VisualStudioCode Patch ###

345 | # Ignore all local history of files

346 | .history

347 |

348 | ### Windows ###

349 | # Windows thumbnail cache files

350 | Thumbs.db

351 | Thumbs.db:encryptable

352 | ehthumbs.db

353 | ehthumbs_vista.db

354 |

355 | # Dump file

356 | *.stackdump

357 |

358 | # Folder config file

359 | [Dd]esktop.ini

360 |

361 | # Recycle Bin used on file shares

362 | $RECYCLE.BIN/

363 |

364 | # Windows Installer files

365 | *.cab

366 | *.msi

367 | *.msix

368 | *.msm

369 | *.msp

370 |

371 | # Windows shortcuts

372 | *.lnk

373 |

374 | # End of https://www.gitignore.io/api/osx,linux,python,windows,pycharm,visualstudiocode,node

375 |

376 | ### CDK-specific ignores ###

377 | *.swp

378 | cdk.context.json

379 | #package-lock.json

380 | yarn.lock

381 | .cdk.staging

382 | cdk.out

383 |

384 | ### Pillow exclusion

385 |

386 | #/reklayer/python/**

--------------------------------------------------------------------------------

/CODE_OF_CONDUCT.md:

--------------------------------------------------------------------------------

1 | ## Code of Conduct

2 | This project has adopted the [Amazon Open Source Code of Conduct](https://aws.github.io/code-of-conduct).

3 | For more information see the [Code of Conduct FAQ](https://aws.github.io/code-of-conduct-faq) or contact

4 | opensource-codeofconduct@amazon.com with any additional questions or comments.

5 |

--------------------------------------------------------------------------------

/CONTRIBUTING.md:

--------------------------------------------------------------------------------

1 | # Contributing Guidelines

2 |

3 | Thank you for your interest in contributing to our project. Whether it's a bug report, new feature, correction, or additional

4 | documentation, we greatly value feedback and contributions from our community.

5 |

6 | Please read through this document before submitting any issues or pull requests to ensure we have all the necessary

7 | information to effectively respond to your bug report or contribution.

8 |

9 |

10 | ## Reporting Bugs/Feature Requests

11 |

12 | We welcome you to use the GitHub issue tracker to report bugs or suggest features.

13 |

14 | When filing an issue, please check existing open, or recently closed, issues to make sure somebody else hasn't already

15 | reported the issue. Please try to include as much information as you can. Details like these are incredibly useful:

16 |

17 | * A reproducible test case or series of steps

18 | * The version of our code being used

19 | * Any modifications you've made relevant to the bug

20 | * Anything unusual about your environment or deployment

21 |

22 |

23 | ## Contributing via Pull Requests

24 | Contributions via pull requests are much appreciated. Before sending us a pull request, please ensure that:

25 |

26 | 1. You are working against the latest source on the *main* branch.

27 | 2. You check existing open, and recently merged, pull requests to make sure someone else hasn't addressed the problem already.

28 | 3. You open an issue to discuss any significant work - we would hate for your time to be wasted.

29 |

30 | To send us a pull request, please:

31 |

32 | 1. Fork the repository.

33 | 2. Modify the source; please focus on the specific change you are contributing. If you also reformat all the code, it will be hard for us to focus on your change.

34 | 3. Ensure local tests pass.

35 | 4. Commit to your fork using clear commit messages.

36 | 5. Send us a pull request, answering any default questions in the pull request interface.

37 | 6. Pay attention to any automated CI failures reported in the pull request, and stay involved in the conversation.

38 |

39 | GitHub provides additional documentation on [forking a repository](https://help.github.com/articles/fork-a-repo/) and

40 | [creating a pull request](https://help.github.com/articles/creating-a-pull-request/).

41 |

42 |

43 | ## Finding contributions to work on

44 | Looking at the existing issues is a great way to find something to contribute on. As our projects, by default, use the default GitHub issue labels (enhancement/bug/duplicate/help wanted/invalid/question/wontfix), looking at any 'help wanted' issues is a great place to start.

45 |

46 |

47 | ## Code of Conduct

48 | This project has adopted the [Amazon Open Source Code of Conduct](https://aws.github.io/code-of-conduct).

49 | For more information see the [Code of Conduct FAQ](https://aws.github.io/code-of-conduct-faq) or contact

50 | opensource-codeofconduct@amazon.com with any additional questions or comments.

51 |

52 |

53 | ## Security issue notifications

54 | If you discover a potential security issue in this project, we ask that you notify AWS/Amazon Security via our [vulnerability reporting page](http://aws.amazon.com/security/vulnerability-reporting/). Please do **not** create a public GitHub issue.

55 |

56 |

57 | ## Licensing

58 |

59 | See the [LICENSE](LICENSE) file for our project's licensing. We will ask you to confirm the licensing of your contribution.

60 |

--------------------------------------------------------------------------------

/LICENSE:

--------------------------------------------------------------------------------

1 |

2 | Apache License

3 | Version 2.0, January 2004

4 | http://www.apache.org/licenses/

5 |

6 | TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

7 |

8 | 1. Definitions.

9 |

10 | "License" shall mean the terms and conditions for use, reproduction,

11 | and distribution as defined by Sections 1 through 9 of this document.

12 |

13 | "Licensor" shall mean the copyright owner or entity authorized by

14 | the copyright owner that is granting the License.

15 |

16 | "Legal Entity" shall mean the union of the acting entity and all

17 | other entities that control, are controlled by, or are under common

18 | control with that entity. For the purposes of this definition,

19 | "control" means (i) the power, direct or indirect, to cause the

20 | direction or management of such entity, whether by contract or

21 | otherwise, or (ii) ownership of fifty percent (50%) or more of the

22 | outstanding shares, or (iii) beneficial ownership of such entity.

23 |

24 | "You" (or "Your") shall mean an individual or Legal Entity

25 | exercising permissions granted by this License.

26 |

27 | "Source" form shall mean the preferred form for making modifications,

28 | including but not limited to software source code, documentation

29 | source, and configuration files.

30 |

31 | "Object" form shall mean any form resulting from mechanical

32 | transformation or translation of a Source form, including but

33 | not limited to compiled object code, generated documentation,

34 | and conversions to other media types.

35 |

36 | "Work" shall mean the work of authorship, whether in Source or

37 | Object form, made available under the License, as indicated by a

38 | copyright notice that is included in or attached to the work

39 | (an example is provided in the Appendix below).

40 |

41 | "Derivative Works" shall mean any work, whether in Source or Object

42 | form, that is based on (or derived from) the Work and for which the

43 | editorial revisions, annotations, elaborations, or other modifications

44 | represent, as a whole, an original work of authorship. For the purposes

45 | of this License, Derivative Works shall not include works that remain

46 | separable from, or merely link (or bind by name) to the interfaces of,

47 | the Work and Derivative Works thereof.

48 |

49 | "Contribution" shall mean any work of authorship, including

50 | the original version of the Work and any modifications or additions

51 | to that Work or Derivative Works thereof, that is intentionally

52 | submitted to Licensor for inclusion in the Work by the copyright owner

53 | or by an individual or Legal Entity authorized to submit on behalf of

54 | the copyright owner. For the purposes of this definition, "submitted"

55 | means any form of electronic, verbal, or written communication sent

56 | to the Licensor or its representatives, including but not limited to

57 | communication on electronic mailing lists, source code control systems,

58 | and issue tracking systems that are managed by, or on behalf of, the

59 | Licensor for the purpose of discussing and improving the Work, but

60 | excluding communication that is conspicuously marked or otherwise

61 | designated in writing by the copyright owner as "Not a Contribution."

62 |

63 | "Contributor" shall mean Licensor and any individual or Legal Entity

64 | on behalf of whom a Contribution has been received by Licensor and

65 | subsequently incorporated within the Work.

66 |

67 | 2. Grant of Copyright License. Subject to the terms and conditions of

68 | this License, each Contributor hereby grants to You a perpetual,

69 | worldwide, non-exclusive, no-charge, royalty-free, irrevocable

70 | copyright license to reproduce, prepare Derivative Works of,

71 | publicly display, publicly perform, sublicense, and distribute the

72 | Work and such Derivative Works in Source or Object form.

73 |

74 | 3. Grant of Patent License. Subject to the terms and conditions of

75 | this License, each Contributor hereby grants to You a perpetual,

76 | worldwide, non-exclusive, no-charge, royalty-free, irrevocable

77 | (except as stated in this section) patent license to make, have made,

78 | use, offer to sell, sell, import, and otherwise transfer the Work,

79 | where such license applies only to those patent claims licensable

80 | by such Contributor that are necessarily infringed by their

81 | Contribution(s) alone or by combination of their Contribution(s)

82 | with the Work to which such Contribution(s) was submitted. If You

83 | institute patent litigation against any entity (including a

84 | cross-claim or counterclaim in a lawsuit) alleging that the Work

85 | or a Contribution incorporated within the Work constitutes direct

86 | or contributory patent infringement, then any patent licenses

87 | granted to You under this License for that Work shall terminate

88 | as of the date such litigation is filed.

89 |

90 | 4. Redistribution. You may reproduce and distribute copies of the

91 | Work or Derivative Works thereof in any medium, with or without

92 | modifications, and in Source or Object form, provided that You

93 | meet the following conditions:

94 |

95 | (a) You must give any other recipients of the Work or

96 | Derivative Works a copy of this License; and

97 |

98 | (b) You must cause any modified files to carry prominent notices

99 | stating that You changed the files; and

100 |

101 | (c) You must retain, in the Source form of any Derivative Works

102 | that You distribute, all copyright, patent, trademark, and

103 | attribution notices from the Source form of the Work,

104 | excluding those notices that do not pertain to any part of

105 | the Derivative Works; and

106 |

107 | (d) If the Work includes a "NOTICE" text file as part of its

108 | distribution, then any Derivative Works that You distribute must

109 | include a readable copy of the attribution notices contained

110 | within such NOTICE file, excluding those notices that do not

111 | pertain to any part of the Derivative Works, in at least one

112 | of the following places: within a NOTICE text file distributed

113 | as part of the Derivative Works; within the Source form or

114 | documentation, if provided along with the Derivative Works; or,

115 | within a display generated by the Derivative Works, if and

116 | wherever such third-party notices normally appear. The contents

117 | of the NOTICE file are for informational purposes only and

118 | do not modify the License. You may add Your own attribution

119 | notices within Derivative Works that You distribute, alongside

120 | or as an addendum to the NOTICE text from the Work, provided

121 | that such additional attribution notices cannot be construed

122 | as modifying the License.

123 |

124 | You may add Your own copyright statement to Your modifications and

125 | may provide additional or different license terms and conditions

126 | for use, reproduction, or distribution of Your modifications, or

127 | for any such Derivative Works as a whole, provided Your use,

128 | reproduction, and distribution of the Work otherwise complies with

129 | the conditions stated in this License.

130 |

131 | 5. Submission of Contributions. Unless You explicitly state otherwise,

132 | any Contribution intentionally submitted for inclusion in the Work

133 | by You to the Licensor shall be under the terms and conditions of

134 | this License, without any additional terms or conditions.

135 | Notwithstanding the above, nothing herein shall supersede or modify

136 | the terms of any separate license agreement you may have executed

137 | with Licensor regarding such Contributions.

138 |

139 | 6. Trademarks. This License does not grant permission to use the trade

140 | names, trademarks, service marks, or product names of the Licensor,

141 | except as required for reasonable and customary use in describing the

142 | origin of the Work and reproducing the content of the NOTICE file.

143 |

144 | 7. Disclaimer of Warranty. Unless required by applicable law or

145 | agreed to in writing, Licensor provides the Work (and each

146 | Contributor provides its Contributions) on an "AS IS" BASIS,

147 | WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

148 | implied, including, without limitation, any warranties or conditions

149 | of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

150 | PARTICULAR PURPOSE. You are solely responsible for determining the

151 | appropriateness of using or redistributing the Work and assume any

152 | risks associated with Your exercise of permissions under this License.

153 |

154 | 8. Limitation of Liability. In no event and under no legal theory,

155 | whether in tort (including negligence), contract, or otherwise,

156 | unless required by applicable law (such as deliberate and grossly

157 | negligent acts) or agreed to in writing, shall any Contributor be

158 | liable to You for damages, including any direct, indirect, special,

159 | incidental, or consequential damages of any character arising as a

160 | result of this License or out of the use or inability to use the

161 | Work (including but not limited to damages for loss of goodwill,

162 | work stoppage, computer failure or malfunction, or any and all

163 | other commercial damages or losses), even if such Contributor

164 | has been advised of the possibility of such damages.

165 |

166 | 9. Accepting Warranty or Additional Liability. While redistributing

167 | the Work or Derivative Works thereof, You may choose to offer,

168 | and charge a fee for, acceptance of support, warranty, indemnity,

169 | or other liability obligations and/or rights consistent with this

170 | License. However, in accepting such obligations, You may act only

171 | on Your own behalf and on Your sole responsibility, not on behalf

172 | of any other Contributor, and only if You agree to indemnify,

173 | defend, and hold each Contributor harmless for any liability

174 | incurred by, or claims asserted against, such Contributor by reason

175 | of your accepting any such warranty or additional liability.

176 |

--------------------------------------------------------------------------------

/NOTICE:

--------------------------------------------------------------------------------

1 | Copyright Amazon.com, Inc. or its affiliates. All Rights Reserved.

2 |

--------------------------------------------------------------------------------

/README.md:

--------------------------------------------------------------------------------

1 | # AWS Dev Hour - Series 1 Demo Application (Backend)

2 | ### Type: Demo

3 | ### Repository: https://github.com/aws-samples/aws-dev-hour-backend

4 |

5 | ## AWS Dev Hour - On Twitch

6 | Do you have the skills it takes to build modern applications that are distributed and designed for scale and agility? If you’re interested in learning to build cloud native applications and architecture practices, join us for AWS Dev Hour: Building Modern Apps, a weekly Twitch show presented by AWS Training and Certification. Built by developers for developers, the series offers a hands-on approach. Over the course of 8 episodes, AWS expert hosts Ben Newton and May Kyaw will take you through the end-to-end build of a serverless application in the AWS cloud. You’ll have the chance to learn by doing, following along with the hosts and developing a cloud-native application using the AWS free tier. You’ll learn best practices for modern applications and better understand how AWS cloud-native applications differ from on-premises. Throughout the series, you’ll receive code, white papers, links to documentation, and other resources to help you progress.

7 |

8 |

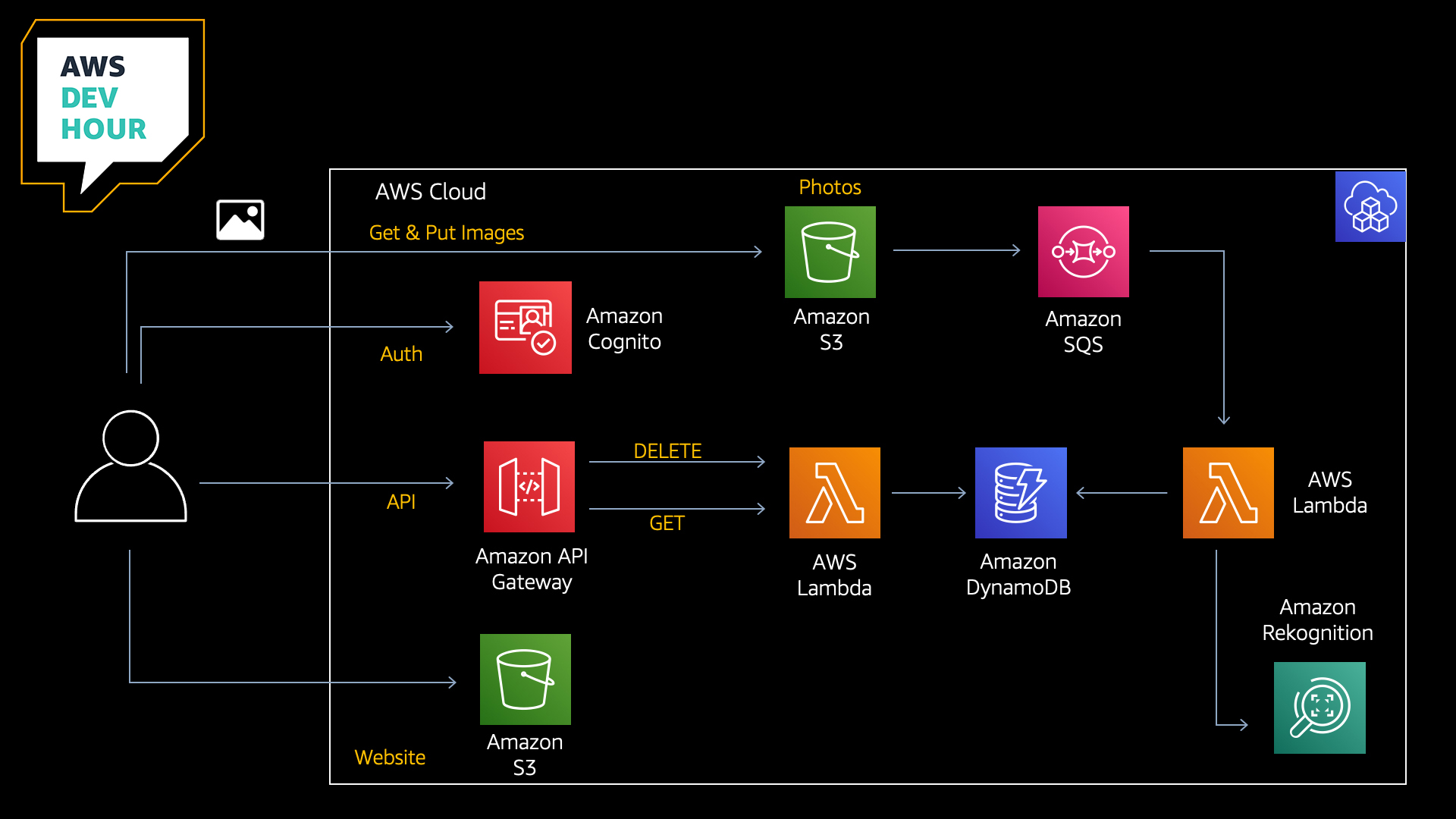

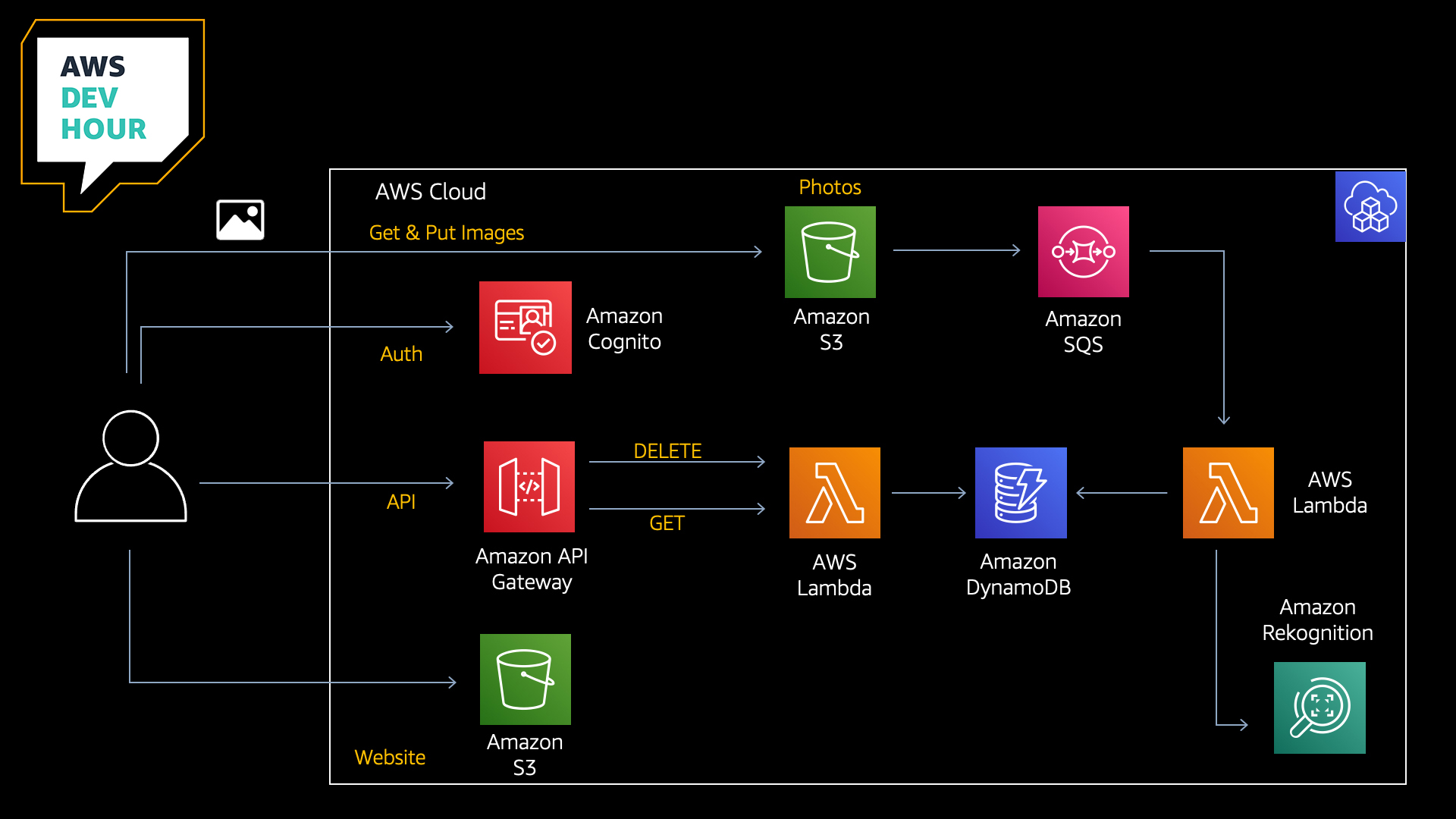

9 | ## Architecture

10 |

11 |

12 | ## Episodes

13 |

14 | During each episode, we will be progressively building this full-stack application together. Please see the following URL for schedule and episode details:

15 |

16 | [AWS Dev Hour Schedule](https://pages.awscloud.com/traincert-twitch-dev-hour?sc_icampaign=event_twitch_devhour_launch_hosts&sc_channel=sm)

17 |

18 | #### Git branch

19 |

20 | [Episode 1: CDK](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode1)

21 |

22 | [Episode 2: AWS Lambda & Amazon DynamoDB](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode2)

23 |

24 | [Episode 3: Amazon API Gateway](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode3)

25 |

26 | [Episode 4: AWS IAM & Amazon Cognito](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode4)

27 |

28 | [Episode 5: Amazon S3](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode5)

29 |

30 | [Episode 6: Amazon SQS](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode6)

31 |

32 | [Episode 7: Deployment Pipeline](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode7)

33 |

34 | ### Services used during the series:

35 |

36 | - Amazon Cognito

37 | - Amazon S3

38 | - Amazon Simple Queue Service

39 | - AWS Lambda

40 | - Amazon DynamoDB

41 | - Amazon Rekognition

42 | - AWS Cloud Development Kit

43 | - Amazon API Gateway

44 | - AWS CodeBuild

45 | - AWS CodePipeline

46 |

47 | ## Useful commands

48 |

49 | * `npm install` install packages

50 | * `cdk synth` emits the synthesized CloudFormation template

51 | * `cdk deploy` deploy this stack

52 | * `cdk diff` compare deployed stack with current state

53 |

54 | The `cdk.json` file tells the CDK Toolkit how to execute your app.

55 |

56 | ## Prerequisites

57 |

58 | All CDK developers need to install Node.js 10.3.0 or later, even those working in languages other than TypeScript or JavaScript. The AWS CDK Toolkit (cdk command-line tool) and the AWS Construct Library run on Node.js. The bindings for other supported languages use this back end and tool set. We suggest the latest LTS version.

59 |

60 | ```bash

61 | aws configure

62 | npm -g install typescript

63 | npm install -g aws-cdk

64 | ```

65 | If you have not yet done so, you will also need to bootstrap your account:

66 |

67 | ```bash

68 | cdk bootstrap aws://ACCOUNT-NUMBER-1/REGION-1

69 | ```

70 |

71 | for example:

72 |

73 | ```bash

74 | cdk bootstrap aws://123456789012/us-east-1

75 | ```

76 |

77 |

78 | For further information, please see:

79 |

80 | https://docs.aws.amazon.com/cdk/latest/guide/getting_started.html

81 |

82 | https://docs.aws.amazon.com/cdk/latest/guide/bootstrapping.html

83 |

84 | ### AWS Lambda - Creating assets for your AWS Lambda Layer

85 |

86 | Our AWS Lambda function uses the Pillow library to generate thumbnail images. This library needs to be added to our project so that the CDK can package it and create an AWS Lambda Layer for us. To do this, you can use the following steps. (Please note that creating these resources in your AWS account could incur costs, although we have tried to select free-tier eligible resources here.)

87 |

88 | 1. Launch an Amazon EC2 Instance (t2.micro) using the Amazon Linux 2 AMI

89 | 2. SSH into your instance and run the following commands:

90 |

91 | ```bash

92 | sudo yum install -y python3-pip python3 python3-setuptools

93 | python3 -m venv my_app/env

94 | source ~/my_app/env/bin/activate

95 | cd my_app/env

96 | pip3 install pillow

97 | cd /home/ec2-user/my_app/env/lib/python3.7/site-packages

98 | mkdir python && cp -R ./PIL ./python && cp -R ./Pillow-8.1.0.dist-info ./python && cp -R ./Pillow.libs ./python && zip -r pillow.zip ./python

99 | ```

100 | 3. Copy the resulting archive 'pillow.zip' to your development environment (we used an Amazon S3 bucket for this)

101 | 4. Extract the archive into the 'reklayer' folder in your project directory

102 |

103 | Your project structure should look something like this:

104 |

105 | ```

106 | project-root/reklayer/python/PIL

107 | project-root/reklayer/python/Pillow-8.1.0.dist-info

108 | project-root/reklayer/python/Pillow.libs

109 | ```

110 |

111 | 5. Remove the pillow.zip file to clean up

112 | 6. Terminate the Amazon EC2 Instance that you created to build the archive
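
Before deploying, it can help to confirm that the extracted layer matches the structure described above. The following is a minimal sketch, not part of the repository: the function name `missing_layer_entries` is hypothetical, and the expected entry names (including the `8.1.0` version) are taken from the listing above and may differ for your Pillow build.

```python
from pathlib import Path

# Entries the README expects under reklayer/python. The version in the
# dist-info name is the one shown in the README and may differ for you.
EXPECTED_ENTRIES = ("PIL", "Pillow-8.1.0.dist-info", "Pillow.libs")


def missing_layer_entries(project_root, entries=EXPECTED_ENTRIES):
    """Return the expected entries that are absent from reklayer/python."""
    layer_dir = Path(project_root) / "reklayer" / "python"
    return [name for name in entries if not (layer_dir / name).exists()]


if __name__ == "__main__":
    missing = missing_layer_entries(".")
    if missing:
        print("Missing from reklayer/python:", ", ".join(missing))
    else:
        print("Layer layout looks complete.")
```

Run it from the project root. An empty result means all three entries were found under `reklayer/python`.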

113 |

114 | ## Getting Started

115 |

116 | 1. `npm install`

117 |

118 | 2. `cdk deploy`

119 |

120 | A 'cdk deploy' will deploy everything that you need into your account

121 |

122 | 3. You may now test the backend by uploading an image into your Amazon S3 bucket.

123 |

124 | ## Prerequisites for Episode 7

125 |

126 | In episode 7, we are building a deployment pipeline for our application. Before we start working on our pipeline, there are a few things to point out. For this tutorial, you will need:

127 |

128 | 1. A GitHub account

129 | 2. A GitHub personal access token. The token should have the scopes ```repo``` and ```admin:repo_hook```

130 | 3. The GitHub owner, repository name, and branch name set up in [AWS Systems Manager - Parameter Store](https://docs.aws.amazon.com/systems-manager/latest/userguide/systems-manager-parameter-store.html)

131 | 4. The GitHub personal access token set up in [AWS Secrets Manager](https://docs.aws.amazon.com/secretsmanager/latest/userguide/intro.html)

132 |

133 | ### Parameter Store Examples

134 |

135 | - devhour-backend-git-repo Value: aws-dev-hour-backend

136 | - devhour-backend-git-branch Value: main (or whichever branch you would like the webhook to track)

137 | - devhour-backend-git-owner Value: your-github-username
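
One possible way to create these parameters is with the AWS CLI's `aws ssm put-parameter` command. The sketch below only builds and prints the command lines so you can review them before running anything. The helper name `put_parameter_commands` is hypothetical, and the `String` parameter type is an assumption, since the README does not state which type the stack expects.

```python
import shlex

# Parameter names and example values from the list above. Replace the
# values with your own repository details.
PARAMETERS = {
    "devhour-backend-git-repo": "aws-dev-hour-backend",
    "devhour-backend-git-branch": "main",
    "devhour-backend-git-owner": "your-github-username",
}


def put_parameter_commands(parameters):
    """Build one `aws ssm put-parameter` command line per parameter."""
    commands = []
    for name, value in parameters.items():
        # Assumption: plain String parameters; the README does not say.
        argv = ["aws", "ssm", "put-parameter",
                "--name", name, "--value", value, "--type", "String"]
        commands.append(" ".join(shlex.quote(arg) for arg in argv))
    return commands


if __name__ == "__main__":
    # Print the commands for review instead of running them.
    for command in put_parameter_commands(PARAMETERS):
        print(command)
```

Printing rather than executing keeps the sketch free of side effects. The personal access token belongs in AWS Secrets Manager, not in Parameter Store, so it is deliberately left out here.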

138 |

139 | ### Episode 7 Notes

140 |

141 | In this tutorial, we are using the ```@aws-cdk/pipelines``` module to build a deployment pipeline. As of 11 March 2021, the ```@aws-cdk/pipelines``` module is in Developer Preview, so you will need to set a feature flag in ```cdk.json``` as shown below to use the new features of the CDK framework.

142 |

143 | ```

144 | "@aws-cdk/core:newStyleStackSynthesis": "true"

145 | ```
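
In `cdk.json`, feature flags are placed under the top-level `"context"` key. The sketch below shows where the flag ends up by applying it to an already parsed `cdk.json` dictionary. The helper name `with_new_style_synthesis` and the example `"app"` value are hypothetical, so check them against your own `cdk.json`.

```python
import json

FLAG = "@aws-cdk/core:newStyleStackSynthesis"


def with_new_style_synthesis(cdk_config):
    """Return a copy of a parsed cdk.json dict with the flag set under "context"."""
    updated = dict(cdk_config)
    context = dict(updated.get("context", {}))
    context[FLAG] = "true"  # string value, matching the snippet above
    updated["context"] = context
    return updated


if __name__ == "__main__":
    # Hypothetical minimal cdk.json content; your "app" entry may differ.
    example = {"app": "npx ts-node bin/awsdevhour.ts"}
    print(json.dumps(with_new_style_synthesis(example), indent=2))
```

The function copies the input instead of modifying it, so any existing context entries are preserved and the original dictionary stays unchanged.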

146 |

147 | You will also need to bootstrap the stack again to accommodate the new CDK pipeline experience by running this command:

148 |

149 | ```

150 | cdk bootstrap

151 | ```

152 | If you have deployed the stack already into your account using 'cdk deploy', you may need to destroy your stack so that the pipeline can build a fresh one. You can do this using the following command:

153 |

154 | ```

155 | cdk destroy AwsdevhourStack

156 | ```

157 |

158 | Once you have done this, you can deploy your pipeline stack by running the following command:

159 |

160 | ```

161 | cdk deploy AwsdevhourBackendPipelineStack

162 | ```

163 |

164 | During deployment, AWS CodePipeline creates a webhook on your GitHub repo. Subsequent pushes to your repo branch update your stack automatically, and the pipeline itself self-mutates to pick up changes to its own definition.

165 |

166 | To view your available stacks, you can run:

167 |

168 | ```

169 | cdk list

170 | ```

171 | **Pillow Library Note**

172 |

173 | In ```awsdevhour-backend-pipeline-stack.ts``` you will notice the following:

174 |

175 | ```typescript

176 | synthAction: SimpleSynthAction.standardNpmSynth({

177 | sourceArtifact,

178 | cloudAssemblyArtifact,

179 | //This build command is to download pillow library, unzip the downloaded file and tidy up.

180 | //If you already have pillow library downloaded under reklayer/, please just run 'npm run build'

181 | buildCommand: 'rm ./reklayer/pillow-goes-here.txt && wget https://awsdevhour-twitch.s3.ap-southeast-2.amazonaws.com/pillow.zip && unzip pillow.zip && mv ./python ./reklayer && rm pillow.zip && npm run build',

182 | synthCommand: 'npm run cdk synth'

183 | })

184 | ```

185 | If you already have the Pillow library under reklayer/python, then you don't need to run this. We added it to facilitate testing during the show. You could simply do the following:

186 |

187 | ```typescript

188 | synthAction: SimpleSynthAction.standardNpmSynth({

189 | sourceArtifact,

190 | cloudAssemblyArtifact,

191 | buildCommand: 'npm run build',

192 | synthCommand: 'npm run cdk synth'

193 | })

194 | ```

195 |

196 | #### RekLayer

197 |

198 | In this tutorial, we use the [Pillow](https://pypi.org/project/Pillow/) library, a fork of the Python Imaging Library, to add image processing capabilities to our application. To keep the build simple, you can download the Pillow library manually and place it under the reklayer/ folder in this project (as reklayer/python).

199 |

200 | Alternatively, you can download the Pillow library as part of the pipeline build, as shown in awsdevhour-backend-pipeline-stack.ts.

201 |

202 |

203 | ## Cleanup

204 |

205 | To clean up the resources created by the CDK, run the following commands:

206 | ```bash

207 | aws s3 rm --recursive s3://{imageBucket}

208 | cdk destroy

209 | ```

210 | (Enter “y” in response to: Are you sure you want to delete (y/n)?).
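The `{imageBucket}` placeholder stands for the bucket name that the stack exports as an output. The following is a minimal sketch for looking it up, assuming you have captured the JSON printed by `aws cloudformation describe-stacks` and that the output key matches the `imageBucket` `CfnOutput` id in awsdevhour-stack.ts. The function name `outputs_to_dict` is made up for illustration.

```python
import json


def outputs_to_dict(describe_stacks_json):
    """Flatten the Outputs of every stack in a describe-stacks response into one dict."""
    response = json.loads(describe_stacks_json)
    outputs = {}
    for stack in response.get("Stacks", []):
        # Stacks without outputs simply omit the "Outputs" key.
        for output in stack.get("Outputs", []):
            outputs[output["OutputKey"]] = output["OutputValue"]
    return outputs
```

With the response text in a variable `raw`, `outputs_to_dict(raw).get("imageBucket")` would give the name to substitute into the `aws s3 rm` command.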

211 |

212 | ## Tweaks

213 |

214 | The minimum confidence for Amazon Rekognition labels is currently set in the Rekognition Lambda function (rekognitionlambda/index.py).

215 | ```python

216 | minConfidence = 50

217 | ```

218 | Feel free to adjust this value and experiment.

219 | If you change it, run `cdk deploy` again to update the Lambda function.
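One way to experiment without editing the function source each time is to read the threshold from an environment variable. This is only a sketch of that idea, not what the repository does: the variable name `MIN_CONFIDENCE` and the helper `read_min_confidence` are assumptions, and the variable would also have to be added to the function's `environment` block in the CDK stack.

```python
import os


def read_min_confidence(env=os.environ, default=50.0):
    """Return the MinConfidence value, clamped to the 0-100 range Rekognition accepts."""
    raw = env.get("MIN_CONFIDENCE")  # hypothetical variable name, not defined in this repo
    if raw is None:
        return default
    try:
        value = float(raw)
    except ValueError:
        # Fall back to the default instead of failing the whole invocation.
        return default
    return max(0.0, min(100.0, value))
```

The default of 50 mirrors the hard-coded value shown above, so behaviour stays the same until the variable is set.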

220 |

221 | ### Contributions

222 |

223 | We would encourage all of our AWS Dev Hour viewers to contribute to this project. For more details, please refer to 'CONTRIBUTING.md'.

224 |

225 | ### License

226 |

227 | This software is licensed under the Apache License, Version 2.0.

--------------------------------------------------------------------------------

/bin/awsdevhour.ts:

--------------------------------------------------------------------------------

1 | #!/usr/bin/env node

2 | import 'source-map-support/register';

3 | import * as cdk from '@aws-cdk/core';

4 | import { AwsdevhourStack } from '../lib/awsdevhour-stack';

5 | import { AwsdevhourBackendPipelineStack } from '../lib/awsdevhour-backend-pipeline-stack';

6 |

7 | const app = new cdk.App();

8 | new AwsdevhourStack(app, 'AwsdevhourStack');

9 | new AwsdevhourBackendPipelineStack(app, 'AwsdevhourBackendPipelineStack');

10 |

--------------------------------------------------------------------------------

/cdk.json:

--------------------------------------------------------------------------------

1 | {

2 | "app": "npx ts-node --prefer-ts-exts bin/awsdevhour.ts",

3 | "context": {

4 | "@aws-cdk/core:enableStackNameDuplicates": "true",

5 | "aws-cdk:enableDiffNoFail": "true",

6 | "@aws-cdk/core:stackRelativeExports": "true",

7 | "@aws-cdk/aws-ecr-assets:dockerIgnoreSupport": true,

8 | "@aws-cdk/aws-secretsmanager:parseOwnedSecretName": true,

9 | "@aws-cdk/aws-kms:defaultKeyPolicies": true,

10 | "@aws-cdk/aws-s3:grantWriteWithoutAcl": true,

11 | "@aws-cdk/core:newStyleStackSynthesis": "true"

12 | }

13 | }

14 |

--------------------------------------------------------------------------------

/jest.config.js:

--------------------------------------------------------------------------------

1 | module.exports = {

2 | roots: ['<rootDir>/test'],

3 | testMatch: ['**/*.test.ts'],

4 | transform: {

5 | '^.+\\.tsx?$': 'ts-jest'

6 | }

7 | };

8 |

--------------------------------------------------------------------------------

/lib/awsdevhour-backend-pipeline-stack.ts:

--------------------------------------------------------------------------------

1 | import * as codepipeline from '@aws-cdk/aws-codepipeline';

2 | import * as codepipeline_actions from '@aws-cdk/aws-codepipeline-actions';

3 | import { Construct, SecretValue, Stack, StackProps } from '@aws-cdk/core';

4 | import { CdkPipeline, SimpleSynthAction, ShellScriptAction } from "@aws-cdk/pipelines";

5 | import { AwsdevhourBackendPipelineStage } from "./awsdevhour-backend-pipeline-stage";

6 | import { StringParameter } from '@aws-cdk/aws-ssm';

7 | import { ManualApprovalAction } from '@aws-cdk/aws-codepipeline-actions';

8 |

9 | /**

10 | * Test

11 | * Stack to define the Devhour-series1 application pipeline

12 | *

13 | * Prerequisite:

14 | * GitHub personal access token should be stored in AWS Secrets Manager with the id used below

15 | * GitHub owner value should be set up in AWS Systems Manager - Parameter Store with the name used below

16 | * GitHub repository value should be set up in AWS Systems Manager - Parameter Store with the name used below

17 | * GitHub branch value should be set up in AWS Systems Manager - Parameter Store with the name used below

18 | * */

19 |

20 | export class AwsdevhourBackendPipelineStack extends Stack {

21 | constructor(scope: Construct, id: string, props?: StackProps) {

22 | super(scope, id, props);

23 |

24 | const sourceArtifact = new codepipeline.Artifact();

25 | const cloudAssemblyArtifact = new codepipeline.Artifact();

26 |

27 | const githubOwner = StringParameter.fromStringParameterAttributes(this, 'gitOwner',{

28 | parameterName: 'devhour-backend-git-owner'

29 | }).stringValue;

30 |

31 | const githubRepo = StringParameter.fromStringParameterAttributes(this, 'gitRepo',{

32 | parameterName: 'devhour-backend-git-repo'

33 | }).stringValue;

34 |

35 | const githubBranch = StringParameter.fromStringParameterAttributes(this, 'gitBranch',{

36 | parameterName: 'devhour-backend-git-branch'

37 | }).stringValue;

38 |

39 | const pipeline = new CdkPipeline(this, 'Pipeline', {

40 | crossAccountKeys: false,

41 | cloudAssemblyArtifact,

42 | // Define application source

43 | sourceAction: new codepipeline_actions.GitHubSourceAction({

44 | actionName: 'GitHub',

45 | output: sourceArtifact,

46 | oauthToken: SecretValue.secretsManager('devhour-backend-git-access-token', {jsonField: 'devHourSeries1-git-access-token'}), // this token is stored in AWS Secrets Manager

47 | owner: githubOwner,

48 | repo: githubRepo,

49 | branch: githubBranch

50 | }),

51 | // Define build and synth commands

52 | synthAction: SimpleSynthAction.standardNpmSynth({

53 | sourceArtifact,

54 | cloudAssemblyArtifact,

55 | buildCommand: 'rm -rf ./reklayer/* && wget https://awsdevhour-twitch.s3.ap-southeast-2.amazonaws.com/pillow.zip && unzip pillow.zip && mv ./python ./reklayer && rm pillow.zip && npm run build',

56 | synthCommand: 'npm run cdk synth'

57 | })

58 | });

59 |

60 | //Define application stage

61 | const stage = pipeline.addApplicationStage(new AwsdevhourBackendPipelineStage(this, 'dev'));

62 |

63 | // stage.addActions(new ManualApprovalAction({

64 | // actionName: 'ManualApproval',

65 | // runOrder: stage.nextSequentialRunOrder(),

66 | // }));

67 |

68 | }

69 | }

70 |

--------------------------------------------------------------------------------

/lib/awsdevhour-backend-pipeline-stage.ts:

--------------------------------------------------------------------------------

1 | import { CfnOutput, Construct, Stage, StageProps } from "@aws-cdk/core";

2 | import { AwsdevhourStack } from "./awsdevhour-stack";

3 |

4 | /**

5 | * Deployable unit of awsdevhour-backend app

6 | * */

7 | export class AwsdevhourBackendPipelineStage extends Stage {

8 | constructor(scope: Construct, id: string, props?: StageProps) {

9 | super(scope, id, props);

10 |

11 | new AwsdevhourStack(this, 'AwsdevhourBackendStack-dev');

12 |

13 | }

14 | }

15 |

--------------------------------------------------------------------------------

/lib/awsdevhour-stack.ts:

--------------------------------------------------------------------------------

1 | import * as cdk from '@aws-cdk/core';

2 | import s3 = require('@aws-cdk/aws-s3');

3 | import iam = require('@aws-cdk/aws-iam');

4 | import dynamodb = require('@aws-cdk/aws-dynamodb');

5 | import lambda = require('@aws-cdk/aws-lambda');

6 | import event_sources = require('@aws-cdk/aws-lambda-event-sources');

7 | import cognito = require('@aws-cdk/aws-cognito');

8 | import { AuthorizationType, PassthroughBehavior } from '@aws-cdk/aws-apigateway';

9 | import { CfnOutput } from "@aws-cdk/core";

10 | import { Duration } from '@aws-cdk/core';

11 | import apigw = require('@aws-cdk/aws-apigateway');

12 | import s3deploy = require('@aws-cdk/aws-s3-deployment');

13 | import { HttpMethods } from '@aws-cdk/aws-s3';

14 | import sqs = require('@aws-cdk/aws-sqs');

15 | import s3n = require('@aws-cdk/aws-s3-notifications');

16 |

17 | const imageBucketName = "cdk-rekn-imgagebucket"

18 | const resizedBucketName = imageBucketName + "-resized"

19 | const websiteBucketName = "cdk-rekn-publicbucket"

20 |

21 | export class AwsdevhourStack extends cdk.Stack {

22 | constructor(scope: cdk.Construct, id: string, props?: cdk.StackProps) {

23 | super(scope, id, props);

24 |

25 | // =====================================================================================

26 | // Image Bucket

27 | // =====================================================================================

28 | const imageBucket = new s3.Bucket(this, imageBucketName, {

29 | removalPolicy: cdk.RemovalPolicy.DESTROY

30 | });

31 | new cdk.CfnOutput(this, 'imageBucket', { value: imageBucket.bucketName });

32 | const imageBucketArn = imageBucket.bucketArn;

33 | imageBucket.addCorsRule({

34 | allowedMethods: [HttpMethods.GET, HttpMethods.PUT],

35 | allowedOrigins: ["*"],

36 | allowedHeaders: ["*"],

37 | maxAge: 3000

38 | });

39 |

40 | // =====================================================================================

41 | // Thumbnail Bucket

42 | // =====================================================================================

43 | const resizedBucket = new s3.Bucket(this, resizedBucketName, {

44 | removalPolicy: cdk.RemovalPolicy.DESTROY

45 | });

46 | new cdk.CfnOutput(this, 'resizedBucket', {value: resizedBucket.bucketName});

47 | const resizedBucketArn = resizedBucket.bucketArn;

48 | resizedBucket.addCorsRule({

49 | allowedMethods: [HttpMethods.GET, HttpMethods.PUT],

50 | allowedOrigins: ["*"],

51 | allowedHeaders: ["*"],

52 | maxAge: 3000

53 | });

54 |

55 | // =====================================================================================

56 | // Construct to create our Amazon S3 Bucket to host our website

57 | // =====================================================================================

58 | const webBucket = new s3.Bucket(this, websiteBucketName, {

59 | websiteIndexDocument: 'index.html',

60 | websiteErrorDocument: 'index.html',

61 | removalPolicy: cdk.RemovalPolicy.DESTROY

62 | // publicReadAccess: true,

63 | });

64 |

65 | webBucket.addToResourcePolicy(new iam.PolicyStatement({

66 | actions: ['s3:GetObject'],

67 | resources: [webBucket.arnForObjects('*')],

68 | principals: [new iam.AnyPrincipal()],

69 | conditions: {

70 | 'IpAddress': {

71 | 'aws:SourceIp': [

72 | '*.*.*.*/*' // Please change it to your IP address or from your allowed list

73 | ]

74 | }

75 | }

76 |

77 | }))

78 | new cdk.CfnOutput(this, 'bucketURL', { value: webBucket.bucketWebsiteDomainName });

79 |

80 | // =====================================================================================

81 | // Deploy site contents to S3 Bucket

82 | // =====================================================================================

83 | new s3deploy.BucketDeployment(this, 'DeployWebsite', {

84 | sources: [ s3deploy.Source.asset('./public') ],

85 | destinationBucket: webBucket

86 | });

87 |

88 | // =====================================================================================

89 | // Amazon DynamoDB table for storing image labels

90 | // =====================================================================================

91 | const table = new dynamodb.Table(this, 'ImageLabels', {

92 | partitionKey: { name: 'image', type: dynamodb.AttributeType.STRING },

93 | removalPolicy: cdk.RemovalPolicy.DESTROY

94 | });

95 | new cdk.CfnOutput(this, 'ddbTable', { value: table.tableName });

96 |

97 | // =====================================================================================

98 | // Building our AWS Lambda layer, which packages the Pillow (PIL) library for the Rekognition function

99 | // =====================================================================================

100 | const layer = new lambda.LayerVersion(this, 'pil', {

101 | code: lambda.Code.fromAsset('reklayer'),

102 | compatibleRuntimes: [lambda.Runtime.PYTHON_3_7],

103 | license: 'Apache-2.0',

104 | description: 'A layer to enable the PIL library in our Rekognition Lambda',

105 | });

106 |

107 | // =====================================================================================

108 | // Building our AWS Lambda Function; compute for our serverless microservice

109 | // =====================================================================================

110 | const rekFn = new lambda.Function(this, 'rekognitionFunction', {

111 | code: lambda.Code.fromAsset('rekognitionlambda'),

112 | runtime: lambda.Runtime.PYTHON_3_7,

113 | handler: 'index.handler',

114 | timeout: Duration.seconds(30),

115 | memorySize: 1024,

116 | layers: [layer],

117 | environment: {

118 | "TABLE": table.tableName,

119 | "BUCKET": imageBucket.bucketName,

120 | "RESIZEDBUCKET": resizedBucket.bucketName

121 | },

122 | });

123 |

124 | imageBucket.grantRead(rekFn);

125 | resizedBucket.grantPut(rekFn);

126 | table.grantWriteData(rekFn);

127 |

128 | rekFn.addToRolePolicy(new iam.PolicyStatement({

129 | effect: iam.Effect.ALLOW,

130 | actions: ['rekognition:DetectLabels'],

131 | resources: ['*']

132 | }));

133 |

134 | // =====================================================================================

135 | // Lambda for Synchronous Front End

136 | // =====================================================================================

137 |

138 | const serviceFn = new lambda.Function(this, 'serviceFunction', {

139 | code: lambda.Code.fromAsset('servicelambda'),

140 | runtime: lambda.Runtime.PYTHON_3_7,

141 | handler: 'index.handler',

142 | environment: {

143 | "TABLE": table.tableName,

144 | "BUCKET": imageBucket.bucketName,

145 | "RESIZEDBUCKET": resizedBucket.bucketName

146 | },

147 | });

148 |

149 | imageBucket.grantWrite(serviceFn);

150 | resizedBucket.grantWrite(serviceFn);

151 | table.grantReadWriteData(serviceFn);

152 |

153 | const api = new apigw.LambdaRestApi(this, 'imageAPI', {

154 | defaultCorsPreflightOptions: {

155 | allowOrigins: apigw.Cors.ALL_ORIGINS,

156 | allowMethods: apigw.Cors.ALL_METHODS

157 | },

158 | handler: serviceFn,

159 | proxy: false,

160 | });

161 |

162 | // =====================================================================================

163 | // This construct builds a new Amazon API Gateway with AWS Lambda Integration

164 | // =====================================================================================

165 | const lambdaIntegration = new apigw.LambdaIntegration(serviceFn, {

166 | proxy: false,

167 | requestParameters: {

168 | 'integration.request.querystring.action': 'method.request.querystring.action',

169 | 'integration.request.querystring.key': 'method.request.querystring.key'

170 | },

171 | requestTemplates: {

172 | 'application/json': JSON.stringify({ action: "$util.escapeJavaScript($input.params('action'))", key: "$util.escapeJavaScript($input.params('key'))" })

173 | },

174 | passthroughBehavior: PassthroughBehavior.WHEN_NO_TEMPLATES,

175 | integrationResponses: [

176 | {

177 | statusCode: "200",

178 | responseParameters: {

179 | // We can map response parameters

180 | // - Destination parameters (the key) are the response parameters (used in mappings)

181 | // - Source parameters (the value) are the integration response parameters or expressions

182 | 'method.response.header.Access-Control-Allow-Origin': "'*'"

183 | }

184 | },

185 | {

186 | // For errors, we check if the error message is not empty, get the error data

187 | selectionPattern: "(\n|.)+",

188 | statusCode: "500",

189 | responseParameters: {

190 | 'method.response.header.Access-Control-Allow-Origin': "'*'"

191 | }

192 | }

193 | ],

194 | });

195 |

196 | // =====================================================================================

197 | // Cognito User Pool Authentication

198 | // =====================================================================================

199 | const userPool = new cognito.UserPool(this, "UserPool", {

200 | selfSignUpEnabled: true, // Allow users to sign up

201 | autoVerify: { email: true }, // Verify email addresses by sending a verification code

202 | signInAliases: { username: true, email: true }, // Set email as an alias

203 | });

204 |

205 | const userPoolClient = new cognito.UserPoolClient(this, "UserPoolClient", {

206 | userPool,

207 | generateSecret: false, // Don't need to generate secret for web app running on browsers

208 | });

209 |

210 | const identityPool = new cognito.CfnIdentityPool(this, "ImageRekognitionIdentityPool", {

211 | allowUnauthenticatedIdentities: false, // Don't allow unauthenticated users

212 | cognitoIdentityProviders: [

213 | {

214 | clientId: userPoolClient.userPoolClientId,

215 | providerName: userPool.userPoolProviderName,

216 | },

217 | ],

218 | });

219 |

220 | const auth = new apigw.CfnAuthorizer(this, 'APIGatewayAuthorizer', {

221 | name: 'customer-authorizer',

222 | identitySource: 'method.request.header.Authorization',

223 | providerArns: [userPool.userPoolArn],

224 | restApiId: api.restApiId,

225 | type: AuthorizationType.COGNITO,

226 | });

227 |

228 | const authenticatedRole = new iam.Role(this, "ImageRekognitionAuthenticatedRole", {

229 | assumedBy: new iam.FederatedPrincipal(

230 | "cognito-identity.amazonaws.com",

231 | {

232 | StringEquals: {

233 | "cognito-identity.amazonaws.com:aud": identityPool.ref,

234 | },

235 | "ForAnyValue:StringLike": {

236 | "cognito-identity.amazonaws.com:amr": "authenticated",

237 | },

238 | },

239 | "sts:AssumeRoleWithWebIdentity"

240 | ),

241 | });

242 |

243 | // IAM policy granting users permission to upload and download their own pictures

244 | authenticatedRole.addToPolicy(

245 | new iam.PolicyStatement({

246 | actions: [

247 | "s3:GetObject",

248 | "s3:PutObject"

249 | ],

250 | effect: iam.Effect.ALLOW,

251 | resources: [

252 | imageBucketArn + "/private/${cognito-identity.amazonaws.com:sub}/*",

253 | imageBucketArn + "/private/${cognito-identity.amazonaws.com:sub}",

254 | resizedBucketArn + "/private/${cognito-identity.amazonaws.com:sub}/*",

255 | resizedBucketArn + "/private/${cognito-identity.amazonaws.com:sub}"

256 | ],

257 | })

258 | );

259 |

260 | // IAM policy granting users permission to list their pictures

261 | authenticatedRole.addToPolicy(

262 | new iam.PolicyStatement({

263 | actions: ["s3:ListBucket"],

264 | effect: iam.Effect.ALLOW,

265 | resources: [

266 | imageBucketArn,

267 | resizedBucketArn

268 | ],

269 | conditions: {"StringLike": {"s3:prefix": ["private/${cognito-identity.amazonaws.com:sub}/*"]}}

270 | })

271 | );

272 |

273 | new cognito.CfnIdentityPoolRoleAttachment(this, "IdentityPoolRoleAttachment", {

274 | identityPoolId: identityPool.ref,

275 | roles: { authenticated: authenticatedRole.roleArn },

276 | });

277 |

278 | // Export values of Cognito

279 | new CfnOutput(this, "UserPoolId", {

280 | value: userPool.userPoolId,

281 | });

282 | new CfnOutput(this, "AppClientId", {

283 | value: userPoolClient.userPoolClientId,

284 | });

285 | new CfnOutput(this, "IdentityPoolId", {

286 | value: identityPool.ref,

287 | });

288 |

289 |

290 | // =====================================================================================

291 | // API Gateway

292 | // =====================================================================================

293 | const imageAPI = api.root.addResource('images');

294 |

295 | // GET /images

296 | imageAPI.addMethod('GET', lambdaIntegration, {

297 | authorizationType: AuthorizationType.COGNITO,

298 | authorizer: { authorizerId: auth.ref },

299 | requestParameters: {

300 | 'method.request.querystring.action': true,

301 | 'method.request.querystring.key': true

302 | },

303 | methodResponses: [

304 | {

305 | statusCode: "200",

306 | responseParameters: {

307 | 'method.response.header.Access-Control-Allow-Origin': true,

308 | },

309 | },

310 | {

311 | statusCode: "500",

312 | responseParameters: {

313 | 'method.response.header.Access-Control-Allow-Origin': true,

314 | },

315 | }

316 | ]

317 | });

318 |

319 | // DELETE /images

320 | imageAPI.addMethod('DELETE', lambdaIntegration, {

321 | authorizationType: AuthorizationType.COGNITO,

322 | authorizer: { authorizerId: auth.ref },

323 | requestParameters: {

324 | 'method.request.querystring.action': true,

325 | 'method.request.querystring.key': true

326 | },

327 | methodResponses: [

328 | {

329 | statusCode: "200",

330 | responseParameters: {

331 | 'method.response.header.Access-Control-Allow-Origin': true,

332 | },

333 | },

334 | {

335 | statusCode: "500",

336 | responseParameters: {

337 | 'method.response.header.Access-Control-Allow-Origin': true,

338 | },

339 | }

340 | ]

341 | });

342 |

343 | // =====================================================================================

344 | // Building SQS queue and DeadLetter Queue

345 | // =====================================================================================

346 | const dlQueue = new sqs.Queue(this, 'ImageDLQueue', {

347 | queueName: 'ImageDLQueue'

348 | })

349 |

350 | const queue = new sqs.Queue(this, 'ImageQueue', {

351 | queueName: 'ImageQueue',

352 | visibilityTimeout: cdk.Duration.seconds(30),

353 | receiveMessageWaitTime: cdk.Duration.seconds(20),

354 | deadLetterQueue: {

355 | maxReceiveCount: 2,

356 | queue: dlQueue

357 | }

358 | });

359 |

360 | // =====================================================================================

361 | // Building S3 Bucket Create Notification to SQS

362 | // =====================================================================================

363 | imageBucket.addObjectCreatedNotification(new s3n.SqsDestination(queue), { prefix: 'private/' })

364 |

365 | // =====================================================================================

366 | // Lambda(Rekognition) to consume messages from SQS

367 | // =====================================================================================

368 | rekFn.addEventSource(new event_sources.SqsEventSource(queue));

369 | }

370 | }

371 |

--------------------------------------------------------------------------------

/package.json:

--------------------------------------------------------------------------------

1 | {

2 | "name": "awsdevhour",

3 | "version": "0.1.1",

4 | "bin": {

5 | "awsdevhour": "bin/awsdevhour.js"

6 | },

7 | "scripts": {

8 | "build": "tsc",

9 | "watch": "tsc -w",

10 | "test": "jest",

11 | "cdk": "cdk",

12 | "outputs": "aws cloudformation describe-stacks --stack-name dev-AwsdevhourBackendPipelineStage | jq '.Stacks | .[] | .Outputs | reduce .[] as $i ({}; .[$i.OutputKey] = $i.OutputValue)'"

13 | },

14 | "devDependencies": {

15 | "@aws-cdk/assert": "^1.121.0",

16 | "@types/jest": "^27.0.1",

17 | "@types/node": "16.7.13",

18 | "aws-cdk": "^1.121.0",

19 | "jest": "^27.1.0",

20 | "ts-jest": "^27.0.5",

21 | "ts-node": "^10.2.1",

22 | "typescript": "~4.4.2"

23 | },

24 | "dependencies": {

25 | "@aws-cdk/aws-apigateway": "^1.121.0",

26 | "@aws-cdk/aws-codepipeline": "^1.121.0",

27 | "@aws-cdk/aws-codepipeline-actions": "^1.121.0",

28 | "@aws-cdk/aws-cognito": "^1.121.0",

29 | "@aws-cdk/aws-dynamodb": "^1.121.0",

30 | "@aws-cdk/aws-iam": "^1.121.0",

31 | "@aws-cdk/aws-lambda": "^1.121.0",

32 | "@aws-cdk/aws-lambda-event-sources": "^1.121.0",

33 | "@aws-cdk/aws-s3": "^1.121.0",

34 | "@aws-cdk/aws-s3-deployment": "^1.121.0",

35 | "@aws-cdk/aws-s3-notifications": "^1.121.0",

36 | "@aws-cdk/aws-sqs": "^1.121.0",

37 | "@aws-cdk/core": "^1.121.0",

38 | "@aws-cdk/pipelines": "^1.121.0",

39 | "source-map-support": "^0.5.19"

40 | }

41 | }

42 |

--------------------------------------------------------------------------------

/public/index.html:

--------------------------------------------------------------------------------

1 |

2 |

3 |

4 |

5 | Please replace this with your frontend

6 |

7 |

8 |

9 |

10 |

--------------------------------------------------------------------------------

/reklayer/pillow-goes-here.txt:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/aws-samples/aws-dev-hour-backend/afc65bd555ff8ec667b74af0c6267374675c9510/reklayer/pillow-goes-here.txt

--------------------------------------------------------------------------------

/rekognitionlambda/event.json:

--------------------------------------------------------------------------------

1 | {

2 | "Records": [{

3 | "s3": {

4 | "bucket": {

5 | "name": "your-bucket-name"

6 | },

7 | "object": {

8 | "key": "image.jpg"

9 | }

10 | }

11 | }]

12 | }

--------------------------------------------------------------------------------

/rekognitionlambda/index.py:

--------------------------------------------------------------------------------

1 | #

2 | # Lambda function to detect labels in image using Amazon Rekognition

3 | #

4 |

5 | import logging

6 | import boto3

7 | from botocore.exceptions import ClientError

8 | import os

9 | from urllib.parse import unquote_plus

10 | from boto3.dynamodb.conditions import Key, Attr

11 | import uuid

12 | from PIL import Image

13 | import json

14 |

15 | thumbBucket = os.environ['RESIZEDBUCKET']

16 |

17 | # Set the minimum confidence for Amazon Rekognition

18 |

19 | minConfidence = 50

20 |

21 | """MinConfidence parameter (float) -- Specifies the minimum confidence level for the labels to return.

22 | Amazon Rekognition doesn't return any labels with a confidence lower than this specified value.

23 | If you specify a value of 0, all labels are returned, regardless of the default thresholds that the

24 | model version applies."""

25 |

26 | ## Instantiate service clients outside of handler for context reuse / performance

27 |

28 | # Constructor for our s3 client object

29 | s3_client = boto3.client('s3')

30 | # Constructor to create rekognition client object

31 | rekognition_client = boto3.client('rekognition')

32 | # Constructor for DynamoDB resource object

33 | dynamodb = boto3.resource('dynamodb')

34 |

35 | def handler(event, context):

36 |

37 | print("Lambda processing event: ", event)

38 |

39 | # For each message (photo) get the bucket name and key

40 | for response in event['Records']:

41 | formatted = json.loads(response['body'])

42 | for record in formatted['Records']:

43 | ourBucket = record['s3']['bucket']['name']

44 | ourKey = record['s3']['object']['key']

45 |

46 | # For each bucket/key, retrieve labels

47 | generateThumb(ourBucket, ourKey)

48 | rekFunction(ourBucket, ourKey)

49 |

50 | return

51 |

52 | def generateThumb(ourBucket, ourKey):

53 |

54 | # Clean the string to add the colon back into requested name

55 | safeKey = replaceSubstringWithColon(ourKey)

56 |

57 | # Define upload and download paths

58 | key = unquote_plus(safeKey)

59 | tmpkey = key.replace('/', '')

60 | download_path = '/tmp/{}{}'.format(uuid.uuid4(), tmpkey)

61 | upload_path = '/tmp/resized-{}'.format(tmpkey)

62 |

63 | # Download file from s3 and store it in Lambda /tmp storage (512MB avail)

64 | try:

65 | s3_client.download_file(ourBucket, key, download_path)

66 | except ClientError as e:

67 | logging.error(e)

68 | # Create our thumbnail using Pillow library

69 | resize_image(download_path, upload_path)

70 |

71 | # Upload the thumbnail to the thumbnail bucket

72 | try:

73 | s3_client.upload_file(upload_path, thumbBucket, safeKey)

74 | except ClientError as e:

75 | logging.error(e)

76 |

77 | # Be good little citizens and clean up files in /tmp so that we don't run out of space

78 | os.remove(upload_path)

79 | os.remove(download_path)

80 |

81 | return

82 |

83 | def resize_image(image_path, resized_path):

84 | with Image.open(image_path) as image:

85 | image.thumbnail(tuple(x // 2 for x in image.size))  # integer division keeps the size in whole pixels

86 | image.save(resized_path)

87 |

88 | def rekFunction(ourBucket, ourKey):

89 |

90 | # Clean the string to add the colon back into the requested name, which was substituted by the Amplify library.

91 | safeKey = replaceSubstringWithColon(ourKey)

92 |

93 | print('Currently processing the following image')

94 | print('Bucket: ' + ourBucket + ' key name: ' + safeKey)

95 |

96 | detectLabelsResults = {}

97 |

98 | # Try and retrieve labels from Amazon Rekognition, using the confidence level we set in minConfidence var

99 | try:

100 | detectLabelsResults = rekognition_client.detect_labels(Image={'S3Object': {'Bucket':ourBucket, 'Name':safeKey}},

101 | MaxLabels=10,

102 | MinConfidence=minConfidence)

103 |

104 | except ClientError as e:

105 | logging.error(e)

106 |

107 | # Create our array and dict for our label construction

108 |

109 | objectsDetected = []

110 |

111 | imageLabels = {

112 | 'image': safeKey

113 | }

114 |

115 | # Add all of our labels into imageLabels by iterating over detectLabelsResults['Labels']

116 | # (.get() avoids a KeyError when detect_labels failed and left the dict empty)

117 | for label in detectLabelsResults.get('Labels', []):

118 | newItem = label['Name']

119 | objectsDetected.append(newItem)

120 | objectNum = len(objectsDetected)

121 | itemAtt = f"object{objectNum}"

122 |

123 | # We now have our shiny new item ready to put into DynamoDB

124 | imageLabels[itemAtt] = newItem

125 |

126 |

127 | # Instantiate a table resource object of our environment variable

128 | imageLabelsTable = os.environ['TABLE']

129 | table = dynamodb.Table(imageLabelsTable)

130 |

131 | # Put item into table

132 | try:

133 | table.put_item(Item=imageLabels)

134 | except ClientError as e:

135 | logging.error(e)

136 |

137 | return

138 |

139 | # Clean the string to add the colon back into requested name

140 | def replaceSubstringWithColon(txt):

141 |

142 | return txt.replace("%3A", ":")

143 |

--------------------------------------------------------------------------------

/servicelambda/index.py:

--------------------------------------------------------------------------------

1 | #

2 | # # s3 Image Rekognition Front End Microservice

3 | #

4 |

5 | import logging

6 | import boto3

7 | from botocore.exceptions import ClientError

8 | import os

9 |

10 |

11 | # Constructors for the Amazon DynamoDB and S3 resource objects

12 | dynamodb = boto3.resource('dynamodb')

13 | s3 = boto3.resource('s3')

14 |

15 | def handler(event, context):

16 |

17 | # Detect requested action from the Amazon API Gateway Event

18 | action = event['action']

19 | image = event['key']

20 |

21 | imageRequest = {

22 | "key": image

23 | }

24 |

25 | # GET Request from API

26 | if action == "getLabels":

27 | getResults = getLabelsFunction(imageRequest)

28 | if "image" in getResults:

29 | return getResults

30 | else:

31 | return "No Results"

32 |

33 | # DELETE Request from API

34 | elif action == "deleteImage":

35 | delResults = deleteImage(imageRequest)

36 | return delResults

37 | else:

38 | raise Exception("Action not detected or recognised")

39 |

40 | def getLabelsFunction(image):

41 |

42 | key = image['key']

43 |

44 | # Instantiate a table resource object

45 | imageLabelsTable = os.environ['TABLE']

46 | table = dynamodb.Table(imageLabelsTable)

47 |

48 | # Get item from table

49 |

50 | try:

51 | response = table.get_item(Key={'image': key})

52 | item = response.get('Item', {})

53 | return item

54 |

55 | except ClientError as e:

56 | logging.error(e)

57 | return "No labels or error"

58 |

59 | def deleteImage(image):

60 |

61 | key = image['key']

62 |

63 | # Instantiate a table resource object

64 | imageLabelsTable = os.environ['TABLE']

65 | table = dynamodb.Table(imageLabelsTable)

66 |

67 | # Delete item from table

68 |

69 | try:

70 | table.delete_item(Key={'image': key})

71 |

72 | except ClientError as e:

73 | logging.error(e)

74 |

75 | bucketName = os.environ["BUCKET"]

76 | resizedBucketName = os.environ["RESIZEDBUCKET"]

77 |

78 | # Delete Photo and Thumbnail from Amazon S3

79 |

80 | try:

81 | s3.Object(bucketName, key).delete()

82 | s3.Object(resizedBucketName, key).delete()

83 |

84 | except ClientError as e:

85 | logging.error(e)

86 |

87 | return "Delete request successfully processed"

--------------------------------------------------------------------------------

/test/awsdevhour.test.ts:

--------------------------------------------------------------------------------

1 | import { expect as expectCDK, matchTemplate, MatchStyle } from '@aws-cdk/assert';

2 | import * as cdk from '@aws-cdk/core';

3 | import * as Awsdevhour from '../lib/awsdevhour-stack';

4 |

5 | test('Empty Stack', () => {

6 | const app = new cdk.App();

7 | // WHEN

8 | const stack = new Awsdevhour.AwsdevhourStack(app, 'MyTestStack');

9 | // THEN

10 | expectCDK(stack).to(matchTemplate({

11 | "Resources": {}

12 | }, MatchStyle.EXACT))

13 | });

14 |

--------------------------------------------------------------------------------

/tsconfig.json:

--------------------------------------------------------------------------------

1 | {

2 | "compilerOptions": {

3 | "target": "ES2018",

4 | "module": "commonjs",

5 | "lib": ["es2018"],

6 | "declaration": true,

7 | "strict": true,

8 | "noImplicitAny": true,

9 | "strictNullChecks": true,

10 | "noImplicitThis": true,

11 | "alwaysStrict": true,

12 | "noUnusedLocals": false,

13 | "noUnusedParameters": false,

14 | "noImplicitReturns": true,

15 | "noFallthroughCasesInSwitch": false,

16 | "inlineSourceMap": true,

17 | "inlineSources": true,

18 | "experimentalDecorators": true,

19 | "strictPropertyInitialization": false,

20 | "typeRoots": ["./node_modules/@types"]

21 | },

22 | "exclude": ["cdk.out"]

23 | }

24 |

--------------------------------------------------------------------------------

11 |

12 | ## Episodes

13 |

14 | During each episode, we will be progressively building this full-stack application together. Please see the following URL for schedule and episode details:

15 |

16 | [AWS Dev Hour Schedule](https://pages.awscloud.com/traincert-twitch-dev-hour?sc_icampaign=event_twitch_devhour_launch_hosts&sc_channel=sm)

17 |

18 | #### Git branch

19 |

20 | [Episode 1: CDK](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode1)

21 |

22 | [Episode 2: AWS Lambda & Amazon DynamoDB](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode2)

23 |

24 | [Episode 3: Amazon API Gateway](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode3)

25 |

26 | [Episode 4: AWS IAM & Amazon Cognito](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode4)

27 |

28 | [Episode 5: Amazon S3](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode5)

29 |

30 | [Episode 6: Amazon SQS](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode6)

31 |

32 | [Episode 7: Deployment Pipeline](https://github.com/aws-samples/aws-dev-hour-backend/tree/feature/episode7)

33 |

34 | ### Services used during the series:

35 |

36 | - Amazon Cognito

37 | - Amazon S3

38 | - Amazon Simple Queue Service

39 | - AWS Lambda

40 | - Amazon DynamoDB

41 | - Amazon Rekognition

42 | - AWS Cloud Development Kit

43 | - Amazon API Gateway

44 | - AWS CodeBuild

45 | - AWS CodePipeline

46 |

47 | ## Useful commands

48 |

49 | * `npm install` installs packages

50 | * `cdk synth` emits the synthesized CloudFormation template

51 | * `cdk deploy` deploys this stack

52 | * `cdk diff` compares the deployed stack with the current state

53 |

54 | The `cdk.json` file tells the CDK Toolkit how to execute your app.

55 |

56 | ## Prerequisites

57 |

58 | All CDK developers need to install Node.js 10.3.0 or later, even those working in languages other than TypeScript or JavaScript. The AWS CDK Toolkit (cdk command-line tool) and the AWS Construct Library run on Node.js. The bindings for other supported languages use this back end and tool set. We suggest the latest LTS version.

59 |

60 | ```bash

61 | aws configure

62 | npm install -g typescript

63 | npm install -g aws-cdk

64 | ```

65 | If you have not yet done so, you will also need to bootstrap your account:

66 |

67 | ```bash

68 | cdk bootstrap aws://ACCOUNT-NUMBER-1/REGION-1