├── overview.jpg
├── requirements.txt
├── .gitignore
├── CONTRIBUTING.md
├── src
│   ├── agent_debugger
│   │   ├── pycoworld
│   │   │   ├── types.py
│   │   │   ├── debugger.py
│   │   │   ├── extractors.py
│   │   │   └── interventions.py
│   │   ├── types.py
│   │   ├── agent.py
│   │   ├── node.py
│   │   ├── default_interventions.py
│   │   └── debugger.py
│   ├── pycoworld
│   │   ├── serializable_environment.py
│   │   ├── levels
│   │   │   ├── color_memory.py
│   │   │   ├── level_names.py
│   │   │   ├── apples.py
│   │   │   ├── key_door.py
│   │   │   ├── utils.py
│   │   │   ├── red_green_apples.py
│   │   │   ├── grass_sand.py
│   │   │   └── base_level.py
│   │   ├── default_constants.py
│   │   ├── default_sprites_and_drapes.py
│   │   └── environment.py
│   ├── impala_agent.py
│   └── impala_net.py
├── README.md
├── LICENSE
└── colabs
    └── experiments.ipynb

/overview.jpg:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/google-deepmind/agent_debugger/HEAD/overview.jpg

--------------------------------------------------------------------------------

/requirements.txt:

--------------------------------------------------------------------------------

1 | chex

2 | dill

3 | dm-env

4 | dm-haiku

5 | dm-tree

6 | jax

7 | numpy

8 | pycolab

9 | scipy

10 |

--------------------------------------------------------------------------------

/.gitignore:

--------------------------------------------------------------------------------

1 | # Byte-compiled / optimized / DLL files

2 | __pycache__/

3 | *.py[cod]

4 | *$py.class

5 |

6 | # Distribution / packaging

7 | .Python

8 | build/

9 | develop-eggs/

10 | dist/

11 | downloads/

12 | eggs/

13 | .eggs/

14 | lib/

15 | lib64/

16 | parts/

17 | sdist/

18 | var/

19 | wheels/

20 | share/python-wheels/

21 | *.egg-info/

22 | .installed.cfg

23 | *.egg

24 | MANIFEST

25 |

--------------------------------------------------------------------------------

/CONTRIBUTING.md:

--------------------------------------------------------------------------------

1 | # How to Contribute

2 |

3 | ## Contributor License Agreement

4 |

5 | Contributions to this project must be accompanied by a Contributor License

6 | Agreement. You (or your employer) retain the copyright to your contribution;

7 | this simply gives us permission to use and redistribute your contributions as

8 | part of the project. Head over to to see

9 | your current agreements on file or to sign a new one.

10 |

11 | You generally only need to submit a CLA once, so if you've already submitted one

12 | (even if it was for a different project), you probably don't need to do it

13 | again.

14 |

15 | ## Code reviews

16 |

17 | All submissions, including submissions by project members, require review. We

18 | use GitHub pull requests for this purpose. Consult

19 | [GitHub Help](https://help.github.com/articles/about-pull-requests/) for more

20 | information on using pull requests.

21 |

22 | ## Community Guidelines

23 |

24 | This project follows [Google's Open Source Community

25 | Guidelines](https://opensource.google/conduct/).

26 |

--------------------------------------------------------------------------------

/src/agent_debugger/pycoworld/types.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Specific types for the Pycoworld debugger."""
17 |
18 | from typing import Union
19 |
20 | from pycolab import engine as engine_lib
21 | from pycolab import things
22 |
23 | PycolabPosition = things.Sprite.Position
24 | Position = Union[PycolabPosition, tuple[float, float]]
25 | PycolabEngine = engine_lib.Engine
26 |
27 |
28 | def to_pycolab_position(pos: Position) -> PycolabPosition:
29 |   """Returns a PycolabPosition from a tuple or a PycolabPosition."""
30 |   if isinstance(pos, tuple):
31 |     return PycolabPosition(*pos)
32 |   return pos
33 |

--------------------------------------------------------------------------------
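A minimal sketch of how `to_pycolab_position` normalizes its input. To keep the example free of the pycolab dependency, `Position` below is a hypothetical stand-in, assumed to mirror `things.Sprite.Position` as a namedtuple with `row` and `col` fields; it is not the pycolab class itself.

```python
import collections

# Hypothetical stand-in for pycolab's things.Sprite.Position (assumed to be a
# namedtuple with `row` and `col` fields).
Position = collections.namedtuple('Position', ['row', 'col'])


def to_position(pos):
  """Mirrors to_pycolab_position: plain tuples are wrapped into a Position."""
  if isinstance(pos, tuple):
    return Position(*pos)
  return pos


from_tuple = to_position((2, 3))
from_position = to_position(Position(2, 3))
print(from_tuple, from_position)
```

Because a namedtuple is itself a tuple, both inputs take the same branch here and yield equal values.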

/src/agent_debugger/types.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Custom types used throughout the codebase.
17 |
18 | A seed is required for the agent. For the environment, it can be included
19 | but it's not mandatory in the dm_env standard.
20 | """
21 |
22 | from typing import Any, Callable, NamedTuple
23 |
24 | import dm_env
25 |
26 | from agent_debugger.src.pycoworld import serializable_environment
27 |
28 | Action = Any
29 | Observation = Any
30 | TimeStep = dm_env.TimeStep
31 | FirstTimeStep = dm_env.StepType.FIRST
32 | MidTimeStep = dm_env.StepType.MID
33 | LastTimeStep = dm_env.StepType.LAST
34 | Environment = dm_env.Environment
35 | SerializableEnvironment = serializable_environment.SerializableEnvironment
36 | Agent = Any
37 | Rng = Any
38 | EnvState = Any
39 | EnvBuilder = Callable[[], Environment]
40 | SerializableEnvBuilder = Callable[[], SerializableEnvironment]
41 |
42 |
43 | # We don't use dataclasses as they are not supported by jax.
44 | class AgentState(NamedTuple):
45 |   internal_state: Any
46 |   seed: Rng
47 |

--------------------------------------------------------------------------------

/src/agent_debugger/agent.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Base class of agent recognized by the Agent Debugger."""
17 |
18 | import abc
19 | from typing import Any
20 |
21 | import chex
22 | import dm_env
23 |
24 | from agent_debugger.src.agent_debugger import types
25 |
26 |
27 | class DebuggerAgent(abc.ABC):
28 |   """The standard agent interface to be used in the debugger.
29 |
30 |   The internal state is wrapped with the seed. The step method takes a
31 |   timestep (which includes the observation) and a state. The agent parameters
32 |   (mostly neural network parameters) are fixed and are NOT part of the state.
33 |   """
34 |
35 |   @abc.abstractmethod
36 |   def initial_state(self, rng: chex.PRNGKey) -> types.AgentState:
37 |     """Returns the initial state of the agent."""
38 |
39 |   @abc.abstractmethod
40 |   def step(
41 |       self,
42 |       timestep: dm_env.TimeStep,
43 |       state: types.AgentState,
44 |   ) -> tuple[types.Action, Any, types.AgentState]:
45 |     """Steps the agent, taking a timestep (including observation) and a state."""
46 |

--------------------------------------------------------------------------------
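The interface above can be illustrated with a small dependency-free sketch. Everything here is a stand-in: `AgentState` and `DebuggerAgent` are re-declared locally, `UniformRandomAgent` is a hypothetical agent, and an integer seed with Python's `random` module replaces the `chex.PRNGKey` that the real interface expects.

```python
import abc
import random
from typing import Any, NamedTuple


class AgentState(NamedTuple):
  """Stand-in for types.AgentState: internal state plus the seed."""
  internal_state: Any
  seed: Any


class DebuggerAgent(abc.ABC):
  """Stand-in for the abstract interface shown above."""

  @abc.abstractmethod
  def initial_state(self, rng):
    ...

  @abc.abstractmethod
  def step(self, timestep, state):
    ...


class UniformRandomAgent(DebuggerAgent):
  """Hypothetical agent that ignores the timestep and samples uniformly."""

  def __init__(self, num_actions: int) -> None:
    self._num_actions = num_actions

  def initial_state(self, rng: int) -> AgentState:
    return AgentState(internal_state=None, seed=rng)

  def step(self, timestep, state):
    # All randomness comes from the seed carried in the state, so stepping
    # twice from the same state reproduces the same action.
    generator = random.Random(state.seed)
    action = generator.randrange(self._num_actions)
    next_seed = generator.getrandbits(32)
    return action, None, AgentState(internal_state=None, seed=next_seed)


agent = UniformRandomAgent(num_actions=4)
state = agent.initial_state(rng=0)
action_a, _, next_state_a = agent.step(timestep=None, state=state)
action_b, _, next_state_b = agent.step(timestep=None, state=state)
print(action_a == action_b)
```

Keeping the seed inside the state, rather than inside the agent object, is what makes a saved state replayable.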

/src/pycoworld/serializable_environment.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Serializable environment class.
17 |
18 | The main feature is the ability to retrieve and set the state of an environment.
19 | """
20 |
21 | import abc
22 | from typing import Any
23 |
24 | import dm_env
25 |
26 |
27 | class SerializableEnvironment(dm_env.Environment, abc.ABC):
28 |   """Abstract class with methods to set and get states.
29 |
30 |   The state can be anything even though we prefer mappings like dicts for
31 |   readability. It must contain all information, no compression is allowed. The
32 |   state must be a parse of the environment containing only the stateful
33 |   variables.
34 |   There is no assumption on how the agent would use this object: please provide
35 |   copies of internal attributes to avoid side effects.
36 |   """
37 |
38 |   @abc.abstractmethod
39 |   def get_state(self) -> Any:
40 |     """Returns the state of the environment."""
41 |
42 |   @abc.abstractmethod
43 |   def set_state(self, state: Any) -> Any:
44 |     """Sets the state of the environment.
45 |
46 |     Args:
47 |       state: The state to set.
48 |     """
49 |

--------------------------------------------------------------------------------
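The docstring's advice to hand out copies can be sketched with a toy example. `CounterEnvironment` is hypothetical and, to stay dependency-free, does not subclass `dm_env.Environment`; it only shows the `get_state`/`set_state` contract and why copying matters.

```python
import copy


class CounterEnvironment:
  """Hypothetical toy environment exposing get_state/set_state."""

  def __init__(self) -> None:
    self._state = {'position': 0, 'visited': [0]}

  def step(self, action: int) -> int:
    self._state['position'] += action
    self._state['visited'].append(self._state['position'])
    return self._state['position']

  def get_state(self) -> dict:
    # Return a deep copy so callers cannot mutate the environment.
    return copy.deepcopy(self._state)

  def set_state(self, state: dict) -> None:
    # Copy on the way in as well, for the same reason.
    self._state = copy.deepcopy(state)


env = CounterEnvironment()
env.step(1)
snapshot = env.get_state()  # Save the state after one step.
env.step(1)
env.step(1)
env.set_state(snapshot)  # Rewind to the saved state.
restored = env.get_state()
print(restored)
```

With shallow copies, the `visited` list would be shared between the snapshot and the live environment, and later steps would silently alter the saved state.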

/src/agent_debugger/pycoworld/debugger.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Specific implementation of the debugger for pycoworld."""
17 |
18 | from agent_debugger.src.agent_debugger import agent as debugger_agent
19 | from agent_debugger.src.agent_debugger import debugger as dbg
20 | from agent_debugger.src.agent_debugger.pycoworld import extractors as pycolab_extractors
21 | from agent_debugger.src.agent_debugger.pycoworld import interventions as pycolab_interventions
22 | from agent_debugger.src.pycoworld import serializable_environment
23 |
24 |
25 | class PycoworldDebugger(dbg.Debugger):
26 |   """Overriding the Debugger with additional interventions and extractors.
27 |
28 |   Attributes:
29 |     interventions: An object to intervene on pycoworld nodes.
30 |     extractors: An object to extract information from pycoworld nodes.
31 |   """
32 |
33 |   def __init__(
34 |       self,
35 |       agent: debugger_agent.DebuggerAgent,
36 |       env: serializable_environment.SerializableEnvironment,
37 |   ) -> None:
38 |     """Initializes the object."""
39 |     super().__init__(agent, env)
40 |
41 |     self.interventions = pycolab_interventions.PycoworldInterventions(self._env)
42 |     self.extractors = pycolab_extractors.PycoworldExtractors()
43 |

--------------------------------------------------------------------------------

/src/agent_debugger/node.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """The base node interface used in the debugger."""
17 |
18 | import dataclasses
19 | from typing import Any, Optional, Sequence
20 |
21 | from agent_debugger.src.agent_debugger import types
22 |
23 |
24 | @dataclasses.dataclass
25 | class Node:
26 |   """A node is the concatenation of the agent_state and the env_state.
27 |
28 |   Besides the agent and env state, it also carries the action taken by the agent
29 |   and the timestep returned by the environment in the last transition. This
30 |   transition can be seen as the arrow leading to the state, therefore we call it
31 |   'last_action' and 'last_timestep'. The latter will be used to step to the next
32 |   state.
33 |   Finally, the node also carries some future agent actions that the user wants
34 |   to enforce. The first element of the list will be used to force the next
35 |   transition action, and the list minus this first element will be passed to the
36 |   next state.
37 |   """
38 |
39 |   agent_state: types.AgentState
40 |   last_action: types.Action
41 |   env_state: types.EnvState
42 |   last_timestep: types.TimeStep
43 |   forced_next_actions: Sequence[types.Action] = ()
44 |   episode_step: int = 0
45 |   last_agent_output: Optional[Any] = None
46 |
47 |   @property
48 |   def is_terminal(self) -> bool:
49 |     """Returns whether the last timestep is of step_type LAST."""
50 |     return self.last_timestep.step_type == types.LastTimeStep
51 |

--------------------------------------------------------------------------------
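The handling of `forced_next_actions` described in the `Node` docstring can be sketched as follows. The actual stepping logic lives in `debugger.py`, which is not shown here, so `MiniNode` and `next_node` are hypothetical names and the code is an assumption based only on the docstring.

```python
import dataclasses
from typing import Any, Callable, Sequence


@dataclasses.dataclass
class MiniNode:
  """Hypothetical reduced node: only the fields needed for this sketch."""
  last_action: Any = None
  forced_next_actions: Sequence[Any] = ()
  episode_step: int = 0


def next_node(node: MiniNode, policy: Callable[[], Any]) -> MiniNode:
  """Takes one transition, using a forced action before asking the policy."""
  if node.forced_next_actions:
    # The first forced action drives this transition; the rest is passed on.
    action, *remaining = node.forced_next_actions
  else:
    action, remaining = policy(), []
  return MiniNode(
      last_action=action,
      forced_next_actions=tuple(remaining),
      episode_step=node.episode_step + 1,
  )


node = MiniNode(forced_next_actions=(3, 1))
trajectory = []
for _ in range(3):
  node = next_node(node, policy=lambda: 0)
  trajectory.append(node.last_action)
print(trajectory)
```

The first two transitions use the forced actions 3 and 1; once the list is empty, the (here constant) policy takes over.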

/src/pycoworld/levels/color_memory.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Color memory level."""
17 |
18 | import numpy as np
19 |
20 | from agent_debugger.src.pycoworld.levels import grass_sand
21 |
22 |
23 | class ColorMemoryLevel(grass_sand.GrassSandLevel):
24 |   """A modification of the grass sand environment to test memory.
25 |
26 |   The position of the reward is correlated with the floor type, as
27 |   in grass_sand, but the agent has an egocentric view. Therefore, it must
28 |   remember the color at the beginning to get the reward as fast as possible.
29 |   """
30 |
31 |   def __init__(self, corr: float = 1.0, large: bool = False) -> None:
32 |     """Initializes the level.
33 |
34 |     Args:
35 |       corr: see grass_sand
36 |       large: whether to enlarge the initial corridor.
37 |     """
38 |     if not large:
39 |       above = np.array([[0, 0, 0, 0, 4, 4, 4], [0, 0, 0, 0, 4, 0, 4],
40 |                         [4, 4, 4, 4, 4, 0, 4], [4, 4, 4, 4, 4, 0, 4],
41 |                         [4, 99, 8, 0, 0, 0, 4], [4, 4, 4, 4, 4, 0, 4],
42 |                         [4, 4, 4, 4, 4, 0, 4], [0, 0, 0, 0, 4, 0, 4],
43 |                         [0, 0, 0, 0, 4, 4, 4]])
44 |     else:
45 |       above = np.array([[0, 0, 0, 0, 4, 4, 4], [0, 0, 0, 0, 4, 0, 4],
46 |                         [4, 4, 4, 4, 4, 0, 4], [4, 4, 0, 0, 0, 0, 4],
47 |                         [4, 99, 8, 0, 0, 0, 4], [4, 4, 0, 0, 0, 0, 4],
48 |                         [4, 4, 4, 4, 4, 0, 4], [0, 0, 0, 0, 4, 0, 4],
49 |                         [0, 0, 0, 0, 4, 4, 4]])
50 |     below = np.zeros(above.shape, dtype=np.uint8)
51 |     reward_pos = (np.array([1, 5]), np.array([7, 5]))
52 |     super().__init__(corr, above, below, reward_pos, double_terminal_event=True)
53 |

--------------------------------------------------------------------------------
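To make the layout above easier to read, here is a small sketch that renders the non-large `above` array as text. The glyphs are chosen for this example only, and the reward positions are assumed to be `(row, column)` pairs; the value 99 is not a `Tile` ID in `default_constants.py`, so it is displayed as `?` rather than interpreted.

```python
# Layout copied from the non-large branch of ColorMemoryLevel.
ABOVE = [
    [0, 0, 0, 0, 4, 4, 4],
    [0, 0, 0, 0, 4, 0, 4],
    [4, 4, 4, 4, 4, 0, 4],
    [4, 4, 4, 4, 4, 0, 4],
    [4, 99, 8, 0, 0, 0, 4],
    [4, 4, 4, 4, 4, 0, 4],
    [4, 4, 4, 4, 4, 0, 4],
    [0, 0, 0, 0, 4, 0, 4],
    [0, 0, 0, 0, 4, 4, 4],
]
# Assumed to be (row, column) pairs.
REWARD_POSITIONS = [(1, 5), (7, 5)]

# Hypothetical glyphs: 0, 4 and 8 are FLOOR, WALL and PLAYER in
# default_constants.Tile; 99 is left uninterpreted.
GLYPHS = {0: '.', 4: '#', 8: 'P', 99: '?'}

rows = [''.join(GLYPHS[tile] for tile in row) for row in ABOVE]
for row, col in REWARD_POSITIONS:
  # Mark candidate reward cells with 'R'.
  rows[row] = rows[row][:col] + 'R' + rows[row][col + 1:]
print('\n'.join(rows))
```

Under these assumptions the player starts in a horizontal corridor and the two candidate reward cells sit at the ends of the vertical corridor on the right.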

/src/pycoworld/levels/level_names.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Level names for backward compatibility.
17 |
18 | This module lists the names of levels for backward compatibility with old code.
19 | We plan to deprecate this module in the future so please do not rely on it for
20 | new code.
21 | """
22 |
23 | from agent_debugger.src.pycoworld.levels import apples
24 | from agent_debugger.src.pycoworld.levels import base_level
25 | from agent_debugger.src.pycoworld.levels import color_memory
26 | from agent_debugger.src.pycoworld.levels import grass_sand
27 | from agent_debugger.src.pycoworld.levels import key_door
28 | from agent_debugger.src.pycoworld.levels import red_green_apples
29 |
30 |
31 | # Provided for backward compatibility only. Please do not use in new code.
32 | def level_by_name(level_name: str) -> base_level.PycoworldLevel:
33 |   """Returns the level corresponding to a given level name.
34 |
35 |   Provided for backward compatibility only. Please do not use in new code.
36 |
37 |   Args:
38 |     level_name: Name of the level to construct. See NAMED_LEVELS for the
39 |       supported level names.
40 |   """
41 |   if level_name == 'apples_corner':
42 |     return apples.ApplesLevel(start_type='corner')
43 |   elif level_name == 'apples_full':
44 |     return apples.ApplesLevel()
45 |   elif level_name == 'grass_sand':
46 |     return grass_sand.GrassSandLevel()
47 |   elif level_name == 'grass_sand_uncorrelated':
48 |     return grass_sand.GrassSandLevel(corr=0.5, double_terminal_event=True)
49 |   elif level_name == 'key_door':
50 |     return key_door.KeyDoorLevel()
51 |   elif level_name == 'key_door_closed':
52 |     return key_door.KeyDoorLevel(door_type='closed')
53 |   elif level_name == 'large_color_memory':
54 |     return color_memory.ColorMemoryLevel(large=True)
55 |   elif level_name == 'red_green_apples':
56 |     return red_green_apples.RedGreenApplesLevel()
57 |
58 |   raise ValueError(f'Unknown level {level_name}.')
59 |

--------------------------------------------------------------------------------

/src/agent_debugger/pycoworld/extractors.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Pycoworld extractors.
17 |
18 | The extractors act on the node directly, and extract information from it.
19 | """
20 |
21 | import numpy as np
22 | from pycolab import things
23 |
24 | from agent_debugger.src.agent_debugger import node as node_lib
25 | from agent_debugger.src.agent_debugger.pycoworld import types
26 |
27 |
28 | class PycoworldExtractors:
29 |   """Object containing the pycoworld extractors."""
30 |
31 |   # pylint: disable=protected-access
32 |   def get_element_positions(
33 |       self,
34 |       node: node_lib.Node,
35 |       element_id: int,
36 |   ) -> list[types.Position]:
37 |     """Returns the positions of a sprite/drape/background element.
38 |
39 |     Args:
40 |       node: The node to extract the positions from.
41 |       element_id: The identifier of the element. A full list can be found in
42 |         the pycoworld/default_constants.py file.
43 |     """
44 |     engine = node.env_state.current_game
45 |     element_key = chr(element_id)
46 |     if element_key in engine._sprites_and_drapes:
47 |       # If it is a sprite.
48 |       if isinstance(engine._sprites_and_drapes[element_key], things.Sprite):
49 |         return [engine._sprites_and_drapes[element_key].position]
50 |       # Otherwise, it's a drape.
51 |       else:
52 |         curtain = engine._sprites_and_drapes[element_key].curtain
53 |         list_tuples = list(zip(*np.where(curtain)))
54 |         return [types.PycolabPosition(*x) for x in list_tuples]
55 |
56 |     # Last resort: we look at the board and the backdrop.
57 |     board = engine._board.board
58 |     list_tuples = list(zip(*np.where(board == ord(element_key))))
59 |     backdrop = engine._backdrop.curtain
60 |     list_tuples += list(zip(*np.where(backdrop == ord(element_key))))
61 |     return [types.PycolabPosition(*x) for x in list_tuples]
62 |
63 |   def get_backdrop_curtain(self, node: node_lib.Node) -> np.ndarray:
64 |     """Returns the backdrop of a pycolab engine from a node."""
65 |     return node.env_state.current_game._backdrop.curtain
66 |
67 |   # pylint: enable=protected-access
68 |

--------------------------------------------------------------------------------
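The board lookup in `get_element_positions` relies on the idiom `list(zip(*np.where(board == value)))`, which lists the `(row, column)` coordinates of matching cells. A pure-Python equivalent on nested lists, with the hypothetical helper name `find_positions`, may make the behavior easier to see without NumPy:

```python
def find_positions(board, element_id):
  """Returns (row, col) pairs of cells equal to element_id, in row-major order."""
  return [
      (row, col)
      for row, line in enumerate(board)
      for col, value in enumerate(line)
      if value == element_id
  ]


# Toy board using raw tile IDs: 4 is WALL, 0 is FLOOR, 8 is PLAYER.
board = [
    [4, 4, 4],
    [4, 8, 0],
    [4, 0, 4],
]
floor_positions = find_positions(board, element_id=0)
player_positions = find_positions(board, element_id=8)
print(floor_positions, player_positions)
```

The extractor itself additionally converts the ID with `chr`/`ord` and wraps each pair in a `PycolabPosition`; this sketch leaves both steps out.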

/src/impala_agent.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Impala agent class, which follows the DebuggerAgent interface."""
17 |
18 | from typing import Any, Callable
19 |
20 | import dm_env
21 | import haiku as hk
22 | import jax
23 | import jax.numpy as jnp
24 |
25 | from agent_debugger.src.agent_debugger import agent
26 | from agent_debugger.src.agent_debugger import types
27 |
28 |
29 | class ImpalaAgent(agent.DebuggerAgent):
30 |   """Impala agent class."""
31 |
32 |   def __init__(
33 |       self,
34 |       net_factory: Callable[[], hk.RNNCore],
35 |       params: hk.Params,
36 |   ) -> None:
37 |     """Initializes the agent.
38 |
39 |     Args:
40 |       net_factory: Function to create the model.
41 |       params: The parameters of the agent.
42 |     """
43 |     _, self._initial_state = hk.transform(
44 |         lambda batch_size: net_factory().initial_state(batch_size))
45 |
46 |     self._init_fn, apply_fn = hk.without_apply_rng(
47 |         hk.transform(lambda obs, state: net_factory().__call__(obs, state)))
48 |     self._apply_fn = jax.jit(apply_fn)
49 |
50 |     self._params = params
51 |
52 |   def initial_state(self, rng: jnp.ndarray) -> types.AgentState:
53 |     """Returns the agent initial state."""
54 |     # Wrapper method to avoid pytype attribute-error.
55 |     return types.AgentState(
56 |         internal_state=self._initial_state(self._params, rng, batch_size=1),
57 |         seed=rng)
58 |
59 |   def step(
60 |       self,
61 |       timestep: dm_env.TimeStep,
62 |       state: types.AgentState,
63 |   ) -> tuple[types.Action, Any, types.AgentState]:
64 |     """Steps the agent in the environment."""
65 |     net_out, next_state = self._apply_fn(self._params, timestep,
66 |                                          state.internal_state)
67 |
68 |     # Sample an action and return.
69 |     action = hk.multinomial(state.seed, net_out.policy_logits, num_samples=1)
70 |     action = jnp.squeeze(action, axis=-1)
71 |     action = int(action)
72 |
73 |     new_rng, _ = jax.random.split(state.seed)
74 |     new_agent_state = types.AgentState(internal_state=next_state, seed=new_rng)
75 |     return action, net_out, new_agent_state
76 |

--------------------------------------------------------------------------------

/src/pycoworld/default_constants.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Default constants for Pycoworld."""
17 |
18 | import enum
19 |
20 |
21 | class Tile(enum.IntEnum):
22 |   """Available pycoworld tiles with their IDs.
23 |
24 |   This IntEnum is only provided for better readability. There is no guarantee
25 |   that the raw IDs are not used anywhere in the code and no requirement to use
26 |   the enum in cases where the raw IDs are more readable.
27 |   """
28 |   FLOOR = 0
29 |   FLOOR_R = 1
30 |   FLOOR_B = 2
31 |   FLOOR_G = 3
32 |
33 |   WALL = 4
34 |   WALL_R = 5
35 |   WALL_B = 6
36 |   WALL_G = 7
37 |
38 |   PLAYER = 8
39 |   PLAYER_R = 9
40 |   PLAYER_B = 10
41 |   PLAYER_G = 11
42 |
43 |   TERMINAL = 12
44 |   TERMINAL_R = 13
45 |   TERMINAL_B = 14
46 |   TERMINAL_G = 15
47 |
48 |   BLOCK = 16
49 |   BLOCK_R = 17
50 |   BLOCK_B = 18
51 |   BLOCK_G = 19
52 |
53 |   REWARD = 20
54 |   REWARD_R = 21
55 |   REWARD_B = 22
56 |   REWARD_G = 23
57 |
58 |   BIG_REWARD = 24
59 |   BIG_REWARD_R = 25
60 |   BIG_REWARD_B = 26
61 |   BIG_REWARD_G = 27
62 |
63 |   HOLE = 28
64 |   HOLE_R = 29
65 |   HOLE_B = 30
66 |   HOLE_G = 31
67 |
68 |   SAND = 32
69 |   GRASS = 33
70 |   LAVA = 34
71 |   WATER = 35
72 |
73 |   KEY = 36
74 |   KEY_R = 37
75 |   KEY_B = 38
76 |   KEY_G = 39
77 |
78 |   DOOR = 40
79 |   DOOR_R = 41
80 |   DOOR_B = 42
81 |   DOOR_G = 43
82 |
83 |   SENSOR = 44
84 |   SENSOR_R = 45
85 |   SENSOR_B = 46
86 |   SENSOR_G = 47
87 |
88 |   OBJECT = 48
89 |   OBJECT_R = 49
90 |   OBJECT_B = 50
91 |   OBJECT_G = 51
92 |
93 |
94 | # This dict defines which sensor matches which object.
95 | MATCHING_OBJECT_DICT = {
96 |     Tile.SENSOR: Tile.OBJECT,
97 |     Tile.SENSOR_R: Tile.OBJECT_R,
98 |     Tile.SENSOR_G: Tile.OBJECT_G,
99 |     Tile.SENSOR_B: Tile.OBJECT_B,
100 | }
101 |
102 | # Impassable tiles: these tiles cannot be traversed by the agent.
103 | IMPASSABLE = [
104 |     Tile.WALL,
105 |     Tile.WALL_R,
106 |     Tile.WALL_B,
107 |     Tile.WALL_G,
108 |     Tile.BLOCK,
109 |     Tile.BLOCK_R,
110 |     Tile.BLOCK_B,
111 |     Tile.BLOCK_G,
112 |     Tile.DOOR,
113 |     Tile.DOOR_R,
114 |     Tile.DOOR_B,
115 |     Tile.DOOR_G,
116 | ]
117 |
118 | REWARD_LAVA = -10.
119 | REWARD_WATER = -1.
120 | REWARD_SMALL = 1.
121 | REWARD_BIG = 5.
122 |

--------------------------------------------------------------------------------
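Since `Tile` is an `IntEnum`, its members compare equal to the raw integer IDs used in level layouts. The sketch below copies a small subset of the enum so that it runs on its own; `is_passable` and `layout_row` are hypothetical names introduced only for illustration.

```python
import enum


class Tile(enum.IntEnum):
  """Subset of default_constants.Tile, copied for a dependency-free sketch."""
  FLOOR = 0
  WALL = 4
  WALL_R = 5
  PLAYER = 8
  DOOR_R = 41


# Reduced version of the IMPASSABLE list, restricted to the subset above.
IMPASSABLE = [Tile.WALL, Tile.WALL_R, Tile.DOOR_R]


def is_passable(tile_id: int) -> bool:
  """Returns whether a raw tile ID can be traversed by the agent."""
  return tile_id not in IMPASSABLE


# Raw IDs, as they would appear in a level layout array.
layout_row = [4, 0, 8, 41]
names = [Tile(tile_id).name for tile_id in layout_row]
passable = [is_passable(tile_id) for tile_id in layout_row]
print(names, passable)
```

This is why the docstring can leave the choice between raw IDs and enum members to readability: both forms behave identically in comparisons and membership tests.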

/README.md:

--------------------------------------------------------------------------------

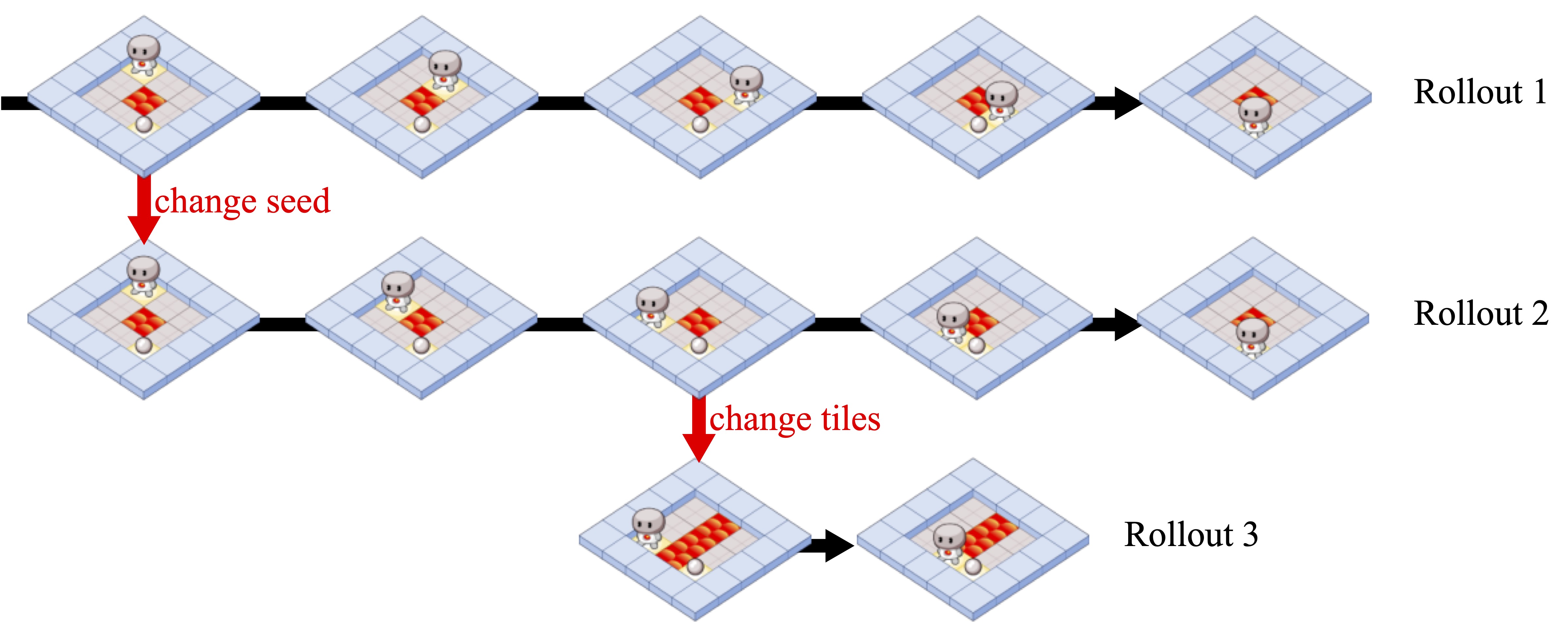

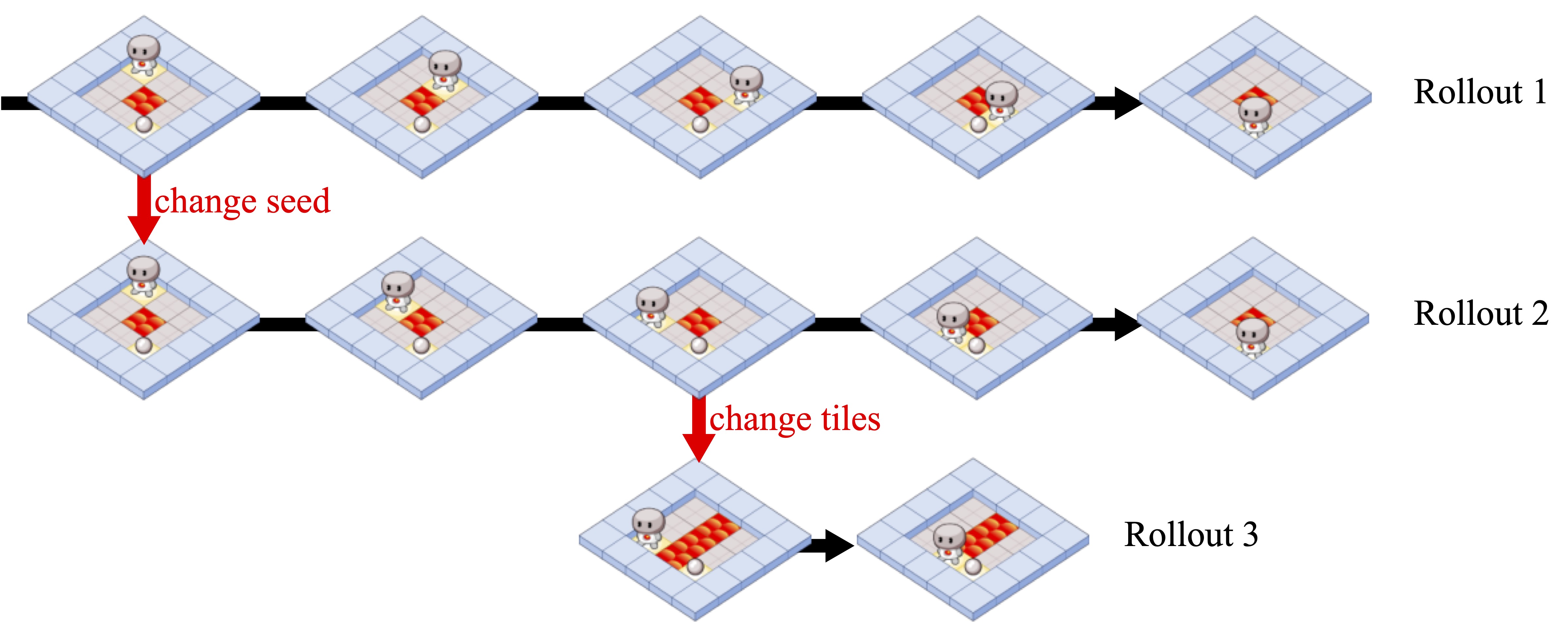

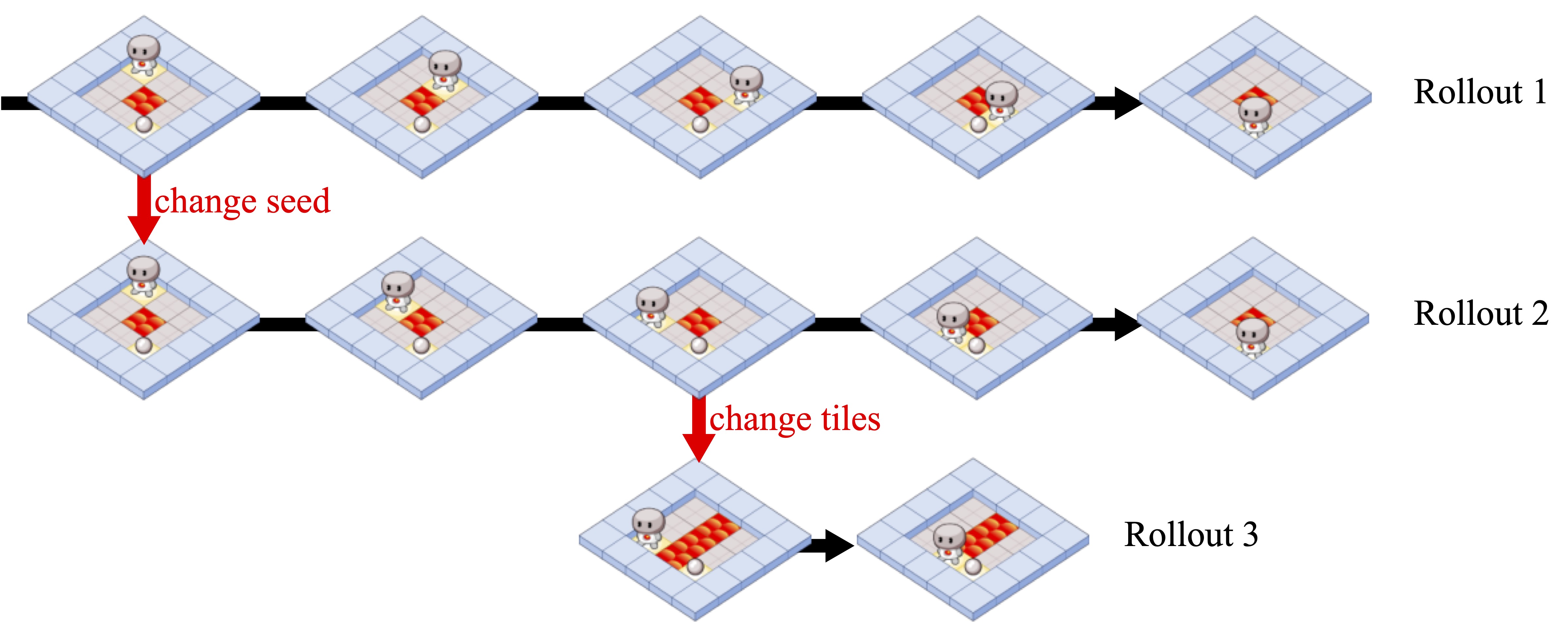

1 | # Causal Analysis of Agent Behavior for AI Safety

2 |

3 |

4 |

5 |

6 |

7 | This repository provides an implementation of our paper [Causal Analysis of Agent Behavior for AI Safety](https://arxiv.org/abs/2103.03938).

8 |

9 | >As machine learning systems become more powerful they also become increasingly unpredictable and opaque.

10 | Yet, finding human-understandable explanations of how they work is essential for their safe deployment.

11 | This technical report illustrates a methodology for investigating the causal mechanisms that drive the behaviour of artificial agents.

12 | Six use cases are covered, each addressing a typical question an analyst might ask about an agent.

13 | In particular, we show that each question cannot be addressed by pure observation alone, but instead requires conducting experiments with systematically chosen manipulations so as to generate the correct causal evidence.

14 |

15 | The main tool is the "Agent Debugger", which can be used to perform causal interventions on the environment to infer the causal model of an agent.

16 | We currently only support the environment Pycoworld, a 2D gridworld based on the open source game engine [Pycolab](https://github.com/deepmind/pycolab).

17 |

18 |

19 | ## Usage

20 |

21 | To reproduce the experiments of the paper, run the [experiments notebook](https://colab.research.google.com/github/deepmind/agent_debugger/blob/master/colabs/experiments.ipynb).

22 |

23 |

24 | ## Citing this work

25 |

26 | ```bibtex

27 | @article{deletang2021causal,

28 | author = {Gr{\'{e}}goire Del{\'{e}}tang and

29 | Jordi Grau{-}Moya and

30 | Miljan Martic and

31 | Tim Genewein and

32 | Tom McGrath and

33 | Vladimir Mikulik and

34 | Markus Kunesch and

35 | Shane Legg and

36 | Pedro A. Ortega},

37 | title = {Causal Analysis of Agent Behavior for {AI} Safety},

38 | journal = {arXiv:2103.03938},

39 | year = {2021},

40 | }

41 | ```

42 |

43 |

44 | ## License and disclaimer

45 |

46 | Copyright 2022 DeepMind Technologies Limited

47 |

48 | All software is licensed under the Apache License, Version 2.0 (Apache 2.0);

49 | you may not use this file except in compliance with the Apache 2.0 license.

50 | You may obtain a copy of the Apache 2.0 license at:

51 | https://www.apache.org/licenses/LICENSE-2.0

52 |

53 | All other materials are licensed under the Creative Commons Attribution 4.0

54 | International License (CC-BY). You may obtain a copy of the CC-BY license at:

55 | https://creativecommons.org/licenses/by/4.0/legalcode

56 |

57 | Unless required by applicable law or agreed to in writing, all software and

58 | materials distributed here under the Apache 2.0 or CC-BY licenses are

59 | distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND,

60 | either express or implied. See the licenses for the specific language governing

61 | permissions and limitations under those licenses.

62 |

63 | This is not an official Google product.

64 |

--------------------------------------------------------------------------------

/src/pycoworld/levels/apples.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Apples level."""

17 |

18 | import numpy as np

19 |

20 | from agent_debugger.src.pycoworld import default_constants

21 | from agent_debugger.src.pycoworld.levels import base_level

22 | from agent_debugger.src.pycoworld.levels import utils

23 |

24 | Tile = default_constants.Tile

25 |

26 |

27 | class ApplesLevel(base_level.PycoworldLevel):

28 | """Level where the goal is to pick up the reward."""

29 |

30 | def __init__(

31 | self,

32 | start_type: str = 'full_room',

33 | height: int = 8,

34 | width: int = 8,

35 | ) -> None:

36 | """Initializes the level.

37 |

38 | Args:

39 | start_type: The type of random initialization: 'full_room' forces random

40 | initialization using all cells in the room, 'corner' forces random

41 | initialization of the player around one corner of the room and the

42 | reward is initialized close to the other corner.

43 | height: Height of the grid.

44 | width: Width of the grid.

45 | """

46 | if start_type not in ['full_room', 'corner']:

47 | raise ValueError('Unrecognised start type.')

48 | self._start_type = start_type

49 | self._height = height

50 | self._width = width

51 |

52 | def foreground_and_background(

53 | self,

54 | rng: np.ndarray,

55 | ) -> tuple[np.ndarray, np.ndarray]:

56 | """See base class."""

57 | foreground = utils.room(self._height, self._width)

58 |

59 | # Sample player's and reward's initial position in the interior of the room.

60 | if self._start_type == 'full_room':

61 | player_pos, reward_pos = utils.sample_positions(

62 | rng,

63 | height_range=(1, self._height - 1),

64 | width_range=(1, self._width - 1),

65 | number_samples=2,

66 | replace=False)

67 | elif self._start_type == 'corner':

68 | # Sample positions in the top left quadrant for the player

69 | player_pos = utils.sample_positions(

70 | rng,

71 | height_range=(1, self._height // 2),

72 | width_range=(1, self._width // 2),

73 | number_samples=1)[0]

74 | # Sample positions in the bottom right quadrant for the reward

75 | reward_pos = utils.sample_positions(

76 | rng,

77 | height_range=((self._height + 1) // 2, self._height - 1),

78 | width_range=((self._width + 1) // 2, self._width - 1),

79 | number_samples=1)[0]

80 |

81 | foreground[player_pos] = Tile.PLAYER

82 | foreground[reward_pos] = Tile.REWARD

83 |

84 | background = np.full_like(foreground, Tile.FLOOR)

85 | background[reward_pos] = Tile.TERMINAL_R

86 | return foreground, background

87 |

--------------------------------------------------------------------------------

/src/pycoworld/levels/key_door.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Key Door level.

17 |

18 | In this level there is a key and a door. Behind the door there is a reward. The

19 | agent must learn to collect the key, open the door and obtain the reward.

20 | """

21 |

22 | import jax

23 | import jax.numpy as jnp

24 | import numpy as np

25 |

26 | from agent_debugger.src.pycoworld import default_constants

27 | from agent_debugger.src.pycoworld.levels import base_level

28 | from agent_debugger.src.pycoworld.levels import utils

29 |

30 | _VALID_DOORS = ['random', 'closed', 'open']

31 |

32 |

33 | class KeyDoorLevel(base_level.PycoworldLevel):

34 | """Level in which the agent has to unlock a door to collect the reward."""

35 |

36 | def __init__(self, door_type: str = 'random') -> None:

37 | """Initializes the level.

38 |

39 | Args:

40 | door_type: The way we sample the door state (open or closed). Must be in

41 | {'random', 'closed', 'open'}, otherwise a ValueError is raised.

42 | """

43 | if door_type not in _VALID_DOORS:

44 | raise ValueError(

45 | f'Argument door_type has an incorrect value. Expected one '

46 | f'of {_VALID_DOORS}, but got {door_type} instead.')

47 | self._door_type = door_type

48 |

49 | def foreground_and_background(

50 | self,

51 | rng: jnp.ndarray,

52 | ) -> tuple[np.ndarray, np.ndarray]:

53 | """Returns a tuple with the level foreground and background.

54 |

55 | We first determine the state of the door and use the tile associated with

56 | it (closed and open doors don't have the same id).

57 | Then, we sample the random player and key positions and add their tiles to

58 | the board. The background is full of floor tiles and a terminal event

59 | beneath the reward tile, so that the episode is terminated when the agent

60 | gets the reward.

61 |

62 | Args:

63 | rng: The JAX random key used to sample the door state and the positions.

64 | """

65 | if self._door_type == 'closed':

66 | door_state = 'closed'

67 | elif self._door_type == 'open':

68 | door_state = 'open'

69 | elif self._door_type == 'random':

70 | rng, rng1 = jax.random.split(rng)

71 | if jax.random.uniform(rng1) < 0.5:

72 | door_state = 'open'

73 | else:

74 | door_state = 'closed'

75 |

76 | foreground = np.array([[4, 4, 4, 4, 4, 0, 0], [4, 0, 0, 0, 4, 0, 0],

77 | [4, 0, 0, 0, 4, 4, 4], [4, 0, 0, 0, 0, 20, 4],

78 | [4, 4, 4, 4, 4, 4, 4]])

79 |

80 | if door_state == 'closed':

81 | foreground[3, 4] = default_constants.Tile.DOOR_R

82 |

83 | player_pos, key_pos = utils.sample_positions(

84 | rng,

85 | height_range=(1, 4),

86 | width_range=(1, 4),

87 | number_samples=2,

88 | replace=False)

89 |

90 | foreground[player_pos] = default_constants.Tile.PLAYER

91 | foreground[key_pos] = default_constants.Tile.KEY_R

92 |

93 | background = np.zeros(foreground.shape, dtype=int)

94 | background[3, 5] = default_constants.Tile.TERMINAL_R

95 | return foreground, background

96 |

--------------------------------------------------------------------------------

/src/pycoworld/levels/utils.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Utilities for constructing pycoworld levels."""

17 |

18 | from typing import Optional, Sequence

19 |

20 | import chex

21 | import jax

22 | import numpy as np

23 |

24 | from agent_debugger.src.pycoworld import default_constants

25 |

26 | Tile = default_constants.Tile

27 |

28 | # A 2d slice for masking out the walls of a room.

29 | _INTERIOR = (slice(1, -1),) * 2

30 |

31 |

32 | def room(

33 | height: int,

34 | width: int,

35 | floor_tile: Tile = Tile.FLOOR,

36 | wall_tile: Tile = Tile.WALL,

37 | ) -> np.ndarray:

38 | """Returns a pycoworld representation of a room (floor surrounded by walls).

39 |

40 | Args:

41 | height: Height of the environment.

42 | width: Width of the environment.

43 | floor_tile: Tile to use for the floor.

44 | wall_tile: Tile to use for the wall.

45 |

46 | Returns:

47 | The array representation of the room.

48 | """

49 | room_array = np.full([height, width], wall_tile, dtype=np.uint8)

50 | room_array[_INTERIOR] = floor_tile

51 | return room_array

52 |

53 |

54 | def sample_positions(

55 | rng: np.ndarray,

56 | height_range: tuple[int, int],

57 | width_range: tuple[int, int],

58 | number_samples: int,

59 | replace: bool = True,

60 | exclude: Optional[np.ndarray] = None,

61 | ) -> Sequence[tuple[int, int]]:

62 | """Uniformly samples random positions in a 2d grid.

63 |

64 | Args:

65 | rng: Jax random key to use for sampling.

66 | height_range: Range (min, 1+max) in the height of the grid to sample from.

67 | width_range: Range (min, 1+max) in the width of the grid to sample from.

68 | number_samples: Number of positions to sample.

69 | replace: Whether to sample with replacement.

70 | exclude: Array of positions to exclude from the sampling. Each row of the

71 | array holds the (row, column) coordinates of one position.

72 |

73 | Returns:

74 | A sequence of (row, column) index tuples which can be used to index a 2d numpy array.

75 |

76 | Raises:

77 | ValueError: if more positions are requested than are available when sampling

78 | without replacement.

79 | """

80 | height = height_range[1] - height_range[0]

81 | width = width_range[1] - width_range[0]

82 | offset = np.array([height_range[0], width_range[0]])

83 |

84 | choices = np.arange(height * width)

85 | if exclude is not None:

86 | exclude = np.asarray(exclude) # Allow the user to pass any array-like type.

87 | chex.assert_shape(exclude, (None, 2))

88 |

89 | exclude_offset = exclude - offset

90 | exclude_indices = np.ravel_multi_index(exclude_offset.T, (height, width))

91 | mask = np.ones_like(choices, dtype=bool)

92 | mask[exclude_indices] = False

93 | choices = choices[mask]

94 |

95 | flat_indices = jax.random.choice(

96 | rng, choices, (number_samples,), replace=replace)

97 | positions_offset = np.unravel_index(flat_indices, (height, width))

98 | positions = positions_offset + offset[:, np.newaxis]

99 | return list(zip(*positions))

100 |

--------------------------------------------------------------------------------

/src/impala_net.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Neural network used in Impala trained agents."""

17 |

18 | from typing import NamedTuple, Optional, Sequence, Union

19 |

20 | import dm_env

21 | import haiku as hk

22 | import jax.nn

23 | import jax.numpy as jnp

24 |

25 | NetState = Union[jnp.ndarray, hk.LSTMState]

26 |

27 |

28 | # Kept as a NamedTuple as jax does not support dataclasses.

29 | class NetOutput(NamedTuple):

30 | """Network outputs: policy logits and value estimate."""

31 | policy_logits: jnp.ndarray

32 | value: jnp.ndarray

33 |

34 |

35 | class RecurrentConvNet(hk.RNNCore):

36 | """A class for Impala nets.

37 |

38 | Architecture:

39 | Conv net -> MLP torso -> LSTM -> MLP head -> (linear policy logits, linear value)

40 |

41 | If initialised with lstm_width=0 or None, skips the LSTM layer.

42 | """

43 |

44 | def __init__(

45 | self,

46 | num_actions: int,

47 | conv_widths: Sequence[int],

48 | conv_kernels: Sequence[int],

49 | padding: str,

50 | torso_widths: Sequence[int],

51 | lstm_width: Optional[int],

52 | head_widths: Sequence[int],

53 | name: Optional[str] = None,

54 | ) -> None:

55 | """Initializes the impala net."""

56 | super().__init__(name=name)

57 | self._num_actions = num_actions

58 | self._torso_widths = torso_widths

59 | self._head_widths = head_widths

60 | self._core = hk.LSTM(lstm_width) if lstm_width else None

61 |

62 | conv_layers = []

63 | for width, kernel_size in zip(conv_widths, conv_kernels):

64 | layer = hk.Conv2D(

65 | width, kernel_shape=[kernel_size, kernel_size], padding=padding)

66 | conv_layers += [layer, jax.nn.relu]

67 | self._conv_net = hk.Sequential(conv_layers + [hk.Flatten()])

68 |

69 | def initial_state(self, batch_size: int) -> NetState:

70 | """Returns a fresh hidden state for the LSTM core."""

71 | return self._core.initial_state(batch_size) if self._core else jnp.zeros(())

72 |

73 | def __call__(

74 | self,

75 | x: dm_env.TimeStep,

76 | state: NetState,

77 | ) -> tuple[NetOutput, NetState]:

78 | """Steps the net, applying a forward pass of the neural network."""

79 | # Apply torso.

80 | observation = x.observation['board'].astype(dtype=jnp.float32) / 255

81 | observation = jnp.expand_dims(observation, axis=0)

82 | output = self._torso(observation)

83 |

84 | if self._core is not None:

85 | output, state = self._core(output, state)

86 |

87 | policy_logits, value = self._head(output)

88 | return NetOutput(policy_logits=policy_logits[0], value=value[0]), state

89 |

90 | def _head(self, activations: jnp.ndarray) -> tuple[jnp.ndarray, jnp.ndarray]:

91 | """Returns the policy logits and value after applying the head network."""

92 | pre_outputs = hk.nets.MLP(self._head_widths)(activations)

93 | policy_logits = hk.Linear(self._num_actions)(pre_outputs)

94 | value = hk.Linear(1)(pre_outputs)

95 | value = jnp.squeeze(value, axis=-1)

96 | return policy_logits, value

97 |

98 | def _torso(self, inputs: jnp.ndarray) -> jnp.ndarray:

99 | """Returns activations after applying the torso to the inputs."""

100 | return hk.Sequential([self._conv_net,

101 | hk.nets.MLP(self._torso_widths)])(

102 | inputs)

103 |

--------------------------------------------------------------------------------

/src/agent_debugger/default_interventions.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Base interventions for the debugger."""

17 |

18 | import copy

19 | import dataclasses

20 | from typing import Union

21 |

22 | import jax

23 |

24 | from agent_debugger.src.agent_debugger import node as node_lib

25 | from agent_debugger.src.agent_debugger import types

26 | from agent_debugger.src.pycoworld import serializable_environment

27 |

28 |

29 | class DefaultInterventions:

30 | """Object containing the default debugger interventions."""

31 |

32 | def __init__(

33 | self,

34 | env: serializable_environment.SerializableEnvironment,

35 | ) -> None:

36 | """Initializes the default interventions object.

37 |

38 | Args:

39 | env: The environment, needed to reset after an environment seed intervention.

40 | """

41 | self._env = env

42 |

43 | def change_agent_seed(

44 | self,

45 | node: node_lib.Node,

46 | seed: Union[int, types.Rng],

47 | ) -> node_lib.Node:

48 | """Intervenes to replace the agent seed, used for acting.

49 |

50 | Args:

51 | node: The node to intervene on.

52 | seed: The new seed to set in the agent state of the node. Can be an int or

53 | a jax.random.PRNGKey.

54 |

55 | Returns:

56 | A new node, whose agent seed has been updated.

57 | """

58 | if isinstance(seed, int):

59 | seed = jax.random.PRNGKey(seed)

60 | new_agent_state = node.agent_state._replace(seed=seed)

61 | return dataclasses.replace(node, agent_state=new_agent_state)

62 |

63 | def change_env_seed(

64 | self,

65 | node: node_lib.Node,

66 | seed: int,

67 | ) -> node_lib.Node:

68 | """Intervenes to replace the seed of the environment.

69 |

70 | The state of the environment must contain an attribute 'seed' to be able to

71 | use this intervention.

72 |

73 | Args:

74 | node: The node to intervene on.

75 | seed: The new seed to set in the environment state of the node.

76 |

77 | Returns:

78 | A new node, whose environment state has been modified.

79 | """

80 | # Create the new state.

81 | state = copy.deepcopy(node.env_state)

82 | state.seed = seed

83 | self._env.set_state(state)

84 |

85 | new_timestep = self._env.reset()

86 | new_state = self._env.get_state()

87 | return dataclasses.replace(

88 | node, env_state=new_state, last_timestep=new_timestep)

89 |

90 | def change_agent_next_actions(

91 | self,

92 | node: node_lib.Node,

93 | forced_next_actions: list[types.Action],

94 | ) -> node_lib.Node:

95 | """Changes the next actions of the agent at a given node.

96 |

97 | This intervention allows the user to change the N next actions of the agent,

98 | not only the next one. When stepping the debugger from this node, the list

99 | for the next node is updated by removing the first action (since it has

100 | just been executed).

101 |

102 | Args:

103 | node: The node to intervene on.

104 | forced_next_actions: The next actions to be taken by the agent.

105 |

106 | Returns:

107 | A new node, which, when stepped from, will force the N next actions taken

108 | by the agent.

109 | """

110 | return dataclasses.replace(node, forced_next_actions=forced_next_actions)

111 |

--------------------------------------------------------------------------------

/src/pycoworld/levels/red_green_apples.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Red-Green Apples level.

17 |

18 | In this level there are two rooms, each containing two apples, one is red and

19 | the other is green. On each episode only one of the rooms is opened (and the

20 | other is closed by a door) and the agent's goal is to enter the opened room

21 | and reach the green apple.

22 | """

23 |

24 | from typing import Any, MutableMapping

25 |

26 | import jax

27 | import numpy as np

28 | from pycolab import engine

29 | from pycolab import things as plab_things

30 |

31 | from agent_debugger.src.pycoworld import default_sprites_and_drapes

32 | from agent_debugger.src.pycoworld.levels import base_level

33 |

34 |

35 | class RedGreenApplesLevel(base_level.PycoworldLevel):

36 | """Red-green apples level."""

37 |

38 | def __init__(self, red_lover: bool = False):

39 | """Initializes the level.

40 |

41 | Args:

42 | red_lover: Whether the red apple gives a positive or a negative reward.

43 | True means a positive reward.

44 | """

45 | super().__init__()

46 | self._red_lover = red_lover

47 |

48 | def foreground_and_background(

49 | self,

50 | rng: np.ndarray,

51 | ) -> tuple[np.ndarray, np.ndarray]:

52 | """See base class."""

53 | if jax.random.uniform(rng) < 0.5:

54 | # Room on the left is open.

55 | foreground = np.array([[4, 4, 4, 4, 4, 4, 4], [4, 0, 21, 4, 21, 0, 4],

56 | [4, 0, 23, 4, 23, 0, 4], [4, 0, 4, 4, 4, 42, 4],

57 | [4, 0, 0, 8, 0, 0, 4], [4, 4, 4, 4, 4, 4, 4]])

58 | else:

59 | # Room on the right is open.

60 | foreground = np.array([[4, 4, 4, 4, 4, 4, 4], [4, 0, 21, 4, 21, 0, 4],

61 | [4, 0, 23, 4, 23, 0, 4], [4, 42, 4, 4, 4, 0, 4],

62 | [4, 0, 0, 8, 0, 0, 4], [4, 4, 4, 4, 4, 4, 4]])

63 |

64 | background = np.array([

65 | [0, 0, 0, 0, 0, 0, 0],

66 | [0, 0, 13, 0, 13, 0, 0],

67 | [0, 0, 13, 0, 13, 0, 0],

68 | [0, 0, 0, 0, 0, 0, 0],

69 | [0, 0, 0, 0, 0, 0, 0],

70 | [0, 0, 0, 0, 0, 0, 0],

71 | ])

72 | return foreground, background

73 |

74 | def sample_game(self, rng: np.ndarray) -> engine.Engine:

75 | """See base class."""

76 | foreground, background = self.foreground_and_background(rng)

77 | drapes = base_level.default_drapes()

78 | if self._red_lover:

79 | drapes[chr(23)] = BadAppleDrape

80 | drapes[chr(21)] = default_sprites_and_drapes.RewardDrape

81 | else:

82 | drapes[chr(21)] = BadAppleDrape

83 | drapes[chr(23)] = default_sprites_and_drapes.RewardDrape

84 | return base_level.make_pycolab_engine(foreground, background, drapes=drapes)

85 |

86 |

87 | class BadAppleDrape(plab_things.Drape):

88 | """A drape for a bad apple.

89 |

90 | Collecting the bad apple punishes the agent with a negative reward of -1.

91 | """

92 |

93 | def update(

94 | self,

95 | actions: int,

96 | board: np.ndarray,

97 | layers: MutableMapping[str, np.ndarray],

98 | backdrop: np.ndarray,

99 | things: MutableMapping[str, Any],

100 | the_plot: engine.plot.Plot

101 | ) -> None:

102 | ypos, xpos = things[chr(8)].position # Get agent's position.

103 |

104 | # If the agent is in the same position as the bad apple give -1 reward and

105 | # consume the bad apple.

106 | if self.curtain[ypos, xpos]:

107 | the_plot.add_reward(-1.)

108 |

109 | # Remove bad apple.

110 | self.curtain[ypos, xpos] = False

111 |

--------------------------------------------------------------------------------

/src/pycoworld/levels/grass_sand.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Grass sand level."""

17 | from typing import Optional

18 |

19 | import jax

20 | import numpy as np

21 |

22 | from agent_debugger.src.pycoworld import default_constants

23 | from agent_debugger.src.pycoworld.levels import base_level

24 |

25 | Tile = default_constants.Tile

26 |

27 |

28 | class GrassSandLevel(base_level.PycoworldLevel):

29 | """The grass sand level.

30 |

31 | The goal position causally depends on the type of world. Similarly, the floor

32 | tiles (grass or sand) also causally depend on the type of world. Therefore,

33 | type of floor and reward position are correlated, but there is no causal link

34 | between them.

35 | """

36 |

37 | def __init__(

38 | self,

39 | corr: float = 1.0,

40 | above: Optional[np.ndarray] = None,

41 | below: Optional[np.ndarray] = None,

42 | reward_pos: Optional[tuple[np.ndarray, np.ndarray]] = None,

43 | double_terminal_event: Optional[bool] = False,

44 | ) -> None:

45 | """Initializes the level.

46 |

47 | Args:

48 | corr: "correlation" between reward position and world. 1.0 means that the

49 | reward position will always be on "north" for sand world and on "south"

50 | for grass world. A value of 0.5 corresponds to fully randomized position

51 | independent of the world.

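52 | above: the foreground map to use. Defaults to a built-in map.

53 | below: the background map to use. Defaults to all floor tiles.

54 | reward_pos: the (top, bottom) reward positions. Defaults to (1, 4) and (5, 4).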

55 | double_terminal_event: whether to have a terminal event on both sides of

56 | the maze or only beneath the reward.

57 | """

58 | if not 0.0 <= corr <= 1.0:

59 | raise ValueError('corr variable must be a float between 0.0 and 1.0')

60 |

61 | self._corr = corr

62 | if above is None:

63 | above = np.array([[0, 0, 0, 4, 4, 4], [4, 4, 4, 4, 99, 4],

64 | [4, 4, 4, 4, 99, 4], [4, 8, 99, 99, 99, 4],

65 | [4, 4, 4, 4, 99, 4], [4, 4, 4, 4, 99, 4],

66 | [0, 0, 0, 4, 4, 4]])

67 | self._above = above

68 | if below is None:

69 | below = np.full_like(above, Tile.FLOOR)

70 | self._below = below

71 |

72 | if reward_pos is None:

73 | self._reward_pos_top = np.array([1, 4])

74 | self._reward_pos_bottom = np.array([5, 4])

75 | else:

76 | self._reward_pos_top, self._reward_pos_bottom = reward_pos

77 |

78 | self._double_terminal_event = double_terminal_event

79 |

80 | def foreground_and_background(

81 | self,

82 | rng: np.ndarray,

83 | ) -> tuple[np.ndarray, np.ndarray]:

84 | """See base class."""

85 | rng1, rng2 = jax.random.split(rng, 2)

86 | # Do not modify the base maps during sampling

87 | sampled_above = self._above.copy()

88 | sampled_below = self._below.copy()

89 |

90 | # Select world.

91 | if jax.random.uniform(rng1) < 0.5:

92 | world = 'sand'

93 | floor_type = Tile.SAND

94 | else:

95 | world = 'grass'

96 | floor_type = Tile.GRASS

97 |

98 | # Sample reward location depending on corr variable.

99 | if jax.random.uniform(rng2) <= self._corr:

100 | # Standard reward position for both worlds.

101 | if world == 'sand':

102 | used_reward_pos = self._reward_pos_top

103 | elif world == 'grass':

104 | used_reward_pos = self._reward_pos_bottom

105 | else:

106 | # Alternative reward position for both worlds.

107 | if world == 'sand':

108 | used_reward_pos = self._reward_pos_bottom

109 | elif world == 'grass':

110 | used_reward_pos = self._reward_pos_top

111 |

112 | if self._double_terminal_event:

113 | # Put terminal events everywhere first.

114 | sampled_above[self._reward_pos_top[0],

115 | self._reward_pos_top[1]] = Tile.TERMINAL_R

116 | sampled_above[self._reward_pos_bottom[0],

117 | self._reward_pos_bottom[1]] = Tile.TERMINAL_R

118 | # Draw the reward ball.

119 | sampled_above[used_reward_pos[0], used_reward_pos[1]] = Tile.REWARD

120 |

121 | # Substitute tiles with code 99 with the corresponding floor_type code.

122 | floor_tiles = np.argwhere(sampled_above == 99)

123 | for tile in floor_tiles:

124 | sampled_above[tile[0], tile[1]] = floor_type

125 |

126 | if self._double_terminal_event:

127 | # Add terminal events on both sides to the below.

128 | sampled_below[self._reward_pos_top[0],

129 | self._reward_pos_top[1]] = Tile.TERMINAL_R

130 | sampled_below[self._reward_pos_bottom[0],

131 | self._reward_pos_bottom[1]] = Tile.TERMINAL_R

132 | else:

133 | sampled_below[used_reward_pos[0], used_reward_pos[1]] = Tile.TERMINAL_R

134 |

135 | return sampled_above, sampled_below

136 |

--------------------------------------------------------------------------------

/src/pycoworld/levels/base_level.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and

13 | # limitations under the License.

14 | # ==============================================================================

15 |

16 | """Level config."""

17 |

18 | import abc

19 | from typing import Any, Mapping, Optional, Sequence, Union

20 |

21 | import numpy as np

22 | from pycolab import ascii_art

23 | from pycolab import engine

24 | from pycolab import things as pycolab_things

25 |

26 | from agent_debugger.src.pycoworld import default_constants

27 | from agent_debugger.src.pycoworld import default_sprites_and_drapes

28 |

29 | _ACTIONS = range(4)

30 | Tile = default_constants.Tile

31 |

32 |

33 | def default_sprites() -> dict[str, Any]:

34 | """Returns the default mapping of characters to sprites in the levels."""

35 | return {chr(Tile.PLAYER): default_sprites_and_drapes.PlayerSprite}

36 |

37 |

38 | def default_drapes() -> dict[str, Any]:

39 | """Returns the default mapping of characters to drapes in the levels."""

40 | return {

41 | chr(Tile.WALL): default_sprites_and_drapes.WallDrape,

42 | chr(Tile.WALL_R): default_sprites_and_drapes.WallDrape,

43 | chr(Tile.WALL_B): default_sprites_and_drapes.WallDrape,

44 | chr(Tile.WALL_G): default_sprites_and_drapes.WallDrape,

45 | chr(Tile.BLOCK): default_sprites_and_drapes.BoxDrape,

46 | chr(Tile.BLOCK_R): default_sprites_and_drapes.BoxDrape,

47 | chr(Tile.BLOCK_B): default_sprites_and_drapes.BoxDrape,

48 | chr(Tile.BLOCK_G): default_sprites_and_drapes.BoxDrape,

49 | chr(Tile.REWARD): default_sprites_and_drapes.RewardDrape,

50 | chr(Tile.REWARD_R): default_sprites_and_drapes.RewardDrape,

51 | chr(Tile.REWARD_B): default_sprites_and_drapes.RewardDrape,

52 | chr(Tile.REWARD_G): default_sprites_and_drapes.RewardDrape,

53 | chr(Tile.BIG_REWARD): default_sprites_and_drapes.BigRewardDrape,

54 | chr(Tile.BIG_REWARD_R): default_sprites_and_drapes.BigRewardDrape,

55 | chr(Tile.BIG_REWARD_B): default_sprites_and_drapes.BigRewardDrape,

56 | chr(Tile.BIG_REWARD_G): default_sprites_and_drapes.BigRewardDrape,

57 | chr(Tile.KEY): default_sprites_and_drapes.ObjectDrape,

58 | chr(Tile.KEY_R): default_sprites_and_drapes.ObjectDrape,

59 | chr(Tile.KEY_B): default_sprites_and_drapes.ObjectDrape,

60 | chr(Tile.KEY_G): default_sprites_and_drapes.ObjectDrape,

61 | chr(Tile.DOOR): default_sprites_and_drapes.DoorDrape,

62 | chr(Tile.DOOR_R): default_sprites_and_drapes.DoorDrape,

63 | chr(Tile.DOOR_B): default_sprites_and_drapes.DoorDrape,

64 | chr(Tile.DOOR_G): default_sprites_and_drapes.DoorDrape,

65 | chr(Tile.OBJECT): default_sprites_and_drapes.ObjectDrape,

66 | chr(Tile.OBJECT_R): default_sprites_and_drapes.ObjectDrape,

67 | chr(Tile.OBJECT_B): default_sprites_and_drapes.ObjectDrape,

68 | chr(Tile.OBJECT_G): default_sprites_and_drapes.ObjectDrape,

69 | }

70 |

71 |

72 | def default_schedule() -> list[str]:

73 | """Returns the default update schedule of sprites and drapes in the levels."""

74 | return [

75 | chr(Tile.PLAYER), # PlayerSprite

76 | chr(Tile.WALL),

77 | chr(Tile.WALL_R),

78 | chr(Tile.WALL_B),

79 | chr(Tile.WALL_G),

80 | chr(Tile.REWARD),

81 | chr(Tile.REWARD_R),

82 | chr(Tile.REWARD_B),

83 | chr(Tile.REWARD_G),

84 | chr(Tile.BIG_REWARD),

85 | chr(Tile.BIG_REWARD_R),

86 | chr(Tile.BIG_REWARD_B),

87 | chr(Tile.BIG_REWARD_G),

88 | chr(Tile.KEY),

89 | chr(Tile.KEY_R),

90 | chr(Tile.KEY_B),

91 | chr(Tile.KEY_G),

92 | chr(Tile.OBJECT),

93 | chr(Tile.OBJECT_R),

94 | chr(Tile.OBJECT_B),

95 | chr(Tile.OBJECT_G),

96 | chr(Tile.DOOR),

97 | chr(Tile.DOOR_R),

98 | chr(Tile.DOOR_B),

99 | chr(Tile.DOOR_G),

100 | chr(Tile.BLOCK),

101 | chr(Tile.BLOCK_R),

102 | chr(Tile.BLOCK_B),

103 | chr(Tile.BLOCK_G),

104 | ]

105 |

106 |

107 | def _numpy_to_str(array: np.ndarray) -> list[str]:

108 | """Converts numpy array into a list of strings.

109 |

110 | Args:

111 | array: a 2-D np.ndarray of np.uint8.

112 |

113 | Returns:

114 | A list of equal-length strings, one per row of `array`.

115 | """

116 | return [''.join(map(chr, row.tolist())) for row in array]

117 |

118 |

119 | def make_pycolab_engine(

120 | foreground: np.ndarray,

121 | background: Union[np.ndarray, int],

122 | sprites: Optional[Mapping[str, pycolab_things.Sprite]] = None,

123 | drapes: Optional[Mapping[str, pycolab_things.Drape]] = None,

124 | update_schedule: Optional[Sequence[str]] = None,

125 | rng: Optional[Any] = None,

126 | ) -> engine.Engine:

127 | """Builds and returns a pycoworld game engine.

128 |

129 | Args:

130 | foreground: Array of foreground tiles.

131 | background: Array of background tiles or a single tile to use as the

132 | background everywhere.

133 | sprites: Pycolab sprites. See pycolab.ascii_art.ascii_art_to_game for more

134 | information.

135 | drapes: Pycolab drapes.

136 | update_schedule: Update schedule for sprites and drapes.

137 | rng: Random key to use for pycolab.

138 |

139 | Returns:

140 | A pycolab engine with the pycoworld game.

141 | """

142 | sprites = sprites if sprites is not None else default_sprites()

143 | drapes = drapes if drapes is not None else default_drapes()

144 | if update_schedule is None:

145 | update_schedule = default_schedule()

146 |

147 | # The pycolab engine constructor requires arrays of strings

148 | above_str = _numpy_to_str(foreground)

149 | below_str = _numpy_to_str(background) if isinstance(background, np.ndarray) else chr(background)

150 |

151 | pycolab_engine = ascii_art.ascii_art_to_game(

152 | above_str, below_str, sprites, drapes, update_schedule=update_schedule)

153 | # Pycolab does not allow setting a global seed in the engine constructor.

154 | # Therefore, we have to set it manually.

155 | pycolab_engine._the_plot['rng'] = rng # pylint: disable=protected-access

156 | return pycolab_engine

157 |

158 |

159 | class PycoworldLevel(abc.ABC):

160 | """Abstract class representing a pycoworld level.

161 |

162 | A pycoworld level captures all the data that is required to define the level

163 | and implements a function that returns a pycolab game engine.

164 | """

165 |

166 | @abc.abstractmethod

167 | def foreground_and_background(

168 | self,

169 | rng: np.ndarray,

170 | ) -> tuple[np.ndarray, np.ndarray]:

171 | """Generates the foreground and background arrays of the level."""

172 |

173 | def sample_game(self, rng: np.ndarray) -> engine.Engine:

174 | """Samples and returns a game from this level.

175 |

176 | A level may contain random elements (e.g. a random goal location). This

177 | function samples and returns a particular game from this level. The base

178 | version calls foreground_and_background and constructs a pycolab engine

179 | using the arrays. To use custom sprites, drapes, or an update schedule,

180 | override this method and use make_pycolab_engine to create the

181 | engine.

182 |

183 | Args:

184 | rng: Random key to use for sampling.

185 |

186 | Returns:

187 | A pycolab game engine for the sampled game.

188 | """

189 | foreground, background = self.foreground_and_background(rng)

190 | return make_pycolab_engine(foreground, background)

191 |

192 | @property

193 | def actions(self) -> Sequence[int]:

194 | return _ACTIONS

195 |
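
The tile-to-ASCII conversion used by `make_pycolab_engine` can be illustrated without pycolab or numpy. The sketch below is not part of the repository: `rows_to_strings` and `background_to_art` are hypothetical helpers, the tile codes are made up for illustration (the real ones live in `default_constants.Tile`), and nested lists stand in for the `np.uint8` arrays. It also assumes that a single-tile background can be handed on as a one-character string.

```python
from typing import Sequence, Union

# Hypothetical tile codes for illustration only; the real values are defined
# in default_constants.Tile.
FLOOR, WALL, PLAYER = ord(' '), ord('#'), ord('P')


def rows_to_strings(grid: Sequence[Sequence[int]]) -> list[str]:
  """Maps every tile code to its character and joins each row into a string."""
  return [''.join(map(chr, row)) for row in grid]


def background_to_art(
    background: Union[Sequence[Sequence[int]], int],
) -> Union[list[str], str]:
  """Handles both a full background grid and a single background tile."""
  if isinstance(background, int):
    # Assumption: a single tile is passed on as a one-character string.
    return chr(background)
  return rows_to_strings(background)


foreground = [
    [WALL, WALL, WALL],
    [WALL, PLAYER, WALL],
    [WALL, WALL, WALL],
]
art = rows_to_strings(foreground)
print('\n'.join(art))
```

Each row of tile codes becomes one string, so every string has the same length as the grid is wide, which is the shape an ASCII-art based engine constructor expects.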

--------------------------------------------------------------------------------

/src/agent_debugger/debugger.py:

--------------------------------------------------------------------------------

1 | # Copyright 2023 DeepMind Technologies Limited

2 | #

3 | # Licensed under the Apache License, Version 2.0 (the "License");

4 | # you may not use this file except in compliance with the License.

5 | # You may obtain a copy of the License at

6 | #

7 | # http://www.apache.org/licenses/LICENSE-2.0

8 | #

9 | # Unless required by applicable law or agreed to in writing, software

10 | # distributed under the License is distributed on an "AS IS" BASIS,

11 | # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

12 | # See the License for the specific language governing permissions and