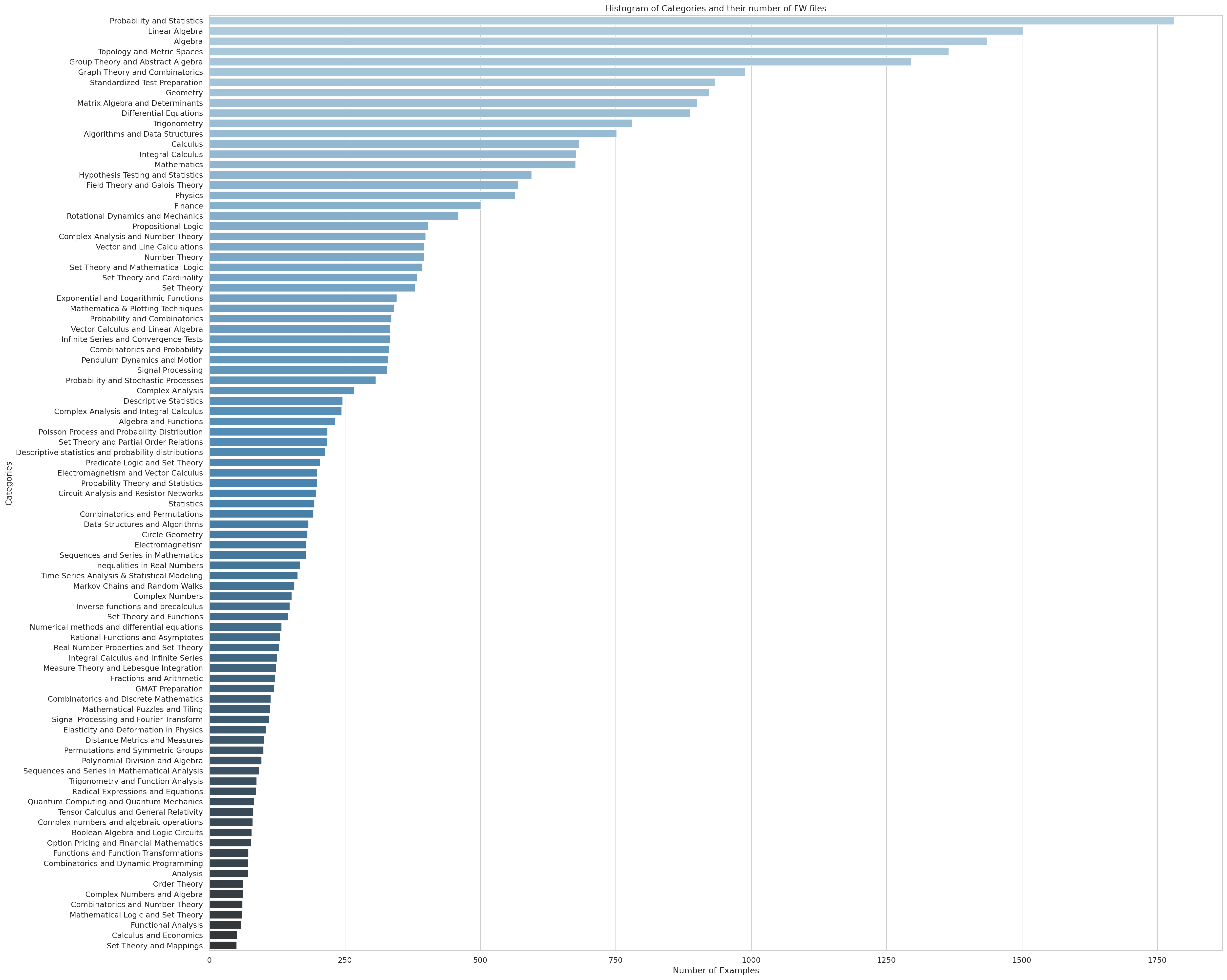

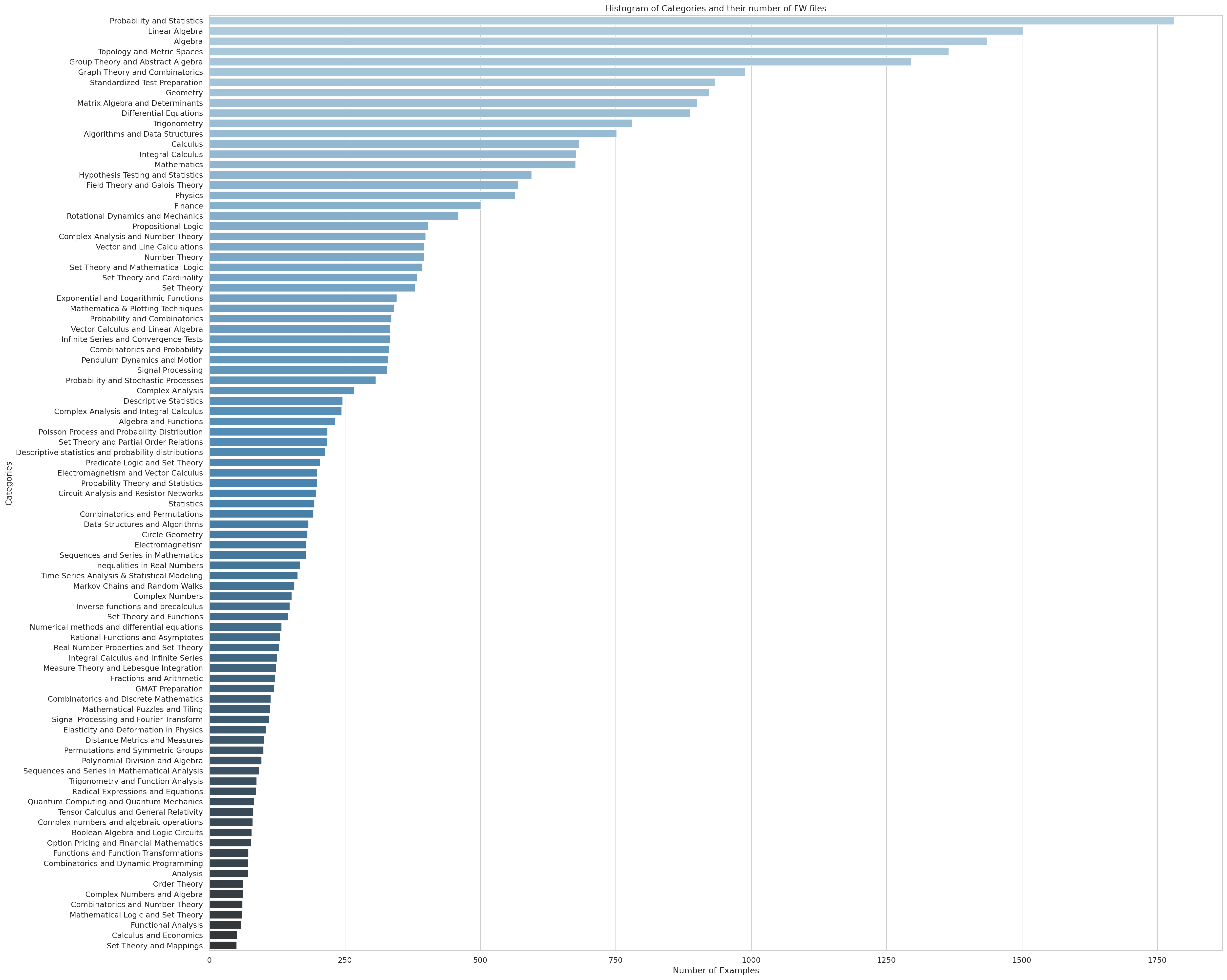

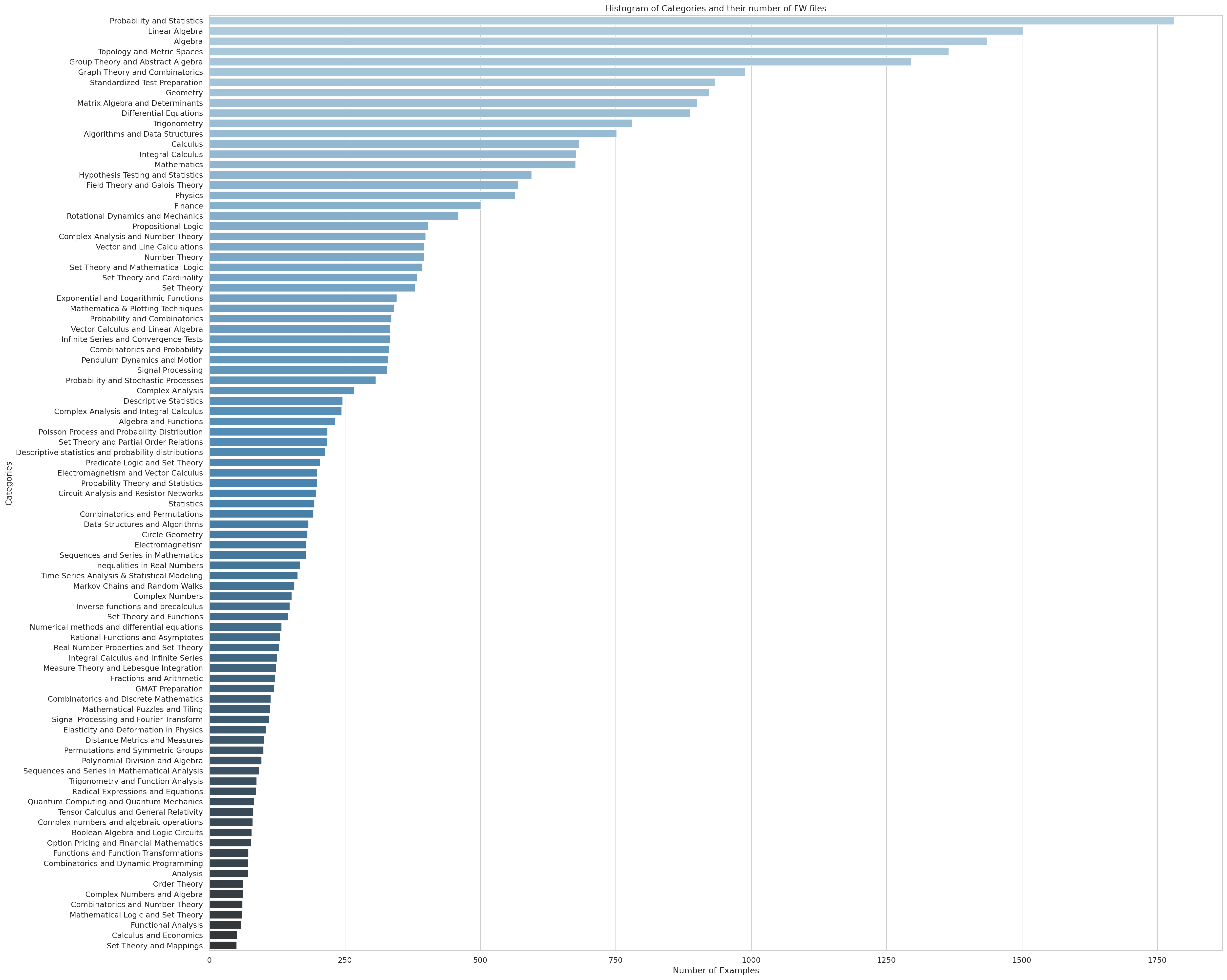

The clusters of AutoMathText
