├── .gitignore
├── .travis.yml
├── LICENSE
├── Makefile
├── README.md
├── requirements.txt
├── resources
│   ├── TokenQuery_example_1.png
│   ├── Token_query_logo.png
│   └── TokenrRegex_logo.png
├── setup.cfg
├── setup.py
└── tokenquery
    ├── __init__.py
    ├── acceptors
    │   ├── __init__.py
    │   ├── core
    │   │   ├── __init__.py
    │   │   ├── date_opr.py
    │   │   ├── int_opr.py
    │   │   ├── string_opr.py
    │   │   ├── vector_opr.py
    │   │   └── web_opr.py
    │   └── extended
    │       └── __init__.py
    ├── models
    │   ├── __init__.py
    │   ├── chunk.py
    │   ├── fsa.py
    │   ├── stack.py
    │   └── token.py
    ├── nlp
    │   ├── __init__.py
    │   ├── google_nlp_api.py
    │   ├── importer.py
    │   ├── pos_tagger.py
    │   └── tokenizer.py
    ├── tests
    │   ├── __init__.py
    │   ├── acceptors
    │   │   └── core
    │   │       ├── int_opr_test.py
    │   │       ├── string_opr_test.py
    │   │       ├── vector_opr_test.py
    │   │       └── web_opr_test.py
    │   ├── models
    │   │   ├── fsa_test.py
    │   │   ├── stack_test.py
    │   │   └── token_test.py
    │   ├── nlp
    │   │   ├── data
    │   │   │   └── test.conllu
    │   │   ├── importer_test.py
    │   │   ├── pos_tagger_test.py
    │   │   └── tokenizer_test.py
    │   └── tokenquery_test.py
    └── tokenquery.py

/.gitignore:

--------------------------------------------------------------------------------

1 | *.DS_Store

2 |

3 | # Byte-compiled / optimized / DLL files

4 | __pycache__/

5 | *.py[cod]

6 | *$py.class

7 |

8 | # C extensions

9 | *.so

10 |

11 | # Distribution / packaging

12 | .Python

13 | env/

14 | build/

15 | develop-eggs/

16 | dist/

17 | downloads/

18 | eggs/

19 | .eggs/

20 | lib/

21 | lib64/

22 | parts/

23 | sdist/

24 | var/

25 | *.egg-info/

26 | .installed.cfg

27 | *.egg

28 |

29 | # PyInstaller

30 | # Usually these files are written by a python script from a template

31 | # before PyInstaller builds the exe, so as to inject date/other infos into it.

32 | *.manifest

33 | *.spec

34 |

35 | # Installer logs

36 | pip-log.txt

37 | pip-delete-this-directory.txt

38 |

39 | # Unit test / coverage reports

40 | htmlcov/

41 | .tox/

42 | .coverage

43 | .coverage.*

44 | .cache

45 | nosetests.xml

46 | coverage.xml

47 | *,cover

48 | .hypothesis/

49 |

50 | # Translations

51 | *.mo

52 | *.pot

53 |

54 | # Django stuff:

55 | *.log

56 | local_settings.py

57 |

58 | # Flask stuff:

59 | instance/

60 | .webassets-cache

61 |

62 | # Scrapy stuff:

63 | .scrapy

64 |

65 | # Sphinx documentation

66 | docs/_build/

67 |

68 | # PyBuilder

69 | target/

70 |

71 | # IPython Notebook

72 | .ipynb_checkpoints

73 |

74 | # pyenv

75 | .python-version

76 |

77 | # celery beat schedule file

78 | celerybeat-schedule

79 |

80 | # dotenv

81 | .env

82 |

83 | # virtualenv

84 | venv/

85 | ENV/

86 |

87 | # Spyder project settings

88 | .spyderproject

89 |

90 | # Rope project settings

91 | .ropeproject

92 |

--------------------------------------------------------------------------------

/.travis.yml:

--------------------------------------------------------------------------------

1 | language: python

2 | python:

3 | - "3.4"

4 | # command to install dependencies

5 | install:

6 | - pip install -r requirements.txt

7 | # command to run tests

8 | script: make test

9 |

--------------------------------------------------------------------------------

/LICENSE:

--------------------------------------------------------------------------------

1 | GNU GENERAL PUBLIC LICENSE

2 | Version 3, 29 June 2007

3 |

4 | Copyright (C) 2007 Free Software Foundation, Inc.

5 | Everyone is permitted to copy and distribute verbatim copies

6 | of this license document, but changing it is not allowed.

7 |

8 | Preamble

9 |

10 | The GNU General Public License is a free, copyleft license for

11 | software and other kinds of works.

12 |

13 | The licenses for most software and other practical works are designed

14 | to take away your freedom to share and change the works. By contrast,

15 | the GNU General Public License is intended to guarantee your freedom to

16 | share and change all versions of a program--to make sure it remains free

17 | software for all its users. We, the Free Software Foundation, use the

18 | GNU General Public License for most of our software; it applies also to

19 | any other work released this way by its authors. You can apply it to

20 | your programs, too.

21 |

22 | When we speak of free software, we are referring to freedom, not

23 | price. Our General Public Licenses are designed to make sure that you

24 | have the freedom to distribute copies of free software (and charge for

25 | them if you wish), that you receive source code or can get it if you

26 | want it, that you can change the software or use pieces of it in new

27 | free programs, and that you know you can do these things.

28 |

29 | To protect your rights, we need to prevent others from denying you

30 | these rights or asking you to surrender the rights. Therefore, you have

31 | certain responsibilities if you distribute copies of the software, or if

32 | you modify it: responsibilities to respect the freedom of others.

33 |

34 | For example, if you distribute copies of such a program, whether

35 | gratis or for a fee, you must pass on to the recipients the same

36 | freedoms that you received. You must make sure that they, too, receive

37 | or can get the source code. And you must show them these terms so they

38 | know their rights.

39 |

40 | Developers that use the GNU GPL protect your rights with two steps:

41 | (1) assert copyright on the software, and (2) offer you this License

42 | giving you legal permission to copy, distribute and/or modify it.

43 |

44 | For the developers' and authors' protection, the GPL clearly explains

45 | that there is no warranty for this free software. For both users' and

46 | authors' sake, the GPL requires that modified versions be marked as

47 | changed, so that their problems will not be attributed erroneously to

48 | authors of previous versions.

49 |

50 | Some devices are designed to deny users access to install or run

51 | modified versions of the software inside them, although the manufacturer

52 | can do so. This is fundamentally incompatible with the aim of

53 | protecting users' freedom to change the software. The systematic

54 | pattern of such abuse occurs in the area of products for individuals to

55 | use, which is precisely where it is most unacceptable. Therefore, we

56 | have designed this version of the GPL to prohibit the practice for those

57 | products. If such problems arise substantially in other domains, we

58 | stand ready to extend this provision to those domains in future versions

59 | of the GPL, as needed to protect the freedom of users.

60 |

61 | Finally, every program is threatened constantly by software patents.

62 | States should not allow patents to restrict development and use of

63 | software on general-purpose computers, but in those that do, we wish to

64 | avoid the special danger that patents applied to a free program could

65 | make it effectively proprietary. To prevent this, the GPL assures that

66 | patents cannot be used to render the program non-free.

67 |

68 | The precise terms and conditions for copying, distribution and

69 | modification follow.

70 |

71 | TERMS AND CONDITIONS

72 |

73 | 0. Definitions.

74 |

75 | "This License" refers to version 3 of the GNU General Public License.

76 |

77 | "Copyright" also means copyright-like laws that apply to other kinds of

78 | works, such as semiconductor masks.

79 |

80 | "The Program" refers to any copyrightable work licensed under this

81 | License. Each licensee is addressed as "you". "Licensees" and

82 | "recipients" may be individuals or organizations.

83 |

84 | To "modify" a work means to copy from or adapt all or part of the work

85 | in a fashion requiring copyright permission, other than the making of an

86 | exact copy. The resulting work is called a "modified version" of the

87 | earlier work or a work "based on" the earlier work.

88 |

89 | A "covered work" means either the unmodified Program or a work based

90 | on the Program.

91 |

92 | To "propagate" a work means to do anything with it that, without

93 | permission, would make you directly or secondarily liable for

94 | infringement under applicable copyright law, except executing it on a

95 | computer or modifying a private copy. Propagation includes copying,

96 | distribution (with or without modification), making available to the

97 | public, and in some countries other activities as well.

98 |

99 | To "convey" a work means any kind of propagation that enables other

100 | parties to make or receive copies. Mere interaction with a user through

101 | a computer network, with no transfer of a copy, is not conveying.

102 |

103 | An interactive user interface displays "Appropriate Legal Notices"

104 | to the extent that it includes a convenient and prominently visible

105 | feature that (1) displays an appropriate copyright notice, and (2)

106 | tells the user that there is no warranty for the work (except to the

107 | extent that warranties are provided), that licensees may convey the

108 | work under this License, and how to view a copy of this License. If

109 | the interface presents a list of user commands or options, such as a

110 | menu, a prominent item in the list meets this criterion.

111 |

112 | 1. Source Code.

113 |

114 | The "source code" for a work means the preferred form of the work

115 | for making modifications to it. "Object code" means any non-source

116 | form of a work.

117 |

118 | A "Standard Interface" means an interface that either is an official

119 | standard defined by a recognized standards body, or, in the case of

120 | interfaces specified for a particular programming language, one that

121 | is widely used among developers working in that language.

122 |

123 | The "System Libraries" of an executable work include anything, other

124 | than the work as a whole, that (a) is included in the normal form of

125 | packaging a Major Component, but which is not part of that Major

126 | Component, and (b) serves only to enable use of the work with that

127 | Major Component, or to implement a Standard Interface for which an

128 | implementation is available to the public in source code form. A

129 | "Major Component", in this context, means a major essential component

130 | (kernel, window system, and so on) of the specific operating system

131 | (if any) on which the executable work runs, or a compiler used to

132 | produce the work, or an object code interpreter used to run it.

133 |

134 | The "Corresponding Source" for a work in object code form means all

135 | the source code needed to generate, install, and (for an executable

136 | work) run the object code and to modify the work, including scripts to

137 | control those activities. However, it does not include the work's

138 | System Libraries, or general-purpose tools or generally available free

139 | programs which are used unmodified in performing those activities but

140 | which are not part of the work. For example, Corresponding Source

141 | includes interface definition files associated with source files for

142 | the work, and the source code for shared libraries and dynamically

143 | linked subprograms that the work is specifically designed to require,

144 | such as by intimate data communication or control flow between those

145 | subprograms and other parts of the work.

146 |

147 | The Corresponding Source need not include anything that users

148 | can regenerate automatically from other parts of the Corresponding

149 | Source.

150 |

151 | The Corresponding Source for a work in source code form is that

152 | same work.

153 |

154 | 2. Basic Permissions.

155 |

156 | All rights granted under this License are granted for the term of

157 | copyright on the Program, and are irrevocable provided the stated

158 | conditions are met. This License explicitly affirms your unlimited

159 | permission to run the unmodified Program. The output from running a

160 | covered work is covered by this License only if the output, given its

161 | content, constitutes a covered work. This License acknowledges your

162 | rights of fair use or other equivalent, as provided by copyright law.

163 |

164 | You may make, run and propagate covered works that you do not

165 | convey, without conditions so long as your license otherwise remains

166 | in force. You may convey covered works to others for the sole purpose

167 | of having them make modifications exclusively for you, or provide you

168 | with facilities for running those works, provided that you comply with

169 | the terms of this License in conveying all material for which you do

170 | not control copyright. Those thus making or running the covered works

171 | for you must do so exclusively on your behalf, under your direction

172 | and control, on terms that prohibit them from making any copies of

173 | your copyrighted material outside their relationship with you.

174 |

175 | Conveying under any other circumstances is permitted solely under

176 | the conditions stated below. Sublicensing is not allowed; section 10

177 | makes it unnecessary.

178 |

179 | 3. Protecting Users' Legal Rights From Anti-Circumvention Law.

180 |

181 | No covered work shall be deemed part of an effective technological

182 | measure under any applicable law fulfilling obligations under article

183 | 11 of the WIPO copyright treaty adopted on 20 December 1996, or

184 | similar laws prohibiting or restricting circumvention of such

185 | measures.

186 |

187 | When you convey a covered work, you waive any legal power to forbid

188 | circumvention of technological measures to the extent such circumvention

189 | is effected by exercising rights under this License with respect to

190 | the covered work, and you disclaim any intention to limit operation or

191 | modification of the work as a means of enforcing, against the work's

192 | users, your or third parties' legal rights to forbid circumvention of

193 | technological measures.

194 |

195 | 4. Conveying Verbatim Copies.

196 |

197 | You may convey verbatim copies of the Program's source code as you

198 | receive it, in any medium, provided that you conspicuously and

199 | appropriately publish on each copy an appropriate copyright notice;

200 | keep intact all notices stating that this License and any

201 | non-permissive terms added in accord with section 7 apply to the code;

202 | keep intact all notices of the absence of any warranty; and give all

203 | recipients a copy of this License along with the Program.

204 |

205 | You may charge any price or no price for each copy that you convey,

206 | and you may offer support or warranty protection for a fee.

207 |

208 | 5. Conveying Modified Source Versions.

209 |

210 | You may convey a work based on the Program, or the modifications to

211 | produce it from the Program, in the form of source code under the

212 | terms of section 4, provided that you also meet all of these conditions:

213 |

214 | a) The work must carry prominent notices stating that you modified

215 | it, and giving a relevant date.

216 |

217 | b) The work must carry prominent notices stating that it is

218 | released under this License and any conditions added under section

219 | 7. This requirement modifies the requirement in section 4 to

220 | "keep intact all notices".

221 |

222 | c) You must license the entire work, as a whole, under this

223 | License to anyone who comes into possession of a copy. This

224 | License will therefore apply, along with any applicable section 7

225 | additional terms, to the whole of the work, and all its parts,

226 | regardless of how they are packaged. This License gives no

227 | permission to license the work in any other way, but it does not

228 | invalidate such permission if you have separately received it.

229 |

230 | d) If the work has interactive user interfaces, each must display

231 | Appropriate Legal Notices; however, if the Program has interactive

232 | interfaces that do not display Appropriate Legal Notices, your

233 | work need not make them do so.

234 |

235 | A compilation of a covered work with other separate and independent

236 | works, which are not by their nature extensions of the covered work,

237 | and which are not combined with it such as to form a larger program,

238 | in or on a volume of a storage or distribution medium, is called an

239 | "aggregate" if the compilation and its resulting copyright are not

240 | used to limit the access or legal rights of the compilation's users

241 | beyond what the individual works permit. Inclusion of a covered work

242 | in an aggregate does not cause this License to apply to the other

243 | parts of the aggregate.

244 |

245 | 6. Conveying Non-Source Forms.

246 |

247 | You may convey a covered work in object code form under the terms

248 | of sections 4 and 5, provided that you also convey the

249 | machine-readable Corresponding Source under the terms of this License,

250 | in one of these ways:

251 |

252 | a) Convey the object code in, or embodied in, a physical product

253 | (including a physical distribution medium), accompanied by the

254 | Corresponding Source fixed on a durable physical medium

255 | customarily used for software interchange.

256 |

257 | b) Convey the object code in, or embodied in, a physical product

258 | (including a physical distribution medium), accompanied by a

259 | written offer, valid for at least three years and valid for as

260 | long as you offer spare parts or customer support for that product

261 | model, to give anyone who possesses the object code either (1) a

262 | copy of the Corresponding Source for all the software in the

263 | product that is covered by this License, on a durable physical

264 | medium customarily used for software interchange, for a price no

265 | more than your reasonable cost of physically performing this

266 | conveying of source, or (2) access to copy the

267 | Corresponding Source from a network server at no charge.

268 |

269 | c) Convey individual copies of the object code with a copy of the

270 | written offer to provide the Corresponding Source. This

271 | alternative is allowed only occasionally and noncommercially, and

272 | only if you received the object code with such an offer, in accord

273 | with subsection 6b.

274 |

275 | d) Convey the object code by offering access from a designated

276 | place (gratis or for a charge), and offer equivalent access to the

277 | Corresponding Source in the same way through the same place at no

278 | further charge. You need not require recipients to copy the

279 | Corresponding Source along with the object code. If the place to

280 | copy the object code is a network server, the Corresponding Source

281 | may be on a different server (operated by you or a third party)

282 | that supports equivalent copying facilities, provided you maintain

283 | clear directions next to the object code saying where to find the

284 | Corresponding Source. Regardless of what server hosts the

285 | Corresponding Source, you remain obligated to ensure that it is

286 | available for as long as needed to satisfy these requirements.

287 |

288 | e) Convey the object code using peer-to-peer transmission, provided

289 | you inform other peers where the object code and Corresponding

290 | Source of the work are being offered to the general public at no

291 | charge under subsection 6d.

292 |

293 | A separable portion of the object code, whose source code is excluded

294 | from the Corresponding Source as a System Library, need not be

295 | included in conveying the object code work.

296 |

297 | A "User Product" is either (1) a "consumer product", which means any

298 | tangible personal property which is normally used for personal, family,

299 | or household purposes, or (2) anything designed or sold for incorporation

300 | into a dwelling. In determining whether a product is a consumer product,

301 | doubtful cases shall be resolved in favor of coverage. For a particular

302 | product received by a particular user, "normally used" refers to a

303 | typical or common use of that class of product, regardless of the status

304 | of the particular user or of the way in which the particular user

305 | actually uses, or expects or is expected to use, the product. A product

306 | is a consumer product regardless of whether the product has substantial

307 | commercial, industrial or non-consumer uses, unless such uses represent

308 | the only significant mode of use of the product.

309 |

310 | "Installation Information" for a User Product means any methods,

311 | procedures, authorization keys, or other information required to install

312 | and execute modified versions of a covered work in that User Product from

313 | a modified version of its Corresponding Source. The information must

314 | suffice to ensure that the continued functioning of the modified object

315 | code is in no case prevented or interfered with solely because

316 | modification has been made.

317 |

318 | If you convey an object code work under this section in, or with, or

319 | specifically for use in, a User Product, and the conveying occurs as

320 | part of a transaction in which the right of possession and use of the

321 | User Product is transferred to the recipient in perpetuity or for a

322 | fixed term (regardless of how the transaction is characterized), the

323 | Corresponding Source conveyed under this section must be accompanied

324 | by the Installation Information. But this requirement does not apply

325 | if neither you nor any third party retains the ability to install

326 | modified object code on the User Product (for example, the work has

327 | been installed in ROM).

328 |

329 | The requirement to provide Installation Information does not include a

330 | requirement to continue to provide support service, warranty, or updates

331 | for a work that has been modified or installed by the recipient, or for

332 | the User Product in which it has been modified or installed. Access to a

333 | network may be denied when the modification itself materially and

334 | adversely affects the operation of the network or violates the rules and

335 | protocols for communication across the network.

336 |

337 | Corresponding Source conveyed, and Installation Information provided,

338 | in accord with this section must be in a format that is publicly

339 | documented (and with an implementation available to the public in

340 | source code form), and must require no special password or key for

341 | unpacking, reading or copying.

342 |

343 | 7. Additional Terms.

344 |

345 | "Additional permissions" are terms that supplement the terms of this

346 | License by making exceptions from one or more of its conditions.

347 | Additional permissions that are applicable to the entire Program shall

348 | be treated as though they were included in this License, to the extent

349 | that they are valid under applicable law. If additional permissions

350 | apply only to part of the Program, that part may be used separately

351 | under those permissions, but the entire Program remains governed by

352 | this License without regard to the additional permissions.

353 |

354 | When you convey a copy of a covered work, you may at your option

355 | remove any additional permissions from that copy, or from any part of

356 | it. (Additional permissions may be written to require their own

357 | removal in certain cases when you modify the work.) You may place

358 | additional permissions on material, added by you to a covered work,

359 | for which you have or can give appropriate copyright permission.

360 |

361 | Notwithstanding any other provision of this License, for material you

362 | add to a covered work, you may (if authorized by the copyright holders of

363 | that material) supplement the terms of this License with terms:

364 |

365 | a) Disclaiming warranty or limiting liability differently from the

366 | terms of sections 15 and 16 of this License; or

367 |

368 | b) Requiring preservation of specified reasonable legal notices or

369 | author attributions in that material or in the Appropriate Legal

370 | Notices displayed by works containing it; or

371 |

372 | c) Prohibiting misrepresentation of the origin of that material, or

373 | requiring that modified versions of such material be marked in

374 | reasonable ways as different from the original version; or

375 |

376 | d) Limiting the use for publicity purposes of names of licensors or

377 | authors of the material; or

378 |

379 | e) Declining to grant rights under trademark law for use of some

380 | trade names, trademarks, or service marks; or

381 |

382 | f) Requiring indemnification of licensors and authors of that

383 | material by anyone who conveys the material (or modified versions of

384 | it) with contractual assumptions of liability to the recipient, for

385 | any liability that these contractual assumptions directly impose on

386 | those licensors and authors.

387 |

388 | All other non-permissive additional terms are considered "further

389 | restrictions" within the meaning of section 10. If the Program as you

390 | received it, or any part of it, contains a notice stating that it is

391 | governed by this License along with a term that is a further

392 | restriction, you may remove that term. If a license document contains

393 | a further restriction but permits relicensing or conveying under this

394 | License, you may add to a covered work material governed by the terms

395 | of that license document, provided that the further restriction does

396 | not survive such relicensing or conveying.

397 |

398 | If you add terms to a covered work in accord with this section, you

399 | must place, in the relevant source files, a statement of the

400 | additional terms that apply to those files, or a notice indicating

401 | where to find the applicable terms.

402 |

403 | Additional terms, permissive or non-permissive, may be stated in the

404 | form of a separately written license, or stated as exceptions;

405 | the above requirements apply either way.

406 |

407 | 8. Termination.

408 |

409 | You may not propagate or modify a covered work except as expressly

410 | provided under this License. Any attempt otherwise to propagate or

411 | modify it is void, and will automatically terminate your rights under

412 | this License (including any patent licenses granted under the third

413 | paragraph of section 11).

414 |

415 | However, if you cease all violation of this License, then your

416 | license from a particular copyright holder is reinstated (a)

417 | provisionally, unless and until the copyright holder explicitly and

418 | finally terminates your license, and (b) permanently, if the copyright

419 | holder fails to notify you of the violation by some reasonable means

420 | prior to 60 days after the cessation.

421 |

422 | Moreover, your license from a particular copyright holder is

423 | reinstated permanently if the copyright holder notifies you of the

424 | violation by some reasonable means, this is the first time you have

425 | received notice of violation of this License (for any work) from that

426 | copyright holder, and you cure the violation prior to 30 days after

427 | your receipt of the notice.

428 |

429 | Termination of your rights under this section does not terminate the

430 | licenses of parties who have received copies or rights from you under

431 | this License. If your rights have been terminated and not permanently

432 | reinstated, you do not qualify to receive new licenses for the same

433 | material under section 10.

434 |

435 | 9. Acceptance Not Required for Having Copies.

436 |

437 | You are not required to accept this License in order to receive or

438 | run a copy of the Program. Ancillary propagation of a covered work

439 | occurring solely as a consequence of using peer-to-peer transmission

440 | to receive a copy likewise does not require acceptance. However,

441 | nothing other than this License grants you permission to propagate or

442 | modify any covered work. These actions infringe copyright if you do

443 | not accept this License. Therefore, by modifying or propagating a

444 | covered work, you indicate your acceptance of this License to do so.

445 |

446 | 10. Automatic Licensing of Downstream Recipients.

447 |

448 | Each time you convey a covered work, the recipient automatically

449 | receives a license from the original licensors, to run, modify and

450 | propagate that work, subject to this License. You are not responsible

451 | for enforcing compliance by third parties with this License.

452 |

453 | An "entity transaction" is a transaction transferring control of an

454 | organization, or substantially all assets of one, or subdividing an

455 | organization, or merging organizations. If propagation of a covered

456 | work results from an entity transaction, each party to that

457 | transaction who receives a copy of the work also receives whatever

458 | licenses to the work the party's predecessor in interest had or could

459 | give under the previous paragraph, plus a right to possession of the

460 | Corresponding Source of the work from the predecessor in interest, if

461 | the predecessor has it or can get it with reasonable efforts.

462 |

463 | You may not impose any further restrictions on the exercise of the

464 | rights granted or affirmed under this License. For example, you may

465 | not impose a license fee, royalty, or other charge for exercise of

466 | rights granted under this License, and you may not initiate litigation

467 | (including a cross-claim or counterclaim in a lawsuit) alleging that

468 | any patent claim is infringed by making, using, selling, offering for

469 | sale, or importing the Program or any portion of it.

470 |

471 | 11. Patents.

472 |

473 | A "contributor" is a copyright holder who authorizes use under this

474 | License of the Program or a work on which the Program is based. The

475 | work thus licensed is called the contributor's "contributor version".

476 |

477 | A contributor's "essential patent claims" are all patent claims

478 | owned or controlled by the contributor, whether already acquired or

479 | hereafter acquired, that would be infringed by some manner, permitted

480 | by this License, of making, using, or selling its contributor version,

481 | but do not include claims that would be infringed only as a

482 | consequence of further modification of the contributor version. For

483 | purposes of this definition, "control" includes the right to grant

484 | patent sublicenses in a manner consistent with the requirements of

485 | this License.

486 |

487 | Each contributor grants you a non-exclusive, worldwide, royalty-free

488 | patent license under the contributor's essential patent claims, to

489 | make, use, sell, offer for sale, import and otherwise run, modify and

490 | propagate the contents of its contributor version.

491 |

492 | In the following three paragraphs, a "patent license" is any express

493 | agreement or commitment, however denominated, not to enforce a patent

494 | (such as an express permission to practice a patent or covenant not to

495 | sue for patent infringement). To "grant" such a patent license to a

496 | party means to make such an agreement or commitment not to enforce a

497 | patent against the party.

498 |

499 | If you convey a covered work, knowingly relying on a patent license,

500 | and the Corresponding Source of the work is not available for anyone

501 | to copy, free of charge and under the terms of this License, through a

502 | publicly available network server or other readily accessible means,

503 | then you must either (1) cause the Corresponding Source to be so

504 | available, or (2) arrange to deprive yourself of the benefit of the

505 | patent license for this particular work, or (3) arrange, in a manner

506 | consistent with the requirements of this License, to extend the patent

507 | license to downstream recipients. "Knowingly relying" means you have

508 | actual knowledge that, but for the patent license, your conveying the

509 | covered work in a country, or your recipient's use of the covered work

510 | in a country, would infringe one or more identifiable patents in that

511 | country that you have reason to believe are valid.

512 |

513 | If, pursuant to or in connection with a single transaction or

514 | arrangement, you convey, or propagate by procuring conveyance of, a

515 | covered work, and grant a patent license to some of the parties

516 | receiving the covered work authorizing them to use, propagate, modify

517 | or convey a specific copy of the covered work, then the patent license

518 | you grant is automatically extended to all recipients of the covered

519 | work and works based on it.

520 |

521 | A patent license is "discriminatory" if it does not include within

522 | the scope of its coverage, prohibits the exercise of, or is

523 | conditioned on the non-exercise of one or more of the rights that are

524 | specifically granted under this License. You may not convey a covered

525 | work if you are a party to an arrangement with a third party that is

526 | in the business of distributing software, under which you make payment

527 | to the third party based on the extent of your activity of conveying

528 | the work, and under which the third party grants, to any of the

529 | parties who would receive the covered work from you, a discriminatory

530 | patent license (a) in connection with copies of the covered work

531 | conveyed by you (or copies made from those copies), or (b) primarily

532 | for and in connection with specific products or compilations that

533 | contain the covered work, unless you entered into that arrangement,

534 | or that patent license was granted, prior to 28 March 2007.

535 |

536 | Nothing in this License shall be construed as excluding or limiting

537 | any implied license or other defenses to infringement that may

538 | otherwise be available to you under applicable patent law.

539 |

540 | 12. No Surrender of Others' Freedom.

541 |

542 | If conditions are imposed on you (whether by court order, agreement or

543 | otherwise) that contradict the conditions of this License, they do not

544 | excuse you from the conditions of this License. If you cannot convey a

545 | covered work so as to satisfy simultaneously your obligations under this

546 | License and any other pertinent obligations, then as a consequence you may

547 | not convey it at all. For example, if you agree to terms that obligate you

548 | to collect a royalty for further conveying from those to whom you convey

549 | the Program, the only way you could satisfy both those terms and this

550 | License would be to refrain entirely from conveying the Program.

551 |

552 | 13. Use with the GNU Affero General Public License.

553 |

554 | Notwithstanding any other provision of this License, you have

555 | permission to link or combine any covered work with a work licensed

556 | under version 3 of the GNU Affero General Public License into a single

557 | combined work, and to convey the resulting work. The terms of this

558 | License will continue to apply to the part which is the covered work,

559 | but the special requirements of the GNU Affero General Public License,

560 | section 13, concerning interaction through a network will apply to the

561 | combination as such.

562 |

563 | 14. Revised Versions of this License.

564 |

565 | The Free Software Foundation may publish revised and/or new versions of

566 | the GNU General Public License from time to time. Such new versions will

567 | be similar in spirit to the present version, but may differ in detail to

568 | address new problems or concerns.

569 |

570 | Each version is given a distinguishing version number. If the

571 | Program specifies that a certain numbered version of the GNU General

572 | Public License "or any later version" applies to it, you have the

573 | option of following the terms and conditions either of that numbered

574 | version or of any later version published by the Free Software

575 | Foundation. If the Program does not specify a version number of the

576 | GNU General Public License, you may choose any version ever published

577 | by the Free Software Foundation.

578 |

579 | If the Program specifies that a proxy can decide which future

580 | versions of the GNU General Public License can be used, that proxy's

581 | public statement of acceptance of a version permanently authorizes you

582 | to choose that version for the Program.

583 |

584 | Later license versions may give you additional or different

585 | permissions. However, no additional obligations are imposed on any

586 | author or copyright holder as a result of your choosing to follow a

587 | later version.

588 |

589 | 15. Disclaimer of Warranty.

590 |

591 | THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

592 | APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

593 | HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

594 | OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

595 | THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

596 | PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

597 | IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

598 | ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

599 |

600 | 16. Limitation of Liability.

601 |

602 | IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

603 | WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

604 | THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

605 | GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

606 | USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

607 | DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

608 | PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

609 | EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

610 | SUCH DAMAGES.

611 |

612 | 17. Interpretation of Sections 15 and 16.

613 |

614 | If the disclaimer of warranty and limitation of liability provided

615 | above cannot be given local legal effect according to their terms,

616 | reviewing courts shall apply local law that most closely approximates

617 | an absolute waiver of all civil liability in connection with the

618 | Program, unless a warranty or assumption of liability accompanies a

619 | copy of the Program in return for a fee.

620 |

621 | END OF TERMS AND CONDITIONS

622 |

623 | How to Apply These Terms to Your New Programs

624 |

625 | If you develop a new program, and you want it to be of the greatest

626 | possible use to the public, the best way to achieve this is to make it

627 | free software which everyone can redistribute and change under these terms.

628 |

629 | To do so, attach the following notices to the program. It is safest

630 | to attach them to the start of each source file to most effectively

631 | state the exclusion of warranty; and each file should have at least

632 | the "copyright" line and a pointer to where the full notice is found.

633 |

634 | {one line to give the program's name and a brief idea of what it does.}

635 | Copyright (C) {year} {name of author}

636 |

637 | This program is free software: you can redistribute it and/or modify

638 | it under the terms of the GNU General Public License as published by

639 | the Free Software Foundation, either version 3 of the License, or

640 | (at your option) any later version.

641 |

642 | This program is distributed in the hope that it will be useful,

643 | but WITHOUT ANY WARRANTY; without even the implied warranty of

644 | MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

645 | GNU General Public License for more details.

646 |

647 | You should have received a copy of the GNU General Public License

648 | along with this program. If not, see .

649 |

650 | Also add information on how to contact you by electronic and paper mail.

651 |

652 | If the program does terminal interaction, make it output a short

653 | notice like this when it starts in an interactive mode:

654 |

655 | {project} Copyright (C) {year} {fullname}

656 | This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

657 | This is free software, and you are welcome to redistribute it

658 | under certain conditions; type `show c' for details.

659 |

660 | The hypothetical commands `show w' and `show c' should show the appropriate

661 | parts of the General Public License. Of course, your program's commands

662 | might be different; for a GUI interface, you would use an "about box".

663 |

664 | You should also get your employer (if you work as a programmer) or school,

665 | if any, to sign a "copyright disclaimer" for the program, if necessary.

666 | For more information on this, and how to apply and follow the GNU GPL, see

667 | .

668 |

669 | The GNU General Public License does not permit incorporating your program

670 | into proprietary programs. If your program is a subroutine library, you

671 | may consider it more useful to permit linking proprietary applications with

672 | the library. If this is what you want to do, use the GNU Lesser General

673 | Public License instead of this License. But first, please read

674 | .

675 |

--------------------------------------------------------------------------------

/Makefile:

--------------------------------------------------------------------------------

1 | init:

2 | 	pip install -r requirements.txt

3 |

4 | test:

5 | 	pip install -r requirements.txt

6 | 	python -m unittest discover . "*_test.py" -v

--------------------------------------------------------------------------------

/README.md:

--------------------------------------------------------------------------------

1 |

2 |

3 |

4 |

5 | **TokenQuery** is a query language over labeled text (a sequence of tokens). It is very similar to regular expressions, but it operates on tokens instead of characters. TokenQuery can be viewed as an interface for querying specific patterns in a sequence of tokens using information provided by diverse NLP engines.

6 |

7 |

8 | ## What is a `Token`?

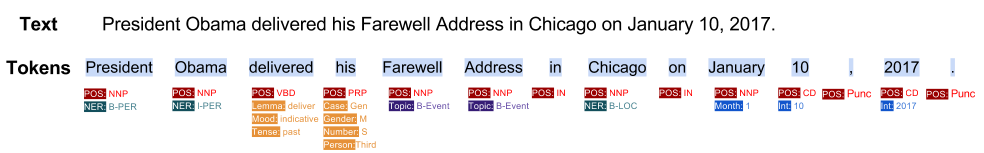

9 | To process natural language text, the common approach in natural language processing (NLP) is to break the text down into smaller processing units (tokens). Options include phonemes, morphemes, lexical units, phrases, sentences, or paragraphs. For example, the sentence `President Obama delivered his Farewell Address in Chicago on January 10, 2017.` can be divided into the tokens shown in blue highlights.

10 |

11 |

12 |

13 |

14 | In TokenQuery, each token contains its text (the textual content of the token), the start and end indices of its span in the original text, and a set of labels (i.e. key/value pairs) provided by NLP engines. In our example, the red labels (POS tags) come from the Stanford POS tagger, the orange labels come from the Google NLP API, and the purple ones come from an internal topic extractor. One of the challenges in natural language processing is that each processing unit provides isolated information about each token in its own format, which currently makes it very hard to write a query that combines labels coming from different processing units.
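A token as described above can be sketched as a small Python 3 data class. This is an illustration under assumptions, not the `Token` class in `tokenquery/models/token.py`: the names `SketchToken`, `span_start`, `span_end`, `labels`, and `get_label` are hypothetical. Only `add_a_label` mirrors a call that appears in this README's painter example.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class SketchToken:
    """Hypothetical token: text, character span, and labels from NLP engines."""
    text: str
    span_start: int  # index of the first character in the original text
    span_end: int    # index one past the last character
    labels: Dict[str, str] = field(default_factory=dict)

    def add_a_label(self, key: str, value: str) -> None:
        # Labels from different engines (POS tagger, NER, topic extractor, ...)
        # all end up in the same key/value map.
        self.labels[key] = value

    def get_label(self, key: str) -> Optional[str]:
        return self.labels.get(key)


sentence = "President Obama delivered his Farewell Address in Chicago on January 10, 2017."
obama = SketchToken("Obama", 10, 15)
obama.add_a_label("pos", "NNP")     # e.g. from a POS tagger
obama.add_a_label("ner", "PERSON")  # e.g. from a named-entity recognizer
```

Because every engine writes into the same `labels` map, a single query can test `pos` and `ner` on the same token.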

15 |

16 | TokenQuery enables you to:

17 | - Combine labels from different NLP engines

18 | - Query and reason over tokenized text

19 | - Define extensions for custom query functions

20 |

21 | The initial idea came from *Angel Chang* and *Christopher Manning*, presented in [this paper](http://nlp.stanford.edu/pubs/tokensregex-tr-2014.pdf). They implemented it (TokensRegex) in Java as part of the *Stanford CoreNLP* software package. Our version uses a different query language that is extensible, more structured, and supports more features.

22 |

23 |

24 | ## TokenQuery language

25 | The language is defined as follows. Each query consists of a sequence of token expressions, each enclosed in `[` `]`. To use a literal `]` inside a token expression, escape it with `\`.

26 |

27 |

28 | ```

29 | [expr_for_token1][expr_for_token2][expr_for_token3]

30 | ```

31 | which searches for a sequence of three tokens in which the first token satisfies the condition given by `expr_for_token1`, the second token satisfies the condition given by `expr_for_token2`, and so on.
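The bracket syntax can be illustrated with a small splitter that pulls each `[...]` token expression out of a query and honors the `\]` escape. This is a hypothetical Python 3 sketch, not TokenQuery's own parser: the function name `split_token_expressions` is an assumption, it assumes nested brackets (such as vector literals) are balanced, and it drops the backslash of an escape. Quantifiers, groups, and anything else outside the brackets are ignored.

```python
from typing import List


def split_token_expressions(query: str) -> List[str]:
    """Return the bodies of the top-level [...] expressions in a query."""
    expressions: List[str] = []
    current: List[str] = []
    depth = 0  # nesting level of square brackets
    i = 0
    while i < len(query):
        ch = query[i]
        if depth > 0 and ch == "\\" and i + 1 < len(query):
            current.append(query[i + 1])  # keep the escaped character, drop the backslash
            i += 2
            continue
        if ch == "[":
            if depth > 0:
                current.append(ch)  # nested bracket, e.g. a vector literal
            else:
                current = []        # start of a new token expression
            depth += 1
        elif ch == "]" and depth > 0:
            depth -= 1
            if depth == 0:
                expressions.append("".join(current))
            else:
                current.append(ch)
        elif depth > 0:
            current.append(ch)
        i += 1
    if depth != 0:
        raise ValueError("unbalanced '[' in query")
    return expressions
```

For example, `split_token_expressions('([pos:"NNP"]) [pos:"VBZ"]')` yields the two expression bodies `pos:"NNP"` and `pos:"VBZ"`.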

32 |

33 | ## Quantifiers

34 | As with regular expressions, you can use quantifiers to write more compact queries. For example, the following query matches zero or more tokens satisfying the condition given by `expr_for_token1`, followed by a token satisfying the condition given by `expr_for_token2`.

35 | ```

36 | [expr_for_token1]*[expr_for_token2]

37 | ```

38 | | type | occurrence | example |

39 | | ---- | ---- | ---- |

40 | | `?` | once or not at all | `[expr_for_token]?` |

41 | | `*` | zero or more times | `[expr_for_token]*` |

42 | | `+` | one or more times | `[expr_for_token]+` |

43 | | `{x}` | exactly x times | `[expr_for_token]{3}` |

44 | | `{x,y}` | between x and y times | `[expr_for_token]{3,5}` |
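The quantifier semantics can be sketched with a small greedy, backtracking matcher over a list of token texts. Each query item is modeled as a predicate plus minimum and maximum repetition counts, so `?` is `(0, 1)`, `*` is `(0, None)`, `+` is `(1, None)`, `{x}` is `(x, x)`, and `{x,y}` is `(x, y)`. The name `match_at` and this tuple layout are assumptions for illustration; the sketch is not taken from TokenQuery's implementation, and plain word lists stand in for label tests.

```python
from typing import Callable, Optional, Sequence, Tuple

Pred = Callable[[str], bool]
# (predicate, min repetitions, max repetitions or None for unbounded)
Item = Tuple[Pred, int, Optional[int]]


def match_at(items: Sequence[Item], tokens: Sequence[str], pos: int = 0) -> Optional[int]:
    """Greedily match items starting at tokens[pos]; return the end index or None."""
    if not items:
        return pos
    pred, lo, hi = items[0]
    room = len(tokens) - pos
    limit = room if hi is None else min(hi, room)
    n = 0
    while n < limit and pred(tokens[pos + n]):
        n += 1
    # Try the longest run first, then back off so later items can still match.
    for count in range(n, lo - 1, -1):
        end = match_at(items[1:], tokens, pos + count)
        if end is not None:
            return end
    return None


# Roughly [/an?/]? [adjective]* ["painter"], with a word list standing in for a POS test
query = [
    (lambda t: t in ("a", "an"), 0, 1),
    (lambda t: t in ("famous", "old"), 0, None),
    (lambda t: t == "painter", 1, 1),
]
print(match_at(query, ["a", "famous", "old", "painter"]))  # 4
```

Greedy matching with backtracking mirrors how character regexes usually treat `*` and `+`; whether TokenQuery is greedy in the same way is not stated here.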

45 |

46 | ## Capturing Groups

47 | As with regular expressions, you can define capturing groups with parentheses.

48 | For example, `([expr_for_token1]+) [expr_for_token2] [expr_for_token3]` returns a group containing the sequence of tokens that satisfy the condition given by `expr_for_token1`. Similarly, `([expr_for_token1]+) [expr_for_token2] ([expr_for_token3])` returns two groups (`chunk1` and `chunk2`), each holding the list of tokens matched inside its parentheses. You can also name a capturing group by writing the name right after the opening parenthesis: for example, `(name [expr_for_token1])` captures the results under the name `name`.

49 | If you do not use any parentheses, the whole match is captured as a single group; in other words, `[expr_for_token1]+ [expr_for_token2] [expr_for_token3]` is equivalent to `([expr_for_token1]+ [expr_for_token2] [expr_for_token3])`.
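To illustrate how groups are named, here is a hypothetical helper that scans a query and lists the capture groups it would produce: unnamed parentheses become `chunk1`, `chunk2`, and so on, a word written right after `(` becomes the group name, and a query without parentheses yields a single group. Calling that single group `chunk1`, and numbering unnamed groups by their overall position, are assumptions. Parentheses inside `[...]` (such as `str_eq(VBZ)`) belong to operations and are skipped.

```python
import re
from typing import List

# A group name is a word right after "(" followed by whitespace and a token or group.
_NAME = re.compile(r"([A-Za-z_]\w*)\s+(?=[\[(])")


def group_names(query: str) -> List[str]:
    """Hypothetical helper: list the capture groups a query would produce."""
    names: List[str] = []
    depth = 0  # bracket nesting; parentheses inside [...] belong to operations
    i = 0
    while i < len(query):
        ch = query[i]
        if ch == "\\":
            i += 2  # skip an escaped character such as \]
            continue
        if ch == "[":
            depth += 1
        elif ch == "]" and depth > 0:
            depth -= 1
        elif ch == "(" and depth == 0:
            m = _NAME.match(query, i + 1)
            names.append(m.group(1) if m else "chunk%d" % (len(names) + 1))
        i += 1
    # Without any parentheses the whole match counts as one group.
    return names or ["chunk1"]
```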

50 |

51 | ## Token Expression

52 | Token expressions (such as `expr_for_token1` in the examples above) can be viewed as a list of acceptors applied to each token.

53 |

54 | ### Basic expressions

55 | `[label:operation(operation_input)]` is the basic unit of a token expression: `operation` is run on the value of `label` for the token, and its result decides whether the token is accepted. `operation` is a function that takes the label's value and an optional extra setting string (`operation_input`) and returns `True` or `False`.

56 | For example, `[pos:str_eq(VBZ)]` matches any token that has a `pos` label whose string value equals `VBZ`. `str_eq` is a standard string operation that checks whether the value equals the extra setting string.

57 | Similarly, `[pos:str_reg(V.*)]` matches any token that has a `pos` label whose value matches the regex `V.*` (i.e. any verb).

58 | Note: to check only whether a label exists, regardless of its value, use `[pos:str_reg(.*)]`.

59 | If no label is provided, the operation is applied to the text of the token. For example, `[str_reg(.*)]` matches any token, and `[str_reg('painter')]` matches any token whose text is `painter`.
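The evaluation of a basic expression can be sketched as "parse, look up the label, call the acceptor". This Python 3 sketch is an assumption-laden illustration, not TokenQuery's code: `str_eq` and `str_reg` here are simplified stand-ins, `ACCEPTORS` and `accepts` are hypothetical names, the token is modeled as a plain label-to-value map, and the token text is assumed to live under the label `text` (the label the web examples in this README use).

```python
import re
from typing import Callable, Dict

Acceptor = Callable[[str, str], bool]


# Hypothetical stand-ins for two core string acceptors; like the functions in
# acceptors/core, they take (label value, operation_input) and return a bool.
def str_eq(token_input: str, operation_input: str) -> bool:
    return token_input == operation_input


def str_reg(token_input: str, operation_input: str) -> bool:
    # Assumption: the regex must match the whole value, not just a substring.
    return re.fullmatch(operation_input, token_input) is not None


ACCEPTORS: Dict[str, Acceptor] = {"str_eq": str_eq, "str_reg": str_reg}

# [label:operation(operation_input)], where "label:" is optional
_BASIC = re.compile(r"\[(?:(?P<label>\w+):)?(?P<op>\w+)\((?P<arg>.*)\)\]")


def accepts(expression: str, labels: Dict[str, str]) -> bool:
    """Decide whether a token, given as a label -> value map, passes a basic expression."""
    m = _BASIC.fullmatch(expression)
    if m is None:
        raise ValueError("not a basic expression: %r" % expression)
    label = m.group("label") or "text"  # assumption: the token text is stored under "text"
    if label not in labels:
        return False  # a token without the label never matches
    return ACCEPTORS[m.group("op")](labels[label], m.group("arg"))
```

In this sketch, adding another function with the same `(token_input, operation_input)` signature to `ACCEPTORS` is how you would support your own operations; the repository's `acceptors/extended` package is not shown here, so its real registration mechanism is unknown.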

60 |

61 | ### Core operations (acceptors)

62 | Here is the list of predefined operations. You can extend this framework with your own operations.

63 |

64 | #### String

65 | This package provides string operations described below.

66 |

67 | | operation | description | examples |

68 | | ---- | ---- | ---- |

69 | | `str_eq` | string equals the extra setting string | `[str_eq(Obama)]` , `[pos:str_eq(VBZ)]` |

70 | | `str_reg` | string matches the regex provided by the extra setting string | `[str_reg(an?)]`, `[pos:str_reg(V.*)]` |

71 | | `str_len` | length of the string compared to the value in the extra setting string (`==`, `>`, `<`, `!=` ,`>=`, `<=`) | `[str_len(=12)]`, `[ner:str_len(>6)]`, `[str_len(!=2)]` |
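The comparison syntax of `str_len` can be sketched as follows. `string_opr.py` is not included in this document, so this is a hypothetical re-implementation; it accepts the same operator set that `int_value` in `int_opr.py` parses, minus `<>`. Two-character operators are tried before one-character ones so that `>=` is not read as `>`.

```python
import operator

# Two-character operators come first so that ">=" is not read as ">".
_COMPARISONS = [
    ("==", operator.eq), ("!=", operator.ne), (">=", operator.ge), ("<=", operator.le),
    ("=", operator.eq), (">", operator.gt), ("<", operator.lt),
]


def str_len(token_input: str, operation_input: str) -> bool:
    """Hypothetical str_len: compare len(token_input) with a spec such as ">6"."""
    spec = operation_input.strip()
    for symbol, compare in _COMPARISONS:
        if spec.startswith(symbol):
            try:
                bound = int(spec[len(symbol):])
            except ValueError:
                return False  # malformed number, e.g. ">six"
            return compare(len(token_input), bound)
    return False  # no comparison operator given
```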

72 |

73 | **Shortened versions**

74 | For convenience, an exact string match can be written by putting the text you want to match inside double quotes (`"`).

75 | For example, `["painter"]` matches any token whose text is `painter`, but not `Painter`.

76 | To find tokens that match a regex, put the regex inside slashes (`/`). For example, `[/an?/]` matches tokens whose text is `a` or `an`.

77 | `[/Al.*/]` matches any token starting with `Al`.

78 | `[/km|kilometers?/]` matches `km`, `kilometer` and `kilometers`
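One way to read the shortened forms is as sugar for `str_eq` and `str_reg`. The rewrite below is a hypothetical sketch of that reading (the function name is an assumption, as is treating `/.../` as a full match), and it also covers the labeled variants such as `[pos:/V.*/]` and `[ner:"PERSON"]` used later in this README.

```python
import re

_QUOTED = re.compile(r'\[(?:(\w+):)?"(.*)"\]')
_SLASHED = re.compile(r"\[(?:(\w+):)?/(.*)/\]")


def expand_shorthand(expression: str) -> str:
    """Rewrite ["text"] / [label:"text"] and [/re/] / [label:/re/] into long form."""
    for pattern, operation in ((_QUOTED, "str_eq"), (_SLASHED, "str_reg")):
        m = pattern.fullmatch(expression)
        if m:
            label = m.group(1) + ":" if m.group(1) else ""
            return "[%s%s(%s)]" % (label, operation, m.group(2))
    return expression  # already in long form
```

With full-match semantics, `[/an?/]` accepts `a` and `an` but not `and`, and `[/Al.*/]` accepts any token starting with `Al`.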

79 |

80 | #### Int

81 | This package provides operations that cast label values to integers and compare them numerically.

82 |

83 | | operation | description | examples |

84 | | ---- | ---- | ---- |

85 | | `int_value` | casts the label value to an integer and compares it with the integer given by the extra setting string (`==`, `>`, `<`, `!=` ,`>=`, `<=`) | `[int_value(==5)]` , `[month:int_value(>1)]`, `[year:int_value(>1990)]` |

86 | | `int_e` | casts the label value to an integer and checks whether it equals the integer given by the extra setting string | `[int_e(5)]` , `[month:int_e(1)]` |

87 | | `int_g` | `int_g(X)` is equivalent to `int_value(>X)` | `[month:int_g(1)]` |

88 | | `int_l` | `int_l(X)` is equivalent to `int_value(<X)` | |

91 | | `int_ge` | `int_ge(X)` is equivalent to `int_value(>=X)` | `[int_ge(0)]` |

92 |

93 | #### Web

94 | This package provides operations for recognizing common web patterns.

95 |

96 | | operation | description | examples |

97 | | ---- | ---- | ---- |

98 | | `web_is_url` | the string is a web URL | `[text:web_is_url()]` , `[freebase_id:web_is_url()]`|

99 | | `web_is_email` | the string is an email | `[text:web_is_email()]` , `[contact:web_is_email()]`|

100 | | `web_is_emoji` | the string is an emoji or emoticon | `[text:web_is_emoji()]` |

101 | | `web_is_hex_code` | the string is a hex code | `[color:web_is_hex_code()]` |

102 | | `web_is_hashtag` | the string is a hashtag | `[tag:web_is_hashtag()]` |
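The web checks can be illustrated with regular expressions. `web_opr.py` is not included in this document, so the patterns below are simplified assumptions rather than the project's rules: a hex code is `#` plus three or six hex digits, a hashtag is `#` plus word characters, and an email is `local@domain.tld`.

```python
import re

# Illustrative patterns only; the real rules in web_opr.py may differ.
_HEX_CODE = re.compile(r"#(?:[0-9a-fA-F]{3}|[0-9a-fA-F]{6})")
_HASHTAG = re.compile(r"#\w+")
_EMAIL = re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}")


def web_is_hex_code(token_input: str, operation_input: str = "") -> bool:
    # operation_input is unused: these checks are written with empty parentheses, e.g. web_is_hex_code()
    return _HEX_CODE.fullmatch(token_input) is not None


def web_is_hashtag(token_input: str, operation_input: str = "") -> bool:
    return _HASHTAG.fullmatch(token_input) is not None


def web_is_email(token_input: str, operation_input: str = "") -> bool:
    return _EMAIL.fullmatch(token_input) is not None
```

Note that a value such as `#abc` satisfies both the hex-code and the hashtag pattern; combining checks with `&` or `!` in a compound expression is one way to disambiguate.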

103 |

104 | #### Date

105 | This package provides operations for working with dates and times in ISO format (e.g. `2008-09-15T15:53:00`).

106 |

107 | | operation | description | examples |

108 | | ---- | ---- | ---- |

109 | | `date_is` | the date (ISO format) is the same as the date in the extra setting string; only the date part is compared, so a time after `T` is ignored | `[date:date_is(2008-09-15T15:53:00)]`|

110 | | `date_is_after` | the date (ISO format) is after the date in the extra setting string | `[date:date_is_after(2008-09-15)]` |

111 | | `date_is_before` | the date (ISO format) is before the date in the extra setting string | `[date:date_is_before(2008-09-15)]` |

112 | | `date_y_is` | the year of the date (ISO format) equals the year in the extra setting string | `[date:date_y_is(2008)]` |

113 | | `date_m_is` | the month of the date (ISO format) equals the month in the extra setting string | `[date:date_m_is(9)]`, `[date:date_m_is(09)]`|

114 | | `date_d_is` | the day of the date (ISO format) equals the day in the extra setting string | `[date:date_d_is(15)]` |
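The same checks can be sketched by parsing dates instead of comparing strings. This is a Python 3.7+ sketch, not the code in `date_opr.py`: it keeps that module's convention of comparing only the date part before `T` (which also suits `date.fromisoformat`, since before Python 3.11 it accepts only `YYYY-MM-DD`), and, unlike the module, it raises `ValueError` on malformed dates instead of returning `False`.

```python
from datetime import date


def _date_part(value: str) -> date:
    # Keep only the calendar date of an ISO timestamp such as 2008-09-15T15:53:00.
    return date.fromisoformat(value.split("T")[0])


def date_is(token_input: str, operation_input: str) -> bool:
    return _date_part(token_input) == _date_part(operation_input)


def date_is_after(token_input: str, operation_input: str) -> bool:
    return _date_part(token_input) > _date_part(operation_input)


def date_m_is(token_input: str, operation_input: str) -> bool:
    # Comparing integers lets both "9" and "09" match September.
    return _date_part(token_input).month == int(operation_input)
```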

115 |

116 | #### Vector

117 |

118 | | operation | description | examples |

119 | | ---- | ---- | ---- |

120 | | `vec_cos_sim` | cosine similarity between two vectors | `[word2vec:vec_cos_sim([1, 0, -2, 1.5]>0.5)]`|

121 | | `vec_cos_dist` | cosine distance between two vectors | `[word2vec:vec_cos_dist([1, 0, -2, 1.5]==0)]` |

122 | | `vec_man_dist` | Manhattan distance between two vectors | `[word2vec:vec_man_dist([1, 0, -2, 1.5]>=10)]` |
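The three vector metrics, together with the `[reference]<op><threshold>` argument format used in the examples, can be sketched in plain Python. `vector_opr.py` is not shown here, so the parsing rule, the function names, and the assumption that the label stores the vector as a list literal such as `[1, 0, -2, 1.5]` are hypothetical.

```python
import ast
import math
import operator
import re
from typing import Callable, Sequence

Vector = Sequence[float]


def cos_sim(a: Vector, b: Vector) -> float:
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        return 0.0  # treat a zero vector as dissimilar to everything
    return sum(x * y for x, y in zip(a, b)) / norm


def cos_dist(a: Vector, b: Vector) -> float:
    return 1.0 - cos_sim(a, b)


def man_dist(a: Vector, b: Vector) -> float:
    return sum(abs(x - y) for x, y in zip(a, b))


_OPS = {"==": operator.eq, "!=": operator.ne, ">=": operator.ge,
        "<=": operator.le, ">": operator.gt, "<": operator.lt}
# "[1, 0, -2, 1.5]>0.5" -> reference vector, comparison operator, threshold
_SPEC = re.compile(r"\s*(\[.*\])\s*(==|!=|>=|<=|>|<)\s*(-?\d+(?:\.\d+)?)\s*")


def vec_compare(metric: Callable[[Vector, Vector], float],
                token_input: str, operation_input: str) -> bool:
    m = _SPEC.fullmatch(operation_input)
    if m is None:
        return False
    token_vector = ast.literal_eval(token_input)  # assumes the label holds a list literal
    reference = ast.literal_eval(m.group(1))
    threshold = float(m.group(3))
    return _OPS[m.group(2)](metric(token_vector, reference), threshold)
```

Because cosine values are floating-point, an exact test such as `==0` may fail for vectors that are equal only up to rounding; a threshold comparison is usually safer.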

123 |

124 |

125 | ## Compound expressions

126 | Several basic expressions can be combined within a single token expression to support more complex patterns. They are combined with the `!` (not), `&` (and), and `|` (or) operators. For example, `[!pos:str_reg(V.*)]` matches any token that is not a verb.

127 | `[pos:str_reg(V.*)&!str_eq(is)]` matches any verb except `is`.

128 | `!` has the highest precedence; `&` and `|` have the same precedence and are right-associative. You can change the grouping with parentheses.

129 | ```

130 | !X and Y <=> ( (!(X)) and Y )

131 | !(X and Y) <=> ( !(X and Y) )

132 | !(X and Y) or Z <=> ( ( !(X and Y) ) or Z )

133 | (X and Y) or Z <=> ( ( X and Y) or Z )

134 | X and Y or Z <=> ( X and (Y or Z) )

135 | ```
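The precedence rules can be checked with a small recursive-descent evaluator. In this Python 3 sketch the atoms are plain names bound to booleans, standing in for basic expressions such as `pos:str_reg(V.*)` evaluated on one token; the function names are hypothetical and this is not TokenQuery's parser.

```python
import re
from typing import Dict, List

_TOKEN = re.compile(r"\s*(\w+|[!&|()])")


def _tokenize(expression: str) -> List[str]:
    text = expression.rstrip()
    tokens: List[str] = []
    pos = 0
    while pos < len(text):
        m = _TOKEN.match(text, pos)
        if m is None:
            raise ValueError("unexpected character at position %d" % pos)
        tokens.append(m.group(1))
        pos = m.end()
    return tokens


def evaluate(expression: str, values: Dict[str, bool]) -> bool:
    """! binds tightest; & and | have equal precedence and are right-associative."""
    tokens = _tokenize(expression)
    pos = 0

    def peek() -> str:
        return tokens[pos] if pos < len(tokens) else ""

    def take() -> str:
        nonlocal pos
        if pos >= len(tokens):
            raise ValueError("unexpected end of expression")
        pos += 1
        return tokens[pos - 1]

    def parse_expr() -> bool:
        left = parse_unary()
        if peek() in ("&", "|"):
            op = take()
            right = parse_expr()  # recursing on the right makes the operators right-associative
            return (left and right) if op == "&" else (left or right)
        return left

    def parse_unary() -> bool:
        tok = take()
        if tok == "!":
            return not parse_unary()
        if tok == "(":
            value = parse_expr()
            if take() != ")":
                raise ValueError("expected ')'")
            return value
        return values[tok]

    result = parse_expr()
    if pos != len(tokens):
        raise ValueError("unexpected trailing input")
    return result
```

With `X` false and `Y`, `Z` true, `X & Y | Z` evaluates as `X & (Y | Z)`, which is false, whereas `(X & Y) | Z` is true, matching the last line of the table above.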

136 |

137 | # How to install

138 | ```

139 | pip install tokenquery

140 | ```

141 | It has been tested with Python 2.7+.

142 |

143 | ## How to use

144 | You can use your own tokenizer to create tokens, or use our NLTK wrapper for tokenization (see the examples).

145 | We highly recommend using a tokenizer that provides the start and end offsets of each token in the original text, as well as its normalized value. This is surprisingly helpful for visualization and debugging. For instance, the NLTK PTB tokenizer does not provide this information, so we wrote a script that estimates it from the tokenizer's output.

146 | In short, this tool can be seen as an attempt to combine the different types of information provided by NLP technologies on top of a shared tokenization. Currently it integrates with the NLTK tokenizer and POS tagger, and we are working on connecting it to spaCy and the Google NLP API.
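The project's own estimation script is not shown here, so the following is only a minimal sketch of one way to recover offsets: search for each token's text in the original string, starting where the previous token ended. The function name is hypothetical. It does not undo normalizations such as the PTB tokenizer rewriting a double quote as two backticks or two single quotes; such tokens get an unknown span.

```python
from typing import List, Optional, Tuple

Span = Tuple[Optional[int], Optional[int]]


def estimate_spans(text: str, tokens: List[str]) -> List[Span]:
    """Estimate (start, end) character offsets of tokens by scanning text left to right."""
    spans: List[Span] = []
    cursor = 0
    for token in tokens:
        start = text.find(token, cursor)
        if start == -1:
            # The tokenizer normalized this token, so it does not occur
            # verbatim in the text; leave its span unknown.
            spans.append((None, None))
            continue
        end = start + len(token)
        spans.append((start, end))
        cursor = end
    return spans
```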

147 |

148 | ## NLP Examples

149 | We believe a large portion of NLP information can be expressed as labels on top of tokens. Here is a list of the ones we currently use and how we represent them.

150 | - Part-of-speech tags (e.g. `[pos:/V.*/]`)

151 |

152 | - Lemma (e.g. `[lemma:"be"]`)

153 |

154 | - Named-Entity tags (e.g. `[ner:"PERSON"]`)

155 |

156 | - Brown clusters

157 |

158 | | label | We | need | a | lawyer | . |

159 | |----|----|----|----|----|----|

160 | | POS | `PRP` | `VBP` | `DT` | `NN` | `.` |

161 | | bcluster | | | | `1000001101000` | |

162 |

163 | Members of a cluster can then be queried with TokenQuery like this:

164 | `[bcluster:/100000110[0-1]+/]`

165 | which matches all of the words below, and more (for more information, see Miller et al., NAACL 2004).

166 |

167 | | word | code |

168 | |--------|-----|

169 | | lawyer | 1000001101000 |

170 | | newspaperman | 100000110100100 |

171 | | stewardess | 100000110100101 |

172 | | toxicologist | 10000011010011 |

173 | | slang | 1000001101010 |

174 | | babysitter | 100000110101100 |

175 | | conspirator | 1000001101011010 |

176 | | womanizer | 1000001101011011 |

177 | | mailman | 10000011010111 |

178 | | salesman | 100000110110000 |

179 | | bookkeeper | 1000001101100010 |

180 | | troubleshooter | 10000011011000110 |

181 | | bouncer | 10000011011000111 |

182 | | technician | 1000001101100100 |

183 | | janitor | 1000001101100101 |

184 | | saleswoman | 1000001101100110 |
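The cluster query relies on the prefix structure of Brown codes: words in the same subtree share the bits on the path to that subtree. The check can be sketched with Python's `re` module, assuming full-match semantics for `/.../` (an assumption about how TokenQuery applies the regex). The codes are copied from the table above.

```python
import re

# Word -> Brown cluster bit string, copied from the table above.
BROWN_CODES = {
    "lawyer": "1000001101000",
    "newspaperman": "100000110100100",
    "stewardess": "100000110100101",
    "toxicologist": "10000011010011",
    "slang": "1000001101010",
    "babysitter": "100000110101100",
    "conspirator": "1000001101011010",
    "womanizer": "1000001101011011",
    "mailman": "10000011010111",
    "salesman": "100000110110000",
    "bookkeeper": "1000001101100010",
    "troubleshooter": "10000011011000110",
    "bouncer": "10000011011000111",
    "technician": "1000001101100100",
    "janitor": "1000001101100101",
    "saleswoman": "1000001101100110",
}

# Same regex as the query [bcluster:/100000110[0-1]+/]
CLUSTER = re.compile(r"100000110[0-1]+")


def in_cluster(code: str) -> bool:
    # Full match: the code must extend the prefix by at least one more bit.
    return CLUSTER.fullmatch(code) is not None


members = [word for word, code in BROWN_CODES.items() if in_cluster(code)]
```

Shortening the fixed prefix in the regex widens the query to a larger subtree of the hierarchy.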

185 |

186 |

187 | - Word embeddings

188 | For word embeddings, you can use exact matching. You can also define richer comparison metrics, such as cosine similarity, as operations.

189 | e.g. `[w2v:cos_sim(A0F892<0.5)]`

190 |

191 | #### Chunks and Phrases

192 | For chunks, we recommend the IOB format.

193 |

194 | - Noun phrases

195 | We use the label `N-PH` for noun phrases, with the value `B-NP` for the token that starts a noun phrase and `I-NP` for tokens that continue it. Alternatively, you can use `B-NP` directly as the label and store the ID of the phrase in your knowledge base (if any) as its value.
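Reading phrases back out of IOB values can be sketched as follows. The function name is hypothetical, the sketch assumes the `N-PH` values have already been collected into a list parallel to the words, and a stray `I-NP` without a preceding `B-NP` is simply skipped. The tags in the test are hand-written for illustration, not tagger output.

```python
from typing import List, Sequence


def iob_chunks(words: Sequence[str], tags: Sequence[str], kind: str = "NP") -> List[List[str]]:
    """Group words into chunks from IOB tags such as B-NP / I-NP / O."""
    chunks: List[List[str]] = []
    current: List[str] = []
    for word, tag in zip(words, tags):
        if tag == "B-" + kind:                # a new chunk starts here
            if current:
                chunks.append(current)
            current = [word]
        elif tag == "I-" + kind and current:  # continue the open chunk
            current.append(word)
        else:                                 # outside any chunk, or a stray I- tag
            if current:
                chunks.append(current)
            current = []
    if current:
        chunks.append(current)
    return chunks
```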

196 |

197 | ### Examples

198 |

199 | #### Detecting names of painters

200 | ```

201 | from tokenquery.nlp.tokenizer import Tokenizer

202 | from tokenquery.nlp.pos_tagger import POSTagger

203 | from tokenquery.tokenquery import TokenQuery

204 |

205 | # Penn Tree Bank Tokenizer

206 | tokenizer = Tokenizer('PTBTokenizer')

207 | # NLTK POS tagger

208 | pos_tagger = POSTagger()

209 |

210 | # Test sentence

211 | input_text = 'David is a painter and I work as a writer.'

212 | # Tokenizing the sentence

213 | input_tokens = tokenizer.tokenize(input_text)

214 | # adding pos tags

215 | input_tokens = pos_tagger.tag(input_tokens)

216 |

217 | # Token query to extract the names of painters

218 | token_query_1 = TokenQuery('([pos:"NNP"]) [pos:"VBZ"] [/an?/] ["painter"]')

219 | token_query_1.match_tokens(input_tokens)

220 |

221 | # Let's change the sentence

222 | input_text = 'David is a famous painter and I work as a writer.'

223 | input_tokens = tokenizer.tokenize(input_text)

224 | input_tokens = pos_tagger.tag(input_tokens)

225 |

226 | # Because of `famous`, token_query_1 no longer matches

227 | token_query_1.match_tokens(input_tokens)

228 |

229 | # Adding possible adjectives

230 | token_query_2 = TokenQuery('([pos:"NNP"]) [pos:"VBZ"] [/an?/] [pos:"JJ"]* ["painter"]')

231 | token_query_2.match_tokens(input_tokens)

232 |

233 | # You can add labels directly

234 | input_tokens[0].add_a_label('ner', 'PERSON')

235 |

236 | # A mixture of labels will give you the same result

237 | token_query_3 = TokenQuery('([ner:"PERSON"]) [pos:"VBZ"] [/an?/] [pos:"JJ"]* ["painter"]')

238 | token_query_3.match_tokens(input_tokens)

239 |

240 | # To cover names with more tokens

241 | token_query_4 = TokenQuery('([ner:"PERSON"]+) [pos:"VBZ"] [/an?/] [pos:"JJ"]* ["painter"]')

242 | token_query_4.match_tokens(input_tokens)

243 |

244 | ```

--------------------------------------------------------------------------------

/requirements.txt:

--------------------------------------------------------------------------------

1 | nltk

2 | conllu

3 | google-api-python-client

4 | scipy

5 | scikit-learn

--------------------------------------------------------------------------------

/resources/TokenQuery_example_1.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/ramtinms/tokenquery/a6bcba2f40c7e9d0e0851b24bddad5d6dd25b273/resources/TokenQuery_example_1.png

--------------------------------------------------------------------------------

/resources/Token_query_logo.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/ramtinms/tokenquery/a6bcba2f40c7e9d0e0851b24bddad5d6dd25b273/resources/Token_query_logo.png

--------------------------------------------------------------------------------

/resources/TokenrRegex_logo.png:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/ramtinms/tokenquery/a6bcba2f40c7e9d0e0851b24bddad5d6dd25b273/resources/TokenrRegex_logo.png

--------------------------------------------------------------------------------

/setup.cfg:

--------------------------------------------------------------------------------

1 | [metadata]

2 | description-file = README.md

3 |

4 |

--------------------------------------------------------------------------------

/setup.py:

--------------------------------------------------------------------------------

1 | from setuptools import setup, find_packages

2 |

3 | setup(

4 | name='tokenquery',

5 | packages=find_packages(),

6 | version='0.1.0',

7 | description='Tokenquery - query language for tokens ',

8 | author='Ramtin Seraj',

9 | author_email='mehdizadeh.ramtin@gmail.com',

10 | url='https://github.com/ramtinms/tokenquery',

11 | download_url='https://github.com/ramtinms/tokenquery/tarball/0.1',

12 | keywords=['natural language processing', 'nlp', 'regex', 'regular expressions', 'tokenizer', 'query'],

13 | classifiers=['Intended Audience :: Information Technology',

14 | 'Intended Audience :: Science/Research',

15 | 'Topic :: Scientific/Engineering',

16 | 'Topic :: Scientific/Engineering :: Artificial Intelligence',

17 | 'Topic :: Scientific/Engineering :: Human Machine Interfaces',

18 | 'Topic :: Scientific/Engineering :: Information Analysis',

19 | 'Topic :: Text Processing',

20 | 'Topic :: Text Processing :: Filters',

21 | 'Topic :: Text Processing :: General',

22 | 'Topic :: Text Processing :: Indexing',

23 | 'Topic :: Text Processing :: Linguistic',

24 | ],

25 | install_requires=[

26 | "requests",

27 | "nltk",

28 | "conllu",

29 | "google-api-python-client",

30 | "scikit-learn",

31 | ],

32 |

33 | )

34 |

--------------------------------------------------------------------------------

/tokenquery/__init__.py:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/ramtinms/tokenquery/a6bcba2f40c7e9d0e0851b24bddad5d6dd25b273/tokenquery/__init__.py

--------------------------------------------------------------------------------

/tokenquery/acceptors/__init__.py:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/ramtinms/tokenquery/a6bcba2f40c7e9d0e0851b24bddad5d6dd25b273/tokenquery/acceptors/__init__.py

--------------------------------------------------------------------------------

/tokenquery/acceptors/core/__init__.py:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/ramtinms/tokenquery/a6bcba2f40c7e9d0e0851b24bddad5d6dd25b273/tokenquery/acceptors/core/__init__.py

--------------------------------------------------------------------------------

/tokenquery/acceptors/core/date_opr.py:

--------------------------------------------------------------------------------

1 | import datetime

2 | # import dateutil.parser  # needed only by the commented-out helper at the end of this file

3 |

4 | # date (Iso format)

5 | # '2013-12-25T19:20:41.391393'

6 |

7 |

8 | def date_is(token_input, operation_input):

9 |     # simple version compare two strings

10 |     if 'T' in token_input:

11 |         date1 = token_input.split('T')[0]

12 |     else:

13 |         date1 = token_input

14 |

15 |     if 'T' in operation_input:

16 |         date2 = operation_input.split('T')[0]

17 |     else:

18 |         date2 = operation_input

19 |

20 |     if date1 == date2:

21 |         return True

22 |

23 |     return False

24 |

25 |

26 | def date_is_after(token_input, operation_input):

27 |     # simple version compare two strings

28 |     if 'T' in token_input:

29 |         date1 = token_input.split('T')[0]

30 |     else:

31 |         date1 = token_input

32 |

33 |     if 'T' in operation_input:

34 |         date2 = operation_input.split('T')[0]

35 |     else:

36 |         date2 = operation_input

37 |

38 |     if date1 > date2:

39 |         return True

40 |

41 |     return False

42 |

43 |

44 | def date_is_before(token_input, operation_input):

45 |     # simple version compare two strings

46 |     if 'T' in token_input:

47 |         date1 = token_input.split('T')[0]

48 |     else:

49 |         date1 = token_input

50 |

51 |     if 'T' in operation_input:

52 |         date2 = operation_input.split('T')[0]

53 |     else:

54 |         date2 = operation_input

55 |

56 |     if date1 < date2:

57 |         return True

58 |

59 |     return False

60 |

61 |

62 | def date_y_is(token_input, operation_input):

63 |     if 'T' in token_input:

64 |         date1 = token_input.split('T')[0]

65 |         year = date1.split('-')[0]

66 |     else:

67 |         year = token_input.split('-')[0]

68 |

69 |     if year == operation_input:

70 |         return True

71 |     return False

72 |

73 |

74 | def date_m_is(token_input, operation_input):

75 |     if 'T' in token_input:

76 |         date1 = token_input.split('T')[0]

77 |         month = date1.split('-')[1]

78 |     else:

79 |         month = token_input.split('-')[1]

80 |     if month.lstrip('0') == operation_input.strip().lstrip('0'):  # accept both '9' and '09'

81 |         return True

82 |     return False

83 |

84 |

85 | def date_d_is(token_input, operation_input):

86 |     if 'T' in token_input:

87 |         date1 = token_input.split('T')[0]

88 |         day = date1.split('-')[2]

89 |     else:

90 |         day = token_input.split('-')[2]

91 |     if day.lstrip('0') == operation_input.strip().lstrip('0'):  # accept both '5' and '05'

92 |         return True

93 |     return False

94 |

95 | # def date_is_x_days_before(token_input, operation_input):
96 | #     # utc = pytz.UTC
97 | #     # publish_date = dateutil.parser.parse(selected_date)
98 | #     # event_start = utc.localize(dateutil.parser.parse(event_start_date))
99 | #     # margin = datetime.timedelta(days=margin_days)
100 | #     # if event_start - margin <= publish_date:
101 | #     #     return True
102 | #     # else:
103 | #     #     return False
104 |

--------------------------------------------------------------------------------

/tokenquery/acceptors/core/int_opr.py:

--------------------------------------------------------------------------------

1 | def int_value(token_input, operation_input):
2 |     # parse operation_input into a comparison operator and an integer operand
3 |
4 |     cond_type = ""
5 |     comp_part = operation_input.strip()[:2]
6 |     if comp_part in ['==', '>=', '<=', '!=', '<>']:
7 |         cond_type = comp_part
8 |         try:
9 |             cond_value = int(operation_input.strip()[2:])
10 |         except ValueError:
11 |             # TODO raise tokenregex error
12 |             return False
13 |     elif comp_part[:1] in ['=', '>', '<']:
14 |         cond_type = comp_part[0]
15 |         try:
16 |             cond_value = int(operation_input.strip()[1:])
17 |         except ValueError:
18 |             # TODO raise tokenregex error
19 |             return False
20 |
21 |     try:
22 |         text_value = int(token_input)
23 |         if cond_type == "=" or cond_type == "==":
24 |             return text_value == cond_value
25 |         elif cond_type == "<":
26 |             return text_value < cond_value
27 |         elif cond_type == ">":
28 |             return text_value > cond_value
29 |         elif cond_type == ">=":
30 |             return text_value >= cond_value
31 |         elif cond_type == "<=":
32 |             return text_value <= cond_value
33 |         elif cond_type == "!=" or cond_type == "<>":
34 |             return text_value != cond_value
35 |         else:
36 |             return False
37 |     except ValueError:
38 |         # TODO raise tokenregex error
39 |         return False
40 |
41 |

42 | def int_e(token_input, operation_input):
43 |     try:
44 |         text_value = int(token_input)
45 |         op_value = int(operation_input)
46 |         return text_value == op_value
47 |     except ValueError:
48 |         # TODO raise tokenregex error
49 |         return False
50 |
51 |
52 | def int_g(token_input, operation_input):
53 |     try:
54 |         text_value = int(token_input)
55 |         op_value = int(operation_input)
56 |         return text_value > op_value
57 |     except ValueError:
58 |         # TODO raise tokenregex error
59 |         return False
60 |
61 |
62 | def int_l(token_input, operation_input):
63 |     try:
64 |         text_value = int(token_input)
65 |         op_value = int(operation_input)
66 |         return text_value < op_value
67 |     except ValueError:
68 |         # TODO raise tokenregex error
69 |         return False
70 |
71 |
72 | def int_ne(token_input, operation_input):
73 |     try:
74 |         text_value = int(token_input)
75 |         op_value = int(operation_input)
76 |         return text_value != op_value
77 |     except ValueError:
78 |         # TODO raise tokenregex error
79 |         return False
80 |
81 |
82 | def int_le(token_input, operation_input):
83 |     try:
84 |         text_value = int(token_input)
85 |         op_value = int(operation_input)
86 |         return text_value <= op_value
87 |     except ValueError:
88 |         # TODO raise tokenregex error
89 |         return False
90 |
91 |
92 | def int_ge(token_input, operation_input):
93 |     try:
94 |         text_value = int(token_input)
95 |         op_value = int(operation_input)
96 |         return text_value >= op_value
97 |     except ValueError:
98 |         # TODO raise tokenregex error
99 |         return False
100 |

101 | # TODO
102 | # add M, K, ...
103 | # add float
104 |
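`int_value` (and `str_len` below) parse the same `<operator><number>` condition syntax with an if/elif chain per operator. One possible refactoring is a lookup table over Python's standard `operator` module. It assumes the accepted operators stay exactly `==`, `>=`, `<=`, `!=`, `<>`, `=`, `<` and `>`. `parse_condition` and `compare` are hypothetical names, not tokenquery functions:

```python
import operator

# Two-character operators must be tried before one-character ones,
# otherwise "<=5" would be read as "<" followed by the operand "=5".
_OPERATORS = [
    ('==', operator.eq), ('>=', operator.ge), ('<=', operator.le),
    ('!=', operator.ne), ('<>', operator.ne),
    ('=', operator.eq), ('<', operator.lt), ('>', operator.gt),
]


def parse_condition(operation_input):
    """Split e.g. '>= 10' into (operator.ge, 10); return None if invalid."""
    text = operation_input.strip()
    for sign, func in _OPERATORS:
        if text.startswith(sign):
            try:
                return func, int(text[len(sign):])
            except ValueError:
                return None
    return None


def compare(value, operation_input):
    """Apply a parsed condition to an integer value; False on bad input."""
    condition = parse_condition(operation_input)
    if condition is None:
        return False
    func, cond_value = condition
    return func(value, cond_value)


# Usage, mirroring int_value('42', ...) and str_len('token', ...):
print(compare(int('42'), '>= 10'))   # True
print(compare(len('token'), '<>5'))  # False
print(compare(7, '~7'))              # False (unknown operator)
```

The table keeps each operator's meaning in one place, so adding a new comparison does not require editing several parallel branches.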

--------------------------------------------------------------------------------

/tokenquery/acceptors/core/string_opr.py:

--------------------------------------------------------------------------------

1 | import re
2 |
3 | # String operations
4 |
5 |
6 | def str_eq(token_input, operation_input):
7 |     if token_input == operation_input:
8 |         return True
9 |     return False
10 |
11 |

12 | def str_reg(token_input, operation_input):
13 |     if not token_input:
14 |         return False
15 |     if re.match(operation_input, token_input):
16 |         return True
17 |     else:
18 |         return False
19 |
20 |

21 | def str_len(token_input, operation_input):
22 |     # parse operation_input into a comparison operator and an integer length
23 |     cond_type = ''
24 |     comp_part = operation_input.strip()[:2]
25 |     if comp_part in ['==', '>=', '<=', '!=', '<>']:
26 |         cond_type = comp_part
27 |         try:
28 |             cond_value = int(operation_input.strip()[2:])
29 |         except ValueError:
30 |             # TODO raise tokenregex error
31 |             return False
32 |     elif comp_part[:1] in ['=', '>', '<']:
33 |         cond_type = comp_part[0]
34 |         try:
35 |             cond_value = int(operation_input.strip()[1:])
36 |         except ValueError:
37 |             # TODO raise tokenregex error
38 |             return False
39 |     else:
40 |         return False  # unknown operation
41 |
42 |     try:
43 |         text_len = len(token_input)
44 |         if cond_type == "==" or cond_type == "=":
45 |             return text_len == cond_value
46 |         elif cond_type == "<":
47 |             return text_len < cond_value
48 |         elif cond_type == ">":
49 |             return text_len > cond_value
50 |         elif cond_type == ">=":
51 |             return text_len >= cond_value
52 |         elif cond_type == "<=":
53 |             return text_len <= cond_value
54 |         elif cond_type == "!=" or cond_type == "<>":
55 |             return text_len != cond_value
56 |         else:
57 |             return False
58 |     except (TypeError, ValueError):
59 |         # TODO raise tokenregex error (e.g. token_input has no length)
60 |         return False
61 |

62 | # TODO
63 | # def str_edit_dist(token_input, operation_input):
64 | #     pass
65 | # has acrylic letters
66 | # is punctuation
67 | # is stop word
68 | # is a number: 2, two, II
69 |
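`str_reg` uses `re.match`, which anchors the pattern only at the start of the token. A pattern such as `[0-9]+` therefore also accepts `'42abc'`. If a whole-token match is intended, `re.fullmatch` (Python 3.4+) is one option. The sketch below contrasts the two. `str_reg_full` is a hypothetical name, not an existing tokenquery acceptor:

```python
import re


def str_reg_full(token_input, operation_input):
    # Hypothetical variant of str_reg: the whole token must match the pattern.
    if not token_input:
        return False
    return re.fullmatch(operation_input, token_input) is not None


print(bool(re.match(r'[0-9]+', '42abc')))  # True: a prefix match is enough
print(str_reg_full('42abc', r'[0-9]+'))    # False
print(str_reg_full('42', r'[0-9]+'))       # True
```

Writing the pattern as `[0-9]+$` with `re.match` behaves much the same, except that `$` also matches just before a trailing newline.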

--------------------------------------------------------------------------------

/tokenquery/acceptors/core/vector_opr.py:

--------------------------------------------------------------------------------

1 | from sklearn.metrics.pairwise import cosine_similarity
2 | from sklearn.metrics.pairwise import cosine_distances
3 | from sklearn.metrics.pairwise import manhattan_distances
4 | import numpy as np
5 |
6 |

7 | def change_string_to_vector(string):
8 |     # comma-separated values in brackets, e.g. "[0.1, 0.2]"; whitespace is ignored
9 |     vector = []
10 |     string = string.split('[')[1]
11 |     string = string.split(']')[0]
12 |     string = ''.join(string.split())  # remove all whitespace characters
13 |     for value_string in string.split(','):
14 |         vector.append(float(value_string))
15 |     return np.array(vector).reshape(1, -1)
16 |
17 |

18 | def vec_cos_sim(token_input, operation_input):
19 |     operation_string = None
20 |     ref_vector_string = None
21 |     cond_value_string = None
22 |     for opr_sign in ['==', '>=', '<=', '!=', '<>', '<', '>', '=']:
23 |         if opr_sign in operation_input:
24 |             ref_vector_string = operation_input.split(opr_sign)[0]
25 |             operation_string = opr_sign
26 |             cond_value_string = operation_input.split(opr_sign)[1]
27 |             break
28 |
29 |     if ref_vector_string and cond_value_string and operation_string:
30 |         try:
31 |             cond_value = float(cond_value_string)
32 |             ref_vector = change_string_to_vector(ref_vector_string)
33 |             token_vector = change_string_to_vector(token_input)
34 |             if ref_vector.shape != token_vector.shape:
35 |                 print('vector lengths do not match')
36 |                 return False
37 |             if operation_string == "=" or operation_string == "==":
38 |                 return float(cosine_similarity(token_vector, ref_vector)[0, 0]) == cond_value
39 |             elif operation_string == "<":
40 |                 return float(cosine_similarity(token_vector, ref_vector)[0, 0]) < cond_value
41 |             elif operation_string == ">":
42 |                 return float(cosine_similarity(token_vector, ref_vector)[0, 0]) > cond_value
43 |             elif operation_string == ">=":
44 |                 return float(cosine_similarity(token_vector, ref_vector)[0, 0]) >= cond_value
45 |             elif operation_string == "<=":
46 |                 return float(cosine_similarity(token_vector, ref_vector)[0, 0]) <= cond_value
47 |             elif operation_string == "!=" or operation_string == "<>":
48 |                 return float(cosine_similarity(token_vector, ref_vector)[0, 0]) != cond_value
49 |             else:
50 |                 return False
51 |         except (ValueError, IndexError):
52 |             # TODO raise tokenregex error
53 |             return False
54 |
55 |     else:
56 |         # TODO raise tokenregex error
57 |         print('Problem with the operation input')
58 |
59 |