├── CONTRIBUTING.md
├── .github
│   └── ISSUE_TEMPLATE
│       └── bug_report.md
├── README.md
├── LICENSE
├── TF-Serving-Demo.ipynb
└── Weight_Pruning_in_Keras_with_Fashion_MNIST.ipynb

/CONTRIBUTING.md:

--------------------------------------------------------------------------------

1 | ## Contribution

2 |

3 | If you want to contribute to this repository, open a PR titled 'Added a notebook' and explain the purpose of the notebook.

4 |

--------------------------------------------------------------------------------

/.github/ISSUE_TEMPLATE/bug_report.md:

--------------------------------------------------------------------------------

1 | ---

2 | name: Bug report

3 | about: Create a report to help us improve

4 | title: ''

5 | labels: ''

6 | assignees: ''

7 |

8 | ---

9 |

10 | **Describe the bug**

11 | A clear and concise description of what the bug is.

12 |

13 | **To Reproduce**

14 | Steps to reproduce the behaviour:

15 | 1. Go to '...'

16 | 2. Click on '....'

17 | 3. Scroll down to '....'

18 | 4. See an error

19 |

20 | **Expected behaviour**

21 | A clear and concise description of what you expected to happen.

22 |

23 | **Screenshots**

24 | If applicable, add screenshots to help explain your problem.

25 |

26 | **Additional context**

27 | Add any other context about the problem here.

28 |

--------------------------------------------------------------------------------

/README.md:

--------------------------------------------------------------------------------

1 |

2 |

3 |

4 |

5 | [GitHub: sayannath](https://github.com/sayannath)

6 |

7 |

8 |

9 |

10 |

11 | # TensorFlow Notebooks

12 |

13 |

14 | ## Description

15 |

16 | **Author**: [Sayan Nath](https://sayannath.biz/)


17 | **Last Updated**: 2021/03/29

18 |

19 | ## List of the Notebooks

20 |

21 | 1. [Post-Training Quantization Notebook](https://colab.research.google.com/drive/1EysBC5PHJcg5dp9Qaj59t8jaHc_7JfgV?usp=sharing)

22 | 2. [Pruning Notebook](https://colab.research.google.com/drive/1sYTDxGSxN3B3KzbZM94ths1zuvkNqiA7?usp=sharing)

23 | 3. [Quantization Aware Training](https://colab.research.google.com/drive/1Wdso2N_76E8Xxniqd4C6T1sV5BuhKN1o?usp=sharing)

24 | 4. [Siamese Network with a Contrastive Loss]()

25 |

26 |

27 |

--------------------------------------------------------------------------------

/LICENSE:

--------------------------------------------------------------------------------

1 | Apache License

2 | Version 2.0, January 2004

3 | http://www.apache.org/licenses/

4 |

5 | TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

6 |

7 | 1. Definitions.

8 |

9 | "License" shall mean the terms and conditions for use, reproduction,

10 | and distribution as defined by Sections 1 through 9 of this document.

11 |

12 | "Licensor" shall mean the copyright owner or entity authorized by

13 | the copyright owner that is granting the License.

14 |

15 | "Legal Entity" shall mean the union of the acting entity and all

16 | other entities that control, are controlled by, or are under common

17 | control with that entity. For the purposes of this definition,

18 | "control" means (i) the power, direct or indirect, to cause the

19 | direction or management of such entity, whether by contract or

20 | otherwise, or (ii) ownership of fifty percent (50%) or more of the

21 | outstanding shares, or (iii) beneficial ownership of such entity.

22 |

23 | "You" (or "Your") shall mean an individual or Legal Entity

24 | exercising permissions granted by this License.

25 |

26 | "Source" form shall mean the preferred form for making modifications,

27 | including but not limited to software source code, documentation

28 | source, and configuration files.

29 |

30 | "Object" form shall mean any form resulting from mechanical

31 | transformation or translation of a Source form, including but

32 | not limited to compiled object code, generated documentation,

33 | and conversions to other media types.

34 |

35 | "Work" shall mean the work of authorship, whether in Source or

36 | Object form, made available under the License, as indicated by a

37 | copyright notice that is included in or attached to the work

38 | (an example is provided in the Appendix below).

39 |

40 | "Derivative Works" shall mean any work, whether in Source or Object

41 | form, that is based on (or derived from) the Work and for which the

42 | editorial revisions, annotations, elaborations, or other modifications

43 | represent, as a whole, an original work of authorship. For the purposes

44 | of this License, Derivative Works shall not include works that remain

45 | separable from, or merely link (or bind by name) to the interfaces of,

46 | the Work and Derivative Works thereof.

47 |

48 | "Contribution" shall mean any work of authorship, including

49 | the original version of the Work and any modifications or additions

50 | to that Work or Derivative Works thereof, that is intentionally

51 | submitted to Licensor for inclusion in the Work by the copyright owner

52 | or by an individual or Legal Entity authorized to submit on behalf of

53 | the copyright owner. For the purposes of this definition, "submitted"

54 | means any form of electronic, verbal, or written communication sent

55 | to the Licensor or its representatives, including but not limited to

56 | communication on electronic mailing lists, source code control systems,

57 | and issue tracking systems that are managed by, or on behalf of, the

58 | Licensor for the purpose of discussing and improving the Work, but

59 | excluding communication that is conspicuously marked or otherwise

60 | designated in writing by the copyright owner as "Not a Contribution."

61 |

62 | "Contributor" shall mean Licensor and any individual or Legal Entity

63 | on behalf of whom a Contribution has been received by Licensor and

64 | subsequently incorporated within the Work.

65 |

66 | 2. Grant of Copyright License. Subject to the terms and conditions of

67 | this License, each Contributor hereby grants to You a perpetual,

68 | worldwide, non-exclusive, no-charge, royalty-free, irrevocable

69 | copyright license to reproduce, prepare Derivative Works of,

70 | publicly display, publicly perform, sublicense, and distribute the

71 | Work and such Derivative Works in Source or Object form.

72 |

73 | 3. Grant of Patent License. Subject to the terms and conditions of

74 | this License, each Contributor hereby grants to You a perpetual,

75 | worldwide, non-exclusive, no-charge, royalty-free, irrevocable

76 | (except as stated in this section) patent license to make, have made,

77 | use, offer to sell, sell, import, and otherwise transfer the Work,

78 | where such license applies only to those patent claims licensable

79 | by such Contributor that are necessarily infringed by their

80 | Contribution(s) alone or by combination of their Contribution(s)

81 | with the Work to which such Contribution(s) was submitted. If You

82 | institute patent litigation against any entity (including a

83 | cross-claim or counterclaim in a lawsuit) alleging that the Work

84 | or a Contribution incorporated within the Work constitutes direct

85 | or contributory patent infringement, then any patent licenses

86 | granted to You under this License for that Work shall terminate

87 | as of the date such litigation is filed.

88 |

89 | 4. Redistribution. You may reproduce and distribute copies of the

90 | Work or Derivative Works thereof in any medium, with or without

91 | modifications, and in Source or Object form, provided that You

92 | meet the following conditions:

93 |

94 | (a) You must give any other recipients of the Work or

95 | Derivative Works a copy of this License; and

96 |

97 | (b) You must cause any modified files to carry prominent notices

98 | stating that You changed the files; and

99 |

100 | (c) You must retain, in the Source form of any Derivative Works

101 | that You distribute, all copyright, patent, trademark, and

102 | attribution notices from the Source form of the Work,

103 | excluding those notices that do not pertain to any part of

104 | the Derivative Works; and

105 |

106 | (d) If the Work includes a "NOTICE" text file as part of its

107 | distribution, then any Derivative Works that You distribute must

108 | include a readable copy of the attribution notices contained

109 | within such NOTICE file, excluding those notices that do not

110 | pertain to any part of the Derivative Works, in at least one

111 | of the following places: within a NOTICE text file distributed

112 | as part of the Derivative Works; within the Source form or

113 | documentation, if provided along with the Derivative Works; or,

114 | within a display generated by the Derivative Works, if and

115 | wherever such third-party notices normally appear. The contents

116 | of the NOTICE file are for informational purposes only and

117 | do not modify the License. You may add Your own attribution

118 | notices within Derivative Works that You distribute, alongside

119 | or as an addendum to the NOTICE text from the Work, provided

120 | that such additional attribution notices cannot be construed

121 | as modifying the License.

122 |

123 | You may add Your own copyright statement to Your modifications and

124 | may provide additional or different license terms and conditions

125 | for use, reproduction, or distribution of Your modifications, or

126 | for any such Derivative Works as a whole, provided Your use,

127 | reproduction, and distribution of the Work otherwise complies with

128 | the conditions stated in this License.

129 |

130 | 5. Submission of Contributions. Unless You explicitly state otherwise,

131 | any Contribution intentionally submitted for inclusion in the Work

132 | by You to the Licensor shall be under the terms and conditions of

133 | this License, without any additional terms or conditions.

134 | Notwithstanding the above, nothing herein shall supersede or modify

135 | the terms of any separate license agreement you may have executed

136 | with Licensor regarding such Contributions.

137 |

138 | 6. Trademarks. This License does not grant permission to use the trade

139 | names, trademarks, service marks, or product names of the Licensor,

140 | except as required for reasonable and customary use in describing the

141 | origin of the Work and reproducing the content of the NOTICE file.

142 |

143 | 7. Disclaimer of Warranty. Unless required by applicable law or

144 | agreed to in writing, Licensor provides the Work (and each

145 | Contributor provides its Contributions) on an "AS IS" BASIS,

146 | WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

147 | implied, including, without limitation, any warranties or conditions

148 | of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

149 | PARTICULAR PURPOSE. You are solely responsible for determining the

150 | appropriateness of using or redistributing the Work and assume any

151 | risks associated with Your exercise of permissions under this License.

152 |

153 | 8. Limitation of Liability. In no event and under no legal theory,

154 | whether in tort (including negligence), contract, or otherwise,

155 | unless required by applicable law (such as deliberate and grossly

156 | negligent acts) or agreed to in writing, shall any Contributor be

157 | liable to You for damages, including any direct, indirect, special,

158 | incidental, or consequential damages of any character arising as a

159 | result of this License or out of the use or inability to use the

160 | Work (including but not limited to damages for loss of goodwill,

161 | work stoppage, computer failure or malfunction, or any and all

162 | other commercial damages or losses), even if such Contributor

163 | has been advised of the possibility of such damages.

164 |

165 | 9. Accepting Warranty or Additional Liability. While redistributing

166 | the Work or Derivative Works thereof, You may choose to offer,

167 | and charge a fee for, acceptance of support, warranty, indemnity,

168 | or other liability obligations and/or rights consistent with this

169 | License. However, in accepting such obligations, You may act only

170 | on Your own behalf and on Your sole responsibility, not on behalf

171 | of any other Contributor, and only if You agree to indemnify,

172 | defend, and hold each Contributor harmless for any liability

173 | incurred by, or claims asserted against, such Contributor by reason

174 | of your accepting any such warranty or additional liability.

175 |

176 | END OF TERMS AND CONDITIONS

177 |

178 | APPENDIX: How to apply the Apache License to your work.

179 |

180 | To apply the Apache License to your work, attach the following

181 | boilerplate notice, with the fields enclosed by brackets "[]"

182 | replaced with your own identifying information. (Don't include

183 | the brackets!) The text should be enclosed in the appropriate

184 | comment syntax for the file format. We also recommend that a

185 | file or class name and description of purpose be included on the

186 | same "printed page" as the copyright notice for easier

187 | identification within third-party archives.

188 |

189 | Copyright 2021 Sayan Nath

190 |

191 | Licensed under the Apache License, Version 2.0 (the "License");

192 | you may not use this file except in compliance with the License.

193 | You may obtain a copy of the License at

194 |

195 | http://www.apache.org/licenses/LICENSE-2.0

196 |

197 | Unless required by applicable law or agreed to in writing, software

198 | distributed under the License is distributed on an "AS IS" BASIS,

199 | WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

200 | See the License for the specific language governing permissions and

201 | limitations under the License.

202 |

--------------------------------------------------------------------------------

/TF-Serving-Demo.ipynb:

--------------------------------------------------------------------------------

1 | {

2 | "nbformat": 4,

3 | "nbformat_minor": 0,

4 | "metadata": {

5 | "colab": {

6 | "name": "TF-Serving-HelloWorld.ipynb",

7 | "provenance": []

8 | },

9 | "kernelspec": {

10 | "name": "python3",

11 | "display_name": "Python 3"

12 | },

13 | "language_info": {

14 | "name": "python"

15 | },

16 | "accelerator": "GPU"

17 | },

18 | "cells": [

19 | {

20 | "cell_type": "markdown",

21 | "metadata": {

22 | "id": "kIUixndibP8m"

23 | },

24 | "source": [

25 | "## Initial Setup"

26 | ]

27 | },

28 | {

29 | "cell_type": "code",

30 | "metadata": {

31 | "colab": {

32 | "base_uri": "https://localhost:8080/"

33 | },

34 | "id": "nahhdAX9a_hr",

35 | "outputId": "27f89cd1-2753-4d30-c55d-6fe9b969b0ec"

36 | },

37 | "source": [

38 | "!nvidia-smi"

39 | ],

40 | "execution_count": 1,

41 | "outputs": [

42 | {

43 | "output_type": "stream",

44 | "name": "stdout",

45 | "text": [

46 | "Fri Sep 17 15:49:57 2021 \n",

47 | "+-----------------------------------------------------------------------------+\n",

48 | "| NVIDIA-SMI 470.63.01 Driver Version: 460.32.03 CUDA Version: 11.2 |\n",

49 | "|-------------------------------+----------------------+----------------------+\n",

50 | "| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |\n",

51 | "| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |\n",

52 | "| | | MIG M. |\n",

53 | "|===============================+======================+======================|\n",

54 | "| 0 Tesla K80 Off | 00000000:00:04.0 Off | 0 |\n",

55 | "| N/A 37C P8 28W / 149W | 0MiB / 11441MiB | 0% Default |\n",

56 | "| | | N/A |\n",

57 | "+-------------------------------+----------------------+----------------------+\n",

58 | " \n",

59 | "+-----------------------------------------------------------------------------+\n",

60 | "| Processes: |\n",

61 | "| GPU GI CI PID Type Process name GPU Memory |\n",

62 | "| ID ID Usage |\n",

63 | "|=============================================================================|\n",

64 | "| No running processes found |\n",

65 | "+-----------------------------------------------------------------------------+\n"

66 | ]

67 | }

68 | ]

69 | },

70 | {

71 | "cell_type": "markdown",

72 | "metadata": {

73 | "id": "jZoqhmp1bRx3"

74 | },

75 | "source": [

76 | "## Imports"

77 | ]

78 | },

79 | {

80 | "cell_type": "code",

81 | "metadata": {

82 | "colab": {

83 | "base_uri": "https://localhost:8080/"

84 | },

85 | "id": "Kp_7llkKbGMd",

86 | "outputId": "432ee054-b53a-401f-fcf7-73aabf5e015d"

87 | },

88 | "source": [

89 | "import os\n",

90 | "import json\n",

91 | "import tempfile\n",

92 | "import requests\n",

93 | "import numpy as np\n",

94 | "import tensorflow as tf\n",

95 | "\n",

96 | "print(tf.__version__)"

97 | ],

98 | "execution_count": 2,

99 | "outputs": [

100 | {

101 | "output_type": "stream",

102 | "name": "stdout",

103 | "text": [

104 | "2.6.0\n"

105 | ]

106 | }

107 | ]

108 | },

109 | {

110 | "cell_type": "markdown",

111 | "metadata": {

112 | "id": "Wv7KqHeFbUbF"

113 | },

114 | "source": [

115 | "## Install TF-Serving in Colab"

116 | ]

117 | },

118 | {

119 | "cell_type": "code",

120 | "metadata": {

121 | "colab": {

122 | "base_uri": "https://localhost:8080/"

123 | },

124 | "id": "ygrjjB1dbTUP",

125 | "outputId": "d6e874a4-396c-4788-e702-c839479d70b2"

126 | },

127 | "source": [

128 | "!echo \"deb http://storage.googleapis.com/tensorflow-serving-apt stable tensorflow-model-server tensorflow-model-server-universal\" | tee /etc/apt/sources.list.d/tensorflow-serving.list && \\\n",

129 | "curl https://storage.googleapis.com/tensorflow-serving-apt/tensorflow-serving.release.pub.gpg | apt-key add -\n",

130 | "!apt update\n",

131 | "!apt-get install tensorflow-model-server"

132 | ],

133 | "execution_count": 3,

134 | "outputs": [

135 | {

136 | "output_type": "stream",

137 | "name": "stdout",

138 | "text": [

139 | "deb http://storage.googleapis.com/tensorflow-serving-apt stable tensorflow-model-server tensorflow-model-server-universal\n",

140 | " % Total % Received % Xferd Average Speed Time Time Time Current\n",

141 | " Dload Upload Total Spent Left Speed\n",

142 | "100 2943 100 2943 0 0 14014 0 --:--:-- --:--:-- --:--:-- 14014\n",

143 | "OK\n",

144 | "Get:1 https://cloud.r-project.org/bin/linux/ubuntu bionic-cran40/ InRelease [3,626 B]\n",

145 | "Get:2 http://storage.googleapis.com/tensorflow-serving-apt stable InRelease [3,012 B]\n",

146 | "Ign:3 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu1804/x86_64 InRelease\n",

147 | "Ign:4 https://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu1804/x86_64 InRelease\n",

148 | "Get:5 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu1804/x86_64 Release [696 B]\n",

149 | "Hit:6 https://developer.download.nvidia.com/compute/machine-learning/repos/ubuntu1804/x86_64 Release\n",

150 | "Get:7 http://ppa.launchpad.net/c2d4u.team/c2d4u4.0+/ubuntu bionic InRelease [15.9 kB]\n",

151 | "Get:8 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu1804/x86_64 Release.gpg [836 B]\n",

152 | "Get:9 http://storage.googleapis.com/tensorflow-serving-apt stable/tensorflow-model-server-universal amd64 Packages [348 B]\n",

153 | "Get:10 http://security.ubuntu.com/ubuntu bionic-security InRelease [88.7 kB]\n",

154 | "Hit:12 http://archive.ubuntu.com/ubuntu bionic InRelease\n",

155 | "Get:13 http://storage.googleapis.com/tensorflow-serving-apt stable/tensorflow-model-server amd64 Packages [341 B]\n",

156 | "Get:14 https://developer.download.nvidia.com/compute/cuda/repos/ubuntu1804/x86_64 Packages [718 kB]\n",

157 | "Get:15 http://archive.ubuntu.com/ubuntu bionic-updates InRelease [88.7 kB]\n",

158 | "Hit:16 http://ppa.launchpad.net/cran/libgit2/ubuntu bionic InRelease\n",

159 | "Get:17 http://security.ubuntu.com/ubuntu bionic-security/main amd64 Packages [2,326 kB]\n",

160 | "Get:18 http://ppa.launchpad.net/deadsnakes/ppa/ubuntu bionic InRelease [15.9 kB]\n",

161 | "Get:19 http://archive.ubuntu.com/ubuntu bionic-backports InRelease [74.6 kB]\n",

162 | "Get:20 http://archive.ubuntu.com/ubuntu bionic-updates/universe amd64 Packages [2,202 kB]\n",

163 | "Hit:21 http://ppa.launchpad.net/graphics-drivers/ppa/ubuntu bionic InRelease\n",

164 | "Get:22 http://security.ubuntu.com/ubuntu bionic-security/universe amd64 Packages [1,428 kB]\n",

165 | "Get:23 http://ppa.launchpad.net/c2d4u.team/c2d4u4.0+/ubuntu bionic/main Sources [1,799 kB]\n",

166 | "Get:24 http://security.ubuntu.com/ubuntu bionic-security/restricted amd64 Packages [567 kB]\n",

167 | "Get:25 http://archive.ubuntu.com/ubuntu bionic-updates/restricted amd64 Packages [600 kB]\n",

168 | "Get:26 http://archive.ubuntu.com/ubuntu bionic-updates/main amd64 Packages [2,761 kB]\n",

169 | "Get:27 http://ppa.launchpad.net/c2d4u.team/c2d4u4.0+/ubuntu bionic/main amd64 Packages [921 kB]\n",

170 | "Get:28 http://ppa.launchpad.net/deadsnakes/ppa/ubuntu bionic/main amd64 Packages [40.8 kB]\n",

171 | "Fetched 13.7 MB in 7s (1,901 kB/s)\n",

172 | "Reading package lists... Done\n",

173 | "Building dependency tree \n",

174 | "Reading state information... Done\n",

175 | "107 packages can be upgraded. Run 'apt list --upgradable' to see them.\n",

176 | "Reading package lists... Done\n",

177 | "Building dependency tree \n",

178 | "Reading state information... Done\n",

179 | "The following NEW packages will be installed:\n",

180 | " tensorflow-model-server\n",

181 | "0 upgraded, 1 newly installed, 0 to remove and 107 not upgraded.\n",

182 | "Need to get 347 MB of archives.\n",

183 | "After this operation, 0 B of additional disk space will be used.\n",

184 | "Get:1 http://storage.googleapis.com/tensorflow-serving-apt stable/tensorflow-model-server amd64 tensorflow-model-server all 2.6.0 [347 MB]\n",

185 | "Fetched 347 MB in 6s (62.7 MB/s)\n",

186 | "Selecting previously unselected package tensorflow-model-server.\n",

187 | "(Reading database ... 148492 files and directories currently installed.)\n",

188 | "Preparing to unpack .../tensorflow-model-server_2.6.0_all.deb ...\n",

189 | "Unpacking tensorflow-model-server (2.6.0) ...\n",

190 | "Setting up tensorflow-model-server (2.6.0) ...\n"

191 | ]

192 | }

193 | ]

194 | },

195 | {

196 | "cell_type": "markdown",

197 | "metadata": {

198 | "id": "hDohKPa-bcvR"

199 | },

200 | "source": [

201 | "## Create Dataset"

202 | ]

203 | },

204 | {

205 | "cell_type": "code",

206 | "metadata": {

207 | "id": "M5cvtTcebTRt"

208 | },

209 | "source": [

210 | "xs = np.array([-1.0, 0.0, 1.0, 2.0, 3.0, 4.0], dtype=float)\n",

211 | "ys = np.array([-3.0, -1.0, 1.0, 3.0, 5.0, 7.0], dtype=float)"

212 | ],

213 | "execution_count": 4,

214 | "outputs": []

215 | },

216 | {

217 | "cell_type": "markdown",

218 | "metadata": {

219 | "id": "fq08v3qUbmLB"

220 | },

221 | "source": [

222 | "## Build and Train the Model"

223 | ]

224 | },

225 | {

226 | "cell_type": "code",

227 | "metadata": {

228 | "id": "dgeizyx4bTPR"

229 | },

230 | "source": [

231 | "model = tf.keras.Sequential([tf.keras.layers.Dense(units=1, input_shape=[1])])\n",

232 | "\n",

233 | "model.compile(optimizer='sgd',\n",

234 | " loss='mean_squared_error')\n",

235 | "\n",

236 | "history = model.fit(xs, ys, epochs=500, verbose=0)"

237 | ],

238 | "execution_count": 5,

239 | "outputs": []

240 | },

241 | {

242 | "cell_type": "markdown",

243 | "metadata": {

244 | "id": "bPeoZJFFbqKy"

245 | },

246 | "source": [

247 | "## Test the Model"

248 | ]

249 | },

250 | {

251 | "cell_type": "code",

252 | "metadata": {

253 | "colab": {

254 | "base_uri": "https://localhost:8080/"

255 | },

256 | "id": "c-pd6x_lbotj",

257 | "outputId": "6102f316-0202-46d4-b070-a86ef4906aa7"

258 | },

259 | "source": [

260 | "print(model.predict([10.0]))"

261 | ],

262 | "execution_count": 6,

263 | "outputs": [

264 | {

265 | "output_type": "stream",

266 | "name": "stdout",

267 | "text": [

268 | "[[18.9758]]\n"

269 | ]

270 | }

271 | ]

272 | },

273 | {

274 | "cell_type": "markdown",

275 | "metadata": {

276 | "id": "XaNLcKYXbr98"

277 | },

278 | "source": [

279 | "## Save the Model"

280 | ]

281 | },

282 | {

283 | "cell_type": "code",

284 | "metadata": {

285 | "colab": {

286 | "base_uri": "https://localhost:8080/"

287 | },

288 | "id": "lx0xz2dFbu_G",

289 | "outputId": "5c605be5-6855-468c-a2a4-4cb9d1ac1b81"

290 | },

291 | "source": [

292 | "MODEL_DIR = tempfile.gettempdir()\n",

293 | "\n",

294 | "version = 1\n",

295 | "\n",

296 | "export_path = os.path.join(MODEL_DIR, str(version))\n",

297 | "\n",

298 | "if os.path.isdir(export_path):\n",

299 | " print('\\nAlready saved a model, cleaning up\\n')\n",

300 | " !rm -r {export_path}\n",

301 | "\n",

302 | "model.save(export_path, save_format=\"tf\")\n",

303 | "\n",

304 | "print('\\nexport_path = {}'.format(export_path))\n",

305 | "!ls -l {export_path}"

306 | ],

307 | "execution_count": 19,

308 | "outputs": [

309 | {

310 | "output_type": "stream",

311 | "name": "stdout",

312 | "text": [

313 | "INFO:tensorflow:Assets written to: /tmp/1/assets\n",

314 | "\n",

315 | "export_path = /tmp/1\n",

316 | "total 52\n",

317 | "drwxr-xr-x 2 root root 4096 Sep 17 15:56 assets\n",

318 | "-rw-r--r-- 1 root root 4077 Sep 17 15:56 keras_metadata.pb\n",

319 | "-rw-r--r-- 1 root root 38997 Sep 17 15:56 saved_model.pb\n",

320 | "drwxr-xr-x 2 root root 4096 Sep 17 15:56 variables\n"

321 | ]

322 | }

323 | ]

324 | },

325 | {

326 | "cell_type": "markdown",

327 | "metadata": {

328 | "id": "347CyI51cAwU"

329 | },

330 | "source": [

331 | "## Examine Your Saved Model"

332 | ]

333 | },

334 | {

335 | "cell_type": "code",

336 | "metadata": {

337 | "colab": {

338 | "base_uri": "https://localhost:8080/"

339 | },

340 | "id": "aF7vIfTrcBzK",

341 | "outputId": "50d22736-b9a7-4594-f66d-bd14822ec5eb"

342 | },

343 | "source": [

344 | "!saved_model_cli show --dir {export_path} --all"

345 | ],

346 | "execution_count": 20,

347 | "outputs": [

348 | {

349 | "output_type": "stream",

350 | "name": "stdout",

351 | "text": [

352 | "\n",

353 | "MetaGraphDef with tag-set: 'serve' contains the following SignatureDefs:\n",

354 | "\n",

355 | "signature_def['__saved_model_init_op']:\n",

356 | " The given SavedModel SignatureDef contains the following input(s):\n",

357 | " The given SavedModel SignatureDef contains the following output(s):\n",

358 | " outputs['__saved_model_init_op'] tensor_info:\n",

359 | " dtype: DT_INVALID\n",

360 | " shape: unknown_rank\n",

361 | " name: NoOp\n",

362 | " Method name is: \n",

363 | "\n",

364 | "signature_def['serving_default']:\n",

365 | " The given SavedModel SignatureDef contains the following input(s):\n",

366 | " inputs['dense_input'] tensor_info:\n",

367 | " dtype: DT_FLOAT\n",

368 | " shape: (-1, 1)\n",

369 | " name: serving_default_dense_input:0\n",

370 | " The given SavedModel SignatureDef contains the following output(s):\n",

371 | " outputs['dense'] tensor_info:\n",

372 | " dtype: DT_FLOAT\n",

373 | " shape: (-1, 1)\n",

374 | " name: StatefulPartitionedCall:0\n",

375 | " Method name is: tensorflow/serving/predict\n",

376 | "WARNING: Logging before flag parsing goes to stderr.\n",

377 | "W0917 15:56:19.721764 139950523524992 deprecation.py:506] From /usr/local/lib/python2.7/dist-packages/tensorflow_core/python/ops/resource_variable_ops.py:1786: calling __init__ (from tensorflow.python.ops.resource_variable_ops) with constraint is deprecated and will be removed in a future version.\n",

378 | "Instructions for updating:\n",

379 | "If using Keras pass *_constraint arguments to layers.\n",

380 | "\n",

381 | "Defined Functions:\n",

382 | " Function Name: '__call__'\n",

383 | " Option #1\n",

384 | " Callable with:\n",

385 | " Argument #1\n",

386 | " dense_input: TensorSpec(shape=(None, 1), dtype=tf.float32, name=u'dense_input')\n",

387 | " Argument #2\n",

388 | " DType: bool\n",

389 | " Value: False\n",

390 | " Argument #3\n",

391 | " DType: NoneType\n",

392 | " Value: None\n",

393 | " Option #2\n",

394 | " Callable with:\n",

395 | " Argument #1\n",

396 | " inputs: TensorSpec(shape=(None, 1), dtype=tf.float32, name=u'inputs')\n",

397 | " Argument #2\n",

398 | " DType: bool\n",

399 | " Value: False\n",

400 | " Argument #3\n",

401 | " DType: NoneType\n",

402 | " Value: None\n",

403 | " Option #3\n",

404 | " Callable with:\n",

405 | " Argument #1\n",

406 | " inputs: TensorSpec(shape=(None, 1), dtype=tf.float32, name=u'inputs')\n",

407 | " Argument #2\n",

408 | " DType: bool\n",

409 | " Value: True\n",

410 | " Argument #3\n",

411 | " DType: NoneType\n",

412 | " Value: None\n",

413 | " Option #4\n",

414 | " Callable with:\n",

415 | " Argument #1\n",

416 | " dense_input: TensorSpec(shape=(None, 1), dtype=tf.float32, name=u'dense_input')\n",

417 | " Argument #2\n",

418 | " DType: bool\n",

419 | " Value: True\n",

420 | " Argument #3\n",

421 | " DType: NoneType\n",

422 | " Value: None\n",

423 | "\n",

424 | " Function Name: '_default_save_signature'\n",

425 | "Traceback (most recent call last):\n",

426 | " File \"/usr/local/bin/saved_model_cli\", line 8, in \n",

427 | " sys.exit(main())\n",

428 | " File \"/usr/local/lib/python2.7/dist-packages/tensorflow_core/python/tools/saved_model_cli.py\", line 990, in main\n",

429 | " args.func(args)\n",

430 | " File \"/usr/local/lib/python2.7/dist-packages/tensorflow_core/python/tools/saved_model_cli.py\", line 691, in show\n",

431 | " _show_all(args.dir)\n",

432 | " File \"/usr/local/lib/python2.7/dist-packages/tensorflow_core/python/tools/saved_model_cli.py\", line 283, in _show_all\n",

433 | " _show_defined_functions(saved_model_dir)\n",

434 | " File \"/usr/local/lib/python2.7/dist-packages/tensorflow_core/python/tools/saved_model_cli.py\", line 186, in _show_defined_functions\n",

435 | " function._list_all_concrete_functions_for_serialization() # pylint: disable=protected-access\n",

436 | "AttributeError: '_WrapperFunction' object has no attribute '_list_all_concrete_functions_for_serialization'\n"

437 | ]

438 | }

439 | ]

440 | },

441 | {

442 | "cell_type": "markdown",

443 | "metadata": {

444 | "id": "A6qeJl22cMvn"

445 | },

446 | "source": [

447 | "## Run the TensorFlow Model Server"

448 | ]

449 | },

450 | {

451 | "cell_type": "code",

452 | "metadata": {

453 | "id": "pMkoC0LPcOyS"

454 | },

455 | "source": [

456 | "os.environ[\"MODEL_DIR\"] = MODEL_DIR"

457 | ],

458 | "execution_count": 21,

459 | "outputs": []

460 | },

461 | {

462 | "cell_type": "code",

463 | "metadata": {

464 | "colab": {

465 | "base_uri": "https://localhost:8080/"

466 | },

467 | "id": "xxRfpLMicPU8",

468 | "outputId": "9fcfce5e-9e3a-4368-c590-ad419a24660b"

469 | },

470 | "source": [

471 | "%%bash --bg \n",

472 | "nohup tensorflow_model_server \\\n",

473 | " --rest_api_port=8501 \\\n",

474 | " --model_name=number_model \\\n",

475 | " --model_base_path=\"${MODEL_DIR}\" >server.log 2>&1"

476 | ],

477 | "execution_count": 22,

478 | "outputs": [

479 | {

480 | "output_type": "stream",

481 | "name": "stdout",

482 | "text": [

483 | "Starting job # 3 in a separate thread.\n"

484 | ]

485 | }

486 | ]

487 | },

488 | {

489 | "cell_type": "code",

490 | "metadata": {

491 | "colab": {

492 | "base_uri": "https://localhost:8080/"

493 | },

494 | "id": "c7EnK2sKcVJA",

495 | "outputId": "e1e351bb-15fe-417f-d92a-bb5cf8111bd9"

496 | },

497 | "source": [

498 | "!tail server.log"

499 | ],

500 | "execution_count": 23,

501 | "outputs": [

502 | {

503 | "output_type": "stream",

504 | "name": "stdout",

505 | "text": [

506 | "2021-09-17 15:56:25.923299: I external/org_tensorflow/tensorflow/cc/saved_model/loader.cc:283] SavedModel load for tags { serve }; Status: success: OK. Took 38797 microseconds.\n",

507 | "2021-09-17 15:56:25.923752: I tensorflow_serving/servables/tensorflow/saved_model_warmup_util.cc:59] No warmup data file found at /tmp/1/assets.extra/tf_serving_warmup_requests\n",

508 | "2021-09-17 15:56:25.923894: I tensorflow_serving/core/loader_harness.cc:87] Successfully loaded servable version {name: number_model version: 1}\n",

509 | "2021-09-17 15:56:25.924369: I tensorflow_serving/model_servers/server_core.cc:486] Finished adding/updating models\n",

510 | "2021-09-17 15:56:25.924435: I tensorflow_serving/model_servers/server.cc:133] Using InsecureServerCredentials\n",

511 | "2021-09-17 15:56:25.924454: I tensorflow_serving/model_servers/server.cc:383] Profiler service is enabled\n",

512 | "2021-09-17 15:56:25.924922: I tensorflow_serving/model_servers/server.cc:409] Running gRPC ModelServer at 0.0.0.0:8500 ...\n",

513 | "[warn] getaddrinfo: address family for nodename not supported\n",

514 | "2021-09-17 15:56:25.925536: I tensorflow_serving/model_servers/server.cc:430] Exporting HTTP/REST API at:localhost:8501 ...\n",

515 | "[evhttp_server.cc : 245] NET_LOG: Entering the event loop ...\n"

516 | ]

517 | }

518 | ]

519 | },

520 | {

521 | "cell_type": "markdown",

522 | "metadata": {

523 | "id": "4DDMhPbacWyF"

524 | },

525 | "source": [

526 | "## Create JSON Object with Test Data"

527 | ]

528 | },

529 | {

530 | "cell_type": "code",

531 | "metadata": {

532 | "colab": {

533 | "base_uri": "https://localhost:8080/"

534 | },

535 | "id": "m53pqNUvcXyD",

536 | "outputId": "68fe207c-bf78-48f6-9f5a-2fd286abbe0e"

537 | },

538 | "source": [

539 | "xs = np.array([[9.0], [10.0]])\n",

540 | "data = json.dumps({\"signature_name\": \"serving_default\", \"instances\": xs.tolist()})\n",

541 | "print(data)"

542 | ],

543 | "execution_count": 24,

544 | "outputs": [

545 | {

546 | "output_type": "stream",

547 | "name": "stdout",

548 | "text": [

549 | "{\"signature_name\": \"serving_default\", \"instances\": [[9.0], [10.0]]}\n"

550 | ]

551 | }

552 | ]

553 | },

554 | {

555 | "cell_type": "markdown",

556 | "metadata": {

557 | "id": "DG3J6CPvcdBi"

558 | },

559 | "source": [

560 | "## Make Inference Request"

561 | ]

562 | },

563 | {

564 | "cell_type": "code",

565 | "metadata": {

566 | "colab": {

567 | "base_uri": "https://localhost:8080/"

568 | },

569 | "id": "WU248tgOceUp",

570 | "outputId": "291d89c3-afd0-4cbc-be55-ba9cf5bce432"

571 | },

572 | "source": [

573 | "def model_predict():\n",

574 | " headers = {\"content-type\": \"application/json\"}\n",

575 | " json_response = requests.post('http://localhost:8501/v1/models/number_model:predict', data=data, headers=headers)\n",

576 | "\n",

577 | " print(json_response.text)\n",

578 | "\n",

579 | " predictions = json.loads(json_response.text)['predictions']\n",

580 | " print(predictions)\n",

581 | "\n",

582 | "model_predict()"

583 | ],

584 | "execution_count": 25,

585 | "outputs": [

586 | {

587 | "output_type": "stream",

588 | "name": "stdout",

589 | "text": [

590 | "{\n",

591 | " \"predictions\": [[16.9793072], [18.9758]\n",

592 | " ]\n",

593 | "}\n",

594 | "[[16.9793072], [18.9758]]\n"

595 | ]

596 | }

597 | ]

598 | }

599 | ]

600 | }
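The `model_predict()` cell above hard-codes the URL and reads the global `data`. The sketch below shows the same TensorFlow Serving REST predict call as small reusable helpers. It assumes the server from this notebook is listening on `localhost:8501` and serving `number_model`.

All function names here are hypothetical. The sketch uses the standard-library `urllib.request` instead of `requests`, so it has no third-party dependency. Two details follow the TensorFlow Serving REST API:

- the optional `/versions/N` URL segment;
- the `{"error": ...}` failure body.

```python
import json
import urllib.request


def build_predict_url(host, port, model_name, version=None):
    """Build the REST predict URL, optionally pinned to a model version."""
    base = f"http://{host}:{port}/v1/models/{model_name}"
    if version is not None:
        base += f"/versions/{version}"
    return base + ":predict"


def build_predict_body(instances, signature_name="serving_default"):
    """Serialize inputs in the row-oriented ("instances") request format."""
    return json.dumps({"signature_name": signature_name, "instances": instances})


def parse_predictions(response_text):
    """Return the "predictions" field; TF Serving reports failures under "error"."""
    payload = json.loads(response_text)
    if "error" in payload:
        raise RuntimeError(payload["error"])
    return payload["predictions"]


def predict(instances, host="localhost", port=8501,
            model_name="number_model", timeout=10.0):
    """POST the instances to the model server and return its predictions."""
    request = urllib.request.Request(
        build_predict_url(host, port, model_name),
        data=build_predict_body(instances).encode("utf-8"),
        headers={"content-type": "application/json"},
        method="POST",
    )
    # urlopen raises urllib.error.HTTPError for non-2xx responses
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return parse_predictions(response.read().decode("utf-8"))
```

With the server running, `predict([[9.0], [10.0]])` sends the same request body as the notebook's `data` variable. Splitting URL building, body building, and response parsing into separate functions lets each piece be checked without a live server.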

--------------------------------------------------------------------------------

/Weight_Pruning_in_Keras_with_Fashion_MNIST.ipynb:

--------------------------------------------------------------------------------

1 | {

2 | "nbformat": 4,

3 | "nbformat_minor": 0,

4 | "metadata": {

5 | "colab": {

6 | "name": "Weight Pruning in Keras with Fashion MNIST.ipynb",

7 | "provenance": [],

8 | "collapsed_sections": [],

9 | "toc_visible": true

10 | },

11 | "kernelspec": {

12 | "name": "python3",

13 | "display_name": "Python 3"

14 | },

15 | "language_info": {

16 | "name": "python"

17 | },

18 | "accelerator": "GPU"

19 | },

20 | "cells": [

21 | {

22 | "cell_type": "markdown",

23 | "metadata": {

24 | "id": "a7k5Nhs1Ixcy"

25 | },

26 | "source": [

27 | "# Weight Pruning\n",

28 | "\n",

29 | "**Overview**\n",

30 | "\n",

31 | "Magnitude-based weight pruning gradually zeroes out model weights during the training process to achieve model sparsity. Sparse models are easier to compress, and we can skip the zeroes during inference for latency improvements.\n",

32 | "\n",

33 | "This technique brings improvements via model compression. In the future, framework support for this technique will provide latency improvements. We've seen up to 6x improvements in model compression with minimal loss of accuracy.\n",

34 | "\n",

35 | "The technique is being evaluated in various speech applications, such as speech recognition and text-to-speech, and has been tested on various vision and translation models.\n",

36 | "\n",

37 | "In this example, we use the Fashion MNIST dataset.\n",

38 | "This example requires TensorFlow 2.4 or higher."

39 | ]

40 | },

41 | {

42 | "cell_type": "markdown",

43 | "metadata": {

44 | "id": "4rB3VhAMJsgv"

45 | },

46 | "source": [

47 | "## Initial Setup"

48 | ]

49 | },

50 | {

51 | "cell_type": "code",

52 | "metadata": {

53 | "colab": {

54 | "base_uri": "https://localhost:8080/"

55 | },

56 | "id": "dNDzooiWJxjV",

57 | "outputId": "b6c895a2-0730-4561-fa20-abec18560571"

58 | },

59 | "source": [

60 | "!nvidia-smi"

61 | ],

62 | "execution_count": 1,

63 | "outputs": [

64 | {

65 | "output_type": "stream",

66 | "text": [

67 | "Fri Mar 26 13:11:16 2021 \n",

68 | "+-----------------------------------------------------------------------------+\n",

69 | "| NVIDIA-SMI 460.56 Driver Version: 460.32.03 CUDA Version: 11.2 |\n",

70 | "|-------------------------------+----------------------+----------------------+\n",

71 | "| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |\n",

72 | "| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |\n",

73 | "| | | MIG M. |\n",

74 | "|===============================+======================+======================|\n",

75 | "| 0 Tesla T4 Off | 00000000:00:04.0 Off | 0 |\n",

76 | "| N/A 66C P8 11W / 70W | 0MiB / 15109MiB | 0% Default |\n",

77 | "| | | N/A |\n",

78 | "+-------------------------------+----------------------+----------------------+\n",

79 | " \n",

80 | "+-----------------------------------------------------------------------------+\n",

81 | "| Processes: |\n",

82 | "| GPU GI CI PID Type Process name GPU Memory |\n",

83 | "| ID ID Usage |\n",

84 | "|=============================================================================|\n",

85 | "| No running processes found |\n",

86 | "+-----------------------------------------------------------------------------+\n"

87 | ],

88 | "name": "stdout"

89 | }

90 | ]

91 | },

92 | {

93 | "cell_type": "code",

94 | "metadata": {

95 | "colab": {

96 | "base_uri": "https://localhost:8080/"

97 | },

98 | "id": "KV21lnJbJ1qH",

99 | "outputId": "8928d429-37f6-45c9-f1be-eb2b9d1888a7"

100 | },

101 | "source": [

102 | "!pip install -q tensorflow-model-optimization"

103 | ],

104 | "execution_count": 2,

105 | "outputs": [

106 | {

107 | "output_type": "stream",

108 | "text": [

109 | "\u001b[?25l\r\u001b[K |██ | 10kB 26.3MB/s eta 0:00:01\r\u001b[K |███▉ | 20kB 32.9MB/s eta 0:00:01\r\u001b[K |█████▊ | 30kB 21.9MB/s eta 0:00:01\r\u001b[K |███████▋ | 40kB 25.0MB/s eta 0:00:01\r\u001b[K |█████████▌ | 51kB 22.1MB/s eta 0:00:01\r\u001b[K |███████████▍ | 61kB 24.5MB/s eta 0:00:01\r\u001b[K |█████████████▎ | 71kB 19.0MB/s eta 0:00:01\r\u001b[K |███████████████▏ | 81kB 20.0MB/s eta 0:00:01\r\u001b[K |█████████████████ | 92kB 19.2MB/s eta 0:00:01\r\u001b[K |███████████████████ | 102kB 19.2MB/s eta 0:00:01\r\u001b[K |████████████████████▉ | 112kB 19.2MB/s eta 0:00:01\r\u001b[K |██████████████████████▊ | 122kB 19.2MB/s eta 0:00:01\r\u001b[K |████████████████████████▊ | 133kB 19.2MB/s eta 0:00:01\r\u001b[K |██████████████████████████▋ | 143kB 19.2MB/s eta 0:00:01\r\u001b[K |████████████████████████████▌ | 153kB 19.2MB/s eta 0:00:01\r\u001b[K |██████████████████████████████▍ | 163kB 19.2MB/s eta 0:00:01\r\u001b[K |████████████████████████████████| 174kB 19.2MB/s \n",

110 | "\u001b[?25h"

111 | ],

112 | "name": "stdout"

113 | }

114 | ]

115 | },

116 | {

117 | "cell_type": "markdown",

118 | "metadata": {

119 | "id": "Z17MXMWfKA7U"

120 | },

121 | "source": [

122 | "## Helper Functions to Determine the File Size"

123 | ]

124 | },

125 | {

126 | "cell_type": "code",

127 | "metadata": {

128 | "id": "aQzc0ZcdKF1H"

129 | },

130 | "source": [

131 | "def get_file_size(file_path):\n",

132 | "    size = os.path.getsize(file_path)  # size in bytes\n",

133 | "    return size\n",

134 | "\n",

135 | "def convert_bytes(size, unit=None):\n",

136 | "    if unit == \"KB\":\n",

137 | "        print('File Size: ' + str(round(size / 1024, 3)) + ' Kilobytes')\n",

138 | "    elif unit == \"MB\":\n",

139 | "        print('File Size: ' + str(round(size / (1024 * 1024), 3)) + ' Megabytes')\n",

140 | "    else:\n",

141 | "        print('File Size: ' + str(size) + ' bytes')"

142 | ],

143 | "execution_count": 3,

144 | "outputs": []

145 | },

146 | {

147 | "cell_type": "markdown",

148 | "metadata": {

149 | "id": "McOFIWu6Jll8"

150 | },

151 | "source": [

152 | "## Import the necessary modules"

153 | ]

154 | },

155 | {

156 | "cell_type": "code",

157 | "metadata": {

158 | "id": "gxKwKMCoIA3p"

159 | },

160 | "source": [

161 | "import os\n",

162 | "import time\n",

163 | "import numpy as np\n",

164 | "import pandas as pd\n",

165 | "import matplotlib.pyplot as plt\n",

166 | "import tempfile\n",

167 | "from sklearn.metrics import accuracy_score\n",

168 | "from sys import getsizeof\n",

169 | "\n",

170 | "import tensorflow as tf\n",

171 | "import tensorflow_model_optimization as tfmot\n",

172 | "from tensorflow import keras\n",

173 | "from tensorflow.keras.models import Sequential\n",

174 | "from tensorflow.keras.layers import Conv2D, Dense, Flatten, MaxPooling2D, GlobalAvgPool2D, Dropout\n",

175 | "\n",

176 | "%load_ext tensorboard"

177 | ],

178 | "execution_count": 4,

179 | "outputs": []

180 | },

181 | {

182 | "cell_type": "markdown",

183 | "metadata": {

184 | "id": "jDImDEBpKoh_"

185 | },

186 | "source": [

187 | "## Load the Fashion MNIST dataset"

188 | ]

189 | },

190 | {

191 | "cell_type": "markdown",

192 | "metadata": {

193 | "id": "XAJHZTedLLgc"

194 | },

195 | "source": [

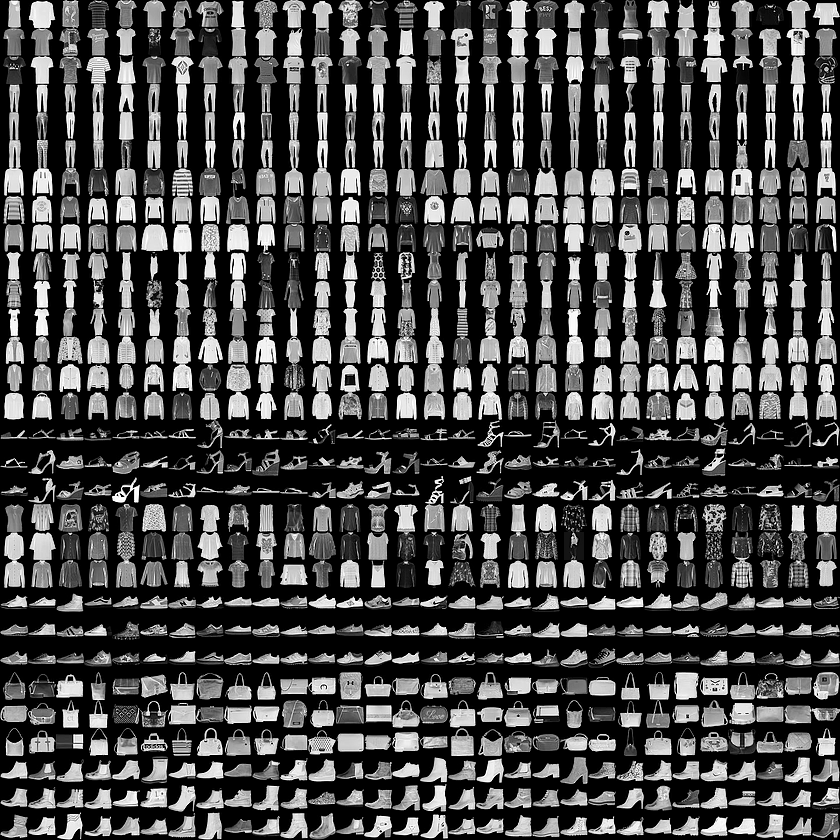

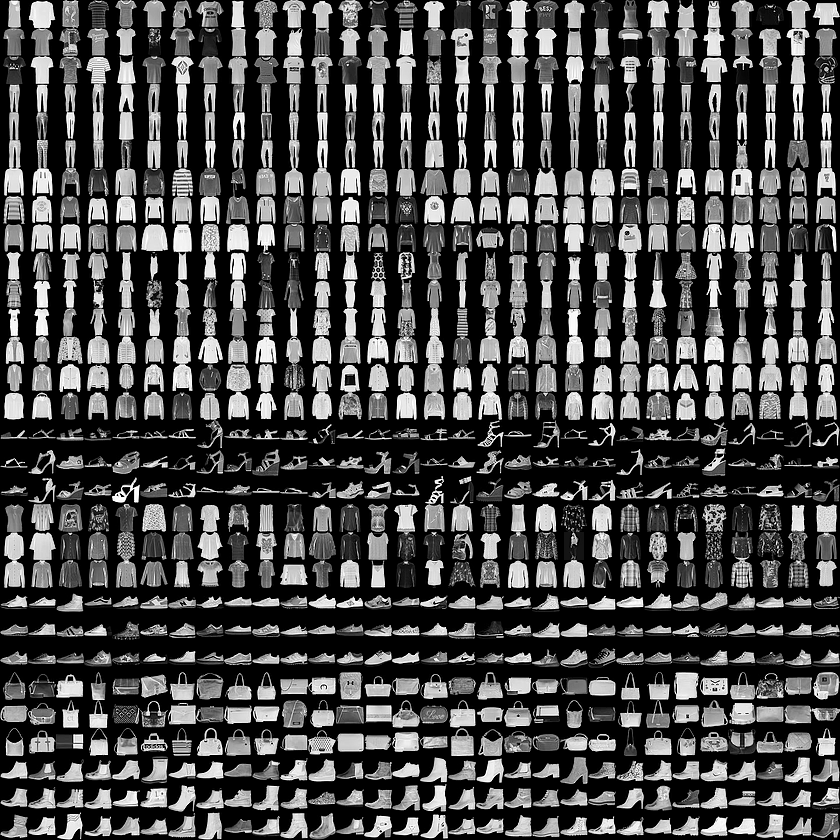

196 | "The **[Fashion MNIST](https://github.com/zalandoresearch/fashion-mnist)** dataset contains 70,000 grayscale images in 10 categories. The images show individual articles of clothing at low resolution (28 by 28 pixels), as seen here:\n",

197 | "\n",

198 | ""

199 | ]

200 | },

201 | {

202 | "cell_type": "markdown",

203 | "metadata": {

204 | "id": "Sguh6K9CWfBO"

205 | },

206 | "source": [

207 | "## Baseline Model"

208 | ]

209 | },

210 | {

211 | "cell_type": "code",

212 | "metadata": {

213 | "colab": {

214 | "base_uri": "https://localhost:8080/"

215 | },

216 | "id": "6t8m1DhyKpZa",

217 | "outputId": "95e33ff8-7a78-4fd0-b860-8f8d59ba2310"

218 | },

219 | "source": [

220 | "fashion_mnist = tf.keras.datasets.fashion_mnist\n",

221 | "(x_train, y_train), (x_test, y_test) = fashion_mnist.load_data()\n",

222 | "\n",

223 | "# Keep the integer test labels (before one-hot encoding)\n",

224 | "test_labels = y_test\n",

225 | "\n",

226 | "x_train = x_train.astype(\"float32\") / 255.0\n",

227 | "x_train = np.reshape(x_train, (-1, 28, 28, 1))\n",

228 | "y_train = tf.one_hot(y_train, 10)\n",

229 | "\n",

230 | "x_test = x_test.astype(\"float32\") / 255.0\n",

231 | "x_test = np.reshape(x_test, (-1, 28, 28, 1))\n",

232 | "y_test = tf.one_hot(y_test, 10)"

233 | ],

234 | "execution_count": 5,

235 | "outputs": [

236 | {

237 | "output_type": "stream",

238 | "text": [

239 | "Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/train-labels-idx1-ubyte.gz\n",

240 | "32768/29515 [=================================] - 0s 0us/step\n",

241 | "Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/train-images-idx3-ubyte.gz\n",

242 | "26427392/26421880 [==============================] - 0s 0us/step\n",

243 | "Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/t10k-labels-idx1-ubyte.gz\n",

244 | "8192/5148 [===============================================] - 0s 0us/step\n",

245 | "Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/t10k-images-idx3-ubyte.gz\n",

246 | "4423680/4422102 [==============================] - 0s 0us/step\n"

247 | ],

248 | "name": "stdout"

249 | }

250 | ]

251 | },

252 | {

253 | "cell_type": "code",

254 | "metadata": {

255 | "id": "ypSVOZOoOlCi"

256 | },

257 | "source": [

258 | "class_name = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat', 'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle Boot']"

259 | ],

260 | "execution_count": 6,

261 | "outputs": []

262 | },

263 | {

264 | "cell_type": "markdown",

265 | "metadata": {

266 | "id": "To8FXs70MQAD"

267 | },

268 | "source": [

269 | "#### Display the shapes of the training and testing images and labels"

270 | ]

271 | },

272 | {

273 | "cell_type": "code",

274 | "metadata": {

275 | "colab": {

276 | "base_uri": "https://localhost:8080/"

277 | },

278 | "id": "VhxoNwadKMyq",

279 | "outputId": "c63bd33f-f40a-4e59-d063-464c1a00f3af"

280 | },

281 | "source": [

282 | "print(\"Training Image Shape: \",x_train.shape)\n",

283 | "print(\"Training Label Shape\", y_train.shape)\n",

284 | "print(\"Testing Image Shape: \",x_test.shape)\n",

285 | "print(\"Testing Label Shape\", y_test.shape)"

286 | ],

287 | "execution_count": 7,

288 | "outputs": [

289 | {

290 | "output_type": "stream",

291 | "text": [

292 | "Training Image Shape: (60000, 28, 28, 1)\n",

293 | "Training Label Shape (60000, 10)\n",

294 | "Testing Image Shape: (10000, 28, 28, 1)\n",

295 | "Testing Label Shape (10000, 10)\n"

296 | ],

297 | "name": "stdout"

298 | }

299 | ]

300 | },

301 | {

302 | "cell_type": "markdown",

303 | "metadata": {

304 | "id": "LtttX65TMblr"

305 | },

306 | "source": [

307 | "### Define the Hyperparameters"

308 | ]

309 | },

310 | {

311 | "cell_type": "code",

312 | "metadata": {

313 | "id": "zU2puLX0MHxF"

314 | },

315 | "source": [

316 | "AUTO = tf.data.AUTOTUNE\n",

317 | "BATCH_SIZE = 64\n",

318 | "EPOCHS = 10\n",

319 | "NUM_CLASSES = 10"

320 | ],

321 | "execution_count": 8,

322 | "outputs": []

323 | },

324 | {

325 | "cell_type": "markdown",

326 | "metadata": {

327 | "id": "bZHWAHlrMh5z"

328 | },

329 | "source": [

330 | "### Creating the Data Pipeline"

331 | ]

332 | },

333 | {

334 | "cell_type": "code",

335 | "metadata": {

336 | "id": "LRJJrk5fMfuo"

337 | },

338 | "source": [

339 | "train_ds = tf.data.Dataset.from_tensor_slices((x_train, y_train))\n",

340 | "\n",

341 | "train_ds = (\n",

342 | " train_ds\n",

343 | " .shuffle(BATCH_SIZE * 100)\n",

344 | " .batch(BATCH_SIZE)\n",

345 | ")\n",

346 | "\n",

347 | "test_ds = tf.data.Dataset.from_tensor_slices((x_test, y_test))\n",

348 | "\n",

349 | "test_ds = (\n",

350 | " test_ds\n",

351 | " .batch(BATCH_SIZE)\n",

352 | ")"

353 | ],

354 | "execution_count": 9,

355 | "outputs": []

356 | },

357 | {

358 | "cell_type": "markdown",

359 | "metadata": {

360 | "id": "FFKt67u-OOuZ"

361 | },

362 | "source": [

363 | "___Pipeline___ is ready!"

364 | ]

365 | },

366 | {

367 | "cell_type": "markdown",

368 | "metadata": {

369 | "id": "9c7Zdm8HOWLX"

370 | },

371 | "source": [

372 | "### Visualise the Training Images"

373 | ]

374 | },

375 | {

376 | "cell_type": "code",

377 | "metadata": {

378 | "colab": {

379 | "base_uri": "https://localhost:8080/",

380 | "height": 591

381 | },

382 | "id": "uv3BXZ5HN-eT",

383 | "outputId": "bdc959c5-9bbc-4caa-94f4-d1882cc5756e"

384 | },

385 | "source": [

386 | "sample_images, sample_labels = next(iter(train_ds))\n",

387 | "plt.figure(figsize=(10, 10))\n",

388 | "for i, (image, label) in enumerate(zip(sample_images[:9], sample_labels[:9])):\n",

389 | " ax = plt.subplot(3, 3, i + 1)\n",

390 | " plt.imshow(image.numpy().squeeze())\n",

391 | " plt.title(class_name[np.argmax(label.numpy())])\n",

392 | " plt.axis(\"off\")"

393 | ],

394 | "execution_count": 10,

395 | "outputs": [

396 | {

397 | "output_type": "display_data",

398 | "data": {