;

7 |

8 | We provide PyTorch implementations for both unpaired and paired image-to-image translation.

9 |

10 | The code was written by [Jun-Yan Zhu](https://github.com/junyanz) and [Taesung Park](https://github.com/taesung), and supported by [Tongzhou Wang](https://ssnl.github.io/).

11 |

12 | This PyTorch implementation produces results comparable to or better than our original Torch software. If you would like to reproduce the same results as in the papers, check out the original [CycleGAN Torch](https://github.com/junyanz/CycleGAN) and [pix2pix Torch](https://github.com/phillipi/pix2pix) code

13 |

14 | **Note**: The current software works well with PyTorch 0.41+. Check out the older [branch](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/tree/pytorch0.3.1) that supports PyTorch 0.1-0.3.

15 |

16 | You may find useful information in [training/test tips](docs/tips.md) and [frequently asked questions](docs/qa.md). To implement custom models and datasets, check out our [templates](#custom-model-and-dataset). To help users better understand and adapt our codebase, we provide an [overview](docs/overview.md) of the code structure of this repository.

17 |

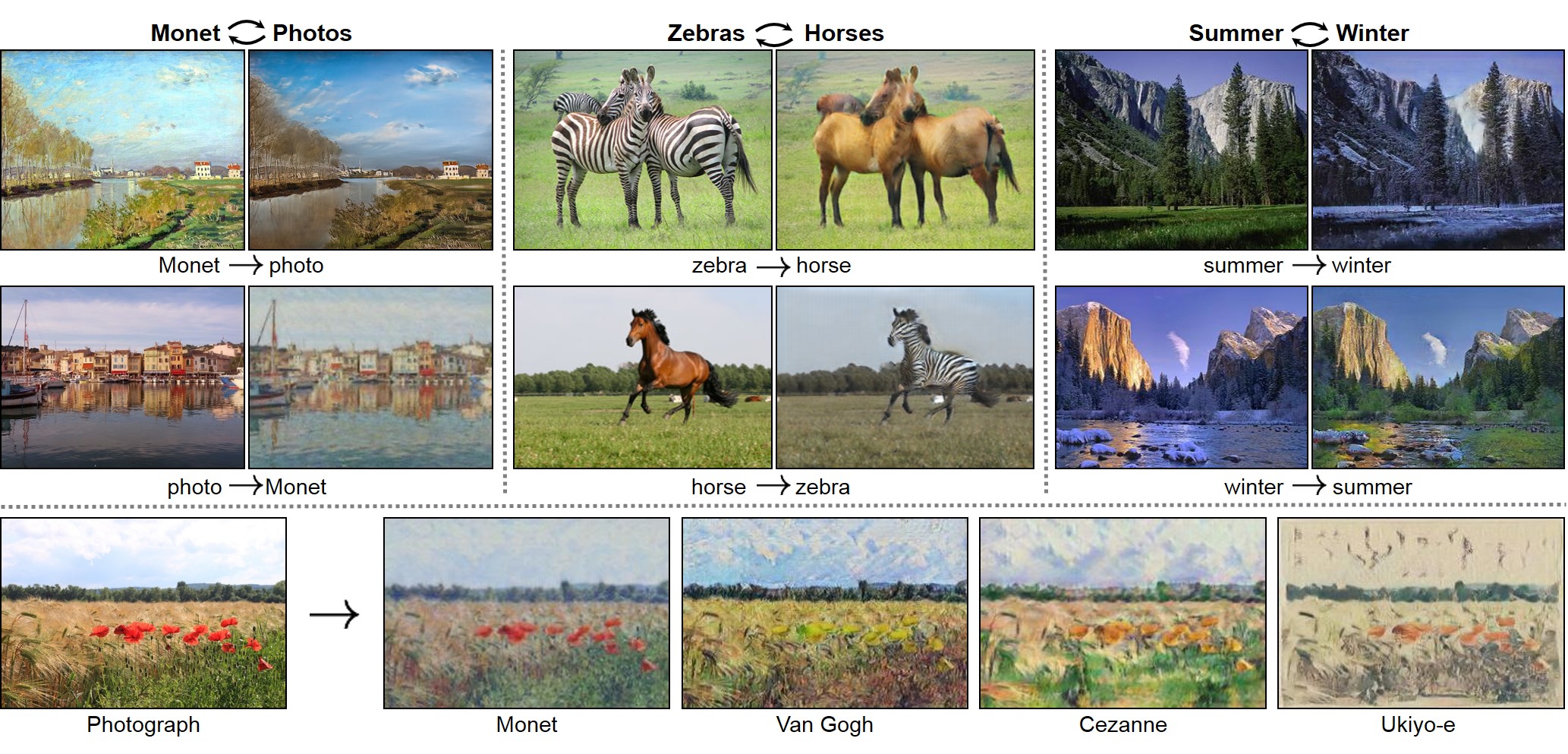

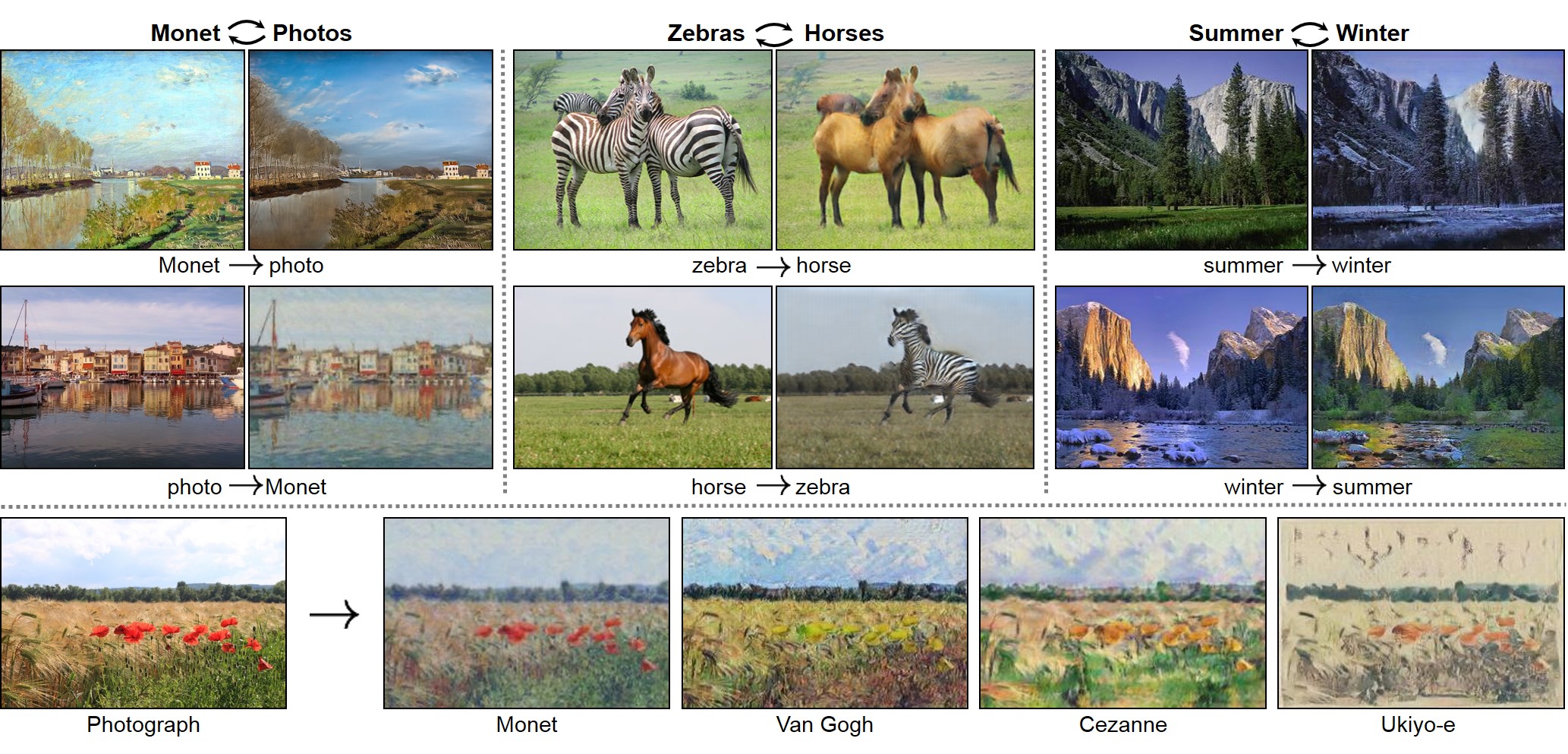

18 | **CycleGAN: [Project](https://junyanz.github.io/CycleGAN/) | [Paper](https://arxiv.org/pdf/1703.10593.pdf) | [Torch](https://github.com/junyanz/CycleGAN)**

19 |  20 |

21 |

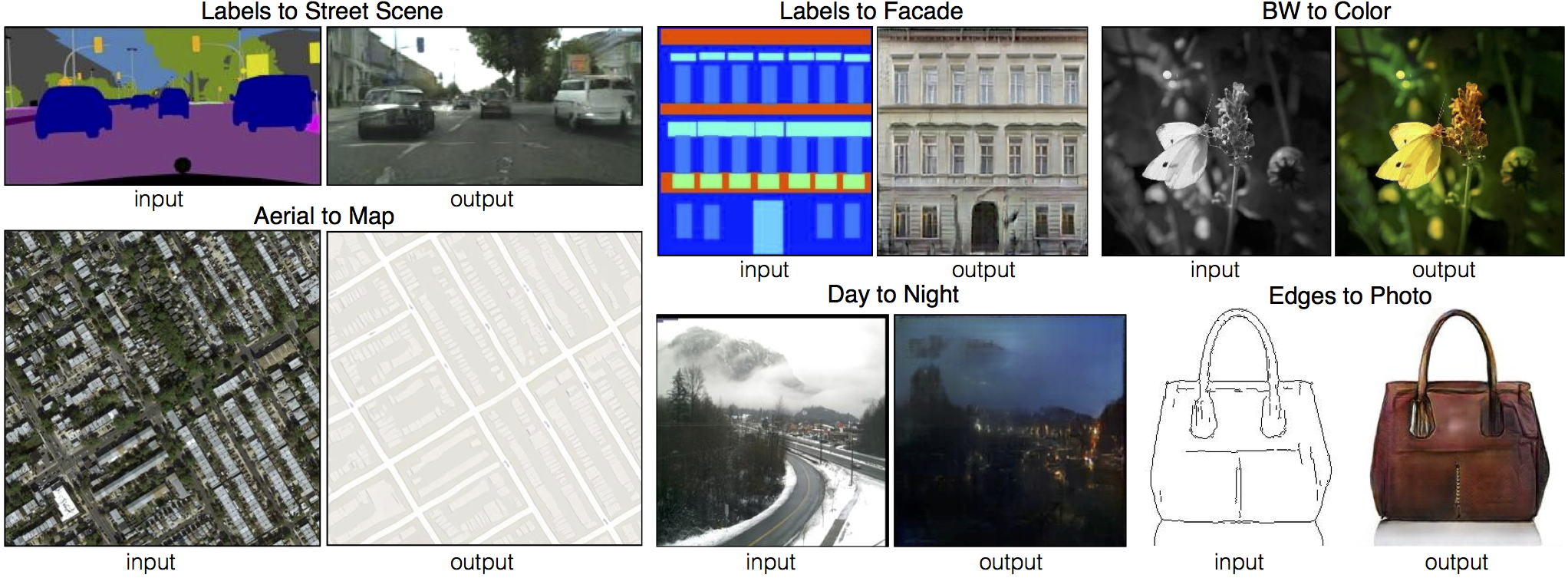

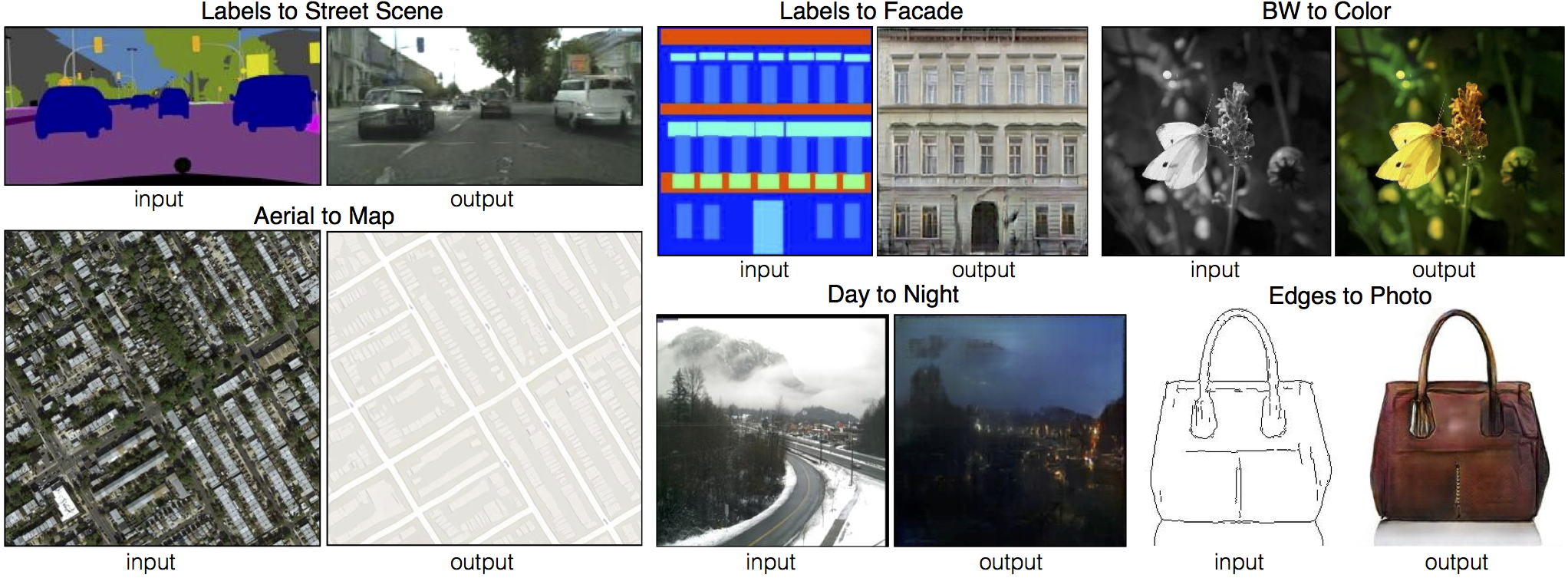

22 | **Pix2pix: [Project](https://phillipi.github.io/pix2pix/) | [Paper](https://arxiv.org/pdf/1611.07004.pdf) | [Torch](https://github.com/phillipi/pix2pix)**

23 |

24 |

20 |

21 |

22 | **Pix2pix: [Project](https://phillipi.github.io/pix2pix/) | [Paper](https://arxiv.org/pdf/1611.07004.pdf) | [Torch](https://github.com/phillipi/pix2pix)**

23 |

24 |  25 |

26 |

27 | **[EdgesCats Demo](https://affinelayer.com/pixsrv/) | [pix2pix-tensorflow](https://github.com/affinelayer/pix2pix-tensorflow) | by [Christopher Hesse](https://twitter.com/christophrhesse)**

28 |

29 |

25 |

26 |

27 | **[EdgesCats Demo](https://affinelayer.com/pixsrv/) | [pix2pix-tensorflow](https://github.com/affinelayer/pix2pix-tensorflow) | by [Christopher Hesse](https://twitter.com/christophrhesse)**

28 |

29 |  30 |

31 | If you use this code for your research, please cite:

32 |

33 | Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks.

30 |

31 | If you use this code for your research, please cite:

32 |

33 | Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks.

34 | [Jun-Yan Zhu](https://people.eecs.berkeley.edu/~junyanz/)\*, [Taesung Park](https://taesung.me/)\*, [Phillip Isola](https://people.eecs.berkeley.edu/~isola/), [Alexei A. Efros](https://people.eecs.berkeley.edu/~efros). In ICCV 2017. (* equal contributions) [[Bibtex]](https://junyanz.github.io/CycleGAN/CycleGAN.txt)

35 |

36 |

37 | Image-to-Image Translation with Conditional Adversarial Networks.

38 | [Phillip Isola](https://people.eecs.berkeley.edu/~isola), [Jun-Yan Zhu](https://people.eecs.berkeley.edu/~junyanz), [Tinghui Zhou](https://people.eecs.berkeley.edu/~tinghuiz), [Alexei A. Efros](https://people.eecs.berkeley.edu/~efros). In CVPR 2017. [[Bibtex]](http://people.csail.mit.edu/junyanz/projects/pix2pix/pix2pix.bib)

39 |

40 | ## Talks and Course

41 | pix2pix slides: [keynote](http://efrosgans.eecs.berkeley.edu/CVPR18_slides/pix2pix.key) | [pdf](http://efrosgans.eecs.berkeley.edu/CVPR18_slides/pix2pix.pdf),

42 | CycleGAN slides: [pptx](http://efrosgans.eecs.berkeley.edu/CVPR18_slides/CycleGAN.pptx) | [pdf](http://efrosgans.eecs.berkeley.edu/CVPR18_slides/CycleGAN.pdf)

43 |

44 | CycleGAN course assignment [code](http://www.cs.toronto.edu/~rgrosse/courses/csc321_2018/assignments/a4-code.zip) and [handout](http://www.cs.toronto.edu/~rgrosse/courses/csc321_2018/assignments/a4-handout.pdf) designed by Prof. [Roger Grosse](http://www.cs.toronto.edu/~rgrosse/) for [CSC321](http://www.cs.toronto.edu/~rgrosse/courses/csc321_2018/) "Intro to Neural Networks and Machine Learning" at University of Toronto. Please contact the instructor if you would like to adopt it in your course.

45 |

46 | ## Other implementations

47 | ### CycleGAN

48 | [Tensorflow] (by Harry Yang),

49 | [Tensorflow] (by Archit Rathore),

50 | [Tensorflow] (by Van Huy),

51 | [Tensorflow] (by Xiaowei Hu),

52 | [Tensorflow-simple] (by Zhenliang He),

53 | [TensorLayer] (by luoxier),

54 | [Chainer] (by Yanghua Jin),

55 | [Minimal PyTorch] (by yunjey),

56 | [Mxnet] (by Ldpe2G),

57 | [lasagne/keras] (by tjwei)

58 |

59 |

60 | ### pix2pix

61 | [Tensorflow] (by Christopher Hesse),

62 | [Tensorflow] (by Eyyüb Sariu),

63 | [Tensorflow (face2face)] (by Dat Tran),

64 | [Tensorflow (film)] (by Arthur Juliani),

65 | [Tensorflow (zi2zi)] (by Yuchen Tian),

66 | [Chainer] (by mattya),

67 | [tf/torch/keras/lasagne] (by tjwei),

68 | [Pytorch] (by taey16)

69 |

70 |

71 |

72 | ## Prerequisites

73 | - Linux or macOS

74 | - Python 3

75 | - CPU or NVIDIA GPU + CUDA CuDNN

76 |

77 | ## Getting Started

78 | ### Installation

79 |

80 | - Clone this repo:

81 | ```bash

82 | git clone https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

83 | cd pytorch-CycleGAN-and-pix2pix

84 | ```

85 |

86 | - Install [PyTorch](http://pytorch.org and) 0.4+ and other dependencies (e.g., torchvision, [visdom](https://github.com/facebookresearch/visdom) and [dominate](https://github.com/Knio/dominate)).

87 | - For pip users, please type the command `pip install -r requirements.txt`.

88 | - For Conda users, we provide a installation script `./scripts/conda_deps.sh`. Alternatively, you can create a new Conda environment using `conda env create -f environment.yml`.

89 | - For Docker users, we provide the pre-built Docker image and Dockerfile. Please refer to our [Docker](docs/docker.md) page.

90 |

91 | ### CycleGAN train/test

92 | - Download a CycleGAN dataset (e.g. maps):

93 | ```bash

94 | bash ./datasets/download_cyclegan_dataset.sh maps

95 | ```

96 | - To view training results and loss plots, run `python -m visdom.server` and click the URL http://localhost:8097.

97 | - Train a model:

98 | ```bash

99 | #!./scripts/train_cyclegan.sh

100 | python train.py --dataroot ./datasets/maps --name maps_cyclegan --model cycle_gan

101 | ```

102 | To see more intermediate results, check out `./checkpoints/maps_cyclegan/web/index.html`.

103 | - Test the model:

104 | ```bash

105 | #!./scripts/test_cyclegan.sh

106 | python test.py --dataroot ./datasets/maps --name maps_cyclegan --model cycle_gan

107 | ```

108 | - The test results will be saved to a html file here: `./results/maps_cyclegan/latest_test/index.html`.

109 |

110 | ### pix2pix train/test

111 | - Download a pix2pix dataset (e.g.facades):

112 | ```bash

113 | bash ./datasets/download_pix2pix_dataset.sh facades

114 | ```

115 | - Train a model:

116 | ```bash

117 | #!./scripts/train_pix2pix.sh

118 | python train.py --dataroot ./datasets/facades --name facades_pix2pix --model pix2pix --direction BtoA

119 | ```

120 | - To view training results and loss plots, run `python -m visdom.server` and click the URL http://localhost:8097. To see more intermediate results, check out `./checkpoints/facades_pix2pix/web/index.html`.

121 |

122 | - Test the model (`bash ./scripts/test_pix2pix.sh`):

123 | ```bash

124 | #!./scripts/test_pix2pix.sh

125 | python test.py --dataroot ./datasets/facades --name facades_pix2pix --model pix2pix --direction BtoA

126 | ```

127 | - The test results will be saved to a html file here: `./results/facades_pix2pix/test_latest/index.html`. You can find more scripts at `scripts` directory.

128 | - To train and test pix2pix-based colorization models, please add `--model colorization` and `--dataset_mode colorization`. See our training [tips](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/docs/tips.md#notes-on-colorization) for more details.

129 |

130 | ### Apply a pre-trained model (CycleGAN)

131 | - You can download a pretrained model (e.g. horse2zebra) with the following script:

132 | ```bash

133 | bash ./scripts/download_cyclegan_model.sh horse2zebra

134 | ```

135 | - The pretrained model is saved at `./checkpoints/{name}_pretrained/latest_net_G.pth`. Check [here](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/scripts/download_cyclegan_model.sh#L3) for all the available CycleGAN models.

136 | - To test the model, you also need to download the horse2zebra dataset:

137 | ```bash

138 | bash ./datasets/download_cyclegan_dataset.sh horse2zebra

139 | ```

140 |

141 | - Then generate the results using

142 | ```bash

143 | python test.py --dataroot datasets/horse2zebra/testA --name horse2zebra_pretrained --model test --no_dropout

144 | ```

145 | - The option `--model test` is used for generating results of CycleGAN only for one side. This option will automatically set `--dataset_mode single`, which only loads the images from one set. On the contrary, using `--model cycle_gan` requires loading and generating results in both directions, which is sometimes unnecessary. The results will be saved at `./results/`. Use `--results_dir {directory_path_to_save_result}` to specify the results directory.

146 |

147 | - For your own experiments, you might want to specify `--netG`, `--norm`, `--no_dropout` to match the generator architecture of the trained model.

148 |

149 | ### Apply a pre-trained model (pix2pix)

150 | Download a pre-trained model with `./scripts/download_pix2pix_model.sh`.

151 |

152 | - Check [here](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/scripts/download_pix2pix_model.sh#L3) for all the available pix2pix models. For example, if you would like to download label2photo model on the Facades dataset,

153 | ```bash

154 | bash ./scripts/download_pix2pix_model.sh facades_label2photo

155 | ```

156 | - Download the pix2pix facades datasets:

157 | ```bash

158 | bash ./datasets/download_pix2pix_dataset.sh facades

159 | ```

160 | - Then generate the results using

161 | ```bash

162 | python test.py --dataroot ./datasets/facades/ --direction BtoA --model pix2pix --name facades_label2photo_pretrained

163 | ```

164 | - Note that we specified `--direction BtoA` as Facades dataset's A to B direction is photos to labels.

165 |

166 | - If you would like to apply a pre-trained model to a collection of input images (rather than image pairs), please use `--model test` option. See `./scripts/test_single.sh` for how to apply a model to Facade label maps (stored in the directory `facades/testB`).

167 |

168 | - See a list of currently available models at `./scripts/download_pix2pix_model.sh`

169 |

170 | ## [Docker](docs/docker.md)

171 | We provide the pre-built Docker image and Dockerfile that can run this code repo. See [docker](docs/docker.md).

172 |

173 | ## [Datasets](docs/datasets.md)

174 | Download pix2pix/CycleGAN datasets and create your own datasets.

175 |

176 | ## [Training/Test Tips](docs/tips.md)

177 | Best practice for training and testing your models.

178 |

179 | ## [Frequently Asked Questions](docs/qa.md)

180 | Before you post a new question, please first look at the above Q & A and existing GitHub issues.

181 |

182 | ## Custom Model and Dataset

183 | If you plan to implement custom models and dataset for your new applications, we provide a dataset [template](data/template_dataset.py) and a model [template](models/template_model.py) as a starting point.

184 |

185 | ## [Code structure](docs/overview.md)

186 | To help users better understand and use our code, we briefly overview the functionality and implementation of each package and each module.

187 |

188 | ## Pull Request

189 | You are always welcome to contribute to this repository by sending a [pull request](https://help.github.com/articles/about-pull-requests/).

190 | Please run `flake8 --ignore E501 .` and `python ./scripts/test_before_push.py` before you commit the code. Please also update the code structure [overview](docs/overview.md) accordingly if you add or remove files.

191 |

192 | ## Citation

193 | If you use this code for your research, please cite our papers.

194 | ```

195 | @inproceedings{CycleGAN2017,

196 | title={Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networkss},

197 | author={Zhu, Jun-Yan and Park, Taesung and Isola, Phillip and Efros, Alexei A},

198 | booktitle={Computer Vision (ICCV), 2017 IEEE International Conference on},

199 | year={2017}

200 | }

201 |

202 |

203 | @inproceedings{isola2017image,

204 | title={Image-to-Image Translation with Conditional Adversarial Networks},

205 | author={Isola, Phillip and Zhu, Jun-Yan and Zhou, Tinghui and Efros, Alexei A},

206 | booktitle={Computer Vision and Pattern Recognition (CVPR), 2017 IEEE Conference on},

207 | year={2017}

208 | }

209 | ```

210 |

211 |

212 |

213 | ## Related Projects

214 | **[CycleGAN-Torch](https://github.com/junyanz/CycleGAN) |

215 | [pix2pix-Torch](https://github.com/phillipi/pix2pix) | [pix2pixHD](https://github.com/NVIDIA/pix2pixHD) |

216 | [iGAN](https://github.com/junyanz/iGAN) |

217 | [BicycleGAN](https://github.com/junyanz/BicycleGAN) | [vid2vid](https://tcwang0509.github.io/vid2vid/)**

218 |

219 | ## Cat Paper Collection

220 | If you love cats, and love reading cool graphics, vision, and learning papers, please check out the Cat Paper [Collection](https://github.com/junyanz/CatPapers).

221 |

222 | ## Acknowledgments

223 | Our code is inspired by [pytorch-DCGAN](https://github.com/pytorch/examples/tree/master/dcgan).

224 |

--------------------------------------------------------------------------------

/Untitled Diagram.drawio:

--------------------------------------------------------------------------------

1 |

2 |

3 |

4 |

5 |

6 |

7 |

8 |

9 |

10 |

11 |

12 |

13 |

14 |

15 |

16 |

17 |

18 |

19 |

20 |

21 |

22 |

23 |

24 |

25 |

26 |

27 |

28 |

29 |

--------------------------------------------------------------------------------

/data/__init__.py:

--------------------------------------------------------------------------------

1 | """This package includes all the modules related to data loading and preprocessing

2 |

3 | To add a custom dataset class called 'dummy', you need to add a file called 'dummy_dataset.py' and define a subclass 'DummyDataset' inherited from BaseDataset.

4 | You need to implement four functions:

5 | -- <__init__>: initialize the class, first call BaseDataset.__init__(self, opt).

6 | -- <__len__>: return the size of dataset.

7 | -- <__getitem__>: get a data point from data loader.

8 | -- : (optionally) add dataset-specific options and set default options.

9 |

10 | Now you can use the dataset class by specifying flag '--dataset_mode dummy'.

11 | See our template dataset class 'template_dataset.py' for more details.

12 | """

13 | import importlib

14 | import torch.utils.data

15 | from data.base_dataset import BaseDataset

16 |

17 |

18 | def find_dataset_using_name(dataset_name):

19 | """Import the module "data/[dataset_name]_dataset.py".

20 |

21 | In the file, the class called DatasetNameDataset() will

22 | be instantiated. It has to be a subclass of BaseDataset,

23 | and it is case-insensitive.

24 | """

25 | dataset_filename = "data." + dataset_name + "_dataset"

26 | datasetlib = importlib.import_module(dataset_filename)

27 |

28 | dataset = None

29 | target_dataset_name = dataset_name.replace('_', '') + 'dataset'

30 | for name, cls in datasetlib.__dict__.items():

31 | if name.lower() == target_dataset_name.lower() \

32 | and issubclass(cls, BaseDataset):

33 | dataset = cls

34 |

35 | if dataset is None:

36 | raise NotImplementedError("In %s.py, there should be a subclass of BaseDataset with class name that matches %s in lowercase." % (dataset_filename, target_dataset_name))

37 |

38 | return dataset

39 |

40 |

41 | def get_option_setter(dataset_name):

42 | """Return the static method of the dataset class."""

43 | dataset_class = find_dataset_using_name(dataset_name)

44 | return dataset_class.modify_commandline_options

45 |

46 |

47 | def create_dataset(opt):

48 | """Create a dataset given the option.

49 |

50 | This function wraps the class CustomDatasetDataLoader.

51 | This is the main interface between this package and 'train.py'/'test.py'

52 |

53 | Example:

54 | >>> from data import create_dataset

55 | >>> dataset = create_dataset(opt)

56 | """

57 | data_loader = CustomDatasetDataLoader(opt)

58 | dataset = data_loader.load_data()

59 | return dataset

60 |

61 |

62 | class CustomDatasetDataLoader():

63 | """Wrapper class of Dataset class that performs multi-threaded data loading"""

64 |

65 | def __init__(self, opt):

66 | """Initialize this class

67 |

68 | Step 1: create a dataset instance given the name [dataset_mode]

69 | Step 2: create a multi-threaded data loader.

70 | """

71 | self.opt = opt

72 | dataset_class = find_dataset_using_name(opt.dataset_mode)

73 | self.dataset = dataset_class(opt)

74 | print("dataset [%s] was created" % type(self.dataset).__name__)

75 | self.dataloader = torch.utils.data.DataLoader(

76 | self.dataset,

77 | batch_size=opt.batch_size,

78 | shuffle=not opt.serial_batches,

79 | num_workers=int(opt.num_threads))

80 |

81 | def load_data(self):

82 | return self

83 |

84 | def __len__(self):

85 | """Return the number of data in the dataset"""

86 | return min(len(self.dataset), self.opt.max_dataset_size)

87 |

88 | def __iter__(self):

89 | """Return a batch of data"""

90 | for i, data in enumerate(self.dataloader):

91 | if i * self.opt.batch_size >= self.opt.max_dataset_size:

92 | break

93 | yield data

94 |

--------------------------------------------------------------------------------

/data/aligned_dataset.py:

--------------------------------------------------------------------------------

1 | import os.path

2 | from data.base_dataset import BaseDataset, get_params, get_transform

3 | from data.image_folder import make_dataset

4 | from PIL import Image

5 |

6 |

7 | class AlignedDataset(BaseDataset):

8 | """A dataset class for paired image dataset.

9 |

10 | It assumes that the directory '/path/to/data/train' contains image pairs in the form of {A,B}.

11 | During test time, you need to prepare a directory '/path/to/data/test'.

12 | """

13 |

14 | def __init__(self, opt):

15 | """Initialize this dataset class.

16 |

17 | Parameters:

18 | opt (Option class) -- stores all the experiment flags; needs to be a subclass of BaseOptions

19 | """

20 | BaseDataset.__init__(self, opt)

21 | self.dir_AB = os.path.join(opt.dataroot, opt.phase) # get the image directory

22 | self.AB_paths = sorted(make_dataset(self.dir_AB, opt.max_dataset_size)) # get image paths

23 | assert(self.opt.load_size >= self.opt.crop_size) # crop_size should be smaller than the size of loaded image

24 | self.input_nc = self.opt.output_nc if self.opt.direction == 'BtoA' else self.opt.input_nc

25 | self.output_nc = self.opt.input_nc if self.opt.direction == 'BtoA' else self.opt.output_nc

26 |

27 | def __getitem__(self, index):

28 | """Return a data point and its metadata information.

29 |

30 | Parameters:

31 | index - - a random integer for data indexing

32 |

33 | Returns a dictionary that contains A, B, A_paths and B_paths

34 | A (tensor) - - an image in the input domain

35 | B (tensor) - - its corresponding image in the target domain

36 | A_paths (str) - - image paths

37 | B_paths (str) - - image paths (same as A_paths)

38 | """

39 | # read a image given a random integer index

40 | AB_path = self.AB_paths[index]

41 | AB = Image.open(AB_path).convert('RGB')

42 | # split AB image into A and B

43 | w, h = AB.size

44 | w2 = int(w / 2)

45 | A = AB.crop((0, 0, w2, h))

46 | B = AB.crop((w2, 0, w, h))

47 |

48 | # apply the same transform to both A and B

49 | transform_params = get_params(self.opt, A.size)

50 | A_transform = get_transform(self.opt, transform_params, grayscale=(self.input_nc == 1))

51 | B_transform = get_transform(self.opt, transform_params, grayscale=(self.output_nc == 1))

52 |

53 | A = A_transform(A)

54 | B = B_transform(B)

55 |

56 | return {'A': A, 'B': B, 'A_paths': AB_path, 'B_paths': AB_path}

57 |

58 | def __len__(self):

59 | """Return the total number of images in the dataset."""

60 | return len(self.AB_paths)

61 |

--------------------------------------------------------------------------------

/data/base_dataset.py:

--------------------------------------------------------------------------------

1 | """This module implements an abstract base class (ABC) 'BaseDataset' for datasets.

2 |

3 | It also includes common transformation functions (e.g., get_transform, __scale_width), which can be later used in subclasses.

4 | """

5 | import random

6 | import numpy as np

7 | import torch.utils.data as data

8 | from PIL import Image

9 | import torchvision.transforms as transforms

10 | from abc import ABC, abstractmethod

11 |

12 |

13 | class BaseDataset(data.Dataset, ABC):

14 | """This class is an abstract base class (ABC) for datasets.

15 |

16 | To create a subclass, you need to implement the following four functions:

17 | -- <__init__>: initialize the class, first call BaseDataset.__init__(self, opt).

18 | -- <__len__>: return the size of dataset.

19 | -- <__getitem__>: get a data point.

20 | -- : (optionally) add dataset-specific options and set default options.

21 | """

22 |

23 | def __init__(self, opt):

24 | """Initialize the class; save the options in the class

25 |

26 | Parameters:

27 | opt (Option class)-- stores all the experiment flags; needs to be a subclass of BaseOptions

28 | """

29 | self.opt = opt

30 | self.root = opt.dataroot

31 |

32 | @staticmethod

33 | def modify_commandline_options(parser, is_train):

34 | """Add new dataset-specific options, and rewrite default values for existing options.

35 |

36 | Parameters:

37 | parser -- original option parser

38 | is_train (bool) -- whether training phase or test phase. You can use this flag to add training-specific or test-specific options.

39 |

40 | Returns:

41 | the modified parser.

42 | """

43 | return parser

44 |

45 | @abstractmethod

46 | def __len__(self):

47 | """Return the total number of images in the dataset."""

48 | return 0

49 |

50 | @abstractmethod

51 | def __getitem__(self, index):

52 | """Return a data point and its metadata information.

53 |

54 | Parameters:

55 | index - - a random integer for data indexing

56 |

57 | Returns:

58 | a dictionary of data with their names. It ususally contains the data itself and its metadata information.

59 | """

60 | pass

61 |

62 |

63 | def get_params(opt, size):

64 | w, h = size

65 | new_h = h

66 | new_w = w

67 | if opt.preprocess == 'resize_and_crop':

68 | new_h = new_w = opt.load_size

69 | elif opt.preprocess == 'scale_width_and_crop':

70 | new_w = opt.load_size

71 | new_h = opt.load_size * h // w

72 |

73 | x = random.randint(0, np.maximum(0, new_w - opt.crop_size))

74 | y = random.randint(0, np.maximum(0, new_h - opt.crop_size))

75 |

76 | flip = random.random() > 0.5

77 |

78 | return {'crop_pos': (x, y), 'flip': flip}

79 |

80 |

81 | def get_transform(opt, params=None, grayscale=False, method=Image.BICUBIC, convert=True):

82 | transform_list = []

83 | if grayscale:

84 | transform_list.append(transforms.Grayscale(1))

85 | if 'resize' in opt.preprocess:

86 | osize = [opt.load_size, opt.load_size]

87 | transform_list.append(transforms.Resize(osize, method))

88 | elif 'scale_width' in opt.preprocess:

89 | transform_list.append(transforms.Lambda(lambda img: __scale_width(img, opt.load_size, method)))

90 |

91 | if 'crop' in opt.preprocess:

92 | if params is None:

93 | transform_list.append(transforms.RandomCrop(opt.crop_size))

94 | else:

95 | transform_list.append(transforms.Lambda(lambda img: __crop(img, params['crop_pos'], opt.crop_size)))

96 |

97 | if opt.preprocess == 'none':

98 | transform_list.append(transforms.Lambda(lambda img: __make_power_2(img, base=4, method=method)))

99 |

100 | if not opt.no_flip:

101 | if params is None:

102 | transform_list.append(transforms.RandomHorizontalFlip())

103 | elif params['flip']:

104 | transform_list.append(transforms.Lambda(lambda img: __flip(img, params['flip'])))

105 |

106 | if convert:

107 | transform_list += [transforms.ToTensor()]

108 | if grayscale:

109 | transform_list += [transforms.Normalize((0.5,), (0.5,))]

110 | else:

111 | transform_list += [transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]

112 | return transforms.Compose(transform_list)

113 |

114 |

115 | def __make_power_2(img, base, method=Image.BICUBIC):

116 | ow, oh = img.size

117 | h = int(round(oh / base) * base)

118 | w = int(round(ow / base) * base)

119 | if (h == oh) and (w == ow):

120 | return img

121 |

122 | __print_size_warning(ow, oh, w, h)

123 | return img.resize((w, h), method)

124 |

125 |

126 | def __scale_width(img, target_width, method=Image.BICUBIC):

127 | ow, oh = img.size

128 | if (ow == target_width):

129 | return img

130 | w = target_width

131 | h = int(target_width * oh / ow)

132 | return img.resize((w, h), method)

133 |

134 |

135 | def __crop(img, pos, size):

136 | ow, oh = img.size

137 | x1, y1 = pos

138 | tw = th = size

139 | if (ow > tw or oh > th):

140 | return img.crop((x1, y1, x1 + tw, y1 + th))

141 | return img

142 |

143 |

144 | def __flip(img, flip):

145 | if flip:

146 | return img.transpose(Image.FLIP_LEFT_RIGHT)

147 | return img

148 |

149 |

150 | def __print_size_warning(ow, oh, w, h):

151 | """Print warning information about image size(only print once)"""

152 | if not hasattr(__print_size_warning, 'has_printed'):

153 | print("The image size needs to be a multiple of 4. "

154 | "The loaded image size was (%d, %d), so it was adjusted to "

155 | "(%d, %d). This adjustment will be done to all images "

156 | "whose sizes are not multiples of 4" % (ow, oh, w, h))

157 | __print_size_warning.has_printed = True

158 |

--------------------------------------------------------------------------------

/data/colorization_dataset.py:

--------------------------------------------------------------------------------

1 | import os.path

2 | from data.base_dataset import BaseDataset, get_transform

3 | from data.image_folder import make_dataset

4 | from skimage import color # require skimage

5 | from PIL import Image

6 | import numpy as np

7 | import torchvision.transforms as transforms

8 |

9 |

10 | class ColorizationDataset(BaseDataset):

11 | """This dataset class can load a set of natural images in RGB, and convert RGB format into (L, ab) pairs in Lab color space.

12 |

13 | This dataset is required by pix2pix-based colorization model ('--model colorization')

14 | """

15 | @staticmethod

16 | def modify_commandline_options(parser, is_train):

17 | """Add new dataset-specific options, and rewrite default values for existing options.

18 |

19 | Parameters:

20 | parser -- original option parser

21 | is_train (bool) -- whether training phase or test phase. You can use this flag to add training-specific or test-specific options.

22 |

23 | Returns:

24 | the modified parser.

25 |

26 | By default, the number of channels for input image is 1 (L) and

27 | the nubmer of channels for output image is 2 (ab). The direction is from A to B

28 | """

29 | parser.set_defaults(input_nc=1, output_nc=2, direction='AtoB')

30 | return parser

31 |

32 | def __init__(self, opt):

33 | """Initialize this dataset class.

34 |

35 | Parameters:

36 | opt (Option class) -- stores all the experiment flags; needs to be a subclass of BaseOptions

37 | """

38 | BaseDataset.__init__(self, opt)

39 | self.dir = os.path.join(opt.dataroot)

40 | self.AB_paths = sorted(make_dataset(self.dir, opt.max_dataset_size))

41 | assert(opt.input_nc == 1 and opt.output_nc == 2 and opt.direction == 'AtoB')

42 | self.transform = get_transform(self.opt, convert=False)

43 |

44 | def __getitem__(self, index):

45 | """Return a data point and its metadata information.

46 |

47 | Parameters:

48 | index - - a random integer for data indexing

49 |

50 | Returns a dictionary that contains A, B, A_paths and B_paths

51 | A (tensor) - - the L channel of an image

52 | B (tensor) - - the ab channels of the same image

53 | A_paths (str) - - image paths

54 | B_paths (str) - - image paths (same as A_paths)

55 | """

56 | path = self.AB_paths[index]

57 | im = Image.open(path).convert('RGB')

58 | im = self.transform(im)

59 | im = np.array(im)

60 | lab = color.rgb2lab(im).astype(np.float32)

61 | lab_t = transforms.ToTensor()(lab)

62 | A = lab_t[[0], ...] / 50.0 - 1.0

63 | B = lab_t[[1, 2], ...] / 110.0

64 | return {'A': A, 'B': B, 'A_paths': path, 'B_paths': path}

65 |

66 | def __len__(self):

67 | """Return the total number of images in the dataset."""

68 | return len(self.AB_paths)

69 |

--------------------------------------------------------------------------------

/data/image_folder.py:

--------------------------------------------------------------------------------

1 | """A modified image folder class

2 |

3 | We modify the official PyTorch image folder (https://github.com/pytorch/vision/blob/master/torchvision/datasets/folder.py)

4 | so that this class can load images from both current directory and its subdirectories.

5 | """

6 |

7 | import torch.utils.data as data

8 |

9 | from PIL import Image

10 | import os

11 | import os.path

12 |

13 | IMG_EXTENSIONS = [

14 | '.jpg', '.JPG', '.jpeg', '.JPEG',

15 | '.png', '.PNG', '.ppm', '.PPM', '.bmp', '.BMP',

16 | ]

17 |

18 |

19 | def is_image_file(filename):

20 | return any(filename.endswith(extension) for extension in IMG_EXTENSIONS)

21 |

22 |

23 | def make_dataset(dir, max_dataset_size=float("inf")):

24 | images = []

25 | assert os.path.isdir(dir), '%s is not a valid directory' % dir

26 |

27 | for root, _, fnames in sorted(os.walk(dir)):

28 | for fname in fnames:

29 | if is_image_file(fname):

30 | path = os.path.join(root, fname)

31 | images.append(path)

32 | return images[:min(max_dataset_size, len(images))]

33 |

34 |

35 | def default_loader(path):

36 | return Image.open(path).convert('RGB')

37 |

38 |

39 | class ImageFolder(data.Dataset):

40 |

41 | def __init__(self, root, transform=None, return_paths=False,

42 | loader=default_loader):

43 | imgs = make_dataset(root)

44 | if len(imgs) == 0:

45 | raise(RuntimeError("Found 0 images in: " + root + "\n"

46 | "Supported image extensions are: " +

47 | ",".join(IMG_EXTENSIONS)))

48 |

49 | self.root = root

50 | self.imgs = imgs

51 | self.transform = transform

52 | self.return_paths = return_paths

53 | self.loader = loader

54 |

55 | def __getitem__(self, index):

56 | path = self.imgs[index]

57 | img = self.loader(path)

58 | if self.transform is not None:

59 | img = self.transform(img)

60 | if self.return_paths:

61 | return img, path

62 | else:

63 | return img

64 |

65 | def __len__(self):

66 | return len(self.imgs)

67 |

--------------------------------------------------------------------------------

/data/single_dataset.py:

--------------------------------------------------------------------------------

1 | from data.base_dataset import BaseDataset, get_transform

2 | from data.image_folder import make_dataset

3 | from PIL import Image

4 |

5 |

6 | class SingleDataset(BaseDataset):

7 | """This dataset class can load a set of images specified by the path --dataroot /path/to/data.

8 |

9 | It can be used for generating CycleGAN results only for one side with the model option '-model test'.

10 | """

11 |

12 | def __init__(self, opt):

13 | """Initialize this dataset class.

14 |

15 | Parameters:

16 | opt (Option class) -- stores all the experiment flags; needs to be a subclass of BaseOptions

17 | """

18 | BaseDataset.__init__(self, opt)

19 | self.A_paths = sorted(make_dataset(opt.dataroot, opt.max_dataset_size))

20 | input_nc = self.opt.output_nc if self.opt.direction == 'BtoA' else self.opt.input_nc

21 | self.transform = get_transform(opt, grayscale=(input_nc == 1))

22 |

23 | def __getitem__(self, index):

24 | """Return a data point and its metadata information.

25 |

26 | Parameters:

27 | index - - a random integer for data indexing

28 |

29 | Returns a dictionary that contains A and A_paths

30 | A(tensor) - - an image in one domain

31 | A_paths(str) - - the path of the image

32 | """

33 | A_path = self.A_paths[index]

34 | A_img = Image.open(A_path).convert('RGB')

35 | A = self.transform(A_img)

36 | return {'A': A, 'A_paths': A_path}

37 |

38 | def __len__(self):

39 | """Return the total number of images in the dataset."""

40 | return len(self.A_paths)

41 |

--------------------------------------------------------------------------------

/data/template_dataset.py:

--------------------------------------------------------------------------------

1 | """Dataset class template

2 |

3 | This module provides a template for users to implement custom datasets.

4 | You can specify '--dataset_mode template' to use this dataset.

5 | The class name should be consistent with both the filename and its dataset_mode option.

6 | The filename should be _dataset.py

7 | The class name should be Dataset.py

8 | You need to implement the following functions:

9 | -- : Add dataset-specific options and rewrite default values for existing options.

10 | -- <__init__>: Initialize this dataset class.

11 | -- <__getitem__>: Return a data point and its metadata information.

12 | -- <__len__>: Return the number of images.

13 | """

14 | from data.base_dataset import BaseDataset, get_transform

15 | # from data.image_folder import make_dataset

16 | # from PIL import Image

17 |

18 |

19 | class TemplateDataset(BaseDataset):

20 | """A template dataset class for you to implement custom datasets."""

21 | @staticmethod

22 | def modify_commandline_options(parser, is_train):

23 | """Add new dataset-specific options, and rewrite default values for existing options.

24 |

25 | Parameters:

26 | parser -- original option parser

27 | is_train (bool) -- whether training phase or test phase. You can use this flag to add training-specific or test-specific options.

28 |

29 | Returns:

30 | the modified parser.

31 | """

32 | parser.add_argument('--new_dataset_option', type=float, default=1.0, help='new dataset option')

33 | parser.set_defaults(max_dataset_size=10, new_dataset_option=2.0) # specify dataset-specific default values

34 | return parser

35 |

36 | def __init__(self, opt):

37 | """Initialize this dataset class.

38 |

39 | Parameters:

40 | opt (Option class) -- stores all the experiment flags; needs to be a subclass of BaseOptions

41 |

42 | A few things can be done here.

43 | - save the options (have been done in BaseDataset)

44 | - get image paths and meta information of the dataset.

45 | - define the image transformation.

46 | """

47 | # save the option and dataset root

48 | BaseDataset.__init__(self, opt)

49 | # get the image paths of your dataset;

50 | self.image_paths = [] # You can call sorted(make_dataset(self.root, opt.max_dataset_size)) to get all the image paths under the directory self.root

51 | # define the default transform function. You can use ; You can also define your custom transform function

52 | self.transform = get_transform(opt)

53 |

54 | def __getitem__(self, index):

55 | """Return a data point and its metadata information.

56 |

57 | Parameters:

58 | index -- a random integer for data indexing

59 |

60 | Returns:

61 | a dictionary of data with their names. It usually contains the data itself and its metadata information.

62 |

63 | Step 1: get a random image path: e.g., path = self.image_paths[index]

64 | Step 2: load your data from the disk: e.g., image = Image.open(path).convert('RGB').

65 | Step 3: convert your data to a PyTorch tensor. You can use helpder functions such as self.transform. e.g., data = self.transform(image)

66 | Step 4: return a data point as a dictionary.

67 | """

68 | path = 'temp' # needs to be a string

69 | data_A = None # needs to be a tensor

70 | data_B = None # needs to be a tensor

71 | return {'data_A': data_A, 'data_B': data_B, 'path': path}

72 |

73 | def __len__(self):

74 | """Return the total number of images."""

75 | return len(self.image_paths)

76 |

--------------------------------------------------------------------------------

/data/unaligned_dataset.py:

--------------------------------------------------------------------------------

1 | import os.path

2 | from data.base_dataset import BaseDataset, get_transform

3 | from data.image_folder import make_dataset

4 | from PIL import Image

5 | import random

6 |

7 |

8 | class UnalignedDataset(BaseDataset):

9 | """

10 | This dataset class can load unaligned/unpaired datasets.

11 |

12 | It requires two directories to host training images from domain A '/path/to/data/trainA'

13 | and from domain B '/path/to/data/trainB' respectively.

14 | You can train the model with the dataset flag '--dataroot /path/to/data'.

15 | Similarly, you need to prepare two directories:

16 | '/path/to/data/testA' and '/path/to/data/testB' during test time.

17 | """

18 |

19 | def __init__(self, opt):

20 | """Initialize this dataset class.

21 |

22 | Parameters:

23 | opt (Option class) -- stores all the experiment flags; needs to be a subclass of BaseOptions

24 | """

25 | BaseDataset.__init__(self, opt)

26 | self.dir_A = os.path.join(opt.dataroot, opt.phase + 'A') # create a path '/path/to/data/trainA'

27 | self.dir_B = os.path.join(opt.dataroot, opt.phase + 'B') # create a path '/path/to/data/trainB'

28 |

29 | self.A_paths = sorted(make_dataset(self.dir_A, opt.max_dataset_size)) # load images from '/path/to/data/trainA'

30 | self.B_paths = sorted(make_dataset(self.dir_B, opt.max_dataset_size)) # load images from '/path/to/data/trainB'

31 | self.A_size = len(self.A_paths) # get the size of dataset A

32 | self.B_size = len(self.B_paths) # get the size of dataset B

33 | btoA = self.opt.direction == 'BtoA'

34 | input_nc = self.opt.output_nc if btoA else self.opt.input_nc # get the number of channels of input image

35 | output_nc = self.opt.input_nc if btoA else self.opt.output_nc # get the number of channels of output image

36 | self.transform_A = get_transform(self.opt, grayscale=(input_nc == 1))

37 | self.transform_B = get_transform(self.opt, grayscale=(output_nc == 1))

38 |

39 | def __getitem__(self, index):

40 | """Return a data point and its metadata information.

41 |

42 | Parameters:

43 | index (int) -- a random integer for data indexing

44 |

45 | Returns a dictionary that contains A, B, A_paths and B_paths

46 | A (tensor) -- an image in the input domain

47 | B (tensor) -- its corresponding image in the target domain

48 | A_paths (str) -- image paths

49 | B_paths (str) -- image paths

50 | """

51 | A_path = self.A_paths[index % self.A_size] # make sure index is within then range

52 | if self.opt.serial_batches: # make sure index is within then range

53 | index_B = index % self.B_size

54 | else: # randomize the index for domain B to avoid fixed pairs.

55 | index_B = random.randint(0, self.B_size - 1)

56 | B_path = self.B_paths[index_B]

57 | A_img = Image.open(A_path).convert('RGB')

58 | B_img = Image.open(B_path).convert('RGB')

59 | # apply image transformation

60 | A = self.transform_A(A_img)

61 | B = self.transform_B(B_img)

62 |

63 | return {'A': A, 'B': B, 'A_paths': A_path, 'B_paths': B_path}

64 |

65 | def __len__(self):

66 | """Return the total number of images in the dataset.

67 |

68 | As we have two datasets with potentially different number of images,

69 | we take a maximum of

70 | """

71 | return max(self.A_size, self.B_size)

72 |

--------------------------------------------------------------------------------

/docs/Dockerfile:

--------------------------------------------------------------------------------

1 | FROM nvidia/cuda:9.0-base

2 |

3 | RUN apt update && apt install -y wget unzip curl bzip2 git

4 | RUN curl -LO http://repo.continuum.io/miniconda/Miniconda-latest-Linux-x86_64.sh

5 | RUN bash Miniconda-latest-Linux-x86_64.sh -p /miniconda -b

6 | RUN rm Miniconda-latest-Linux-x86_64.sh

7 | ENV PATH=/miniconda/bin:${PATH}

8 | RUN conda update -y conda

9 |

10 | RUN conda install -y pytorch torchvision -c pytorch

11 | RUN mkdir /workspace/ && cd /workspace/ && git clone https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix.git && cd pytorch-CycleGAN-and-pix2pix && pip install -r requirements.txt

12 |

13 | WORKDIR /workspace

--------------------------------------------------------------------------------

/docs/datasets.md:

--------------------------------------------------------------------------------

1 |

2 |

3 | ### CycleGAN Datasets

4 | Download the CycleGAN datasets using the following script. Some of the datasets are collected by other researchers. Please cite their papers if you use the data.

5 | ```bash

6 | bash ./datasets/download_cyclegan_dataset.sh dataset_name

7 | ```

8 | - `facades`: 400 images from the [CMP Facades dataset](http://cmp.felk.cvut.cz/~tylecr1/facade). [[Citation](datasets/bibtex/facades.tex)]

9 | - `cityscapes`: 2975 images from the [Cityscapes training set](https://www.cityscapes-dataset.com). [[Citation](datasets/bibtex/cityscapes.tex)]

10 | - `maps`: 1096 training images scraped from Google Maps.

11 | - `horse2zebra`: 939 horse images and 1177 zebra images downloaded from [ImageNet](http://www.image-net.org) using keywords `wild horse` and `zebra`

12 | - `apple2orange`: 996 apple images and 1020 orange images downloaded from [ImageNet](http://www.image-net.org) using keywords `apple` and `navel orange`.

13 | - `summer2winter_yosemite`: 1273 summer Yosemite images and 854 winter Yosemite images were downloaded using Flickr API. See more details in our paper.

14 | - `monet2photo`, `vangogh2photo`, `ukiyoe2photo`, `cezanne2photo`: The art images were downloaded from [Wikiart](https://www.wikiart.org/). The real photos are downloaded from Flickr using the combination of the tags *landscape* and *landscapephotography*. The training set size of each class is Monet:1074, Cezanne:584, Van Gogh:401, Ukiyo-e:1433, Photographs:6853.

15 | - `iphone2dslr_flower`: both classes of images were downlaoded from Flickr. The training set size of each class is iPhone:1813, DSLR:3316. See more details in our paper.

16 |

17 | To train a model on your own datasets, you need to create a data folder with two subdirectories `trainA` and `trainB` that contain images from domain A and B. You can test your model on your training set by setting `--phase train` in `test.py`. You can also create subdirectories `testA` and `testB` if you have test data.

18 |

19 | You should **not** expect our method to work on just any random combination of input and output datasets (e.g. `cats<->keyboards`). From our experiments, we find it works better if two datasets share similar visual content. For example, `landscape painting<->landscape photographs` works much better than `portrait painting <-> landscape photographs`. `zebras<->horses` achieves compelling results while `cats<->dogs` completely fails.

20 |

21 | ### pix2pix datasets

22 | Download the pix2pix datasets using the following script. Some of the datasets are collected by other researchers. Please cite their papers if you use the data.

23 | ```bash

24 | bash ./datasets/download_pix2pix_dataset.sh dataset_name

25 | ```

26 | - `facades`: 400 images from [CMP Facades dataset](http://cmp.felk.cvut.cz/~tylecr1/facade). [[Citation](datasets/bibtex/facades.tex)]

27 | - `cityscapes`: 2975 images from the [Cityscapes training set](https://www.cityscapes-dataset.com). [[Citation](datasets/bibtex/cityscapes.tex)]

28 | - `maps`: 1096 training images scraped from Google Maps

29 | - `edges2shoes`: 50k training images from [UT Zappos50K dataset](http://vision.cs.utexas.edu/projects/finegrained/utzap50k). Edges are computed by [HED](https://github.com/s9xie/hed) edge detector + post-processing. [[Citation](datasets/bibtex/shoes.tex)]

30 | - `edges2handbags`: 137K Amazon Handbag images from [iGAN project](https://github.com/junyanz/iGAN). Edges are computed by [HED](https://github.com/s9xie/hed) edge detector + post-processing. [[Citation](datasets/bibtex/handbags.tex)]

31 | - `night2day`: around 20K natural scene images from [Transient Attributes dataset](http://transattr.cs.brown.edu/) [[Citation](datasets/bibtex/transattr.tex)]. To train a `day2night` pix2pix model, you need to add `--direction BtoA`.

32 |

33 | We provide a python script to generate pix2pix training data in the form of pairs of images {A,B}, where A and B are two different depictions of the same underlying scene. For example, these might be pairs {label map, photo} or {bw image, color image}. Then we can learn to translate A to B or B to A:

34 |

35 | Create folder `/path/to/data` with subfolders `A` and `B`. `A` and `B` should each have their own subfolders `train`, `val`, `test`, etc. In `/path/to/data/A/train`, put training images in style A. In `/path/to/data/B/train`, put the corresponding images in style B. Repeat same for other data splits (`val`, `test`, etc).

36 |

37 | Corresponding images in a pair {A,B} must be the same size and have the same filename, e.g., `/path/to/data/A/train/1.jpg` is considered to correspond to `/path/to/data/B/train/1.jpg`.

38 |

39 | Once the data is formatted this way, call:

40 | ```bash

41 | python datasets/combine_A_and_B.py --fold_A /path/to/data/A --fold_B /path/to/data/B --fold_AB /path/to/data

42 | ```

43 |

44 | This will combine each pair of images (A,B) into a single image file, ready for training.

45 |

--------------------------------------------------------------------------------

/docs/docker.md:

--------------------------------------------------------------------------------

1 | # Docker image with pytorch-CycleGAN-and-pix2pix

2 |

3 | We provide both Dockerfile and pre-built Docker container that can run this code repo.

4 |

5 | ## Prerequisite

6 |

7 | - Install [docker-ce](https://docs.docker.com/install/linux/docker-ce/ubuntu/)

8 | - Install [nvidia-docker](https://github.com/NVIDIA/nvidia-docker#quickstart)

9 |

10 | ## Running pre-built Dockerfile

11 |

12 | - Pull the pre-built docker file

13 |

14 | ```bash

15 | docker pull taesungp/pytorch-cyclegan-and-pix2pix

16 | ```

17 |

18 | - Start an interactive docker session. `-p 8097:8097` option is needed if you want to run `visdom` server on the Docker container.

19 |

20 | ```bash

21 | nvidia-docker run -it -p 8097:8097 taesungp/pytorch-cyclegan-and-pix2pix

22 | ```

23 |

24 | - Now you are in the Docker environment. Go to our code repo and start running things.

25 | ```bash

26 | cd /workspace/pytorch-CycleGAN-and-pix2pix

27 | bash datasets/download_pix2pix_dataset.sh facades

28 | python -m visdom.server &

29 | bash scripts/train_pix2pix.sh

30 | ```

31 |

32 | ## Running with Dockerfile

33 |

34 | We also posted the [Dockerfile](Dockerfile). To generate the pre-built file, download the Dockerfile in this directory and run

35 | ```bash

36 | docker build -t [target_tag] .

37 | ```

38 | in the directory that contains the Dockerfile.

39 |

--------------------------------------------------------------------------------

/docs/overview.md:

--------------------------------------------------------------------------------

1 | ## Overview of Code Structure

2 | To help users better understand and use our codebase, we briefly overview the functionality and implementation of each package and each module. Please see the documentation in each file for more details. If you have questions, you may find useful information in [training/test tips](tips.md) and [frequently asked questions](qa.md).

3 |

4 | [train.py](../train.py) is a general-purpose training script. It works for various models (with option `--model`: e.g., `pix2pix`, `cyclegan`, `colorization`) and different datasets (with option `--dataset_mode`: e.g., `aligned`, `unaligned`, `single`, `colorization`). See the main [README](.../README.md) and [training/test tips](tips.md) for more details.

5 |

6 | [test.py](../test.py) is a general-purpose test script. Once you have trained your model with `train.py`, you can use this script to test the model. It will load a saved model from `--checkpoints_dir` and save the results to `--results_dir`. See the main [README](.../README.md) and [training/test tips](tips.md) for more details.

7 |

8 |

9 | [data](../data) directory contains all the modules related to data loading and preprocessing. To add a custom dataset class called `dummy`, you need to add a file called `dummy_dataset.py` and define a subclass `DummyDataset` inherited from `BaseDataset`. You need to implement four functions: `__init__` (initialize the class, you need to first call `BaseDataset.__init__(self, opt)`), `__len__` (return the size of dataset), `__getitem__` (get a data point), and optionally `modify_commandline_options` (add dataset-specific options and set default options). Now you can use the dataset class by specifying flag `--dataset_mode dummy`. See our template dataset [class](../data/template_dataset.py) for an example. Below we explain each file in details.

10 |

11 | * [\_\_init\_\_.py](../data/__init__.py) implements the interface between this package and training and test scripts. `train.py` and `test.py` call `from data import create_dataset` and `dataset = create_dataset(opt)` to create a dataset given the option `opt`.

12 | * [base_dataset.py](../data/base_dataset.py) implements an abstract base class ([ABC](https://docs.python.org/3/library/abc.html)) for datasets. It also includes common transformation functions (e.g., `get_transform`, `__scale_width`), which can be later used in subclasses.

13 | * [image_folder.py](../data/image_folder.py) implements an image folder class. We modify the official PyTorch image folder [code](https://github.com/pytorch/vision/blob/master/torchvision/datasets/folder.py) so that this class can load images from both the current directory and its subdirectories.

14 | * [template_dataset.py](../data/template_dataset.py) provides a dataset template with detailed documentation. Check out this file if you plan to implement your own dataset.

15 | * [aligned_dataset.py](../data/aligned_dataset.py) includes a dataset class that can load image pairs. It assumes a single image directory `/path/to/data/train`, which contains image pairs in the form of {A,B}. See [here](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/docs/tips.md#prepare-your-own-datasets-for-pix2pix) on how to prepare aligned datasets. During test time, you need to prepare a directory `/path/to/data/test` as test data.

16 | * [unaligned_dataset.py](../data/unaligned_dataset.py) includes a dataset class that can load unaligned/unpaired datasets. It assumes that two directories to host training images from domain A `/path/to/data/trainA` and from domain B `/path/to/data/trainB` respectively. Then you can train the model with the dataset flag `--dataroot /path/to/data`. Similarly, you need to prepare two directories `/path/to/data/testA` and `/path/to/data/testB` during test time.

17 | * [single_dataset.py](../data/single_dataset.py) includes a dataset class that can load a set of single images specified by the path `--dataroot /path/to/data`. It can be used for generating CycleGAN results only for one side with the model option `-model test`.

18 | * [colorization_dataset.py](../data/colorization_dataset.py) implements a dataset class that can load a set of nature images in RGB, and convert RGB format into (L, ab) pairs in [Lab](https://en.wikipedia.org/wiki/CIELAB_color_space) color space. It is required by pix2pix-based colorization model (`--model colorization`).

19 |

20 |

21 | [models](../models) directory contains modules related to objective functions, optimizations, and network architectures. To add a custom model class called `dummy`, you need to add a file called `dummy_model.py` and define a subclass `DummyModel` inherited from `BaseModel`. You need to implement four functions: `__init__` (initialize the class; you need to first call `BaseModel.__init__(self, opt)`), `set_input` (unpack data from dataset and apply preprocessing), `forward` (generate intermediate results), `optimize_parameters` (calculate loss, gradients, and update network weights), and optionally `modify_commandline_options` (add model-specific options and set default options). Now you can use the model class by specifying flag `--model dummy`. See our template model [class](../models/template_model.py) for an example. Below we explain each file in details.

22 |

23 | * [\_\_init\_\_.py](../models/__init__.py) implements the interface between this package and training and test scripts. `train.py` and `test.py` call `from models import create_model` and `model = create_model(opt)` to create a model given the option `opt`. You also need to call `model.setup(opt)` to properly initialize the model.

24 | * [base_model.py](../models/base_model.py) implements an abstract base class ([ABC](https://docs.python.org/3/library/abc.html)) for models. It also includes commonly used helper functions (e.g., `setup`, `test`, `update_learning_rate`, `save_networks`, `load_networks`), which can be later used in subclasses.

25 | * [template_model.py](../models/template_model.py) provides a model template with detailed documentation. Check out this file if you plan to implement your own model.

26 | * [pix2pix_model.py](../models/pix2pix_model.py) implements the pix2pix [model](https://phillipi.github.io/pix2pix/), for learning a mapping from input images to output images given paired data. The model training requires `--dataset_mode aligned` dataset. By default, it uses a `--netG unet256` [U-Net](https://arxiv.org/pdf/1505.04597.pdf) generator, a `--netD basic` discriminator (PatchGAN), and a `--gan_mode vanilla` GAN loss (standard cross-entropy objective).

27 | * [colorization_model.py](../models/colorization_model.py) implements a subclass of `Pix2PixModel` for image colorization (black & white image to colorful image). The model training requires `-dataset_model colorization` dataset. It trains a pix2pix model, mapping from L channel to ab channels in [Lab](https://en.wikipedia.org/wiki/CIELAB_color_space) color space. By default, the `colorization` dataset will automatically set `--input_nc 1` and `--output_nc 2`.

28 | * [cycle_gan_model.py](../models/cycle_gan_model.py) implements the CycleGAN [model](https://junyanz.github.io/CycleGAN/), for learning image-to-image translation without paired data. The model training requires `--dataset_mode unaligned` dataset. By default, it uses a `--netG resnet_9blocks` ResNet generator, a `--netD basic` discriminator (PatchGAN introduced by pix2pix), and a least-square GANs [objective](https://arxiv.org/abs/1611.04076) (`--gan_mode lsgan`).

29 | * [networks.py](../models/networks.py) module implements network architectures (both generators and discriminators), as well as normalization layers, initialization methods, optimization scheduler (i.e., learning rate policy), and GAN objective function (`vanilla`, `lsgan`, `wgangp`).

30 | * [test_model.py](../models/test_model.py) implements a model that can be used to generate CycleGAN results for only one direction. This model will automatically set `--dataset_mode single`, which only loads the images from one set. See the test [instruction](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix#apply-a-pre-trained-model-cyclegan) for more details.

31 |

32 | [options](../options) directory includes our option modules: training options, test options, and basic options (used in both training and test). `TrainOptions` and `TestOptions` are both subclasses of `BaseOptions`. They will reuse the options defined in `BaseOptions`.

33 | * [\_\_init\_\_.py](../options/__init__.py) is required to make Python treat the directory `options` as containing packages,

34 | * [base_options.py](../options/base_options.py) includes options that are used in both training and test. It also implements a few helper functions such as parsing, printing, and saving the options. It also gathers additional options defined in `modify_commandline_options` functions in both dataset class and model class.

35 | * [train_options.py](../options/train_options.py) includes options that are only used during training time.

36 | * [test_options.py](../options/test_options.py) includes options that are only used during test time.

37 |

38 |

39 | [util](../util) directory includes a miscellaneous collection of useful helper functions.

40 | * [\_\_init\_\_.py](../util/__init__.py) is required to make Python treat the directory `util` as containing packages,

41 | * [get_data.py](../util/get_data.py) provides a Python script for downloading CycleGAN and pix2pix datasets. Alternatively, You can also use bash scripts such as [download_pix2pix_model.sh](../scripts/download_pix2pix_model.sh) and [download_cyclegan_model.sh](../scripts/download_cyclegan_model.sh).

42 | * [html.py](../util/html.py) implements a module that saves images into a single HTML file. It consists of functions such as `add_header` (add a text header to the HTML file), `add_images` (add a row of images to the HTML file), `save` (save the HTML to the disk). It is based on Python library `dominate`, a Python library for creating and manipulating HTML documents using a DOM API.

43 | * [image_pool.py](../util/image_pool.py) implements an image buffer that stores previously generated images. This buffer enables us to update discriminators using a history of generated images rather than the ones produced by the latest generators. The original idea was discussed in this [paper](http://openaccess.thecvf.com/content_cvpr_2017/papers/Shrivastava_Learning_From_Simulated_CVPR_2017_paper.pdf). The size of the buffer is controlled by the flag `--pool_size`.

44 | * [visualizer.py](../util/visualizer.py) includes several functions that can display/save images and print/save logging information. It uses a Python library `visdom` for display and a Python library `dominate` (wrapped in `HTML`) for creating HTML files with images.

45 | * [util.py](../util/util.py) consists of simple helper functions such as `tensor2im` (convert a tensor array to a numpy image array), `diagnose_network` (calculate and print the mean of average absolute value of gradients), and `mkdirs` (create multiple directories).

46 |

--------------------------------------------------------------------------------

/docs/qa.md:

--------------------------------------------------------------------------------

1 | ## Frequently Asked Questions

2 | Before you post a new question, please first look at the following Q & A and existing GitHub issues. You may also want to read [Training/Test tips](docs/tips.md) for more suggestions.

3 |

4 | #### Connection Error:HTTPConnectionPool ([#230](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/230), [#24](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/24), [#38](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/38))

5 | Similar error messages include “Failed to establish a new connection/Connection refused”.

6 |

7 | Please start the visdom server before starting the training:

8 | ```bash

9 | python -m visdom.server

10 | ```

11 | To install the visdom, you can use the following command:

12 | ```bash

13 | pip install visdom

14 | ```

15 | You can also disable the visdom by setting `--display_id 0`.

16 |

17 | #### My PyTorch errors on CUDA related code.

18 | Try to run the following code snippet to make sure that CUDA is working (assuming using PyTorch >= 0.4):

19 | ```python

20 | import torch

21 | torch.cuda.init()

22 | print(torch.randn(1, device='cuda'))

23 | ```

24 |

25 | If you met an error, it is likely that your PyTorch build does not work with CUDA, e.g., it is installl from the official MacOS binary, or you have a GPU that is too old and not supported anymore. You may run the the code with CPU using `--gpu_ids -1`.

26 |

27 | #### TypeError: Object of type 'Tensor' is not JSON serializable ([#258](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/258))

28 | Similar errors: AttributeError: module 'torch' has no attribute 'device' ([#314](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/314))

29 |

30 | The current code only works with PyTorch 0.4+. An earlier PyTorch version can often cause the above errors.

31 |

32 | #### ValueError: empty range for randrange() ([#390](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/390), [#376](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/376), [#194](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/194))

33 | Similar error messages include "ConnectionRefusedError: [Errno 111] Connection refused"

34 |

35 | It is related to data augmentation step. It often happens when you use `--preprocess crop`. The program will crop random `crop_size x crop_size` patches out of the input training images. But if some of your image sizes (e.g., `256x384`) are smaller than the `crop_size` (e.g., 512), you will get this error. A simple fix will be to use other data augmentation methods such as `resize_and_crop` or `scale_width_and_crop`. Our program will automatically resize the images according to `load_size` before apply `crop_size x crop_size` cropping. Make sure that `load_size >= crop_size`.

36 |

37 |

38 | #### Can I continue/resume my training? ([#350](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/350), [#275](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/275), [#234](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/234), [#87](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/87))

39 | You can use the option `--continue_train`. Also set `--epoch_count` to specify a different starting epoch count. See more discussion in [training/test tips](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/docs/tips.md#trainingtest-tips.

40 |

41 | #### Why does my training loss not converge? ([#335](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/335), [#164](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/164), [#30](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/30))

42 | Many GAN losses do not converge (exception: WGAN, WGAN-GP, etc. ) due to the nature of minimax optimization. For DCGAN and LSGAN objective, it is quite normal for the G and D losses to go up and down. It should be fine as long as they do not blow up.

43 |

44 | #### How can I make it work for my own data (e.g., 16-bit png, tiff, hyperspectral images)? ([#309](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/309), [#320](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/), [#202](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/202))

45 | The current code only supports RGB and grayscale images. If you would like to train the model on other data types, please follow the following steps:

46 |

47 | - change the parameters `--input_nc` and `--output_nc` to the number of channels in your input/output images.

48 | - Write your own custom data loader (It is easy as long as you know how to load your data with python). If you write a new data loader class, you need to change the flag `--dataset_mode` accordingly. Alternatively, you can modify the existing data loader. For aligned datasets, change this [line](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/data/aligned_dataset.py#L41); For unaligned datasets, change these two [lines](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/data/unaligned_dataset.py#L57).

49 |

50 | - If you use visdom and HTML to visualize the results, you may also need to change the visualization code.

51 |

52 | #### Multi-GPU Training ([#327](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/327), [#292](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/292), [#137](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/137), [#35](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/35))

53 | You can use Multi-GPU training by setting `--gpu_ids` (e.g., `--gpu_ids 0,1,2,3` for the first four GPUs on your machine.) To fully utilize all the GPUs, you need to increase your batch size. Try `--batch_size 4`, `--batch_size 16`, or even a larger batch_size. Each GPU will process batch_size/#GPUs images. The optimal batch size depends on the number of GPUs you have, GPU memory per GPU, and the resolution of your training images.

54 |

55 | We also recommend that you use the instance normalization for multi-GPU training by setting `--norm instance`. The current batch normalization might not work for multi-GPUs as the batchnorm parameters are not shared across different GPUs. Advanced users can try [synchronized batchnorm](https://github.com/vacancy/Synchronized-BatchNorm-PyTorch).

56 |

57 |

58 | #### Can I run the model on CPU? ([#310](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/310))

59 | Yes, you can set `--gpu_ids -1`. See [training/test tips](tips.md) for more details.

60 |

61 |

62 | #### Are pre-trained models available? ([#10](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/10))

63 | Yes, you can download pretrained models with the bash script `./scripts/download_cyclegan_model.sh`. See [here](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix#apply-a-pre-trained-model-cyclegan) for more details. We are slowly adding more models to the repo.

64 |

65 | #### Out of memory ([#174](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/174))

66 | CycleGAN is more memory-intensive than pix2pix as it requires two generators and two discriminators. If you would like to produce high-resolution images, you can do the following.

67 |

68 | - During training, train CycleGAN on cropped images of the training set. Please be careful not to change the aspect ratio or the scale of the original image, as this can lead to the training/test gap. You can usually do this by using `--preprocess crop` option, or `--preprocess scale_width_and_crop`.

69 |

70 | - Then at test time, you can load only one generator to produce the results in a single direction. This greatly saves GPU memory as you are not loading the discriminators and the other generator in the opposite direction. You can probably take the whole image as input. You can do this using `--model test --dataroot [path to the directory that contains your test images (e.g., ./datasets/horse2zebra/trainA)] --model_suffix _A --preprocess none`. You can use either `--preprocess none` or `--preprocess scale_width --crop_size [your_desired_image_width]`. Please see the [model_suffix](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/models/test_model.py#L16) and [preprocess](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/data/base_dataset.py#L24) for more details.

71 |

72 | #### What is the identity loss? ([#322](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/322), [#373](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/373), [#362](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/pull/362))

73 | We use the identity loss for our photo to painting application. The identity loss can regularize the generator to be close to an identity mapping when fed with real samples from the *target* domain. If something already looks like from the target domain, you should preserve the image without making additional changes. The generator trained with this loss will often be more conservative for unknown content. Please see more details in Sec 5.2 ''Photo generation from paintings'' and Figure 12 in the CycleGAN [paper](https://arxiv.org/pdf/1703.10593.pdf). The loss was first proposed in the Equation 6 of the prior work [[Taigman et al., 2017]](https://arxiv.org/pdf/1611.02200.pdf).

74 |

75 | #### The color gets inverted from the beginning of training ([#249](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/249))

76 | The authors also observe that the generator unnecessarily inverts the color of the input image early in training, and then never learns to undo the inversion. In this case, you can try two things.

77 |

78 | - First, try using identity loss `--lambda_identity 1.0` or `--lambda_identity 0.1`. We observe that the identity loss makes the generator to be more conservative and make fewer unnecessary changes. However, because of this, the change may not be as dramatic.

79 |

80 | - Second, try smaller variance when initializing weights by changing `--init_gain`. We observe that smaller variance in weight initialization results in less color inversion.

81 |

82 | #### For labels2photo Cityscapes evaluation, why does the pretrained FCN-8s model not work well on the original Cityscapes input images? ([#150](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/150))

83 | The model was trained on 256x256 images that are resized/upsampled to 1024x2048, so expected input images to the network are very blurry. The purpose of the resizing was to 1) keep the label maps in the original high resolution untouched and 2) avoid the need of changing the standard FCN training code for Cityscapes.

84 |

85 | #### How do I get the `ground-truth` numbers on the labels2photo Cityscapes evaluation? ([#150](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/150))

86 | You need to resize the original Cityscapes images to 256x256 before running the evaluation code.

87 |

88 |

89 | #### Using resize-conv to reduce checkerboard artifacts ([#190](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/190), [#64](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/64))

90 | This Distill [blog](https://distill.pub/2016/deconv-checkerboard/) discussed one of the potential causes of the checkerboard artifacts. You can fix that issue by switching from "deconvolution" to nearest-neighbor upsampling followed by regular convolution. Here is one implementation provided by [@SsnL](https://github.com/SsnL). You can replace the ConvTranspose2d with the following layers.

91 | ```python

92 | nn.Upsample(scale_factor = 2, mode='bilinear'),

93 | nn.ReflectionPad2d(1),

94 | nn.Conv2d(ngf * mult, int(ngf * mult / 2), kernel_size=3, stride=1, padding=0),

95 | ```

96 | We have also noticed that sometimes the checkboard artifacts will go away if you train long enough. Maybe you can try training your model a bit longer.

97 |

98 | #### pix2pix/CycleGAN has no random noise z ([#152](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/152))

99 | The current pix2pix/CycleGAN model does not take z as input. In both pix2pix and CycleGAN, we tried to add z to the generator: e.g., adding z to a latent state, concatenating with a latent state, applying dropout, etc., but often found the output did not vary significantly as a function of z. Conditional GANs do not need noise as long as the input is sufficiently complex so that the input can kind of play the role of noise. Without noise, the mapping is deterministic.

100 |

101 | Please check out the following papers that show ways of getting z to actually have a substantial effect: e.g., [BicycleGAN](https://github.com/junyanz/BicycleGAN), [AugmentedCycleGAN](https://arxiv.org/abs/1802.10151), [MUNIT](https://arxiv.org/abs/1804.04732), [DRIT](https://arxiv.org/pdf/1808.00948.pdf), etc.

102 |

103 | #### Experiment details (e.g., BW->color) ([#306](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/306))

104 | You can find more training details and hyperparameter settings in the appendix of [CycleGAN](https://arxiv.org/abs/1703.10593) and [pix2pix](https://arxiv.org/abs/1611.07004) papers.

105 |

106 | #### Results with [Cycada](https://arxiv.org/pdf/1711.03213.pdf)

107 | We generated the [result of translating GTA images to Cityscapes-style images](https://junyanz.github.io/CycleGAN/) using our Torch repo. Our PyTorch and Torch implementation seemed to produce a little bit different results, although we have not measured the FCN score using the pytorch-trained model. To reproduce the result of Cycada, please use the Torch repo for now.

108 |

--------------------------------------------------------------------------------

/docs/tips.md:

--------------------------------------------------------------------------------

1 | ## Training/test Tips

2 | #### Training/test options

3 | Please see `options/train_options.py` and `options/base_options.py` for the training flags; see `options/test_options.py` and `options/base_options.py` for the test flags. There are some model-specific flags as well, which are added in the model files, such as `--lambda_A` option in `model/cycle_gan_model.py`. The default values of these options are also adjusted in the model files.

4 | #### CPU/GPU (default `--gpu_ids 0`)

5 | Please set`--gpu_ids -1` to use CPU mode; set `--gpu_ids 0,1,2` for multi-GPU mode. You need a large batch size (e.g., `--batch_size 32`) to benefit from multiple GPUs.

6 |

7 | #### Visualization