├── .gitignore

├── LICENSE

├── README.md

├── SummaC - Additional Experiments.ipynb

├── SummaC - Main Results.ipynb

├── requirements.txt

├── script.sh

├── setup.py

├── summac

├── __init__.py

├── benchmark.py

├── model_baseline.py

├── model_guardrails.py

├── model_summac.py

├── run_baseline.py

├── train_summac.py

├── utils_misc.py

├── utils_optim.py

├── utils_scorer.py

└── utils_scoring.py

└── summac_conv_vitc_sent_perc_e.bin

/.gitignore:

--------------------------------------------------------------------------------

1 | env/

2 | __pycache__/

3 | .vscode/

--------------------------------------------------------------------------------

/LICENSE:

--------------------------------------------------------------------------------

1 | Apache License

2 | Version 2.0, January 2004

3 | http://www.apache.org/licenses/

4 |

5 | TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

6 |

7 | 1. Definitions.

8 |

9 | "License" shall mean the terms and conditions for use, reproduction,

10 | and distribution as defined by Sections 1 through 9 of this document.

11 |

12 | "Licensor" shall mean the copyright owner or entity authorized by

13 | the copyright owner that is granting the License.

14 |

15 | "Legal Entity" shall mean the union of the acting entity and all

16 | other entities that control, are controlled by, or are under common

17 | control with that entity. For the purposes of this definition,

18 | "control" means (i) the power, direct or indirect, to cause the

19 | direction or management of such entity, whether by contract or

20 | otherwise, or (ii) ownership of fifty percent (50%) or more of the

21 | outstanding shares, or (iii) beneficial ownership of such entity.

22 |

23 | "You" (or "Your") shall mean an individual or Legal Entity

24 | exercising permissions granted by this License.

25 |

26 | "Source" form shall mean the preferred form for making modifications,

27 | including but not limited to software source code, documentation

28 | source, and configuration files.

29 |

30 | "Object" form shall mean any form resulting from mechanical

31 | transformation or translation of a Source form, including but

32 | not limited to compiled object code, generated documentation,

33 | and conversions to other media types.

34 |

35 | "Work" shall mean the work of authorship, whether in Source or

36 | Object form, made available under the License, as indicated by a

37 | copyright notice that is included in or attached to the work

38 | (an example is provided in the Appendix below).

39 |

40 | "Derivative Works" shall mean any work, whether in Source or Object

41 | form, that is based on (or derived from) the Work and for which the

42 | editorial revisions, annotations, elaborations, or other modifications

43 | represent, as a whole, an original work of authorship. For the purposes

44 | of this License, Derivative Works shall not include works that remain

45 | separable from, or merely link (or bind by name) to the interfaces of,

46 | the Work and Derivative Works thereof.

47 |

48 | "Contribution" shall mean any work of authorship, including

49 | the original version of the Work and any modifications or additions

50 | to that Work or Derivative Works thereof, that is intentionally

51 | submitted to Licensor for inclusion in the Work by the copyright owner

52 | or by an individual or Legal Entity authorized to submit on behalf of

53 | the copyright owner. For the purposes of this definition, "submitted"

54 | means any form of electronic, verbal, or written communication sent

55 | to the Licensor or its representatives, including but not limited to

56 | communication on electronic mailing lists, source code control systems,

57 | and issue tracking systems that are managed by, or on behalf of, the

58 | Licensor for the purpose of discussing and improving the Work, but

59 | excluding communication that is conspicuously marked or otherwise

60 | designated in writing by the copyright owner as "Not a Contribution."

61 |

62 | "Contributor" shall mean Licensor and any individual or Legal Entity

63 | on behalf of whom a Contribution has been received by Licensor and

64 | subsequently incorporated within the Work.

65 |

66 | 2. Grant of Copyright License. Subject to the terms and conditions of

67 | this License, each Contributor hereby grants to You a perpetual,

68 | worldwide, non-exclusive, no-charge, royalty-free, irrevocable

69 | copyright license to reproduce, prepare Derivative Works of,

70 | publicly display, publicly perform, sublicense, and distribute the

71 | Work and such Derivative Works in Source or Object form.

72 |

73 | 3. Grant of Patent License. Subject to the terms and conditions of

74 | this License, each Contributor hereby grants to You a perpetual,

75 | worldwide, non-exclusive, no-charge, royalty-free, irrevocable

76 | (except as stated in this section) patent license to make, have made,

77 | use, offer to sell, sell, import, and otherwise transfer the Work,

78 | where such license applies only to those patent claims licensable

79 | by such Contributor that are necessarily infringed by their

80 | Contribution(s) alone or by combination of their Contribution(s)

81 | with the Work to which such Contribution(s) was submitted. If You

82 | institute patent litigation against any entity (including a

83 | cross-claim or counterclaim in a lawsuit) alleging that the Work

84 | or a Contribution incorporated within the Work constitutes direct

85 | or contributory patent infringement, then any patent licenses

86 | granted to You under this License for that Work shall terminate

87 | as of the date such litigation is filed.

88 |

89 | 4. Redistribution. You may reproduce and distribute copies of the

90 | Work or Derivative Works thereof in any medium, with or without

91 | modifications, and in Source or Object form, provided that You

92 | meet the following conditions:

93 |

94 | (a) You must give any other recipients of the Work or

95 | Derivative Works a copy of this License; and

96 |

97 | (b) You must cause any modified files to carry prominent notices

98 | stating that You changed the files; and

99 |

100 | (c) You must retain, in the Source form of any Derivative Works

101 | that You distribute, all copyright, patent, trademark, and

102 | attribution notices from the Source form of the Work,

103 | excluding those notices that do not pertain to any part of

104 | the Derivative Works; and

105 |

106 | (d) If the Work includes a "NOTICE" text file as part of its

107 | distribution, then any Derivative Works that You distribute must

108 | include a readable copy of the attribution notices contained

109 | within such NOTICE file, excluding those notices that do not

110 | pertain to any part of the Derivative Works, in at least one

111 | of the following places: within a NOTICE text file distributed

112 | as part of the Derivative Works; within the Source form or

113 | documentation, if provided along with the Derivative Works; or,

114 | within a display generated by the Derivative Works, if and

115 | wherever such third-party notices normally appear. The contents

116 | of the NOTICE file are for informational purposes only and

117 | do not modify the License. You may add Your own attribution

118 | notices within Derivative Works that You distribute, alongside

119 | or as an addendum to the NOTICE text from the Work, provided

120 | that such additional attribution notices cannot be construed

121 | as modifying the License.

122 |

123 | You may add Your own copyright statement to Your modifications and

124 | may provide additional or different license terms and conditions

125 | for use, reproduction, or distribution of Your modifications, or

126 | for any such Derivative Works as a whole, provided Your use,

127 | reproduction, and distribution of the Work otherwise complies with

128 | the conditions stated in this License.

129 |

130 | 5. Submission of Contributions. Unless You explicitly state otherwise,

131 | any Contribution intentionally submitted for inclusion in the Work

132 | by You to the Licensor shall be under the terms and conditions of

133 | this License, without any additional terms or conditions.

134 | Notwithstanding the above, nothing herein shall supersede or modify

135 | the terms of any separate license agreement you may have executed

136 | with Licensor regarding such Contributions.

137 |

138 | 6. Trademarks. This License does not grant permission to use the trade

139 | names, trademarks, service marks, or product names of the Licensor,

140 | except as required for reasonable and customary use in describing the

141 | origin of the Work and reproducing the content of the NOTICE file.

142 |

143 | 7. Disclaimer of Warranty. Unless required by applicable law or

144 | agreed to in writing, Licensor provides the Work (and each

145 | Contributor provides its Contributions) on an "AS IS" BASIS,

146 | WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

147 | implied, including, without limitation, any warranties or conditions

148 | of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

149 | PARTICULAR PURPOSE. You are solely responsible for determining the

150 | appropriateness of using or redistributing the Work and assume any

151 | risks associated with Your exercise of permissions under this License.

152 |

153 | 8. Limitation of Liability. In no event and under no legal theory,

154 | whether in tort (including negligence), contract, or otherwise,

155 | unless required by applicable law (such as deliberate and grossly

156 | negligent acts) or agreed to in writing, shall any Contributor be

157 | liable to You for damages, including any direct, indirect, special,

158 | incidental, or consequential damages of any character arising as a

159 | result of this License or out of the use or inability to use the

160 | Work (including but not limited to damages for loss of goodwill,

161 | work stoppage, computer failure or malfunction, or any and all

162 | other commercial damages or losses), even if such Contributor

163 | has been advised of the possibility of such damages.

164 |

165 | 9. Accepting Warranty or Additional Liability. While redistributing

166 | the Work or Derivative Works thereof, You may choose to offer,

167 | and charge a fee for, acceptance of support, warranty, indemnity,

168 | or other liability obligations and/or rights consistent with this

169 | License. However, in accepting such obligations, You may act only

170 | on Your own behalf and on Your sole responsibility, not on behalf

171 | of any other Contributor, and only if You agree to indemnify,

172 | defend, and hold each Contributor harmless for any liability

173 | incurred by, or claims asserted against, such Contributor by reason

174 | of your accepting any such warranty or additional liability.

175 |

176 | END OF TERMS AND CONDITIONS

177 |

178 | APPENDIX: How to apply the Apache License to your work.

179 |

180 | To apply the Apache License to your work, attach the following

181 | boilerplate notice, with the fields enclosed by brackets "[]"

182 | replaced with your own identifying information. (Don't include

183 | the brackets!) The text should be enclosed in the appropriate

184 | comment syntax for the file format. We also recommend that a

185 | file or class name and description of purpose be included on the

186 | same "printed page" as the copyright notice for easier

187 | identification within third-party archives.

188 |

189 | Copyright [yyyy] [name of copyright owner]

190 |

191 | Licensed under the Apache License, Version 2.0 (the "License");

192 | you may not use this file except in compliance with the License.

193 | You may obtain a copy of the License at

194 |

195 | http://www.apache.org/licenses/LICENSE-2.0

196 |

197 | Unless required by applicable law or agreed to in writing, software

198 | distributed under the License is distributed on an "AS IS" BASIS,

199 | WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

200 | See the License for the specific language governing permissions and

201 | limitations under the License.

202 |

--------------------------------------------------------------------------------

/README.md:

--------------------------------------------------------------------------------

1 | # SummaC: Summary Consistency Detection

2 |

3 | This repository contains the code for TACL2021 paper: SummaC: Re-Visiting NLI-based Models for Inconsistency Detection in Summarization

4 |

5 | We release: (1) the trained SummaC models, (2) the SummaC Benchmark and data loaders, (3) training and evaluation scripts.

6 |

7 |

8 |  9 |

9 |

10 |

11 | ## Installing/Using SummaC

12 |

13 | [Update] Thanks to @Aktsvigun for the help, we now have a pip package, making it easy to install the SummaC models:

14 | ```

15 | pip install summac

16 | ```

17 |

18 | Requirement issues: in v0.0.4, we've reduced package dependencies to facilitate installation. We recommend you install `torch` first and verify it works before installing `summac`.

19 |

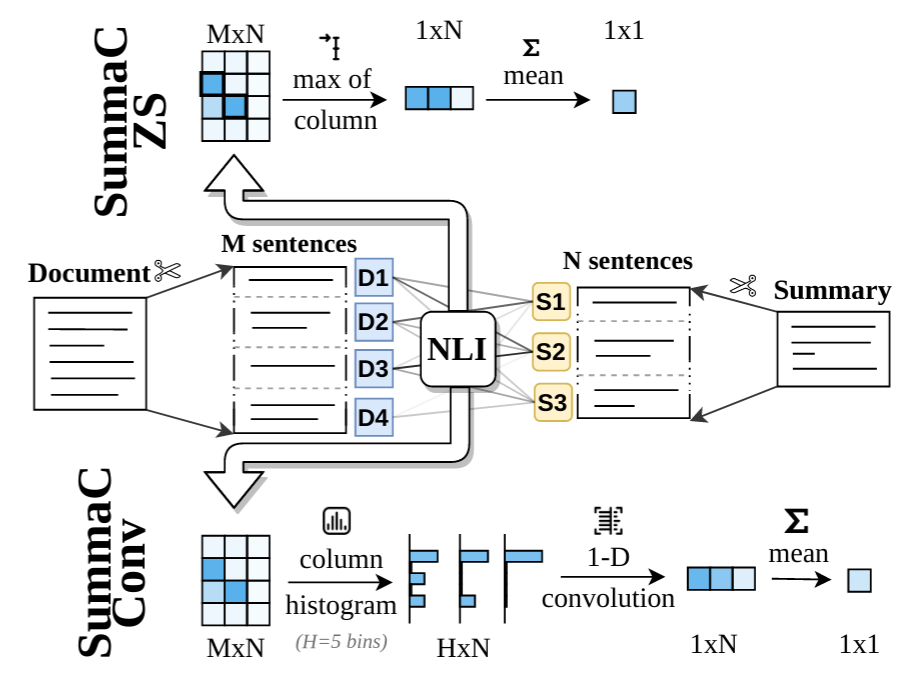

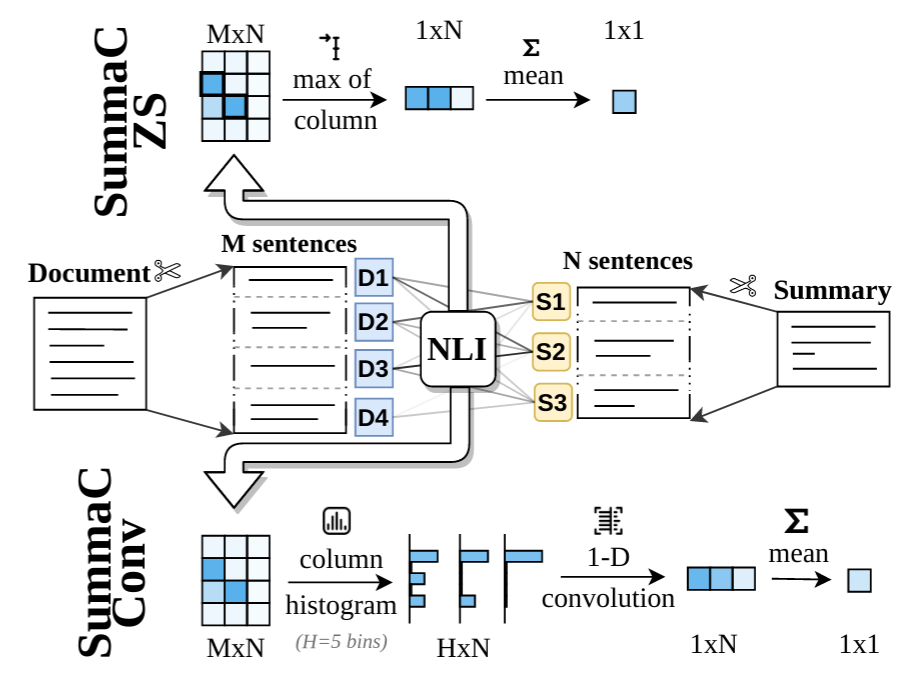

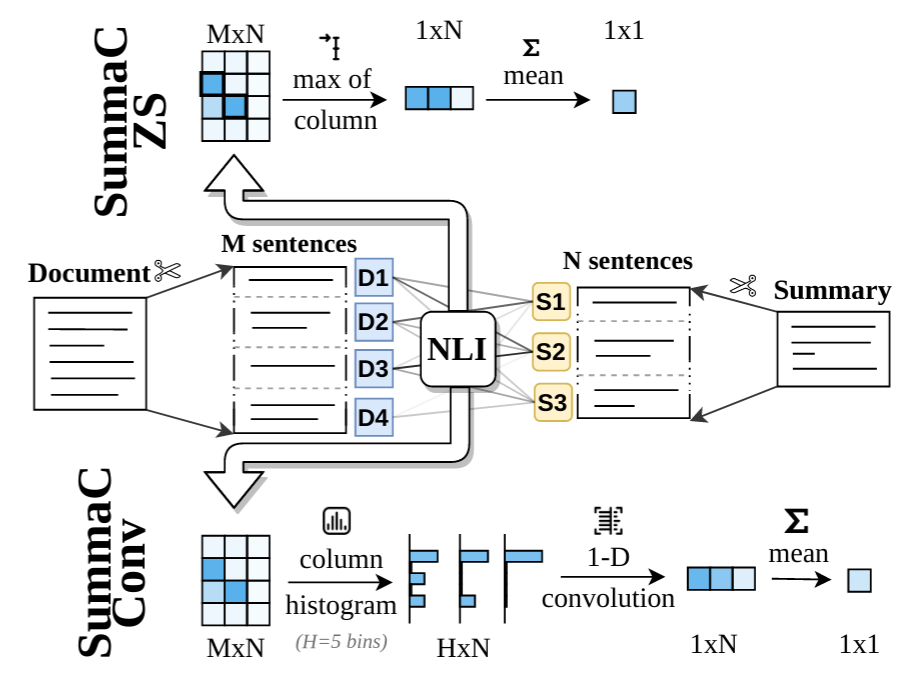

20 | The two trained models SummaC-ZS and SummaC-Conv are implemented in `model_summac` ([link](https://github.com/tingofurro/summac/blob/master/model_summac.py)). Once the package is installed, the models can be used like this:

21 |

22 | ### Example use

23 |

24 | ```python

25 | from summac.model_summac import SummaCZS, SummaCConv

26 |

27 | model_zs = SummaCZS(granularity="sentence", model_name="vitc", device="cpu") # If you have a GPU: switch to: device="cuda"

28 | model_conv = SummaCConv(models=["vitc"], bins='percentile', granularity="sentence", nli_labels="e", device="cpu", start_file="default", agg="mean")

29 |

30 | document = """Scientists are studying Mars to learn about the Red Planet and find landing sites for future missions.

31 | One possible site, known as Arcadia Planitia, is covered instrange sinuous features.

32 | The shapes could be signs that the area is actually made of glaciers, which are large masses of slow-moving ice.

33 | Arcadia Planitia is in Mars' northern lowlands."""

34 |

35 | summary1 = "There are strange shape patterns on Arcadia Planitia. The shapes could indicate the area might be made of glaciers. This makes Arcadia Planitia ideal for future missions."

36 | score_zs1 = model_zs.score([document], [summary1])

37 | score_conv1 = model_conv.score([document], [summary1])

38 | print("[Summary 1] SummaCZS Score: %.3f; SummacConv score: %.3f" % (score_zs1["scores"][0], score_conv1["scores"][0])) # [Summary 1] SummaCZS Score: 0.582; SummacConv score: 0.536

39 |

40 | summary2 = "There are strange shape patterns on Arcadia Planitia. The shapes could indicate the area might be made of glaciers."

41 | score_zs2 = model_zs.score([document], [summary2])

42 | score_conv2 = model_conv.score([document], [summary2])

43 | print("[Summary 2] SummaCZS Score: %.3f; SummacConv score: %.3f" % (score_zs2["scores"][0], score_conv2["scores"][0])) # [Summary 2] SummaCZS Score: 0.877; SummacConv score: 0.709

44 | ```

45 |

46 | We recommend using the SummaCConv models, as experiments from the paper show it provides better predictions. Two notebooks provide experimental details: [SummaC - Main Results.ipynb](https://github.com/tingofurro/summac/blob/master/SummaC%20-%20Main%20Results.ipynb) for the main results (Table 2) and [SummaC - Additional Experiments.ipynb](https://github.com/tingofurro/summac/blob/master/SummaC%20-%20Additional%20Experiments.ipynb) for additional experiments (Tables 1, 3, 4, 5, 6) from the paper.

47 |

48 | ### SummaC Benchmark

49 |

50 | The SummaC Benchmark consists of 6 summary consistency datasets that have been standardized to a binary classification task. The datasets included are:

51 |

52 |

53 |

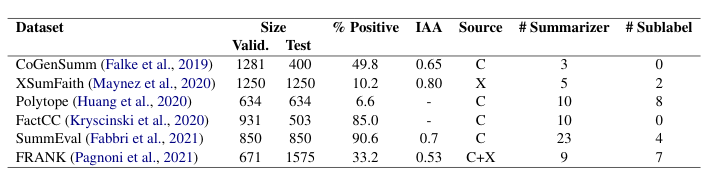

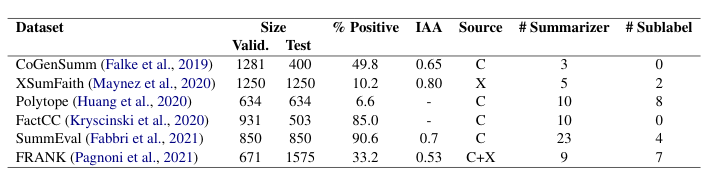

54 | % Positive is the percentage of positive (consistent) summaries. IAA is the inter-annotator agreement (Fleiss Kappa). Source is the dataset used for the source documents (CNN/DM or XSum). # Summarizers is the number of summarizers (extractive and abstractive) included in the dataset. # Sublabel is the number of labels in the typology used to label summary errors.

55 |

56 |

57 | The data-loaders for the benchmark are included in `benchmark.py` ([link](https://github.com/tingofurro/summac/blob/master/summac/benchmark.py)). Each dataset in the benchmark downloads automatically on first run. To load the benchmark:

58 | ```py

59 | from summac.benchmark import SummaCBenchmark

60 | benchmark_val = SummaCBenchmark(benchmark_folder="/path/to/summac_benchmark/", cut="val", hf_datasets_cache_dir = "/path/to/huggingface_datasets_cache_dir/")

61 | frank_dataset = benchmark_val.get_dataset("frank")

62 | print(frank_dataset[300]) # {"document: "A Darwin woman has become a TV [...]", "claim": "natalia moon , 23 , has become a tv sensation [...]", "label": 0, "cut": "val", "model_name": "s2s", "error_type": "LinkE"}

63 | ```

64 |

65 |

66 |

67 | ## Cite the work

68 |

69 | If you make use of the code, models, or algorithm, please cite our paper.

70 | ```

71 | @article{Laban2022SummaCRN,

72 | title={SummaC: Re-Visiting NLI-based Models for Inconsistency Detection in Summarization},

73 | author={Philippe Laban and Tobias Schnabel and Paul N. Bennett and Marti A. Hearst},

74 | journal={Transactions of the Association for Computational Linguistics},

75 | year={2022},

76 | volume={10},

77 | pages={163-177}

78 | }

79 | ```

80 |

81 | ## Contributing

82 |

83 | If you'd like to contribute, or have questions or suggestions, you can contact us at phillab@berkeley.edu. All contributions welcome, for example helping make the benchmark more easily downloadable, or improving model performance on the benchmark.

84 |

--------------------------------------------------------------------------------

/SummaC - Additional Experiments.ipynb:

--------------------------------------------------------------------------------

1 | {

2 | "cells": [

3 | {

4 | "cell_type": "code",

5 | "execution_count": 1,

6 | "metadata": {},

7 | "outputs": [

8 | {

9 | "name": "stderr",

10 | "output_type": "stream",

11 | "text": [

12 | "2021-10-31 22:47:43,882 [3845] WARNING datasets.builder:355: [JupyterRequire] Using custom data configuration default\n",

13 | "2021-10-31 22:47:43,888 [3845] WARNING datasets.builder:510: [JupyterRequire] Reusing dataset xsum (/home/phillab/.cache/huggingface/datasets/xsum/default/1.2.0/4957825a982999fbf80bca0b342793b01b2611e021ef589fb7c6250b3577b499)\n",

14 | "2021-10-31 22:47:48,828 [3845] WARNING datasets.builder:510: [JupyterRequire] Reusing dataset cnn_dailymail (/home/phillab/.cache/huggingface/datasets/cnn_dailymail/3.0.0/3.0.0/3cb851bf7cf5826e45d49db2863f627cba583cbc32342df7349dfe6c38060234)\n"

15 | ]

16 | },

17 | {

18 | "name": "stdout",

19 | "output_type": "stream",

20 | "text": [

21 | " name N N_pos N_neg frac_pos\n",

22 | "0 cogensumm 400 312 88 0.780000\n",

23 | "1 xsumfaith 1250 130 1120 0.104000\n",

24 | "2 polytope 634 41 593 0.064669\n",

25 | "3 factcc 503 441 62 0.876740\n",

26 | "4 summeval 850 770 80 0.905882\n",

27 | "5 frank 1575 529 1046 0.335873\n"

28 | ]

29 | }

30 | ],

31 | "source": [

32 | "import sklearn, torch, numpy as np, json, os, tqdm, pandas as pd, nltk, utils_misc, seaborn as sns, sys, glob\n",

33 | "sys.path.insert(0, \"/home/phillab/summac/\")\n",

34 | "from model_summac import SummaCConv, SummaCZS, model_map\n",

35 | "from utils_summac_benchmark import SummaCBenchmark\n",

36 | "from utils_scoring import ScorerWrapper\n",

37 | "import utils_summac_benchmark\n",

38 | "\n",

39 | "cm = sns.light_palette(\"green\", as_cmap=True)\n",

40 | "benchmark = SummaCBenchmark(cut=\"test\")\n",

41 | "benchmark.print_stats()\n",

42 | "\n",

43 | "def path_to_model_info(file_path):\n",

44 | " toks = file_path.split(\"/\")\n",

45 | " file_name = toks[-1].replace(\".bin\", \"\")\n",

46 | " model_type = \"histo\"\n",

47 | " model_card, granularity, bins, nli_labels, acc = file_name.split(\"_\")\n",

48 | " acc = float(acc.replace(\"bacc\", \"\").replace(\"f1\", \"\"))\n",

49 | " return {\"model_type\": model_type, \"model_card\": model_card, \"granularity\": granularity, \"bins\": bins, \"acc\": acc, \"model_path\": file_path, \"nli_labels\": nli_labels}"

50 | ]

51 | },

52 | {

53 | "cell_type": "markdown",

54 | "metadata": {

55 | "heading_collapsed": true

56 | },

57 | "source": [

58 | "# Table 3: NLI Model Selection\n"

59 | ]

60 | },

61 | {

62 | "cell_type": "code",

63 | "execution_count": 2,

64 | "metadata": {

65 | "hidden": true,

66 | "scrolled": false

67 | },

68 | "outputs": [

69 | {

70 | "name": "stdout",

71 | "output_type": "stream",

72 | "text": [

73 | "\n",

74 | "\n",

75 | "\n",

76 | "\n",

77 | "\n",

78 | "\n",

79 | "\n",

80 | "\n"

81 | ]

82 | }

83 | ],

84 | "source": [

85 | "scorers = []\n",

86 | "model_keys = list(model_map.keys())#+ [\"decomp\"]\n",

87 | "# model_keys = [\"decomp\"]\n",

88 | "\n",

89 | "for model_key in model_keys:\n",

90 | " scorers.append({\"name\": \"ZS-%s\" % (model_key.upper().replace(\"-\", \"_\")), \"model\": SummaCZS(granularity=\"sentence\", model_name=model_key), \"sign\": 1, \"only_doc\": True})\n",

91 | " \n",

92 | " # Add a histogram based-model\n",

93 | " model_files = glob.glob(\"/home/phillab/models/summac/%s_sentence*\" % (model_key))\n",

94 | " if len(model_files) == 0:\n",

95 | " print(\"No model for [%s] was found\" % (model_key))\n",

96 | " continue\n",

97 | " best = sorted([path_to_model_info(mf) for mf in model_files], key=lambda m: m[\"acc\"])[-1]\n",

98 | " scorers.append({\"name\": \"Histo-%s\" % (model_key.upper().replace(\"-\", \"_\")), \"model\": SummaCConv(bins=best[\"bins\"], nli_labels=best[\"nli_labels\"], models=[model_key], granularity=\"sentence\", start_file=best[\"model_path\"]), \"sign\": 1})\n",

99 | "\n",

100 | "scorer_doc = ScorerWrapper(scorers, scoring_method=\"sum\", max_batch_size=20, use_caching=True)"

101 | ]

102 | },

103 | {

104 | "cell_type": "code",

105 | "execution_count": 3,

106 | "metadata": {

107 | "hidden": true,

108 | "scrolled": false

109 | },

110 | "outputs": [

111 | {

112 | "name": "stderr",

113 | "output_type": "stream",

114 | "text": [

115 | "Using custom data configuration default\n",

116 | "Reusing dataset xsum (/home/phillab/.cache/huggingface/datasets/xsum/default/1.2.0/4957825a982999fbf80bca0b342793b01b2611e021ef589fb7c6250b3577b499)\n",

117 | "Reusing dataset cnn_dailymail (/home/phillab/.cache/huggingface/datasets/cnn_dailymail/3.0.0/3.0.0/3cb851bf7cf5826e45d49db2863f627cba583cbc32342df7349dfe6c38060234)\n",

118 | " 0%| | 0/400 [00:00\n",

232 | "Balanced Accuracy | | score |

| nli_name | model_type | |

\n",

233 | " \n",

234 | " | ANLI | \n",

235 | " Histo | \n",

236 | " 0.699 | \n",

237 | "

\n",

238 | " \n",

239 | " | ZS | \n",

240 | " 0.717 | \n",

241 | "

\n",

242 | " \n",

243 | " | MNLI | \n",

244 | " Histo | \n",

245 | " 0.73 | \n",

246 | "

\n",

247 | " \n",

248 | " | ZS | \n",

249 | " 0.709 | \n",

250 | "

\n",

251 | " \n",

252 | " | MNLI_BASE | \n",

253 | " Histo | \n",

254 | " 0.698 | \n",

255 | "

\n",

256 | " \n",

257 | " | ZS | \n",

258 | " 0.695 | \n",

259 | "

\n",

260 | " \n",

261 | " | SNLI_BASE | \n",

262 | " Histo | \n",

263 | " 0.64 | \n",

264 | "

\n",

265 | " \n",

266 | " | ZS | \n",

267 | " 0.666 | \n",

268 | "

\n",

269 | " \n",

270 | " | SNLI_LARGE | \n",

271 | " Histo | \n",

272 | " 0.624 | \n",

273 | "

\n",

274 | " \n",

275 | " | ZS | \n",

276 | " 0.666 | \n",

277 | "

\n",

278 | " \n",

279 | " | VITC | \n",

280 | " Histo | \n",

281 | " 0.74 | \n",

282 | "

\n",

283 | " \n",

284 | " | ZS | \n",

285 | " 0.721 | \n",

286 | "

\n",

287 | " \n",

288 | " | VITC_BASE | \n",

289 | " Histo | \n",

290 | " 0.712 | \n",

291 | "

\n",

292 | " \n",

293 | " | ZS | \n",

294 | " 0.679 | \n",

295 | "

\n",

296 | " \n",

297 | " | VITC_ONLY | \n",

298 | " Histo | \n",

299 | " 0.728 | \n",

300 | "

\n",

301 | " \n",

302 | " | ZS | \n",

303 | " 0.711 | \n",

304 | "

\n",

305 | "

"

306 | ],

307 | "text/plain": [

308 | ""

309 | ]

310 | },

311 | "execution_count": 3,

312 | "metadata": {},

313 | "output_type": "execute_result"

314 | }

315 | ],

316 | "source": [

317 | "benchmark = SummaCBenchmark(cut=\"test\")\n",

318 | "\n",

319 | "results = {}\n",

320 | "for dataset in benchmark.tasks:\n",

321 | " print(\"======= %s ========\" % (dataset[\"name\"]))\n",

322 | " datas = dataset[\"task\"]\n",

323 | " labels = [d[\"label\"] for d in datas]\n",

324 | " utils_summac_benchmark.compute_doc_level(scorer_doc, datas)\n",

325 | " \n",

326 | " for pred_label in datas[0].keys():\n",

327 | " if \"pred_\" not in pred_label or \"total\" in pred_label: continue\n",

328 | " balanced_acc = sklearn.metrics.balanced_accuracy_score(labels, [d[pred_label] for d in datas])\n",

329 | " model_name, input_type = pred_label.replace(\"pred_\", \"\").split(\"|\")\n",

330 | " model_type, nli_name = model_name.split(\"-\")\n",

331 | " k = (model_type, nli_name)\n",

332 | " if k not in results:\n",

333 | " results[k] = []\n",

334 | " results[k].append(balanced_acc)\n",

335 | "\n",

336 | "cleaned_results = []\n",

337 | "for (model_type, nli), vs in results.items():\n",

338 | " cleaned_results.append({\"nli_name\": nli, \"model_type\": model_type, \"score\": np.mean(vs)})\n",

339 | " \n",

340 | "pd.DataFrame(cleaned_results).groupby([\"nli_name\", \"model_type\"]).agg({\"score\": \"sum\"}).style.set_precision(3).set_caption(\"Balanced Accuracy\")"

341 | ]

342 | },

343 | {

344 | "cell_type": "code",

345 | "execution_count": 4,

346 | "metadata": {

347 | "hidden": true

348 | },

349 | "outputs": [],

350 | "source": [

351 | "for scorer in scorers:\n",

352 | " scorer[\"model\"].save_imager_cache()"

353 | ]

354 | },

355 | {

356 | "cell_type": "markdown",

357 | "metadata": {

358 | "heading_collapsed": true

359 | },

360 | "source": [

361 | "# Table 4: Choice of NLI Category"

362 | ]

363 | },

364 | {

365 | "cell_type": "code",

366 | "execution_count": null,

367 | "metadata": {

368 | "hidden": true

369 | },

370 | "outputs": [],

371 | "source": [

372 | "scorers = []\n",

373 | "for model_key in [\"vitc\", \"mnli\", \"anli\"]:\n",

374 | " for nli_labels in [\"e\", \"c\", \"n\", \"ec\", \"en\", \"cn\", \"ecn\"]:\n",

375 | " \n",

376 | " model_files = glob.glob(\"/home/phillab/models/summac/%s_sentence_percentile_%s*\" % (model_key, nli_labels))\n",

377 | " if len(model_files) == 0:\n",

378 | " print(\"No model for [%s, %s] was found\" % (model_key, nli_labels))\n",

379 | " continue\n",

380 | " best = sorted([path_to_model_info(mf) for mf in model_files], key=lambda m: m[\"acc\"])[-1]\n",

381 | " scorers.append({\"name\": \"Histo-%s-%s\" % (model_key.upper().replace(\"-\", \"_\"), nli_labels), \"model\": SummaCConv(bins=best[\"bins\"], nli_labels=best[\"nli_labels\"], models=[model_key], granularity=\"sentence\", start_file=best[\"model_path\"]), \"sign\": 1})\n",

382 | "\n",

383 | "scorer_doc = ScorerWrapper(scorers, max_batch_size=20, use_caching=True)\n",

384 | "print(\"%d scorers loaded\" % (len(scorers)))\n",

385 | "\n",

386 | "benchmark = SummaCBenchmark(cut=\"test\")\n",

387 | "\n",

388 | "results = {}\n",

389 | "for dataset in benchmark.tasks:\n",

390 | " print(\"======= %s ========\" % (dataset[\"name\"]))\n",

391 | " datas = dataset[\"task\"]\n",

392 | " labels = [d[\"label\"] for d in datas]\n",

393 | " utils_summac_benchmark.compute_doc_level(scorer_doc, datas)\n",

394 | " \n",

395 | " for pred_label in datas[0].keys():\n",

396 | " if \"pred_\" not in pred_label or \"total\" in pred_label: continue\n",

397 | " balanced_acc = sklearn.metrics.balanced_accuracy_score(labels, [d[pred_label] for d in datas])\n",

398 | " model_name, input_type = pred_label.replace(\"pred_\", \"\").split(\"|\")\n",

399 | " model_type, nli_name, nli_labels = model_name.split(\"-\")\n",

400 | " k = (nli_name, nli_labels)\n",

401 | " if k not in results:\n",

402 | " results[k] = []\n",

403 | " results[k].append(balanced_acc)\n",

404 | "\n",

405 | "cleaned_results = []\n",

406 | "for (nli, nli_labels), vs in results.items():\n",

407 | " cleaned_results.append({\"nli_name\": nli, \"nli_labels\": nli_labels, \"model_type\": model_type, \"score\": np.mean(vs)})\n",

408 | " \n",

409 | "pd.DataFrame(cleaned_results).groupby([\"nli_name\", \"nli_labels\"]).agg({\"score\": \"sum\"}).style.set_precision(3).set_caption(\"Balanced Accuracy\")"

410 | ]

411 | },

412 | {

413 | "cell_type": "markdown",

414 | "metadata": {

415 | "heading_collapsed": true

416 | },

417 | "source": [

418 | "# Table 5: Granularity Selection\n"

419 | ]

420 | },

421 | {

422 | "cell_type": "code",

423 | "execution_count": 2,

424 | "metadata": {

425 | "hidden": true

426 | },

427 | "outputs": [

428 | {

429 | "name": "stdout",

430 | "output_type": "stream",

431 | "text": [

432 | "\n",

433 | "\n",

434 | "\n",

435 | "\n",

436 | "\n",

437 | "\n",

438 | "\n",

439 | "\n",

440 | "\n",

441 | "\n",

442 | "\n",

443 | "\n",

444 | "28 scorers loaded\n"

445 | ]

446 | }

447 | ],

448 | "source": [

449 | "scorers = []\n",

450 | "granularities = [\"document\", \"document-sentence\", \"paragraph-document\", \"paragraph-sentence\", \"2sents-document\", \"2sents-sentence\", \"sentence-document\", \"sentence\"]\n",

451 | "\n",

452 | "for model_key in [\"mnli\", \"vitc\"]:\n",

453 | "# for granularity in [\"document\", \"document-sentence\", \"paragraph-document\", \"paragraph-sentence\", \"2sents-sentence\", \"sentence-document\", \"sentence\"]:\n",

454 | " for granularity in granularities:\n",

455 | "# for granularity in [\"sentence-document\"]:\n",

456 | " scorers.append({\"name\": \"ZS-%s-%s\" % (model_key.upper().replace(\"-\", \"_\"), granularity.replace(\"-\", \"_\")), \"model\": SummaCZS(granularity=granularity, model_name=model_key), \"sign\": 1})\n",

457 | " if granularity.startswith(\"document\"):\n",

458 | " continue\n",

459 | " model_files = glob.glob(\"/home/phillab/models/summac/%s_%s*\" % (model_key, granularity))\n",

460 | " if len(model_files) == 0:\n",

461 | " print(\"No model for [%s, %s] was found\" % (model_key, granularity))\n",

462 | " continue\n",

463 | " best = sorted([path_to_model_info(mf) for mf in model_files], key=lambda m: m[\"acc\"])[-1]\n",

464 | " scorers.append({\"name\": \"Histo-%s-%s\" % (model_key.upper().replace(\"-\", \"_\"), granularity.replace(\"-\", \"_\")), \"model\": SummaCConv(bins=best[\"bins\"], nli_labels=best[\"nli_labels\"], models=[model_key], granularity=granularity, start_file=best[\"model_path\"]), \"sign\": 1})\n",

465 | "\n",

466 | "scorer_doc = ScorerWrapper(scorers, max_batch_size=20, use_caching=True)\n",

467 | "print(\"%d scorers loaded\" % (len(scorers)))"

468 | ]

469 | },

470 | {

471 | "cell_type": "code",

472 | "execution_count": 3,

473 | "metadata": {

474 | "hidden": true

475 | },

476 | "outputs": [

477 | {

478 | "name": "stderr",

479 | "output_type": "stream",

480 | "text": [

481 | " 10%|█ | 40/400 [00:00<00:01, 304.49it/s]"

482 | ]

483 | },

484 | {

485 | "name": "stdout",

486 | "output_type": "stream",

487 | "text": [

488 | "======= cogensumm ========\n"

489 | ]

490 | },

491 | {

492 | "name": "stderr",

493 | "output_type": "stream",

494 | "text": [

495 | "100%|██████████| 400/400 [00:01<00:00, 326.26it/s]\n",

496 | " 3%|▎ | 40/1250 [00:00<00:03, 326.44it/s]"

497 | ]

498 | },

499 | {

500 | "name": "stdout",

501 | "output_type": "stream",

502 | "text": [

503 | "======= xsumfaith ========\n"

504 | ]

505 | },

506 | {

507 | "name": "stderr",

508 | "output_type": "stream",

509 | "text": [

510 | "100%|██████████| 1250/1250 [00:02<00:00, 462.43it/s]\n",

511 | " 6%|▋ | 40/634 [00:00<00:01, 330.76it/s]"

512 | ]

513 | },

514 | {

515 | "name": "stdout",

516 | "output_type": "stream",

517 | "text": [

518 | "======= polytope ========\n"

519 | ]

520 | },

521 | {

522 | "name": "stderr",

523 | "output_type": "stream",

524 | "text": [

525 | "100%|██████████| 634/634 [00:01<00:00, 386.73it/s]\n",

526 | " 8%|▊ | 40/503 [00:00<00:01, 341.95it/s]"

527 | ]

528 | },

529 | {

530 | "name": "stdout",

531 | "output_type": "stream",

532 | "text": [

533 | "======= factcc ========\n"

534 | ]

535 | },

536 | {

537 | "name": "stderr",

538 | "output_type": "stream",

539 | "text": [

540 | "100%|██████████| 503/503 [00:01<00:00, 441.78it/s]\n",

541 | " 5%|▍ | 40/850 [00:00<00:02, 356.76it/s]"

542 | ]

543 | },

544 | {

545 | "name": "stdout",

546 | "output_type": "stream",

547 | "text": [

548 | "======= summeval ========\n"

549 | ]

550 | },

551 | {

552 | "name": "stderr",

553 | "output_type": "stream",

554 | "text": [

555 | "100%|██████████| 850/850 [00:02<00:00, 350.46it/s]\n",

556 | " 4%|▍ | 60/1575 [00:00<00:03, 423.98it/s]"

557 | ]

558 | },

559 | {

560 | "name": "stdout",

561 | "output_type": "stream",

562 | "text": [

563 | "======= frank ========\n"

564 | ]

565 | },

566 | {

567 | "name": "stderr",

568 | "output_type": "stream",

569 | "text": [

570 | "100%|██████████| 1575/1575 [00:03<00:00, 394.82it/s]\n"

571 | ]

572 | },

573 | {

574 | "data": {

575 | "text/html": [

576 | "Balanced Accuracy | | | score |

| nli_name | granularity | model_type | |

\n",

578 | " \n",

579 | " | MNLI | \n",

580 | " 2sents_document | \n",

581 | " Histo | \n",

582 | " 0.638 | \n",

583 | "

\n",

584 | " \n",

585 | " | ZS | \n",

586 | " 0.64 | \n",

587 | "

\n",

588 | " \n",

589 | " | 2sents_sentence | \n",

590 | " Histo | \n",

591 | " 0.737 | \n",

592 | "

\n",

593 | " \n",

594 | " | ZS | \n",

595 | " 0.712 | \n",

596 | "

\n",

597 | " \n",

598 | " | document | \n",

599 | " ZS | \n",

600 | " 0.564 | \n",

601 | "

\n",

602 | " \n",

603 | " | document_sentence | \n",

604 | " ZS | \n",

605 | " 0.574 | \n",

606 | "

\n",

607 | " \n",

608 | " | paragraph_document | \n",

609 | " Histo | \n",

610 | " 0.616 | \n",

611 | "

\n",

612 | " \n",

613 | " | ZS | \n",

614 | " 0.598 | \n",

615 | "

\n",

616 | " \n",

617 | " | paragraph_sentence | \n",

618 | " Histo | \n",

619 | " 0.647 | \n",

620 | "

\n",

621 | " \n",

622 | " | ZS | \n",

623 | " 0.652 | \n",

624 | "

\n",

625 | " \n",

626 | " | sentence | \n",

627 | " Histo | \n",

628 | " 0.73 | \n",

629 | "

\n",

630 | " \n",

631 | " | ZS | \n",

632 | " 0.703 | \n",

633 | "

\n",

634 | " \n",

635 | " | sentence_document | \n",

636 | " Histo | \n",

637 | " 0.62 | \n",

638 | "

\n",

639 | " \n",

640 | " | ZS | \n",

641 | " 0.587 | \n",

642 | "

\n",

643 | " \n",

644 | " | VITC | \n",

645 | " 2sents_document | \n",

646 | " Histo | \n",

647 | " 0.713 | \n",

648 | "

\n",

649 | " \n",

650 | " | ZS | \n",

651 | " 0.697 | \n",

652 | "

\n",

653 | " \n",

654 | " | 2sents_sentence | \n",

655 | " Histo | \n",

656 | " 0.741 | \n",

657 | "

\n",

658 | " \n",

659 | " | ZS | \n",

660 | " 0.725 | \n",

661 | "

\n",

662 | " \n",

663 | " | document | \n",

664 | " ZS | \n",

665 | " 0.721 | \n",

666 | "

\n",

667 | " \n",

668 | " | document_sentence | \n",

669 | " ZS | \n",

670 | " 0.731 | \n",

671 | "

\n",

672 | " \n",

673 | " | paragraph_document | \n",

674 | " Histo | \n",

675 | " 0.712 | \n",

676 | "

\n",

677 | " \n",

678 | " | ZS | \n",

679 | " 0.698 | \n",

680 | "

\n",

681 | " \n",

682 | " | paragraph_sentence | \n",

683 | " Histo | \n",

684 | " 0.743 | \n",

685 | "

\n",

686 | " \n",

687 | " | ZS | \n",

688 | " 0.726 | \n",

689 | "

\n",

690 | " \n",

691 | " | sentence | \n",

692 | " Histo | \n",

693 | " 0.74 | \n",

694 | "

\n",

695 | " \n",

696 | " | ZS | \n",

697 | " 0.718 | \n",

698 | "

\n",

699 | " \n",

700 | " | sentence_document | \n",

701 | " Histo | \n",

702 | " 0.697 | \n",

703 | "

\n",

704 | " \n",

705 | " | ZS | \n",

706 | " 0.684 | \n",

707 | "

\n",

708 | "

"

709 | ],

710 | "text/plain": [

711 | ""

712 | ]

713 | },

714 | "execution_count": 3,

715 | "metadata": {},

716 | "output_type": "execute_result"

717 | }

718 | ],

719 | "source": [

720 | "results = {}\n",

721 | "for dataset in benchmark.tasks:\n",

722 | " print(\"======= %s ========\" % (dataset[\"name\"]))\n",

723 | " datas = dataset[\"task\"]\n",

724 | " labels = [d[\"label\"] for d in datas]\n",

725 | " utils_summac_benchmark.compute_doc_level(scorer_doc, datas)\n",

726 | " \n",

727 | " # Can be removed if re-running\n",

728 | " for scorer in scorers:\n",

729 | " scorer[\"model\"].save_imager_cache()\n",

730 | " \n",

731 | " for pred_label in datas[0].keys():\n",

732 | " if \"pred_\" not in pred_label or \"total\" in pred_label: continue\n",

733 | " balanced_acc = sklearn.metrics.balanced_accuracy_score(labels, [d[pred_label] for d in datas])\n",

734 | " model_name, input_type = pred_label.replace(\"pred_\", \"\").split(\"|\")\n",

735 | " \n",

736 | " model_type, nli_name, gran = model_name.split(\"-\")\n",

737 | " k = (model_type, nli_name, gran)\n",

738 | " if k not in results:\n",

739 | " results[k] = []\n",

740 | " results[k].append(balanced_acc)\n",

741 | "\n",

742 | "cleaned_results = []\n",

743 | "for (model_type, nli, gran), vs in results.items():\n",

744 | " cleaned_results.append({\"nli_name\": nli, \"granularity\": gran, \"model_type\": model_type, \"score\": np.mean(vs)})\n",

745 | " \n",

746 | "pd.DataFrame(cleaned_results).groupby([\"nli_name\", \"granularity\", \"model_type\"]).agg({\"score\": \"sum\"}).style.set_precision(3).set_caption(\"Balanced Accuracy\")"

747 | ]

748 | },

749 | {

750 | "cell_type": "markdown",

751 | "metadata": {},

752 | "source": [

753 | "# Table 6: SummaCZS Operator Choice"

754 | ]

755 | },

756 | {

757 | "cell_type": "code",

758 | "execution_count": 7,

759 | "metadata": {},

760 | "outputs": [

761 | {

762 | "name": "stdout",

763 | "output_type": "stream",

764 | "text": [

765 | "9 scorers loaded\n"

766 | ]

767 | }

768 | ],

769 | "source": [

770 | "scorers = []\n",

771 | "for op1 in [\"min\", \"mean\", \"max\"]:\n",

772 | " for op2 in [\"min\", \"mean\", \"max\"]:\n",

773 | " scorers.append({\"name\": \"ZS-%s-%s\" % (op1, op2), \"model\": SummaCZS(granularity=\"sentence\", model_name=\"vitc\", op1=op1, op2=op2), \"sign\": 1})\n",

774 | " \n",

775 | "scorer_doc = ScorerWrapper(scorers, max_batch_size=20, use_caching=True)\n",

776 | "print(\"%d scorers loaded\" % (len(scorers)))"

777 | ]

778 | },

779 | {

780 | "cell_type": "code",

781 | "execution_count": 8,

782 | "metadata": {},

783 | "outputs": [

784 | {

785 | "name": "stderr",

786 | "output_type": "stream",

787 | "text": [

788 | "2021-07-31 14:15:34,909 [6185] WARNING datasets.builder:355: [JupyterRequire] Using custom data configuration default\n",

789 | "2021-07-31 14:15:34,912 [6185] WARNING datasets.builder:510: [JupyterRequire] Reusing dataset xsum (/home/phillab/.cache/huggingface/datasets/xsum/default/1.2.0/4957825a982999fbf80bca0b342793b01b2611e021ef589fb7c6250b3577b499)\n",

790 | "2021-07-31 14:15:39,162 [6185] WARNING datasets.builder:510: [JupyterRequire] Reusing dataset cnn_dailymail (/home/phillab/.cache/huggingface/datasets/cnn_dailymail/3.0.0/3.0.0/3cb851bf7cf5826e45d49db2863f627cba583cbc32342df7349dfe6c38060234)\n",

791 | "100%|██████████| 400/400 [00:00<00:00, 4027.15it/s]"

792 | ]

793 | },

794 | {

795 | "name": "stdout",

796 | "output_type": "stream",

797 | "text": [

798 | "======= cogensumm ========\n"

799 | ]

800 | },

801 | {

802 | "name": "stderr",

803 | "output_type": "stream",

804 | "text": [

805 | "\n",

806 | " 38%|███▊ | 480/1250 [00:00<00:00, 4655.20it/s]"

807 | ]

808 | },

809 | {

810 | "name": "stdout",

811 | "output_type": "stream",

812 | "text": [

813 | "======= xsumfaith ========\n"

814 | ]

815 | },

816 | {

817 | "name": "stderr",

818 | "output_type": "stream",

819 | "text": [

820 | "100%|██████████| 1250/1250 [00:00<00:00, 4622.31it/s]\n",

821 | "100%|██████████| 634/634 [00:00<00:00, 4340.38it/s]"

822 | ]

823 | },

824 | {

825 | "name": "stdout",

826 | "output_type": "stream",

827 | "text": [

828 | "======= polytope ========\n"

829 | ]

830 | },

831 | {

832 | "name": "stderr",

833 | "output_type": "stream",

834 | "text": [

835 | "\n",

836 | "100%|██████████| 503/503 [00:00<00:00, 4521.10it/s]"

837 | ]

838 | },

839 | {

840 | "name": "stdout",

841 | "output_type": "stream",

842 | "text": [

843 | "======= factcc ========\n"

844 | ]

845 | },

846 | {

847 | "name": "stderr",

848 | "output_type": "stream",

849 | "text": [

850 | "\n",

851 | " 52%|█████▏ | 440/850 [00:00<00:00, 4396.31it/s]"

852 | ]

853 | },

854 | {

855 | "name": "stdout",

856 | "output_type": "stream",

857 | "text": [

858 | "======= summeval ========\n"

859 | ]

860 | },

861 | {

862 | "name": "stderr",

863 | "output_type": "stream",

864 | "text": [

865 | "100%|██████████| 850/850 [00:00<00:00, 3651.48it/s]\n",

866 | " 30%|███ | 480/1575 [00:00<00:00, 4628.48it/s]"

867 | ]

868 | },

869 | {

870 | "name": "stdout",

871 | "output_type": "stream",

872 | "text": [

873 | "======= frank ========\n"

874 | ]

875 | },

876 | {

877 | "name": "stderr",

878 | "output_type": "stream",

879 | "text": [

880 | "100%|██████████| 1575/1575 [00:00<00:00, 4369.67it/s]\n"

881 | ]

882 | },

883 | {

884 | "data": {

885 | "text/html": [

886 | "Balanced Accuracy | | score |

| op1 | op2 | |

\n",

888 | " \n",

889 | " | max | \n",

890 | " max | \n",

891 | " 0.691 | \n",

892 | "

\n",

893 | " \n",

894 | " | mean | \n",

895 | " 0.718 | \n",

896 | "

\n",

897 | " \n",

898 | " | min | \n",

899 | " 0.72 | \n",

900 | "

\n",

901 | " \n",

902 | " | mean | \n",

903 | " max | \n",

904 | " 0.62 | \n",

905 | "

\n",

906 | " \n",

907 | " | mean | \n",

908 | " 0.628 | \n",

909 | "

\n",

910 | " \n",

911 | " | min | \n",

912 | " 0.605 | \n",

913 | "

\n",

914 | " \n",

915 | " | min | \n",

916 | " max | \n",

917 | " 0.574 | \n",

918 | "

\n",

919 | " \n",

920 | " | mean | \n",

921 | " 0.557 | \n",

922 | "

\n",

923 | " \n",

924 | " | min | \n",

925 | " 0.531 | \n",

926 | "

\n",

927 | "

"

928 | ],

929 | "text/plain": [

930 | ""

931 | ]

932 | },

933 | "execution_count": 8,

934 | "metadata": {},

935 | "output_type": "execute_result"

936 | }

937 | ],

938 | "source": [

939 | "benchmark = SummaCBenchmark(cut=\"test\")\n",

940 | "\n",

941 | "results = {}\n",

942 | "for dataset in benchmark.tasks:\n",

943 | " print(\"======= %s ========\" % (dataset[\"name\"]))\n",

944 | " datas = dataset[\"task\"]\n",

945 | " labels = [d[\"label\"] for d in datas]\n",

946 | " utils_summac_benchmark.compute_doc_level(scorer_doc, datas)\n",

947 | " \n",

948 | " for pred_label in datas[0].keys():\n",

949 | " if \"pred_\" not in pred_label or \"total\" in pred_label: continue\n",

950 | " balanced_acc = sklearn.metrics.balanced_accuracy_score(labels, [d[pred_label] for d in datas])\n",

951 | " model_name, input_type = pred_label.replace(\"pred_\", \"\").split(\"|\")\n",

952 | " model_type, op1, op2 = model_name.split(\"-\")\n",

953 | " k = (op1, op2)\n",

954 | " if k not in results:\n",

955 | " results[k] = []\n",

956 | " results[k].append(balanced_acc)\n",

957 | "\n",

958 | "cleaned_results = []\n",

959 | "for (op1, op2), vs in results.items():\n",

960 | " cleaned_results.append({\"op1\": op1, \"op2\": op2, \"score\": np.mean(vs)})\n",

961 | " \n",

962 | "pd.DataFrame(cleaned_results).groupby([\"op1\", \"op2\"]).agg({\"score\": \"sum\"}).style.set_precision(3).set_caption(\"Balanced Accuracy\")"

963 | ]

964 | }

965 | ],

966 | "metadata": {

967 | "finalized": {

968 | "timestamp": 1625710190289,

969 | "trusted": true

970 | },

971 | "kernelspec": {

972 | "display_name": "Python 3",

973 | "language": "python",

974 | "name": "python3"

975 | },

976 | "language_info": {

977 | "codemirror_mode": {

978 | "name": "ipython",

979 | "version": 3

980 | },

981 | "file_extension": ".py",

982 | "mimetype": "text/x-python",

983 | "name": "python",

984 | "nbconvert_exporter": "python",

985 | "pygments_lexer": "ipython3",

986 | "version": "3.7.6"

987 | }

988 | },

989 | "nbformat": 4,

990 | "nbformat_minor": 4

991 | }

992 |

--------------------------------------------------------------------------------

/SummaC - Main Results.ipynb:

--------------------------------------------------------------------------------

1 | {

2 | "cells": [

3 | {

4 | "cell_type": "code",

5 | "execution_count": 1,

6 | "metadata": {},

7 | "outputs": [

8 | {

9 | "name": "stderr",

10 | "output_type": "stream",

11 | "text": [

12 | "Using custom data configuration default\n",

13 | "Reusing dataset xsum (/home/phillab/.cache/huggingface/datasets/xsum/default/1.2.0/4957825a982999fbf80bca0b342793b01b2611e021ef589fb7c6250b3577b499)\n",

14 | "Reusing dataset cnn_dailymail (/home/phillab/.cache/huggingface/datasets/cnn_dailymail/3.0.0/3.0.0/3cb851bf7cf5826e45d49db2863f627cba583cbc32342df7349dfe6c38060234)\n"

15 | ]

16 | },

17 | {

18 | "name": "stdout",

19 | "output_type": "stream",

20 | "text": [

21 | " name N N_pos N_neg frac_pos\n",

22 | "0 cogensumm 400 312 88 0.780000\n",

23 | "1 xsumfaith 1250 130 1120 0.104000\n",

24 | "2 polytope 634 41 593 0.064669\n",

25 | "3 factcc 503 441 62 0.876740\n",

26 | "4 summeval 850 770 80 0.905882\n",

27 | "5 frank 1575 529 1046 0.335873\n"

28 | ]

29 | }

30 | ],

31 | "source": [

32 | "from utils_summac_benchmark import SummaCBenchmark\n",

33 | "import utils_summac_benchmark, random\n",

34 | "\n",

35 | "benchmark = SummaCBenchmark(benchmark_folder=\"/home/phillab/data/summac_benchmark/\", cut=\"test\")\n",

36 | "benchmark.print_stats()"

37 | ]

38 | },

39 | {

40 | "cell_type": "markdown",

41 | "metadata": {},

42 | "source": [

43 | "# Table 2: Main Table of Results\n"

44 | ]

45 | },

46 | {

47 | "cell_type": "code",

48 | "execution_count": 2,

49 | "metadata": {},

50 | "outputs": [

51 | {

52 | "name": "stdout",

53 | "output_type": "stream",

54 | "text": [

55 | "\n"

56 | ]

57 | }

58 | ],

59 | "source": [

60 | "import sklearn, torch, numpy as np, json, os, tqdm, pandas as pd, nltk, seaborn as sns\n",

61 | "from model_guardrails import NERInaccuracyPenalty\n",

62 | "from model_summac import SummaCConv, SummaCZS\n",

63 | "from model_baseline import BaselineScorer\n",

64 | "# from model_entailment import EntailmentScorer\n",

65 | "from model_classifier import Classifier\n",

66 | "from utils_scoring import ScorerWrapper\n",

67 | "\n",

68 | "use_cache = True\n",

69 | "scorers = [\n",

70 | " {\"name\": \"NER\", \"model\": NERInaccuracyPenalty(flipped=True), \"only_doc\": True, \"sign\": 1},\n",

71 | "# {\"name\": \"MNLI\", \"model\": EntailmentScorer(model_card=\"roberta-large-mnli\", contradiction_idx=0), \"sign\": 1},\n",

72 | " # {\"name\": \"FactCC-CLS\", \"model\": Classifier(model_card=\"roberta-base\", score_class=1, model_file=\"/home/phillab/models/cls_roberta-base_factcc_first_0_f1_0.4766.bin\"), \"sign\": 1, \"only_doc\": True},\n",

73 | " {\"name\": \"DAE\", \"model\": BaselineScorer(model=\"dae\"), \"only_doc\": True, \"sign\": 1},\n",

74 | " {\"name\": \"FEQA\", \"model\": BaselineScorer(model=\"feqa\"), \"only_doc\": True, \"sign\": 1},\n",

75 | " {\"name\": \"QuestEval\", \"model\": BaselineScorer(model=\"questeval\"), \"only_doc\": True, \"sign\": 1},\n",

76 | " {\"name\": \"SummaC-ZS-VITC-L\", \"model\": SummaCZS(granularity=\"sentence\", model_name=\"vitc\", imager_load_cache=use_cache), \"sign\": 1, \"only_doc\": True},\n",

77 | " {\"name\": \"SummaC-Histo-VITC-L\", \"model\": SummaCConv(models=[\"vitc\"], granularity=\"sentence\", start_file=\"/home/phillab/models/summac/vitc_sentence_percentile_e_bacc0.744.bin\", bins=\"percentile\", imager_load_cache=use_cache, device=\"cpu\"), \"sign\": 1, \"only_doc\": True},\n",

78 | "]\n",

79 | "\n",

80 | "scorer_doc = ScorerWrapper(scorers, scoring_method=\"sum\", max_batch_size=20, use_caching=True)\n",

81 | "scorer_para = ScorerWrapper([s for s in scorers if \"only_doc\" not in s], scoring_method=\"sum\", max_batch_size=20, use_caching=True)"

82 | ]

83 | },

84 | {

85 | "cell_type": "code",

86 | "execution_count": 3,

87 | "metadata": {

88 | "scrolled": false

89 | },

90 | "outputs": [

91 | {

92 | "name": "stderr",

93 | "output_type": "stream",

94 | "text": [

95 | "\r",

96 | " 0%| | 0/400 [00:00\n",

248 | " #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow0_col0 {\n",

249 | " : ;\n",

250 | " background-color: #9ece9e;\n",

251 | " color: #000000;\n",

252 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow0_col1 {\n",

253 | " : ;\n",

254 | " background-color: #ebf3eb;\n",

255 | " color: #000000;\n",

256 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow0_col2 {\n",

257 | " : ;\n",

258 | " background-color: #a5d1a5;\n",

259 | " color: #000000;\n",

260 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow0_col3 {\n",

261 | " : ;\n",

262 | " background-color: #9dcd9d;\n",

263 | " color: #000000;\n",

264 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow0_col4 {\n",

265 | " : ;\n",

266 | " background-color: #a5d1a5;\n",

267 | " color: #000000;\n",

268 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow0_col5 {\n",

269 | " : ;\n",

270 | " background-color: #e7f1e7;\n",

271 | " color: #000000;\n",

272 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow0_col6 {\n",

273 | " : ;\n",

274 | " background-color: #b4d8b4;\n",

275 | " color: #000000;\n",

276 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow1_col0 {\n",

277 | " : ;\n",

278 | " background-color: #acd4ac;\n",

279 | " color: #000000;\n",

280 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow1_col1 {\n",

281 | " : ;\n",

282 | " background-color: #c4e0c4;\n",

283 | " color: #000000;\n",

284 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow1_col2 {\n",

285 | " : ;\n",

286 | " background-color: #c5e0c5;\n",

287 | " color: #000000;\n",

288 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow1_col3 {\n",

289 | " : ;\n",

290 | " background-color: #e0eee0;\n",

291 | " color: #000000;\n",

292 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow1_col4 {\n",

293 | " : ;\n",

294 | " background-color: #ebf3eb;\n",

295 | " color: #000000;\n",

296 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow1_col5 {\n",

297 | " : ;\n",

298 | " background-color: #b9dbb9;\n",

299 | " color: #000000;\n",

300 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow1_col6 {\n",

301 | " : ;\n",

302 | " background-color: #d6e9d6;\n",

303 | " color: #000000;\n",

304 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow2_col0 {\n",

305 | " : ;\n",

306 | " background-color: #ebf3eb;\n",

307 | " color: #000000;\n",

308 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow2_col1 {\n",

309 | " : ;\n",

310 | " background-color: #94c994;\n",

311 | " color: #000000;\n",

312 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow2_col2 {\n",

313 | " : ;\n",

314 | " background-color: #ebf3eb;\n",

315 | " color: #000000;\n",

316 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow2_col3 {\n",

317 | " : ;\n",

318 | " background-color: #ebf3eb;\n",

319 | " color: #000000;\n",

320 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow2_col4 {\n",

321 | " : ;\n",

322 | " background-color: #dfeddf;\n",

323 | " color: #000000;\n",

324 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow2_col5 {\n",

325 | " : ;\n",

326 | " background-color: #ebf3eb;\n",

327 | " color: #000000;\n",

328 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow2_col6 {\n",

329 | " : ;\n",

330 | " background-color: #ebf3eb;\n",

331 | " color: #000000;\n",

332 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow3_col0 {\n",

333 | " : ;\n",

334 | " background-color: #a3d0a3;\n",

335 | " color: #000000;\n",

336 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow3_col1 {\n",

337 | " : ;\n",

338 | " background-color: #96c996;\n",

339 | " color: #000000;\n",

340 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow3_col2 {\n",

341 | " font-weight: bold;\n",

342 | " background-color: #75b975;\n",

343 | " color: #000000;\n",

344 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow3_col3 {\n",

345 | " : ;\n",

346 | " background-color: #badbba;\n",

347 | " color: #000000;\n",

348 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow3_col4 {\n",

349 | " : ;\n",

350 | " background-color: #9bcc9b;\n",

351 | " color: #000000;\n",

352 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow3_col5 {\n",

353 | " font-weight: bold;\n",

354 | " background-color: #76ba76;\n",

355 | " color: #000000;\n",

356 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow3_col6 {\n",

357 | " : ;\n",

358 | " background-color: #94c994;\n",

359 | " color: #000000;\n",

360 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow4_col0 {\n",

361 | " : ;\n",

362 | " background-color: #97ca97;\n",

363 | " color: #000000;\n",

364 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow4_col1 {\n",

365 | " font-weight: bold;\n",

366 | " background-color: #75b975;\n",

367 | " color: #000000;\n",

368 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow4_col2 {\n",

369 | " : ;\n",

370 | " background-color: #a6d1a6;\n",

371 | " color: #000000;\n",

372 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow4_col3 {\n",

373 | " font-weight: bold;\n",

374 | " background-color: #75b975;\n",

375 | " color: #000000;\n",

376 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow4_col4 {\n",

377 | " font-weight: bold;\n",

378 | " background-color: #75b975;\n",

379 | " color: #000000;\n",

380 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow4_col5 {\n",

381 | " : ;\n",

382 | " background-color: #78bb78;\n",

383 | " color: #000000;\n",

384 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow4_col6 {\n",

385 | " font-weight: bold;\n",

386 | " background-color: #75b975;\n",

387 | " color: #000000;\n",

388 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow5_col0 {\n",

389 | " font-weight: bold;\n",

390 | " background-color: #75b975;\n",

391 | " color: #000000;\n",

392 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow5_col1 {\n",

393 | " : ;\n",

394 | " background-color: #b2d7b1;\n",

395 | " color: #000000;\n",

396 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow5_col2 {\n",

397 | " : ;\n",

398 | " background-color: #a9d3a9;\n",

399 | " color: #000000;\n",

400 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow5_col3 {\n",

401 | " : ;\n",

402 | " background-color: #86c286;\n",

403 | " color: #000000;\n",

404 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow5_col4 {\n",

405 | " : ;\n",

406 | " background-color: #82c082;\n",

407 | " color: #000000;\n",

408 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow5_col5 {\n",

409 | " : ;\n",

410 | " background-color: #86c286;\n",

411 | " color: #000000;\n",

412 | " } #T_a290bb2c_3a8a_11ec_b554_d587d49477ferow5_col6 {\n",

413 | " : ;\n",

414 | " background-color: #84c084;\n",

415 | " color: #000000;\n",

416 | " }Balanced Accuracy | | cogensumm | xsumfaith | polytope | factcc | summeval | frank | overall |

| model_name | input | | | | | | | |

\n",

417 | " \n",

418 | " | DAE | \n",

419 | " doc | \n",

420 | " 0.634 | \n",

421 | " 0.508 | \n",

422 | " 0.628 | \n",

423 | " 0.759 | \n",

424 | " 0.703 | \n",

425 | " 0.617 | \n",

426 | " 0.642 | \n",

427 | "

\n",

428 | " \n",

429 | " | FEQA | \n",

430 | " doc | \n",

431 | " 0.61 | \n",

432 | " 0.56 | \n",

433 | " 0.578 | \n",

434 | " 0.536 | \n",

435 | " 0.538 | \n",

436 | " 0.699 | \n",

437 | " 0.587 | \n",

438 | "

\n",

439 | " \n",

440 | " | NER | \n",

441 | " doc | \n",

442 | " 0.502 | \n",

443 | " 0.623 | \n",

444 | " 0.517 | \n",

445 | " 0.5 | \n",

446 | " 0.568 | \n",

447 | " 0.609 | \n",

448 | " 0.553 | \n",

449 | "

\n",

450 | " \n",

451 | " | QuestEval | \n",

452 | " doc | \n",

453 | " 0.626 | \n",

454 | " 0.621 | \n",

455 | " 0.703 | \n",

456 | " 0.666 | \n",

457 | " 0.725 | \n",

458 | " 0.821 | \n",

459 | " 0.694 | \n",

460 | "

\n",

461 | " \n",

462 | " | SummaC-Histo-VITC-L | \n",

463 | " doc | \n",

464 | " 0.647 | \n",

465 | " 0.664 | \n",

466 | " 0.627 | \n",

467 | " 0.895 | \n",

468 | " 0.817 | \n",

469 | " 0.816 | \n",

470 | " 0.744 | \n",

471 | "

\n",

472 | " \n",

473 | " | SummaC-ZS-VITC-L | \n",

474 | " doc | \n",

475 | " 0.704 | \n",

476 | " 0.584 | \n",

477 | " 0.62 | \n",

478 | " 0.838 | \n",

479 | " 0.787 | \n",

480 | " 0.79 | \n",

481 | " 0.721 | \n",

482 | "

\n",

483 | "

"

484 | ],

485 | "text/plain": [

486 | ""

487 | ]

488 | },

489 | "execution_count": 4,

490 | "metadata": {},

491 | "output_type": "execute_result"

492 | }

493 | ],

494 | "source": [

495 | "cm = sns.light_palette(\"green\", as_cmap=True)\n",

496 | "\n",

497 | "def highlight_max(data):\n",

498 | " is_max = data == data.max()\n",

499 | " return ['font-weight: bold' if v else '' for v in is_max]\n",

500 | "\n",

501 | "df = pd.DataFrame(results)\n",

502 | "df = df.groupby([\"model_name\", \"input\"]).agg({\"%s_bacc\" % (d): \"mean\" for d in benchmark.task_name_to_task})\n",

503 | "df.rename(columns={k: k.replace(\"_bacc\", \"\") for k in df.keys()}, inplace=True)\n",

504 | "df.drop(\"total\",inplace=True)\n",

505 | "df[\"overall\"] = (df[\"factcc\"]+df[\"frank\"]+df[\"polytope\"]+df[\"cogensumm\"]+df[\"summeval\"]+df[\"xsumfaith\"]) / (6.0)\n",

506 | "\n",

507 | "df.style.apply(highlight_max).background_gradient(cmap=cm, high=1.0, low=0.0).set_precision(3).set_caption(\"Balanced Accuracy\")"

508 | ]

509 | },

510 | {

511 | "cell_type": "code",

512 | "execution_count": 16,

513 | "metadata": {},

514 | "outputs": [

515 | {

516 | "name": "stdout",

517 | "output_type": "stream",

518 | "text": [

519 | "DATASET NAME MODEL NAME \n",

520 | "cogensumm DAE - 0.634 (0.598 - 0.677) (0.594 - 0.688)\n",

521 | "--------------\n",

522 | "cogensumm SummaC-ZS-VITC-L - 0.704 (0.668 - 0.745) (0.654 - 0.749)\n",

523 | "cogensumm SummaC-Histo-VITC-L - 0.647 (0.618 - 0.680) (0.612 - 0.684)\n",

524 | "==================================================\n",

525 | "xsumfaith NER - 0.623 (0.610 - 0.640) (0.607 - 0.644)\n",

526 | "--------------\n",

527 | "xsumfaith SummaC-ZS-VITC-L - 0.584 (0.561 - 0.606) (0.553 - 0.614)\n",

528 | "xsumfaith SummaC-Histo-VITC-L - 0.664 (0.643 - 0.694) (0.638 - 0.704)\n",

529 | "Significant difference (p < 0.05)\n",

530 | "==================================================\n",

531 | "polytope QuestEval - 0.703 (0.672 - 0.742) (0.657 - 0.745)\n",

532 | "--------------\n",

533 | "polytope SummaC-ZS-VITC-L - 0.620 (0.570 - 0.667) (0.557 - 0.684)\n",

534 | "polytope SummaC-Histo-VITC-L - 0.627 (0.552 - 0.680) (0.547 - 0.690)\n",

535 | "==================================================\n",

536 | "factcc DAE - 0.759 (0.720 - 0.797) (0.708 - 0.808)\n",

537 | "--------------\n",

538 | "factcc SummaC-ZS-VITC-L - 0.838 (0.809 - 0.870) (0.803 - 0.880)\n",

539 | "Significant difference (p < 0.05)\n",

540 | "factcc SummaC-Histo-VITC-L - 0.895 (0.878 - 0.916) (0.875 - 0.926)\n",

541 | "Significant difference (p < 0.05)\n",

542 | "Significant difference (p < 0.01)\n",

543 | "==================================================\n",

544 | "summeval QuestEval - 0.725 (0.702 - 0.758) (0.697 - 0.764)\n",

545 | "--------------\n",

546 | "summeval SummaC-ZS-VITC-L - 0.787 (0.755 - 0.823) (0.748 - 0.829)\n",

547 | "summeval SummaC-Histo-VITC-L - 0.817 (0.793 - 0.851) (0.788 - 0.858)\n",

548 | "Significant difference (p < 0.05)\n",

549 | "Significant difference (p < 0.01)\n",

550 | "==================================================\n",

551 | "frank QuestEval - 0.821 (0.809 - 0.835) (0.808 - 0.836)\n",

552 | "--------------\n",

553 | "frank SummaC-ZS-VITC-L - 0.790 (0.776 - 0.803) (0.775 - 0.807)\n",

554 | "frank SummaC-Histo-VITC-L - 0.816 (0.804 - 0.827) (0.801 - 0.830)\n",

555 | "==================================================\n",

556 | "==========================\n",

557 | "==========================\n",

558 | "==========================\n",

559 | "OVERALL QuestEval - (0.684 - 0.709) (0.682 - 0.711)\n",

560 | "OVERALL SummaC-ZS-VITC-L - (0.709 - 0.735) (0.707 - 0.737)\n",

561 | "OVERALL SummaC-Histo-VITC-L - (0.734 - 0.757) (0.730 - 0.760)\n"

562 | ]

563 | }

564 | ],

565 | "source": [

566 | "# Analysis with confidence interval\n",

567 | "strongest_baseline = {\"cogensumm\": \"DAE\", \"xsumfaith\": \"NER\", \"polytope\": \"QuestEval\", \"factcc\": \"DAE\", \"summeval\": \"QuestEval\", \"frank\": \"QuestEval\"}\n",

568 | "\n",

569 | "P5 = 5 / 2 # Correction due to the fact that we are running 2 tests with the same data\n",

570 | "P1 = 1 / 2 # Correction due to the fact that we are running 2 tests with the same data\n",

571 | "\n",

572 | "def resample_balanced_acc(preds, labels, n_samples=100, sample_ratio=0.7):\n",

573 | " N = len(preds)\n",

574 | " idxs = list(range(N))\n",

575 | " N_batch = int(sample_ratio*N)\n",

576 | "\n",

577 | " bal_accs = []\n",

578 | " for _ in range(n_samples):\n",

579 | " random.shuffle(idxs)\n",

580 | " batch_preds = [preds[i] for i in idxs[:N_batch]]\n",

581 | " batch_labels = [labels[i] for i in idxs[:N_batch]]\n",

582 | " \n",

583 | " bal_accs.append(sklearn.metrics.balanced_accuracy_score(batch_labels, batch_preds))\n",

584 | " return bal_accs\n",

585 | "\n",

586 | "print(\"DATASET NAME\".ljust(15), \"MODEL NAME\".ljust(20))\n",

587 | "\n",

588 | "sampled_batch_preds = {res[\"model_name\"]: [] for res in results}\n",

589 | "for res in results:\n",

590 | " if res[\"model_name\"] == \"total\":\n",

591 | " print(\"==================================================\")\n",

592 | " continue\n",

593 | " \n",

594 | " samples = resample_balanced_acc(res[\"preds\"], res[\"labels\"])\n",

595 | " sampled_batch_preds[res[\"model_name\"]].append(samples)\n",

596 | " low5, high5 = np.percentile(samples, P5), np.percentile(samples, 100-P5)\n",

597 | " low1, high1 = np.percentile(samples, P1), np.percentile(samples, 100-P1)\n",

598 | " bacc = sklearn.metrics.balanced_accuracy_score(res[\"labels\"], res[\"preds\"])\n",

599 | " if \"SummaC\" in res[\"model_name\"] or res[\"model_name\"] == strongest_baseline[res[\"dataset_name\"]]:\n",

600 | " \n",

601 | " print(res[\"dataset_name\"].ljust(15), res[\"model_name\"].ljust(20), \" - %.3f (%.3f - %.3f) (%.3f - %.3f)\" % (bacc, low5, high5, low1, high1))\n",

602 | " if res[\"model_name\"] == strongest_baseline[res[\"dataset_name\"]]:\n",

603 | " bl5, bh5, bl1, bh1 = low5, high5, low1, high1\n",

604 | " print(\"--------------\")\n",

605 | " else:\n",

606 | " if low5 >= bh5:\n",

607 | " print(\"Significant difference (p < 0.05)\")\n",

608 | " if low1 >= bh1:\n",

609 | " print(\"Significant difference (p < 0.01)\")\n",

610 | "\n",

611 | "print(\"==========================\")\n",

612 | "print(\"==========================\")\n",

613 | "print(\"==========================\")\n",

614 | "\n",

615 | "baseline = np.mean(np.array(sampled_batch_preds[\"QuestEval\"]), axis=0)\n",

616 | "summaczs = np.mean(np.array(sampled_batch_preds[\"SummaC-ZS-VITC-L\"]), axis=0)\n",

617 | "summacconv = np.mean(np.array(sampled_batch_preds[\"SummaC-Histo-VITC-L\"]), axis=0)\n",

618 | "\n",

619 | "for model in [\"QuestEval\", \"SummaC-ZS-VITC-L\", \"SummaC-Histo-VITC-L\"]:\n",

620 | " samples = np.mean(np.array(sampled_batch_preds[model]), axis=0)\n",

621 | " low5, high5 = np.percentile(samples, P5), np.percentile(samples, 100-P5)\n",

622 | " low1, high1 = np.percentile(samples, P1), np.percentile(samples, 100-P1)\n",

623 | " \n",

624 | " print(\"OVERALL\".ljust(15), model.ljust(20), \" - (%.3f - %.3f) (%.3f - %.3f)\" % (low5, high5, low1, high1))"

625 | ]

626 | },

627 | {

628 | "cell_type": "markdown",

629 | "metadata": {},

630 | "source": [

631 | "## ROC AUC score"

632 | ]

633 | },

634 | {

635 | "cell_type": "code",

636 | "execution_count": 6,

637 | "metadata": {},

638 | "outputs": [

639 | {

640 | "data": {

641 | "text/html": [

642 | "ROC AUC | | cogensumm | xsumfaith | polytope | factcc | summeval | frank | overall |

| model_name | input | | | | | | | |

\n",

812 | " \n",

813 | " | DAE | \n",

814 | " doc | \n",

815 | " 0.678 | \n",

816 | " 0.413 | \n",

817 | " 0.641 | \n",

818 | " 0.827 | \n",

819 | " 0.774 | \n",

820 | " 0.643 | \n",

821 | " 0.663 | \n",

822 | "

\n",

823 | " \n",

824 | " | FEQA | \n",

825 | " doc | \n",

826 | " 0.608 | \n",

827 | " 0.534 | \n",

828 | " 0.546 | \n",

829 | " 0.507 | \n",

830 | " 0.522 | \n",

831 | " 0.748 | \n",

832 | " 0.577 | \n",

833 | "

\n",

834 | " \n",

835 | " | NER | \n",

836 | " doc | \n",

837 | " 0.502 | \n",

838 | " 0.623 | \n",

839 | " 0.517 | \n",

840 | " 0.5 | \n",

841 | " 0.568 | \n",

842 | " 0.609 | \n",

843 | " 0.553 | \n",

844 | "

\n",

845 | " \n",

846 | " | QuestEval | \n",

847 | " doc | \n",

848 | " 0.644 | \n",

849 | " 0.664 | \n",

850 | " 0.722 | \n",

851 | " 0.715 | \n",

852 | " 0.79 | \n",

853 | " 0.879 | \n",

854 | " 0.736 | \n",

855 | "

\n",

856 | " \n",

857 | " | SummaC-Histo-VITC-L | \n",

858 | " doc | \n",

859 | " 0.676 | \n",

860 | " 0.702 | \n",

861 | " 0.624 | \n",

862 | " 0.922 | \n",

863 | " 0.86 | \n",

864 | " 0.884 | \n",

865 | " 0.778 | \n",

866 | "

\n",

867 | " \n",

868 | " | SummaC-ZS-VITC-L | \n",

869 | " doc | \n",

870 | " 0.731 | \n",

871 | " 0.58 | \n",

872 | " 0.603 | \n",

873 | " 0.837 | \n",

874 | " 0.855 | \n",

875 | " 0.853 | \n",

876 | " 0.743 | \n",

877 | "

\n",

878 | "

"

879 | ],

880 | "text/plain": [

881 | ""

882 | ]

883 | },

884 | "execution_count": 6,

885 | "metadata": {},

886 | "output_type": "execute_result"

887 | }

888 | ],

889 | "source": [

890 | "df = pd.DataFrame(results)\n",

891 | "df = df.groupby([\"model_name\", \"input\"]).agg({\"%s_roc_auc\" % (d): \"mean\" for d in benchmark.task_name_to_task})\n",

892 | "df.rename(columns={k: k.replace(\"_roc_auc\", \"\") for k in df.keys()}, inplace=True)\n",

893 | "df.drop(\"total\",inplace=True)\n",

894 | "df[\"overall\"] = (df[\"factcc\"]+df[\"frank\"]+df[\"polytope\"]+df[\"cogensumm\"]+df[\"summeval\"]+df[\"xsumfaith\"]) / (6.0)\n",

895 | "\n",

896 | "df.style.apply(highlight_max).background_gradient(cmap=cm, high=1.0, low=0.0).set_precision(3).set_caption(\"ROC AUC\")"

897 | ]

898 | },

899 | {

900 | "cell_type": "code",

901 | "execution_count": 17,

902 | "metadata": {},

903 | "outputs": [

904 | {

905 | "name": "stdout",

906 | "output_type": "stream",

907 | "text": [

908 | "DATASET NAME MODEL NAME \n",

909 | "cogensumm DAE - 0.678 (0.639 - 0.726) (0.632 - 0.735)\n",

910 | "--------------\n",

911 | "cogensumm SummaC-ZS-VITC-L - 0.731 (0.697 - 0.767) (0.685 - 0.778)\n",

912 | "cogensumm SummaC-Histo-VITC-L - 0.676 (0.633 - 0.716) (0.627 - 0.720)\n",

913 | "==================================================\n",

914 | "xsumfaith QuestEval - 0.664 (0.631 - 0.688) (0.626 - 0.699)\n",

915 | "--------------\n",

916 | "xsumfaith SummaC-ZS-VITC-L - 0.580 (0.552 - 0.615) (0.547 - 0.616)\n",

917 | "xsumfaith SummaC-Histo-VITC-L - 0.702 (0.675 - 0.733) (0.666 - 0.740)\n",

918 | "==================================================\n",

919 | "polytope QuestEval - 0.722 (0.683 - 0.762) (0.682 - 0.766)\n",

920 | "--------------\n",

921 | "polytope SummaC-ZS-VITC-L - 0.603 (0.529 - 0.667) (0.524 - 0.685)\n",

922 | "polytope SummaC-Histo-VITC-L - 0.624 (0.560 - 0.679) (0.530 - 0.696)\n",

923 | "==================================================\n",

924 | "factcc DAE - 0.827 (0.793 - 0.863) (0.787 - 0.881)\n",

925 | "--------------\n",

926 | "factcc SummaC-ZS-VITC-L - 0.837 (0.800 - 0.879) (0.786 - 0.891)\n",

927 | "factcc SummaC-Histo-VITC-L - 0.922 (0.899 - 0.945) (0.895 - 0.952)\n",

928 | "Significant difference (p < 0.05)\n",

929 | "Significant difference (p < 0.01)\n",

930 | "==================================================\n",

931 | "summeval QuestEval - 0.790 (0.751 - 0.836) (0.750 - 0.843)\n",

932 | "--------------\n",

933 | "summeval SummaC-ZS-VITC-L - 0.855 (0.829 - 0.879) (0.817 - 0.887)\n",

934 | "summeval SummaC-Histo-VITC-L - 0.860 (0.837 - 0.883) (0.832 - 0.886)\n",

935 | "Significant difference (p < 0.05)\n",

936 | "==================================================\n",

937 | "frank QuestEval - 0.879 (0.870 - 0.888) (0.868 - 0.892)\n",

938 | "--------------\n",

939 | "frank SummaC-ZS-VITC-L - 0.853 (0.842 - 0.865) (0.839 - 0.867)\n",

940 | "frank SummaC-Histo-VITC-L - 0.884 (0.875 - 0.895) (0.869 - 0.898)\n",

941 | "==================================================\n",

942 | "==========================\n",

943 | "==========================\n",

944 | "==========================\n",

945 | "OVERALL QuestEval - (0.721 - 0.750) (0.715 - 0.751)\n",

946 | "OVERALL SummaC-ZS-VITC-L - (0.723 - 0.756) (0.719 - 0.764)\n",

947 | "OVERALL SummaC-Histo-VITC-L - (0.763 - 0.791) (0.762 - 0.792)\n"

948 | ]

949 | }

950 | ],

951 | "source": [

952 | "# Analysis with confidence interval\n",

953 | "strongest_baseline = {\"cogensumm\": \"DAE\", \"xsumfaith\": \"QuestEval\", \"polytope\": \"QuestEval\", \"factcc\": \"DAE\", \"summeval\": \"QuestEval\", \"frank\": \"QuestEval\"}\n",

954 | "\n",