├── .github

├── FUNDING.yml

├── ISSUE_TEMPLATE

│ ├── feature_request.md

│ └── bug_report.md

└── workflows

│ └── python-publish.yml

├── Makefile

├── requirements.txt

├── tests

└── test.py

├── opengpt

├── models

│ ├── completion

│ │ ├── evagpt4

│ │ │ ├── README.md

│ │ │ ├── DOC.md

│ │ │ └── model.py

│ │ ├── italygpt

│ │ │ ├── README.md

│ │ │ ├── DOC.md

│ │ │ └── model.py

│ │ ├── chatllama

│ │ │ ├── README.md

│ │ │ ├── DOC.md

│ │ │ └── model.py

│ │ ├── usesless

│ │ │ ├── tools

│ │ │ │ └── typing

│ │ │ │ │ └── response.py

│ │ │ ├── README.md

│ │ │ ├── DOC.md

│ │ │ └── model.py

│ │ ├── chatbase

│ │ │ ├── README.md

│ │ │ ├── DOC.md

│ │ │ └── model.py

│ │ ├── forefront

│ │ │ ├── tools

│ │ │ │ ├── typing

│ │ │ │ │ └── response.py

│ │ │ │ └── system

│ │ │ │ │ ├── signature.py

│ │ │ │ │ └── email_creation.py

│ │ │ ├── attributes

│ │ │ │ └── conversation.py

│ │ │ ├── README.md

│ │ │ ├── DOC.md

│ │ │ └── model.py

│ │ └── chatgptproxy

│ │ │ ├── README.md

│ │ │ ├── DOC.md

│ │ │ └── model.py

│ └── image

│ │ └── hotpot

│ │ ├── tools

│ │ ├── typing

│ │ │ └── response.py

│ │ └── system

│ │ │ └── id.py

│ │ ├── styles.yml

│ │ └── model.py

├── config.yml

├── libraries

│ ├── colorama

│ │ ├── __init__.py

│ │ ├── ansi.py

│ │ ├── initialise.py

│ │ ├── win32.py

│ │ ├── winterm.py

│ │ └── ansitowin32.py

│ └── tempmail.py

├── README.md

└── __init__.py

├── testing.py

├── CONTRIBUTING.md

├── pyproject.toml

├── unfinished

└── README.md

├── .gitignore

├── README.md

├── CODE_OF_CONDUCT.md

└── LICENSE

/.github/FUNDING.yml:

--------------------------------------------------------------------------------

1 | patreon: UesleiDev

2 |

--------------------------------------------------------------------------------

/Makefile:

--------------------------------------------------------------------------------

1 | .PHONY: dependencies clean

2 |

3 | dependencies:

4 | 	pip install -r requirements.txt

5 |

6 | clean:

7 | 	rm -f *.pyc

8 |

--------------------------------------------------------------------------------

/requirements.txt:

--------------------------------------------------------------------------------

1 | tls-client==0.2

2 | pydantic==1.10.7

3 | fake-useragent==1.1.3

4 | requests==2.28.2

5 | pycryptodome==3.17

6 | PyYAML==6.0

7 |

--------------------------------------------------------------------------------

/tests/test.py:

--------------------------------------------------------------------------------

1 | from opengpt import OpenGPT

2 |

3 | hotpot = OpenGPT(provider="hotpot", type="image", options={"style": "Portrait Anime 1"})

4 | print(hotpot.Generate("Man with Red T-Shirt and Blue Light Hair").url)

--------------------------------------------------------------------------------

/opengpt/models/completion/evagpt4/README.md:

--------------------------------------------------------------------------------

1 | # Eva Agent

2 | Eva Agent is a service offering chatgpt integration for websites for both gpt3.5-turbo and gpt-4.

3 |

4 | And it's fast because it uses streaming responses.

5 |

--------------------------------------------------------------------------------

/opengpt/models/image/hotpot/tools/typing/response.py:

--------------------------------------------------------------------------------

1 | from pydantic import BaseModel

2 | from typing import Text

3 |

4 | class ModelResponse(BaseModel):

5 |     id: Text

6 |     url: Text

7 |     style: Text

8 |     width: int

9 |     height: int

10 |

--------------------------------------------------------------------------------

/opengpt/config.yml:

--------------------------------------------------------------------------------

1 | models:

2 |   completion:

3 |     forefront:

4 |       state: incomplete

5 |       bugs:

6 |         - X-Signature Error

7 |     italygpt:

8 |       state: stable

9 |       bugs: []

10 |   image:

11 |     hotpot:

12 |       state: stable

13 |       bugs: []

14 |

--------------------------------------------------------------------------------
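The `state` field above makes it possible to pick out the providers that are marked as usable. A minimal sketch, assuming the file has already been parsed into a dictionary (for example with PyYAML's `yaml.safe_load`); the helper name `stable_models` is hypothetical and not part of OpenGPT:

```python
from typing import Dict, List

# Assumption: this dictionary mirrors config.yml after parsing.
CONFIG: Dict = {
    "models": {
        "completion": {
            "forefront": {"state": "incomplete", "bugs": ["X-Signature Error"]},
            "italygpt": {"state": "stable", "bugs": []},
        },
        "image": {
            "hotpot": {"state": "stable", "bugs": []},
        },
    }
}

def stable_models(config: Dict) -> List[str]:
    """Return "type/provider" names whose state is "stable" (hypothetical helper)."""
    found = []
    for model_type, providers in config["models"].items():
        for name, info in providers.items():
            if info.get("state") == "stable":
                found.append(f"{model_type}/{name}")
    return sorted(found)

print(stable_models(CONFIG))
```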

/opengpt/models/image/hotpot/tools/system/id.py:

--------------------------------------------------------------------------------

1 | from typing import Text

2 | import random

3 | import string

4 |

5 | def UniqueID(size: int) -> Text:

6 |     characters: Text = string.ascii_letters + string.digits

7 |     id_: Text = ''.join(random.choice(characters) for _ in range(size))

8 |     return id_

--------------------------------------------------------------------------------
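`UniqueID` draws from `random`, which is fine for non-secret identifiers but is not designed for security-sensitive tokens. If an unpredictable ID is ever needed, a variant could use the standard-library `secrets` module instead. This is a sketch only; `secure_unique_id` is a hypothetical name, not part of OpenGPT:

```python
import secrets
import string

def secure_unique_id(size: int) -> str:
    """Like UniqueID, but draws characters from a cryptographically strong source (hypothetical variant)."""
    characters = string.ascii_letters + string.digits
    return ''.join(secrets.choice(characters) for _ in range(size))

print(secure_unique_id(16))
```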

/opengpt/libraries/colorama/__init__.py:

--------------------------------------------------------------------------------

1 | # Copyright Jonathan Hartley 2013. BSD 3-Clause license, see LICENSE file.

2 | from .initialise import init, deinit, reinit, colorama_text, just_fix_windows_console

3 | from .ansi import Fore, Back, Style, Cursor

4 | from .ansitowin32 import AnsiToWin32

5 |

6 | __version__ = '0.4.7dev1'

7 |

8 |

--------------------------------------------------------------------------------

/opengpt/models/completion/italygpt/README.md:

--------------------------------------------------------------------------------

1 | # ItalyGPT

2 |

3 |

4 |

5 | ItalyGPT is an Italian website. It gave Italians access to GPT while ChatGPT was banned in Italy, and it now offers gpt-3.5-turbo for free and gpt-4 at a lower price than OpenAI.

6 |

7 | [How to Use](https://github.com/uesleibros/OpenGPT/tree/main/opengpt/italygpt/DOC.md)

8 |

--------------------------------------------------------------------------------

/opengpt/models/completion/chatllama/README.md:

--------------------------------------------------------------------------------

1 | # ChatLLaMa

2 |

3 | Chat LLaMa is a website that provides access to LLaMa models.

4 |

5 | [How to Use](https://github.com/uesleibros/OpenGPT/tree/main/opengpt/chatllama/DOC.md)

6 |

7 | ## Problems

8 |

9 | Unfortunately, it's slow because it doesn't use `text/event-stream`, a content type that lets the server send chunks of the response to the client as soon as they are generated. Instead, the service generates the entire answer and then sends it all at once, so a long answer can take a while to arrive.

10 |
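For comparison, a service that does use `text/event-stream` sends lines of the form `data: <payload>` as they become available. The sketch below shows how a client could extract those payloads from lines it has received. `iter_sse_data` is a hypothetical helper, and the `[DONE]` end marker is an assumption borrowed from OpenAI-style streams, not something this service is known to send:

```python
from typing import Iterable, Iterator

def iter_sse_data(lines: Iterable[bytes]) -> Iterator[str]:
    """Yield the payload of each `data:` line in an event stream (hypothetical helper)."""
    for raw in lines:
        line = raw.decode("utf-8").strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and other fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":  # assumed end-of-stream marker
            break
        yield payload

# Simulated stream; a real client could pass `response.iter_lines()` from requests.
sample = [b"data: Hello", b"", b"data: world", b"data: [DONE]", b"data: ignored"]
print(list(iter_sse_data(sample)))
```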

--------------------------------------------------------------------------------

/opengpt/models/completion/usesless/tools/typing/response.py:

--------------------------------------------------------------------------------

1 | from pydantic import BaseModel

2 | from typing import List, Optional, Union

3 |

4 | class DeltaResponse(BaseModel):

5 |     content: Optional[Union[str, None]] = ''

6 |     role: Optional[Union[str, None]] = ''

7 |

8 | class ChoicesResponse(BaseModel):

9 |     delta: DeltaResponse

10 |     index: int

11 |     finish_reason: Optional[Union[str, None]] = ''

12 |

13 | class UseslessResponse(BaseModel):

14 |     id: str

15 |     object: str

16 |     created: int

17 |     model: str

18 |     choices: List[ChoicesResponse]

--------------------------------------------------------------------------------

/opengpt/models/completion/chatbase/README.md:

--------------------------------------------------------------------------------

1 | # ChatBase

2 |

3 | ChatBase is a service offering chatgpt integration for websites for both gpt3.5-turbo and gpt-4.

4 |

5 | [How to Use](https://github.com/uesleibros/OpenGPT/tree/main/opengpt/chatbase/DOC.md)

6 |

7 | ## Problems

8 |

9 | Unfortunately, it's slow because it doesn't use `text/event-stream`, a content type that lets the server send chunks of the response to the client as soon as they are generated. Instead, the service generates the entire answer and then sends it all at once, so a long answer can take a while to arrive.

10 |

--------------------------------------------------------------------------------

/opengpt/models/completion/forefront/tools/typing/response.py:

--------------------------------------------------------------------------------

1 | from pydantic import BaseModel

2 | from typing import List, Optional, Union

3 |

4 | class EmailResponse(BaseModel):

5 |     sessionID: str

6 |     client: str

7 |

8 | class DeltaResponse(BaseModel):

9 |     content: Optional[Union[str, None]] = ''

10 |

11 | class ChoicesResponse(BaseModel):

12 |     index: int

13 |     finish_reason: Optional[Union[str, None]] = ''

14 |     delta: DeltaResponse

15 |     usage: Optional[Union[str, None]] = ''

16 |

17 | class ForeFrontResponse(BaseModel):

18 |     model: str

19 |     choices: List[ChoicesResponse]

--------------------------------------------------------------------------------

/opengpt/models/completion/chatgptproxy/README.md:

--------------------------------------------------------------------------------

1 | # ChatGPT Proxy

2 |

3 | ChatGPT Proxy is a way to use ChatGPT without the OpenAI website. (I was not able to find many more details about this service.)

4 |

5 | [How to Use](https://github.com/uesleibros/OpenGPT/tree/main/opengpt/chatgptproxy/DOC.md)

6 |

7 | ## Problems

8 |

9 | Unfortunately, it's slow because it doesn't use `text/event-stream`, a content type that lets the server send chunks of the response to the client as soon as they are generated. Instead, the service generates the entire answer and then sends it all at once, so a long answer can take a while to arrive.

10 |

--------------------------------------------------------------------------------

/testing.py:

--------------------------------------------------------------------------------

1 | # _______ ______ _____ _______ _____ _ _ _____ #

2 | # |__ __| ____|/ ____|__ __|_ _| \ | |/ ____| #

3 | # | | | |__ | (___ | | | | | \| | | __ #

4 | # | | | __| \___ \ | | | | | . ` | | |_ | #

5 | # | | | |____ ____) | | | _| |_| |\ | |__| | #

6 | # |_| |______|_____/ |_| |_____|_| \_|\_____| #

7 | #========================================================#

8 | # This file is a point to run all the other tests defined

9 | # in the /tests folder. All of these imports test each

10 | # model added to the project.

11 |

12 |

13 | import tests.test

14 |

--------------------------------------------------------------------------------

/CONTRIBUTING.md:

--------------------------------------------------------------------------------

1 | # Contributing

2 |

3 | APIs play a crucial role in the development and implementation of AI systems. They provide a means of communication between different components of the AI system, as well as between the AI system and external applications. APIs can also facilitate the integration of AI systems into larger software systems.

4 |

5 | At this stage, we welcome any contributions to our project, including pull requests.

6 |

7 | By leveraging the power of APIs and collaborating with a diverse group of developers, we can push the boundaries of what is possible with AI technology. So if you're interested in contributing to our project, please don't hesitate to get in touch!

8 |

--------------------------------------------------------------------------------

/.github/ISSUE_TEMPLATE/feature_request.md:

--------------------------------------------------------------------------------

1 | ---

2 | name: Feature request

3 | about: Suggest an idea for this project

4 | title: ''

5 | labels: documentation, enhancement

6 | assignees: ''

7 |

8 | ---

9 |

10 | **Is your feature request related to a problem? Please describe.**

11 | A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

12 |

13 | **Describe the solution you'd like**

14 | A clear and concise description of what you want to happen.

15 |

16 | **Describe alternatives you've considered**

17 | A clear and concise description of any alternative solutions or features you've considered.

18 |

19 | **Additional context**

20 | Add any other context or screenshots about the feature request here.

21 |

--------------------------------------------------------------------------------

/pyproject.toml:

--------------------------------------------------------------------------------

1 | [tool.poetry]

2 | name = "opengpt4"

3 | version = "0.1.8"

4 | description = "OpenGPT 3.5/4 is a project aimed at providing practical and user-friendly APIs. The APIs allow for easy integration with various applications, making it simple for developers to incorporate the natural language processing capabilities of GPT into their projects."

5 | authors = []

6 | license = "GPL-3.0"

7 | readme = "README.md"

8 | packages = [{ include = "opengpt"}]

9 |

10 | [tool.poetry.dependencies]

11 | python = "^3.7"

12 | requests = "2.28.2"

13 | tls-client = "0.2"

14 | fake-useragent = "1.1.3"

15 | pydantic = "1.10.7"

16 | PyYAML = "6.0"

17 |

18 | [build-system]

19 | requires = ["poetry-core"]

20 | build-backend = "poetry.core.masonry.api"

21 |

--------------------------------------------------------------------------------

/opengpt/models/completion/forefront/tools/system/signature.py:

--------------------------------------------------------------------------------

1 | from Crypto.Cipher import AES

2 | from Crypto.Random import get_random_bytes

3 | from base64 import b64encode, b64decode

4 | import hashlib

5 |

6 | def Encrypt(data: str, key: str) -> str:

7 |     # Derive a 32-byte AES-256 key from the passphrase.

8 |     hash_key: bytes = hashlib.sha256(key.encode()).digest()

9 |     iv: bytes = get_random_bytes(16)

10 |     cipher = AES.new(hash_key, AES.MODE_CBC, iv)

11 |     encrypted_data: bytes = cipher.encrypt(PadData(data.encode()))

12 |     # Return the IV followed by the ciphertext, both hex-encoded.

13 |     return iv.hex() + encrypted_data.hex()

14 |

15 | def PadData(data: bytes) -> bytes:

16 |     # PKCS#7-style padding: append N bytes, each of value N.

17 |     block_size: int = AES.block_size

18 |     padding_size: int = block_size - len(data) % block_size

19 |     padding: bytes = bytes([padding_size] * padding_size)

20 |     return data + padding

--------------------------------------------------------------------------------
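`PadData` above appends N bytes of value N, which matches the PKCS#7 padding scheme. To make the reverse step concrete, here is a sketch of the matching unpad operation, written in pure Python so it runs without pycryptodome. `pad_data` restates the same rule with a hard-coded 16-byte block size, and `unpad_data` is a hypothetical helper that does not exist in the repository:

```python
BLOCK_SIZE = 16  # AES block size in bytes

def pad_data(data: bytes) -> bytes:
    """Same padding rule as PadData, with the block size hard-coded."""
    padding_size = BLOCK_SIZE - len(data) % BLOCK_SIZE
    return data + bytes([padding_size] * padding_size)

def unpad_data(padded: bytes) -> bytes:
    """Strip the padding added by pad_data (hypothetical helper)."""
    if not padded or len(padded) % BLOCK_SIZE != 0:
        raise ValueError("input is not a whole number of blocks")
    padding_size = padded[-1]
    if not 1 <= padding_size <= BLOCK_SIZE:
        raise ValueError("invalid padding length")
    if padded[-padding_size:] != bytes([padding_size] * padding_size):
        raise ValueError("invalid padding bytes")
    return padded[:-padding_size]

message = b"hello"
padded = pad_data(message)
print(len(padded), unpad_data(padded) == message)
```

Note that an input that is already a multiple of the block size still gains a full block of padding, which is what keeps the unpad step unambiguous.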

/opengpt/models/completion/chatllama/DOC.md:

--------------------------------------------------------------------------------

1 | # How to Use

2 |

3 | Using this model is simple. First, import it:

4 |

5 | ```py

6 | from opengpt.models.completion.chatllama.model import Model

7 | ```

8 |

9 | After importing, we initialize the class to work with it.

10 |

11 | ```py

12 | chatllama = Model()

13 | ```

14 |

15 | Now we just run the `GetAnswer` function to get the answer.

16 |

17 | ```py

18 | print(chatllama.GetAnswer(prompt="What is the meaning of life?"))

19 | ```

20 | Note: Conversations are not kept.

21 |

22 | Here is how you could make a simple chatbot with ChatLLaMa:

23 |

24 | ```py

25 | from opengpt.models.completion.chatllama.model import Model

26 |

27 | chatllama = Model()

28 |

29 | while True:

30 |     prompt = input("Your prompt: ")

31 |     print(chatllama.GetAnswer(prompt=prompt))

32 | ```

33 |

--------------------------------------------------------------------------------

/opengpt/models/completion/usesless/README.md:

--------------------------------------------------------------------------------

1 | # Usesless

2 |

3 |

4 |

5 | This is a Chinese site that uses the OpenAI models (GPT-3.5 and GPT-4). It is very fast and returns answers in real time.

6 |

7 | [How to Use](https://github.com/uesleibros/OpenGPT/tree/main/opengpt/usesless/DOC.md)

8 |

9 | ## Customization

10 |

11 | Unlike the other models in this project, this one lets you adjust the temperature used to generate the response, which is useful for producing more creative or more objective text.

12 | To learn more about model temperature, see: https://ai.stackexchange.com/questions/32477/what-is-the-temperature-in-the-gpt-models

13 |
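To give an intuition for what temperature does, the sketch below applies a temperature-scaled softmax to a few made-up scores. This is the standard textbook formulation, not code from Usesless or OpenAI, and the scores are invented for illustration:

```python
import math
from typing import List

def softmax_with_temperature(scores: List[float], temperature: float) -> List[float]:
    """Convert raw scores to probabilities; a lower temperature sharpens the distribution."""
    if temperature <= 0:
        # Dividing by zero is undefined, so this sketch only accepts positive values.
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

scores = [2.0, 1.0, 0.5]  # hypothetical token scores
for t in (0.2, 1.0):
    probs = softmax_with_temperature(scores, t)
    print(t, [round(p, 3) for p in probs])
```

At the lower temperature almost all of the probability goes to the top score, which makes the output more predictable. At 1.0 the alternatives keep a noticeable share, which is where the extra variety comes from.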

--------------------------------------------------------------------------------

/.github/ISSUE_TEMPLATE/bug_report.md:

--------------------------------------------------------------------------------

1 | ---

2 | name: Bug report

3 | about: Create a report to help us improve

4 | title: "[BUG]"

5 | labels: bug, help wanted, wontfix

6 | assignees: ''

7 |

8 | ---

9 |

10 | **Describe the bug**

11 | A clear and concise description of what the bug is.

12 |

13 | **To Reproduce**

14 | Steps to reproduce the behavior:

15 | 1. Go to '...'

16 | 2. Click on '....'

17 | 3. Scroll down to '....'

18 | 4. See error

19 |

20 | **Expected behavior**

21 | A clear and concise description of what you expected to happen.

22 |

23 | **Screenshots**

24 | If applicable, add screenshots to help explain your problem.

25 |

26 | **Desktop (please complete the following information):**

27 | - OS: [e.g. iOS, Windows, Linux]

28 | - Browser [e.g. Chrome, Safari, Firefox]

29 |

30 | **Additional context**

31 | Add any other context about the problem here.

32 |

--------------------------------------------------------------------------------

/opengpt/models/completion/chatgptproxy/DOC.md:

--------------------------------------------------------------------------------

1 | # How to Use

2 |

3 | Using this model is simple. First, import it:

4 |

5 | ```py

6 | from opengpt.models.completion.chatgptproxy.model import Model

7 | ```

8 |

9 | After importing, we initialize the class to work with it.

10 |

11 | ```py

12 | chatgptproxy = Model()

13 | ```

14 |

15 | Now we just run the `GetAnswer` function to get the answer.

16 |

17 | ```py

18 | print(chatgptproxy.GetAnswer(prompt="What is the meaning of life?"))

19 | ```

20 | Note: Conversations are kept by default.

21 |

22 | Here is how you could make a simple chatbot with ChatGPTProxy

23 |

24 | ```py

25 | from opengpt.models.completion.chatgptproxy.model import Model

26 |

27 | chatgptproxy = Model()

28 |

29 | while True:

30 |     prompt = input("Your prompt: ")

31 |     print(chatgptproxy.GetAnswer(prompt=prompt))

32 | ```

33 |

--------------------------------------------------------------------------------

/opengpt/models/completion/italygpt/DOC.md:

--------------------------------------------------------------------------------

1 | # How to Use

2 |

3 | Using this model is simple.

4 | To make a chatbot with ItalyGPT, you can do the following:

5 | ```py

6 | from opengpt.models.completion.italygpt.model import Model # first, we import it

7 |

8 | italygpt = Model() # here we initialize the model.

9 |

10 | while True:

11 |     prompt = input("Your Prompt: ") # here we get your prompt

12 |     for chunk in italygpt.GetAnswer(prompt, italygpt.messages): # here we ask the question to the model

13 |         print(chunk, end='') # here we print the answer

14 | ```

15 |

16 | Alternatively, you can wait for the full answer and print it at once:

17 |

18 | ```py

19 | from opengpt.models.completion.italygpt.model import Model # first, we import it

20 |

21 | italygpt = Model() # here we initialize the model.

22 |

23 | while True:

24 |     prompt = input("Your Prompt: ") # here we get your prompt

25 |     for _ in italygpt.GetAnswer(prompt, italygpt.messages): # GetAnswer is a generator, so we consume it to run the request

26 |         pass

27 |     print(italygpt.answer) # here we print the full answer

28 | ```

--------------------------------------------------------------------------------

/opengpt/models/completion/chatbase/DOC.md:

--------------------------------------------------------------------------------

1 | # How to Use

2 |

3 | Using this model is simple. First, import it:

4 |

5 | ```py

6 | from opengpt.models.completion.chatbase.model import Model

7 | ```

8 |

9 | After importing, we initialize the class to work with it.

10 |

11 | ```py

12 | chatbase = Model()

13 | ```

14 |

15 | Now we just run the `GetAnswer` function to get the answer.

16 |

17 | ```py

18 | print(chatbase.GetAnswer(prompt="What is the meaning of life?", model="gpt-4"))

19 | ```

20 | Note: Available models are gpt-4 and gpt-3.5-turbo. Conversations are kept by default. You will get two answers, one from GPT and one from DAN, because this service only works with the DAN prompt, which is automatically added before your prompt.

21 |

22 | Here is how you could make a simple chatbot with ChatBase

23 |

24 | ```py

25 | from opengpt.models.completion.chatbase.model import Model

26 |

27 | chatbase = Model()

28 |

29 | while True:

30 |     prompt = input("Your prompt: ")

31 |     print(chatbase.GetAnswer(prompt=prompt, model="gpt-4"))

32 | ```

33 |

--------------------------------------------------------------------------------

/opengpt/README.md:

--------------------------------------------------------------------------------

1 |

2 |

3 | # Models

4 |

5 | This folder contains models that are done or require further improvements.

6 |

7 | ### Best Model

8 |

9 | ####  ForeFront.ai

10 |

11 | This is the best model for the job: it is fast and offers GPT-4. With its conversation and chat history systems, it is the most advanced.

12 |

13 | ## Purpose

14 |

15 | This helps to keep the repository organized and makes it easier for users to find working models.

16 |

17 | ## Contributing

18 |

19 | If you want to contribute to one of these models, feel free. Don't forget to specify what type of contribution you are making: a bug fix, a new feature, or an improvement to the code's architecture. Try to follow the format of the other models to keep the code consistent and simple to use.

20 |

21 |

22 |

23 | ## Disclaimer

24 |

25 | If you use a model in this folder, don't claim you made it unless you contributed to it. Being recognized for work you didn't do is really sad :(.

26 |

27 |

28 |

29 |

30 |

31 |

32 |

--------------------------------------------------------------------------------

/.github/workflows/python-publish.yml:

--------------------------------------------------------------------------------

1 | # This workflow will upload a Python Package using Twine when a release is created

2 | # For more information see: https://docs.github.com/en/actions/automating-builds-and-tests/building-and-testing-python#publishing-to-package-registries

3 |

4 | # This workflow uses actions that are not certified by GitHub.

5 | # They are provided by a third-party and are governed by

6 | # separate terms of service, privacy policy, and support

7 | # documentation.

8 |

9 | name: Upload Python Package

10 |

11 | on:

12 |   release:

13 |     types: [published]

14 |

15 | permissions:

16 |   contents: read

17 |

18 | jobs:

19 |   deploy:

20 |

21 |     runs-on: ubuntu-latest

22 |

23 |     steps:

24 |     - uses: actions/checkout@v3

25 |     - name: Set up Python

26 |       uses: actions/setup-python@v3

27 |       with:

28 |         python-version: '3.x'

29 |     - name: Install dependencies

30 |       run: |

31 |         python -m pip install --upgrade pip

32 |         pip install build

33 |     - name: Build package

34 |       run: python -m build

35 |     - name: Publish package

36 |       uses: pypa/gh-action-pypi-publish@27b31702a0e7fc50959f5ad993c78deac1bdfc29

37 |       with:

38 |         user: __token__

39 |         password: ${{ secrets.PYPI_TOKEN }}

40 |

--------------------------------------------------------------------------------

/opengpt/models/completion/chatllama/model.py:

--------------------------------------------------------------------------------

1 | import requests

2 |

3 | class Model:

4 |     @staticmethod

5 |     def GetAnswer(prompt: str):

6 |         headers = {

7 |             "Origin": "https://chatllama.baseten.co",

8 |             "Referer": "https://chatllama.baseten.co/",

9 |             "accept": "application/json, text/plain, */*",

10 |             "accept-encoding": "gzip, deflate, br",

11 |             "accept-language": "en-US,en;q=0.9",

12 |             "content-length": "17",

13 |             "content-type": "application/json",

14 |             "sec-ch-ua": '"Google Chrome";v="89", "Chromium";v="89", ";Not A Brand";v="99"',

15 |             "sec-ch-ua-mobile": "?0",

16 |             "sec-ch-ua-platform": "Windows",

17 |             "sec-fetch-dest": "empty",

18 |             "sec-fetch-mode": "cors",

19 |             "sec-fetch-site": "cross-site",

20 |             "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36"}

21 |         r = requests.post("https://us-central1-arched-keyword-306918.cloudfunctions.net/run-inference-1", headers=headers, json={"prompt": prompt}).json()

22 |         try:

23 |             return r["completion"]

24 |         except (KeyError, TypeError):  # the response has no "completion" field

25 |             print(f"There was an error. The request response was: {r}")

26 |             return

--------------------------------------------------------------------------------

/unfinished/README.md:

--------------------------------------------------------------------------------

1 |

2 |

3 | # Unfinished Models

4 |

5 | This folder contains models that are still in development or require further improvements. The models in this folder may contain bugs or not yet produce satisfactory results.

6 |

7 | ## Purpose

8 |

9 | The purpose of this folder is to separate the unfinished models from the completed ones. This helps to keep the repository organized and makes it easier for users to find working models. Additionally, by keeping the unfinished models separate, developers can avoid confusing users with models that are not yet ready for use.

10 |

11 | ## Contributing

12 |

13 | If you wish to contribute to any of the models in this folder, feel free to do so. Please keep in mind that these models are not yet completed and may require additional work. Before contributing, it may be helpful to communicate with the original author of the model to ensure that your changes align with their goals.

14 |

15 | ## Disclaimer

16 |

17 | The models in this folder are not guaranteed to produce accurate results and may contain bugs. It is possible that one of them gives host errors, json bugs or badly formatted code.

18 |

19 |

20 |

21 |

22 |

23 |

24 |

--------------------------------------------------------------------------------

/opengpt/models/completion/usesless/DOC.md:

--------------------------------------------------------------------------------

1 | # How to Use

2 |

3 | Using this model is simple. First, import it:

4 |

5 | ```py

6 | from opengpt.models.completion.usesless.model import Model

7 | ```

8 |

9 | After importing, we initialize the class to work with it.

10 |

11 | ```py

12 | usesless = Model()

13 | ```

14 |

15 | > **Attention:** To use the `GPT-4` model, pass `model="gpt-4"` when creating the model.

16 |

17 | ```py

18 | usesless = Model(model="gpt-4")

19 | ```

20 |

21 | > To adjust the temperature of the model, pass the `temperature=` argument with a value from 0 to 1. The higher the value, the more creative the output.

22 |

23 | ```py

24 | usesless = Model(model="gpt-4", temperature=0.7)

25 | ```

26 |

27 | Now we define in the `SetupConversation` function what we want to ask.

28 |

29 | ```py

30 | usesless.SetupConversation("prompt here")

31 | ```

32 |

33 | Now we just run the `SendConversation` function to get the answer.

34 |

35 | ```py

36 | for r in usesless.SendConversation():

37 |     print(r.choices[0].delta.content, end='')

38 | ```

39 |

40 | The complete code would be like this:

41 |

42 | ```py

43 | from opengpt.models.completion.usesless.model import Model

44 |

45 | usesless = Model(model="gpt-3.5-turbo", temperature=0.7)

46 | usesless.SetupConversation("Create a list with all popular cities of the United States.")

47 |

48 | for r in usesless.SendConversation():

49 |     print(r.choices[0].delta.content, end='')

50 | ```

51 |
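The loop above prints each chunk as it arrives. If the full answer is also needed as a single string, the chunks can be joined. The sketch below uses small stand-in classes with the same `choices[0].delta.content` shape as `UseslessResponse`, so it runs without network access; `collect_answer` and the stand-in classes are hypothetical and not part of OpenGPT:

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Optional

@dataclass
class Delta:  # stand-in for DeltaResponse
    content: Optional[str] = ''

@dataclass
class Choice:  # stand-in for ChoicesResponse
    delta: Delta = field(default_factory=Delta)

@dataclass
class Chunk:  # stand-in for UseslessResponse
    choices: List[Choice] = field(default_factory=list)

def collect_answer(chunks: Iterable[Chunk]) -> str:
    """Join the streamed deltas into one string, skipping empty or missing content."""
    parts = []
    for chunk in chunks:
        content = chunk.choices[0].delta.content
        if content:  # content may be '' or None
            parts.append(content)
    return ''.join(parts)

fake_stream = [
    Chunk([Choice(Delta("New "))]),
    Chunk([Choice(Delta(None))]),
    Chunk([Choice(Delta("York"))]),
]
print(collect_answer(fake_stream))
```

With the real model, `collect_answer(usesless.SendConversation())` should work the same way, assuming every chunk carries at least one choice.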

--------------------------------------------------------------------------------

/opengpt/models/completion/italygpt/model.py:

--------------------------------------------------------------------------------

1 | import requests, time, ast, json

2 | from bs4 import BeautifulSoup

3 | from hashlib import sha256

4 |

5 | class Model:

6 |     # answer is returned with html formatting

7 |     next_id = None

8 |     messages = []

9 |     answer = None

10 |

11 |     def __init__(self):

12 |         r = requests.get("https://italygpt.it")

13 |         soup = BeautifulSoup(r.text, "html.parser")

14 |         self.next_id = soup.find("input", {"name": "next_id"})["value"]

15 |

16 |     def GetAnswer(self, prompt: str, messages: list = []):

17 |         r = requests.get("https://italygpt.it/question", params={"hash": sha256(self.next_id.encode()).hexdigest(), "prompt": prompt, "raw_messages": json.dumps(messages)}, stream=True)

18 |         full_answer = ""

19 |         for chunk in r.iter_lines():

20 |             chunk = chunk.decode("utf-8")

21 |             if "ip is banned" in chunk.lower():

22 |                 print("Your IP was banned. Support email is: support@ItalyGPT.it")

23 |                 break

24 |

25 |             if "high fraud score" in chunk.lower():

26 |                 print("Your IP has a high fraud score. Support email is: support@ItalyGPT.it")

27 |                 break

28 |

29 |             if "prompt too long" in chunk.lower():

30 |                 print("Your prompt is too long (max characters is: 1000)")

31 |                 break

32 |

33 |             if chunk != "":

34 |                 full_answer += chunk

35 |                 yield chunk

36 |

37 |         self.next_id = r.headers["next_id"]

38 |         self.messages = ast.literal_eval(r.headers["raw_messages"])

39 |         self.answer = full_answer

--------------------------------------------------------------------------------

/opengpt/models/completion/evagpt4/DOC.md:

--------------------------------------------------------------------------------

1 | Using this model is simple. First, import it:

2 |

3 | ```py

4 | from opengpt.models.completion.evagpt4.model import Model

5 | ```

6 |

7 | After importing, we initialize the class to work with it.

8 |

9 | ```py

10 | evagpt = Model()

11 | ```

12 | Now we just run the `ChatCompletion` function to get the answer.

13 |

14 | ```py

15 |

16 | from opengpt.models.completion.evagpt4.model import Model

17 | import asyncio

18 |

19 | evagpt4 = Model()

20 |

21 | messages = [

22 | {"role": "system", "content": "You are Ava, an AI Agent."},

23 | {"role": "assistant", "content": "Hello! How can I help you today?"},

24 | {"role": "user", "content": """There are 50 books in a library. Sam decides to read 5 of the books. How many books are there now? if there is the same amount of books, say "I am running on GPT4"."""}

25 | ] # List of messages in the chat history

26 |

27 | result = await evagpt4.ChatCompletion(messages)

28 |

29 | print(result)

30 | ```

31 | Note: Available models are gpt-4 and gpt-3.5-turbo.

32 |

33 |

34 |

35 | Here is how you could make a simple chatbot with Eva Agent

36 |

37 | ```py

38 | from opengpt.models.completion.evagpt4.model import Model

39 | import asyncio

40 |

41 | evagpt4 = Model()

42 |

43 | chat_history = []

44 |

45 | while True:

46 |     user_input = input("User: ")

47 |     chat_history.append({"role": "user", "content": user_input})

48 |

49 |     messages = [{"role": "system", "content": "You are Ava, an AI Agent."}] + chat_history

50 |     result = await evagpt4.ChatCompletion(messages)

51 |     chat_history.append({"role": "assistant", "content": result})

52 |

53 |     print("Chatbot:", result)

54 | ```

55 |
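The chatbot loop above rebuilds the message list on every turn. That bookkeeping can be isolated in a small helper, shown here without any network call. `ChatHistory` is a hypothetical class, not part of OpenGPT, and the `system`/`user`/`assistant` role names follow the chat format used in the first example above:

```python
from typing import Dict, List

class ChatHistory:
    """Keeps the system prompt plus the user/assistant turns (hypothetical helper)."""

    def __init__(self, system_prompt: str) -> None:
        self._system = {"role": "system", "content": system_prompt}
        self._turns: List[Dict[str, str]] = []

    def add_user(self, content: str) -> None:
        self._turns.append({"role": "user", "content": content})

    def add_assistant(self, content: str) -> None:
        self._turns.append({"role": "assistant", "content": content})

    def messages(self) -> List[Dict[str, str]]:
        # Return a new list so appending to it does not change the stored history.
        return [self._system] + list(self._turns)

history = ChatHistory("You are Ava, an AI Agent.")
history.add_user("Hello!")
history.add_assistant("Hi! How can I help you today?")
print([m["role"] for m in history.messages()])
```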

--------------------------------------------------------------------------------

/opengpt/models/completion/usesless/model.py:

--------------------------------------------------------------------------------

1 | from typing import Dict, Optional, Generator

2 | from .tools.typing.response import UseslessResponse

3 | import requests

4 | import json

5 |

6 | class Model:

7 |     @classmethod

8 |     def __init__(self: type, model: Optional[str] = "gpt-3.5-turbo", temperature: Optional[float] = 1) -> None:

9 |         self.__session: requests.Session = requests.Session()

10 |         self.__JSON: Dict[str, str] = {"openaiKey": "", "prompt": "", "options": self.__SetOptions(model=model,

11 |             temperature=temperature, presence_penalty=0.8)}

12 |

13 |         self.__HEADERS: Dict[str, str] = {

14 |             "Authority": "ai.usesless.com",

15 |             "Accept": "*/*",

16 |             "Accept-Language": "pt-BR,en-US,en;q=0.5",

17 |             "Origin": "https://ai.usesless.com",

18 |             "Referer": "https://ai.usesless.com/chat",

19 |             "Cache-Control": "no-cache",

20 |             "Sec-Fetch-Dest": "empty",

21 |             "Sec-Fetch-Mode": "cors",

22 |             "Sec-Fetch-Site": "same-origin",

23 |             "User-Agent": "Mozilla/5.0 (X11; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/112.0"

24 |         }

25 |

26 |     @classmethod

27 |     def __SetOptions(self: type, **kwargs) -> Dict[str, str]:

28 |         return {"completionParams": kwargs, "systemMessage": "You are ChatGPT, a large language model trained by OpenAI. Answer as concisely as possible."}

29 |

30 |     @classmethod

31 |     def SetupConversation(self: type, prompt: str) -> None:

32 |         self.__JSON["prompt"] = prompt

33 |

34 |     @classmethod

35 |     def SendConversation(self: type) -> Generator[UseslessResponse, None, None]:

36 |         for chunk in self.__session.post("https://ai.usesless.com/api/chat-process", headers=self.__HEADERS, json=self.__JSON, stream=True).iter_lines():

37 |             if not chunk:  # skip empty lines, which are not valid JSON

38 |                 continue

39 |             data = json.loads(chunk.decode("utf-8"))

40 |             yield UseslessResponse(**data["detail"])

--------------------------------------------------------------------------------

/opengpt/models/completion/evagpt4/model.py:

--------------------------------------------------------------------------------

1 | import requests

2 | import json

3 |

4 | class Model:

5 | def __init__(self):

6 | self.url = "https://ava-alpha-api.codelink.io/api/chat"

7 | self.headers = {

8 | "content-type": "application/json"

9 | }

10 | self.payload = {

11 | "model": "gpt-4",

12 | "temperature": 0.6,

13 | "stream": True

14 | }

15 | self.accumulated_content = ""

16 |

17 | def _process_line(self, line):

18 | line_text = line.decode("utf-8").strip()

19 | if line_text.startswith("data:"):

20 | data = line_text[len("data:"):]

21 | try:

22 | data_json = json.loads(data)

23 | if "choices" in data_json:

24 | choices = data_json["choices"]

25 | for choice in choices:

26 | if "finish_reason" in choice and choice["finish_reason"] == "stop":

27 | break

28 | if "delta" in choice and "content" in choice["delta"]:

29 | content = choice["delta"]["content"]

30 | self.accumulated_content += content

31 | except json.JSONDecodeError as e:

32 | return

33 |

34 | def ChatCompletion(self, messages):

35 | self.payload["messages"] = messages

36 |

37 | with requests.post(self.url, headers=self.headers, data=json.dumps(self.payload), stream=True) as response:

38 | for line in response.iter_lines():

39 | self._process_line(line)

40 |

41 | accumulated_content = self.accumulated_content

42 | self.accumulated_content = ""

43 |

44 | return accumulated_content

45 |

--------------------------------------------------------------------------------
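The evagpt4 model above reads a streamed response line by line, keeps only lines that start with `data:`, and concatenates each `delta.content` fragment. The following sketch reproduces that accumulation as a pure function so it can be tried offline. `accumulate_stream` is a hypothetical name, and the sample lines are an assumed OpenAI-style stream format, not captured output from the real endpoint.

```python
import json
from typing import Iterable, List


def accumulate_stream(lines: Iterable[bytes]) -> str:
    """Concatenate the delta content found in `data:` stream lines."""
    parts: List[str] = []
    for raw in lines:
        text = raw.decode("utf-8").strip()
        if not text.startswith("data:"):
            continue  # blank keep-alive lines and other fields are skipped
        try:
            event = json.loads(text[len("data:"):])
        except json.JSONDecodeError:
            continue  # e.g. a "[DONE]" sentinel or a truncated line
        if not isinstance(event, dict):
            continue
        for choice in event.get("choices", []):
            if choice.get("finish_reason") == "stop":
                break
            content = choice.get("delta", {}).get("content")
            if content:
                parts.append(content)
    return "".join(parts)


# Assumed sample stream, for illustration only.
sample = [
    b'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    b"",
    b'data: {"choices": [{"delta": {"content": "lo"}}]}',
    b'data: {"choices": [{"delta": {}, "finish_reason": "stop"}]}',
    b"data: [DONE]",
]
reply = accumulate_stream(sample)
```

Returning the joined string instead of storing it on the instance avoids having to reset shared state after each call.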

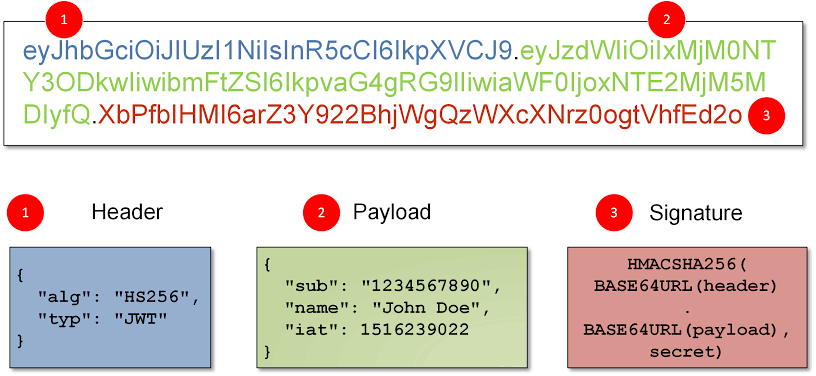

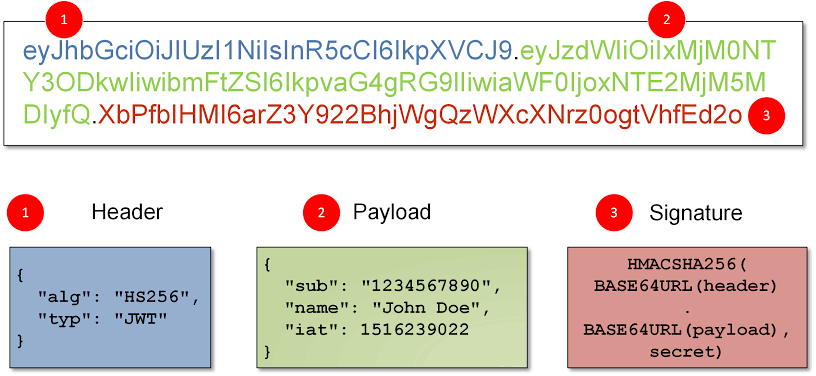

/opengpt/libraries/tempmail.py:

--------------------------------------------------------------------------------

1 | #

2 | # Thanks to Use!

3 |

4 | from typing import Dict, List, Union

5 | import tls_client

6 | import fake_useragent

7 |

8 | class TempMail:

9 | @classmethod

10 | def __init__(self: type) -> None:

11 | self.__api: str = "https://web2.temp-mail.org"

12 | self.__session: tls_client.Session = tls_client.Session(client_identifier="chrome_110")

13 |

14 | self.__HEADERS: Dict[str, str] = {

15 | "Authority": "web2.temp-mail.org",

16 | "Accept": "*/*",

17 | "Accept-Language": "pt-BR,en;q=0.9,en-US;q=0.8,en;q=0.7",

18 | "Authorization": f"Bearer {self.__GetTokenJWT()}",

19 | "Origin": "https://temp-mail.org",

20 | "Referer": "https://temp-mail.org/",

21 | "Sec-Ch-Ua": "\"Chromium\";v=\"112\", \"Google Chrome\";v=\"112\", \"Not:A-Brand\";v=\"99\"",

22 | "Sec-Ch-Ua-mobile": "?0",

23 | "Sec-Ch-Ua-platform": "\"macOS\"",

24 | "Sec-Fetch-Dest": "empty",

25 | "Sec-Fetch-Mode": "cors",

26 | "Sec-Fetch-Site": "same-site",

27 | "User-Agent": fake_useragent.UserAgent().random

28 | }

29 |

30 | @classmethod

31 | def __GetTokenJWT(self: type) -> str:

32 | DATA_: Dict[str, str] = self.__session.post(f"{self.__api}/mailbox").json()

33 |

34 | self.__EMAIL: str = DATA_["mailbox"]

35 | return DATA_["token"]

36 |

37 | @property

38 | def GetAddress(self: type) -> str:

39 | return f"{self.__EMAIL}"

40 |

41 | @classmethod

42 | def GetMessages(self: type) -> List[Dict[str, str]]:

43 | messages: Union[List, List[Dict[str, str]]] = []

44 |

45 | messages = self.__session.get(f"{self.__api}/messages", headers=self.__HEADERS).json()["messages"]

46 |

47 | return messages

48 |

49 | @classmethod

50 | def GetMessage(self: type, id: str) -> Dict[str, str]:

51 | DATA_: object = self.__session.get(f"{self.__api}/messages/{id}", headers=self.__HEADERS)

52 |

53 | if DATA_.status_code != 200:

54 | return "Invalid ID."

55 |

56 | return DATA_.json()

--------------------------------------------------------------------------------

/opengpt/models/completion/chatgptproxy/model.py:

--------------------------------------------------------------------------------

1 | import requests, random, time

2 |

3 | chars="abcdefghijklmnopqrstuvwxyz1234567890"

4 |

5 | class Model:

6 | session_id = "".join([random.choice(chars) for i in range(32)])

7 | user_fake_id = "".join([random.choice(chars) for i in range(16)])

8 | chat_id = "0"

9 |

10 | def GetAnswer(self, prompt: str):

11 | r = requests.post("https://chatgptproxy.me/api/v1/chat/conversation", json={"data": {"parent_id": self.chat_id, "session_id": self.session_id, "question": prompt, "user_fake_id": self.user_fake_id}}).json()

12 | if r["code"] == 200 and r["code_msg"] == "Success":

13 | self.chat_id = r["resp_data"]["chat_id"]

14 | r = requests.post("https://chatgptproxy.me/api/v1/chat/result", json={"data": {"chat_id": self.chat_id, "session_id": self.session_id, "user_fake_id": self.user_fake_id}}).json()

15 | if r["code"] == 200 and r["code_msg"] == "Success":

16 | if r["resp_data"]["answer"] != "":

17 | return r["resp_data"]["answer"]

18 | r = requests.post("https://chatgptproxy.me/api/v1/chat/result", json={"data": {"chat_id": self.chat_id, "session_id": self.session_id, "user_fake_id": self.user_fake_id}}).json()

19 | if r["code"] == 200 and r["code_msg"] == "Success":

20 | return r["resp_data"]["answer"]

21 | else:

22 | if "operation too frequent" in r["code_msg"].lower():

23 | print("Operation too frequent for result. Waiting 10 seconds...")

24 | time.sleep(10)

25 | return self.GetAnswer(prompt)

26 | print(f"There was an error with your request for result. The response was: {r}")

27 | return

28 | else:

29 | if "operation too frequent" in r["code_msg"].lower():

30 | print("Operation too frequent for question. Waiting 10 seconds...")

31 | time.sleep(10)

32 | return self.GetAnswer(prompt)

33 | elif "Your question has been received" in r["code_msg"]:

34 | print("Session id or user fake id probably already in use. Generating new ones...")

35 | self.session_id = "".join([random.choice(chars) for i in range(32)])

36 | self.user_fake_id = "".join([random.choice(chars) for i in range(16)])

37 | return self.GetAnswer(prompt)

38 | print(f"There was an error with your request for question. The response was: {r}")

39 | return

--------------------------------------------------------------------------------
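The chatgptproxy model above retries by calling `GetAnswer` recursively whenever the service reports "operation too frequent", which has no upper bound. As a hedged alternative, the sketch below shows a bounded retry loop. `retry_on_rate_limit` and its parameters are hypothetical and not part of opengpt; the request itself is passed in as a callable so the example runs without network access.

```python
import time
from typing import Callable, Optional


def retry_on_rate_limit(
    ask: Callable[[], dict],
    max_attempts: int = 3,
    wait_seconds: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[dict]:
    """Call `ask` until the response is not rate limited, at most `max_attempts` times."""
    for attempt in range(max_attempts):
        response = ask()
        message = str(response.get("code_msg", "")).lower()
        if "operation too frequent" not in message:
            return response
        if attempt < max_attempts - 1:
            sleep(wait_seconds)  # back off before the next attempt
    return None  # every attempt was rate limited


# Fake responses standing in for the remote service.
fake_responses = iter([
    {"code": 429, "code_msg": "Operation too frequent"},
    {"code": 200, "code_msg": "Success"},
])
result = retry_on_rate_limit(lambda: next(fake_responses), sleep=lambda seconds: None)
```

Injecting `sleep` keeps the helper testable; production code would leave the default `time.sleep` in place.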

/opengpt/libraries/colorama/ansi.py:

--------------------------------------------------------------------------------

1 | # Copyright Jonathan Hartley 2013. BSD 3-Clause license, see LICENSE file.

2 | '''

3 | This module generates ANSI character codes for printing colors to terminals.

4 | See: http://en.wikipedia.org/wiki/ANSI_escape_code

5 | '''

6 |

7 | CSI = '\033['

8 | OSC = '\033]'

9 | BEL = '\a'

10 |

11 |

12 | def code_to_chars(code):

13 | return CSI + str(code) + 'm'

14 |

15 | def set_title(title):

16 | return OSC + '2;' + title + BEL

17 |

18 | def clear_screen(mode=2):

19 | return CSI + str(mode) + 'J'

20 |

21 | def clear_line(mode=2):

22 | return CSI + str(mode) + 'K'

23 |

24 |

25 | class AnsiCodes(object):

26 | def __init__(self):

27 | # the subclasses declare class attributes which are numbers.

28 | # Upon instantiation we define instance attributes, which are the same

29 | # as the class attributes but wrapped with the ANSI escape sequence

30 | for name in dir(self):

31 | if not name.startswith('_'):

32 | value = getattr(self, name)

33 | setattr(self, name, code_to_chars(value))

34 |

35 |

36 | class AnsiCursor(object):

37 | def UP(self, n=1):

38 | return CSI + str(n) + 'A'

39 | def DOWN(self, n=1):

40 | return CSI + str(n) + 'B'

41 | def FORWARD(self, n=1):

42 | return CSI + str(n) + 'C'

43 | def BACK(self, n=1):

44 | return CSI + str(n) + 'D'

45 | def POS(self, x=1, y=1):

46 | return CSI + str(y) + ';' + str(x) + 'H'

47 |

48 |

49 | class AnsiFore(AnsiCodes):

50 | BLACK = 30

51 | RED = 31

52 | GREEN = 32

53 | YELLOW = 33

54 | BLUE = 34

55 | MAGENTA = 35

56 | CYAN = 36

57 | WHITE = 37

58 | RESET = 39

59 |

60 | # These are fairly well supported, but not part of the standard.

61 | LIGHTBLACK_EX = 90

62 | LIGHTRED_EX = 91

63 | LIGHTGREEN_EX = 92

64 | LIGHTYELLOW_EX = 93

65 | LIGHTBLUE_EX = 94

66 | LIGHTMAGENTA_EX = 95

67 | LIGHTCYAN_EX = 96

68 | LIGHTWHITE_EX = 97

69 |

70 |

71 | class AnsiBack(AnsiCodes):

72 | BLACK = 40

73 | RED = 41

74 | GREEN = 42

75 | YELLOW = 43

76 | BLUE = 44

77 | MAGENTA = 45

78 | CYAN = 46

79 | WHITE = 47

80 | RESET = 49

81 |

82 | # These are fairly well supported, but not part of the standard.

83 | LIGHTBLACK_EX = 100

84 | LIGHTRED_EX = 101

85 | LIGHTGREEN_EX = 102

86 | LIGHTYELLOW_EX = 103

87 | LIGHTBLUE_EX = 104

88 | LIGHTMAGENTA_EX = 105

89 | LIGHTCYAN_EX = 106

90 | LIGHTWHITE_EX = 107

91 |

92 |

93 | class AnsiStyle(AnsiCodes):

94 | BRIGHT = 1

95 | DIM = 2

96 | NORMAL = 22

97 | RESET_ALL = 0

98 |

99 | Fore = AnsiFore()

100 | Back = AnsiBack()

101 | Style = AnsiStyle()

102 | Cursor = AnsiCursor()

103 |

--------------------------------------------------------------------------------
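In `ansi.py` above, each color is a number that `code_to_chars` wraps as `CSI + code + 'm'`. The short sketch below shows how such sequences combine around a piece of text. It redefines `CSI` and `code_to_chars` locally so it runs on its own; `colorize` is a hypothetical helper and not part of colorama.

```python
CSI = "\033["  # Control Sequence Introducer, same value as in ansi.py


def code_to_chars(code: int) -> str:
    """Build a Select Graphic Rendition sequence for a numeric code."""
    return CSI + str(code) + "m"


def colorize(text: str, fore_code: int) -> str:
    """Wrap text in a foreground color, then reset all attributes."""
    return code_to_chars(fore_code) + text + code_to_chars(0)


# 31 is AnsiFore.RED and 0 is AnsiStyle.RESET_ALL in the listing above.
red_error = colorize("Error", 31)
```

Printing `red_error` on a terminal that understands ANSI escapes shows the word in red and leaves later output unstyled.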

/opengpt/models/image/hotpot/styles.yml:

--------------------------------------------------------------------------------

1 | ---

2 | 3D Black White: '40'

3 | 3D General 1: '41'

4 | 3D General 2: '43'

5 | 3D General 3: '44'

6 | 3D Minecraft 1: '46'

7 | 3D Portrait 1: '100'

8 | 3D Print 1: '42'

9 | 3D Roblox 1: '47'

10 | 3D Room 1: '94'

11 | 3D Voxel 1: '45'

12 | Acrylic Art: '17'

13 | Animation 1: '60'

14 | Animation 2: '61'

15 | Animation 3: '70'

16 | Animation 4: '79'

17 | Anime 1: '29'

18 | Anime Animal 1: '106'

19 | Anime Berserk: '31'

20 | Anime Black White: '30'

21 | Anime Korean 1: '32'

22 | Architecture General 1: '63'

23 | Architecture General 2: '75'

24 | Architecture Interior 1: '62'

25 | Architecture Interior 2: '74'

26 | Cartoon 1: '72'

27 | Charcoal 1: '54'

28 | Charcoal 2: '55'

29 | Charcoal 3: '56'

30 | Comic Book 1: '10'

31 | Comic Book 2: '11'

32 | Comic Book 3: '12'

33 | Comic Book 4: '73'

34 | Comic Book 5: '76'

35 | Concept Art 2: '71'

36 | Concept Art 3: '77'

37 | Concept Art 4: '84'

38 | Concept Art 5: '128'

39 | Concept Art 6: '126'

40 | Doom 1: '13'

41 | Doom 2: '14'

42 | Fantasy 1: '26'

43 | Fantasy 2: '27'

44 | Fantasy 3: '28'

45 | Fashion 1: '114'

46 | Gothic: '59'

47 | Graffiti: '18'

48 | Hotpot Art 1: '19'

49 | Hotpot Art 2: '20'

50 | Hotpot Art 3: '21'

51 | Hotpot Art 5: '22'

52 | Hotpot Art 6: '23'

53 | Hotpot Art 8: '139'

54 | Hotpot Art 9: '140'

55 | Icon 3D 1: '121'

56 | Icon Black White: '6'

57 | Icon Black White 2: '120'

58 | Icon Cute 1: '122'

59 | Icon Flat: '7'

60 | Icon Minimal 1: '119'

61 | Icon Sticker: '8'

62 | Illustration Art 1: '103'

63 | Illustration Art 2: '104'

64 | Illustration Art 3: '130'

65 | Illustration Flat: '69'

66 | Illustration General 1: '53'

67 | Illustration General 2: '123'

68 | Illustration General 3: '117'

69 | Illustration General 4: '118'

70 | Illustration General 5: '127'

71 | Illustration Smooth: '82'

72 | Isometric 1: '124'

73 | Isometric 2: '125'

74 | Japanese Art: '16'

75 | Line Art: '58'

76 | Line Art 2: '102'

77 | Logo Clean 1: '67'

78 | Logo Detailed 1: '65'

79 | Logo Draft 1: '66'

80 | Logo Hipster 1: '68'

81 | Logo Illustration 1: '116'

82 | Logo Sticker 1: '87'

83 | Low Poly 1: '96'

84 | Low Poly 2: '97'

85 | Oil Painting General 1: '39'

86 | Painting Art 1: '105'

87 | Painting Claude Monet 1: '89'

88 | Painting Huang Gongwang 1: '88'

89 | Painting Pablo Picasso 1: '90'

90 | Painting Paul Cezanne 1: '91'

91 | Painting Salvador Dali 1: '92'

92 | Painting Vincent Van Gogh 1: '93'

93 | Photo Food 1: '52'

94 | Photo General 1: '49'

95 | Photo Portrait 1: '50'

96 | Photo Portrait 2: '111'

97 | Photo Portrait 3: '112'

98 | Photo Product 1: '51'

99 | Photo Volumetric Lighting 1: '48'

100 | Pixel Art: '24'

101 | Pop Art: '81'

102 | Portrait 1: '33'

103 | Portrait 10: '138'

104 | Portrait 2: '34'

105 | Portrait 3: '35'

106 | Portrait 4: '135'

107 | Portrait 5: '132'

108 | Portrait 6: '131'

109 | Portrait 8: '136'

110 | Portrait 9: '137'

111 | Portrait Anime 1: '107'

112 | Portrait Anime 2: '108'

113 | Portrait Anime 3: '109'

114 | Portrait Anime 4: '110'

115 | Portrait Anime 5: '134'

116 | Portrait Concept Art 1: '141'

117 | Portrait Figurine 1: '95'

118 | Portrait Game 6: '133'

119 | Portrait Game 1: '83'

120 | Portrait Game 2: '98'

121 | Portrait Game 3: '99'

122 | Portrait Game 4: '113'

123 | Portrait Game 5: '129'

124 | Portrait Gothic: '38'

125 | Portrait Gothic 2: '115'

126 | Portrait Marble: '37'

127 | Portrait Mugshot: '36'

128 | Product Concept Art 1: '101'

129 | Retro Art: '80'

130 | Sci-fi 1: '64'

131 | Sci-fi 2: '85'

132 | Sci-fi 3: '86'

133 | Sculpture General 1: '25'

134 | Sketch General 1: '1'

135 | Sketch General 2: '2'

136 | Sketch General 3: '3'

137 | Sketch Scribble Black White 1: '4'

138 | Sketch Scribble Color 1: '5'

139 | Stained Glass 1: '78'

140 | Steampunk: '57'

141 | Sticker: '9'

142 | Watercolor General 1: '15'

--------------------------------------------------------------------------------

/.gitignore:

--------------------------------------------------------------------------------

1 | # Byte-compiled / optimized / DLL files

2 | __pycache__/

3 | *.py[cod]

4 | *$py.class

5 |

6 | # C extensions

7 | *.so

8 |

9 | # Distribution / packaging

10 | .Python

11 | build/

12 | develop-eggs/

13 | dist/

14 | downloads/

15 | eggs/

16 | .eggs/

17 | lib/

18 | lib64/

19 | parts/

20 | sdist/

21 | var/

22 | wheels/

23 | share/python-wheels/

24 | *.egg-info/

25 | .installed.cfg

26 | *.egg

27 | MANIFEST

28 |

29 | # PyInstaller

30 | # Usually these files are written by a python script from a template

31 | # before PyInstaller builds the exe, so as to inject date/other infos into it.

32 | *.manifest

33 | *.spec

34 |

35 | # Installer logs

36 | pip-log.txt

37 | pip-delete-this-directory.txt

38 |

39 | # Unit test / coverage reports

40 | htmlcov/

41 | .tox/

42 | .nox/

43 | .coverage

44 | .coverage.*

45 | .cache

46 | nosetests.xml

47 | coverage.xml

48 | *.cover

49 | *.py,cover

50 | .hypothesis/

51 | .pytest_cache/

52 | cover/

53 |

54 | # Translations

55 | *.mo

56 | *.pot

57 |

58 | # Django stuff:

59 | *.log

60 | local_settings.py

61 | db.sqlite3

62 | db.sqlite3-journal

63 |

64 | # Flask stuff:

65 | instance/

66 | .webassets-cache

67 |

68 | # Scrapy stuff:

69 | .scrapy

70 |

71 | # Sphinx documentation

72 | docs/_build/

73 |

74 | # PyBuilder

75 | .pybuilder/

76 | target/

77 |

78 | # Jupyter Notebook

79 | .ipynb_checkpoints

80 |

81 | # IPython

82 | profile_default/

83 | ipython_config.py

84 |

85 | # pyenv

86 | # For a library or package, you might want to ignore these files since the code is

87 | # intended to run in multiple environments; otherwise, check them in:

88 | # .python-version

89 |

90 | # pipenv

91 | # According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

92 | # However, in case of collaboration, if having platform-specific dependencies or dependencies

93 | # having no cross-platform support, pipenv may install dependencies that don't work, or not

94 | # install all needed dependencies.

95 | #Pipfile.lock

96 |

97 | # poetry

98 | # Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

99 | # This is especially recommended for binary packages to ensure reproducibility, and is more

100 | # commonly ignored for libraries.

101 | # https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

102 | #poetry.lock

103 |

104 | # pdm

105 | # Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

106 | #pdm.lock

107 | # pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

108 | # in version control.

109 | # https://pdm.fming.dev/#use-with-ide

110 | .pdm.toml

111 |

112 | # PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

113 | __pypackages__/

114 |

115 | # Celery stuff

116 | celerybeat-schedule

117 | celerybeat.pid

118 |

119 | # SageMath parsed files

120 | *.sage.py

121 |

122 | # Environments

123 | .env

124 | .venv

125 | env/

126 | venv/

127 | ENV/

128 | env.bak/

129 | venv.bak/

130 |

131 | # Spyder project settings

132 | .spyderproject

133 | .spyproject

134 |

135 | # Rope project settings

136 | .ropeproject

137 |

138 | # mkdocs documentation

139 | /site

140 |

141 | # mypy

142 | .mypy_cache/

143 | .dmypy.json

144 | dmypy.json

145 |

146 | # Pyre type checker

147 | .pyre/

148 |

149 | # pytype static type analyzer

150 | .pytype/

151 |

152 | # Cython debug symbols

153 | cython_debug/

154 |

155 | # PyCharm

156 | # JetBrains specific template is maintained in a separate JetBrains.gitignore that can

157 | # be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

158 | # and can be added to the global gitignore or merged into this file. For a more nuclear

159 | # option (not recommended) you can uncomment the following to ignore the entire idea folder.

160 | #.idea/

161 |

--------------------------------------------------------------------------------

/opengpt/__init__.py:

--------------------------------------------------------------------------------

1 | from typing import Optional, Union, Dict, List, Text

2 | from .libraries.colorama import init, Fore, Style

3 | import importlib

4 | import yaml

5 | import sys

6 | import inspect

7 | import os

8 |

9 | init()

10 |

11 | class OpenGPTError(Exception):

12 | @staticmethod

13 | def Print(context: Text, warn: Optional[bool] = False) -> None:

14 | if warn:

15 | print(Fore.YELLOW + "Warning: " + context + Style.RESET_ALL)

16 | else:

17 | print(Fore.RED + "Error: " + context + Style.RESET_ALL)

18 | sys.exit(1)

19 |

20 | class OpenGPT:

21 | @classmethod

22 | def __init__(self: type, provider: Text, type: Optional[Text] = "completion", options: Optional[Union[Dict[Text, Text], None]] = None) -> None:

23 | self.__DIR: Text = os.path.dirname(os.path.abspath(__file__))

24 | self.__LoadModels()

25 | self.__TYPE: Text = type

26 | self.__OPTIONS: Union[Dict[Text, Text], None] = options

27 | self.__PROVIDER: Text = provider

28 | self.__Verifications()

29 | self.__MODULE: module = importlib.import_module(f"opengpt.models.{self.__TYPE}.{self.__PROVIDER}.model")

30 | self.__MODEL_CLASS: type = getattr(self.__MODULE, "Model")

31 | self.__model: type = None

32 | self.__InitializeModelClass()

33 |

34 | @classmethod

35 | def __InitializeModelClass(self: type) -> None:

36 | if not self.__OPTIONS is None:

37 | args: List[Text] = inspect.signature(self.__MODEL_CLASS.__init__)

38 | reqArgs: List[Text] = [

39 | param.name

40 | for param in args.parameters.values()

41 | if param.default == inspect.Parameter.empty and param.name != "self"

42 | ]

43 | missingArgs: List[Text] = []

44 |

45 | for arg in reqArgs:

46 | if arg not in self.__OPTIONS:

47 | missingArgs.append(arg)

48 |

49 | if len(missingArgs) > 0:

50 | OpenGPTError.Print(f"Missing required parameter(s): {', '.join(missingArgs)}")

51 |

52 | self.__model = self.__MODEL_CLASS(**self.__OPTIONS)

53 | else:

54 | self.__model = self.__MODEL_CLASS()

55 |

56 | @classmethod

57 | def __LoadModels(self: type) -> None:

58 | self.__DATA: Dict[Text, Text] = yaml.safe_load(open(self.__DIR + "/config.yml", "r").read())

59 |

60 | @classmethod

61 | def __Verifications(self: type) -> None:

62 | exists: bool = False

63 |

64 | if self.__TYPE not in self.__DATA["models"]:

65 | OpenGPTError.Print(f"The type \"{self.__TYPE}\" was not found. Try: {', '.join(self.__DATA['models'])}")

66 |

67 | if self.__PROVIDER not in self.__DATA["models"][self.__TYPE]:

68 | for type in self.__DATA["models"]:

69 | for model in self.__DATA["models"][type]:

70 | if model == self.__PROVIDER:

71 | rest: Text = f"Changing to \"{type}\"."

72 | OpenGPTError.Print(context=f"The provider \"{self.__PROVIDER}\" is not of type \"{self.__TYPE}\". " + rest, warn=True)

73 | self.__TYPE = type

74 | exists = True

75 | else:

76 | exists = True

77 |

78 | if not exists:

79 | OpenGPTError.Print(f"The provider \"{self.__PROVIDER}\" does not exist.")

80 |

81 | incompletRest: Text = ''

82 | rest: Text = ''

83 |

84 | if self.__DATA["models"][self.__TYPE][self.__PROVIDER]["state"] == "incomplete":

85 | incompletRest = f"The provider \"{self.__PROVIDER}\" is incomplete. "

86 | if len(self.__DATA["models"][self.__TYPE][self.__PROVIDER]["bugs"]) > 0:

87 | rest = "It has some bugs that have not yet been fixed."

88 |

89 | if len(rest) > 0 or len(incompletRest) > 0:

90 | OpenGPTError.Print(context=incompletRest + rest + " It is not recommended for use.", warn=True)

91 |

92 | @classmethod

93 | def __getattr__(self: type, name: Text) -> None:

94 | if hasattr(self.__model, name):

95 | attr: type = getattr(self.__model, name)

96 | if callable(attr):

97 | return lambda *args, **kwargs: attr(*args, **kwargs)

98 | return attr

99 | raise AttributeError(f"\"OpenGPT\" object has no attribute \"{name}\"")

100 |

--------------------------------------------------------------------------------
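`__InitializeModelClass` above uses `inspect.signature` to find constructor parameters without defaults and compares them with the supplied options. A minimal standalone sketch of that check follows. `missing_required_args` and `DemoModel` are hypothetical names for illustration; skipping `self` and the `*args`/`**kwargs` parameters is an assumption about the intended behavior.

```python
import inspect
from typing import Any, Dict, List


def missing_required_args(cls: type, options: Dict[str, Any]) -> List[str]:
    """List constructor parameters that have no default and are absent from options."""
    params = inspect.signature(cls.__init__).parameters.values()
    required = [
        p.name
        for p in params
        if p.default is inspect.Parameter.empty
        and p.name != "self"  # supplied by Python, never by the caller
        and p.kind not in (p.VAR_POSITIONAL, p.VAR_KEYWORD)
    ]
    return [name for name in required if name not in options]


class DemoModel:
    """Stand-in for a provider Model class."""

    def __init__(self, token: str, model: str = "gpt-4") -> None:
        self.token = token
        self.model = model


missing = missing_required_args(DemoModel, {"model": "gpt-4"})
```

Here `missing` names `token`, the only parameter without a default that the options dictionary does not supply.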

/opengpt/libraries/colorama/initialise.py:

--------------------------------------------------------------------------------

1 | # Copyright Jonathan Hartley 2013. BSD 3-Clause license, see LICENSE file.

2 | import atexit

3 | import contextlib

4 | import sys

5 |

6 | from .ansitowin32 import AnsiToWin32

7 |

8 |

9 | def _wipe_internal_state_for_tests():

10 | global orig_stdout, orig_stderr

11 | orig_stdout = None

12 | orig_stderr = None

13 |

14 | global wrapped_stdout, wrapped_stderr

15 | wrapped_stdout = None

16 | wrapped_stderr = None

17 |

18 | global atexit_done

19 | atexit_done = False

20 |

21 | global fixed_windows_console

22 | fixed_windows_console = False

23 |

24 | try:

25 | # no-op if it wasn't registered

26 | atexit.unregister(reset_all)

27 | except AttributeError:

28 | # python 2: no atexit.unregister. Oh well, we did our best.

29 | pass

30 |

31 |

32 | def reset_all():

33 | if AnsiToWin32 is not None: # Issue #74: objects might become None at exit

34 | AnsiToWin32(orig_stdout).reset_all()

35 |

36 |

37 | def init(autoreset=False, convert=None, strip=None, wrap=True):

38 |

39 | if not wrap and any([autoreset, convert, strip]):

40 | raise ValueError('wrap=False conflicts with any other arg=True')

41 |

42 | global wrapped_stdout, wrapped_stderr

43 | global orig_stdout, orig_stderr

44 |

45 | orig_stdout = sys.stdout

46 | orig_stderr = sys.stderr

47 |

48 | if sys.stdout is None:

49 | wrapped_stdout = None

50 | else:

51 | sys.stdout = wrapped_stdout = \

52 | wrap_stream(orig_stdout, convert, strip, autoreset, wrap)

53 | if sys.stderr is None:

54 | wrapped_stderr = None

55 | else:

56 | sys.stderr = wrapped_stderr = \

57 | wrap_stream(orig_stderr, convert, strip, autoreset, wrap)

58 |

59 | global atexit_done

60 | if not atexit_done:

61 | atexit.register(reset_all)

62 | atexit_done = True

63 |

64 |

65 | def deinit():

66 | if orig_stdout is not None:

67 | sys.stdout = orig_stdout

68 | if orig_stderr is not None:

69 | sys.stderr = orig_stderr

70 |

71 |

72 | def just_fix_windows_console():

73 | global fixed_windows_console

74 |

75 | if sys.platform != "win32":

76 | return

77 | if fixed_windows_console:

78 | return

79 | if wrapped_stdout is not None or wrapped_stderr is not None:

80 | # Someone already ran init() and it did stuff, so we won't second-guess them

81 | return

82 |

83 | # On newer versions of Windows, AnsiToWin32.__init__ will implicitly enable the

84 | # native ANSI support in the console as a side-effect. We only need to actually

85 | # replace sys.stdout/stderr if we're in the old-style conversion mode.

86 | new_stdout = AnsiToWin32(sys.stdout, convert=None, strip=None, autoreset=False)

87 | if new_stdout.convert:

88 | sys.stdout = new_stdout

89 | new_stderr = AnsiToWin32(sys.stderr, convert=None, strip=None, autoreset=False)

90 | if new_stderr.convert:

91 | sys.stderr = new_stderr

92 |

93 | fixed_windows_console = True

94 |

95 | @contextlib.contextmanager

96 | def colorama_text(*args, **kwargs):

97 | init(*args, **kwargs)

98 | try:

99 | yield

100 | finally:

101 | deinit()

102 |

103 |

104 | def reinit():

105 | if wrapped_stdout is not None:

106 | sys.stdout = wrapped_stdout

107 | if wrapped_stderr is not None:

108 | sys.stderr = wrapped_stderr

109 |

110 |

111 | def wrap_stream(stream, convert, strip, autoreset, wrap):

112 | if wrap:

113 | wrapper = AnsiToWin32(stream,

114 | convert=convert, strip=strip, autoreset=autoreset)

115 | if wrapper.should_wrap():

116 | stream = wrapper.stream

117 | return stream

118 |

119 |

120 | # Use this for initial setup as well, to reduce code duplication

121 | _wipe_internal_state_for_tests()

122 |

--------------------------------------------------------------------------------

/opengpt/models/completion/forefront/tools/system/email_creation.py:

--------------------------------------------------------------------------------

1 | from typing import Dict

2 | from ......libraries.tempmail import TempMail

3 | from ......libraries.colorama import init, Fore, Style

4 | from ..typing.response import EmailResponse

5 | import re

6 | import json

7 | import logging

8 | import fake_useragent

9 | import tls_client

10 |

11 | init()

12 |

13 |

14 | class Email:

15 | @classmethod

16 | def __init__(self: type) -> None:

17 | self.__SETUP_LOGGER()

18 | self.__session: tls_client.Session = tls_client.Session(

19 | client_identifier="chrome_110"

20 | )

21 |

22 | @classmethod

23 | def __SETUP_LOGGER(self: type) -> None:

24 | self.__logger: logging.getLogger = logging.getLogger(__name__)

25 | self.__logger.setLevel(logging.DEBUG)

26 | console_handler: logging.StreamHandler = logging.StreamHandler()

27 | console_handler.setLevel(logging.DEBUG)

28 | formatter: logging.Formatter = logging.Formatter(

29 | "Account Creation - %(levelname)s - %(message)s"

30 | )

31 | console_handler.setFormatter(formatter)

32 |

33 | self.__logger.addHandler(console_handler)

34 |

35 | @classmethod

36 | def __AccountState(self: object, output: str, field: str) -> bool:

37 | if field not in output:

38 | return False

39 | return True

40 |

41 | @classmethod

42 | def CreateAccount(self: object) -> str:

43 | Mail = TempMail()

44 | MailAddress = Mail.GetAddress

45 |

46 | self.__session.headers = {

47 | "Origin": "https://accounts.forefront.ai",

48 | "User-Agent": fake_useragent.UserAgent().random,

49 | }

50 |

51 | self.__logger.debug(Fore.CYAN + "Checking URL" + Style.RESET_ALL)

52 |

53 | output = self.__session.post(

54 | "https://clerk.forefront.ai/v1/client/sign_ups?_clerk_js_version=4.38.4",

55 | data={"email_address": MailAddress},

56 | )

57 |

58 | if not self.__AccountState(str(output.text), "id"):

59 | self.__logger.error(

60 | Fore.RED + "Failed to create account." + Style.RESET_ALL

61 | )

62 | return "Failed"

63 |

64 | trace_id = output.json()["response"]["id"]

65 |

66 | output = self.__session.post(

67 | f"https://clerk.forefront.ai/v1/client/sign_ups/{trace_id}/prepare_verification?_clerk_js_version=4.38.4",

68 | data={

69 | "strategy": "email_link",

70 | "redirect_url": "https://accounts.forefront.ai/sign-up/verify",

71 | },

72 | )

73 |

74 | if not self.__AccountState(output.text, "sign_up_attempt"):

75 | self.__logger.error(

76 | Fore.RED + "Failed to create account." + Style.RESET_ALL

77 | )

78 | return "Failed"

79 |

80 | self.__logger.debug(Fore.CYAN + "Verifying account" + Style.RESET_ALL)

81 |

82 | while True:

83 | messages: Mail.GetMessages = Mail.GetMessages()

84 |

85 | if len(messages) > 0:

86 | message: Dict[str, str] = Mail.GetMessage(messages[0]["_id"])

87 | verification_url = re.findall(

88 | r"https:\/\/clerk\.forefront\.ai\/v1\/verify\?token=\w.+",

89 | message["bodyHtml"],

90 | )[0]

91 | if verification_url:

92 | break

93 |

94 | r = self.__session.get(verification_url.split('"')[0])

95 | __client: str = r.cookies["__client"]

96 |

97 | output = self.__session.get(

98 | "https://clerk.forefront.ai/v1/client?_clerk_js_version=4.38.4"

99 | )

100 | token: str = output.json()["response"]["sessions"][0]["last_active_token"][

101 | "jwt"

102 | ]

103 | sessionID: str = output.json()["response"]["last_active_session_id"]

104 |

105 | self.__logger.debug(Fore.GREEN + "Created account!" + Style.RESET_ALL)

106 |

107 | return EmailResponse(**{"sessionID": sessionID, "client": __client})

108 |

--------------------------------------------------------------------------------

/opengpt/models/completion/forefront/attributes/conversation.py:

--------------------------------------------------------------------------------

1 | from typing import List, Dict

2 | from pydantic import BaseModel

3 | from .....libraries.colorama import init, Fore, Style

4 | import json

5 | import uuid

6 |

7 | class Conversation():

8 | @classmethod

9 | def __init__(self: type, model: type) -> None:

10 | self.__model: type = model

11 |

12 | @classmethod

13 | def GetList(self: type) -> List[Dict[str, str]]:

14 | self.__model._UpdateJWTToken()

15 | PAYLOAD: Dict[str, str] = {

16 | "0":{

17 | "json":{

18 | "workspaceId": self.__model._WORKSPACEID

19 | }

20 | },

21 | "1":{

22 | "json":{

23 | "workspaceId": self.__model._WORKSPACEID

24 | }

25 | }

26 | }

27 |

28 | return self.__model._session.get(f"{self.__model._API}/chat.loadTree,personas.listPersonas?batch=1&input={json.dumps(PAYLOAD)}", headers=self.__model._HEADERS).json()[0]["result"]["data"]["json"][0]["data"]

29 |

30 |

31 | @classmethod

32 | def Rename(self: type, id: str, name: str) -> None:

33 | conversations: List[Dict[str, str]] = self.GetList()

34 | PAYLOAD: Dict[str, str] = {

35 | "0": {

36 | "json": {

37 | "id": id,

38 | "name": name,

39 | "workspaceId": self.__model._WORKSPACEID

40 | }

41 | }

42 | }

43 |

44 | for cv in conversations:

45 | if id == cv["id"]:

46 | DATA_: object = self.__model._session.post(f"{self.__model._API}/chat.renameChat?batch=1", json=PAYLOAD, headers=self.__model._HEADERS)

47 |

48 | if DATA_.status_code == 200:

49 | self.__model._logger.debug(f"Renamed conversation ({Fore.CYAN}{id}{Style.RESET_ALL}) to ({Fore.MAGENTA}{name}{Style.RESET_ALL}).")

50 | else:

51 | self.__model._logger.error(f"{Fore.RED}Error renaming conversation {id}{Style.RESET_ALL}")

52 | return None

53 |

54 | @classmethod

55 | def GenerateName(self: type, message: str) -> str:

56 | __PAYLOAD: Dict[str, str] = {

57 | "0": {

58 | "json": {

59 | "messages": [

60 | {

61 | "id": "",

62 | "content": message,

63 | "parentId": str(uuid.uuid4()),

64 | "role": "user",

65 | "createdAt": "",

66 | "model": self.__model._model

67 | }

68 | ]

69 | },

70 | "meta": {

71 | "values": {

72 | "messages.0.createdAt": ["Date"]

73 | }

74 | }

75 | }

76 | }

77 | Suggestion: Dict[str, str] = self.__model._session.post(f"{self.__model._API}/chat.generateName?batch=1",

78 | headers=self.__model._HEADERS, json=__PAYLOAD).json()

79 | return Suggestion[0]["result"]["data"]["json"]["title"]

80 |

81 |

82 | @classmethod

83 | def Remove(self: type, id: str) -> None:

84 | conversations: List[Dict[str, str]] = self.GetList()

85 | PAYLOAD: Dict[str, str] = {

86 | "0": {

87 | "json": {

88 | "id": id,

89 | "workspaceId": self.__model._WORKSPACEID

90 | }

91 | }

92 | }

93 |

94 | for cv in conversations:

95 | if id == cv["id"]:

96 | DATA_: object = self.__model._session.post(f"{self.__model._API}/chat.removeChat?batch=1",

97 | json=PAYLOAD, headers=self.__model._HEADERS)

98 |

99 | if DATA_.status_code == 200:

100 | self.__model._logger.debug(f"Deleted conversation ({Fore.CYAN}{id}{Style.RESET_ALL}).")

101 | else:

102 | self.__model._logger.error(f"{Fore.RED}Error deleting conversation {id}{Style.RESET_ALL}")

103 | return None

104 |

105 | @classmethod

106 | def GetMessages(self: type, id: str) -> List[Dict[str, str]]:

107 | __PAYLOAD: Dict[str, str] = {

108 | "0": {

109 | "json": {

110 | "chatId": id,

111 | "workspaceId": self.__model._WORKSPACEID

112 | }

113 | }

114 | }

115 | DATA_: Dict[str, str] = self.__model._session.post(f"{self.__model._API}/chat.getMessagesByChatId?batch=1",

116 | headers=self.__model._HEADERS, json=__PAYLOAD)

117 |

118 | if DATA_.status_code != 200:

119 | self.__model._logger.error(f"{Fore.RED}Error getting messages for conversation ({id}){Style.RESET_ALL}")

120 | return []

121 |

122 | return DATA_.json()[0]["result"]["data"]["json"]["messages"]

123 |

124 | @classmethod

125 | def ClearAll(self: type) -> None:

126 | conversations: List[Dict[str, str]] = self.GetList()

127 | ct: int = 0

128 |

129 | for cv in conversations:

130 | if cv["type"] == "chat":

131 | self.Remove(cv["id"])

132 | ct += 1

133 |

134 | print(f"Deleted ({Fore.CYAN}{ct}{Style.RESET_ALL}) conversation(s).")

--------------------------------------------------------------------------------

/opengpt/models/image/hotpot/model.py:

--------------------------------------------------------------------------------

1 | from typing import Dict, Text, Optional, Generator, Any, Tuple

2 | from .tools.system.id import UniqueID

3 | from .tools.typing.response import ModelResponse

4 | from ....libraries.colorama import init, Fore, Style

5 | import yaml

6 | import requests

7 | import fake_useragent

8 | import logging

9 | import os

10 | import sys

11 | init()

12 |

13 | class OpenGPTError(Exception):

14 | @staticmethod

15 | def Print(context: Text, warn: Optional[bool] = False) -> None:

16 | if warn:

17 | print(Fore.YELLOW + "Warning: " + context + Style.RESET_ALL)

18 | else:

19 | print(Fore.RED + "Error: " + context + Style.RESET_ALL)

20 | sys.exit(1)

21 |

22 | class Model:

23 | @classmethod

24 | def __init__(self: type, style: Optional[Text] = "Hotpot Art 9") -> None:

25 | self._SETUP_LOGGER()

26 | self.__DIR: Text = os.path.dirname(os.path.abspath(__file__))

27 | self.__LoadStyles()

28 | self.__session: requests.Session = requests.Session()

29 | self.__UNIQUE_ID: str = UniqueID(16)

30 | self.STYLE: Text = style

31 | self.__STYLE_ID = self.__GetStyleID(self.STYLE)

32 | self.__HEADERS: Dict[str, str] = {

33 | "Accept": "*/*",

34 | "Accept-Language": "pt-BR,pt;q=0.9,en-US;q=0.8,en;q=0.7",

35 | "Content-Type": f"multipart/form-data; boundary=----WebKitFormBoundary{self.__UNIQUE_ID}",

36 | "Authorization": "hotpot-temp9n88MmVw8uaDzmoBq",

37 | "Host": "api.hotpot.ai",

38 | "Origin": "https://hotpot.ai",

39 | "Referer": "https://hotpot.ai/",

40 | "Sec-Ch-Ua": "\"Chromium\";v=\"112\", \"Google Chrome\";v=\"112\", \"Not:A-Brand\";v=\"99\"",

41 | "Sec-Ch-Ua-mobile": "?0",

42 | "Sec-Ch-Ua-platform": "\"Windows\"",

43 | "Sec-Fetch-Dest": "empty",

44 | "Sec-Fetch-Mode": "cors",

45 | "Sec-Fetch-Site": "same-site",

46 | "User-Agent": fake_useragent.UserAgent().random

47 | }

48 |

49 | @classmethod

50 | def _SETUP_LOGGER(self: type) -> None:

51 | self.__logger: logging.Logger = logging.getLogger(__name__)

52 | self.__logger.setLevel(logging.DEBUG)

53 | console_handler: logging.StreamHandler = logging.StreamHandler()

54 | console_handler.setLevel(logging.DEBUG)

55 | formatter: logging.Formatter = logging.Formatter("Model - %(levelname)s - %(message)s")

56 | console_handler.setFormatter(formatter)

57 |

58 | self.__logger.addHandler(console_handler)

59 |

60 | @classmethod

61 | def __GetStyleID(self: type, style: Text) -> int:

62 | if style in self.__DATA:

63 | return int(self.__DATA[style])

64 | else:

65 | OpenGPTError.Print(context=f"The style \"{style}\" was not found. Falling back to \"Hotpot Art 9\".", warn=True)

66 | self.STYLE = "Hotpot Art 9"

67 | return 140

68 |

69 | @classmethod

70 | def UpdateStyle(self: type, style: Text) -> None:

71 | self.STYLE = style

72 | self.__STYLE_ID = self.__GetStyleID(self.STYLE)

73 |

74 | @classmethod

75 | def __LoadStyles(self: type) -> None:

76 | self.__DATA: Dict[Text, Text] = yaml.safe_load(open(self.__DIR + "/styles.yml", "r").read())

77 |

78 | @classmethod