├── src
│   └── darktable_lut_generator
│       ├── __init__.py
│       ├── styles
│       │   ├── __init__.py
│       │   ├── raw_lens_correction.dtstyle
│       │   ├── image.dtstyle
│       │   └── raw.dtstyle
│       ├── tryout_highlights.py
│       ├── make_rgb_image.py
│       ├── main.py
│       └── estimate_lut.py
├── .idea
│   └── .gitignore
├── images_readme
│   ├── jpeg.jpg
│   ├── raw.jpg
│   ├── provia.jpg
│   └── samples.jpg
├── pyproject.toml
├── requirements.txt
├── MANIFEST.in
├── setup.py
├── README.md
└── LICENSE

/src/darktable_lut_generator/__init__.py:

--------------------------------------------------------------------------------


--------------------------------------------------------------------------------

/src/darktable_lut_generator/styles/__init__.py:

--------------------------------------------------------------------------------


--------------------------------------------------------------------------------

/.idea/.gitignore:

--------------------------------------------------------------------------------

# Default ignored files
/shelf/
/workspace.xml

--------------------------------------------------------------------------------

/images_readme/jpeg.jpg:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/wilecoyote2015/darktabe_lut_generator/HEAD/images_readme/jpeg.jpg

--------------------------------------------------------------------------------

/images_readme/raw.jpg:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/wilecoyote2015/darktabe_lut_generator/HEAD/images_readme/raw.jpg

--------------------------------------------------------------------------------

/pyproject.toml:

--------------------------------------------------------------------------------

[build-system]
requires = ["setuptools>=42"]
build-backend = "setuptools.build_meta"

--------------------------------------------------------------------------------

/images_readme/provia.jpg:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/wilecoyote2015/darktabe_lut_generator/HEAD/images_readme/provia.jpg

--------------------------------------------------------------------------------

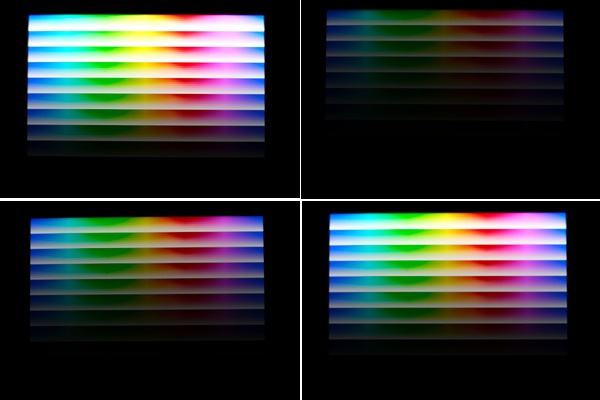

/images_readme/samples.jpg:

--------------------------------------------------------------------------------

https://raw.githubusercontent.com/wilecoyote2015/darktabe_lut_generator/HEAD/images_readme/samples.jpg

--------------------------------------------------------------------------------

/requirements.txt:

--------------------------------------------------------------------------------

numpy
scipy
scikit-learn
opencv-python
tqdm
pandas
plotly
colour-science
statsmodels

--------------------------------------------------------------------------------

/MANIFEST.in:

--------------------------------------------------------------------------------

include src/darktable_lut_generator/styles/image.dtstyle
include src/darktable_lut_generator/styles/raw.dtstyle
include src/darktable_lut_generator/styles/raw_lens_correction.dtstyle

--------------------------------------------------------------------------------

/src/darktable_lut_generator/tryout_highlights.py:

--------------------------------------------------------------------------------

import PyOpenColorIO as OCIO

# Load an existing configuration from the environment.
# The resulting configuration is read-only. If $OCIO is set, it will use that.
# Otherwise it will use an internal default.
config = OCIO.GetCurrentConfig()

# What color spaces exist?
colorSpaceNames = [cs.getName() for cs in config.getColorSpaces()]
print(colorSpaceNames)

# Given a string, can we parse a color space name from it?
inputString = 'myname_linear.exr'
colorSpaceName = config.parseColorSpaceFromString(inputString)
if colorSpaceName:
    print('Found color space', colorSpaceName)
else:
    print('Could not get color space from string', inputString)

# What is the name of scene-linear in the configuration?
colorSpace = config.getColorSpace(OCIO.ROLE_SCENE_LINEAR)
if colorSpace:
    print(colorSpace.getName())
else:
    print('The role of scene-linear is not defined in the configuration')

# For examples of how to actually perform the color transform math,
# see 'Python: Processor' docs.

# Create a new, empty, editable configuration
config = OCIO.Config()

# For additional examples of config manipulation, see
# https://github.com/imageworks/OpenColorIO-Configs/blob/master/nuke-default/make.py

35 |

--------------------------------------------------------------------------------

/setup.py:

--------------------------------------------------------------------------------

import setuptools

with open("README.md", "r", encoding="utf-8") as fh:
    long_description = fh.read()

setuptools.setup(
    name="darktable-lut-generator",
    version="0.1.2",
    author="Björn Sonnenschein",
    author_email="wilecoyote2015@gmail.com",
    description="Estimate a .cube 3D lookup table from camera images for the Darktable lut 3D module.",
    long_description=long_description,
    long_description_content_type="text/markdown",
    url="https://github.com/wilecoyote2015/darktabe_lut_generator",
    project_urls={
        "Bug Tracker": "https://github.com/wilecoyote2015/darktabe_lut_generator/issues",
    },
    classifiers=[
        "Programming Language :: Python :: 3",
        "License :: OSI Approved :: GNU Lesser General Public License v3 (LGPLv3)",
        "Operating System :: OS Independent",
    ],
    package_dir={"": "src"},
    packages=setuptools.find_packages(where="src"),
    python_requires=">=3.7",
    include_package_data=True,
    entry_points={
        'console_scripts': [
            'darktable_lut_generator=darktable_lut_generator.main:main',
            'darktable_lut_generate_pattern=darktable_lut_generator.make_rgb_image:main'
        ]
    },
    install_requires=[
        'numpy',
        'scipy',
        # The PyPI package "sklearn" is a deprecated placeholder; the real distribution is scikit-learn.
        'scikit-learn',
        'opencv-python',
        'tqdm',
        'plotly',
        'pandas',
    ]
)

--------------------------------------------------------------------------------

/src/darktable_lut_generator/styles/raw_lens_correction.dtstyle:

--------------------------------------------------------------------------------

1 |

2 | raw_lens_correctionrawprepare,0,invert,0,temperature,0,highlights,0,cacorrect,0,hotpixels,0,rawdenoise,0,demosaic,0,denoiseprofile,0,bilateral,0,rotatepixels,0,scalepixels,0,lens,0,cacorrectrgb,0,hazeremoval,0,ashift,0,flip,0,clipping,0,liquify,0,spots,0,retouch,0,exposure,0,mask_manager,0,tonemap,0,toneequal,0,crop,0,graduatednd,0,profile_gamma,0,equalizer,0,colorin,0,channelmixerrgb,0,diffuse,0,censorize,0,negadoctor,0,blurs,0,nlmeans,0,colorchecker,0,defringe,0,atrous,0,lowpass,0,highpass,0,sharpen,0,colortransfer,0,colormapping,0,channelmixer,0,basicadj,0,colorbalance,0,colorbalancergb,0,rgbcurve,0,rgblevels,0,basecurve,0,filmic,0,filmicrgb,0,lut3d,0,colisa,0,tonecurve,0,levels,0,shadhi,0,zonesystem,0,globaltonemap,0,relight,0,bilat,0,colorcorrection,0,colorcontrast,0,velvia,0,vibrance,0,colorzones,0,bloom,0,colorize,0,lowlight,0,monochrome,0,grain,0,soften,0,splittoning,0,vignette,0,colorreconstruct,0,colorout,0,clahe,0,finalscale,0,overexposed,0,rawoverexposed,0,dither,0,borders,0,watermark,0,gamma,0

3 |

--------------------------------------------------------------------------------

/src/darktable_lut_generator/styles/image.dtstyle:

--------------------------------------------------------------------------------

1 |

2 | imagerawprepare,0,invert,0,temperature,0,highlights,0,cacorrect,0,hotpixels,0,rawdenoise,0,demosaic,0,denoiseprofile,0,bilateral,0,rotatepixels,0,scalepixels,0,lens,0,cacorrectrgb,0,hazeremoval,0,ashift,0,flip,0,clipping,0,liquify,0,spots,0,retouch,0,exposure,0,mask_manager,0,tonemap,0,toneequal,0,crop,0,graduatednd,0,profile_gamma,0,equalizer,0,colorin,0,channelmixerrgb,0,diffuse,0,censorize,0,negadoctor,0,blurs,0,nlmeans,0,colorchecker,0,defringe,0,atrous,0,lowpass,0,highpass,0,sharpen,0,colortransfer,0,colormapping,0,channelmixer,0,basicadj,0,colorbalance,0,colorbalancergb,0,rgbcurve,0,rgblevels,0,basecurve,0,filmic,0,filmicrgb,0,lut3d,0,colisa,0,tonecurve,0,levels,0,shadhi,0,zonesystem,0,globaltonemap,0,relight,0,bilat,0,colorcorrection,0,colorcontrast,0,velvia,0,vibrance,0,colorzones,0,bloom,0,colorize,0,lowlight,0,monochrome,0,grain,0,soften,0,splittoning,0,vignette,0,colorreconstruct,0,colorout,0,clahe,0,finalscale,0,overexposed,0,rawoverexposed,0,dither,0,borders,0,watermark,0,gamma,0

3 |

--------------------------------------------------------------------------------

/src/darktable_lut_generator/make_rgb_image.py:

--------------------------------------------------------------------------------

import argparse

import cv2
import numpy as np


def main():
    # Entry point referenced by the darktable_lut_generate_pattern console script in setup.py.
    parser = argparse.ArgumentParser(
        # description='Generate .cube 3D LUT from jpg/raw sample pairs',
        usage='Generate a simple test pattern for out-of-camera style estimation. '
              'Display the generated pattern on a wide-gamut screen (an OLED smartphone '
              'with vivid color settings is fine).'
              ' Take approx. 5 photos of the screen with different exposure compensation values'
              ' using the RAW+JPEG setting. Those photos should provide a good input for the LUT estimation.'
              ' However, additional real-world sample images help, too.'
    )

    parser.add_argument(
        'file_output',
        type=str,
        help='Desired filepath to store output image (with extension).'
    )

    args = parser.parse_args()

    max_ = 2 ** 8 - 1

    width = 1800
    height = 1200
    step_constant = 15  # height in pixels of one saturation step

    n_luma_bands = 10

    n_px_segment = int(width / 6)

    ramp = np.linspace(0, max_, n_px_segment)

    # Profile of the first channel along the x-axis; the other two channels are
    # shifted copies, so that the band sweeps through all hues.
    ramp_r = np.concatenate(
        [
            np.full((n_px_segment,), max_, dtype=float),
            np.flip(ramp),
            np.full((n_px_segment * 2,), 0, dtype=float),
            ramp,
            np.full((n_px_segment,), max_, dtype=float)
        ],
        axis=0
    )

    ramp_g = np.roll(ramp_r, n_px_segment * 2)
    ramp_b = np.roll(ramp_g, n_px_segment * 2)
    band = np.stack([ramp_r, ramp_g, ramp_b], axis=1)

    result = np.zeros((height, width, 3))

    luma_band = np.zeros(
        (int(height / n_luma_bands), width, 3)
    )
    n_steps_saturation = int(luma_band.shape[0] / step_constant)

    # Within one luma band, blend the hue band towards white with decreasing saturation.
    for idx_step_saturation in range(n_steps_saturation):
        saturation = 1. - (idx_step_saturation / (n_steps_saturation - 1))
        band_saturated = (band - max_) * saturation + max_

        idx_band = step_constant * idx_step_saturation

        luma_band[idx_band:idx_band + step_constant] = np.tile(band_saturated[np.newaxis, ...], (step_constant, 1, 1))

    # Stack the luma bands vertically with decreasing brightness.
    for idx_luma_band in range(n_luma_bands):
        brightness_factor = 1. - (idx_luma_band / (n_luma_bands - 1))
        idx_start = idx_luma_band * luma_band.shape[0]
        result[idx_start: idx_start + luma_band.shape[0]] = luma_band * brightness_factor

    cv2.imwrite(args.file_output, result.astype(np.uint8))


if __name__ == '__main__':
    main()

72 |

--------------------------------------------------------------------------------

/src/darktable_lut_generator/styles/raw.dtstyle:

--------------------------------------------------------------------------------

1 |

2 | rawrawprepare,0,invert,0,temperature,0,highlights,0,cacorrect,0,hotpixels,0,rawdenoise,0,demosaic,0,denoiseprofile,0,bilateral,0,rotatepixels,0,scalepixels,0,lens,0,cacorrectrgb,0,hazeremoval,0,ashift,0,flip,0,clipping,0,liquify,0,spots,0,retouch,0,exposure,0,mask_manager,0,tonemap,0,toneequal,0,crop,0,graduatednd,0,profile_gamma,0,equalizer,0,colorin,0,channelmixerrgb,0,diffuse,0,censorize,0,negadoctor,0,blurs,0,nlmeans,0,colorchecker,0,defringe,0,atrous,0,lowpass,0,highpass,0,sharpen,0,colortransfer,0,colormapping,0,channelmixer,0,basicadj,0,colorbalance,0,colorbalancergb,0,rgbcurve,0,rgblevels,0,basecurve,0,filmic,0,filmicrgb,0,lut3d,0,colisa,0,tonecurve,0,levels,0,shadhi,0,zonesystem,0,globaltonemap,0,relight,0,bilat,0,colorcorrection,0,colorcontrast,0,velvia,0,vibrance,0,colorzones,0,bloom,0,colorize,0,lowlight,0,monochrome,0,grain,0,soften,0,splittoning,0,vignette,0,colorreconstruct,0,colorout,0,clahe,0,finalscale,0,overexposed,0,rawoverexposed,0,dither,0,borders,0,watermark,0,gamma,0

3 |

--------------------------------------------------------------------------------

/README.md:

--------------------------------------------------------------------------------

This package estimates a .cube 3D lookup table (LUT) for use with the Darktable lut 3D module.
It was designed to obtain 3D LUTs that replicate in-camera JPEG styles.
This is especially useful if one shoots large sets of RAW photos (e.g. for commissioned work), most of which should simply
resemble the standard out-of-camera (OOC) style when exported by darktable, while still allowing quick
corrections on selected images without losing the OOC style.
**If the default processing style is used, the resulting LUTs are intended for use without filmic/base curve etc. (set "auto-apply pixel workflow defaults" to "none").**

Below is an example using a LUT estimated to match the Provia film simulation on a Fujifilm X-T3.
First is the OOC JPEG, second is the RAW processed in Darktable with the LUT, and third is the RAW processed in Darktable
without any corrections:


# Installation

Python 3.7 or newer must be installed.
Install Darktable LUT Generator via pip:
```pip install darktable_lut_generator```

# Usage

Run:
```darktable_lut_generator [path to directory with images] [output .cube file]```
For help and further arguments, run
```darktable_lut_generator --help```


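The second argument is the path of the resulting `.cube` file. As an orientation for inspecting or post-processing such a file, the sketch below writes and parses a LUT in the common `.cube` text layout: optional `#` comment lines, an optional `TITLE`, a `LUT_3D_SIZE` line, and then size³ rows of `R G B` values with the red index varying fastest. `format_cube` and `parse_cube` are hypothetical helpers written for this sketch, not part of the package; the package's own writer may differ in details such as number precision (its `--title` and `--comment` options fill the `TITLE` field and the header comment, respectively).

```python
import numpy as np


def format_cube(lut, title=None, comment=None):
    """Return the text of a .cube file for a LUT of shape (size**3, 3).

    Rows must be ordered with the red index varying fastest, then green,
    then blue; values are RGB floats, usually in [0, 1].
    """
    lut = np.asarray(lut, dtype=float)
    size = round(lut.shape[0] ** (1 / 3))
    if lut.ndim != 2 or lut.shape[1] != 3 or size ** 3 != lut.shape[0]:
        raise ValueError('lut must have shape (size**3, 3)')
    lines = []
    if comment:
        lines += ['# ' + line for line in comment.splitlines()]
    if title:
        lines.append(f'TITLE "{title}"')
    lines.append(f'LUT_3D_SIZE {size}')
    lines += [f'{r:.6f} {g:.6f} {b:.6f}' for r, g, b in lut]
    return '\n'.join(lines) + '\n'


def parse_cube(text):
    """Parse .cube text into (size, array of shape (size**3, 3))."""
    size, rows = None, []
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith('#'):
            continue
        key = line.split()[0]
        if key == 'LUT_3D_SIZE':
            size = int(line.split()[1])
        elif key[0].isdigit() or key[0] in '+-.':
            rows.append([float(v) for v in line.split()[:3]])
        # Other keywords (TITLE, DOMAIN_MIN, DOMAIN_MAX, ...) are ignored here.
    return size, np.array(rows)
```

To write a file, pass the text to e.g. `pathlib.Path('provia.cube').write_text(...)`. The parser only understands the subset of the format that the writer emits.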
A directory with image pairs, each consisting of one RAW image and the corresponding OOC image (e.g. JPEG), is used as input.
Both images of a pair must have the same base name, but different extensions.
The images should represent a wide variety of colors; ideally, the whole Adobe RGB color space is covered.
The resulting LUT is intended for application in Adobe RGB color space.
Hence, it is advisable to also shoot the in-camera JPEGs in Adobe RGB in order to cover the whole available gamut.

In its default configuration, Darktable may automatically apply an exposure module with camera exposure bias correction
to raw files. The LUTs produced by this package are constructed to resemble the OOC JPEG when used on a raw
image *without* the exposure bias correction. Also, the *filmic rgb* module should be turned off.

Another issue is in-camera lens correction. By default, this script does not use darktable's lens correction module.
If possible, the images should be taken without any in-camera lens correction.
If this is not possible (e.g. because in-camera lens correction cannot be disabled on the camera used), use the
`--use_lens_correction` option to enable darktable's lens correction (see `darktable_lut_generator --help`).


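According to the `dir_images` help text of `darktable_lut_generator`, each RAW image must have exactly one OOC counterpart with the same base name and a different extension. A minimal sketch of such pairing by file stem follows; `find_image_pairs`, the set of RAW extensions, and the skipping of darktable `.xmp` sidecar files are assumptions of this sketch and are not taken from the package.

```python
from pathlib import Path

# Assumed, non-exhaustive set of RAW file extensions (lower case).
RAW_EXTENSIONS = {'.raf', '.cr2', '.cr3', '.nef', '.arw', '.dng', '.orf', '.rw2', '.pef'}
# darktable sidecar files are not images; skipping them is an assumption of this sketch.
IGNORED_EXTENSIONS = {'.xmp'}


def find_image_pairs(filenames):
    """Return a sorted list of (raw_path, ooc_path) tuples grouped by base name.

    Raises ValueError if a base name does not have exactly one RAW file and
    exactly one other (OOC) file.
    """
    groups = {}
    for name in filenames:
        path = Path(name)
        if path.suffix.lower() in IGNORED_EXTENSIONS:
            continue
        groups.setdefault(path.stem, []).append(path)

    pairs = []
    for stem, paths in sorted(groups.items()):
        raws = [p for p in paths if p.suffix.lower() in RAW_EXTENSIONS]
        others = [p for p in paths if p.suffix.lower() not in RAW_EXTENSIONS]
        if len(raws) != 1 or len(others) != 1:
            names = sorted(p.name for p in paths)
            raise ValueError(f'{stem}: expected one RAW and one OOC image, got {names}')
        pairs.append((raws[0], others[0]))
    return pairs
```

To pair the contents of a directory, pass `[p.name for p in Path(dir_images).iterdir() if p.is_file()]`. Failing loudly on ambiguous groups (e.g. a JPEG and a TIFF for the same RAW) avoids silently fitting the LUT to the wrong target.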
The command
```darktable_lut_generate_pattern [path to output image]```
may be used to generate a simple test pattern. If the pattern is displayed on a wide-gamut screen
(an OLED smartphone with vivid color settings is fine), approx. 5 RAW+JPEG pairs can be photographed at different
exposures. That may provide a good starting sample set and is often sufficient for good results, but additional real-world images are always
helpful.
When the resulting LUT is applied to the RAWs of those test images, some artifacts remain near the gamut
limits.
I don't know (yet) whether this results from the estimation procedure or from issues with / limited understanding of
the exact color space transformations used by Darktable when processing / saving the sample images
or when applying the LUT. An example of the test-set JPEGs, obtained by photographing a smartphone displaying the test pattern, is
given below:


There are also some options that help with interpreting the results, tweaking the settings,
and checking the sample images.
In particular, `--path_dir_out_info` defines a custom directory to which some charts and images are written, such as alignment
results and visualizations of the generated LUT. **TODO: documentation of outputs**

# Estimation


The LUT is estimated as a correction to an identity LUT using linear regression with an appropriately constrained parameter space, assuming trilinear interpolation when the LUT is applied.
Colors that are sampled very sparsely or not at all are interpolated from neighboring colors. However, no sophisticated hyperparameter tuning has been conducted to identify sparsely sampled patches, especially for different cube sizes.
`--n_samples` pixels are sampled from the images, as using all pixels is computationally expensive.
Pixels are drawn with weights proportional to the inverse of the estimated sample density conditioned on the raw pixel colors in order to
obtain a sample with an approximately uniform distribution over the represented colors.
This reduces the sample count needed for good results by approx. an order of magnitude compared to drawing pixels
uniformly.

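The sketch below illustrates the idea behind the regression on plain NumPy arrays: each sampled raw color contributes, through its eight trilinear interpolation weights, one row of a linear model whose unknowns are the LUT's corrections relative to an identity LUT. It is deliberately simplified and is not the package's implementation: it uses unconstrained least squares (`numpy.linalg.lstsq`) instead of the constrained formulation described above, leaves grid nodes without samples at identity (the minimum-norm solution gives them zero correction) instead of interpolating them from neighbors, and clips the result to [0, 1]. Colors are assumed to be RGB floats in [0, 1]; the function names are hypothetical.

```python
import numpy as np


def identity_lut(size):
    """Identity LUT of shape (size**3, 3); red index varies fastest (.cube order)."""
    grid = np.linspace(0.0, 1.0, size)
    b, g, r = np.meshgrid(grid, grid, grid, indexing='ij')
    return np.stack([r.ravel(), g.ravel(), b.ravel()], axis=1)


def trilinear_design_matrix(colors, size):
    """Matrix W of shape (n, size**3) such that W @ lut interpolates lut at colors.

    colors: array of shape (n, 3) with RGB values in [0, 1]; size >= 2.
    """
    n = colors.shape[0]
    pos = np.clip(colors, 0.0, 1.0) * (size - 1)              # continuous grid coordinates
    lower = np.minimum(np.floor(pos).astype(int), size - 2)   # lower corner of the cell
    frac = pos - lower                                         # offset inside the cell
    design = np.zeros((n, size ** 3))
    rows = np.arange(n)
    for dr in (0, 1):
        for dg in (0, 1):
            for db in (0, 1):
                corner = lower + np.array([dr, dg, db])
                weight = ((frac[:, 0] if dr else 1 - frac[:, 0])
                          * (frac[:, 1] if dg else 1 - frac[:, 1])
                          * (frac[:, 2] if db else 1 - frac[:, 2]))
                flat = corner[:, 0] + corner[:, 1] * size + corner[:, 2] * size ** 2
                np.add.at(design, (rows, flat), weight)
    return design


def fit_lut_least_squares(raw_colors, ooc_colors, size):
    """Estimate a LUT as identity + least-squares correction (unconstrained sketch)."""
    design = trilinear_design_matrix(raw_colors, size)
    identity = identity_lut(size)
    target = ooc_colors - design @ identity   # difference to the identity LUT's output
    correction, *_ = np.linalg.lstsq(design, target, rcond=None)
    return np.clip(identity + correction, 0.0, 1.0)
```

Because trilinear interpolation reproduces any affine color mapping exactly, a synthetic target such as `ooc = 0.8 * raw + 0.1` is recovered exactly when every grid cell contains enough samples, which makes such targets convenient for sanity checks.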

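Inverse-density sampling can be sketched in a few lines. Here the density of the raw colors is approximated by a coarse 3D histogram (an assumption of this sketch; the package may use a different density estimator), and each pixel is drawn with a probability proportional to one over the pixel count of its histogram bin. Every occupied bin therefore receives the same total probability, so rare colors are drawn about as often as dominant ones. `sample_inverse_density` and its `n_bins` parameter are hypothetical names introduced for illustration.

```python
import numpy as np


def sample_inverse_density(raw_colors, n_samples, n_bins=16, seed=0):
    """Draw pixel indices with probability proportional to 1 / local color density.

    raw_colors: array of shape (n_pixels, 3) with RGB values in [0, 1].
    The density is approximated by a 3D histogram with n_bins per channel.
    """
    rng = np.random.default_rng(seed)
    bins = np.minimum((np.clip(raw_colors, 0.0, 1.0) * n_bins).astype(int), n_bins - 1)
    flat = bins[:, 0] + bins[:, 1] * n_bins + bins[:, 2] * n_bins ** 2
    counts = np.bincount(flat, minlength=n_bins ** 3)
    weights = 1.0 / counts[flat]          # each pixel's own bin has a count >= 1
    probabilities = weights / weights.sum()
    n_samples = min(n_samples, raw_colors.shape[0])
    return rng.choice(raw_colors.shape[0], size=n_samples, replace=False, p=probabilities)
```

A finer histogram (larger `n_bins`) makes the sample more uniform over colors, but also tends to over-represent isolated noisy pixels, since a bin holding a single pixel gets as much total weight as a densely populated one.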
# Additional Resources

## About LUTs and color management

https://docs.darktable.org/usermanual/3.8/en/module-reference/processing-modules/lut-3d/
https://eng.aurelienpierre.com/
https://library.imageworks.com/pdfs/imageworks-library-cinematic_color.pdf

## Forums

https://discuss.pixls.us/t/how-to-create-haldcluts-from-in-camera-processing-styles/12690
https://discuss.pixls.us/t/help-me-build-a-lua-script-for-automatically-applying-fujifilm-film-simulations-and-more/30287
https://discuss.pixls.us/t/creating-3d-cube-luts-for-camera-ooc-styles/30968

## Similar tools

https://github.com/bastibe/LUT-Maker
https://github.com/savuori/haldclut_dt

--------------------------------------------------------------------------------

/src/darktable_lut_generator/main.py:

--------------------------------------------------------------------------------

1 | """

2 | Darktable LUT Generator: Generate .cube lookup tables from out-of-camera photos

3 | Copyright (C) 2021 Björn Sonnenschein

4 |

5 | This program is free software: you can redistribute it and/or modify

6 | it under the terms of the GNU General Public License as published by

7 | the Free Software Foundation, either version 3 of the License, or

8 | (at your option) any later version.

9 |

10 | This program is distributed in the hope that it will be useful,

11 | but WITHOUT ANY WARRANTY; without even the implied warranty of

12 | MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

13 | GNU General Public License for more details.

14 |

15 | You should have received a copy of the GNU General Public License

16 | along with this program. If not, see .

17 | """

18 |

19 | import argparse

20 | import sys

21 |

22 | from darktable_lut_generator.estimate_lut import main as main_

23 |

24 |

25 | # TODO: add option to add conf lines that are passed with --conf to darktable.

26 |

27 | def main():

28 | parser = argparse.ArgumentParser(

29 | # description='Generate .cube 3D LUT from jpg/raw sample pairs',

30 | usage='This package estimates a .cube 3D lookup table for use with the Darktable lut 3D module. \n'

31 | 'A direktory with image pairs of one RAW image and the corresponding OOC image (e.g. JPEG) is used '

32 | 'as input. \n'

33 | 'The images should represent a wide variety of colors; ideally, the whole Adobe RGB color space is covered.\n'

34 | 'The resulting LUT is intended for application in Adobe RGB color space. Hence, it is advisable to shoot the images in'

35 | 'Adobe RGB.'

36 | '\n'

37 | 'Estimation is performed by estimating the differences to an identity LUT '

38 | 'using linear regression with LASSO regularization, assuming trilinear interpolation '

39 | 'when applying the LUT. \n'

40 | 'Very sparsely or non-sampled colors will fallback to identity. However, no sophisticated hyperparameter tuning'

41 | ' regarding the LASSO parameter has been conducted, especially regarding different cube size. \n'

42 | 'n_samples pixels are sampled from the image, as using all pixels is computationally expensive. \n'

43 | 'Sampling is performed weighted by the inverse estimated sample density conditioned on the raw pixel colors '

44 | 'in order to obtain a sample with approximately uniform distribution over the represented colors. \n'

45 | 'This reduces the needed sample count for good results by approx. an order of magnitude compared to drawing '

46 | 'pixels uniformly.'

47 | )

48 | parser.add_argument(

49 | 'dir_images',

50 | type=str,

51 | help='Directory with input image pairs. In the directory, for each raw image, exactly one (out of camera) image '

52 | 'must be present. The images of one pair must have the same base name, but different extension.'

53 | )

54 | parser.add_argument(

55 | 'file_lut_output',

56 | type=str,

57 | help='Desired filepath to store output 3D .cube LUT (with extension).'

58 | )

59 | parser.add_argument(

60 | '--n_samples',

61 | type=int,

62 | default=100000,

63 | help='Number of pixels to sample from the images for LUT estimation. '

64 | 'Higher values may produce more accurate results, but are slower and more memory intensive. '

65 | 'The default value works well. Try 10000 if running out of memory. Values over 500000 usually provide no '

66 | 'significant benefit, but this depends on the images and the lut size'

67 | 'Set to 0 to use all pixels (recommended with resize)'

68 | )

69 | parser.add_argument(

70 | '--size',

71 | type=int,

72 | default=9,

73 | help='Resulting cube resolution per dimension. '

74 | 'Keep in mind that for high sizes, much sample data covering many colors is needed for good generalization '

75 | 'performance.'

76 | )

77 | parser.add_argument(

78 | '--resize',

79 | type=int,

80 | default=1000,

81 | help='If provided, the input images are resized to this maximum border length. If 0, images are not resized, which'

82 | ' may result in long alignment runtimes, but better LUT quality.'

83 | )

84 | parser.add_argument(

85 | '--is_grayscale',

86 | action='store_true',

87 | help='Provide this flag if the image style is grayscale. Ensures that the resulting'

88 | ' lookup table contains only grayscale values.'

89 | )

90 | parser.set_defaults(is_grayscale=False)

91 | parser.add_argument(

92 | '--sample_uniform',

93 | action='store_true',

94 | help='Try to sample the pixels uniformly over the color space. This may help if particular colors are represented'

95 | ' by only small regions in the sample images.'

96 | )

97 | parser.set_defaults(sample_uniform=False)

98 |

99 | parser.add_argument(

100 | '--use_lens_correction',

101 | action='store_true',

102 | help='Use auto-applied lens correction module for the RAW image. Only effective without --path_style_raw.'

103 | ' Note that lens correction is a bit tricky as it can change the exposure, so that the resulting LUT may only yield good results'

104 | 'for images with the same lens and lens correction applied. It should be preferred to not use lens correctio and'

105 | ' also disable lens correction in camera. Then, alignment can usually also be disabled with --disable_image_alignment.'

106 | ' This setting is mainly intended for use with cameras that do not allow'

107 | ' disabling in-camera lens correction for the OOC JPEGs.'

108 | )

109 | parser.set_defaults(use_lens_correction=False)

110 | parser.add_argument(

111 | '--n_passes_alignment',

112 | type=int,

113 | default=2,

114 | help='Set the number of image alignment passes. If 0, no alignment is performed and the image pairs are just cropped to same size. '

115 | 'Values greater than 1 use passes of pre-alignment (see below). '

116 | 'Often, developed raws and OOC images do not overlap'

117 | ' perfectly. One may assume that the developed Raw has the same amount of additional'

118 | ' pixels on each side and is otherwise geometrically identical to the OOC image.'

119 | 'Then, the developed raw can simply be cropped accordingly. \n'

120 | 'The assumption does not hold in many real-world cases, though. In particular, in-camera lens correction'

121 | ' may distort the image. \n \n'

122 | ' A simple image alignment procedure is used'

123 | ' to align the images and compensate for some distortions by default. '

124 | 'Alignment is tricky, especially as OOC and RAW images usually exhibit different gradiation. '

125 | 'Pixel-Level alignment precision is necessary for good LUT estimation results and this is '

126 | 'not necessarily provided with alignment. Hence, it is important to check the alignment results.'

127 | 'Use the --path_dir_out_info to inspect'

128 | ' the generated images and assess whether alignment is necessary and if it works.'

129 | 'Generally, the best results are achieved by disabling in camera lens correction. \n \n'

130 | 'By default, two passes of LUT estimation are performed:'

131 | 'First, a rough estimate ot LUT is calculated without alignment. Then, this LUT is used to transform the '

132 | 'RAW image\'s colors for better alignment of the final pass. This is motivated by the problem that'

133 | ' the different color rendition of RAW and OOC images make proper alignment difficult.'

134 | 'If the first LUT estimate is not good enough, try 3 passes.'

135 | )

136 | parser.add_argument(

137 | '--align_translation_only',

138 | action='store_true',

139 | help='Use translation instead of affine transform for alignment..'

140 | )

141 | parser.set_defaults(align_translation_only=False)

142 | parser.add_argument(

143 | '--interpolation',

144 | type=str,

145 | default='trilinear',

146 | help='LUT interpolation. Either trilinear or tetrahedral.'

147 | )

148 | parser.add_argument(

149 | '--path_dt_cli',

150 | type=str,

151 | default=None,

152 | help='Path to the darktable-cli executable if it is not in PATH.'

153 | )

154 | parser.add_argument(

155 | '--path_style_raw',

156 | type=str,

157 | default=None,

158 | help='Path to an optional .dtstyle file for processing the raw images of the input image pairs. '

159 | 'Use this, for instance, to use a different color space or a different exposure so that the resulting LUT '

160 | 'will yield the correct result on a raw with the corresponding modules applied. '

161 | 'A practical example might be to shoot the sample images in a controlled environment and apply the color'

162 | ' calibration module with a color checker on all sample images in order to ensure proper input color space '

163 | 'transformation.'

164 | )

165 | parser.add_argument(

166 | '--path_style_image',

167 | type=str,

168 | default=None,

169 | help='Path to an optional .dtstyle file for processing the out of camera / processed images of the input image pairs. '

170 | 'This can be used to use different color spaces, but no further changes should be made to the image.'

171 | )

172 | parser.add_argument(

173 | '--paths_dirs_files_config_use',

174 | type=str,

175 | default=None,

176 | help='By default, darktable is called with an empty config directory, in order to prevent user settings on the'

177 | ' system from interfering with the LUT generation (e.g. by auto-applying presets). Here, a comma-separated'

178 | ' list of file or directory paths that will be copied to the empty darktable config directory'

179 | ' can be specified. A use case is if one wants to use raw presets with --path_style_raw that use'

180 | ' a custom input or output color profile.'

181 | 'This option can only be used if path_config_dir is not used.'

182 | )

183 |

184 | parser.add_argument(

185 | '--path_config_dir',

186 | type=str,

187 | default=None,

188 | help='By default, darktable is called with an empty config directory, in order to prevent user settings on the'

189 | ' system from interfering with the LUT generation (e.g. by auto-applying presets). Here, a config dir can be specified.'

190 | ' use this if you want to make an LUT specifically for your default settings applied to images.'

191 | 'to use. this option can only be used if paths_dirs_files_config_use is not used.'

192 | )

193 |

194 | parser.add_argument(

195 | '--path_dir_intermediate',

196 | type=str,

197 | default=None,

198 | help='Path to directory where intermediate converted images are stored..'

199 | )

200 | parser.add_argument(

201 | '--path_dir_out_info',

202 | type=str,

203 | default=None,

204 | help='Path to directory to output additional information / plots'

205 | )

206 | parser.add_argument(

207 | '--make_interpolated_estimates_red',

208 | action='store_true',

209 | help='In the resulting LUT, make estimates of colors that were interpolated due to unreliably few datapoints red. '

210 | 'Only applies if --no_interpolation_unsampled_colors is not set. Useful for debugging and identifying sparsely sampled colors.'

211 | )

212 | parser.set_defaults(make_interpolated_estimates_red=False)

213 | parser.add_argument(

214 | '--make_unchanged_red',

215 | action='store_true',

216 | help='In the resulting LUT, make colors that are estimated as unchanged w.r.t. an identity LUT red. Useful for debugging and identifying sparsely sampled colors.'

217 | )

218 | parser.set_defaults(make_unchanged_red=False)

219 | parser.add_argument(

220 | '--no_interpolation_unsampled_colors',

221 | action='store_true',

222 | help='By default, estimates for colors without or with only unreliably few samples (depending on'

223 | '--interpolate_unreliable_colors) are interpolated with neighboring colors. '

224 | 'This flag disables the interpolation, which may lead to wrong colors that are not covered well by the sample images..'

225 | )

226 | parser.set_defaults(no_interpolation_unsampled_colors=False)

227 | parser.add_argument('--title', default=None, help='The LUT title to write to the .cube file in the TITLE field')

228 | parser.add_argument('--comment', default=None,

229 | help='A comment that will be written in the header of the .cube file')

230 | parser.add_argument(

231 | '--interpolate_unreliable_colors',

232 | action='store_true',

233 | help='By default, estimates for colors with no samples are interpolated. '

234 | 'If this flag is active and --no_interpolation_unsampled_colors is NOT set '

235 | '(otherwise there is no interpolation at all), colors with '

236 | 'only a few samples are considered unreliable in contrast to only considering colors with no samples unreliable. '

237 | 'This may improve stability if there are some colors'

238 | ' represented by very few pixels.'

239 | 'TODO: Do some statistical inference to determine reliability of estimated parameters for more '

240 | 'sophisticated decision which colors to interpolate. But note that constrained optimization is used, '

241 | 'so that the statistical assumptions for OLS standard errors do not apply. In the one hand, '

242 | 'providing a statistically attractive measure for reliability may not be as trivial as it seems '

243 | 'intuitively. In the other hand, a simple approach might work well enough in practice. '

244 | 'If you like to contribute, you are welcome!'

245 | )

246 | parser.set_defaults(interpolate_unreliable_colors=False)

247 |

248 | args = parser.parse_args()

249 |

250 | main_(

251 | args.dir_images,

252 | args.file_lut_output,

253 | args.size,

254 | args.n_samples if args.n_samples > 0 else None,

255 | args.is_grayscale,

256 | args.resize,

257 | args.path_dt_cli,

258 | args.path_style_image,

259 | args.path_style_raw,

260 | args.path_dir_intermediate,

261 | args.path_dir_out_info,

262 | args.make_interpolated_estimates_red,

263 | args.make_unchanged_red,

264 | not args.no_interpolation_unsampled_colors,

265 | args.use_lens_correction,

266 | args.n_passes_alignment,

267 | args.align_translation_only,

268 | args.sample_uniform,

269 | not args.interpolate_unreliable_colors,

270 | args.interpolation,

271 | args.paths_dirs_files_config_use,

272 | args.path_config_dir,

273 | args.title,

274 | args.comment

275 | )

276 |

277 |

278 | if __name__ == '__main__':

279 | sys.exit(main())

280 |

--------------------------------------------------------------------------------
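The tail of `main.py` turns two flags into the booleans passed to `main_`: `not args.no_interpolation_unsampled_colors` and `not args.interpolate_unreliable_colors`. The minimal sketch below rebuilds only these two options with `argparse` to show how they combine. The helper `interpolation_mode` and its return labels are hypothetical and are not part of the package. They are derived only from the help texts above, not from `main_` itself.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    # Only the two interpolation-related flags from main.py are reproduced here.
    parser = argparse.ArgumentParser()
    parser.add_argument('--no_interpolation_unsampled_colors', action='store_true')
    parser.add_argument('--interpolate_unreliable_colors', action='store_true')
    return parser


def interpolation_mode(args: argparse.Namespace) -> str:
    # Hypothetical helper: same negations as the arguments main.py passes to main_.
    interpolate = not args.no_interpolation_unsampled_colors
    only_unsampled = not args.interpolate_unreliable_colors
    if not interpolate:
        # --no_interpolation_unsampled_colors overrides everything else.
        return 'none'
    return 'unsampled only' if only_unsampled else 'unsampled and unreliable'


if __name__ == '__main__':
    parser = build_parser()
    for argv in ([], ['--interpolate_unreliable_colors'],
                 ['--no_interpolation_unsampled_colors', '--interpolate_unreliable_colors']):
        print(argv, '->', interpolation_mode(parser.parse_args(argv)))
```

Because `action='store_true'` already defaults to `False`, the `parser.set_defaults(...=False)` calls in `main.py` are redundant but harmless.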

/LICENSE:

--------------------------------------------------------------------------------

1 | GNU GENERAL PUBLIC LICENSE

2 | Version 3, 29 June 2007

3 |

4 | Copyright (C) 2007 Free Software Foundation, Inc.

5 | Everyone is permitted to copy and distribute verbatim copies

6 | of this license document, but changing it is not allowed.

7 |

8 | Preamble

9 |

10 | The GNU General Public License is a free, copyleft license for

11 | software and other kinds of works.

12 |

13 | The licenses for most software and other practical works are designed

14 | to take away your freedom to share and change the works. By contrast,

15 | the GNU General Public License is intended to guarantee your freedom to

16 | share and change all versions of a program--to make sure it remains free

17 | software for all its users. We, the Free Software Foundation, use the

18 | GNU General Public License for most of our software; it applies also to

19 | any other work released this way by its authors. You can apply it to

20 | your programs, too.

21 |

22 | When we speak of free software, we are referring to freedom, not

23 | price. Our General Public Licenses are designed to make sure that you

24 | have the freedom to distribute copies of free software (and charge for

25 | them if you wish), that you receive source code or can get it if you

26 | want it, that you can change the software or use pieces of it in new

27 | free programs, and that you know you can do these things.

28 |

29 | To protect your rights, we need to prevent others from denying you

30 | these rights or asking you to surrender the rights. Therefore, you have

31 | certain responsibilities if you distribute copies of the software, or if

32 | you modify it: responsibilities to respect the freedom of others.

33 |

34 | For example, if you distribute copies of such a program, whether

35 | gratis or for a fee, you must pass on to the recipients the same

36 | freedoms that you received. You must make sure that they, too, receive

37 | or can get the source code. And you must show them these terms so they

38 | know their rights.

39 |

40 | Developers that use the GNU GPL protect your rights with two steps:

41 | (1) assert copyright on the software, and (2) offer you this License

42 | giving you legal permission to copy, distribute and/or modify it.

43 |

44 | For the developers' and authors' protection, the GPL clearly explains

45 | that there is no warranty for this free software. For both users' and

46 | authors' sake, the GPL requires that modified versions be marked as

47 | changed, so that their problems will not be attributed erroneously to

48 | authors of previous versions.

49 |

50 | Some devices are designed to deny users access to install or run

51 | modified versions of the software inside them, although the manufacturer

52 | can do so. This is fundamentally incompatible with the aim of

53 | protecting users' freedom to change the software. The systematic

54 | pattern of such abuse occurs in the area of products for individuals to

55 | use, which is precisely where it is most unacceptable. Therefore, we

56 | have designed this version of the GPL to prohibit the practice for those

57 | products. If such problems arise substantially in other domains, we

58 | stand ready to extend this provision to those domains in future versions

59 | of the GPL, as needed to protect the freedom of users.

60 |

61 | Finally, every program is threatened constantly by software patents.

62 | States should not allow patents to restrict development and use of

63 | software on general-purpose computers, but in those that do, we wish to

64 | avoid the special danger that patents applied to a free program could

65 | make it effectively proprietary. To prevent this, the GPL assures that

66 | patents cannot be used to render the program non-free.

67 |

68 | The precise terms and conditions for copying, distribution and

69 | modification follow.

70 |

71 | TERMS AND CONDITIONS

72 |

73 | 0. Definitions.

74 |

75 | "This License" refers to version 3 of the GNU General Public License.

76 |

77 | "Copyright" also means copyright-like laws that apply to other kinds of

78 | works, such as semiconductor masks.

79 |

80 | "The Program" refers to any copyrightable work licensed under this

81 | License. Each licensee is addressed as "you". "Licensees" and

82 | "recipients" may be individuals or organizations.

83 |

84 | To "modify" a work means to copy from or adapt all or part of the work

85 | in a fashion requiring copyright permission, other than the making of an

86 | exact copy. The resulting work is called a "modified version" of the

87 | earlier work or a work "based on" the earlier work.

88 |

89 | A "covered work" means either the unmodified Program or a work based

90 | on the Program.

91 |

92 | To "propagate" a work means to do anything with it that, without

93 | permission, would make you directly or secondarily liable for

94 | infringement under applicable copyright law, except executing it on a

95 | computer or modifying a private copy. Propagation includes copying,

96 | distribution (with or without modification), making available to the

97 | public, and in some countries other activities as well.

98 |

99 | To "convey" a work means any kind of propagation that enables other

100 | parties to make or receive copies. Mere interaction with a user through

101 | a computer network, with no transfer of a copy, is not conveying.

102 |

103 | An interactive user interface displays "Appropriate Legal Notices"

104 | to the extent that it includes a convenient and prominently visible

105 | feature that (1) displays an appropriate copyright notice, and (2)

106 | tells the user that there is no warranty for the work (except to the

107 | extent that warranties are provided), that licensees may convey the

108 | work under this License, and how to view a copy of this License. If

109 | the interface presents a list of user commands or options, such as a

110 | menu, a prominent item in the list meets this criterion.

111 |

112 | 1. Source Code.

113 |

114 | The "source code" for a work means the preferred form of the work

115 | for making modifications to it. "Object code" means any non-source

116 | form of a work.

117 |

118 | A "Standard Interface" means an interface that either is an official

119 | standard defined by a recognized standards body, or, in the case of

120 | interfaces specified for a particular programming language, one that

121 | is widely used among developers working in that language.

122 |

123 | The "System Libraries" of an executable work include anything, other

124 | than the work as a whole, that (a) is included in the normal form of

125 | packaging a Major Component, but which is not part of that Major

126 | Component, and (b) serves only to enable use of the work with that

127 | Major Component, or to implement a Standard Interface for which an

128 | implementation is available to the public in source code form. A

129 | "Major Component", in this context, means a major essential component

130 | (kernel, window system, and so on) of the specific operating system

131 | (if any) on which the executable work runs, or a compiler used to

132 | produce the work, or an object code interpreter used to run it.

133 |

134 | The "Corresponding Source" for a work in object code form means all

135 | the source code needed to generate, install, and (for an executable

136 | work) run the object code and to modify the work, including scripts to

137 | control those activities. However, it does not include the work's

138 | System Libraries, or general-purpose tools or generally available free

139 | programs which are used unmodified in performing those activities but

140 | which are not part of the work. For example, Corresponding Source

141 | includes interface definition files associated with source files for

142 | the work, and the source code for shared libraries and dynamically

143 | linked subprograms that the work is specifically designed to require,

144 | such as by intimate data communication or control flow between those

145 | subprograms and other parts of the work.

146 |

147 | The Corresponding Source need not include anything that users

148 | can regenerate automatically from other parts of the Corresponding

149 | Source.

150 |

151 | The Corresponding Source for a work in source code form is that

152 | same work.

153 |

154 | 2. Basic Permissions.

155 |

156 | All rights granted under this License are granted for the term of

157 | copyright on the Program, and are irrevocable provided the stated

158 | conditions are met. This License explicitly affirms your unlimited

159 | permission to run the unmodified Program. The output from running a

160 | covered work is covered by this License only if the output, given its

161 | content, constitutes a covered work. This License acknowledges your

162 | rights of fair use or other equivalent, as provided by copyright law.

163 |

164 | You may make, run and propagate covered works that you do not

165 | convey, without conditions so long as your license otherwise remains

166 | in force. You may convey covered works to others for the sole purpose

167 | of having them make modifications exclusively for you, or provide you

168 | with facilities for running those works, provided that you comply with

169 | the terms of this License in conveying all material for which you do

170 | not control copyright. Those thus making or running the covered works

171 | for you must do so exclusively on your behalf, under your direction

172 | and control, on terms that prohibit them from making any copies of

173 | your copyrighted material outside their relationship with you.

174 |

175 | Conveying under any other circumstances is permitted solely under

176 | the conditions stated below. Sublicensing is not allowed; section 10

177 | makes it unnecessary.

178 |

179 | 3. Protecting Users' Legal Rights From Anti-Circumvention Law.

180 |

181 | No covered work shall be deemed part of an effective technological

182 | measure under any applicable law fulfilling obligations under article

183 | 11 of the WIPO copyright treaty adopted on 20 December 1996, or

184 | similar laws prohibiting or restricting circumvention of such

185 | measures.

186 |

187 | When you convey a covered work, you waive any legal power to forbid

188 | circumvention of technological measures to the extent such circumvention

189 | is effected by exercising rights under this License with respect to

190 | the covered work, and you disclaim any intention to limit operation or

191 | modification of the work as a means of enforcing, against the work's

192 | users, your or third parties' legal rights to forbid circumvention of

193 | technological measures.

194 |

195 | 4. Conveying Verbatim Copies.

196 |

197 | You may convey verbatim copies of the Program's source code as you

198 | receive it, in any medium, provided that you conspicuously and

199 | appropriately publish on each copy an appropriate copyright notice;

200 | keep intact all notices stating that this License and any

201 | non-permissive terms added in accord with section 7 apply to the code;

202 | keep intact all notices of the absence of any warranty; and give all

203 | recipients a copy of this License along with the Program.

204 |

205 | You may charge any price or no price for each copy that you convey,

206 | and you may offer support or warranty protection for a fee.

207 |

208 | 5. Conveying Modified Source Versions.

209 |

210 | You may convey a work based on the Program, or the modifications to

211 | produce it from the Program, in the form of source code under the

212 | terms of section 4, provided that you also meet all of these conditions:

213 |

214 | a) The work must carry prominent notices stating that you modified

215 | it, and giving a relevant date.

216 |

217 | b) The work must carry prominent notices stating that it is

218 | released under this License and any conditions added under section

219 | 7. This requirement modifies the requirement in section 4 to

220 | "keep intact all notices".

221 |

222 | c) You must license the entire work, as a whole, under this

223 | License to anyone who comes into possession of a copy. This

224 | License will therefore apply, along with any applicable section 7

225 | additional terms, to the whole of the work, and all its parts,

226 | regardless of how they are packaged. This License gives no

227 | permission to license the work in any other way, but it does not

228 | invalidate such permission if you have separately received it.

229 |

230 | d) If the work has interactive user interfaces, each must display

231 | Appropriate Legal Notices; however, if the Program has interactive

232 | interfaces that do not display Appropriate Legal Notices, your

233 | work need not make them do so.

234 |

235 | A compilation of a covered work with other separate and independent

236 | works, which are not by their nature extensions of the covered work,

237 | and which are not combined with it such as to form a larger program,

238 | in or on a volume of a storage or distribution medium, is called an

239 | "aggregate" if the compilation and its resulting copyright are not

240 | used to limit the access or legal rights of the compilation's users

241 | beyond what the individual works permit. Inclusion of a covered work

242 | in an aggregate does not cause this License to apply to the other

243 | parts of the aggregate.

244 |

245 | 6. Conveying Non-Source Forms.

246 |

247 | You may convey a covered work in object code form under the terms

248 | of sections 4 and 5, provided that you also convey the

249 | machine-readable Corresponding Source under the terms of this License,

250 | in one of these ways:

251 |

252 | a) Convey the object code in, or embodied in, a physical product

253 | (including a physical distribution medium), accompanied by the

254 | Corresponding Source fixed on a durable physical medium

255 | customarily used for software interchange.

256 |

257 | b) Convey the object code in, or embodied in, a physical product

258 | (including a physical distribution medium), accompanied by a

259 | written offer, valid for at least three years and valid for as

260 | long as you offer spare parts or customer support for that product

261 | model, to give anyone who possesses the object code either (1) a

262 | copy of the Corresponding Source for all the software in the

263 | product that is covered by this License, on a durable physical

264 | medium customarily used for software interchange, for a price no

265 | more than your reasonable cost of physically performing this

266 | conveying of source, or (2) access to copy the

267 | Corresponding Source from a network server at no charge.

268 |

269 | c) Convey individual copies of the object code with a copy of the

270 | written offer to provide the Corresponding Source. This

271 | alternative is allowed only occasionally and noncommercially, and

272 | only if you received the object code with such an offer, in accord

273 | with subsection 6b.

274 |

275 | d) Convey the object code by offering access from a designated

276 | place (gratis or for a charge), and offer equivalent access to the

277 | Corresponding Source in the same way through the same place at no

278 | further charge. You need not require recipients to copy the

279 | Corresponding Source along with the object code. If the place to

280 | copy the object code is a network server, the Corresponding Source

281 | may be on a different server (operated by you or a third party)

282 | that supports equivalent copying facilities, provided you maintain

283 | clear directions next to the object code saying where to find the

284 | Corresponding Source. Regardless of what server hosts the

285 | Corresponding Source, you remain obligated to ensure that it is

286 | available for as long as needed to satisfy these requirements.

287 |

288 | e) Convey the object code using peer-to-peer transmission, provided

289 | you inform other peers where the object code and Corresponding

290 | Source of the work are being offered to the general public at no

291 | charge under subsection 6d.

292 |

293 | A separable portion of the object code, whose source code is excluded

294 | from the Corresponding Source as a System Library, need not be

295 | included in conveying the object code work.

296 |

297 | A "User Product" is either (1) a "consumer product", which means any

298 | tangible personal property which is normally used for personal, family,

299 | or household purposes, or (2) anything designed or sold for incorporation

300 | into a dwelling. In determining whether a product is a consumer product,

301 | doubtful cases shall be resolved in favor of coverage. For a particular

302 | product received by a particular user, "normally used" refers to a

303 | typical or common use of that class of product, regardless of the status

304 | of the particular user or of the way in which the particular user

305 | actually uses, or expects or is expected to use, the product. A product

306 | is a consumer product regardless of whether the product has substantial

307 | commercial, industrial or non-consumer uses, unless such uses represent

308 | the only significant mode of use of the product.

309 |

310 | "Installation Information" for a User Product means any methods,

311 | procedures, authorization keys, or other information required to install

312 | and execute modified versions of a covered work in that User Product from

313 | a modified version of its Corresponding Source. The information must

314 | suffice to ensure that the continued functioning of the modified object

315 | code is in no case prevented or interfered with solely because

316 | modification has been made.

317 |

318 | If you convey an object code work under this section in, or with, or

319 | specifically for use in, a User Product, and the conveying occurs as

320 | part of a transaction in which the right of possession and use of the

321 | User Product is transferred to the recipient in perpetuity or for a

322 | fixed term (regardless of how the transaction is characterized), the

323 | Corresponding Source conveyed under this section must be accompanied

324 | by the Installation Information. But this requirement does not apply

325 | if neither you nor any third party retains the ability to install

326 | modified object code on the User Product (for example, the work has

327 | been installed in ROM).

328 |

329 | The requirement to provide Installation Information does not include a

330 | requirement to continue to provide support service, warranty, or updates

331 | for a work that has been modified or installed by the recipient, or for

332 | the User Product in which it has been modified or installed. Access to a

333 | network may be denied when the modification itself materially and

334 | adversely affects the operation of the network or violates the rules and

335 | protocols for communication across the network.

336 |

337 | Corresponding Source conveyed, and Installation Information provided,

338 | in accord with this section must be in a format that is publicly

339 | documented (and with an implementation available to the public in

340 | source code form), and must require no special password or key for

341 | unpacking, reading or copying.

342 |

343 | 7. Additional Terms.

344 |

345 | "Additional permissions" are terms that supplement the terms of this

346 | License by making exceptions from one or more of its conditions.

347 | Additional permissions that are applicable to the entire Program shall

348 | be treated as though they were included in this License, to the extent

349 | that they are valid under applicable law. If additional permissions

350 | apply only to part of the Program, that part may be used separately

351 | under those permissions, but the entire Program remains governed by

352 | this License without regard to the additional permissions.

353 |

354 | When you convey a copy of a covered work, you may at your option

355 | remove any additional permissions from that copy, or from any part of

356 | it. (Additional permissions may be written to require their own

357 | removal in certain cases when you modify the work.) You may place

358 | additional permissions on material, added by you to a covered work,

359 | for which you have or can give appropriate copyright permission.

360 |

361 | Notwithstanding any other provision of this License, for material you

362 | add to a covered work, you may (if authorized by the copyright holders of

363 | that material) supplement the terms of this License with terms:

364 |

365 | a) Disclaiming warranty or limiting liability differently from the

366 | terms of sections 15 and 16 of this License; or

367 |

368 | b) Requiring preservation of specified reasonable legal notices or

369 | author attributions in that material or in the Appropriate Legal

370 | Notices displayed by works containing it; or

371 |

372 | c) Prohibiting misrepresentation of the origin of that material, or

373 | requiring that modified versions of such material be marked in

374 | reasonable ways as different from the original version; or

375 |

376 | d) Limiting the use for publicity purposes of names of licensors or

377 | authors of the material; or

378 |

379 | e) Declining to grant rights under trademark law for use of some

380 | trade names, trademarks, or service marks; or

381 |

382 | f) Requiring indemnification of licensors and authors of that

383 | material by anyone who conveys the material (or modified versions of

384 | it) with contractual assumptions of liability to the recipient, for

385 | any liability that these contractual assumptions directly impose on

386 | those licensors and authors.

387 |

388 | All other non-permissive additional terms are considered "further

389 | restrictions" within the meaning of section 10. If the Program as you

390 | received it, or any part of it, contains a notice stating that it is

391 | governed by this License along with a term that is a further

392 | restriction, you may remove that term. If a license document contains

393 | a further restriction but permits relicensing or conveying under this

394 | License, you may add to a covered work material governed by the terms

395 | of that license document, provided that the further restriction does

396 | not survive such relicensing or conveying.

397 |

398 | If you add terms to a covered work in accord with this section, you

399 | must place, in the relevant source files, a statement of the

400 | additional terms that apply to those files, or a notice indicating

401 | where to find the applicable terms.

402 |

403 | Additional terms, permissive or non-permissive, may be stated in the

404 | form of a separately written license, or stated as exceptions;

405 | the above requirements apply either way.

406 |

407 | 8. Termination.

408 |

409 | You may not propagate or modify a covered work except as expressly

410 | provided under this License. Any attempt otherwise to propagate or

411 | modify it is void, and will automatically terminate your rights under

412 | this License (including any patent licenses granted under the third

413 | paragraph of section 11).

414 |

415 | However, if you cease all violation of this License, then your

416 | license from a particular copyright holder is reinstated (a)

417 | provisionally, unless and until the copyright holder explicitly and

418 | finally terminates your license, and (b) permanently, if the copyright

419 | holder fails to notify you of the violation by some reasonable means

420 | prior to 60 days after the cessation.

421 |

422 | Moreover, your license from a particular copyright holder is

423 | reinstated permanently if the copyright holder notifies you of the

424 | violation by some reasonable means, this is the first time you have

425 | received notice of violation of this License (for any work) from that

426 | copyright holder, and you cure the violation prior to 30 days after

427 | your receipt of the notice.

428 |

429 | Termination of your rights under this section does not terminate the

430 | licenses of parties who have received copies or rights from you under

431 | this License. If your rights have been terminated and not permanently

432 | reinstated, you do not qualify to receive new licenses for the same

433 | material under section 10.

434 |

435 | 9. Acceptance Not Required for Having Copies.

436 |

437 | You are not required to accept this License in order to receive or

438 | run a copy of the Program. Ancillary propagation of a covered work

439 | occurring solely as a consequence of using peer-to-peer transmission

440 | to receive a copy likewise does not require acceptance. However,

441 | nothing other than this License grants you permission to propagate or

442 | modify any covered work. These actions infringe copyright if you do

443 | not accept this License. Therefore, by modifying or propagating a

444 | covered work, you indicate your acceptance of this License to do so.

445 |

446 | 10. Automatic Licensing of Downstream Recipients.

447 |

448 | Each time you convey a covered work, the recipient automatically

449 | receives a license from the original licensors, to run, modify and

450 | propagate that work, subject to this License. You are not responsible

451 | for enforcing compliance by third parties with this License.

452 |

453 | An "entity transaction" is a transaction transferring control of an

454 | organization, or substantially all assets of one, or subdividing an

455 | organization, or merging organizations. If propagation of a covered

456 | work results from an entity transaction, each party to that

457 | transaction who receives a copy of the work also receives whatever

458 | licenses to the work the party's predecessor in interest had or could

459 | give under the previous paragraph, plus a right to possession of the

460 | Corresponding Source of the work from the predecessor in interest, if

461 | the predecessor has it or can get it with reasonable efforts.

462 |

463 | You may not impose any further restrictions on the exercise of the

464 | rights granted or affirmed under this License. For example, you may

465 | not impose a license fee, royalty, or other charge for exercise of

466 | rights granted under this License, and you may not initiate litigation

467 | (including a cross-claim or counterclaim in a lawsuit) alleging that

468 | any patent claim is infringed by making, using, selling, offering for

469 | sale, or importing the Program or any portion of it.

470 |

471 | 11. Patents.

472 |

473 | A "contributor" is a copyright holder who authorizes use under this

474 | License of the Program or a work on which the Program is based. The

475 | work thus licensed is called the contributor's "contributor version".

476 |

477 | A contributor's "essential patent claims" are all patent claims

478 | owned or controlled by the contributor, whether already acquired or

479 | hereafter acquired, that would be infringed by some manner, permitted

480 | by this License, of making, using, or selling its contributor version,

481 | but do not include claims that would be infringed only as a

482 | consequence of further modification of the contributor version. For

483 | purposes of this definition, "control" includes the right to grant

484 | patent sublicenses in a manner consistent with the requirements of

485 | this License.

486 |

487 | Each contributor grants you a non-exclusive, worldwide, royalty-free

488 | patent license under the contributor's essential patent claims, to

489 | make, use, sell, offer for sale, import and otherwise run, modify and

490 | propagate the contents of its contributor version.

491 |

492 | In the following three paragraphs, a "patent license" is any express

493 | agreement or commitment, however denominated, not to enforce a patent

494 | (such as an express permission to practice a patent or covenant not to

495 | sue for patent infringement). To "grant" such a patent license to a

496 | party means to make such an agreement or commitment not to enforce a

497 | patent against the party.

498 |

499 | If you convey a covered work, knowingly relying on a patent license,

500 | and the Corresponding Source of the work is not available for anyone

501 | to copy, free of charge and under the terms of this License, through a

502 | publicly available network server or other readily accessible means,

503 | then you must either (1) cause the Corresponding Source to be so

504 | available, or (2) arrange to deprive yourself of the benefit of the

505 | patent license for this particular work, or (3) arrange, in a manner

506 | consistent with the requirements of this License, to extend the patent

507 | license to downstream recipients. "Knowingly relying" means you have

508 | actual knowledge that, but for the patent license, your conveying the

509 | covered work in a country, or your recipient's use of the covered work

510 | in a country, would infringe one or more identifiable patents in that

511 | country that you have reason to believe are valid.

512 |

513 | If, pursuant to or in connection with a single transaction or

514 | arrangement, you convey, or propagate by procuring conveyance of, a

515 | covered work, and grant a patent license to some of the parties

516 | receiving the covered work authorizing them to use, propagate, modify

517 | or convey a specific copy of the covered work, then the patent license

518 | you grant is automatically extended to all recipients of the covered

519 | work and works based on it.

520 |

521 | A patent license is "discriminatory" if it does not include within

522 | the scope of its coverage, prohibits the exercise of, or is

523 | conditioned on the non-exercise of one or more of the rights that are

524 | specifically granted under this License. You may not convey a covered

525 | work if you are a party to an arrangement with a third party that is

526 | in the business of distributing software, under which you make payment

527 | to the third party based on the extent of your activity of conveying

528 | the work, and under which the third party grants, to any of the

529 | parties who would receive the covered work from you, a discriminatory

530 | patent license (a) in connection with copies of the covered work

531 | conveyed by you (or copies made from those copies), or (b) primarily

532 | for and in connection with specific products or compilations that

533 | contain the covered work, unless you entered into that arrangement,

534 | or that patent license was granted, prior to 28 March 2007.

535 |

536 | Nothing in this License shall be construed as excluding or limiting

537 | any implied license or other defenses to infringement that may

538 | otherwise be available to you under applicable patent law.

539 |

540 | 12. No Surrender of Others' Freedom.

541 |

542 | If conditions are imposed on you (whether by court order, agreement or

543 | otherwise) that contradict the conditions of this License, they do not

544 | excuse you from the conditions of this License. If you cannot convey a

545 | covered work so as to satisfy simultaneously your obligations under this

546 | License and any other pertinent obligations, then as a consequence you may

547 | not convey it at all. For example, if you agree to terms that obligate you

548 | to collect a royalty for further conveying from those to whom you convey

549 | the Program, the only way you could satisfy both those terms and this

550 | License would be to refrain entirely from conveying the Program.

551 |

552 | 13. Use with the GNU Affero General Public License.

553 |

554 | Notwithstanding any other provision of this License, you have

555 | permission to link or combine any covered work with a work licensed

556 | under version 3 of the GNU Affero General Public License into a single

557 | combined work, and to convey the resulting work. The terms of this

558 | License will continue to apply to the part which is the covered work,

559 | but the special requirements of the GNU Affero General Public License,

560 | section 13, concerning interaction through a network will apply to the

561 | combination as such.

562 |

563 | 14. Revised Versions of this License.

564 |

565 | The Free Software Foundation may publish revised and/or new versions of

566 | the GNU General Public License from time to time. Such new versions will

567 | be similar in spirit to the present version, but may differ in detail to

568 | address new problems or concerns.

569 |

570 | Each version is given a distinguishing version number. If the

571 | Program specifies that a certain numbered version of the GNU General

572 | Public License "or any later version" applies to it, you have the

573 | option of following the terms and conditions either of that numbered

574 | version or of any later version published by the Free Software

575 | Foundation. If the Program does not specify a version number of the

576 | GNU General Public License, you may choose any version ever published

577 | by the Free Software Foundation.

578 |

579 | If the Program specifies that a proxy can decide which future

580 | versions of the GNU General Public License can be used, that proxy's

581 | public statement of acceptance of a version permanently authorizes you

582 | to choose that version for the Program.

583 |

584 | Later license versions may give you additional or different

585 | permissions. However, no additional obligations are imposed on any

586 | author or copyright holder as a result of your choosing to follow a

587 | later version.

588 |

589 | 15. Disclaimer of Warranty.

590 |

591 | THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

592 | APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

593 | HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

594 | OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

595 | THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

596 | PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

597 | IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

598 | ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

599 |

600 | 16. Limitation of Liability.

601 |

602 | IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

603 | WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

604 | THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

605 | GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

606 | USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

607 | DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

608 | PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

609 | EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

610 | SUCH DAMAGES.

611 |

612 | 17. Interpretation of Sections 15 and 16.

613 |

614 | If the disclaimer of warranty and limitation of liability provided

615 | above cannot be given local legal effect according to their terms,

616 | reviewing courts shall apply local law that most closely approximates

617 | an absolute waiver of all civil liability in connection with the

618 | Program, unless a warranty or assumption of liability accompanies a

619 | copy of the Program in return for a fee.

620 |

621 | END OF TERMS AND CONDITIONS

622 |

623 | How to Apply These Terms to Your New Programs

624 |

625 | If you develop a new program, and you want it to be of the greatest

626 | possible use to the public, the best way to achieve this is to make it

627 | free software which everyone can redistribute and change under these terms.

628 |

629 | To do so, attach the following notices to the program. It is safest

630 | to attach them to the start of each source file to most effectively

631 | state the exclusion of warranty; and each file should have at least

632 | the "copyright" line and a pointer to where the full notice is found.

633 |

634 |

635 | Copyright (C)

636 |

637 | This program is free software: you can redistribute it and/or modify

638 | it under the terms of the GNU General Public License as published by

639 | the Free Software Foundation, either version 3 of the License, or

640 | (at your option) any later version.

641 |

642 | This program is distributed in the hope that it will be useful,

643 | but WITHOUT ANY WARRANTY; without even the implied warranty of

644 | MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

645 | GNU General Public License for more details.

646 |

647 | You should have received a copy of the GNU General Public License

648 | along with this program. If not, see .

649 |

650 | Also add information on how to contact you by electronic and paper mail.

651 |

652 | If the program does terminal interaction, make it output a short

653 | notice like this when it starts in an interactive mode:

654 |

655 | Copyright (C)

656 | This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

657 | This is free software, and you are welcome to redistribute it

658 | under certain conditions; type `show c' for details.

659 |

660 | The hypothetical commands `show w' and `show c' should show the appropriate

661 | parts of the General Public License. Of course, your program's commands

662 | might be different; for a GUI interface, you would use an "about box".

663 |

664 | You should also get your employer (if you work as a programmer) or school,

665 | if any, to sign a "copyright disclaimer" for the program, if necessary.

666 | For more information on this, and how to apply and follow the GNU GPL, see

667 | .

668 |

669 | The GNU General Public License does not permit incorporating your program

670 | into proprietary programs. If your program is a subroutine library, you

671 | may consider it more useful to permit linking proprietary applications with

672 | the library. If this is what you want to do, use the GNU Lesser General

673 | Public License instead of this License. But first, please read

674 | .

675 |

--------------------------------------------------------------------------------

/src/darktable_lut_generator/estimate_lut.py:

--------------------------------------------------------------------------------

1 | """

2 | Darktable LUT Generator: Generate .cube lookup tables from out-of-camera photos

3 | Copyright (C) 2021 Björn Sonnenschein

4 |

5 | This program is free software: you can redistribute it and/or modify

6 | it under the terms of the GNU General Public License as published by

7 | the Free Software Foundation, either version 3 of the License, or

8 | (at your option) any later version.

9 |

10 | This program is distributed in the hope that it will be useful,

11 | but WITHOUT ANY WARRANTY; without even the implied warranty of

12 | MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

13 | GNU General Public License for more details.

14 |

15 | You should have received a copy of the GNU General Public License

16 | along with this program. If not, see .

17 | """

18 | import shutil

19 |

20 | import colour

21 | from scipy.optimize import linprog

22 | from scipy import sparse

23 | # import tensorflow as tf

24 |

25 | from colour import LUT3D

26 |

27 | from scipy.interpolate import RegularGridInterpolator